- The paper introduces the PCLS algorithm, which transforms symmetric tensor decomposition subproblems into polynomial root-finding tasks, reducing the computational swamps encountered by ALS.

- The paper reports empirical results showing over 10x fewer iterations and significant CPU time reductions for both third- and fourth-order tensor cases compared to ALS.

- The paper highlights the theoretical and practical benefits of inherently preserving tensor symmetry, paving the way for more robust and scalable applications in signal processing and related fields.

Iterative Algorithms for Symmetric Outer Product Tensor Decompositions

Introduction and Background

The decomposition of tensors into rank-one components is a fundamental process in multi-way data analysis, with the canonical polyadic (CP) and symmetric outer product decomposition (SOPD) as central paradigms. SOPD seeks to factor fully or partially symmetric tensors into sums of rank-one symmetric tensors. This is particularly relevant in fields such as signal processing, where tensor representations capture higher-order interactions while symmetry arises from physical or structural constraints.

Standard computational approaches for tensor decompositions rely heavily on the Alternating Least Squares (ALS) algorithm due to its conceptual simplicity and robustness. However, ALS converges poorly under symmetry constraints: it often makes prohibitively slow progress and fails to exploit the reduced degrees of freedom that symmetry imposes.

Limitations of ALS and the Need for PCLS

The ALS method operates by iteratively solving linear least-squares problems for each factor matrix, with the remaining ones held fixed. While effective for unconstrained decompositions, this Gauss-Seidel process induces three major drawbacks for SOPD problems:

- Symmetry Ignorance: ALS does not inherently enforce symmetry, often yielding factor matrices that violate the requisite structure.

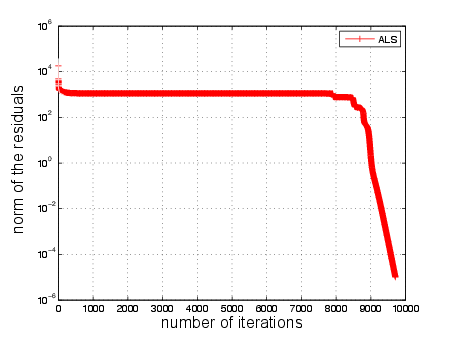

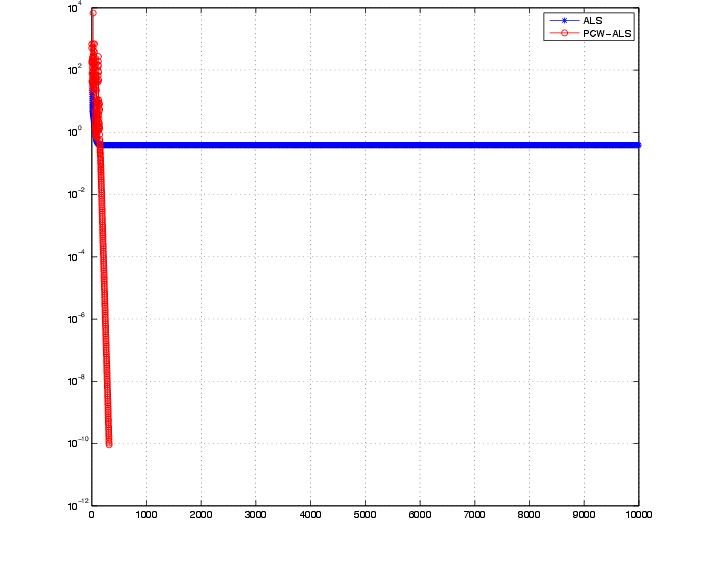

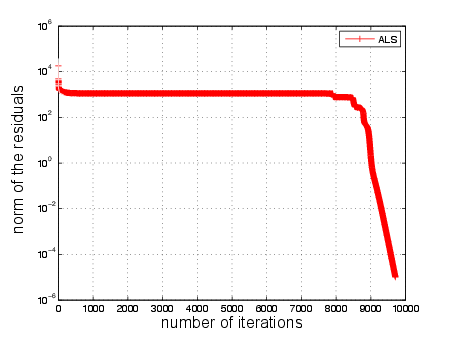

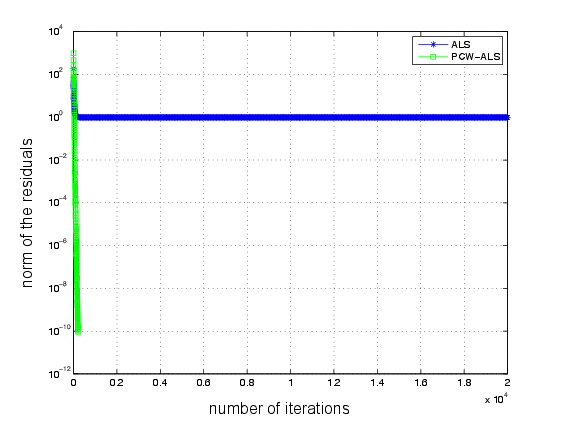

- Non-uniqueness and Ill-conditioning: The least-squares subproblems become highly ill-conditioned, exacerbating "swamps"—flat error landscapes where the method stalls for thousands of iterations (Figure 1).

- Redundant Computation: For partially symmetric tensors, ALS updates a full set of factor matrices rather than taking advantage of symmetric redundancy, resulting in computational inefficiency.

Figure 1: The long flat curve (swamp) in the ALS method. The error stays at $10^3$ during the first 8000 iterations.
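The ALS sweep described above can be sketched in NumPy as follows. This is a minimal illustration for a generic third-order CP model, not the paper's implementation: the helper names `khatri_rao`, `reconstruct`, and `als_sweep`, the row-major unfolding convention, and the small test sizes are all assumptions made for the example. It shows how each factor is updated by its own linear least-squares solve, so nothing ties the first two factors together even when the tensor is symmetric in modes 1 and 2.

```python
import numpy as np

def khatri_rao(U, V):
    """Column-wise Kronecker product: column r equals kron(U[:, r], V[:, r])."""
    R = U.shape[1]
    return np.einsum("ir,jr->ijr", U, V).reshape(-1, R)

def reconstruct(A, B, C):
    """Build the tensor T[i, j, k] = sum_r A[i, r] * B[j, r] * C[k, r]."""
    return np.einsum("ir,jr,kr->ijk", A, B, C)

def als_sweep(T, A, B, C):
    """One Gauss-Seidel sweep: solve a linear least-squares problem per factor."""
    I, J, K = T.shape
    # Mode-1 unfolding (row-major): T1 = A @ khatri_rao(B, C).T
    T1 = T.reshape(I, J * K)
    A = np.linalg.lstsq(khatri_rao(B, C), T1.T, rcond=None)[0].T
    # Mode-2 unfolding: T2 = B @ khatri_rao(A, C).T
    T2 = np.transpose(T, (1, 0, 2)).reshape(J, I * K)
    B = np.linalg.lstsq(khatri_rao(A, C), T2.T, rcond=None)[0].T
    # Mode-3 unfolding: T3 = C @ khatri_rao(A, B).T
    T3 = np.transpose(T, (2, 0, 1)).reshape(K, I * J)
    C = np.linalg.lstsq(khatri_rao(A, B), T3.T, rcond=None)[0].T
    return A, B, C

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    I, K, R = 5, 6, 3  # illustrative sizes only
    A0, C0 = rng.standard_normal((I, R)), rng.standard_normal((K, R))
    T = reconstruct(A0, A0, C0)  # partially symmetric in modes 1 and 2
    A, B, C = (rng.standard_normal(s) for s in [(I, R), (I, R), (K, R)])
    for _ in range(20):
        A, B, C = als_sweep(T, A, B, C)
    err = np.linalg.norm(T - reconstruct(A, B, C)) / np.linalg.norm(T)
    print("relative error:", err)
    # A and B are updated independently, so A == B is not enforced.
    print("||A - B||:", np.linalg.norm(A - B))
```

Because each update is an exact least-squares minimizer with the other factors fixed, the fitting error cannot increase from one sweep to the next, but nothing prevents it from decreasing very slowly, which is the swamp behavior the text describes.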

These pitfalls motivate the development of specialized algorithms—namely, the Partial Column-wise Least Squares (PCLS) method, tailored to exploit symmetry and reduce computational overhead.

PCLS targets SOPD for both third-order partially symmetric tensors and fourth-order fully symmetric tensors by recasting nonlinear subproblems into polynomial root-finding and reduced linear systems:

- Third-order Partial Symmetry: For a tensor $T \in \mathbb{R}^{I \times I \times K}$, partially symmetric in modes 1 and 2, PCLS alternates between updating A (the symmetric factor) via column-wise solution of quartic polynomial minimization and updating C via a single least-squares step. This approach leverages the structure of the Khatri-Rao product and reduces subproblem dimensionality.

- Fourth-order Full Symmetry: For $T \in \mathbb{R}^{I \times I \times I \times I}$, the decomposition is reduced, via "square matricization," to matching terms $(A \odot A)(A \odot A)^T$. An orthogonal transformation aligns the factors, allowing each column of A to be found as in the partial symmetry case, and the orthogonality constraint to be enforced via QR decomposition.

- Generalization to Higher Orders: The methodology extends to higher-order partial symmetries by recursive application of appropriate matricizations (leveraging tensor block structures) and structured least-squares subproblems, always focusing on reducing the space to polynomial root-finding and minimal linear updates.
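To make the "quartic polynomial minimization" step concrete, here is a hedged sketch of one way such a column-wise subproblem can be solved. It assumes the subproblem for a single column a has already been reduced to fitting a symmetric rank-one matrix, minimizing $\|Y - a a^T\|_F^2$ for a given $I \times I$ matrix Y. That reduction, the coordinate-by-coordinate strategy, and the names `coordinate_update` and `fit_column` are assumptions for illustration and may differ from the paper's exact procedure. With all entries of a fixed except $a_i = x$, the objective is a univariate quartic in x, so its minimizer is among the real roots of a cubic.

```python
import numpy as np

def coordinate_update(Y, a, i):
    """Minimize ||Y - a a^T||_F^2 over the single entry a[i] (others fixed).

    Up to a constant, the objective in x = a[i] is
        f(x) = x^4 + 2*(s - Y[i, i])*x^2 - 2*b*x,
    with s = sum_{j != i} a[j]^2 and b = sum_{j != i} (Y[i, j] + Y[j, i])*a[j].
    """
    mask = np.arange(a.size) != i
    s = np.sum(a[mask] ** 2)
    b = np.sum((Y[i, mask] + Y[mask, i]) * a[mask])
    q = s - Y[i, i]

    def f(x):
        return x ** 4 + 2.0 * q * x ** 2 - 2.0 * b * x

    # Stationary points: f'(x) / 2 = 2 x^3 + 2 q x - b = 0.
    roots = np.roots([2.0, 0.0, 2.0 * q, -b])
    real = roots[np.abs(roots.imag) <= 1e-8 * (1.0 + np.abs(roots))].real
    # Keep the current value as a candidate so the objective never increases.
    candidates = np.append(real, a[i])
    return candidates[np.argmin(f(candidates))]

def fit_column(Y, a_init, sweeps=50):
    """Cycle through the entries of a, solving each quartic subproblem exactly."""
    a = np.array(a_init, dtype=float)
    for _ in range(sweeps):
        for i in range(a.size):
            a[i] = coordinate_update(Y, a, i)
    return a

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    a_true = rng.standard_normal(6)
    Y = np.outer(a_true, a_true)
    a = fit_column(Y, rng.standard_normal(6))
    # The sign of a is not identifiable, since (-a)(-a)^T = a a^T.
    print("residual:", np.linalg.norm(Y - np.outer(a, a)))
```

The design point this illustrates is that each univariate subproblem is solved globally by root-finding rather than by a local descent step, which is what distinguishes the polynomial reformulation from a generic nonlinear least-squares update.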

Numerical Validation and Empirical Results

The numerical section provides extensive empirical evidence substantiating the efficiency and reliability of PCLS over ALS. Several classes of tensors were constructed—with both random and structured initializations—and the main performance indicators were number of iterations to convergence and total CPU time.

- Case 1: Third-order Partially Symmetric Tensors

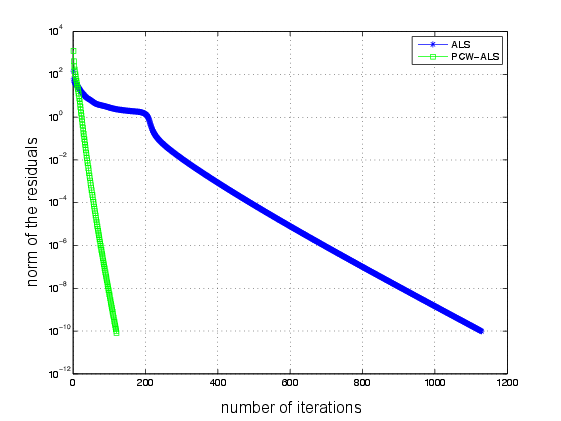

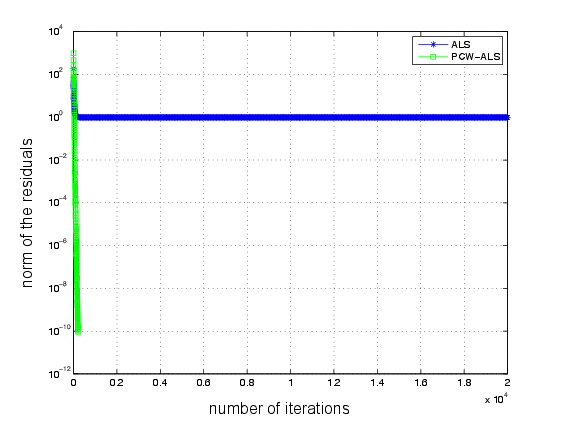

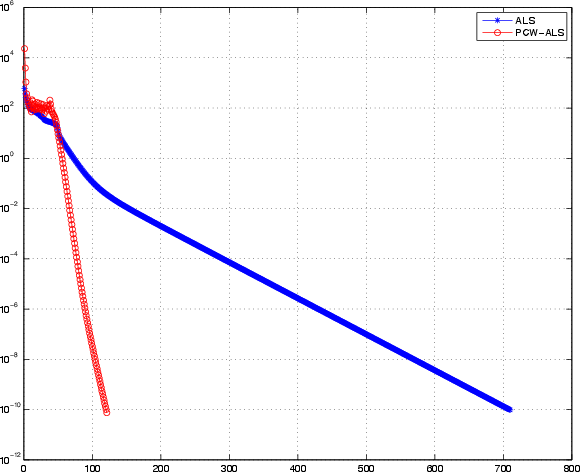

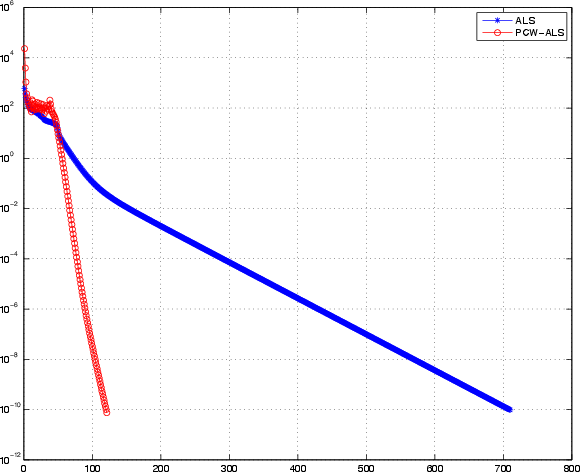

For $X \in \mathbb{R}^{17 \times 17 \times 18}$ and SOPD rank $R_{ps} = 17$, the figures indicate that, with a good initialization, PCLS needs roughly 9× fewer iterations (120 vs. 1129) and about 38% less computational time (4 s vs. 6.4 s) than ALS. With random initialization, PCLS avoids the ALS swamp and converges in 205 iterations, while ALS fails to descend below unit error after 20000 iterations.

Figure 2: Plots showing rapid convergence for PCLS compared to ALS under both favorable and unfavorable initializations.

- Batch Statistics: Over 50 trials, PCLS averaged 258.7 iterations and 6.1 s per run, whereas ALS averaged 3445 iterations and 17.2 s, roughly 13× more iterations and 2.8× more time. This suggests the advantage is not an artifact of a single favorable run.

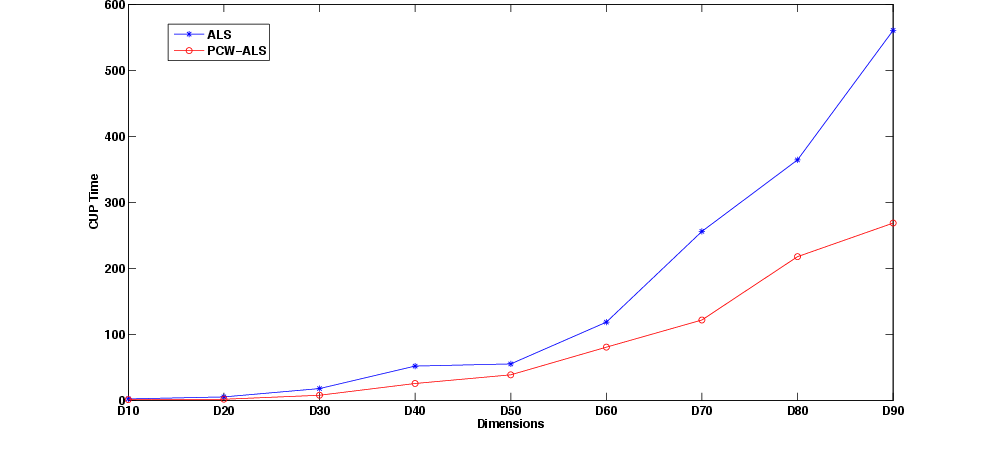

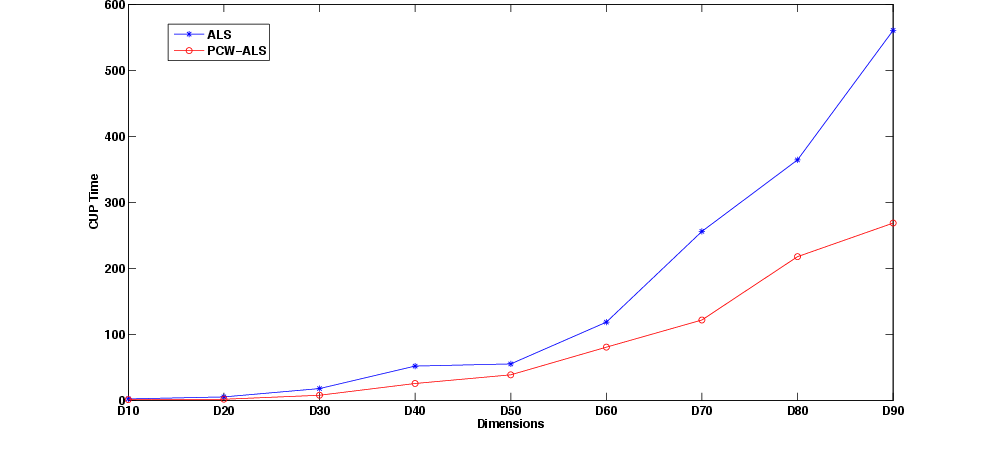

- Scalability: Figure 3 depicts performance as tensor size increases—PCLS exhibits far superior scaling, with CPU time growing much more slowly than ALS.

Figure 3: Plots demonstrating advantageous scaling of PCLS with tensor dimension compared to ALS.

- Case 2: Fourth-order Fully Symmetric Tensors

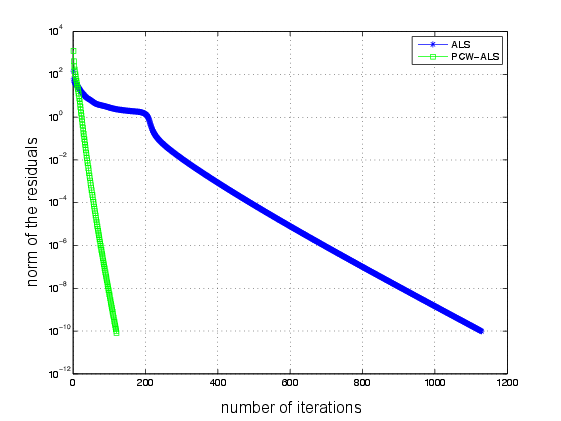

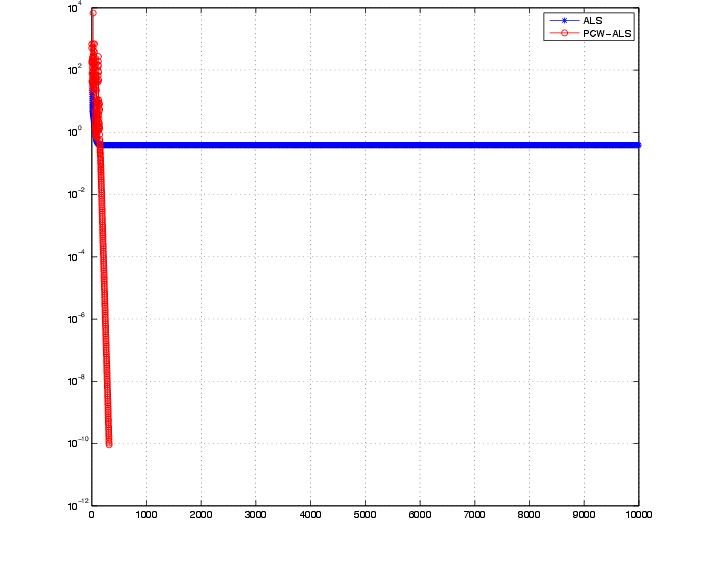

In experiments with $X \in \mathbb{R}^{10 \times 10 \times 10 \times 10}$ and $R = 10$, Figure 4 shows that ALS again encounters swamps while PCLS achieves rapid convergence. For a higher dimension (a $15 \times 15 \times 15 \times 15$ tensor), PCLS completes in 4.3 s versus 27.2 s for ALS (Figure 5).

Figure 4: Plot illustrating swamp elimination and fast convergence by PCLS for fourth-order fully symmetric tensors.

Figure 5: Plot showing that both methods converge, but PCLS achieves a substantial speedup in CPU time.
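The "square matricization" identity behind the fourth-order case can be checked numerically. The sketch below assumes a row-major pairing of modes (1, 2) into rows and modes (3, 4) into columns; that index convention and the helper names `symmetric_fourth_order` and `square_matricization` are assumptions for illustration. Under this convention, a fully symmetric tensor built from a factor matrix A unfolds to $(A \odot A)(A \odot A)^T$, which is a symmetric positive semidefinite matrix.

```python
import numpy as np

def khatri_rao(U, V):
    """Column-wise Kronecker product: column r equals kron(U[:, r], V[:, r])."""
    R = U.shape[1]
    return np.einsum("ir,jr->ijr", U, V).reshape(-1, R)

def symmetric_fourth_order(A):
    """T[i, j, k, l] = sum_r A[i, r] * A[j, r] * A[k, r] * A[l, r]."""
    return np.einsum("ir,jr,kr,lr->ijkl", A, A, A, A)

def square_matricization(T):
    """Unfold an I x I x I x I tensor into an I^2 x I^2 matrix (row-major)."""
    I = T.shape[0]
    return T.reshape(I * I, I * I)

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    A = rng.standard_normal((4, 3))  # illustrative sizes only
    M = square_matricization(symmetric_fourth_order(A))
    KR = khatri_rao(A, A)
    print("matches (A ⊙ A)(A ⊙ A)^T:", np.allclose(M, KR @ KR.T))
```

Seen this way, the fourth-order problem becomes a structured factorization of a symmetric matrix, which is consistent with the text's description of aligning factors by an orthogonal transformation.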

Theoretical and Practical Implications

PCLS systematically addresses three of the central inefficiencies in classical ALS for SOPD:

- By transforming highly nonlinear and ill-conditioned subproblems into globally solvable quartic polynomials and structured least-squares, PCLS improves both iteration complexity and stability.

- Symmetry is natively preserved by the algorithmic structure, sidestepping the need for post-processing symmetrization or ad hoc constraints.

- The method scales efficiently and robustly, as evidenced by its slower growth in CPU time and the absence of swamps in the larger test problems reported.

These advances have direct practical consequences for high-dimensional tensor applications in signal processing, chemometrics, psychometrics, and related fields, where preserving and exploiting symmetry is critical. On the theoretical side, PCLS motivates exploration of algorithms that combine algebraic reformulations and structured numerical optimization for tensor problems with group-theoretic invariances.

Moving forward, the extension of PCLS to broader classes of structured tensor decompositions—incorporating, e.g., partial symmetries, anti-symmetries, or block structures—presents a promising direction. Furthermore, integration with randomized numerical linear algebra and parallelization could further enhance performance in large-scale scenarios.

Conclusion

The iterative Partial Column-wise Least Squares (PCLS) algorithm represents a substantial advancement in decomposing symmetric and partially symmetric tensors. By exploiting symmetry and leveraging polynomial structure in subproblems, PCLS achieves significantly faster and more reliable convergence compared to ALS, as validated by comprehensive numerical benchmarks. The reduction of computational swamps and improved scaling positions PCLS as the method of choice for SOPD in data-rich, structure-exploiting applications, laying the groundwork for further theoretical investigations and practical developments in tensor analysis.