- The paper presents a latent variable model that distinguishes weak signals from structured noise in multi-output regression.

- It introduces a novel latent signal-to-noise ratio hyperparameter and an infinite-dimensional shrinkage prior for model regularization.

- The approach achieves efficient computation and superior performance in both simulated and real-world high-dimensional datasets.

Multiple Output Regression with Latent Noise

Introduction

The paper "Multiple Output Regression with Latent Noise" (arXiv:1410.7365) addresses structured noise in high-dimensional data modeling. Structured noise arises from observed and unobserved confounders that affect several target variables at once, and it can obscure the weak signal in regression tasks. The authors propose a latent variable model that mitigates these effects by exploiting the correlation structure of the regression weights through shared latent factors. The model introduces a latent signal-to-noise ratio as a hyperparameter to make weak signals easier to model, together with an ordered infinite-dimensional shrinkage prior that resolves rotational unidentifiability.

The authors present a novel model structure:

$$Y = (X\Psi + \Omega)\Gamma + E$$

Here, Y represents the target variables, X the covariates, Ψ and Γ the projection matrices with reduced-rank assumptions, Ω the latent noise component, and E the independent noise. The latent signal enters through XΨ, while the structured noise enters through Ω. Both are mapped to the targets by the same matrix Γ, so signal and noise share one low-dimensional latent space. This shared structure is what lets the model separate a weak signal from the noise that obscures it.
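To make the roles of the matrices concrete, the following is a minimal simulation sketch of the generative equation, not the authors' code. The dimension names `n`, `p`, `k`, and `s1`, the Gaussian draws, and the noise scale are all assumptions chosen for illustration.

```python
import numpy as np

def simulate_latent_noise(n=200, p=10, k=5, s1=2, noise_sd=0.1, seed=0):
    """Draw one dataset from Y = (X Psi + Omega) Gamma + E.

    All distributions here are illustrative assumptions (standard normal).
    """
    rng = np.random.default_rng(seed)
    X = rng.standard_normal((n, p))        # covariates, n x p
    Psi = rng.standard_normal((p, s1))     # covariates -> latent space, p x s1
    Omega = rng.standard_normal((n, s1))   # latent (structured) noise, n x s1
    Gamma = rng.standard_normal((s1, k))   # latent space -> targets, s1 x k
    E = noise_sd * rng.standard_normal((n, k))  # independent noise, n x k
    Y = (X @ Psi + Omega) @ Gamma + E
    return X, Y, Psi, Omega, Gamma, E

X, Y, Psi, Omega, Gamma, E = simulate_latent_noise()
```

Because the signal and the structured noise both pass through `Gamma`, everything in `Y` except `E` has rank at most `s1`.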

Methodological Advancements

Latent Signal-to-Noise Ratio

A key innovation is the latent signal-to-noise ratio β, defined as:

$$\beta = \frac{\mathrm{Trace}(\mathrm{Var}(X\Psi))}{\mathrm{Trace}(\mathrm{Var}(\Omega))}$$

This ratio acts as a regularization parameter that tells the model how to split latent-space variance between signal and noise. A small β attributes most of the latent variance to structured noise, and a large β attributes most of it to the covariates. Tuning β therefore controls how much of the variance in the targets is explained by signal rather than by structured noise, which improves prediction when the signal is weak.
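As a rough illustration, the ratio can be computed empirically from sampled matrices. In the paper β is a hyperparameter of the model, so the empirical version below is only an analogue. The helper names `latent_snr` and `rescale_noise_to_beta` are hypothetical, and the random inputs are assumptions.

```python
import numpy as np

def latent_snr(X, Psi, Omega):
    """Empirical analogue of beta = Trace(Var(X Psi)) / Trace(Var(Omega))."""
    signal = X @ Psi
    # The trace of a covariance matrix is the sum of per-column variances.
    return signal.var(axis=0).sum() / Omega.var(axis=0).sum()

def rescale_noise_to_beta(X, Psi, Omega, beta):
    """Rescale Omega so that the empirical latent SNR equals `beta`."""
    current = latent_snr(X, Psi, Omega)
    # Scaling Omega by c multiplies its variance by c**2, dividing the SNR by c**2.
    return Omega * np.sqrt(current / beta)

rng = np.random.default_rng(1)
X = rng.standard_normal((500, 20))
Psi = rng.standard_normal((20, 3))
Omega = rng.standard_normal((500, 3))

# A weak-signal regime: latent noise carries ten times the signal variance.
Omega_weak_signal = rescale_noise_to_beta(X, Psi, Omega, beta=0.1)
```

A helper like this is mainly useful for building simulated datasets with a known proportion of latent noise.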

Infinite-Dimensional Shrinkage Priors

The model places shrinkage priors over infinite-dimensional spaces on Γ, Ψ, and Ω. The shrinkage parameters order the latent components by importance, so later components are shrunk more strongly toward zero. This ordering alleviates the rotational unidentifiability inherent in reduced-rank regression models.
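One common way to build an ordered shrinkage prior is a multiplicative gamma process, in which the precision of each component is a cumulative product of gamma-distributed factors. The sketch below uses that construction as an assumption. The summary above does not name the paper's exact prior, and the truncation to a finite number of components, the hyperparameters `a1` and `a2`, and the function names are illustrative.

```python
import numpy as np

def sample_ordered_shrinkage(n_components, a1=2.0, a2=3.0, seed=0):
    """Sample component precisions tau_h = prod_{l <= h} delta_l.

    With a2 > 1 the factors delta_l exceed 1 on average, so precisions
    tend to grow with h and later components are shrunk more.
    """
    rng = np.random.default_rng(seed)
    delta = np.empty(n_components)
    delta[0] = rng.gamma(shape=a1, scale=1.0)
    delta[1:] = rng.gamma(shape=a2, scale=1.0, size=n_components - 1)
    tau = np.cumprod(delta)
    return delta, tau

def sample_gamma_matrix(tau, n_targets, seed=0):
    """Draw Gamma (components x targets) with row h having variance 1 / tau_h."""
    rng = np.random.default_rng(seed)
    sd = 1.0 / np.sqrt(tau)
    return sd[:, None] * rng.standard_normal((tau.size, n_targets))

delta, tau = sample_ordered_shrinkage(n_components=8)
Gamma = sample_gamma_matrix(tau, n_targets=5)
```

The infinite-dimensional prior is approximated here by a fixed truncation at eight components. In practice the number of effective components would be read off from how quickly the rows of `Gamma` shrink.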

Efficient Computation

The authors introduce a reparameterization of the model that reduces the computational complexity from $O(P^3 S_1^3)$ to $O(P^3 + S_1^3)$. This reduction is crucial for scaling the method to large datasets.
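A reduction from a cubic cost in a product of dimensions to a sum of cubic costs is typical of exploiting Kronecker structure. The sketch below shows one generic instance: solving a system of the form (A ⊗ B + I) vec(X) = vec(C) with two small eigendecompositions instead of one large dense solve. Whether the paper's reparameterization takes exactly this form is an assumption. The function name and the test matrices are hypothetical.

```python
import numpy as np

def kron_plus_identity_solve(A, B, C):
    """Solve (A kron B + I) vec(X) = vec(C) for X (P x S).

    A is S x S and B is P x P, both symmetric. vec() stacks columns.
    Uses vec(B X A^T) = (A kron B) vec(X), so the system is B X A + X = C.
    """
    a, Ua = np.linalg.eigh(A)   # O(S^3)
    b, Ub = np.linalg.eigh(B)   # O(P^3)
    C_rot = Ub.T @ C @ Ua       # rotate into the joint eigenbasis
    X_rot = C_rot / (np.outer(b, a) + 1.0)  # system is diagonal here
    return Ub @ X_rot @ Ua.T    # rotate back

rng = np.random.default_rng(0)
P, S = 6, 3
A = rng.standard_normal((S, S))
A = A @ A.T                     # symmetric positive semi-definite
B = rng.standard_normal((P, P))
B = B @ B.T
C = rng.standard_normal((P, S))

X_fast = kron_plus_identity_solve(A, B, C)

# Reference: build the full (P*S) x (P*S) system and solve it densely.
dense = np.kron(A, B) + np.eye(P * S)
X_dense = np.linalg.solve(dense, C.flatten(order="F")).reshape((P, S), order="F")
```

The dense reference costs on the order of (P·S)³ operations, while the structured solve is dominated by the two eigendecompositions.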

Simulation and Real-World Application

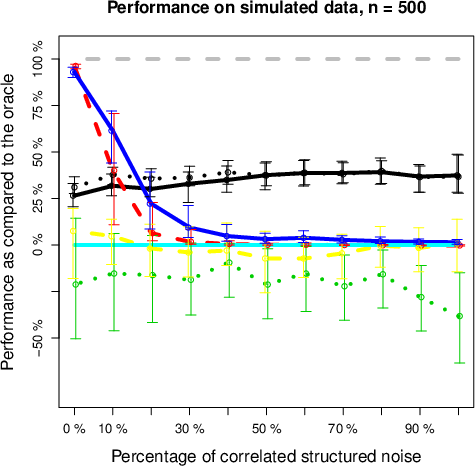

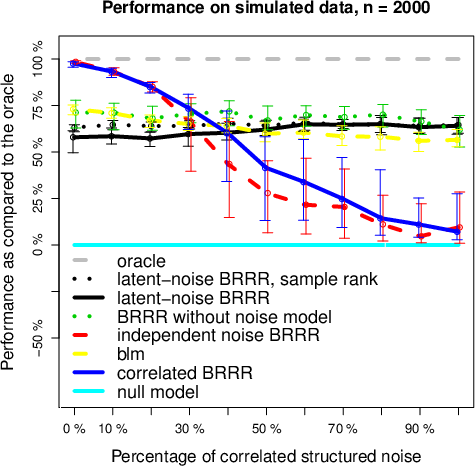

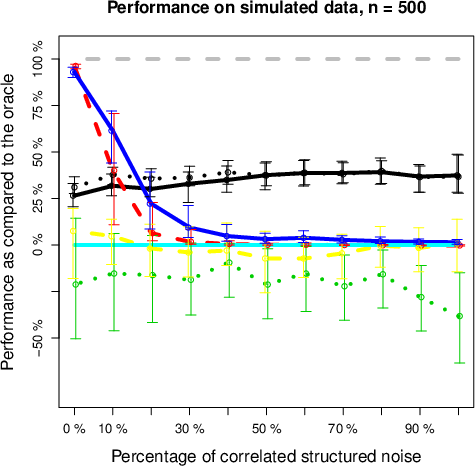

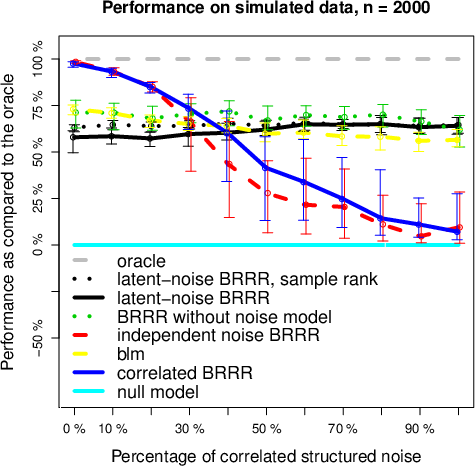

The paper reports that the latent-noise model outperforms traditional models on both simulated and real-world datasets, including metabolomics prediction from SNP data and fMRI response prediction. In scenarios where latent noise predominates, latent-noise BRRR (Bayesian reduced-rank regression) outperformed even the true model, particularly at small sample sizes, where independent-noise models failed.

Figure 1: Performance of different methods, compared to the true model, as a function of the proportion of latent noise with a training set of (a) 500 and (b) 2000 samples.

The model also showed promise in multivariate association detection. It had more power than traditional methods such as canonical correlation analysis (CCA) and univariate linear models, particularly when latent noise was present in metabolomics-genetics associations.

Future Directions

The latent-noise approach opens avenues for modeling structured noise in other domains. One suggested enhancement is to model latent and independent structured noise simultaneously. Further work on computational strategies and on hyperparameter tuning, such as learning β automatically, is recommended to optimize performance.

Conclusion

The paper provides a framework for handling structured noise in multiple output regression. By modeling latent signal and structured noise in one unified model, the approach improves both prediction and association detection power. The methods are also applicable to other high-dimensional data settings.