- The paper presents a low-cost sensor glove with force feedback that accurately captures human finger movements for effective teleoperation.

- It employs Bayesian linear regression with Gaussian basis functions to model probabilistic trajectories, enabling task generalization from few demonstrations.

- Demonstrated on the iCub platform, the system successfully reproduces tasks like cup stacking, highlighting potential for robust, accessible robotic learning.

Low-Cost Sensor Glove with Force Feedback for Learning from Demonstrations Using Probabilistic Trajectory Representations

Introduction

The paper presents a method for using a low-cost sensor glove with force feedback for imitation learning in robotic manipulation tasks. The glove is used to teleoperate a robotic hand so that the robot can learn, and then autonomously execute, tasks demonstrated by a human operator. A probabilistic trajectory representation allows the robot to generalize from noisy demonstrations.

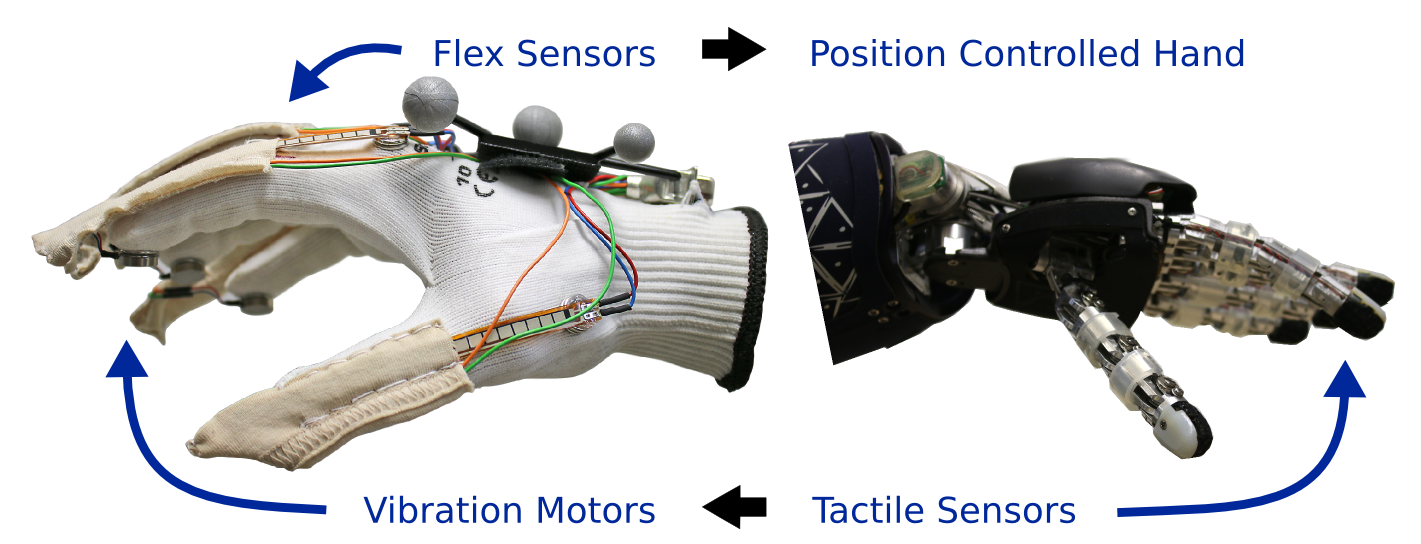

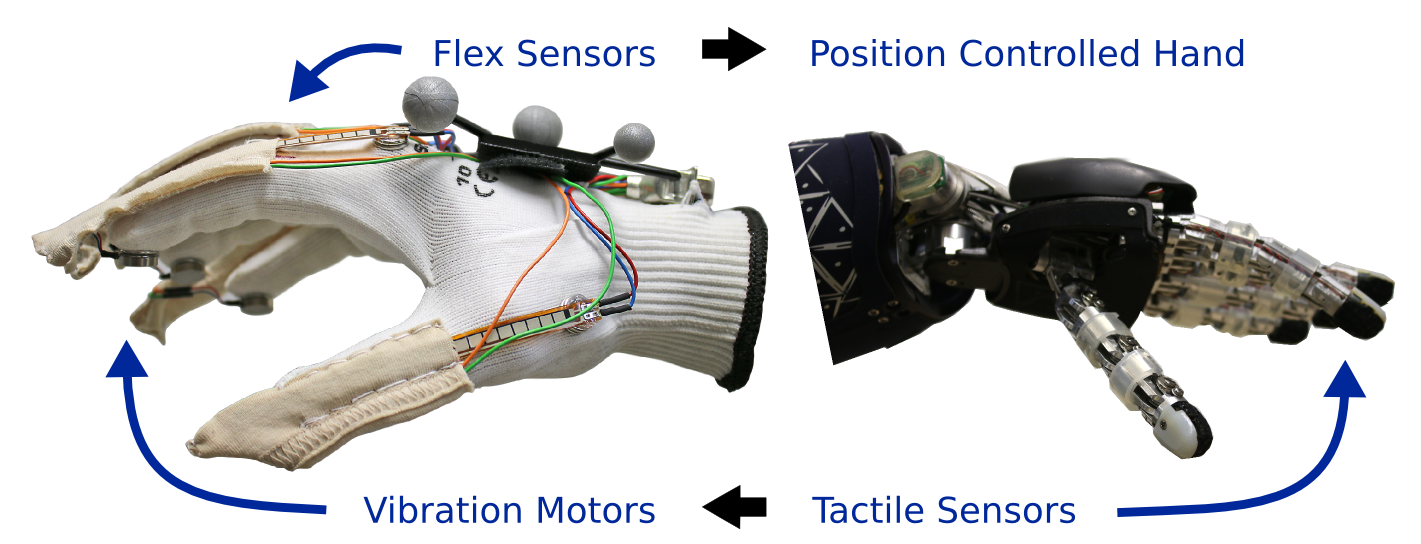

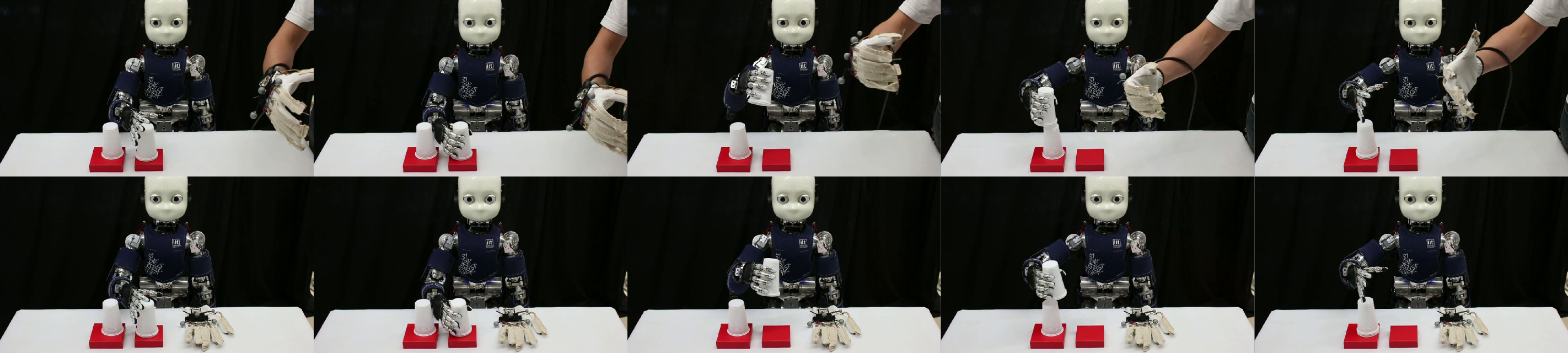

Figure 1: A low-cost sensor glove is used to teleoperate a five-finger robot hand. Tactile information provides force feedback to the teleoperator through activating vibration motors at the glove's fingertips.

Methods

Sensor Glove System

The glove system integrates flex sensors for capturing finger movements and vibration motors for force feedback. The hardware is connected through an Arduino board, and a software interface communicates over USB to stream sensor data to a host computer. The system follows low-cost, open-source design principles, which makes it accessible to laboratories and educational settings.
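As a rough illustration of the host-side processing such a glove needs, the sketch below parses one frame of raw flex readings, converts a reading to a joint-angle command, and maps a tactile reading to a vibration-motor duty cycle. The frame format (five comma-separated integers), the calibration values, the 90-degree joint range, and the function names `parse_frame`, `normalize_flex`, `flex_to_joint_angle`, and `vibration_pwm` are all assumptions made for this example; the paper's actual protocol and calibration are not described here.

```python
def parse_frame(line):
    """Parse one hypothetical CSV frame 'r0,r1,r2,r3,r4' of raw flex readings."""
    values = [int(v) for v in line.strip().split(",")]
    if len(values) != 5:
        raise ValueError("expected 5 finger readings, got %d" % len(values))
    return values


def normalize_flex(raw, raw_open, raw_closed):
    """Map a raw reading to flexion in [0, 1] using two calibration poses."""
    x = (raw - raw_open) / (raw_closed - raw_open)
    return min(1.0, max(0.0, x))


def flex_to_joint_angle(flexion, max_angle_deg=90.0):
    """Scale normalized flexion linearly to a joint command (assumed range)."""
    return flexion * max_angle_deg


def vibration_pwm(pressure, threshold=0.1):
    """Map normalized tactile pressure in [0, 1] to an 8-bit PWM duty cycle.

    Readings below the (assumed) threshold are treated as no contact.
    """
    if pressure < threshold:
        return 0
    return round(255 * min(1.0, pressure))


# Example with made-up calibration: open hand reads 300, closed fist reads 700.
frame = parse_frame("500,300,700,650,320\n")
angles = [flex_to_joint_angle(normalize_flex(r, 300, 700)) for r in frame]
```

A linear map between two calibration poses is the simplest choice; a real flex sensor may need a per-finger, nonlinear calibration.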

Probabilistic Trajectory Learning

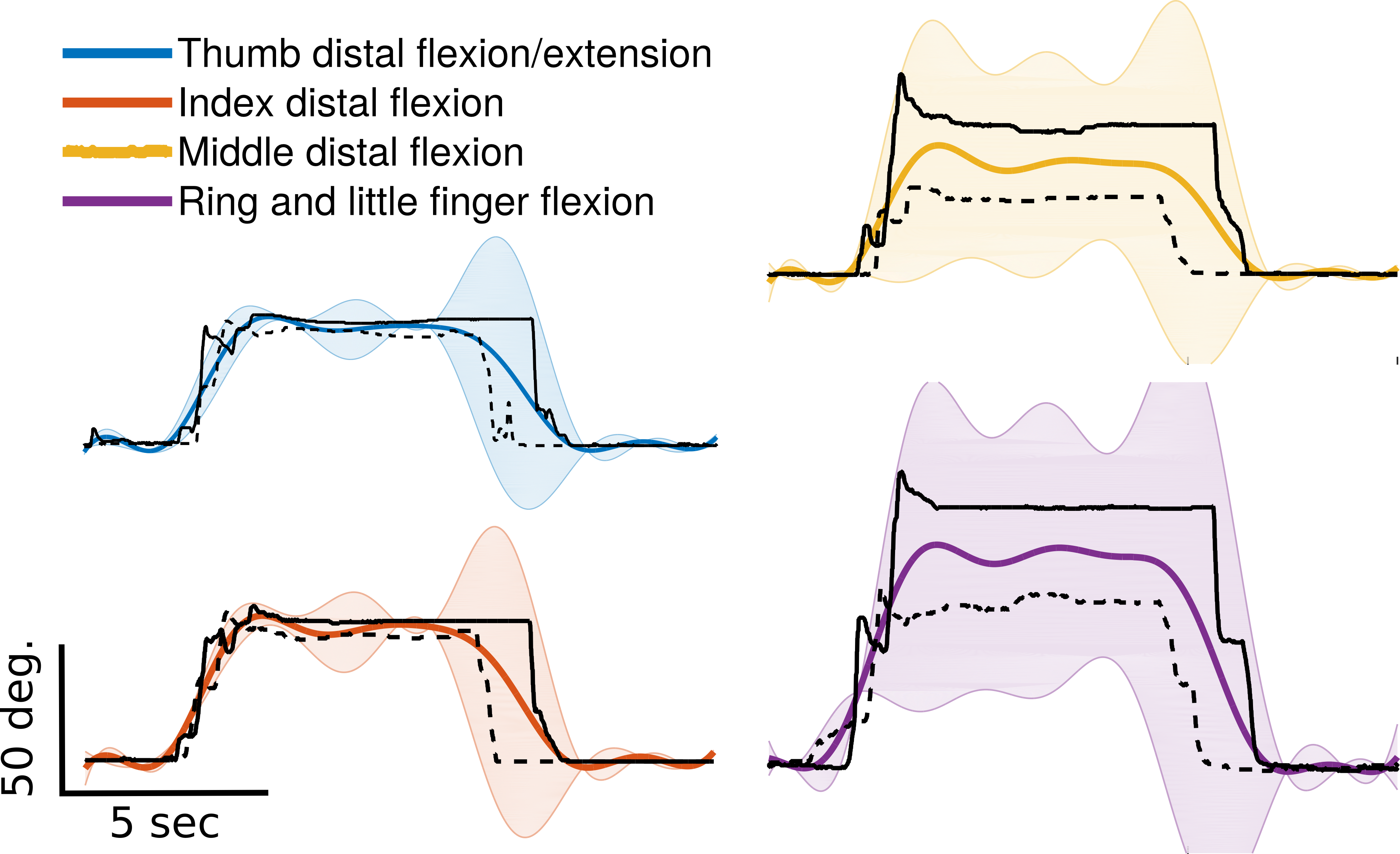

Trajectory learning is realized through Bayesian linear regression, where trajectories are modeled using Gaussian basis functions. The resulting model captures a distribution over probable trajectories, enabling adaptive robotic control strategies. The work primarily uses impedance control with pre-set gains; adapting the gains to the trajectory variance is left as future work.
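To make the regression step concrete, here is a minimal sketch of textbook Bayesian linear regression over Gaussian basis functions, fitted to two synthetic noisy demonstrations of a single joint. It is not the paper's implementation: the prior precision `alpha`, the noise precision `beta`, the number and width of the basis functions, and the synthetic sine-shaped demonstrations are all assumptions chosen for illustration, and NumPy is assumed to be available.

```python
import numpy as np


def gaussian_basis(t, centers, width):
    """Return the (len(t), len(centers)) matrix of Gaussian basis activations."""
    t = np.asarray(t, dtype=float)[:, None]
    return np.exp(-0.5 * ((t - centers[None, :]) / width) ** 2)


def fit_posterior(Phi, y, alpha=1e-2, beta=100.0):
    """Posterior over weights for y = Phi w + noise, with prior w ~ N(0, I / alpha)."""
    n_basis = Phi.shape[1]
    precision = alpha * np.eye(n_basis) + beta * Phi.T @ Phi
    cov = np.linalg.inv(precision)
    mean = beta * cov @ Phi.T @ y
    return mean, cov


def predict(Phi, w_mean, w_cov, beta=100.0):
    """Predictive mean and variance of the trajectory at each time step."""
    mu = Phi @ w_mean
    # Noise variance plus the diagonal of Phi @ w_cov @ Phi.T
    var = 1.0 / beta + np.einsum("ij,jk,ik->i", Phi, w_cov, Phi)
    return mu, var


# Two synthetic demonstrations of one joint (illustrative data only).
rng = np.random.default_rng(0)
t = np.linspace(0.0, 1.0, 50)
demos = [np.sin(np.pi * t) + 0.05 * rng.standard_normal(t.size) for _ in range(2)]

Phi = gaussian_basis(t, centers=np.linspace(0.0, 1.0, 10), width=0.1)
Phi_all = np.vstack([Phi] * len(demos))  # stack the design matrix per demo
y_all = np.concatenate(demos)

w_mean, w_cov = fit_posterior(Phi_all, y_all)
traj_mean, traj_var = predict(Phi, w_mean, w_cov)
```

The predictive mean is the trajectory a controller would track, and the predictive variance indicates where the demonstrations constrain the motion tightly or loosely.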

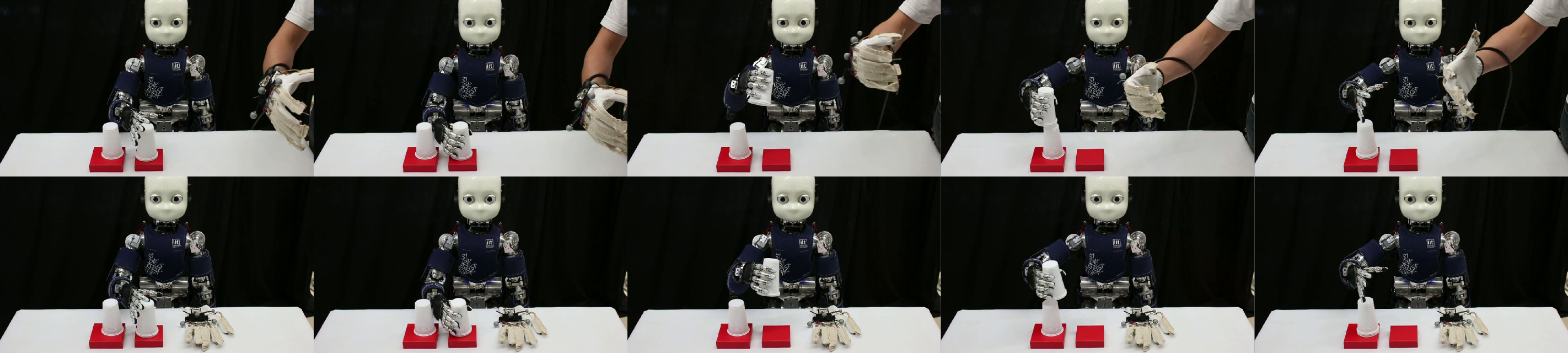

Figure 2: Learning a cup stacking task through teleoperation followed by autonomous reproduction using an impedance controller.

Practical Implementation

The sensor glove's capabilities are demonstrated through a robotic cup stacking task using the iCub humanoid platform. The iCub is teleoperated during the demonstration phase, with the glove's flex sensors translating human joint angles to robotic commands. During autonomous reproduction, an impedance controller follows the learned mean trajectory.
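The idea of an impedance controller tracking a mean trajectory can be sketched for a single joint as a spring-damper law with fixed gains. The example below is a simplified illustration, not the iCub controller: the unit-inertia joint model, the gain values, the time step, and the smooth reference trajectory are assumptions made for this sketch.

```python
import math


def impedance_torque(q, qd, q_des, qd_des, stiffness, damping):
    """Spring-damper law pulling the joint toward the desired state."""
    return stiffness * (q_des - q) + damping * (qd_des - qd)


def simulate(q_ref, dt, stiffness=100.0, damping=20.0, inertia=1.0):
    """Track q_ref with a simulated single joint; return the visited positions."""
    q, qd = q_ref[0], 0.0
    history = []
    for k in range(len(q_ref)):
        history.append(q)
        # Reference velocity from finite differences (zero at the last sample).
        if k + 1 < len(q_ref):
            qd_ref = (q_ref[k + 1] - q_ref[k]) / dt
        else:
            qd_ref = 0.0
        tau = impedance_torque(q, qd, q_ref[k], qd_ref, stiffness, damping)
        # Semi-implicit Euler integration of the assumed joint dynamics.
        qd += (tau / inertia) * dt
        q += qd * dt
    return history


dt = 0.01
# Stand-in for a learned mean trajectory: a smooth move from 0 to 1 rad in 1 s.
q_ref = [0.5 * (1.0 - math.cos(math.pi * k * dt)) for k in range(101)]
q_sim = simulate(q_ref, dt)
max_error = max(abs(a - b) for a, b in zip(q_sim, q_ref))
```

With pre-set gains the stiffness is the same along the whole trajectory; lower stiffness makes the joint more compliant on contact at the cost of looser tracking.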

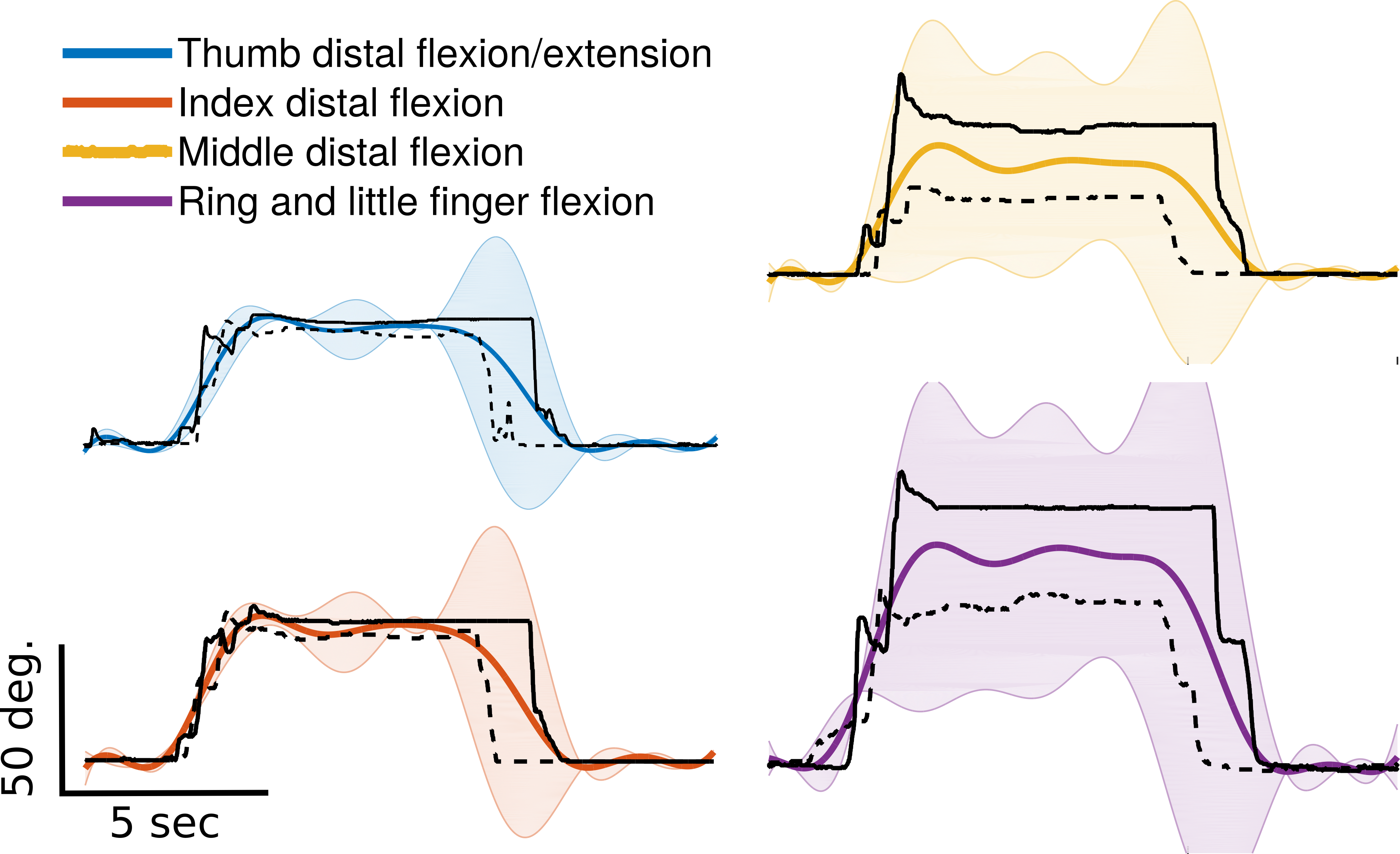

Figure 3: Joint encoder readings of the distal fingers across the demonstrations. The readings illustrate the coupling of the little and ring fingers and are shown with the model predictions and their variance.

Training used just two demonstrations to achieve competent task reproduction, indicating that the system can learn from limited data. In addition, the force feedback not only helps prevent mechanical stress on, and deformation of, the manipulated objects but also paves the way for more nuanced interaction capabilities.

Conclusions

The study introduces a sensor glove and a probabilistic modeling approach for learning from demonstration in robotic applications. Implemented on the iCub robot, the system reproduces demonstrated tasks autonomously after only a few demonstrations. Future enhancements target incorporating inertial sensors to remove the dependence on external tracking systems and making the control algorithm variance-responsive, potentially improving robustness and adaptability in dynamic environments. This research thus lays the groundwork for more sophisticated teleoperation and learning systems in robotics.