- The paper introduces a comprehensive framework for obfuscation, detailing how techniques like randomization, mimicry, and tunneling thwart DPI methods.

- The paper demonstrates that encryption alone is insufficient, as exposed metadata enables DPI to fingerprint circumvention tools.

- The paper underscores the need for continuous innovation to counteract evolving censorship tactics, emphasizing the importance of adaptive, formalized obfuscation strategies.

Network Traffic Obfuscation and Automated Internet Censorship

The paper "Network Traffic Obfuscation and Automated Internet Censorship" examines how automated Internet censorship works and the technical means used to circumvent it. Governments increasingly control access to information with network tools such as Deep Packet Inspection (DPI), and circumvention tools in turn need obfuscation techniques that can evade this inspection.

Internet Censorship and the Role of Obfuscation

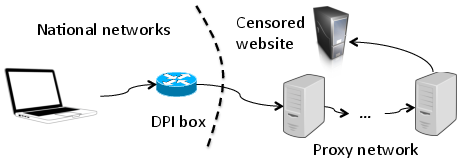

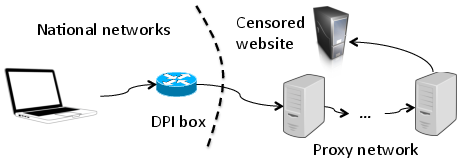

Automated Internet censorship uses DPI to filter and block communications based on application-layer content. A prominent example is China's Great Firewall, a state-level effort to control access to politically sensitive information. In response, tools like Tor let users circumvent such measures by obfuscating their network traffic. Because DPI systems inspect packet payloads to identify traffic patterns, circumvention tools either randomize their payloads or mimic benign traffic to avoid detection. The paper classifies obfuscation into three categories (randomization, mimicry, and tunneling), each with distinct operational trade-offs.

Figure 1: Proxy-based circumvention of censorship. A client connects to a proxy server that relays its traffic to the intended destination.

Technical Approaches to Obfuscation

Encryption

While encryption renders content unintelligible, it often leaves metadata or protocol headers exposed, and DPI can fingerprint these. For instance, censors have exploited distinctive features of Tor's TLS handshake, such as its cipher suite list, to identify and block Tor traffic. Encryption alone is therefore insufficient; it must be combined with obfuscation that removes or disguises such identifying metadata.
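The metadata-fingerprinting idea can be illustrated with a small sketch. It assumes a simplified censor that hashes the ordered list of cipher suite IDs a client offers and compares the result against a blocklist. The suite lists, the function names, and the hashing scheme are all hypothetical and chosen for illustration; they are not taken from the paper or from any real censor.

```python
import hashlib

def cipher_suite_fingerprint(suites):
    """Hash the ordered list of 16-bit cipher suite IDs offered by a client."""
    encoded = b"".join(s.to_bytes(2, "big") for s in suites)
    return hashlib.sha256(encoded).hexdigest()[:16]

# Hypothetical suite lists, for illustration only.
BROWSER_SUITES = [0x1301, 0x1302, 0x1303, 0xC02B, 0xC02F]
TOOL_SUITES = [0xC02F, 0xC02B, 0x1301]

# The censor has previously observed the tool and blocklisted its fingerprint.
BLOCKED_FINGERPRINTS = {cipher_suite_fingerprint(TOOL_SUITES)}

def censor_blocks(suites):
    """Return True if the offered suite list matches a blocklisted fingerprint."""
    return cipher_suite_fingerprint(suites) in BLOCKED_FINGERPRINTS

print(censor_blocks(TOOL_SUITES))     # the tool is identified despite encryption
print(censor_blocks(BROWSER_SUITES))  # ordinary browser traffic passes
```

Note that the payload never appears in this check: the decision is made entirely from handshake metadata that is sent in the clear, which is why encrypting the content does not help.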

Randomization

Randomizers aim to make traffic look like featureless random bytes, typically by applying a stream cipher to every packet payload. This approach relies on the assumption that censors use blacklisting, that is, they allow anything that does not match a known blocked fingerprint. Tools like ScrambleSuit and the obfs protocols exemplify this strategy, and they often require a prior key exchange or an embedded encryption layer.
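A minimal sketch of the randomization idea follows, assuming a toy keystream built from SHA-256 in counter mode and a hypothetical blacklist signature. This is not the construction used by ScrambleSuit or the obfs protocols, and the toy cipher is not meant to be secure; it only shows why a blacklisting censor fails to match randomized payloads.

```python
import hashlib

def keystream(key: bytes, length: int) -> bytes:
    """Toy keystream: SHA-256 over key || counter (illustrative, not secure)."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(out[:length])

def xor_stream(key: bytes, data: bytes) -> bytes:
    """XOR data with the keystream; applying it twice restores the input."""
    return bytes(a ^ b for a, b in zip(data, keystream(key, len(data))))

# Hypothetical signature a blacklisting censor might search for.
BLOCKED_SIGNATURES = [b"SECRET-PROXY-HELLO"]

def blacklist_dpi_blocks(payload: bytes) -> bool:
    """Block only payloads that contain a known signature."""
    return any(sig in payload for sig in BLOCKED_SIGNATURES)

shared_key = b"pre-shared key (assumed)"
plain = b"SECRET-PROXY-HELLO v1 client=42"
wire = xor_stream(shared_key, plain)

print(blacklist_dpi_blocks(plain))  # plaintext handshake matches the signature
print(blacklist_dpi_blocks(wire))   # randomized bytes do not
```

The same sketch also shows the weakness of the approach: a censor that whitelists known protocols, or that blocks high-entropy flows outright, would stop the randomized traffic even though it matches no signature.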

Mimicry and Tunneling

Mimicry disguises traffic so that it resembles a protocol the censor does not target, reducing the likelihood of detection by DPI-capable censors. Implementations like Format-Transforming Encryption (FTE) produce ciphertexts that match regular expressions describing legitimate traffic. Tunneling goes further by encapsulating traffic inside a benign protocol using a genuine implementation of that protocol, which makes it difficult to distinguish from regular traffic through superficial inspection.
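The regex-matching idea behind mimicry can be sketched as follows. This is a deliberate simplification: it hex-encodes ciphertext into an HTTP-like request line so that the output matches a chosen regular expression. It is not the actual FTE algorithm, and the pattern and function names are assumptions made for illustration.

```python
import re

# Hypothetical target format: the regex a DPI rule might use to recognize HTTP.
HTTP_LIKE = re.compile(rb"GET /[0-9a-f]+ HTTP/1\.1\r\n\r\n")

PREFIX = b"GET /"
SUFFIX = b" HTTP/1.1\r\n\r\n"

def encode(ciphertext: bytes) -> bytes:
    """Wrap ciphertext so that the wire message matches HTTP_LIKE."""
    if not ciphertext:
        raise ValueError("ciphertext must be non-empty")
    return PREFIX + ciphertext.hex().encode("ascii") + SUFFIX

def decode(message: bytes) -> bytes:
    """Recover the ciphertext from a message produced by encode()."""
    if HTTP_LIKE.fullmatch(message) is None:
        raise ValueError("message does not match the expected format")
    hex_part = message[len(PREFIX):-len(SUFFIX)]
    return bytes.fromhex(hex_part.decode("ascii"))

ciphertext = bytes([0, 255, 16, 32])
message = encode(ciphertext)
print(message)  # b'GET /00ff1020 HTTP/1.1\r\n\r\n'
```

A regex-level disguise like this only defeats regex-level inspection. A censor that checks deeper protocol semantics, such as whether the server responds like a real web server, could still tell the difference, which is part of the motivation for tunneling through genuine implementations.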

Challenges to Effective Circumvention

The foremost challenge in censorship circumvention lies in the unpredictable nature of adversarial censors. Real-world adversaries often employ both passive and active techniques, evolving their methodologies to counter new circumvention tools. This arms race necessitates constant adaptation and innovation in obfuscation technologies.

Understanding Censors and Users

Comprehensive threat models are required to factor in the extensive capabilities of censors and their varying tolerance for collateral damage from false positives. Insight into user behavior and circumvention tool usage further aids in refining these models. Gathering such data introduces ethical and privacy challenges, making it imperative to conduct studies under rigorous protection measures.
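The role of false positives in a threat model can be made concrete with a short base-rate calculation. The numbers below are purely hypothetical and are not measurements from the paper; the sketch only shows how a seemingly accurate classifier can still block mostly legitimate traffic when circumvention traffic is rare.

```python
def blocking_outcome(total_flows, prevalence, true_positive_rate, false_positive_rate):
    """Estimate what a censor's classifier blocks, given assumed rates."""
    circumvention = total_flows * prevalence
    legitimate = total_flows - circumvention
    blocked_circumvention = circumvention * true_positive_rate
    blocked_legitimate = legitimate * false_positive_rate
    blocked = blocked_circumvention + blocked_legitimate
    # Precision: the share of blocked flows that really were circumvention.
    precision = blocked_circumvention / blocked if blocked else 0.0
    return {
        "blocked_circumvention": blocked_circumvention,
        "blocked_legitimate": blocked_legitimate,
        "precision": precision,
    }

# Hypothetical scenario: 1 in 1000 flows is circumvention traffic,
# the classifier catches 99% of it and misfires on 1% of legitimate flows.
result = blocking_outcome(1_000_000, 0.001, 0.99, 0.01)
print(result)
```

Under these assumed rates, roughly ten legitimate flows are blocked for every circumvention flow caught. A censor with low tolerance for collateral damage would need a far lower false-positive rate before deploying such a classifier.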

Future-Proofing Obfuscation Mechanisms

For obfuscation to remain effective over time, circumvention systems must be adaptive enough to withstand evolving censorship strategies. This calls for formal methods that give obfuscation designs a sound theoretical foundation, together with interoperable cryptographic protocols that improve the security, efficiency, and usability of circumvention tools.

Conclusion

The efficiency and robustness of network traffic obfuscation are crucial to counteracting sophisticated international censorship efforts. Continued research into innovative obfuscation techniques and the socio-political dynamics influencing censor behavior is essential. By fostering a robust theoretical framework and adaptable technological toolkit, the research community can enhance users' ability to access unfiltered information and support principles of open and free Internet access.