- The paper introduces adaptive early exit strategies and network selection systems to reduce computation time with minimal loss in accuracy.

- The methodology employs a weighted binary classification mechanism for early exits and a directed acyclic graph for network selection, achieving up to 2.8x speed-ups.

- Experimental evaluations on ImageNet demonstrate that adaptive inference maintains high accuracy while significantly lowering test-time computing costs.

Adaptive Neural Networks for Efficient Inference

The paper "Adaptive Neural Networks for Efficient Inference" proposes a novel approach to improving the efficiency of deep neural networks by adaptively selecting computation paths. Two schemes are introduced: early exit strategies and network selection systems, which reduce computational time without significantly affecting accuracy. This summary details the methodology, experimental results, and implications of these schemes.

Introduction

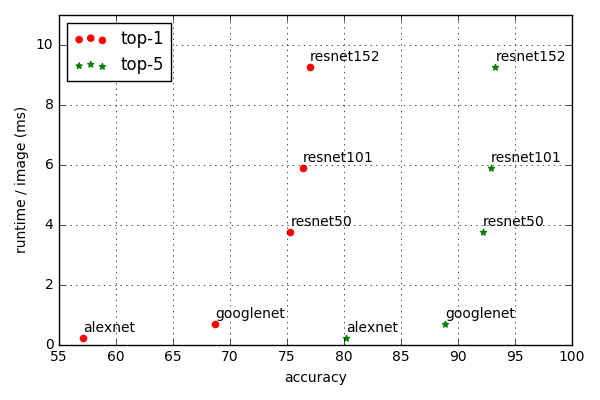

Deep neural networks (DNNs) achieve high accuracy across many applications but are computationally expensive at test time. The proposed approach addresses this cost by dynamically using simpler networks for "easy" examples and reserving complex networks for "difficult" ones. The paper introduces two strategies for adaptive evaluation, adaptive early exits and network selection policies, both aimed at minimizing the computation spent per example.

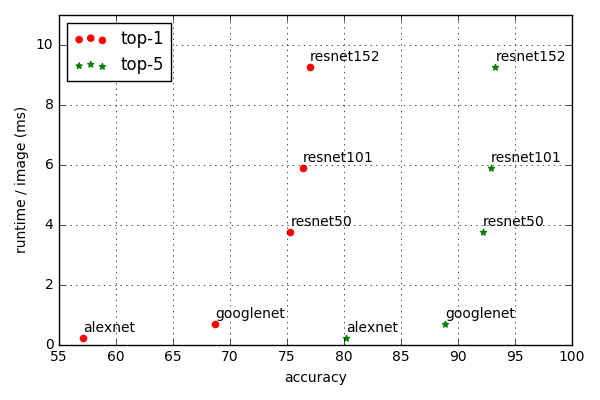

Figure 1: Performance versus evaluation complexity of the DNN architectures that won the ImageNet challenge over the past several years. The model evaluation times increase exponentially with respect to the increase in accuracy.

Adaptive Early Exit Networks

The adaptive early exit network strategy allows examples to bypass parts of the network, exiting early when sufficient confidence is achieved in predictions. The decision-making process is modeled as a layer-by-layer weighted binary classification (WBC), optimizing computation versus accuracy trade-offs. Specifically, models are trained to identify when examples can confidently exit without proceeding through the entire network, thereby saving computational resources.
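The reduction to weighted binary classification can be pictured with a small sketch. It assumes the exit decision at one layer is trained by comparing the cost of exiting now with the cost of continuing, where the cost of continuing adds a time penalty. The function name `wbc_targets` and the parameters `extra_cost` and `lam` are hypothetical and chosen for illustration; the paper's exact loss may differ.

```python
def wbc_targets(exit_errors, full_errors, extra_cost, lam):
    """Build (label, weight) training pairs for one exit point.

    exit_errors[i]: 1 if the early classifier mislabels example i, else 0.
    full_errors[i]: 1 if the rest of the network mislabels example i, else 0.
    extra_cost:     additional evaluation time incurred by continuing (assumed known).
    lam:            hypothetical trade-off between error and time.
    """
    targets = []
    for e_exit, e_full in zip(exit_errors, full_errors):
        cost_exit = e_exit
        cost_continue = e_full + lam * extra_cost
        # Label 1 means "exit here"; label 0 means "keep evaluating".
        label = 1 if cost_exit <= cost_continue else 0
        # Examples where the two options differ more get a larger weight.
        weight = abs(cost_continue - cost_exit)
        targets.append((label, weight))
    return targets


# Toy inputs, made up for illustration only.
pairs = wbc_targets([0, 1, 1], [0, 0, 1], extra_cost=1.0, lam=0.5)
print(pairs)
```

Any binary classifier that accepts per-example weights could then be fit to these pairs, using features of the intermediate layer as input.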

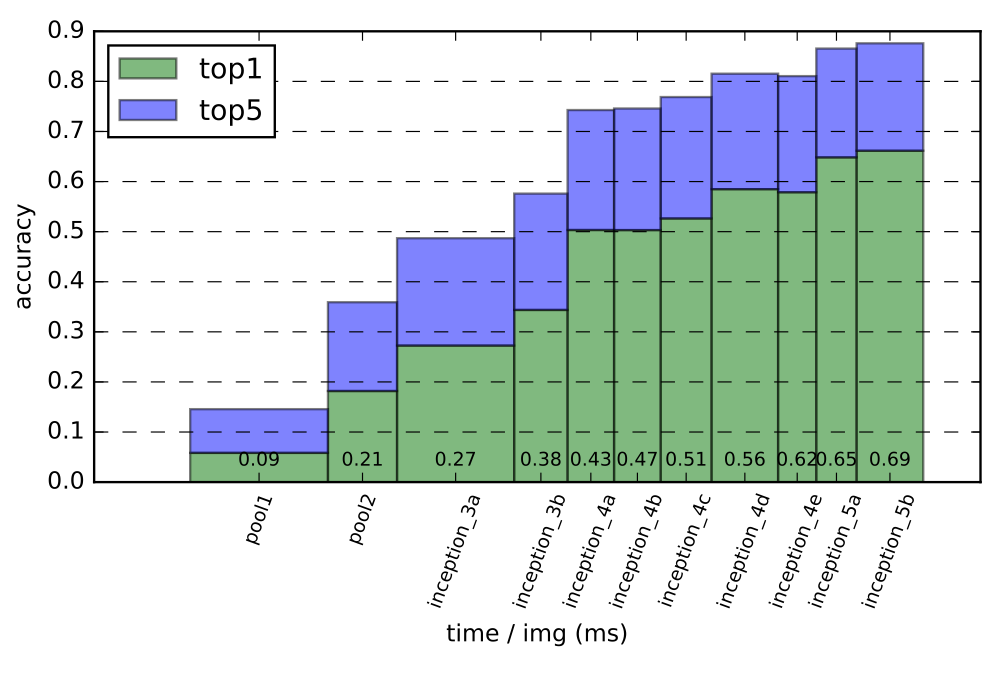

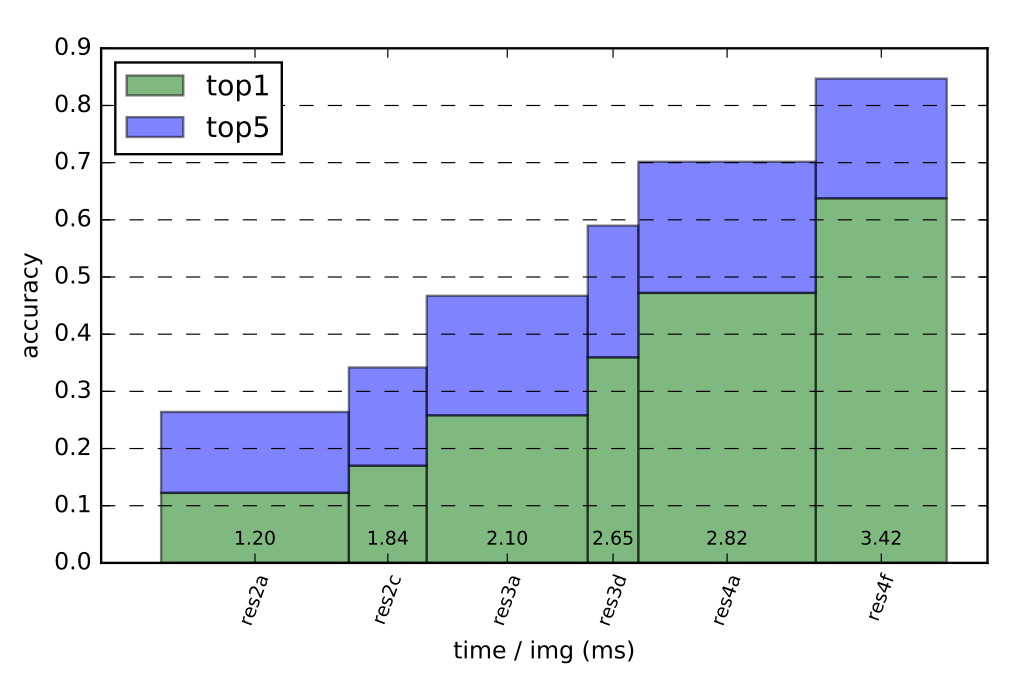

In practice, lightweight classifiers are attached at specific exit points within a network, and each one decides whether an example should exit there or continue to the later layers. This can yield meaningful speed-ups, with experimental results showing up to a 30% reduction in evaluation time for networks such as GoogLeNet and ResNet50.
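At inference time, the exit mechanism amounts to a loop over network stages with an optional check after some of them. The following is a minimal sketch under assumed interfaces: `stages`, `exits`, and `final_head` are hypothetical callables, not names from the paper or from any library.

```python
def early_exit_predict(x, stages, exits, final_head):
    """Run stages in order and stop at the first exit whose policy fires.

    stages:     list of callables, each mapping features to features.
    exits:      dict mapping a stage index to (policy, head), where
                policy(features) returns True to exit and head(features)
                produces the prediction at that exit.
    final_head: callable producing the prediction of the full network.
    Returns (prediction, index of the exit used, or None for the full network).
    """
    h = x
    for k, stage in enumerate(stages):
        h = stage(h)
        if k in exits:
            policy, head = exits[k]
            if policy(h):
                return head(h), k
    return final_head(h), None


# Toy stand-ins for real layers and classifiers (illustrative only).
stages = [lambda h: h + 1, lambda h: h * 2]
exits = {0: (lambda h: h > 5, lambda h: "early")}
final_head = lambda h: "full"

print(early_exit_predict(10, stages, exits, final_head))
print(early_exit_predict(1, stages, exits, final_head))
```

The saving comes from the stages that are never evaluated once an exit fires, so the exit checks themselves need to be cheap relative to the layers they skip.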

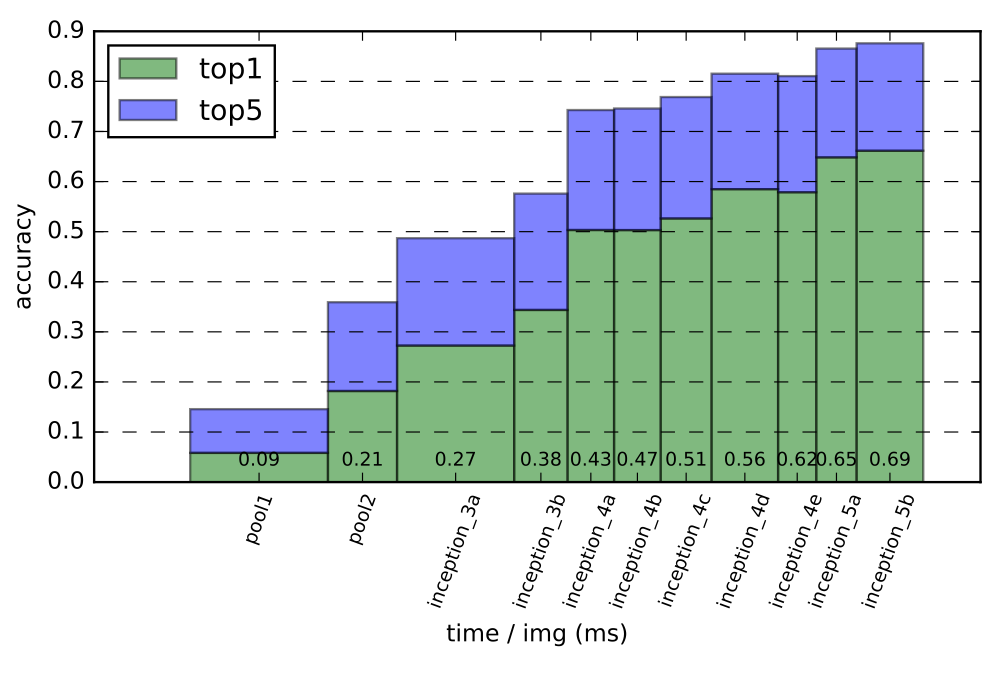

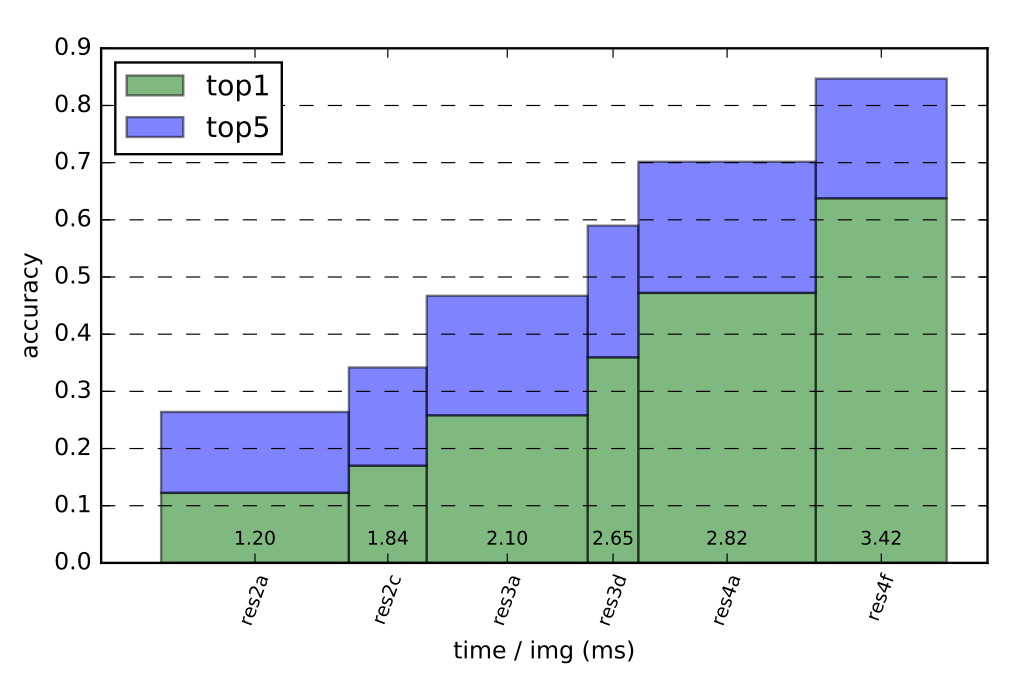

Figure 2: The plots show the accuracy gains at different layers for early exits in GoogLeNet (top) and ResNet50 (bottom).

Network Selection

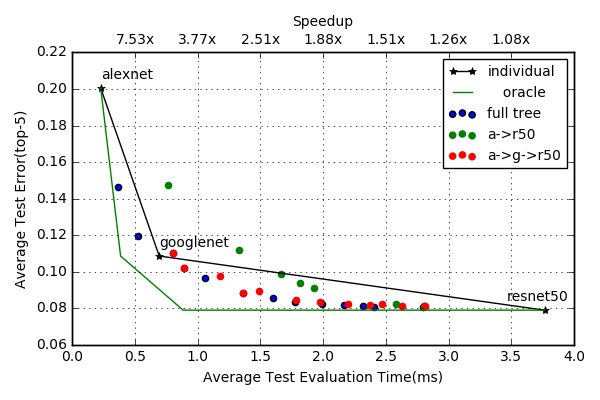

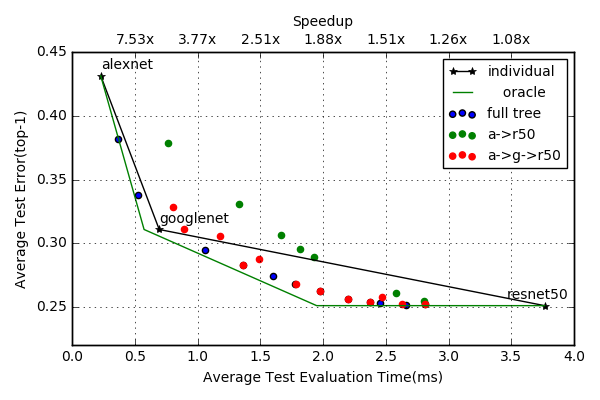

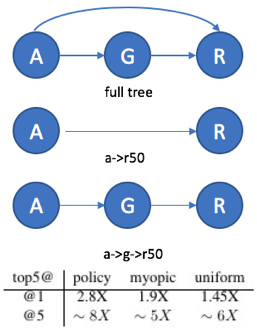

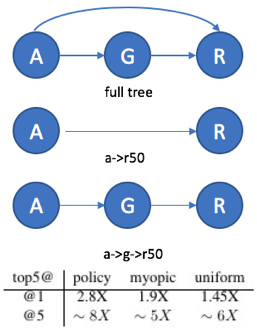

The network selection approach orders multiple pretrained networks in a directed acyclic graph, allowing adaptive decisions on which network to use. For each example, a decision policy selects the cheapest model able to deliver accurate results. Policies are learned bottom-up, optimizing for speed and accuracy on datasets such as ImageNet, with substantial speed-ups reported when switching dynamically across networks.
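The selection process can be sketched as a walk over the graph, where each node's policy either accepts the current prediction or names a more expensive network to try next. This is an illustrative sketch, not the paper's implementation: it assumes each network returns a label and a confidence score, and the thresholds and toy networks are invented for the example.

```python
def select_and_predict(x, networks, policies, start):
    """Follow per-node policies through a DAG of networks.

    networks: dict name -> callable(x) returning (label, confidence).
    policies: dict name -> callable(confidence) returning the name of the
              next network to evaluate, or None to accept the prediction.
              Nodes without a policy always accept.
    Returns (label, list of networks evaluated in order).
    """
    node = start
    visited = []
    while True:
        label, confidence = networks[node](x)
        visited.append(node)
        policy = policies.get(node)
        next_node = policy(confidence) if policy else None
        if next_node is None:
            return label, visited
        node = next_node  # The graph is acyclic, so the walk terminates.


# Hypothetical stand-ins: A is cheapest, R is most expensive.
networks = {
    "A": lambda x: ("cat", x),
    "G": lambda x: ("cat", min(1.0, x + 0.3)),
    "R": lambda x: ("dog", 1.0),
}
policies = {
    "A": lambda c: None if c >= 0.9 else ("G" if c >= 0.5 else "R"),
    "G": lambda c: None if c >= 0.8 else "R",
}

print(select_and_predict(0.95, networks, policies, start="A"))
print(select_and_predict(0.2, networks, policies, start="A"))
```

Allowing a jump from the cheapest network straight to the most expensive one, as in the toy policy for `A`, avoids paying for an intermediate network on examples that are clearly hard.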

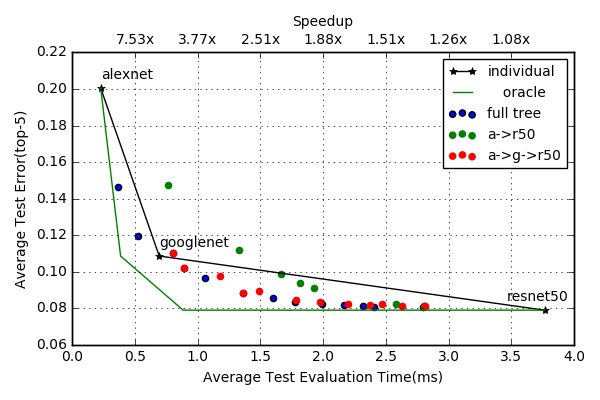

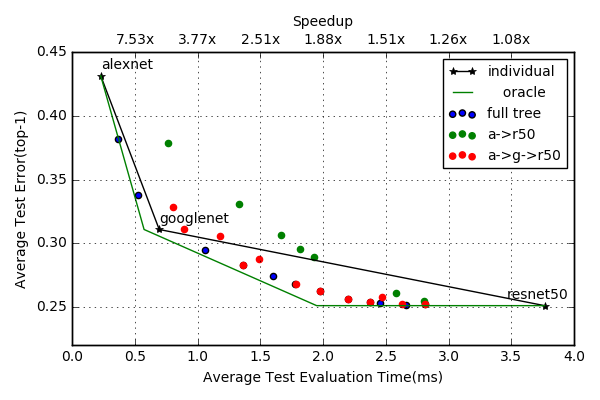

Figure 3: Performance of network selection policy on ImageNet (left: top-5 error; right: top-1 error). Our full adaptive system (denoted with blue dots) significantly outperforms any individual network for almost all budget regions and is close to the performance of the oracle.

Experimental Evaluation

The methods were evaluated on the ImageNet 2012 classification dataset using AlexNet, GoogLeNet, and ResNet50 models. Results indicated substantial reductions in computational time, with up to 2.8x speed-ups possible with minimal loss in accuracy. The advantage is most pronounced for network selection: because evaluation time grows much faster than accuracy across architectures (Figure 1), routing easy examples to a cheaper network saves the most time.

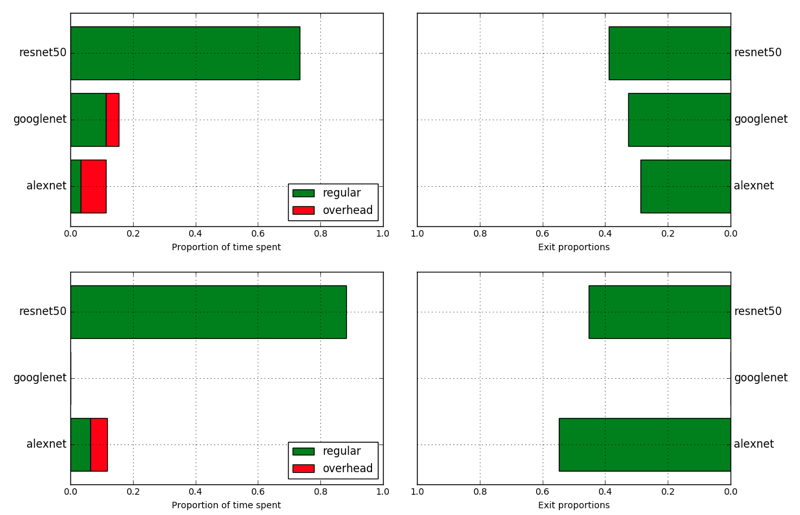

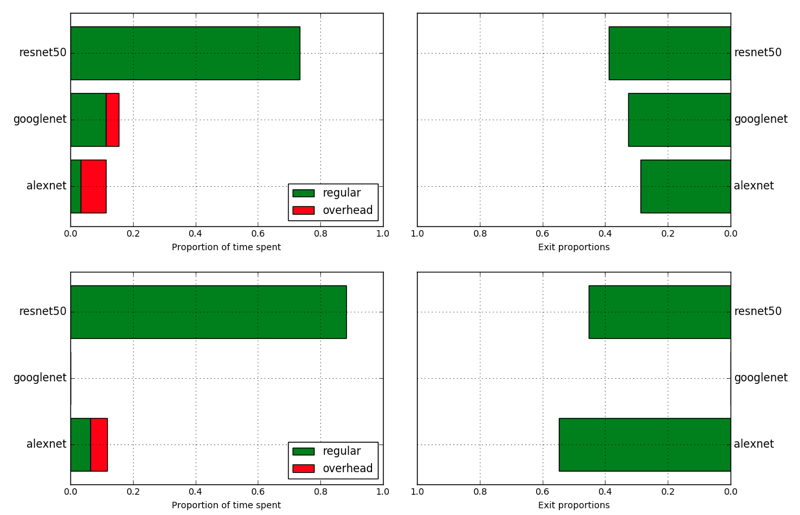

Figure 4: (Left) Different network selection topologies that we considered. Arrows denote possible jumps allowed to the policy. A, G and R denote AlexNet, GoogLeNet and ResNet50, respectively. (Right) Statistics for proportion of total time spent on different networks and proportion of samples that exit at each network. Top row is sampled at 2.0 ms and bottom row is sampled at 2.8 ms system evaluation.
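The link between the fraction of samples exiting at each network and the overall speed-up can be made concrete with a short calculation. The sketch below assumes the average evaluation time is the exit fractions weighted by the cumulative time needed to reach each exit. The function names and all numbers are made up for illustration and are not measurements from the paper.

```python
def expected_time(exit_fractions, cumulative_times):
    """Average per-example time given where examples exit.

    exit_fractions[k]:   fraction of examples that stop at exit k (sums to 1).
    cumulative_times[k]: total time spent by an example that stops at exit k.
    """
    if abs(sum(exit_fractions) - 1.0) > 1e-9:
        raise ValueError("exit fractions must sum to 1")
    return sum(p * t for p, t in zip(exit_fractions, cumulative_times))


def speedup(baseline_time, exit_fractions, cumulative_times):
    """Ratio of a fixed baseline time to the adaptive system's average time."""
    return baseline_time / expected_time(exit_fractions, cumulative_times)


# Hypothetical values: half of the examples stop after 1.0 time units,
# a quarter after 2.0, and a quarter after 4.0.
fractions = [0.5, 0.25, 0.25]
times = [1.0, 2.0, 4.0]
print(expected_time(fractions, times))   # 2.0
print(speedup(4.0, fractions, times))    # 2.0
```

Under this accounting, the speed-up depends both on how many examples exit early and on how much cheaper the early exits are, which is why the time and sample proportions are reported separately.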

Conclusion

The proposed adaptive inference strategies offer significant reductions in test-time computational demands for DNNs while maintaining their accuracy. The research supports deployment in resource-constrained environments, such as mobile and IoT devices, where computation costs are critical. Future developments may involve hybrid models and integration with distributed processing systems to leverage fog computing capabilities.

Overall, the work shows that adaptive systems can make better use of computational resources, which matters for deploying AI efficiently on constrained devices.