- The paper demonstrates that evolutionary algorithms can evolve novel plasticity rules and neural architectures that enable lifelong adaptive learning.

- It shows that integrating evolution with neuromodulation and indirect encoding leads to scalable, robust performance across dynamic environments.

- The study highlights methodological challenges in search spaces and abstraction, setting a clear agenda for future EPANN research.

Evolved Plastic Artificial Neural Networks: Foundations, Achievements, and Future Directions

Introduction

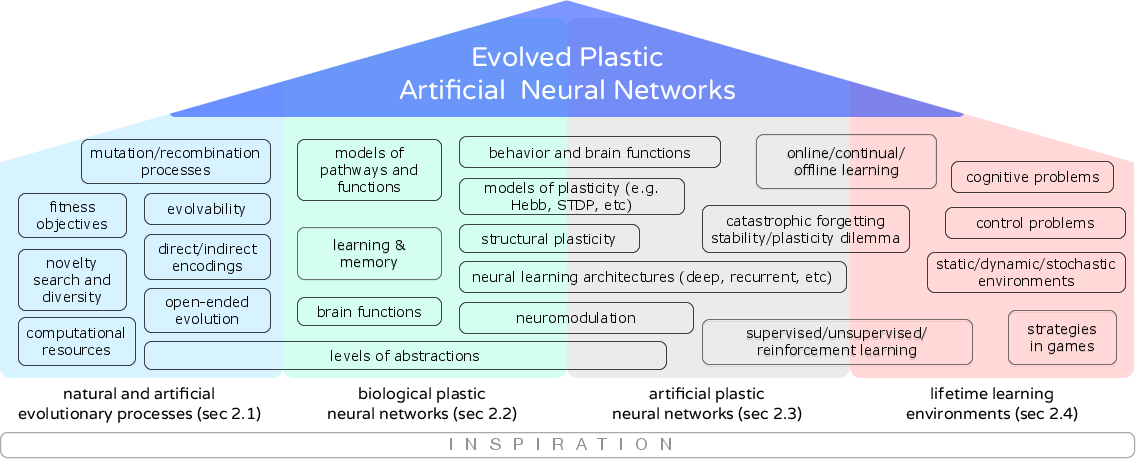

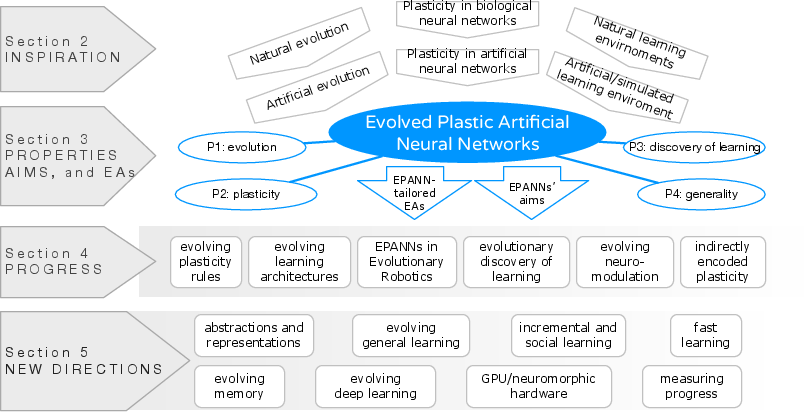

This essay provides an in-depth technical analysis of "Born to Learn: the Inspiration, Progress, and Future of Evolved Plastic Artificial Neural Networks" (arXiv:1703.10371). The paper systematizes the field of Evolved Plastic Artificial Neural Networks (EPANNs): artificial neural systems that use evolutionary algorithms to discover and optimize plasticity rules, architectures, and adaptive behavior. EPANNs draw on three central sources of biological inspiration (evolution, development, and neural plasticity) and transfer them to artificial agents to achieve lifelong learning. The work traces the progression of theoretical and empirical frameworks, analyzes methodological challenges, and sets an agenda for future research.

Conceptual Foundations and Motivations

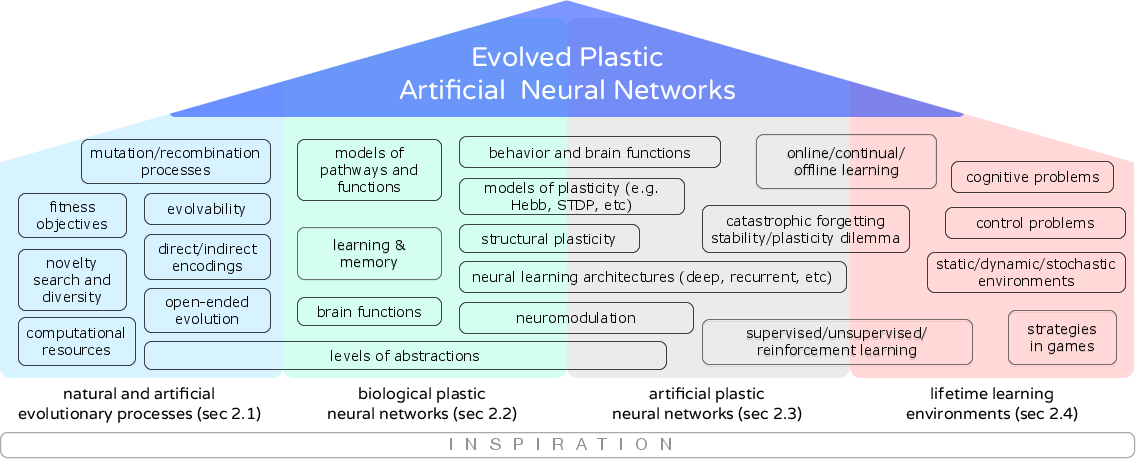

EPANNs arise from combining evolutionary computation with neuroplasticity to automate the design of neural systems capable of adaptive, incremental, and general learning. The paper distinguishes EPANNs from traditional neuroevolution by requiring that evolved agents keep adapting during their lifetime, rather than relying only on parameters fixed by evolution. The biological inspiration encompasses: (1) natural evolutionary mechanisms, (2) diverse forms of neural and structural plasticity, (3) varied learning environments, and (4) complex genotype-to-phenotype mappings.

Figure 1: A comprehensive overview of EPANN inspiration sources, illustrating intersections between neurobiology, computation, and artificial life.

EPANNs are characterized by four principal properties:

- Evolutionary process as meta-learning

- Intrinsic neural plasticity mechanisms

- Synergistic discovery of learning via evolution and plasticity

- Generality with respect to tasks, architectures, and learning rules

The field grapples with key open problems: setting up evolutionary pressures that favor the emergence of learning; abstraction trade-offs in neural modeling; search over vast parameter spaces; and distinguishing genuine generalization from overfitting in lifelong adaptation.

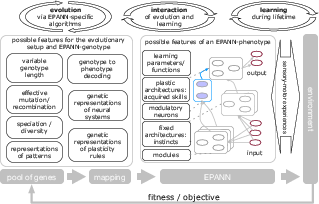

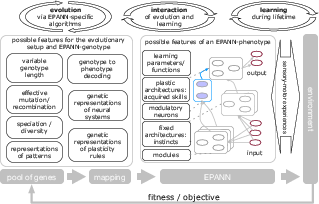

EPANN System Architecture and Evolutionary Design

The canonical EPANN setup integrates simulated evolution of genotypes with in-lifetime learning and environmental interaction. Evolution can optimize not only weights and static topologies but also adaptive mechanisms such as plasticity rules, modulatory pathways, and protocols for structural change.

Figure 2: Evolving EPANNs through simulated evolutionary cycles, in-lifetime plastic adaptation, and environmental feedback.
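The two nested timescales described above (evolution across generations, plasticity within a lifetime) can be illustrated with a deliberately tiny sketch. It assumes a one-weight "network", a target that is redrawn at random every lifetime, and a delta-rule update whose learning rate `eta` is part of the genome; `lifetime`, `fitness`, `evolve`, and all constants are hypothetical names chosen for illustration, not the paper's setup. Because no fixed weight can match a target that changes between lifetimes, the only way for selection to improve fitness is to discover plasticity.

```python
import random

LIFETIME_STEPS = 20       # hypothetical lifetime length
LIFETIMES_PER_EVAL = 10   # lifetimes sampled per fitness evaluation


def lifetime(genome, rng):
    """One lifetime: the weight starts at the inherited w0 and then changes
    under a plastic update whose learning rate eta is also inherited."""
    w0, eta = genome
    target = rng.uniform(-1.0, 1.0)  # differs every lifetime, unknown at birth
    w, x = w0, 1.0
    sq_err = 0.0
    for _ in range(LIFETIME_STEPS):
        err = target - w * x
        sq_err += err * err
        w += eta * err * x  # in-lifetime learning (delta rule)
    return -sq_err / LIFETIME_STEPS  # higher is better


def fitness(genome, rng):
    """Average lifetime performance over several freshly sampled environments."""
    return sum(lifetime(genome, rng) for _ in range(LIFETIMES_PER_EVAL)) / LIFETIMES_PER_EVAL


def evolve(generations=50, pop_size=30, n_elite=6, sigma=0.1, seed=0):
    """Truncation selection with Gaussian mutation over (w0, eta) genomes."""
    rng = random.Random(seed)
    # Start from non-plastic genomes (eta = 0): learning must be discovered.
    pop = [(rng.gauss(0.0, 0.5), 0.0) for _ in range(pop_size)]
    for _ in range(generations):
        elite = sorted(pop, key=lambda g: fitness(g, rng), reverse=True)[:n_elite]
        children = [
            tuple(v + rng.gauss(0.0, sigma) for v in rng.choice(elite))
            for _ in range(pop_size - n_elite)
        ]
        pop = elite + children
    return max(pop, key=lambda g: fitness(g, rng))
```

Calling `evolve()` should return a genome whose `eta` has moved well away from zero: the outer loop never sees the targets, yet it selects for a learning rule that lets each individual find its own target during its lifetime.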

Desirable properties of evolutionary algorithms for EPANNs include variable-length genotypes that can scale in complexity, indirect encoding (e.g., compositional pattern producing networks, CPPNs), preservation of regularities and symmetries, a robust genotype-phenotype mapping that supports effective recombination, and explicit encoding of diverse plasticity rules. Diversity maintenance, low selection pressure, and open-endedness are critical for supporting the emergence of complex adaptive mechanisms. Methodologically, EPANN research draws heavily on the neuroevolution literature, e.g., NEAT, HyperNEAT, and other indirect encoding techniques.

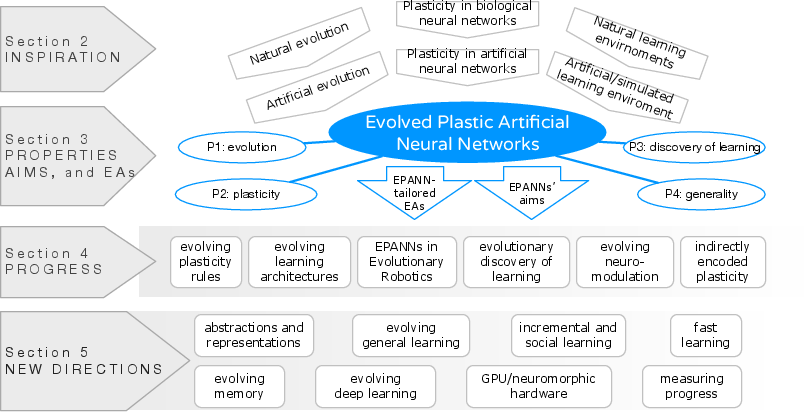

Empirical Exploration: Progress and Results

The field's development spans several axes, reflected in both the experimental paradigm and technical findings.

Figure 3: Disciplinary structure and methodological axes of EPANN research, illustrating progression from rule evolution to high-level system synthesis.

Evolution of Plasticity Rules and Architectures

Initial studies demonstrated that evolutionary search can rediscover canonical plasticity rules (e.g., Hebb, Widrow-Hoff). Later empirical results show that evolution can also produce new, non-trivial plasticity rules suited to idiosyncratic environments, outperforming hand-designed systems when operating over extended and heterogeneous task distributions.
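A common way to make plasticity rules evolvable in this literature is to fix a parameterized family and let evolution search its coefficients. The sketch below assumes a generalized Hebbian rule with four coefficients (often referred to as an "ABCD" rule) plus a learning rate; `RuleGenome`, `plastic_update`, and `covariance_rule` are hypothetical names for illustration. It shows how familiar rules appear as particular points in the coefficient space.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RuleGenome:
    """Hypothetical genome encoding a generalized Hebbian rule:
    dw = eta * (A*pre*post + B*pre + C*post + D)."""
    eta: float
    A: float
    B: float = 0.0
    C: float = 0.0
    D: float = 0.0


def plastic_update(w: float, pre: float, post: float, g: RuleGenome) -> float:
    """Apply one step of the encoded rule to a single synapse."""
    return w + g.eta * (g.A * pre * post + g.B * pre + g.C * post + g.D)


# Plain Hebbian learning is the special case A = 1, B = C = D = 0.
HEBB = RuleGenome(eta=0.1, A=1.0)


def covariance_rule(eta: float, theta: float) -> RuleGenome:
    """(pre - theta) * (post - theta) expanded into the same four-term form."""
    return RuleGenome(eta=eta, A=1.0, B=-theta, C=-theta, D=theta * theta)
```

Mutation and recombination then act on five real numbers, and any novel rule evolution finds is directly readable from its coefficients. Rules that need an error or target signal, such as Widrow-Hoff, fall outside this family unless extra inputs are added to the rule.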

Evolving learning architectures, rather than only selecting rules, enables co-optimization of network topology, modulatory structures, and adaptive parameters. Such approaches have reported strong generalization and learning performance in time-series, navigation, and robot-control domains.

EPANNs in Adaptive and Robotic Systems

Evolutionary robotics has supplied a rich testbed for EPANNs, revealing the performance advantages of lifelong plasticity, notably in bridging the simulation-to-reality gap and in dynamic, non-stationary environments. An explicit comparison shows that recurrent networks with fixed weights can sometimes match plastic networks in associative tasks; however, evolved plasticity consistently yields superior adaptability and robustness under environmental perturbation.

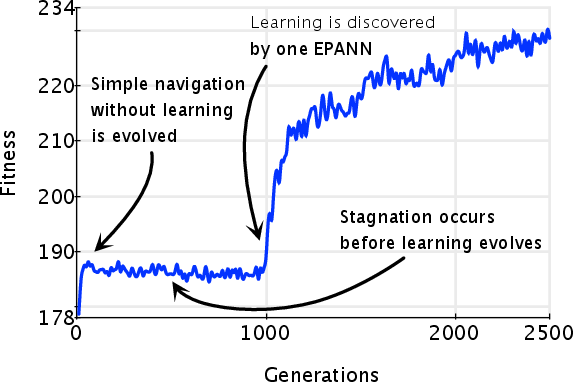

Evolutionary Discovery of Learning and Behavioral Plasticity

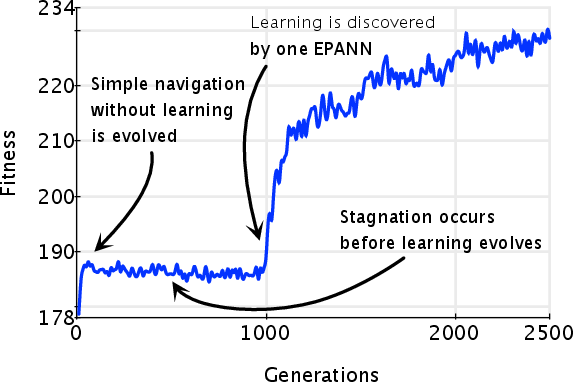

The paper presents compelling evidence that, in non-stationary reward environments, the evolution of learning follows a pattern of punctuated equilibrium: a sudden leap in fitness when adaptive plasticity is discovered, followed by rapid optimization.

Figure 4: Evolutionary trajectory of learning in a non-stationary environment, with a marked jump in fitness as a plasticity-enabled agent emerges.

Analyses of evolutionary stepping stones affirm the critical role of novelty search and low selection pressure in circumventing deception, favoring exploration over exploitation as a precursor to the robust evolution of learning.
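Novelty search replaces task fitness with a measure of behavioral difference. A minimal sketch of the usual scoring step, assuming each individual is summarized by a numeric behavior descriptor (for example a final position) and that novelty is the mean distance to the k nearest neighbors among the current population and an archive; `novelty`, `score_population`, `k`, and `threshold` are hypothetical names and values:

```python
import math


def novelty(behavior, others, k=3):
    """Mean Euclidean distance from `behavior` to its k nearest neighbours."""
    dists = sorted(math.dist(behavior, o) for o in others)[:k]
    return sum(dists) / len(dists) if dists else 0.0


def score_population(behaviors, archive, k=3, threshold=1.0):
    """Novelty-score each individual against the rest of the population plus
    the archive, then archive individuals whose novelty exceeds `threshold`."""
    scores = []
    for i, b in enumerate(behaviors):
        others = behaviors[:i] + behaviors[i + 1:] + archive
        scores.append(novelty(b, others, k))
    archive.extend(b for b, s in zip(behaviors, scores) if s > threshold)
    return scores
```

Selection then ranks individuals by these scores instead of by task fitness, so lineages that behave differently survive even when they are not yet better, which is how low-fitness stepping stones can be kept in the population.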

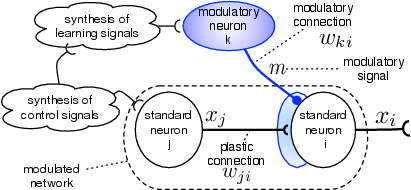

Neuromodulation and Selective Plasticity

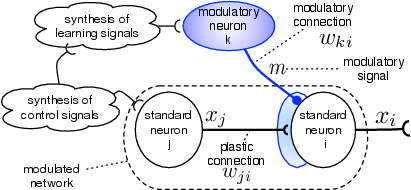

A central claim is that neuromodulatory signalling, analogous to dopamine and other biological neuromodulators, confers evolutionary advantages in both reinforcement-based and reinforcement-free learning environments. Evolved networks consistently exploit modulatory neurons to gate plasticity, improving search efficiency and stability.

Figure 5: Schematic of local learning regulated by an evolved modulatory neuron, separating the learning signal from the activation signal.

Neuromodulation has empirical and theoretical implications for addressing catastrophic forgetting, evolvability, and selective adaptation, and can be flexibly evolved as both a topological and functional attribute.
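The separation between activation signal and learning signal can be made concrete with a toy neuron. This sketch assumes a single output neuron with Hebbian plastic weights and one modulatory neuron whose tanh output multiplies the Hebbian term; `modulated_step`, its arguments, and `eta` are hypothetical, and the exact update used in the surveyed models may differ.

```python
import math


def modulated_step(pre, w, mod_in, w_mod, eta=0.5):
    """One step of a toy neuron whose plasticity is gated by a modulatory neuron.

    pre    -- presynaptic activations feeding the output neuron
    w      -- plastic weights from `pre` to the output neuron
    mod_in -- inputs to the modulatory neuron (e.g. a reward signal)
    w_mod  -- weights into the modulatory neuron (assumed evolved, fixed)
    """
    post = math.tanh(sum(wi * xi for wi, xi in zip(w, pre)))  # activation signal
    m = math.tanh(sum(a * b for a, b in zip(w_mod, mod_in)))  # learning signal
    # m never changes `post`; it only scales the Hebbian term pre * post.
    new_w = [wi + eta * m * xi * post for wi, xi in zip(w, pre)]
    return post, m, new_w
```

With a zero modulatory input the weights stay frozen, a positive input strengthens co-active synapses, and a negative one weakens them. This selective, sign-controllable plasticity is what lets evolution decide when and where learning happens.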

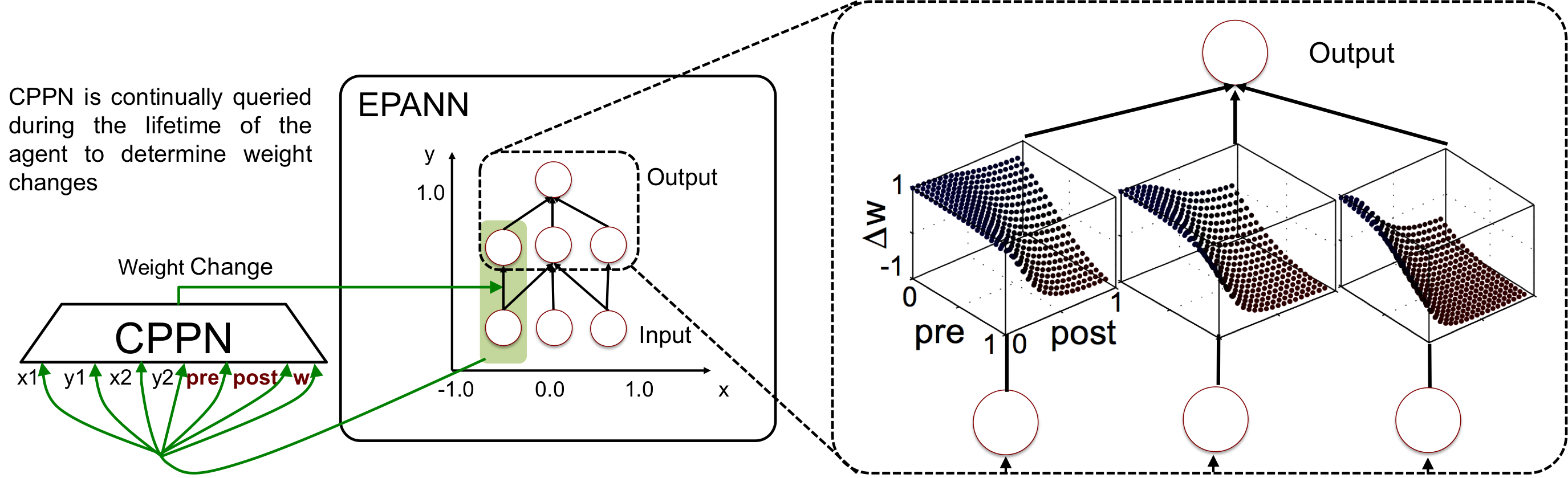

Indirect Encodings and Large-Scale Patterned Plasticity

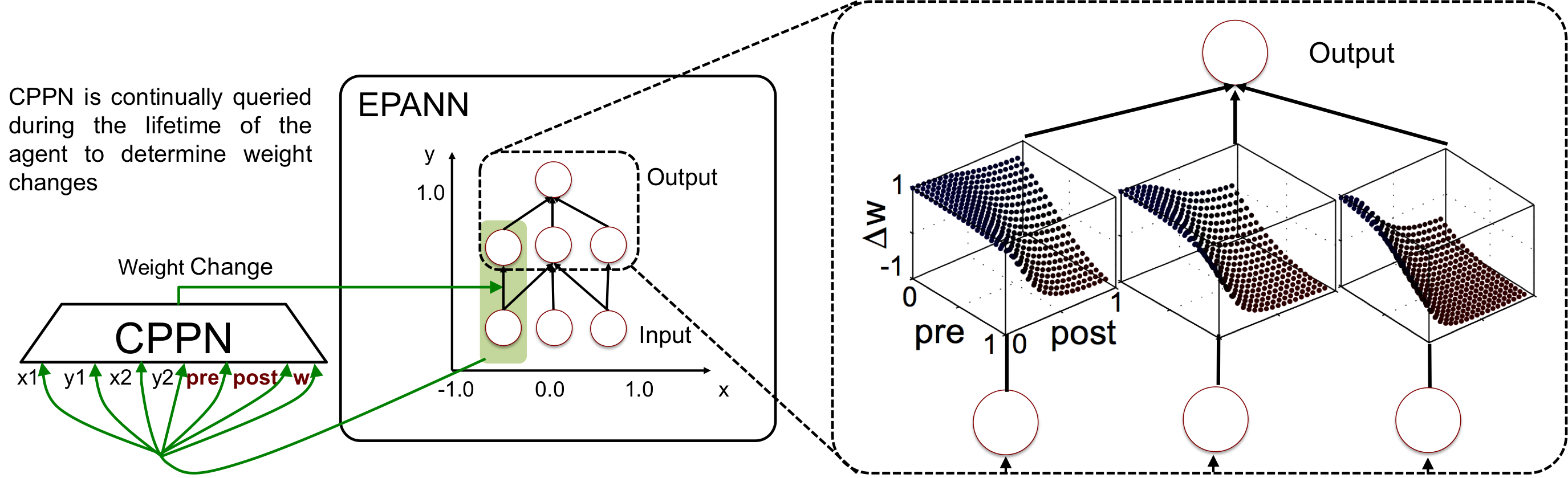

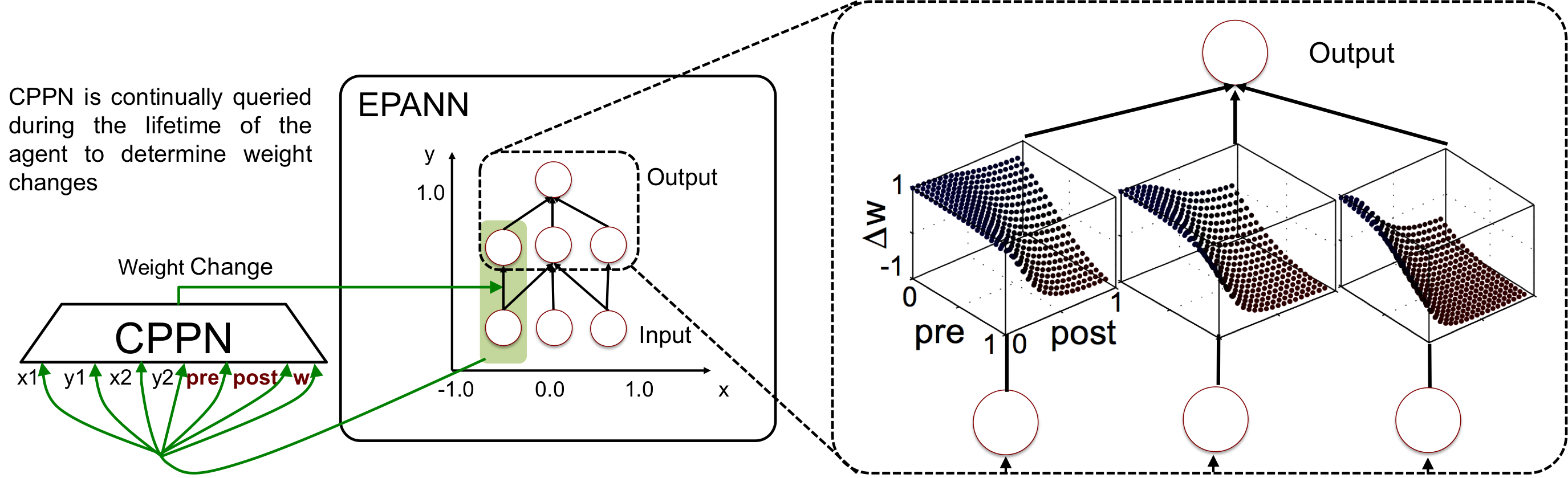

To scale EPANNs to high-dimensional, complex domains, indirect encoding methods such as adaptive HyperNEAT have been developed. These approaches allow compact genotypes to specify structured, regular, and modular connectivity and plasticity patterns.

Figure 6: Indirect encoding of plasticity rules for large-scale ANNs using geometric substrates and evolved CPPNs, supporting nonlinear and spatially organized adaptation.

This framework supports the emergence of topographical maps, modular learning rules, and localization of plastic or modulatory dynamics, critical for scalable adaptive intelligence.
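In the spirit of adaptive HyperNEAT, the sketch below queries a function of neuron coordinates once per connection to obtain both a weight and a learning rate. Here `toy_cppn` is a hand-written, hypothetical stand-in for an evolved CPPN (a real one is itself an evolved network of mixed activation functions), and `build_substrate` with its grid layout is likewise assumed for illustration.

```python
import math


def toy_cppn(x1, y1, x2, y2):
    """Hypothetical stand-in for an evolved CPPN: maps the coordinates of a
    source and a target neuron to (weight, learning_rate) for that connection."""
    d = math.hypot(x2 - x1, y2 - y1)
    weight = math.exp(-d * d)                             # strong local links
    learning_rate = 0.5 * (1.0 + math.sin(math.pi * x1))  # banded plasticity
    return weight, learning_rate


def build_substrate(n):
    """Lay n x n neurons on a grid over [-1, 1]^2 (n >= 2) and query the CPPN
    for every ordered pair, giving n**4 weights and n**4 learning rates."""
    step = 2.0 / (n - 1)
    coords = [(-1.0 + i * step, -1.0 + j * step) for i in range(n) for j in range(n)]
    weights, rates = {}, {}
    for a, (x1, y1) in enumerate(coords):
        for b, (x2, y2) in enumerate(coords):
            weights[a, b], rates[a, b] = toy_cppn(x1, y1, x2, y2)
    return coords, weights, rates
```

The genotype is only the CPPN, regardless of how many neurons the substrate has; locality and symmetry in the weights, and spatial bands in plasticity, follow from the geometry rather than being specified connection by connection.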

Forward-Looking Perspectives

The authors provide a structured examination of unsolved challenges and emergent opportunities:

- Abstraction and Representation: Theoretical emphasis on appropriate biological and functional abstraction levels; representation learning applied to rules, structure, and genotype encoding spaces.

- Generalizable and Continual Learning: Emphasis on moving from specific behavioral switches to domain-agnostic, generalized learners and stabilizing incremental knowledge acquisition.

- Modularity and Memory: Evolution of isolation between modules to mitigate forgetting, integration with memory-augmented architectures such as Neural Turing Machines, and developmental dynamics for memory consolidation.

- Deep Learning and EPANN Synergies: Recent advances in deep neuroevolution and indirect encoding point toward hybrid systems where EPANNs are used to seed, configure, or meta-learn components of large-scale deep networks.

- Hardware and Scalability: The trajectory toward GPU and neuromorphic deployments, alongside algorithmic and representational advances, promises to enable real-time, embodied EPANN adaptation.

Conclusion

The synthesis presented in this paper identifies EPANNs as a formal class of systems that unify multiple AI and adaptive behavior subfields under the paradigm of evolvable, plastic, and lifelong learning neural networks. Empirical results highlight the field’s capacity for autonomously discovering and optimizing adaptive intelligence across diverse environments. The technical agenda underscores immediate challenges in scaling, abstraction, and evaluation, while the theoretical implications span both computational understanding of biological intelligence and practical deployment of open-ended, general AI. These findings position EPANN research as central to the future trajectory of adaptive and self-improving artificial systems.