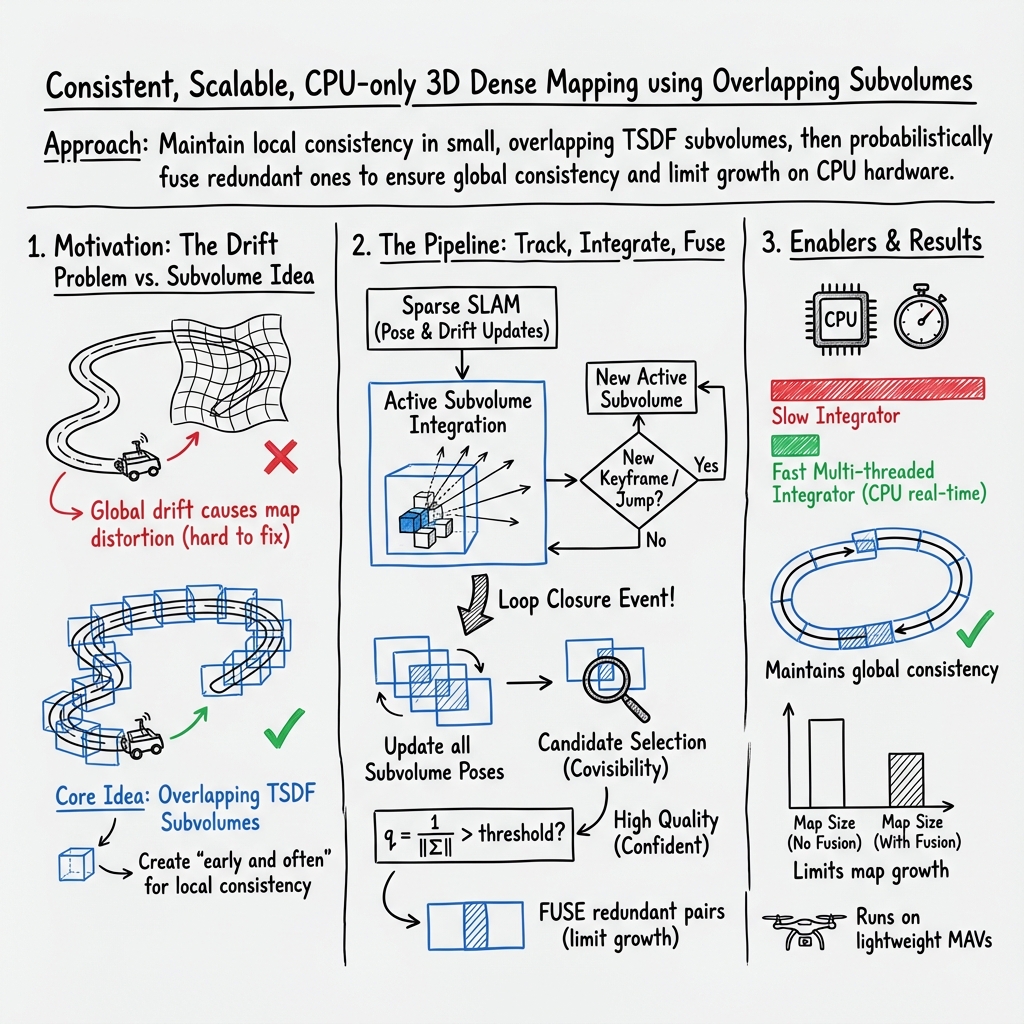

C-blox: A Scalable and Consistent TSDF-based Dense Mapping Approach

Abstract: In many applications, maintaining a consistent dense map of the environment is key to enabling robotic platforms to perform higher-level decision making. Several works have addressed the challenge of creating precise dense 3D maps from visual sensors that provide depth information. However, over longer missions, reconstructions can easily become inconsistent due to accumulated camera tracking error and delayed loop closure. Without explicitly addressing map consistency, recovery from such distortions tends to be difficult. We present a novel system for dense 3D mapping that addresses the challenge of building consistent maps while remaining scalable. Central to our approach is the representation of the environment as a collection of overlapping TSDF subvolumes. These subvolumes are localized through feature-based camera tracking and bundle adjustment. Our main contribution is a pipeline for identifying stable regions in the map and fusing the contributing subvolumes. This approach allows us to reduce map growth while still maintaining consistency. We evaluate the proposed system on a publicly available dataset and a simulation engine, and demonstrate its efficacy for building consistent and scalable maps. Finally, we demonstrate our approach running in real time on board a lightweight MAV.

Knowledge Gaps

Below is a single, focused list of the paper’s unresolved knowledge gaps, limitations, and open questions, written to guide future research.

- Subvolume fusion quality metric: The choice of q(i, j) = 1 / ||Σ_{i|j}|| is ad hoc; the paper claims proportionality to ellipsoid volume but uses the matrix 2-norm rather than the determinant or principal axes. A more principled, SE(3)-aware uncertainty measure (e.g., using the determinant, eigenvalues, or a pose-uncertainty metric separating rotation/translation) is needed, along with guidelines for threshold selection.

- Conditional covariance extraction scalability: The per-candidate constrained AMD reordering and Cholesky factorization are performed for each subvolume pair, but the computational complexity and latency as the number of subvolumes grows are not characterized. Real-time bounds, worst-case behavior, and comparisons to alternative marginal covariance methods (e.g., iSAM2/Bayes tree-based queries) are missing.

- Robustness to perceptual aliasing: Covisibility-based candidate selection may propose pairs from different but visually similar places (e.g., repetitive industrial structures). There is no mechanism or evaluation of false fusion due to aliasing, nor integration of appearance-based place recognition to guard against it.

- Subvolume creation policy: New subvolumes are created “early and often” based on heuristics (max keyframes or “significant change”), but there is no principled criterion or optimization of this policy with respect to drift bounds, map size, or fusion success rates. How to adaptively tune creation thresholds online remains open.

- Intra-subvolume consistency assumptions: The approach assumes data within a subvolume is locally consistent, yet does not quantify acceptable drift or camera tracking error within a subvolume. There is no metric or detector for when intra-volume distortion exceeds a safe limit or for triggering corrective re-integration.

- Fusion operator correctness: Subvolume fusion is a simple voxel-level trilinear interpolation/integration of one TSDF into another. There is no treatment of conflicting observations, outliers, re-weighting, or uncertainty-aware blending across subvolumes; nor analysis of artifacts at volume boundaries (smoothing, ghosting, topology changes).

- Fast integrator side effects: The lock-free “early termination” strategy for ray casting introduces biased free-space carving and potential gaps behind surfaces. The impact on surface fidelity, free-space completeness, and planning safety, and the sensitivity to scene geometry and point cloud density, are not formally analyzed.

- Integration time budget truncation: Early termination when exceeding a per-frame time budget may drop rays non-uniformly. The completeness, consistency, and bias introduced by time-budget truncation are not quantified, and adaptive scheduling strategies are unexplored.

- Dynamic scenes: The method assumes static environments; there is no handling of moving objects or transient geometry, nor evaluation of how dynamics affect covisibility, localization covariance, and fusion decisions.

- Long-term scalability: Although fusion reduces map growth, the map can still grow with trajectory length. Memory usage, CPU time, and covariance-extraction cost over long missions (hours/days) in bounded environments are not assessed, and policies for garbage collection or hierarchical summaries are absent.

- Multi-resolution/hierarchical representation: The system fuses volumes into a single TSDF for visualization but does not provide hierarchical/multi-resolution submap management to support scalable storage, streaming, or on-demand refinement.

- Sensor modality generalization: The approach is evaluated with RGB-D and stereo; it does not assess performance with other modalities (e.g., lidar, event cameras), nor mixed-modality fusion impacts on covisibility, uncertainty estimation, and TSDF integration.

- Visual-inertial integration: The system relies on ORB-SLAM2 with some 3D constraints; there is no evaluation with tightly-coupled visual-inertial odometry/SLAM or analysis of how inertial constraints change the conditional covariance extraction and fusion success rates.

- Calibration and synchronization robustness: The MAV setup relies on offline extrinsic calibration and synchronized sensors; there is no analysis of sensitivity to calibration errors, online calibration/drift, or clock offsets, especially for depth-camera rolling shutter effects.

- Loop closure timing and delayed corrections: The fusion policy depends on updated poses after loop closure, but the method does not address delayed or partial loop closures, nor strategies to defer risky fusions until uncertainty reduces, or to “un-fuse” volumes after a bad fusion decision.

- Candidate-pair selection thresholds: Covisibility edge weights and q thresholds are crucial but lack systematic tuning or adaptive control mechanisms; the trade-off between compression and reconstruction accuracy is shown qualitatively but not optimized quantitatively.

- Uncertainty in orientation vs position: The fusion decision uses a single covariance norm without accounting for anisotropy or differing impacts of rotational vs translational uncertainty on TSDF consistency. A pose-uncertainty decomposition could yield better fusion decisions.

- TSDF weighting model: The TSDF stores a generic weight/confidence per voxel, but the paper does not specify or evaluate weighting schemes (e.g., sensor noise models, incidence angle, range-dependent weights), nor how weights are combined during fusion to avoid double-counting or overconfidence.

- Planning-centric metrics: Although TSDFs are chosen for planning, the paper does not evaluate downstream planning performance (e.g., collision rates, path feasibility) nor define map quality metrics directly tied to planning robustness and safety.

- Real-world ground truth: Real-world MAV experiments lack ground-truth geometry; the “ground-truth” in CARLA is itself an approximate reconstruction. A thorough evaluation with true ground truth (e.g., laser-scanned environments) across diverse textures/lighting is missing.

- Parameter sensitivity and generalization: The system evaluates only a few voxel sizes and datasets; there is no study of sensitivity to resolution, truncation distance, TSDF parameters, covisibility thresholds, subvolume sizes, or their generalization across environments.

- Re-integration into existing subvolumes: The method avoids partitioning and allows overlap, but it does not explore conditions under which new frames should be integrated into previously existing subvolumes (after uncertainty reduction) versus creating new ones, nor mechanisms to safely update existing volumes.

- Memory and resource management: The paper reports block counts but lacks detailed profiling of memory footprint, CPU utilization, thread contention, and energy consumption on embedded platforms, especially under long-duration operation.

- Comparative consistency metrics: Evaluation focuses on RMSE to ground truth surfaces; there is no comparison using loop-closure consistency metrics, trajectory/map alignment errors, or deformation metrics versus methods like ElasticFusion/BundleFusion on broader benchmarks.

- Multi-session/multi-agent mapping: The framework’s suitability for multi-session mapping (returning on different days) or multi-agent fusion is not addressed, including managing cross-session covisibility, uncertainty, and identity of subvolumes.

- Failure modes and safeguards: The system lacks documented safeguards against catastrophic fusions (e.g., probabilistic risk models, rollback/un-fuse operations, audit trails), and does not present detection/mitigation strategies for incorrect fusion decisions.
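
To make the concern in the first bullet (and the related orientation-versus-position bullet) concrete, here is a minimal sketch comparing the quoted metric q(i, j) = 1 / ||Σ_{i|j}|| against two of the alternatives the list suggests. It is an illustration under stated assumptions, not the paper's implementation: the function names, the 6x6 covariance layout (rotation block first, translation block second), and the example values are all hypothetical.

```python
import numpy as np


def fusion_quality_2norm(cov: np.ndarray) -> float:
    """q = 1 / ||Sigma||_2, the form quoted in the list above.

    The matrix 2-norm of a covariance is its largest eigenvalue, so this
    score only sees the single worst direction of uncertainty.
    """
    return 1.0 / np.linalg.norm(cov, 2)


def fusion_quality_volume(cov: np.ndarray) -> float:
    """Alternative score: 1 / sqrt(det(Sigma)).

    sqrt(det(Sigma)) is proportional to the volume of the uncertainty
    ellipsoid, so this score reacts to every principal axis. Note that it
    mixes rotational and translational units if used on a full pose block.
    """
    return 1.0 / np.sqrt(np.linalg.det(cov))


def split_uncertainty(cov: np.ndarray) -> tuple[float, float]:
    """Largest standard deviation in the rotation and translation blocks.

    ASSUMPTION: cov is 6x6 and ordered [rotation (3), translation (3)].
    Cross-covariance between the two blocks is ignored in this sketch.
    """
    rot_block = cov[:3, :3]
    trans_block = cov[3:, 3:]
    # eigvalsh returns eigenvalues in ascending order for symmetric input.
    rot_sigma = float(np.sqrt(np.linalg.eigvalsh(rot_block)[-1]))
    trans_sigma = float(np.sqrt(np.linalg.eigvalsh(trans_block)[-1]))
    return rot_sigma, trans_sigma


# Two hypothetical relative-pose covariances with the same largest eigenvalue.
iso = 1e-2 * np.eye(6)                                   # uncertain in every direction
aniso = np.diag([1e-2, 1e-4, 1e-4, 1e-4, 1e-4, 1e-4])    # uncertain along one axis only

for name, cov in (("isotropic", iso), ("anisotropic", aniso)):
    print(name,
          "q_2norm =", fusion_quality_2norm(cov),
          "q_volume =", fusion_quality_volume(cov),
          "(rot, trans) sigma =", split_uncertainty(cov))
```

In this sketch the two covariances receive the same 2-norm score even though their ellipsoid volumes differ by several orders of magnitude, which is the mismatch the first bullet points out. The rotation/translation split shows one way a fusion decision could apply separate thresholds to each component; how such thresholds should be chosen is exactly the open question raised above.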
