- The paper introduces the Neural Process Network that simulates action dynamics by updating entity states via learned transformations.

- It employs a simulation module with action and entity selectors to dynamically track state changes in procedural text.

- It outperforms baseline models on entity tracking and state change prediction, and is trained with a multi-loss objective.

Simulating Action Dynamics with Neural Process Networks

Introduction

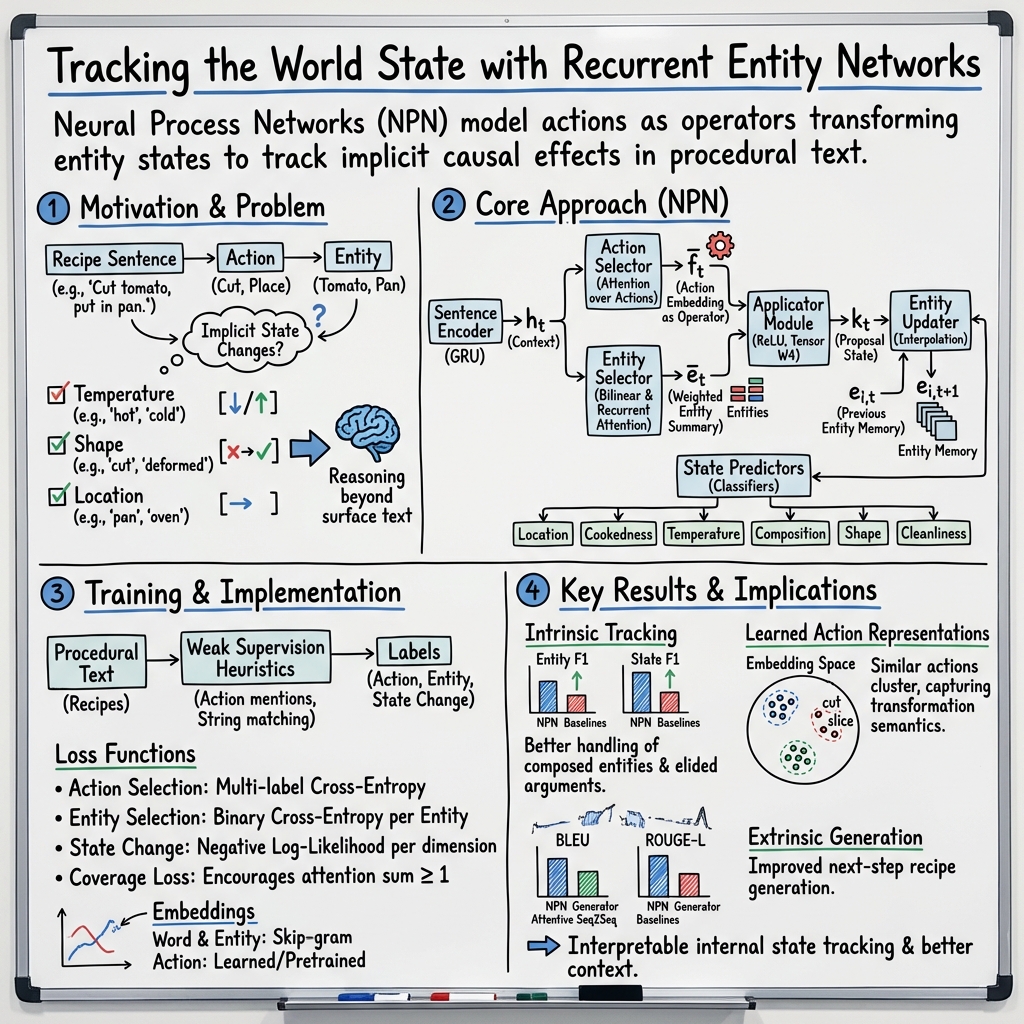

The paper "Simulating Action Dynamics with Neural Process Networks" (1711.05313) presents an approach to understanding procedural text by simulating the effects of actions on entities. The model, called the Neural Process Network (NPN), targets the causal effects that procedural texts such as instructions or narratives leave implicit, and it does so by modeling actions as state transformers. The NPN updates entity states by executing learned action operators, which makes its internal representations more interpretable than those of traditional memory architectures.

Model Architecture

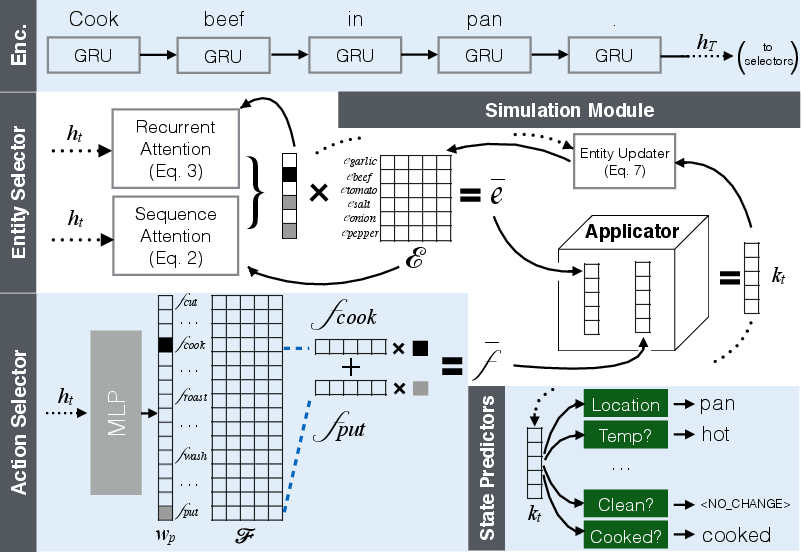

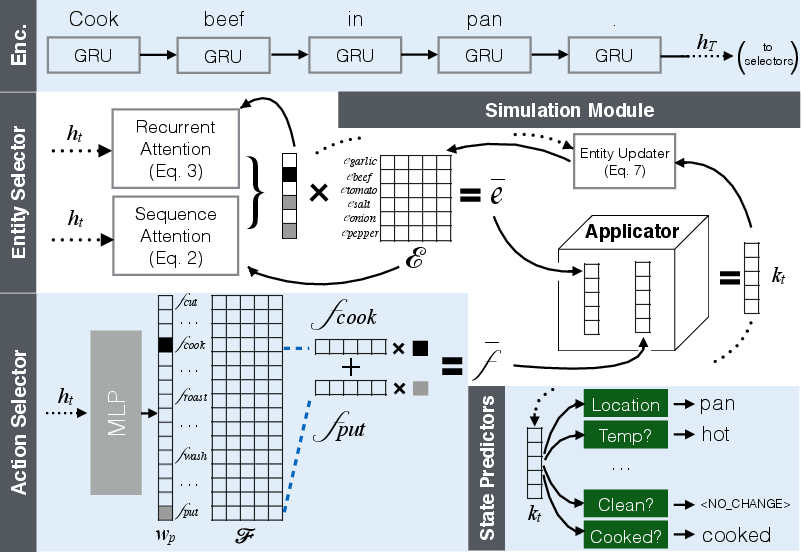

The Neural Process Network is built around a simulation module that processes natural language instructions one sentence at a time. For each sentence, the model selects the actions being performed and the entities being acted upon, then updates the selected entity states in a recurrent memory. Because actions and entities are both stored as indexable embeddings, the model can apply a chosen action to a chosen entity and predict the entity's new state from the inferred causal effect.

Figure 1: Model Summary. The sentence encoder converts a sentence to a vector representation, h_t. The action selector and entity selector use the vector representation to choose the actions that are applied and the entities that are acted upon in the sentence. The simulation module indexes the action and entity state embeddings and applies the transformation to the entities. The state predictors predict the new state of the entities if a state change has occurred.

The simulation of action dynamics involves several discrete components: a sentence encoder, action and entity selectors, a simulation module, and state predictors. The sentence encoder derives vector representations of input sentences, subsequently guiding the action and entity selectors in identifying the relevant embeddings. The simulation module then applies action embeddings to entity vectors, simulating the resultant state changes.
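The flow through these components can be sketched for a single sentence as follows. This is a minimal illustration, not the authors' implementation: the function and parameter names (`simulate_step`, `W_act`, `W_ent`, `W_apply`), the sigmoid selectors, the bilinear applicator, and the interpolation used to write the result back into memory are all assumptions made for the example, and the state predictors are omitted.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def matvec(M, v):
    return [dot(row, v) for row in M]

def weighted_average(weights, vectors):
    # Average the vectors, weighting each by its (positive) selector score.
    total = sum(weights)
    dim = len(vectors[0])
    return [sum(w * vec[j] for w, vec in zip(weights, vectors)) / total
            for j in range(dim)]

def simulate_step(h_t, W_act, W_ent, action_embs, entity_states, W_apply):
    """One simulation step for an encoded sentence h_t (hypothetical sketch)."""
    # Action selector: one score per action, pooled into a single action vector.
    act_weights = [sigmoid(s) for s in matvec(W_act, h_t)]
    f_t = weighted_average(act_weights, action_embs)

    # Entity selector: independent attention score per entity (assumed sigmoid).
    query = matvec(W_ent, h_t)
    attn = [sigmoid(dot(query, e)) for e in entity_states]
    e_bar = weighted_average(attn, entity_states)

    # Applicator: assumed bilinear map f_t^T W_o e_bar per output unit, then ReLU.
    k_t = [max(0.0, dot(f_t, matvec(W_o, e_bar))) for W_o in W_apply]

    # Write back: each entity moves toward k_t in proportion to its attention.
    new_states = [[(1.0 - a) * x + a * k for x, k in zip(e, k_t)]
                  for a, e in zip(attn, entity_states)]
    return attn, new_states

# Toy inputs: 2-dimensional sentence vector, 2 actions, 2 entities.
h_t = [1.0, -1.0]
W_act = [[0.5, 0.0], [0.0, 0.5]]
W_ent = [[1.0, 0.0], [0.0, 1.0]]
action_embs = [[1.0, 0.0], [0.0, 1.0]]
entity_states = [[1.0, 0.0], [0.0, 1.0]]
W_apply = [[[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]]]

attn, new_states = simulate_step(h_t, W_act, W_ent, action_embs,
                                 entity_states, W_apply)
print(attn)
print(new_states)
```

Entities with attention near zero keep their previous state, while strongly attended entities are overwritten by the transformed vector, which is one simple way to realize a recurrent entity memory.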

Training and Evaluation

Training the NPN involves optimizing multiple loss functions, one each for action selection, entity selection, and state change prediction. Because dense annotations are expensive to acquire, the model is trained with weak supervision from heuristically extracted labels. The authors also introduce a coverage loss that encourages every mentioned entity to be treated as relevant, which improves narrative consistency.
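A combined objective of this kind might look like the sketch below. The specific loss forms are assumptions chosen for illustration: binary cross-entropy for the two selectors, categorical cross-entropy for a state change, and a hinge-style coverage penalty that is a stand-in rather than the paper's formula. The names `bce`, `cross_entropy`, `coverage_penalty`, `total_loss`, and `cov_weight` are hypothetical.

```python
import math

def bce(probs, labels, eps=1e-9):
    # Mean binary cross-entropy over independent selector outputs.
    return -sum(y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
                for p, y in zip(probs, labels)) / len(probs)

def cross_entropy(dist, gold_index, eps=1e-9):
    # Negative log-likelihood of the gold end state under a predicted distribution.
    return -math.log(dist[gold_index] + eps)

def coverage_penalty(attn_per_step):
    # Assumed hinge form: penalize entities whose attention, summed over all
    # steps, stays below 1 (i.e. entities the model never clearly selects).
    n_entities = len(attn_per_step[0])
    totals = [sum(step[i] for step in attn_per_step) for i in range(n_entities)]
    return sum(max(0.0, 1.0 - t) for t in totals) / n_entities

def total_loss(action_probs, action_labels, entity_probs, entity_labels,
               state_dist, state_gold, attn_per_step, cov_weight=1.0):
    # Sum of the per-task losses plus the weighted coverage term.
    return (bce(action_probs, action_labels)
            + bce(entity_probs, entity_labels)
            + cross_entropy(state_dist, state_gold)
            + cov_weight * coverage_penalty(attn_per_step))

# Toy example: two steps, two entities; the second entity is never selected.
attn_history = [[1.0, 0.0], [0.0, 0.0]]
loss = total_loss(action_probs=[0.9, 0.1], action_labels=[1, 0],
                  entity_probs=[0.8, 0.2], entity_labels=[1, 0],
                  state_dist=[0.7, 0.2, 0.1], state_gold=0,
                  attn_per_step=attn_history)
print(loss)
```

With weakly supervised labels, steps whose heuristic labels are missing would need to be masked out of the selector and state terms; that bookkeeping is left out here for brevity.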

The model is evaluated intrinsically on entity tracking and state change prediction, and extrinsically on generating upcoming steps in procedural tasks. The reported results show the NPN outperforming baseline models on both entity selection and state change tracking metrics.

Theoretical and Practical Implications

The NPN's ability to model action dynamics within procedural text has both theoretical and practical implications. Theoretically, it supports an action-centric view of procedural language, in which understanding a text means simulating what its actions do, and it provides a foundation for models that interpret causal relations the text leaves implicit. Practically, explicit action representations make the model applicable to domains that depend on tracking entity transformations, such as automated narrative generation and the interpretation of complex instructions.

Future Directions

The NPN also opens several avenues for future research. Promising directions include improving the model's robustness across diverse procedural domains, integrating multimodal data for richer entity representations, and learning action representations from broader datasets. The annotated datasets released publicly with this work are a resource for further research in this area.

Conclusion

The Neural Process Network advances computational understanding of procedural text through neural simulation of action dynamics. The reported results indicate gains in both the interpretability and the accuracy of entity state predictions, making the model a useful tool for machine comprehension of procedural language.