- The paper shows that anisotropic noise in SGD provides a robust mechanism to escape sharp minima by aligning with the Hessian’s dominant eigenvectors.

- It develops a unified theoretical framework linking SGD to Langevin dynamics and introduces a novel indicator, Tr(HΣ), to quantify noise efficiency.

- Empirical results from toy examples and real-world datasets confirm that anisotropic noise leads to flatter minima and better generalization.

The Anisotropic Noise in Stochastic Gradient Descent

"The Anisotropic Noise in Stochastic Gradient Descent: Its Behavior of Escaping from Sharp Minima and Regularization Effects" investigates the impact of anisotropic noise in SGD on optimization dynamics and generalization. This study presents a detailed theoretical framework and empirical evidence to explain the superior ability of SGD to escape sharp minima and achieve robust generalization compared to isotropic noise analogs.

Theoretical Insights

Optimization Dynamics

The paper introduces a generalized optimization dynamics framework that unifies SGD with Langevin dynamics by incorporating unbiased noise into the gradient descent update rule. This formulation reveals critical insights into how noise structure, rather than mere magnitude, affects optimization outcomes:

- The study quantifies how efficiently a dynamics escapes a minimum by measuring the alignment between the noise covariance and the loss-surface curvature through a novel indicator, $\operatorname{Tr}(H\Sigma)$, where $H$ is the Hessian at the minimum and $\Sigma$ is the noise covariance.

- Analytical results demonstrate that anisotropic noise, especially when aligned with major Hessian eigenvectors, markedly enhances escape efficiency from sharp minima compared to isotropic noise, providing a robust theoretical basis for SGD's effectiveness in complex loss landscapes.
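To make the indicator concrete, the following minimal sketch evaluates $\operatorname{Tr}(H\Sigma)$ for two noise covariances with the same total variance. The Hessian values, the noise budget, and the helper name `escape_indicator` are illustrative assumptions, not taken from the paper.

```python
import numpy as np

def escape_indicator(H, Sigma):
    """Return Tr(H @ Sigma) for a Hessian H and a noise covariance Sigma."""
    return float(np.trace(H @ Sigma))

# Hypothetical ill-conditioned Hessian: one sharp and one flat direction.
H = np.diag([100.0, 1.0])
eigvals, eigvecs = np.linalg.eigh(H)   # eigenvalues come back in ascending order
top = eigvecs[:, -1]                   # dominant (sharpest) eigenvector

noise_budget = 2.0                     # Tr(Sigma), identical in both cases
Sigma_iso = (noise_budget / 2) * np.eye(2)           # variance spread evenly
Sigma_aligned = noise_budget * np.outer(top, top)    # variance on the sharp direction only

ind_iso = escape_indicator(H, Sigma_iso)          # 101.0
ind_aligned = escape_indicator(H, Sigma_aligned)  # 200.0
print(ind_iso, ind_aligned)
```

With the same trace, the aligned covariance nearly doubles the indicator here, and the gap widens as the Hessian becomes more ill-conditioned.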

Noise Structure and Regularization

The relationship between SGD noise and loss surface curvature is further explored:

- The work recognizes that the covariance structure of SGD noise is inherently anisotropic, aligning more closely with the Hessian of the loss, particularly in deep neural networks.

- This anisotropic structure enables SGD to navigate towards flatter minima, which empirically correspond to better generalizing model configurations.
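The alignment between gradient noise and curvature can be checked numerically on a small problem. The sketch below uses least-squares regression rather than a deep network, with synthetic data and feature scales chosen purely for illustration. It assumes the isotropic reference is $\bar{\Sigma} = (\operatorname{Tr}\Sigma / d)\, I$, which has the same trace as $\Sigma$.

```python
import numpy as np

rng = np.random.default_rng(0)
n, d = 2000, 5

# Synthetic, ill-conditioned features (hypothetical scales).
scales = np.array([3.0, 1.0, 0.5, 0.2, 0.1])
X = rng.normal(size=(n, d)) * scales
theta_true = rng.normal(size=d)
y = X @ theta_true + 0.5 * rng.normal(size=n)

# Minimizer of the mean loss (1/n) * sum_i 0.5 * (x_i . theta - y_i)^2.
theta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
residuals = X @ theta_hat - y
per_sample_grads = residuals[:, None] * X      # row i is the gradient of sample i

H = X.T @ X / n                                # Hessian of the mean loss
g_mean = per_sample_grads.mean(axis=0)         # ~0 at the minimizer
Sigma = per_sample_grads.T @ per_sample_grads / n - np.outer(g_mean, g_mean)
Sigma_bar = (np.trace(Sigma) / d) * np.eye(d)  # isotropic noise with equal trace

tr_aniso = float(np.trace(H @ Sigma))
tr_iso = float(np.trace(H @ Sigma_bar))
print(tr_aniso, tr_iso)
```

In this setting the gradient covariance is roughly proportional to the Hessian, so `tr_aniso` exceeds `tr_iso`. Whether the same holds for a given deep network has to be measured rather than assumed.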

Practical Implications

The insights presented are vital for designing optimization strategies in neural networks:

- An understanding of noise-induced dynamics can inform learning rate and batch size choices, optimizing SGD's traversal of the loss landscape.

- Proposals are made to develop new optimizers that enhance generalization by intentionally adding noise aligned with critical directions dictated by the Hessian.
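One way batch size enters is through the scale of the gradient noise. For minibatches sampled with replacement, the covariance of the minibatch gradient is the per-sample covariance divided by the batch size, and the noise in the parameter update is further scaled by the square of the learning rate. The sketch below checks the batch-size part of this standard fact empirically; the per-sample gradients are random placeholders, and the function names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
n, d = 500, 3
# Placeholder per-sample gradients with anisotropic spread (not from a real model).
per_sample_grads = rng.normal(size=(n, d)) * np.array([2.0, 1.0, 0.3])

def full_cov(g):
    """Covariance of the per-sample gradients over the whole dataset."""
    centered = g - g.mean(axis=0)
    return centered.T @ centered / len(g)

def empirical_minibatch_cov(g, batch_size, n_draws, rng):
    """Covariance of minibatch-mean gradients, sampled with replacement."""
    idx = rng.integers(0, len(g), size=(n_draws, batch_size))
    batch_means = g[idx].mean(axis=1)          # shape (n_draws, d)
    return np.cov(batch_means, rowvar=False)

Sigma = full_cov(per_sample_grads)
results = {}
for B in (4, 16):
    measured = np.trace(empirical_minibatch_cov(per_sample_grads, B, 20000, rng))
    predicted = np.trace(Sigma) / B
    results[B] = (float(measured), float(predicted))
    print(B, measured, predicted)
```

Changing the batch size rescales the whole covariance without changing its shape, so it tunes the noise magnitude while the anisotropy comes from the data and the model.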

Experimental Validation

Toy and Neural Network Experiments

Empirical evaluations support the theoretical propositions:

- 2D Toy Example: Dynamics with different noise structures escape from the sharp minimum to the flat one at dramatically different rates, validating the proposed indicator's predictive power.

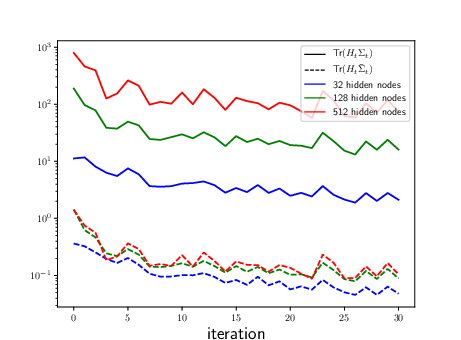

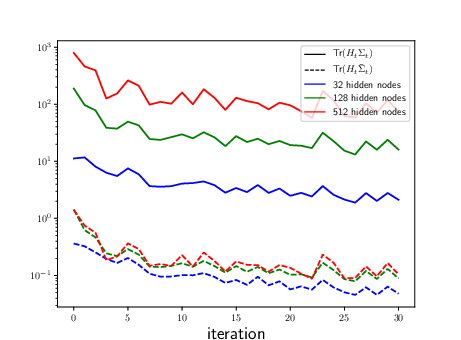

- Neural Networks: Experiments on one-hidden-layer networks show that increasing the number of hidden nodes amplifies the benefits of anisotropic noise, corroborating the analytical results regarding ill-conditioning and SGD noise.
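The qualitative effect in the toy setting can be reproduced with a short simulation. The sketch below is not the paper's 2D landscape: it assumes a single quadratic basin $L(\theta) = \tfrac{1}{2}\theta^\top H \theta$, starts at the minimum, and measures the mean loss increase after a few noisy gradient steps of the form $\theta_{t+1} = \theta_t - \eta \nabla L(\theta_t) + \epsilon_t$ with $\epsilon_t \sim \mathcal{N}(0, \Sigma)$. The Hessian, learning rate, and noise levels are illustrative choices.

```python
import numpy as np

def mean_loss_gain(H, Sigma, lr, n_steps, n_trials, rng):
    """Monte Carlo estimate of E[L(theta_t) - L(theta_0)] with theta_0 = 0."""
    d = H.shape[0]
    # Tiny jitter keeps the Cholesky factorization valid for rank-deficient Sigma.
    chol = np.linalg.cholesky(Sigma + 1e-12 * np.eye(d))
    theta = np.zeros((n_trials, d))            # every trial starts at the minimum
    for _ in range(n_steps):
        noise = rng.normal(size=(n_trials, d)) @ chol.T   # rows ~ N(0, Sigma)
        theta = theta - lr * theta @ H + noise            # gradient of L is H @ theta
    losses = 0.5 * np.einsum("ni,ij,nj->n", theta, H, theta)
    return float(losses.mean())                # L(theta_0) = 0 at the minimum

rng = np.random.default_rng(2)
H = np.diag([50.0, 1.0])                       # sharp and flat directions
Sigma_iso = 0.01 * np.eye(2)                   # trace 0.02, spread evenly
Sigma_aligned = np.diag([0.02, 0.0])           # trace 0.02, sharp direction only

gain_iso = mean_loss_gain(H, Sigma_iso, lr=0.01, n_steps=5, n_trials=5000, rng=rng)
gain_aligned = mean_loss_gain(H, Sigma_aligned, lr=0.01, n_steps=5, n_trials=5000, rng=rng)
print(gain_iso, gain_aligned)
```

With equal noise budgets, the aligned noise raises the loss faster over these first few steps, which is the short-time behavior that $\operatorname{Tr}(H\Sigma)$ is meant to predict.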

Real-World Datasets

Experiments on FashionMNIST, SVHN, and CIFAR-10 reinforce the practical relevance:

- The implemented comparisons consistently show that SGD and specially designed alternative methods with anisotropic noise significantly outperform isotropic analogs in both escape efficiency and generalization metrics.

- The observed disparities in sharpness and generalization underscore the criticality of noise structure in real-world deep learning tasks.

Figure 1: One-hidden-layer neural networks. The solid and dotted lines show the values of $\operatorname{Tr}(H\Sigma)$ and $\operatorname{Tr}(H\bar{\Sigma})$, respectively, illustrating the impact of noise alignment on optimization.

Future Directions

The paper highlights several future avenues:

- Examination of SGD's out-of-equilibrium behavior and exploration of new theoretical models that capture the nuanced dynamics of SGD in anisotropic spaces.

- Development of noise-tuned optimizers inspired by SGD's demonstrated efficient landscape navigation.

Conclusion

This research convincingly argues for the importance of accounting for anisotropic noise in SGD when considering both optimization dynamics and generalization capabilities. By elucidating the relationship between noise structure and the loss landscape, the findings have broad implications for advancing optimization methodologies in machine learning.