- The paper shows that RNNGs combined with beam search yield predictors that account for syntax-related human EEG responses, notably an early positivity and a P600-like component.

- The study introduces complexity metrics (distance, surprisal, entropy) derived from intermediate beam search states and uses them to predict variation in EEG amplitude.

- Comparative analysis reveals that RNNGs outperform LSTM models in capturing syntax-sensitive neural responses when processing literary stimuli.

Overview

The paper "Finding Syntax in Human Encephalography with Beam Search" uses Recurrent Neural Network Grammars (RNNGs) to relate incremental syntactic processing in a computational model to human electroencephalography (EEG) responses. It shows that pairing an RNNG with beam search yields an incremental parser whose intermediate states predict electrophysiological responses, including an early positive peak and a later P600-like peak. These effects reflect the model's sensitivity to syntax.

RNNG and Incremental Processing

RNNGs are generative models that build a sentence's phrase structure incrementally, with neural networks choosing each parsing action. Using a stack mechanism, RNNGs condense each completed phrase into a single vector, so decisions are conditioned on the derivation history rather than on a lookahead buffer (the paper includes a figure of the RNNG configuration). This design supports syntactic composition more explicitly than conventional LSTMs do, which strengthens the model's capacity to reflect human-like language processing.
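The stack behaviour described above can be illustrated with a minimal sketch. It is not the paper's implementation: the class names, the `parse` helper, and the string-based `compose` function are hypothetical stand-ins, and a real RNNG would compose child vectors with a neural network instead of concatenating strings. The point is only that REDUCE replaces a whole phrase with one stack element.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class OpenNT:
    """Marker for a nonterminal that has been opened but not yet closed."""
    label: str


def compose(label: str, children: List[str]) -> str:
    # Assumption: a bracketed string stands in for the neural composition
    # function that would map child vectors to a single phrase vector.
    return "(" + " ".join([label] + children) + ")"


@dataclass
class ParserState:
    stack: list = field(default_factory=list)

    def apply(self, action: str, arg: Optional[str] = None) -> None:
        if action == "NT":        # open a new constituent labelled `arg`
            self.stack.append(OpenNT(arg))
        elif action == "GEN":     # generate the next word and push it
            self.stack.append(arg)
        elif action == "REDUCE":  # close the most recently opened constituent
            children = []
            while not isinstance(self.stack[-1], OpenNT):
                children.append(self.stack.pop())
            label = self.stack.pop().label
            # The whole phrase is now ONE stack element.
            self.stack.append(compose(label, children[::-1]))
        else:
            raise ValueError(f"unknown action: {action}")


def parse(actions: List[Tuple[str, Optional[str]]]) -> list:
    state = ParserState()
    for action, arg in actions:
        state.apply(action, arg)
    return state.stack


ACTIONS = [
    ("NT", "S"), ("NT", "NP"), ("GEN", "Alice"), ("REDUCE", None),
    ("NT", "VP"), ("GEN", "slept"), ("REDUCE", None), ("REDUCE", None),
]
print(parse(ACTIONS))
```

After the final REDUCE the stack holds a single element for the whole sentence, which is the property that lets later decisions condition on completed phrases as units.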

Beam Search Implementation

The study implements a word-synchronous beam search, which addresses the imbalance between structural and lexical actions within RNNGs. In the paper's Algorithm 1 (word-synchronous beam search with fast-tracking), parser states are scored and retained so that the search keeps exploring structural alternatives until enough analyses have reached the next word. This method improves parsing accuracy and lets the parser pursue several analyses in parallel while an ambiguity is unresolved during incremental processing.
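As a rough illustration of the word-synchronous idea, the sketch below advances a beam past one word. It is a simplification under stated assumptions, not the paper's Algorithm 1: fast-tracking is omitted, the function and parameter names (`word_synchronous_step`, `k_action`, `k_word`, `max_steps`) are hypothetical, and `toy_action_logprobs` is an invented scoring function standing in for the RNNG's action distribution.

```python
import heapq
import math
from typing import Callable, Dict, List, Tuple

State = Tuple[float, Tuple[str, ...]]  # (log probability, action history)


def toy_action_logprobs(history: Tuple[str, ...], next_word: str) -> Dict[str, float]:
    # Hypothetical model: allow at most two structural actions before a word.
    since_last_word = 0
    for action in reversed(history):
        if action == "GEN":
            break
        since_last_word += 1
    if since_last_word >= 2:
        return {"GEN": 0.0}
    return {"NT": math.log(0.4), "GEN": math.log(0.6)}


def word_synchronous_step(
    beam: List[State],
    next_word: str,
    action_logprobs: Callable[[Tuple[str, ...], str], Dict[str, float]],
    k_action: int,
    k_word: int,
    max_steps: int = 50,
) -> List[State]:
    """Expand analyses until k_word of them have generated next_word."""
    this_beam = list(beam)
    word_beam: List[State] = []
    steps = 0
    while len(word_beam) < k_word and this_beam and steps < max_steps:
        candidates = [
            (logp + lp, hist + (action,))
            for logp, hist in this_beam
            for action, lp in action_logprobs(hist, next_word).items()
        ]
        # Keep only the best-scoring successors on the action beam.
        candidates = heapq.nlargest(k_action, candidates)
        # Analyses that just generated the word wait on the word beam;
        # the rest keep taking structural actions.
        word_beam += [c for c in candidates if c[1][-1] == "GEN"]
        this_beam = [c for c in candidates if c[1][-1] != "GEN"]
        steps += 1
    return heapq.nlargest(k_word, word_beam)


next_beam = word_synchronous_step(
    [(0.0, ())], "Alice", toy_action_logprobs, k_action=10, k_word=3
)
for logp, hist in next_beam:
    print(round(math.exp(logp), 2), hist)
```

Separating the action beam from the word beam is what keeps analyses comparable: states are only ranked against each other for survival into the next word once each has generated the same word.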

Complexity Metrics and EEG Regression Models

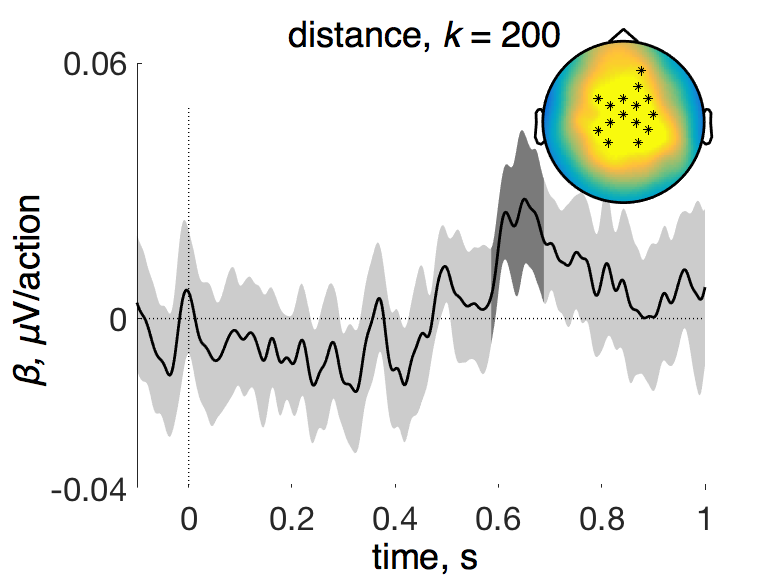

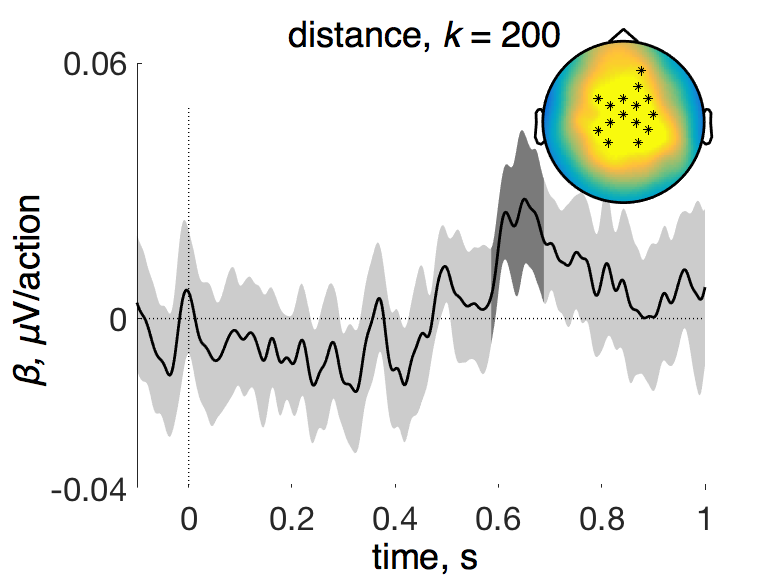

Three complexity metrics are derived from intermediate beam search states: distance, surprisal, and entropy. Together they quantify syntactic work and unpredictability at each word. The regression models use these metrics as predictors of EEG amplitude, linking indices of syntactic processing to the neurolinguistic data.
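To make the three metrics concrete, here is a minimal sketch of how they could be computed from beam contents. The exact definitions are assumptions made for illustration and may differ from the paper's: surprisal is taken as the negative log-ratio of summed beam probability after versus before a word, entropy as the Shannon entropy of the renormalized beam, and distance as the number of parser actions taken since the previous word, summed over the beam. All function names are hypothetical.

```python
import math
from typing import List, Sequence


def logsumexp(log_probs: Sequence[float]) -> float:
    # Numerically stable log of a sum of exponentials.
    m = max(log_probs)
    return m + math.log(sum(math.exp(lp - m) for lp in log_probs))


def surprisal(prev_beam: Sequence[float], curr_beam: Sequence[float]) -> float:
    """Bits of surprisal: -log2 of (mass after the word / mass before it)."""
    return (logsumexp(prev_beam) - logsumexp(curr_beam)) / math.log(2)


def entropy(beam: Sequence[float]) -> float:
    """Shannon entropy (bits) of the beam after renormalizing it."""
    z = logsumexp(beam)
    probs = [math.exp(lp - z) for lp in beam]
    return -sum(p * math.log2(p) for p in probs if p > 0)


def distance(actions_since_last_word: List[List[str]]) -> int:
    """Assumed definition: total actions taken across all beam analyses."""
    return sum(len(actions) for actions in actions_since_last_word)


# Toy beam: all mass on one analysis before the word, then two analyses
# with probability 0.25 each after it.
prev = [math.log(1.0)]
curr = [math.log(0.25), math.log(0.25)]
print(surprisal(prev, curr))
print(entropy(curr))
print(distance([["NT", "GEN"], ["NT", "NT", "GEN"]]))
```

Each function returns one number per word, so the outputs can be lined up with the word onsets in the EEG recording and entered as regressors.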

Figure 1: Distance derives a P600 at k=200.

Model Comparisons in Literary Stimuli

To assess syntactic sensitivity, RNNGs are contrasted with sequential LSTM models, which have no explicit syntactic representation. The training and test data are drawn from chapters of a literary text (Alice's Adventures in Wonderland). Because RNNGs incorporate complete syntactic structures into their predictions, they tend to outperform the LSTM models, an advantage attributed to parsing features such as syntactic composition.

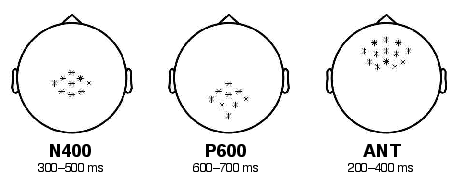

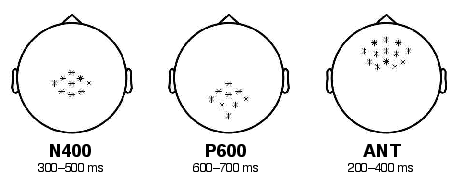

Figure 2: Regions of Interest in EEG analysis to differentiate syntax-related neural responses.

Results and Implications

The research reports statistically significant relationships between RNNG-derived metrics and EEG amplitude (the paper's table lists the statistical significance of the fitted target predictors). The distance metric predicts a P600-like response, a component linked to syntactic processing, while surprisal predicts an early frontal positivity. Together these results point to distinct syntax-sensitive computations reflected in the neural signal.

Conclusion

The integration of RNNGs with beam search provides a coherent cognitive model of language comprehension whose predictions match EEG signatures of human syntactic processing. Because the model is single-stage, it needs no string-based pre-processing phase and no perceptual strategies such as the Noun-Verb-Noun heuristic. This supports treating RNNGs as candidate cognitive models for future work, particularly on the parsing operations that underlie human language processing.