- The paper presents MTNet, a novel model that combines memory modules, encoders, and attention mechanisms to improve forecasting accuracy for multivariate time-series data.

- It uses convolutional and recurrent layers to extract short-term and long-term patterns, and a memory component to work around the vanishing-gradient problem that limits traditional RNNs.

- Experimental results on six benchmarks show significant improvements in RMSE, MAE, RRSE, and CORR, underscoring the model's robust performance.

A Memory-Network Based Solution for Multivariate Time-Series Forecasting

Introduction

The paper "A Memory-Network Based Solution for Multivariate Time-Series Forecasting" introduces the Memory Time-series Network (MTNet), which aims to capture complex patterns and dependencies in multivariate time series data. Traditional methods often fall short in modeling nonlinear relationships and long-term dependencies. This prompted the exploration of RNN-based methods, which themselves struggle with vanishing gradients. Inspired by the Memory Networks used in question-answering (QA) systems, MTNet combines a memory component, multiple encoders, and an autoregressive component, with the aim of improving both interpretability and predictive accuracy across diverse datasets.

Model Architecture

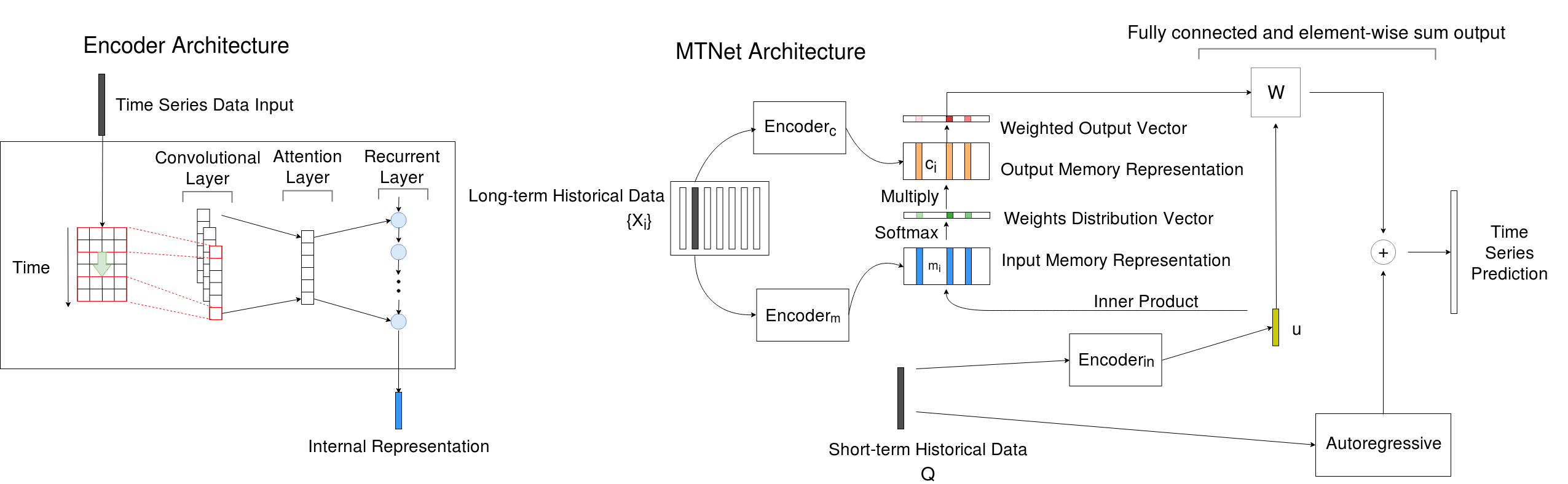

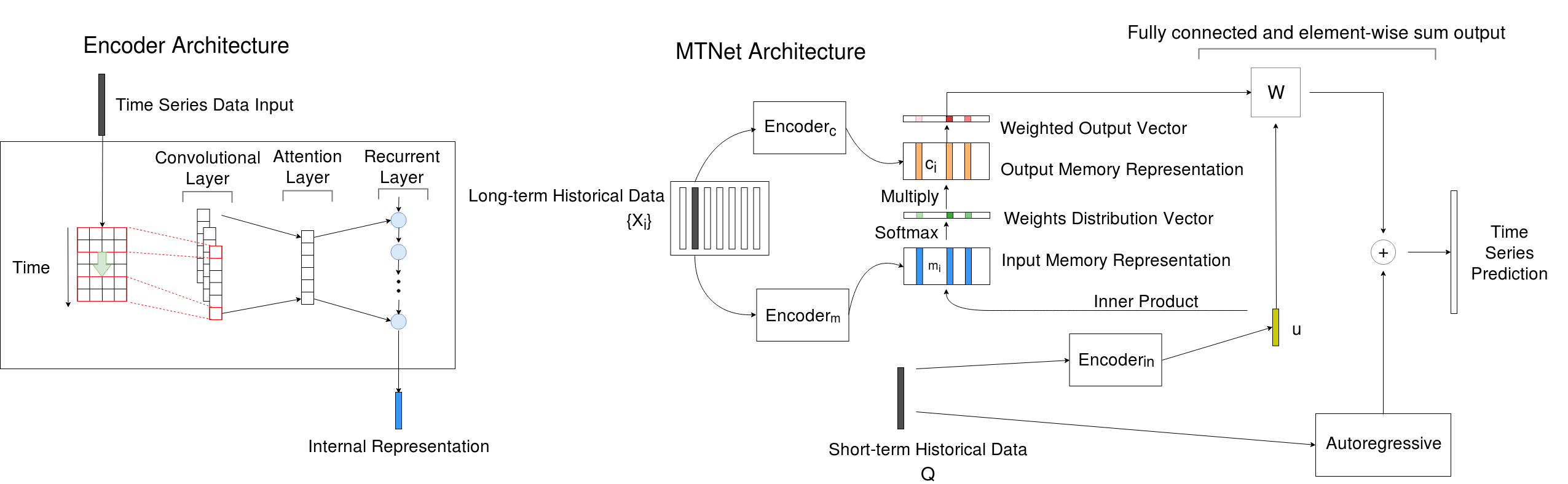

MTNet consists of three key components: a memory module, multiple separate encoders, and an autoregressive component. Together they learn from both short-term and long-term data, using attention to focus on the relevant historical patterns. The attention layer also supports interpretability, because its weights indicate which historical segments contribute most to a prediction.
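To make the data flow between these components concrete, here is a minimal sketch in plain Python. It assumes the history is cut into equal-length, non-overlapping memory blocks followed by one short-term query window, and that a simple linear autoregressive term is added to the output of the nonlinear (encoder plus attention) path. The function names `split_history` and `autoregressive_term`, the block sizes, the weights, and the placeholder `nonlinear_output` are all illustrative assumptions, not values or code from the paper.

```python
def split_history(series, n_blocks, block_len):
    """Cut the most recent values into n_blocks memory blocks plus one query window.

    Assumption: blocks are contiguous, non-overlapping, and all of length block_len.
    """
    needed = (n_blocks + 1) * block_len
    if len(series) < needed:
        raise ValueError("series is too short for the requested blocks")
    recent = series[-needed:]
    chunks = [recent[i * block_len:(i + 1) * block_len] for i in range(n_blocks + 1)]
    return chunks[:-1], chunks[-1]  # (long-term memory blocks, short-term query)


def autoregressive_term(query, weights, bias=0.0):
    """Linear forecast from the last len(weights) values of the query window."""
    window = query[-len(weights):]
    return sum(w * x for w, x in zip(weights, window)) + bias


series = [float(t) for t in range(12)]
memory_blocks, query = split_history(series, n_blocks=2, block_len=4)

# Hypothetical AR weights; in a trained model these would be learned.
ar = autoregressive_term(query, weights=[0.5, 0.5])

# Placeholder standing in for the output of the encoder + attention path.
nonlinear_output = 0.25
forecast = nonlinear_output + ar

print(memory_blocks)  # [[0.0, 1.0, 2.0, 3.0], [4.0, 5.0, 6.0, 7.0]]
print(query)          # [8.0, 9.0, 10.0, 11.0]
print(forecast)       # 10.75
```

The linear term keeps the forecast sensitive to the scale of the most recent inputs, while the nonlinear path is free to model patterns drawn from the longer history.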

Encoder and Memory Representation

Figure 1: An overview of the Memory Time-series Network (MTNet) on the right and the details of the encoder architecture on the left.

The encoders extract features from the input time series using convolutional layers to capture short-term patterns and dependencies and recurrent layers to encode these features over time. The memory network stores long-term historical data, which is then combined with short-term input features through attentional mechanisms to focus on significant periods. The framework allows adaptive learning of dependencies among time steps, providing insights into the temporal dynamics of the series.
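The attention step described above can be sketched as a softmax over similarity scores between the encoded short-term input and each encoded memory block. The version below uses a plain dot product as the similarity function, which is an assumption made for illustration; the vectors `u`, `m`, and `c` stand in for encoder outputs and are made-up numbers, and `attend` is a hypothetical helper rather than the authors' implementation.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query_vec, memory_keys, memory_values):
    """Weight each memory value by how well its key matches the query.

    Returns the attention weights and the weighted memory values.
    """
    scores = [sum(q * k for q, k in zip(query_vec, key)) for key in memory_keys]
    weights = softmax(scores)
    weighted = [[w * v for v in value] for w, value in zip(weights, memory_values)]
    return weights, weighted


# Stand-ins for encoder outputs (illustrative values only).
u = [1.0, 0.0]                               # encoded short-term input
m = [[2.0, 0.0], [0.0, 2.0], [-1.0, 0.0]]    # encoded memory blocks used as keys
c = [[1.0, 1.0], [2.0, 2.0], [3.0, 3.0]]     # encoded memory blocks used as values

weights, weighted = attend(u, m, c)
for i, w in enumerate(weights):
    print(f"memory block {i}: attention weight {w:.3f}")
```

The first memory block points in the same direction as the input, so it receives the largest weight. Inspecting these weights per block is what makes the attention layer usable as an interpretability tool.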

Experimental Evaluation

The paper evaluates MTNet on six benchmark datasets, including multivariate and univariate data, spanning domains such as environmental monitoring and financial forecasting. Comparative analyses demonstrate MTNet's significant performance improvements over existing methods such as DA-RNN and LSTNet, particularly in managing periodic patterns and long-term dependencies.

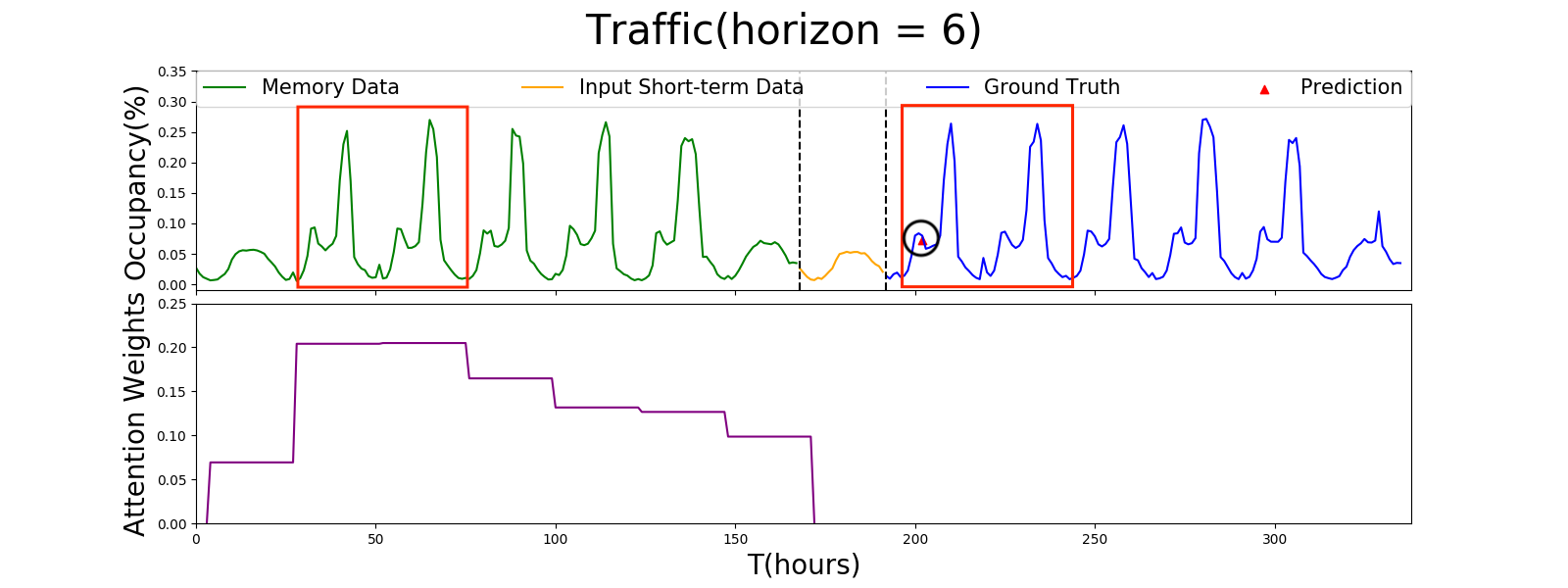

Visualization of Attention Mechanism

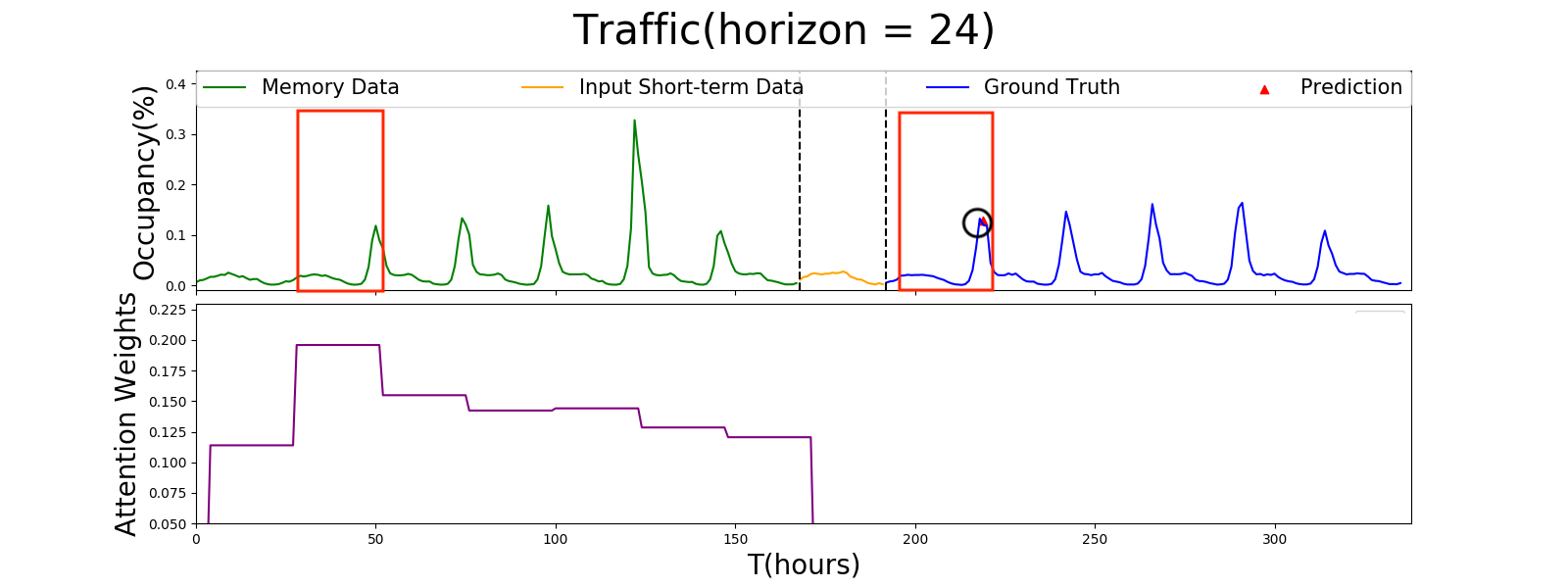

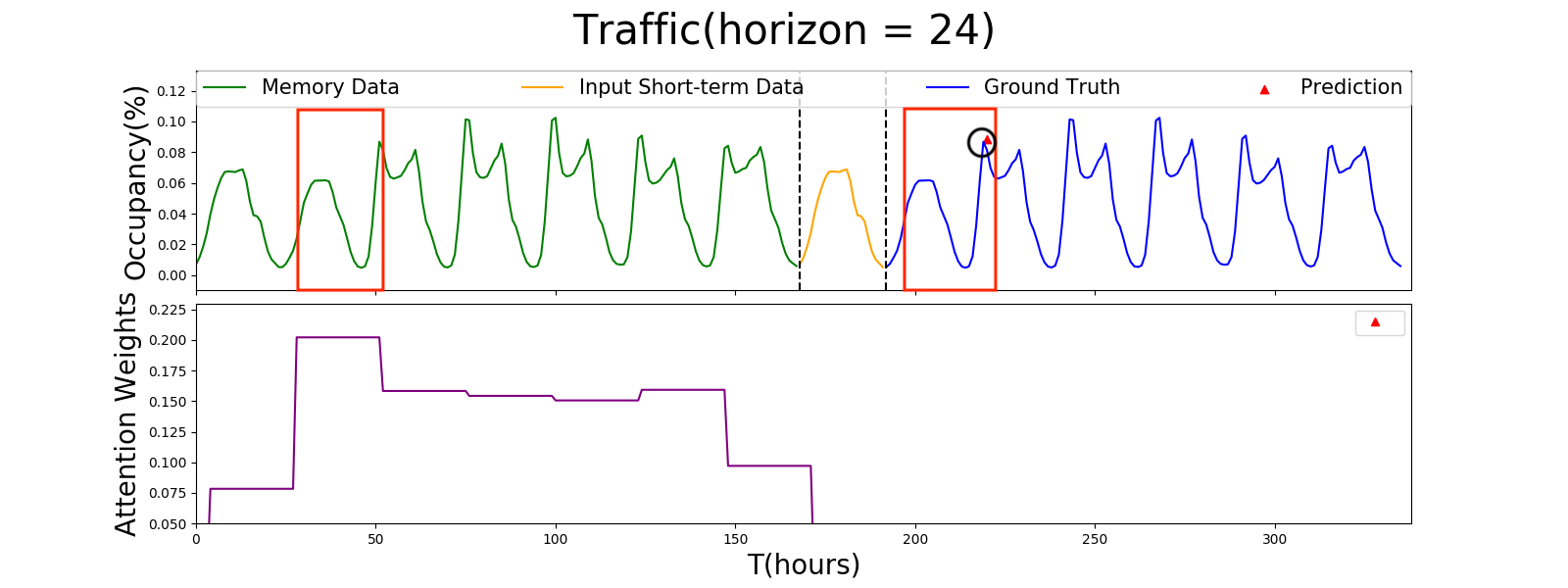

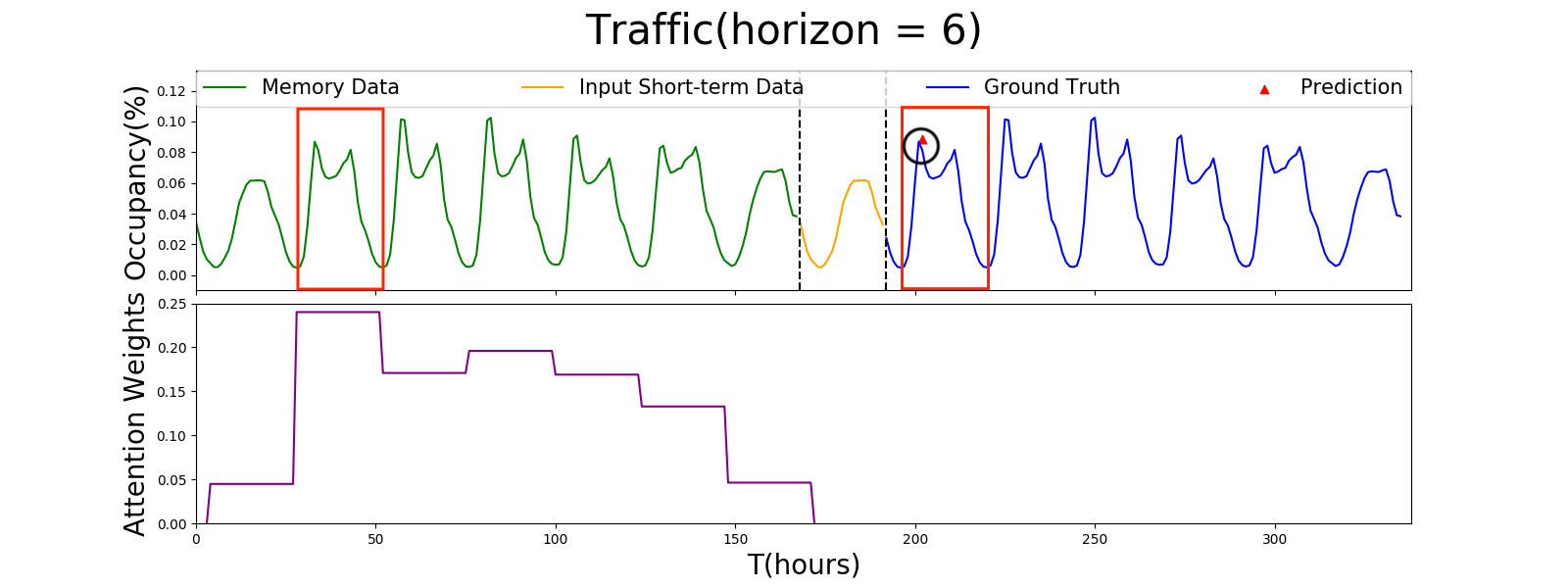

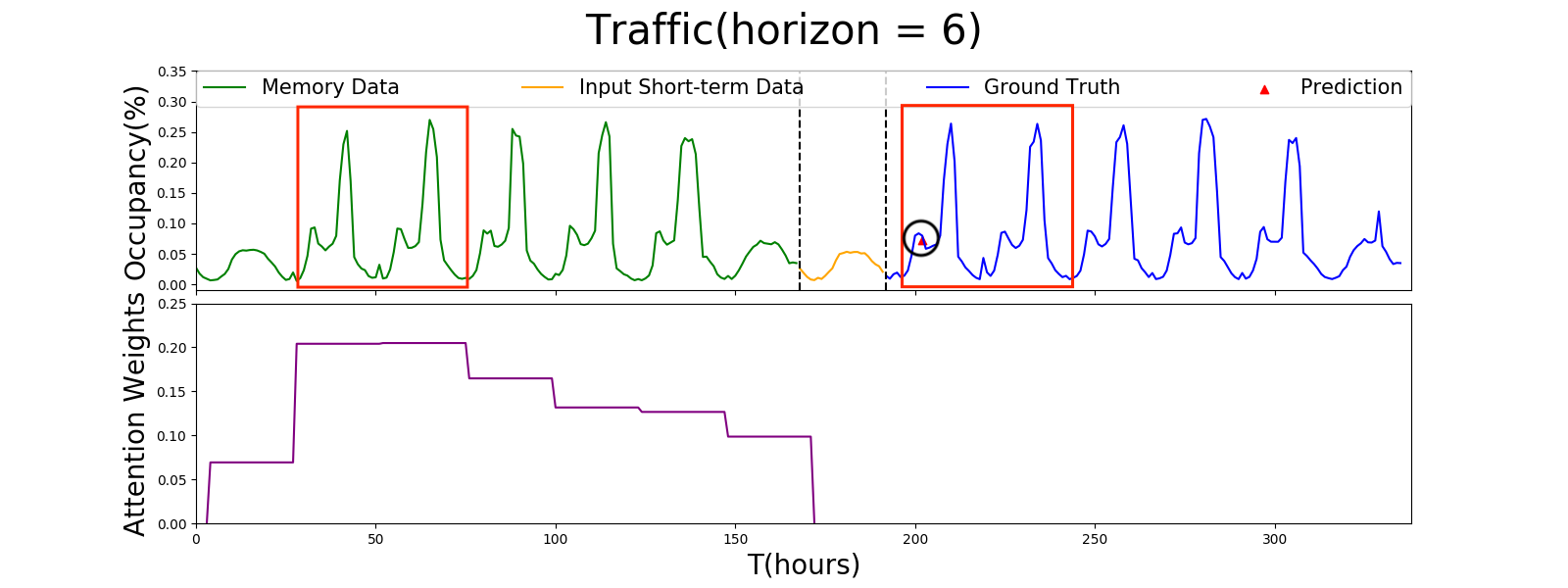

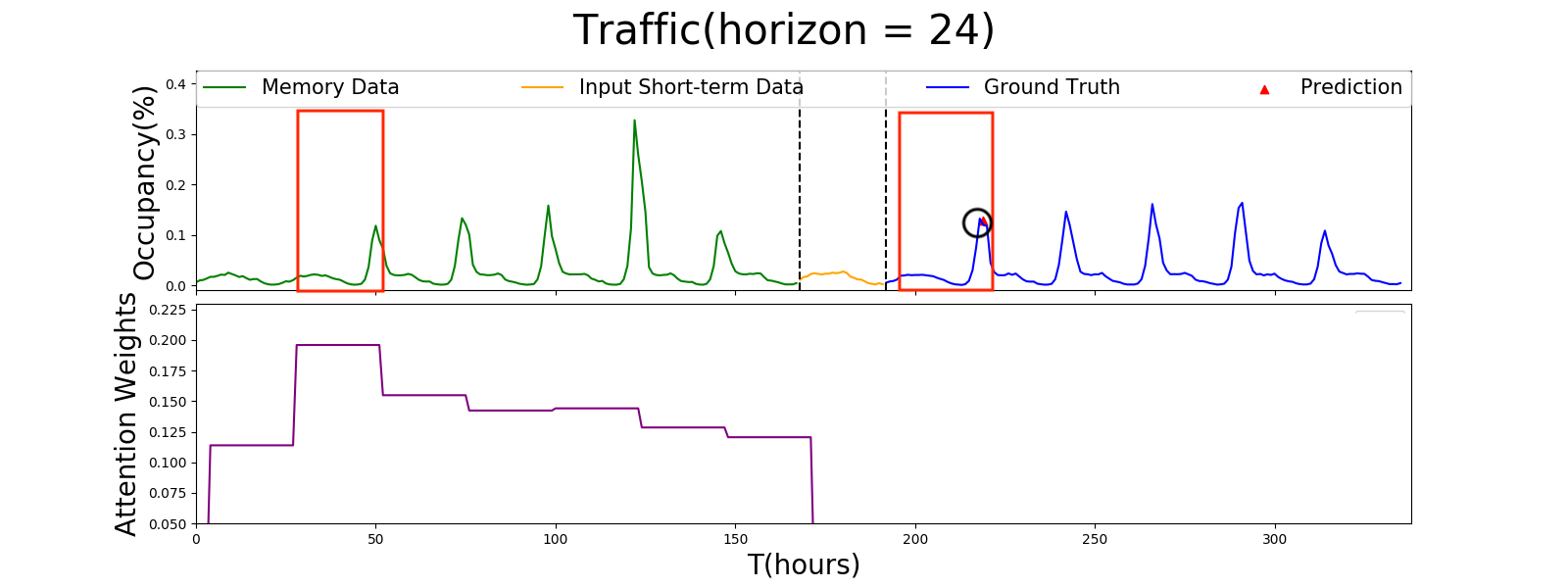

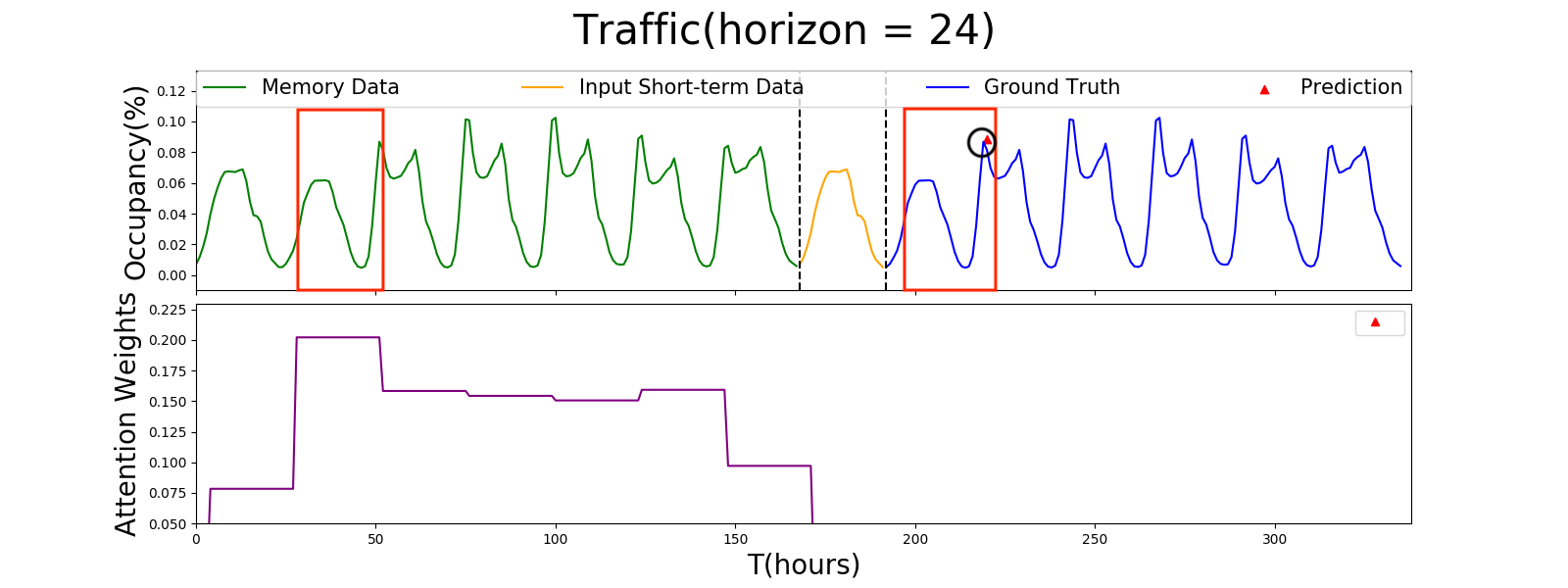

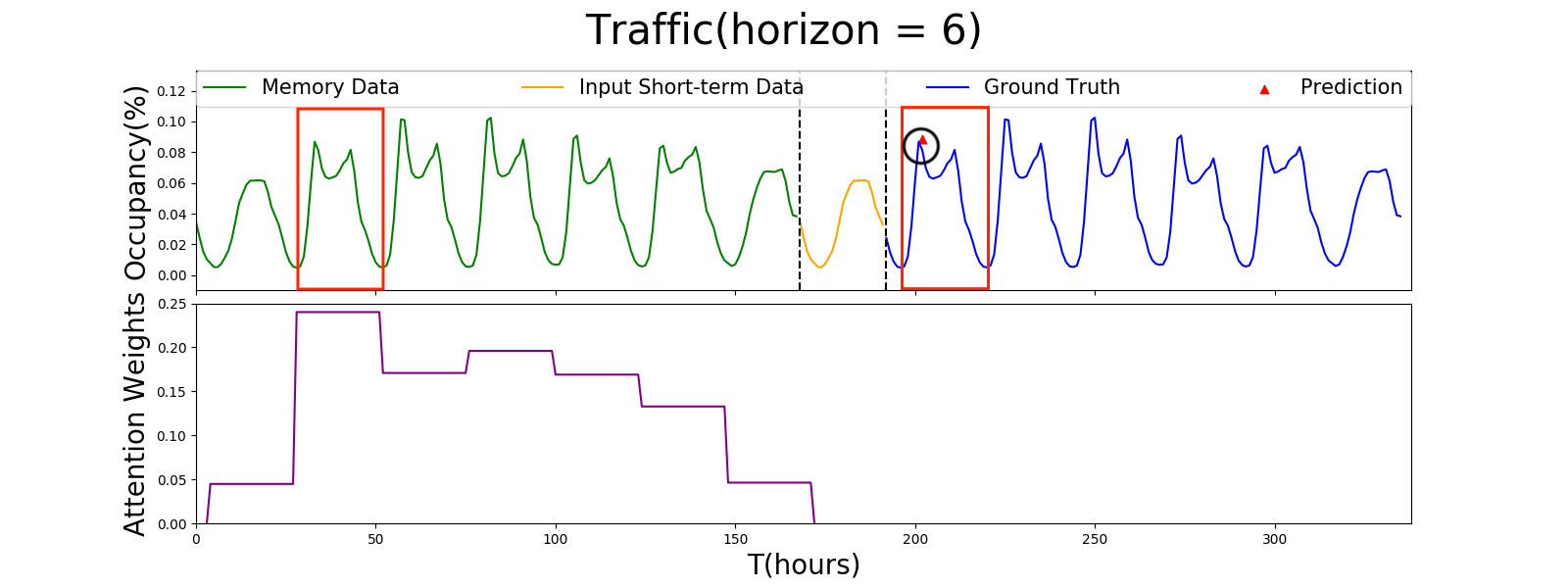

Figure 2: Plot of the attention weights between Input and Memory component for MTNet.

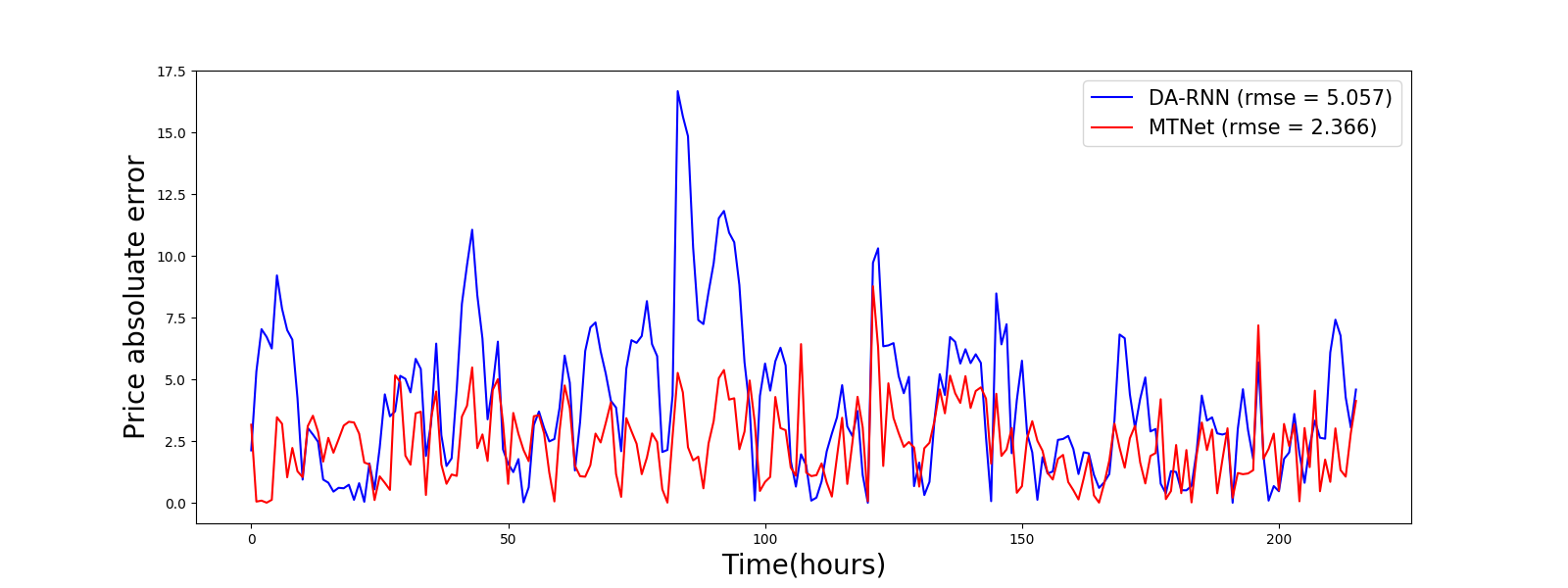

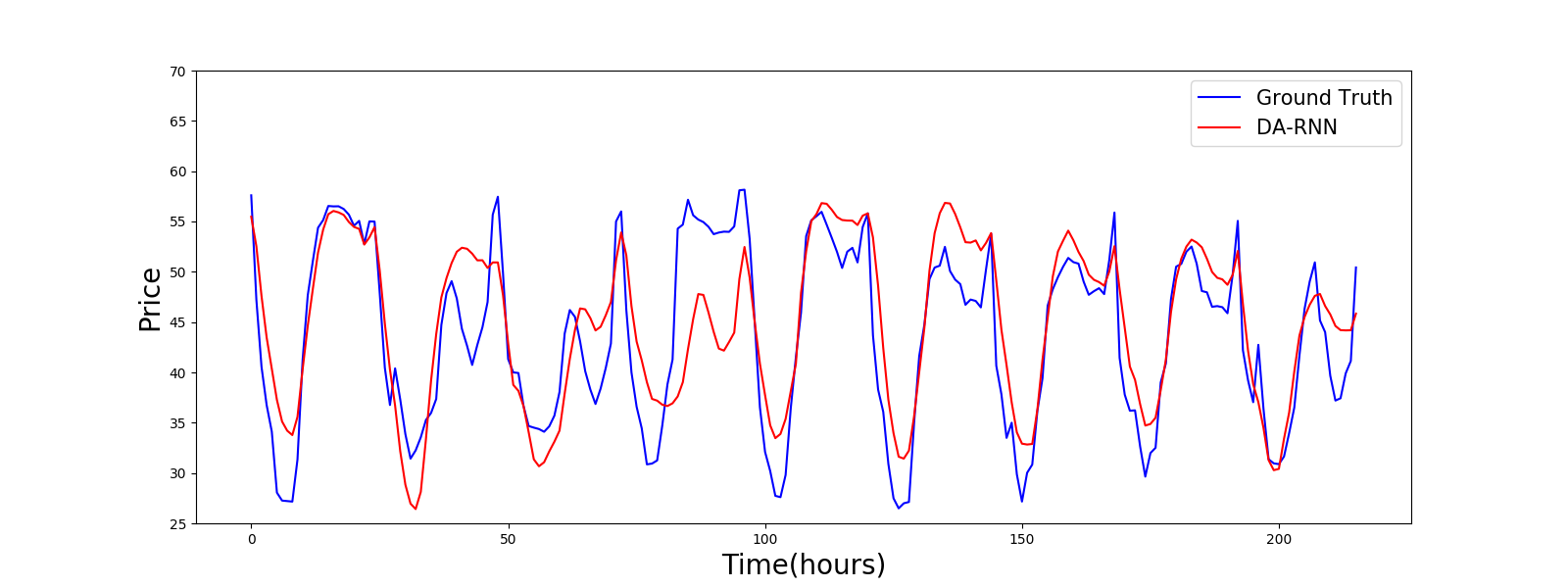

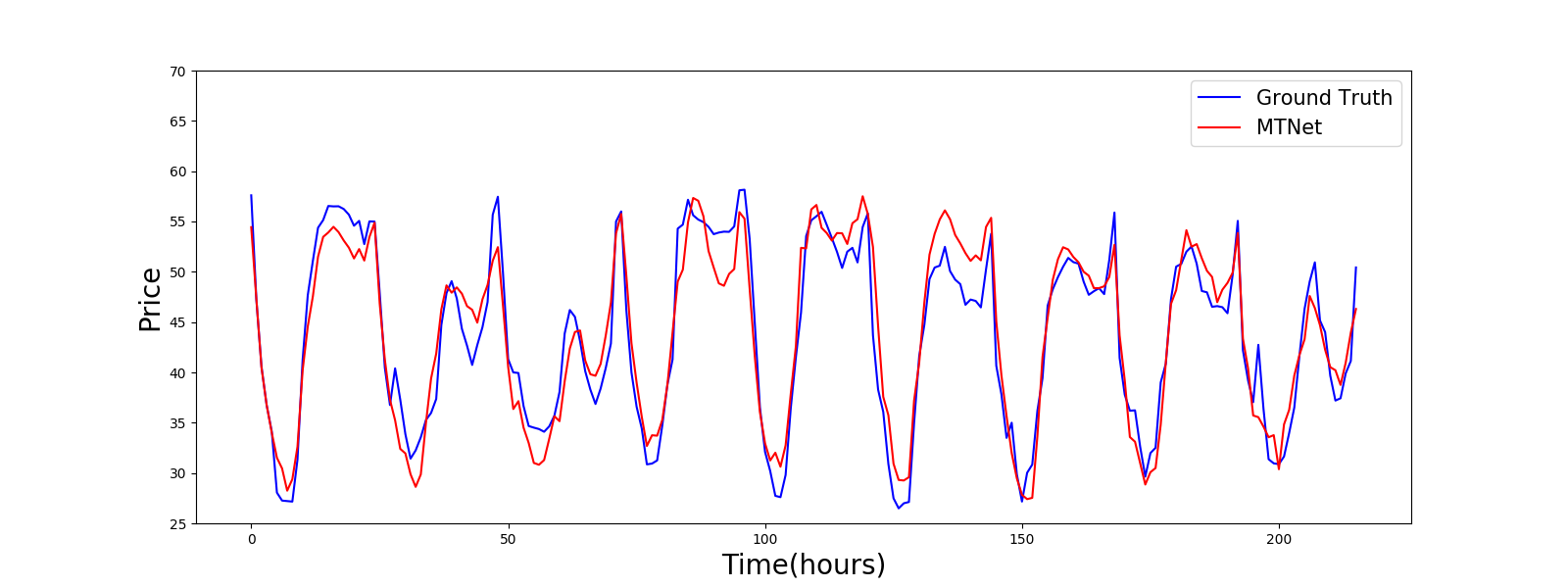

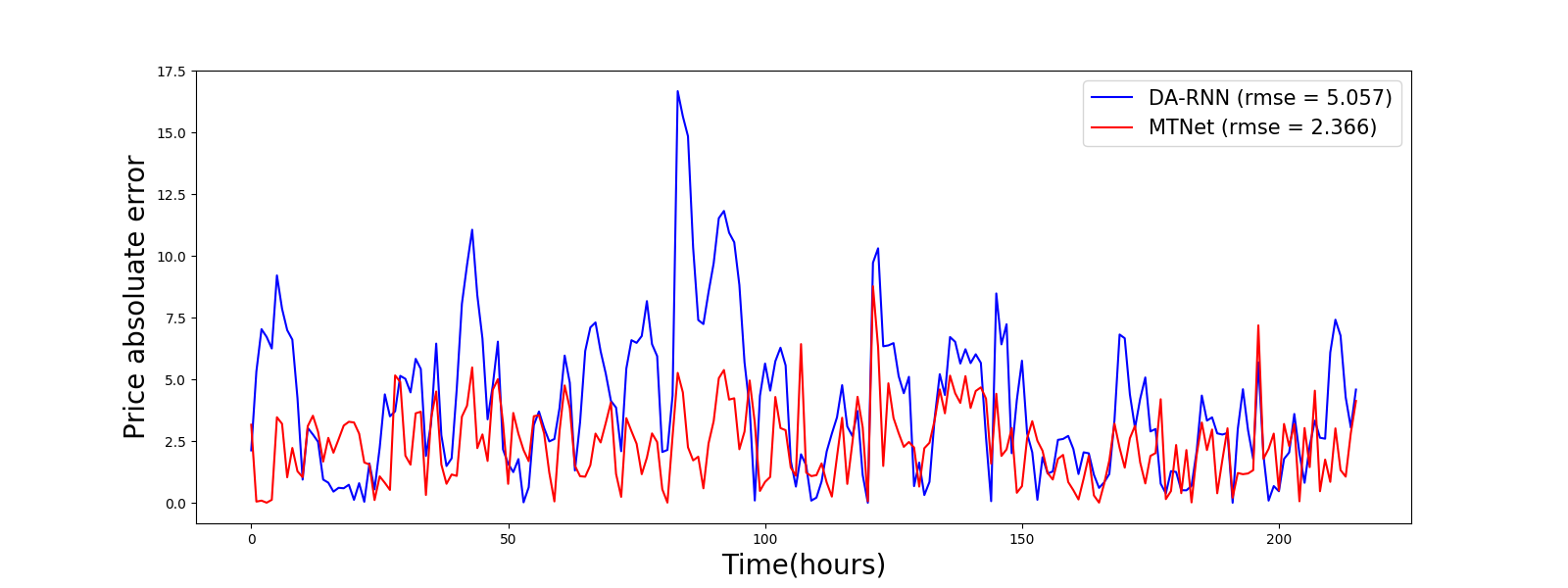

The attention-weight plots show that MTNet attends to the segments of historical data whose patterns resemble the current input, and uses them as references for prediction. Examples on the Traffic and GEFCom2014 Electricity Price datasets illustrate how well MTNet captures peak values and long-term trends.

MTNet is designed to scale efficiently, using convolutional layers to limit computational cost and memory usage. It achieves state-of-the-art performance as measured by RMSE and MAE on the univariate datasets and by RRSE and CORR on the multivariate datasets. The model adapts to various forecasting horizons, with statistically significant improvements over competing methods (Table 1, Table 2).
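For readers unfamiliar with the four metrics, the sketch below computes each of them for a single series using their standard definitions. It is a simplified illustration: multivariate evaluations typically aggregate RRSE and CORR across all variables, and the exact aggregation used in the paper is not reproduced here. The sample values in `y_true` and `y_pred` are made up.

```python
import math


def rmse(y, yhat):
    """Root mean squared error (lower is better)."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(y, yhat)) / len(y))


def mae(y, yhat):
    """Mean absolute error (lower is better)."""
    return sum(abs(a - b) for a, b in zip(y, yhat)) / len(y)


def rrse(y, yhat):
    """Root relative squared error: error relative to predicting the mean of y."""
    mean_y = sum(y) / len(y)
    num = sum((a - b) ** 2 for a, b in zip(y, yhat))
    den = sum((a - mean_y) ** 2 for a in y)
    return math.sqrt(num) / math.sqrt(den)


def corr(y, yhat):
    """Pearson correlation between targets and predictions (higher is better)."""
    mean_y = sum(y) / len(y)
    mean_p = sum(yhat) / len(yhat)
    cov = sum((a - mean_y) * (b - mean_p) for a, b in zip(y, yhat))
    var_y = sum((a - mean_y) ** 2 for a in y)
    var_p = sum((b - mean_p) ** 2 for b in yhat)
    return cov / math.sqrt(var_y * var_p)


y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.5, 2.5, 2.5, 3.5]

print(rmse(y_true, y_pred))            # 0.5
print(mae(y_true, y_pred))             # 0.5
print(round(rrse(y_true, y_pred), 4))  # 0.4472
print(round(corr(y_true, y_pred), 4))  # 0.9487
```

RRSE normalizes the error by the variability of the target, which makes scores comparable across datasets with different scales. An RRSE below 1 means the model beats a constant prediction of the mean.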

Notable Numerical Results

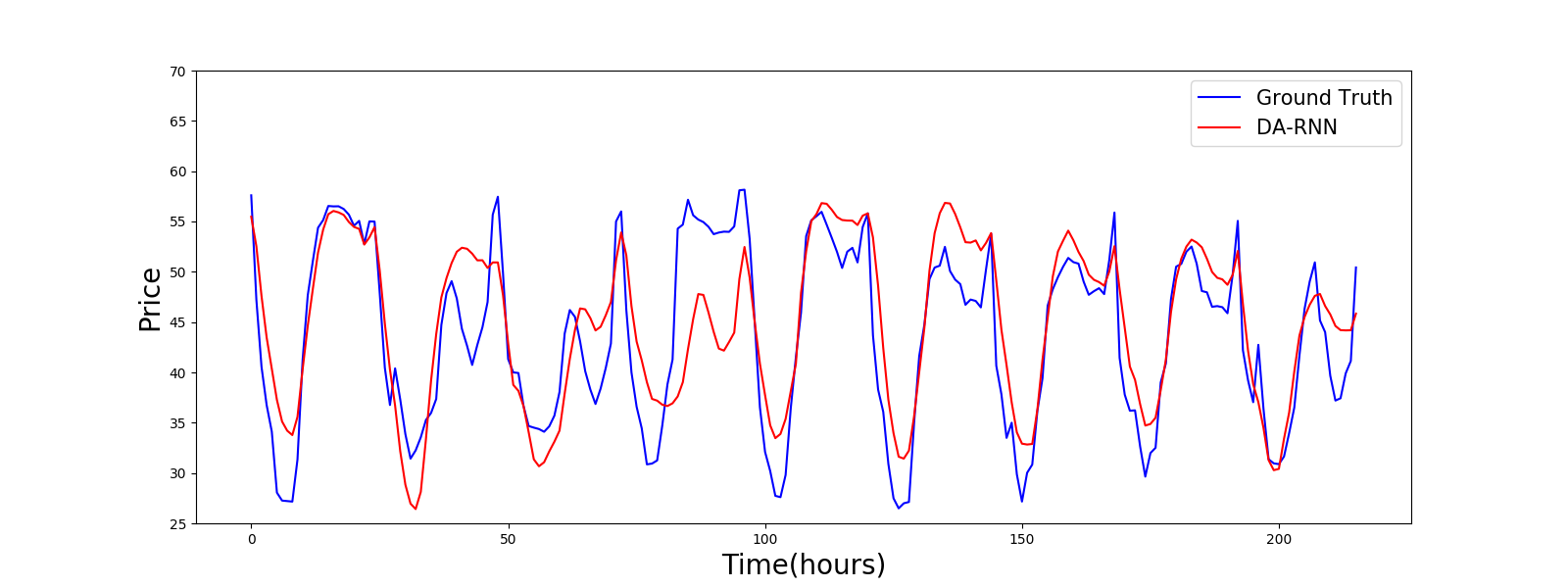

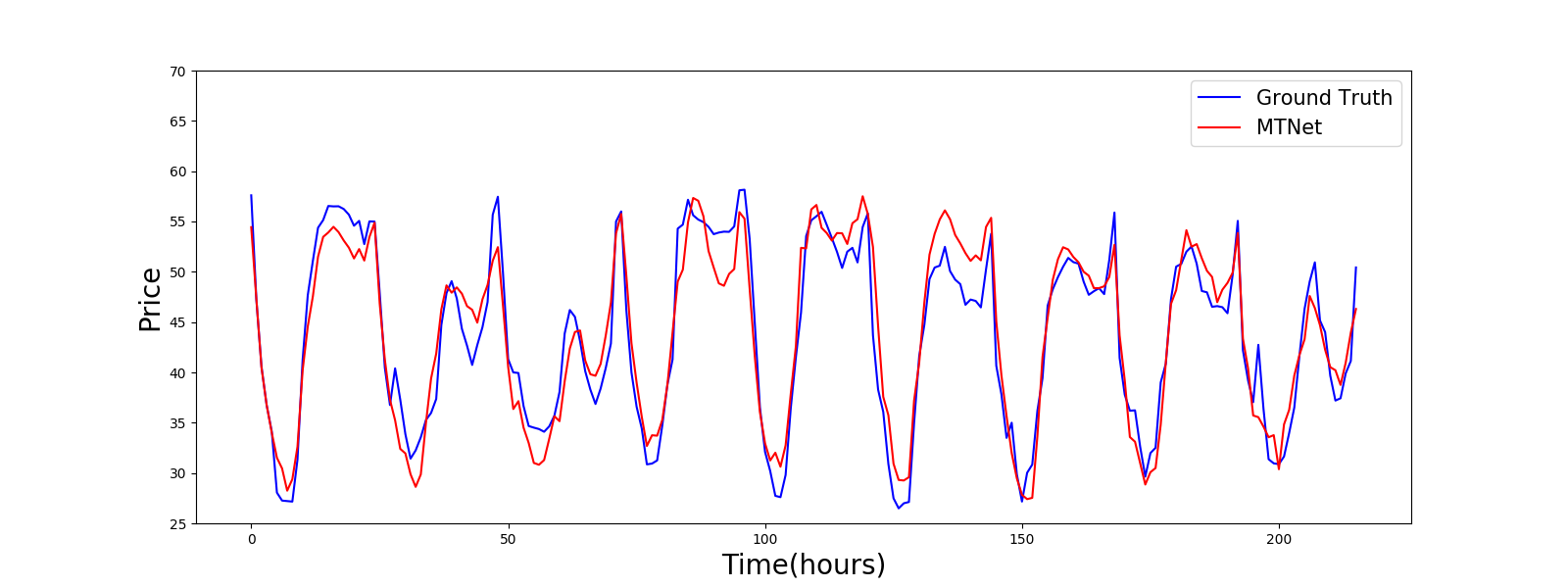

Figure 3: Prediction results of DA-RNN and MTNet on the GEFCom2014 Electricity Price dataset. Segments are randomly sampled from the testing set.

The experimental results show MTNet outperforming traditional RNN approaches, with statistically significant improvements on several datasets. In particular, MTNet achieves lower RMSE and MAE across multiple horizons, which indicates that it captures complex temporal dynamics robustly.

Conclusion

The research presents MTNet as a novel approach to multivariate time series forecasting that integrates a memory network framework with attention mechanisms to improve long-term dependency tracking and interpretability. Future research may focus on refining the memory component for detecting rare events and on enhancing the model's explainability. The approach offers methods applicable to forecasting problems across various industries.