- The paper proposes a novel model that integrates Gaussian Process priors with VAEs to overcome the i.i.d. assumption in latent representations.

- The paper employs a stochastic backpropagation strategy with low-rank covariance factorizations, enabling distributed and memory-efficient learning.

- The paper demonstrates that GPPVAE outperforms models like CVAE on tasks such as rotated MNIST and face image predictions.

Gaussian Process Prior Variational Autoencoders

This essay discusses the paper "Gaussian Process Prior Variational Autoencoders" (arXiv:1810.11738), which incorporates Gaussian Process (GP) priors into Variational Autoencoders (VAEs) to model correlations between samples in complex datasets. Standard VAEs assume that latent representations are independent and identically distributed (i.i.d.), which ignores such correlations. The paper presents the Gaussian Process Prior Variational Autoencoder (GPPVAE), a model that combines the flexibility of VAEs with the ability of GPs to model dependencies within the latent space.
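To make the contrast concrete, the two priors can be written side by side. The notation is an assumption made for illustration and is not taken from the paper: $Z \in \mathbb{R}^{N \times L}$ stacks the latent codes of $N$ samples, $z_n$ is row $n$, $z^{l}$ is column $l$, and $K$ is an $N \times N$ covariance over samples built from auxiliary information. Treating the $L$ latent dimensions as independent GPs that share one covariance is likewise a simplifying assumption.

$$
p_{\mathrm{VAE}}(Z) = \prod_{n=1}^{N} \mathcal{N}\left(z_n \mid 0, I_L\right),
\qquad
p_{\mathrm{GP}}(Z) = \prod_{l=1}^{L} \mathcal{N}\left(z^{l} \mid 0, K\right)
$$

With $K = I_N$ the GP prior reduces to the standard one, so the i.i.d. case is the special case in which samples are uncorrelated.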

Methodology Overview

The primary challenge addressed by GPPVAE is accounting for correlations between samples, as in time series of images or images of the same object in different poses. The model places a GP prior on the latent space of a VAE, replacing the i.i.d. assumption of standard VAEs. Because a GP prior couples all samples, the training objective no longer decomposes into independent per-sample terms. The authors therefore introduce a stochastic backpropagation strategy that exploits low-rank factorizations of the covariance matrix, so that gradients can be computed in a distributable and memory-efficient way.
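The benefit of a low-rank factorization can be illustrated with a minimal NumPy sketch. It assumes a covariance of the form $K = VV^\top + \alpha I$ with $V \in \mathbb{R}^{N \times H}$ and $H \ll N$; this form, the function name `low_rank_gaussian_logpdf`, and its arguments are illustrative assumptions rather than the paper's implementation. The Woodbury identity and the matrix determinant lemma let the Gaussian log-density be evaluated without ever forming the $N \times N$ matrix.

```python
import numpy as np

def low_rank_gaussian_logpdf(z, V, alpha):
    """log N(z | 0, V V^T + alpha * I), computed in O(N H^2) time.

    z     : (N,) vector, one latent dimension across all N samples
    V     : (N, H) low-rank factor of the covariance (assumed form)
    alpha : positive scalar noise variance
    """
    N, H = V.shape
    # Small H x H system that replaces the N x N covariance.
    M = alpha * np.eye(H) + V.T @ V
    Vtz = V.T @ z
    # Woodbury identity: z^T K^{-1} z = (z^T z - Vtz^T M^{-1} Vtz) / alpha
    quad = (z @ z - Vtz @ np.linalg.solve(M, Vtz)) / alpha
    # Matrix determinant lemma: log|K| = (N - H) log(alpha) + log|M|
    _, logdet_M = np.linalg.slogdet(M)
    logdet_K = (N - H) * np.log(alpha) + logdet_M
    return -0.5 * (quad + logdet_K + N * np.log(2.0 * np.pi))
```

Only the products `V.T @ V` and `V.T @ z` touch all $N$ samples, and both are sums over samples, so they can be accumulated batch by batch. This is the sense in which such a factorization lends itself to distributed, memory-efficient computation.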

Figure 1: Generative model underlying the proposed GPPVAE and pictorial representation of its inference procedure.

The introduction of GP priors in the context of VAEs builds on existing work that extends the capabilities of VAEs by incorporating auxiliary information or designing more complex latent distributions. Previous approaches include the use of auxiliary data in the VAE framework, leading to models like conditional VAEs (CVAEs), or the exploration of hierarchical structures and rich prior distributions to address the oversimplification of latent spaces.

The theoretical strength of GPPVAE lies in its ability to model dependencies using a GP prior, which is well-suited for datasets that inherently contain correlations, such as those with temporal, object identity, or view-based correlations. The use of GPs allows for flexible modeling based on kernel functions that capture the underlying dependencies.
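As an illustration of how kernel functions can encode such dependencies, the sketch below builds a covariance over samples as the elementwise product of an object kernel and a view kernel. The specific choices (a linear kernel on object feature vectors, a periodic kernel on rotation angles in radians, and the function names) are assumptions made for this example and are not claimed to match the paper's exact parameterization.

```python
import numpy as np

def periodic_view_kernel(angles, lengthscale=1.0):
    """Periodic kernel with period 2*pi over view angles in radians."""
    diff = angles[:, None] - angles[None, :]
    return np.exp(-2.0 * np.sin(diff / 2.0) ** 2 / lengthscale ** 2)

def linear_object_kernel(X):
    """Linear kernel on per-sample object feature vectors X of shape (N, M)."""
    return X @ X.T

def product_kernel(X, angles, lengthscale=1.0):
    """Covariance between samples: object similarity times view similarity."""
    return linear_object_kernel(X) * periodic_view_kernel(angles, lengthscale)
```

The elementwise product of two positive semidefinite kernels is itself positive semidefinite, so the result is a valid GP covariance. Two samples are strongly correlated only when they show similar objects from similar views.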

Empirical Evaluation

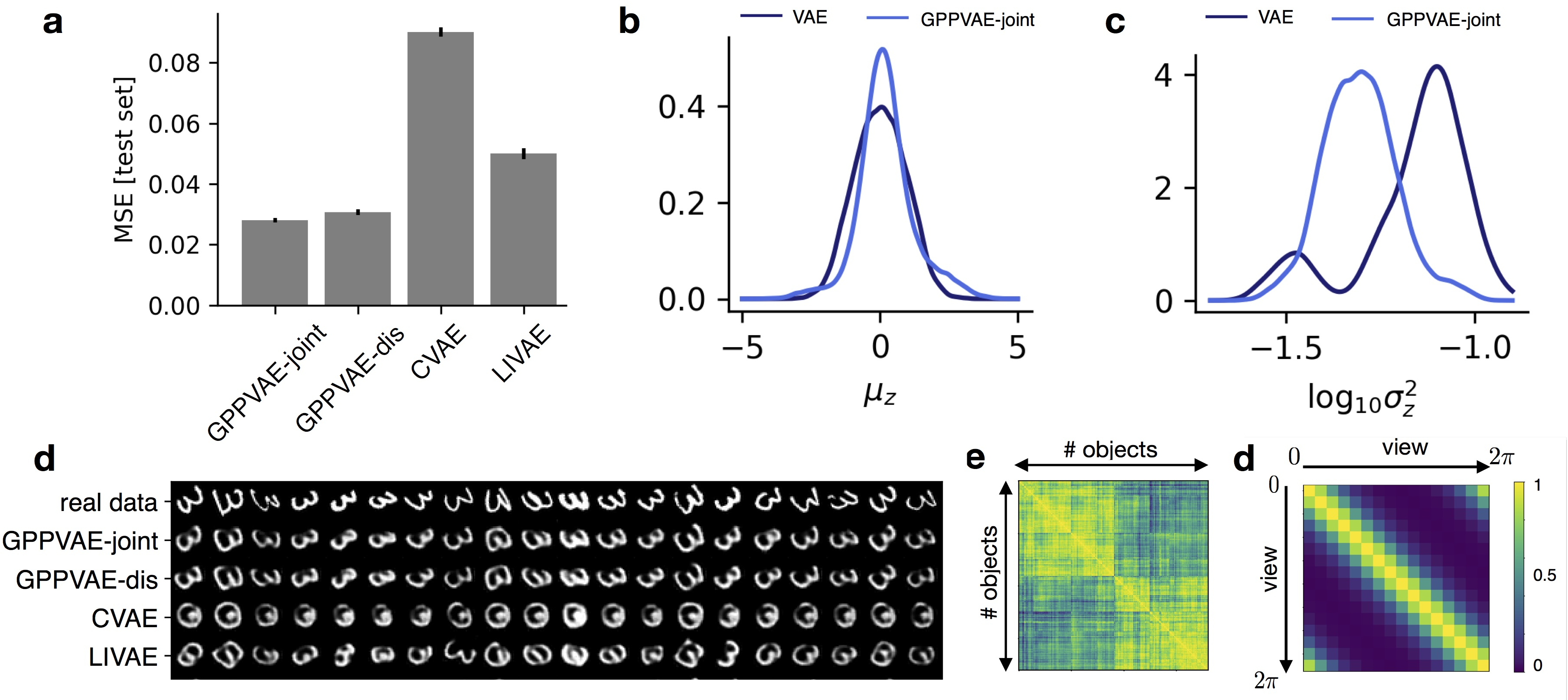

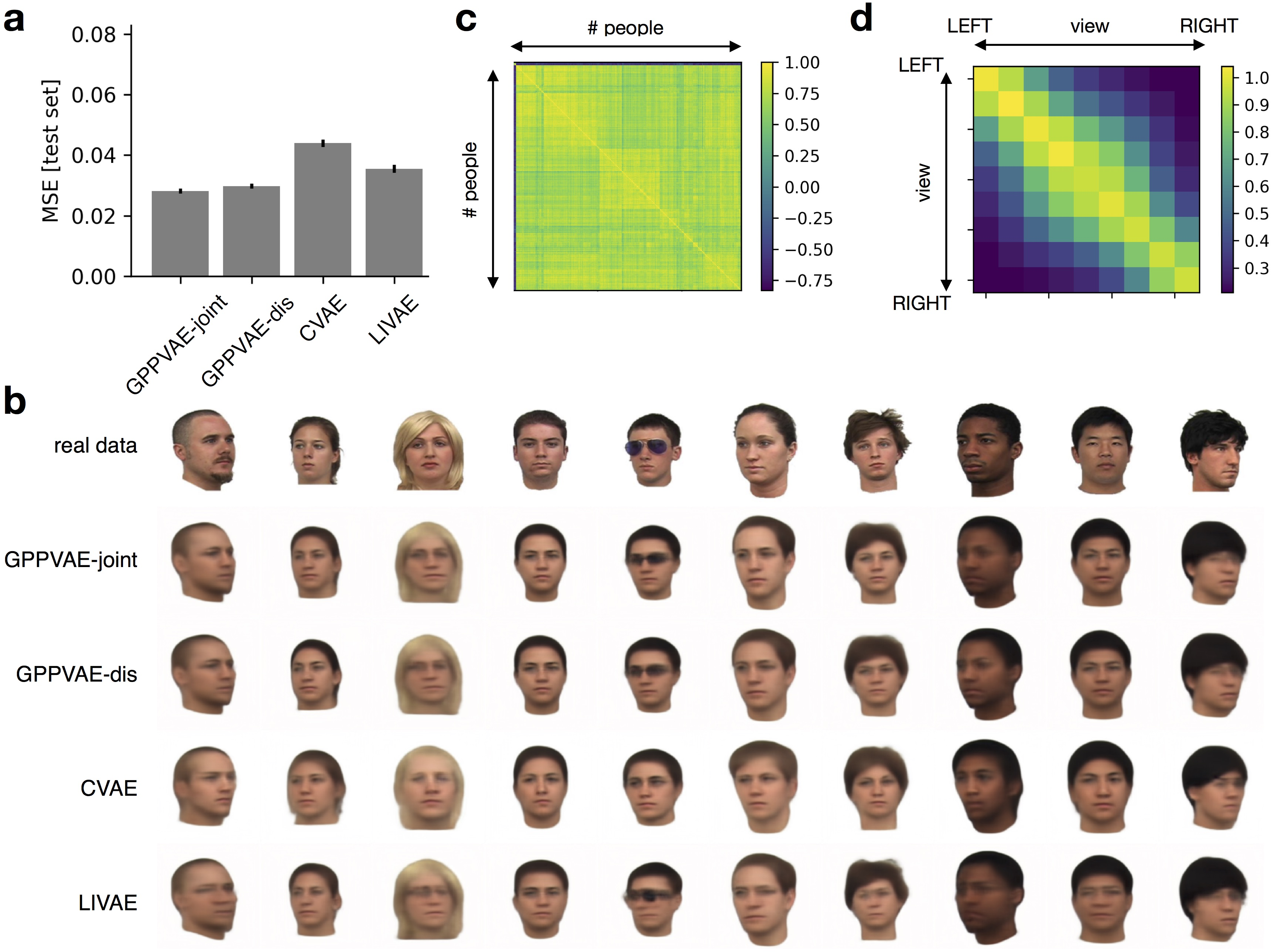

The empirical section of the paper evaluates GPPVAE on two datasets: rotated MNIST and the Face dataset. The task is to predict out-of-sample images, that is, views of an object that were missing during training, from auxiliary information. In these experiments GPPVAE consistently outperforms competing models such as CVAE and linear interpolation within the VAE latent space (LIVAE).

Figure 2: Results from experiments on rotated MNIST.

On the rotated MNIST dataset, GPPVAE achieved a lower mean squared error (MSE) than CVAE and LIVAE, illustrating its capacity to model the rotational correlations in the dataset. On the Face dataset, GPPVAE likewise outperformed these baselines, indicating that it can learn complex view and object covariances.
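For readers unfamiliar with the evaluation, the sketch below shows one plausible form of the linear-interpolation baseline and of the MSE metric. Both helper functions are hypothetical. In particular, interpolating between the latent codes of the two neighboring views is an assumed reading of the baseline, and the decoder that maps the interpolated code back to an image is omitted.

```python
import numpy as np

def interpolate_latent(z_left, z_right, angle_left, angle_right, angle_target):
    """Linearly interpolate between the latent codes of two neighboring views."""
    t = (angle_target - angle_left) / (angle_right - angle_left)
    return (1.0 - t) * z_left + t * z_right

def mse(pred, target):
    """Mean squared error between a predicted and a true image (or batch)."""
    return float(np.mean((pred - target) ** 2))
```

A baseline of this kind can only use the nearest observed views of the same object, whereas a GP prior can draw on every correlated sample through its covariance.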

Figure 3: Results from experiments on the face dataset.

Discussion and Implications

The integration of GP priors into VAEs extends generative modeling to settings where samples are correlated. By allowing the model to account for correlations within the latent space, GPPVAE makes it possible to model complex datasets for which the i.i.d. assumption is inadequate. This is particularly relevant for applications involving high-dimensional data with underlying structural dependencies, such as medical imaging or robotics.

Future work could explore enhancements such as a perception-based loss or extensions analogous to GANs, which may improve the practical utility of GPPVAE. Additionally, the paper suggests investigating factorized approximations of the GP prior to improve scalability, which would broaden the applicability of this approach to large-scale data.

Conclusion

GPPVAE introduces Gaussian Process priors into Variational Autoencoders to improve latent space modeling by capturing dependencies and correlations between samples. By leveraging the expressive power of GP priors, the approach provides a framework for applications where modeling sample correlations is crucial, and in the reported experiments it achieves better predictive performance than CVAE and linear interpolation in the latent space.