- The paper shows that the F-test yields valid inference for simple sub-models even when the underlying high-dimensional system is misspecified.

- It rigorously proves that the cumulative distribution of the F-statistic converges uniformly to a non-central F-distribution under mild regularity conditions.

- Simulation studies verify reduced bias in Type I error for heavy-tailed and non-Gaussian designs as the true dimensionality increases.

Statistical Inference with F-Statistics under High-Dimensional Misspecification

Introduction

The paper "Statistical inference with F-statistics when fitting simple models to high-dimensional data" (1902.04304) presents a rigorous theoretical analysis of the validity of the classical F-test when applied to linear sub-models fitted to high-dimensional data. The central question is whether, and under what conditions, the standard F-statistic provides valid inference about the explanatory utility of a low-dimensional (possibly misspecified) regression model when the true data-generating process is high-dimensional, with the number of true explanatory variables d far exceeding the number of observations n.

Problem Setting and Motivation

The work considers the high-dimensional linear model

y=ϑ+θ′z+ϵ

where y is a univariate response, z is a d-dimensional feature vector, and d≫n. In practice, due to the curse of dimensionality and computational constraints, only a simple (p-dimensional) model is fitted, y=α+β′x+e, where x=M′z and M is a d×p full-rank matrix (p<n). The crucial aspect is that this working model can be misspecified, in that the true relationship may not be linear in the selected components, or many relevant variables are omitted.

Such settings commonly arise in genomics, econometrics (e.g., factor modeling in macroeconomic forecasting), and quality control studies, where p explanatory variables are selected from a very large set. The key question is whether hypothesis tests performed in this reduced model—specifically, the standard F-test of H0:β=0—are approximately valid, particularly regarding Type I error control, under this type of misspecification in high dimensions.

Main Theoretical Results

The core contribution of the paper is to show that, under general conditions, the distribution of the standard F-statistic in the submodel can be uniformly approximated by the corresponding non-central F-distribution, even when the working model is misspecified and the underlying error structure is non-Gaussian and potentially heteroskedastic conditional on x.

Precise conditions include:

- d≫n, with p=O(n).

- The explanatory variables z admit an affine representation in terms of independent components, with suitable moment and bounded density conditions.

- The fitted model dimension p satisfies p/logd→0 as d,n→∞.

- Signal-to-noise ratio for the projected sub-model is required to be small (i.e., local alternatives).

Main result (Theorem 1): The supremum of the difference between the cumulative distribution function (CDF) of the observed F-statistic and that of the non-central F-distribution (with the appropriate non-centrality parameter) converges to zero as n,d→∞ under the above conditions. The result holds uniformly over a large class of model parameters, error distributions, covariance structures, and selection matrices M, excepting only a small set of pathological configurations (quantified via Haar measure arguments on the orthogonal group).

Remarkably, the theoretical framework permits misspecification: the error in the working model, e=y−α−β′x, can be non-Gaussian and dependent on x. The result establishes that, asymptotically, the classical F-test is robust to such model misspecification in high-dimensional regimes.

Simulation Study and Empirical Behavior

Extensive simulation studies were conducted to investigate the non-asymptotic validity of the F-test under various conditions. The empirical distribution of the F-test rejection probability under H0 was compared to the nominal significance levels for different types of z distributions (including heavy-tailed and bounded), a range of d and p values, and random orthogonal projections R.

The simulations reveal:

- For non-Gaussian or heavy-tailed designs, the average absolute deviation of the empirical test size from the nominal level decreases as d increases, confirming the theoretical findings regarding high-dimensional consistency.

- The effect of model misspecification (i.e., the deviation between simulated and nominal levels) weakens as d→∞ for fixed p/n.

- For Gaussian regressors, the F-test remains exact even for small d, since the working model is then always correctly specified in the sense of linear projection.

- Systematic over-rejection or under-rejection occurs for certain design distributions and small d, but this bias diminishes with increasing d.

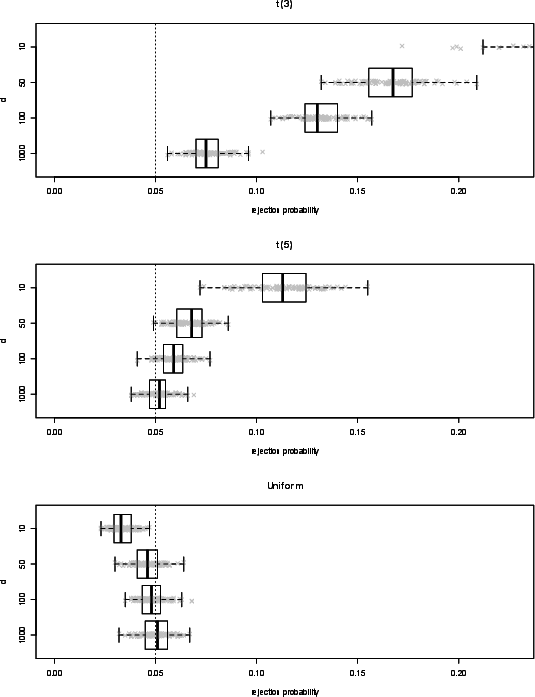

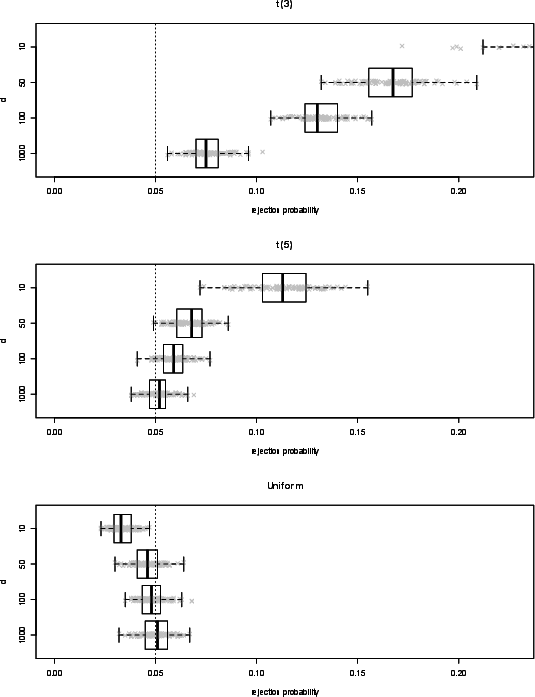

Figure 1: Box-plots of simulated rejection probabilities (pˉr)r=1100 for the F-test across increasing d demonstrate convergence of the empirical test level to nominal as d grows, depending on the distribution of z.

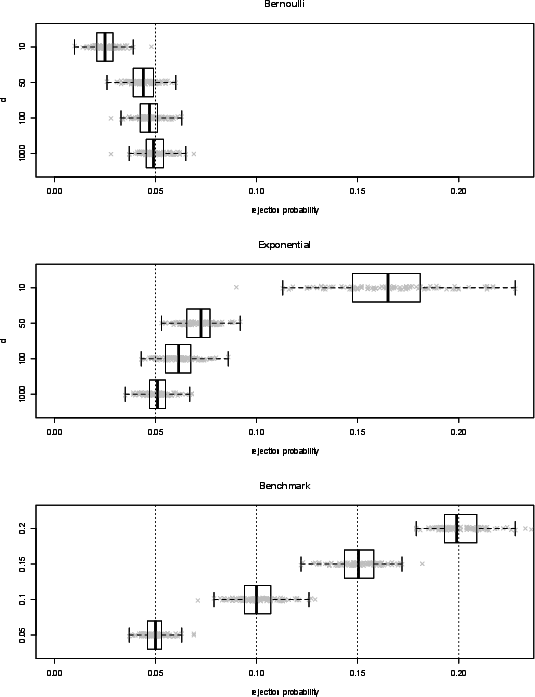

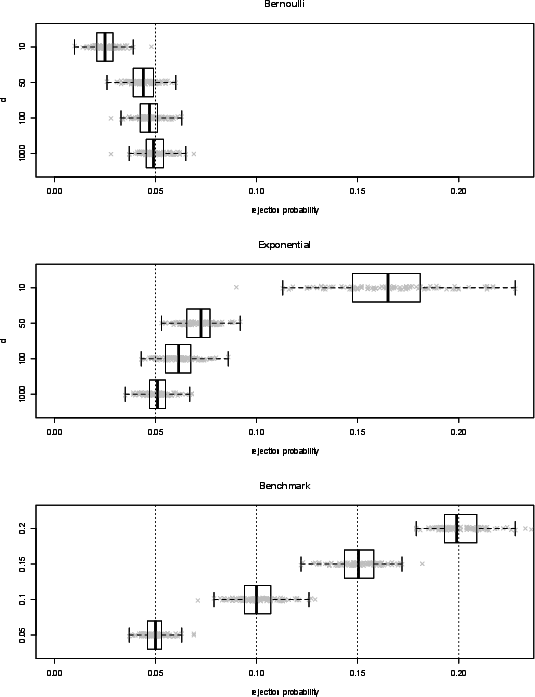

Figure 2: Box-plots for Bernoulli and exponential designs show diminishing bias and variability in F-test rejection probability as the dimension d increases; the benchmark panel facilitates comparison with expected simulation variability.

Methodological and Theoretical Implications

This analysis establishes a formal asymptotic justification for the use of F-statistics derived from simple (possibly severely misspecified) linear regression models in high-dimensional settings. The theoretical apparatus leverages invariance and orthogonal group measures to control for the possible "worst-case" misspecification introduced by projecting the very high-dimensional covariate space down to low dimensions.

Key implications include:

- Uniform Type I Error Control: Even when the working model is not an accurate reflection of the true DGP, the F-test for explanatory utility of the selected covariates is essentially valid for large d, provided mild regularity conditions hold.

- Justification for Subset Models: The findings formally support widespread empirical practice in applied fields (e.g., genomics, macroeconomics) where only small, interpretable subsets of features are entered into regression models after variable screening or dimension reduction.

- Connections to Model Selection Theory: Since surrogate parameters are central in the misspecified setting, the results connect with robust and sandwich-type inference frameworks, but provide sharper high-dimensional guarantees.

Limitations and Open Questions

Some nontrivial aspects remain. The results rely on strong independence and moment conditions for the underlying variables and require that the design matrix is "well-behaved" with respect to the target subspace. Moreover, local signal-to-noise conditions (Δ→0) are necessary for exact distributional convergence of the F-statistic. Extensions to serially dependent designs, non-i.i.d.\ structures, or more general forms of model selection (e.g., data-driven M) remain open.

Importantly, the simulation study highlights persistent over- or under-rejection in small-d or heavy-tailed settings, indicating that asymptotic theory may not govern finite-sample behavior in all regimes. Characterizing the rate of convergence and robustness to broader classes of model violations could be a fruitful direction for further research.

Future Directions

The framework developed may be adapted to other hypothesis testing settings for high-dimensional inference, including generalized linear models, penalized regression, variable selection, or post-selection inference. Moreover, formalizing theoretical guarantees under serial dependence – important for factor models and time series – represents a key theoretical extension. Analyzing the performance under double asymptotics (d,n→∞ with d≫n) for power and minimax optimality would further elucidate the role of the F-statistic as a screening tool in ultrahigh-dimensional statistics.

Conclusion

The paper rigorously establishes that the classical F-statistic maintains asymptotically valid distributional properties, and thus provides reliable inference for hypotheses concerning the explanatory power of a small subset of variables, even in the presence of extreme high-dimensionality and model misspecification. These findings have substantive implications for empirical work in high-dimensional inference, model selection, and the interpretation of linear regression results in modern applied statistics.