Hadoop Perfect File: A fast access container for small files with direct in disc metadata access

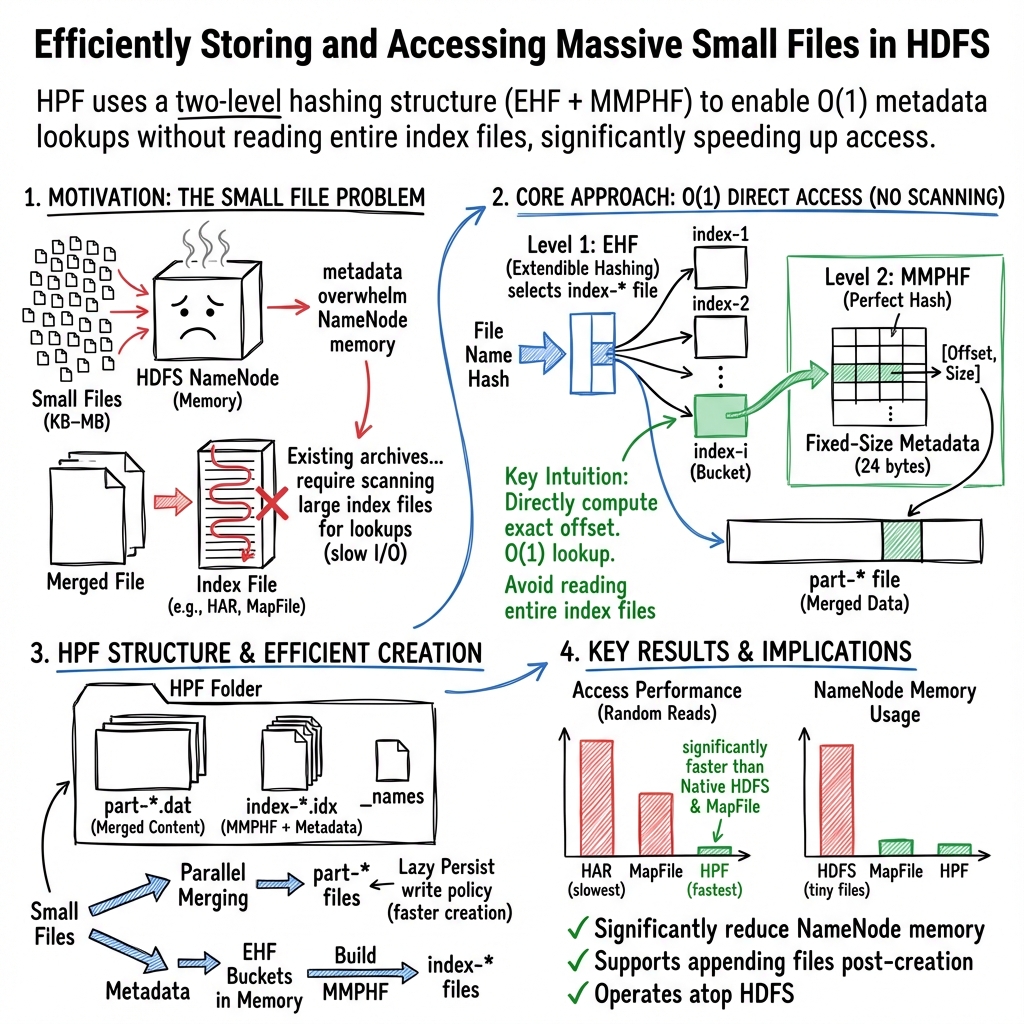

Abstract: Storing and processing massive numbers of small files is one of the major challenges for the Hadoop Distributed File System (HDFS). In order to provide fast data access, the NameNode (NN) in HDFS maintains the metadata of all files in its main memory. Hadoop performs well with a small number of large files that require relatively little metadata in the NN's memory. But for a large number of small files, Hadoop has problems such as NN memory overload caused by the huge metadata size of these small files. We present a new type of archive file, Hadoop Perfect File (HPF), to solve HDFS's small files problem by merging small files into a large file on HDFS. Existing archive files offer limited functionality and have poor performance when accessing a file in the merged file, because metadata lookup requires reading and processing the entire index file(s). In contrast, an HPF file can directly access the metadata of a particular file from its index file without having to process it entirely. The HPF index system uses two hash functions: files' metadata are distributed across index files by a dynamic hash function and, for each index file, we build an order-preserving perfect hash function that preserves the position of each file's metadata in the index file. During access, the HPF design reads only the part of the index file that contains the metadata of the searched file. An HPF file also supports appending files after its creation. Our experiments show that file access with HPF can be more than 40% faster than with the original HDFS. If we don't consider the caching effect, HPF's file access is around 179% faster than MapFile and 11294% faster than HAR file. If we consider the caching effect, HPF is around 35% faster than MapFile and 105% faster than HAR file.

Explain it Like I'm 14

Overview

This paper is about fixing a common problem in big data systems like Hadoop: handling tons of tiny files quickly and efficiently. The authors introduce a new file format called Hadoop Perfect File (HPF). HPF bundles many small files into bigger ones and uses a clever indexing method so you can find any small file’s data very fast without reading a whole index or using lots of memory.

Key Objectives

The paper asks simple questions:

- How can we store millions of small files in Hadoop without overloading its memory?

- How can we still read any one of those small files quickly?

- Can we make this work without changing Hadoop itself and still allow adding new files later?

Methods and Approach

The paper explains the challenge and then describes HPF’s design and how it works.

The problem with small files

Hadoop has a central manager called the NameNode that keeps track of file information (metadata) in memory. Big files are efficient because they don’t need much metadata per file. But millions of small files create huge amounts of metadata, which:

- Overloads the NameNode’s memory

- Slows down reading and writing

- Makes the system lag when many users access files at the same time
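To get a feel for the scale, here is a rough back-of-the-envelope sketch. The figure of about 150 bytes of NameNode memory per namespace object is a commonly quoted rule of thumb, not a number from this paper, and the function name and the two example layouts are made up for illustration.

```python
# Rough estimate of NameNode memory used by file metadata.
# ASSUMPTION: ~150 bytes per namespace object (one file entry or one block entry).
# This is a widely quoted rule of thumb, not a figure from the paper.
BYTES_PER_OBJECT = 150


def namenode_bytes(num_files: int, blocks_per_file: int = 1) -> int:
    """Each file costs one file object plus one object per block."""
    return num_files * (1 + blocks_per_file) * BYTES_PER_OBJECT


# Hypothetical layout 1: ten million small files, one block each.
small = namenode_bytes(10_000_000)
# Hypothetical layout 2: 100 large merged files of 80 blocks each.
merged = namenode_bytes(100, blocks_per_file=80)

print(f"10M small files : {small / 1e9:.1f} GB")
print(f"100 merged files: {merged / 1e6:.1f} MB")
```

Under these assumptions the metadata cost depends on the number of files and blocks, not on how many bytes they hold, which is why merging helps.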

Existing solutions merge small files into big files, but:

- They often require reading entire index files just to find one small file

- Some don’t allow adding new files later

- They may depend on the client’s memory for caching, which is not always available

What is HPF?

HPF is a special “archive” format designed for Hadoop. Think of HPF as a folder that contains:

- part-* files: big files that store the actual contents of the small files

- index-* files: compact index files that store where each small file’s content is inside a part-* file

- a names file (_names): a simple list of all small file names included

HPF merges small files into the part-* files and builds a smart index so it can jump straight to the right spot when you ask for a small file.

How HPF finds files fast (two hash functions)

Imagine a library with millions of books (small files). Instead of checking every card in the card catalog (reading the whole index), HPF uses two tricks to jump straight to the right card:

- Extensible hashing (like adding more drawers when one gets full):

    - HPF spreads file metadata across multiple index-* files (“buckets”) so no single index gets too big.

    - If a bucket gets too full, it splits and the system adds another index file.

    - This keeps each index file small (ideally about the size of one Hadoop block), which makes random access faster.

- Order-preserving minimal perfect hash (like assigning each card a unique spot with no waste or collisions):

    - Inside each index file, HPF uses a special hash function that computes the exact position of a file’s metadata.

    - “Perfect” means each file maps to a unique slot with no clashes.

    - “Order-preserving” means the slot numbers follow the order in which the entries are stored in the index file, so the slot number gives the exact byte range to read.

    - Result: HPF can calculate the exact offset (start position) and read only the tiny part of the index that matters. It does not need to scan the whole index.
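The bucket-splitting idea can be sketched with a textbook extendible-hashing directory. This is a minimal illustration, not the authors' code: the class names, the MD5-based hash, and the capacity of 4 entries per bucket are all assumptions, and each bucket simply stands in for one index-* file.

```python
import hashlib

BUCKET_CAPACITY = 4  # hypothetical; a real limit would be tied to the block size


class Bucket:
    """Stands in for one index-* file."""

    def __init__(self, depth):
        self.depth = depth  # local depth: how many hash bits this bucket owns
        self.keys = []


class ExtendibleIndex:
    def __init__(self):
        self.global_depth = 0
        self.directory = [Bucket(0)]  # always 2**global_depth slots

    @staticmethod
    def _hash(name):
        digest = hashlib.md5(name.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big")

    def bucket_for(self, name):
        # The low global_depth bits of the hash pick the directory slot.
        slot = self._hash(name) & ((1 << self.global_depth) - 1)
        return self.directory[slot]

    def insert(self, name):
        # Assumes distinct names; keep splitting until the target bucket has room.
        bucket = self.bucket_for(name)
        while len(bucket.keys) >= BUCKET_CAPACITY:
            self._split(bucket)
            bucket = self.bucket_for(name)
        bucket.keys.append(name)

    def _split(self, bucket):
        if bucket.depth == self.global_depth:
            # Double the directory; both halves point at the same buckets.
            self.directory = self.directory + self.directory
            self.global_depth += 1
        bit = 1 << bucket.depth  # the next hash bit decides which half a key joins
        low, high = Bucket(bucket.depth + 1), Bucket(bucket.depth + 1)
        for key in bucket.keys:
            (high if self._hash(key) & bit else low).keys.append(key)
        for i, b in enumerate(self.directory):
            if b is bucket:
                self.directory[i] = high if i & bit else low


index = ExtendibleIndex()
for i in range(100):
    index.insert(f"file-{i}.txt")

unique = list({id(b): b for b in index.directory}.values())
print(len(unique), "buckets, global depth", index.global_depth)
```

Only the overflowing bucket is rewritten on a split, so the other buckets (index files) stay untouched as the collection grows.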

Put simply, HPF turns “searching the whole index” into “go straight there,” giving near constant-time lookup.
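The "go straight there" step can be sketched as follows. Building a real order-preserving minimal perfect hash function is beyond a short example, so a plain dictionary stands in for it here; a real function would return the same slot numbers while using far less memory. The record contents (padded file names) and all function names are placeholders for illustration only.

```python
import io

RECORD_SIZE = 24  # fixed metadata entry size, as stated in this summary


def build_index(names):
    """Write one fixed-size record per name, in order, and return (bytes, slot_of)."""
    # STAND-IN: a dict plays the role of the order-preserving perfect hash.
    slot_of = {name: i for i, name in enumerate(names)}
    records = [
        name.encode("utf-8")[:RECORD_SIZE].ljust(RECORD_SIZE, b"\0")  # dummy payload
        for name in names
    ]
    return b"".join(records), slot_of


def lookup(index_file, slot_of, name):
    """Seek to the record's computed offset and read only RECORD_SIZE bytes."""
    offset = slot_of[name] * RECORD_SIZE
    index_file.seek(offset)
    return offset, index_file.read(RECORD_SIZE)


index_bytes, slot_of = build_index(["a.log", "b.log", "c.log"])
offset, record = lookup(io.BytesIO(index_bytes), slot_of, "c.log")
print(offset, record)
```

Because every record has the same size, the offset is just the slot number times the record size, and nothing else in the index has to be read.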

Building and using HPF

- Merging process:

    - HPF creates multiple part-* files (e.g., part-0, part-1) and can use multiple threads to merge files in parallel.

    - Each small file’s content is appended to a part-* file; its metadata (file name hash, which part file, offset, size) is recorded.

    - A temporary index is kept during creation for recovery if something fails.

    - If a part-* file reaches a set size limit, HPF creates a new one (part-2, part-3, etc.).

- Index files:

    - Metadata entries have a fixed size (24 bytes), so the position of any entry is easy to compute.

    - The extensible hash spreads metadata across index-* files.

    - Inside each index file, the perfect hash lets HPF compute the exact offset to read.

- Appending new files:

    - Unlike older formats (like HAR or MapFile), HPF supports adding more files after creation without heavy rework or sorting.

- Memory and caching:

    - HPF does not rely on loading index data into the client’s memory.

    - Instead, it can use Hadoop’s DataNode caching (Centralized Cache Management) so frequently used index parts stay in fast memory near the data, reducing client-side memory needs.
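The merge and append steps above can be sketched in a few lines. The summary gives the record's fields and its 24-byte size but not the width of each field, so the layout below (8-byte name hash, 4-byte part id, 8-byte offset, 4-byte size) is an assumption, as are the CRC32 name hash, the in-memory part file, and the function names.

```python
import io
import struct
import zlib

# ASSUMED layout: name hash (8 bytes), part id (4), offset (8), size (4) = 24 bytes.
RECORD = struct.Struct(">QIQI")


def merge(files, part, part_id=0):
    """Append each file's bytes to `part` and return one packed record per file."""
    records = []
    for name, data in files.items():
        part.seek(0, io.SEEK_END)          # always write at the current end
        offset = part.tell()
        part.write(data)
        name_hash = zlib.crc32(name.encode("utf-8"))  # placeholder hash
        records.append(RECORD.pack(name_hash, part_id, offset, len(data)))
    return records


def read_file(record, part):
    """Use the record's offset and size to read exactly one small file."""
    _name_hash, _part_id, offset, size = RECORD.unpack(record)
    part.seek(offset)
    return part.read(size)


part0 = io.BytesIO()  # stands in for part-0 on HDFS
records = merge({"a.txt": b"hello", "b.txt": b"small files"}, part0)
more = merge({"c.txt": b"appended later"}, part0)  # adding a file after creation

print(read_file(records[1], part0))
print(read_file(more[0], part0))
```

Appending only writes at the end of the part file and adds new records, so the entries already written keep their offsets.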

Main Findings

The experiments compared HPF to:

- Native Hadoop (regular small files directly in HDFS)

- MapFile (a sorted merge format with an index)

- HAR (an older archive format with two index levels)

Key results:

- HPF can access files more than 40% faster than reading small files directly from HDFS in some tests.

- Without caching:

    - HPF is about 179% faster than MapFile (about 2.8× as fast).

    - HPF is about 11,294% faster than HAR (about 114× as fast).

- With caching:

    - HPF is about 35% faster than MapFile.

    - HPF is about 105% faster than HAR (about 2× as fast).

In short, HPF consistently reads small files faster and avoids the common slowdowns of existing archive formats.
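To turn the percentages above into "times as fast" figures, one common reading is that "X% faster" means a speed ratio of 1 + X/100. That reading is an assumption here; the paper may define its percentages differently. The helper name is made up for illustration.

```python
def speedup_factor(percent_faster):
    """ASSUMPTION: 'X% faster' means (1 + X/100) times the baseline speed."""
    return 1 + percent_faster / 100


reported = [
    ("MapFile, no caching", 179),
    ("HAR, no caching", 11294),
    ("MapFile, with caching", 35),
    ("HAR, with caching", 105),
]

for label, percent in reported:
    print(f"{label}: {speedup_factor(percent):.2f}x as fast")
```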

Why It Matters

Many real-world systems (social media, e-commerce, websites, satellites, logs) produce massive numbers of small files. If those files are slow to access or cause memory problems, you get:

- Delays in analytics and monitoring

- Difficulties scaling systems

- Higher costs and poor user experience

HPF makes handling these small files efficient:

- It reduces memory load on the NameNode by merging files

- It keeps file lookups fast by reading only tiny parts of a small index

- It lets you add new files over time, which is essential for continuous data like logs

Implications and Impact

HPF can help big data platforms:

- Run faster analytics on lots of small files (e.g., logs, images, sensor data)

- Use less client memory because they don’t need to cache large index files

- Scale more smoothly without changing Hadoop itself

- Support ongoing data growth by allowing new files to be appended

Overall, HPF is a practical, high-performance way to make Hadoop handle millions of small files almost as efficiently as big files, improving speed and reliability for modern data-heavy applications.
