- The paper introduces KeypointGAN, a self-supervised method that learns interpretable 2D keypoints from unlabelled videos to capture object poses.

- It utilizes a dual representation and a geometric bottleneck, separating pose from appearance through an image-to-image translation network.

- Results demonstrate state-of-the-art performance on benchmarks like Human3.6M and facial keypoints without relying on labeled images.

Self-supervised Learning of Interpretable Keypoints from Unlabelled Videos

The paper "Self-supervised Learning of Interpretable Keypoints from Unlabelled Videos" presents KeypointGAN, a novel approach for recognizing object poses using only unlabelled videos, enhancing semi-supervised learning paradigms by leveraging empirical priors on object configurations. This summary focuses on explaining the practical implications, technical methodology, and potential applications of this approach in real-world scenarios.

KeypointGAN Architecture

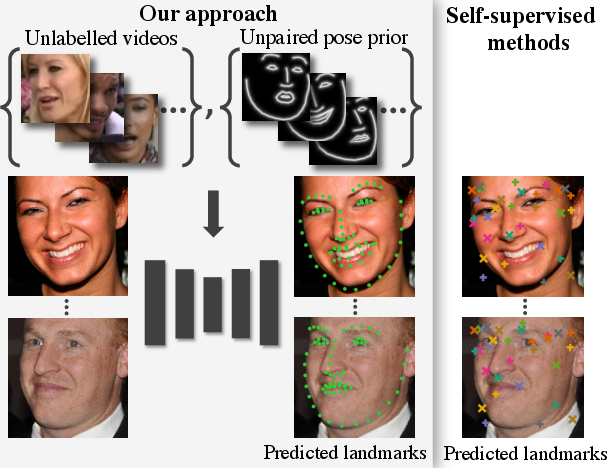

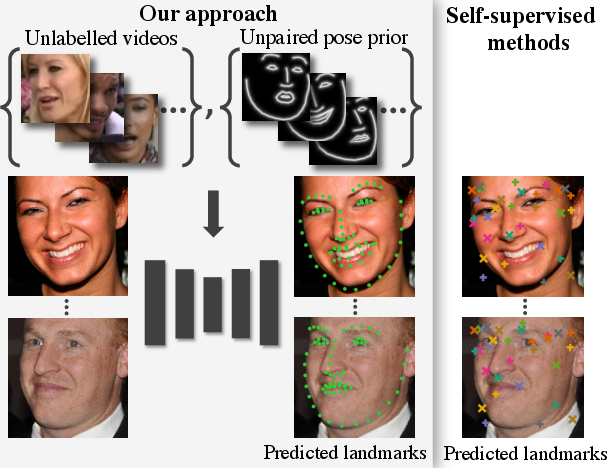

KeypointGAN utilizes a dual representation of object pose, encoded as a set of 2D keypoints and as a pictorial skeleton image. This methodology capitalizes on an image-to-image translation network to generate these pictorial representations, facilitating a more nuanced separation of an object's pose from its appearance (Figure 1).

Figure 1: Learning landmark detectors from unpaired data. KeypointGAN achieves state-of-the-art detection by leveraging unlabelled videos.

The architecture comprises several key components:

- Pose Encoder (Φ): Maps input images to pose skeletons. It integrates a geometric bottleneck which enforces semantic separation between pose and appearance.

- Pose Decoder (Ψ): Reconstructs the input image from the pose skeleton and auxiliary image data, ensuring that this representation solely encapsulates geometric information.

- Adversarial Discriminator (D): Ensures that outputted pose skeletons conform to a priori learned distributions, utilizing unpaired pose samples.

The system is optimized through a combination of perceptual loss for accurate reconstruction and adversarial loss to ensure geometric validity.

Implementation Details

The implementation of KeypointGAN requires several considerations:

Training Setup

- Data Requirement: The model utilizes large datasets of video frames and unpaired poses. Datasets should be divided to ensure no overlap between training frames and pose samples.

- Learning Objectives: The primary objectives are the minimization of perceptual loss to improve image reconstructions and adversarial loss to align with pose priors.

- Optimizer: While standard stochastic gradient descent optimizations can be applied, tuning hyperparameters such as learning rates and loss balancing factors is critical for convergence.

Architectural Insights

- Geometric Bottleneck: It provides a critical separation mechanism enabling pose feature learning without contamination by appearance features.

- Conditional Decoding: Introduces appearance synthesis from auxiliary frames to complete the reconstruction process effectively, catering to potential appearance discrepancies.

- Discriminator Usage: By employing adversarial training, the learned poses align with potential configurations, allowing KeypointGAN to predict human-recognizable keypoints directly.

Experimental results indicate that KeypointGAN achieves superior performance in several benchmark settings such as Human3.6M, 300-W for facial keypoints, and cat datasets, without requiring labeled images for training. The critical success lies in maintaining robust landmark detection across a variety of poses and movements previously unencountered in training data, showcasing adaptability and generalization capabilities.

Applications of this method extend across automatic video annotation, interactive computer vision systems where unlabelled data is abundant, and potentially in robotics where real-time pose estimation is pivotal.

KeypointGAN introduces a robust framework for unsupervised landmark detection. It innovatively bridges the gap between unlabelled video content and structured pose recognition via geometric abstraction and adversarial alignment methodologies. This work paves the way for more autonomous and flexible systems capable of learning rich geometric representations with minimal supervision, suggesting profound implications for fields requiring nuanced object and scene understanding. Future research might explore scaling this approach to more dynamic environments or integrating further modalities such as depth and motion for enhancing 3D pose recognition.