- The paper presents a neural network architecture that integrates the previous frame's segmentation mask to achieve real-time hair segmentation on mobile GPUs.

- The method employs extensive data augmentation and architectural optimizations, attaining IOU scores around 80% with an inference time of 5.7ms on an iPhone XS for a 512x512 input.

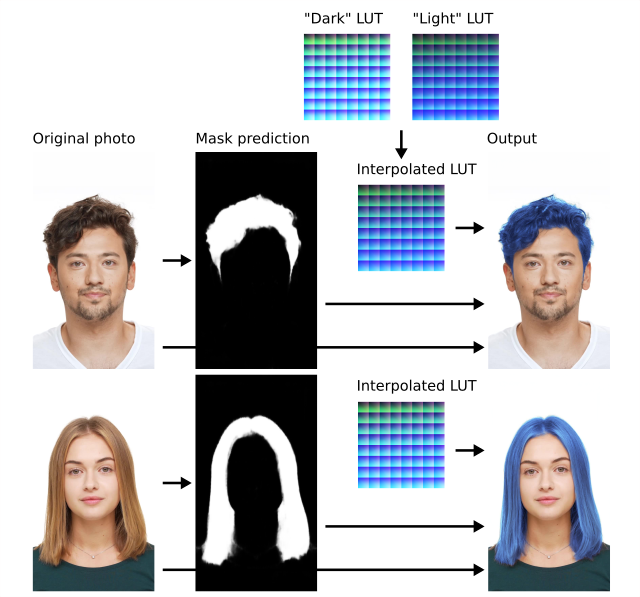

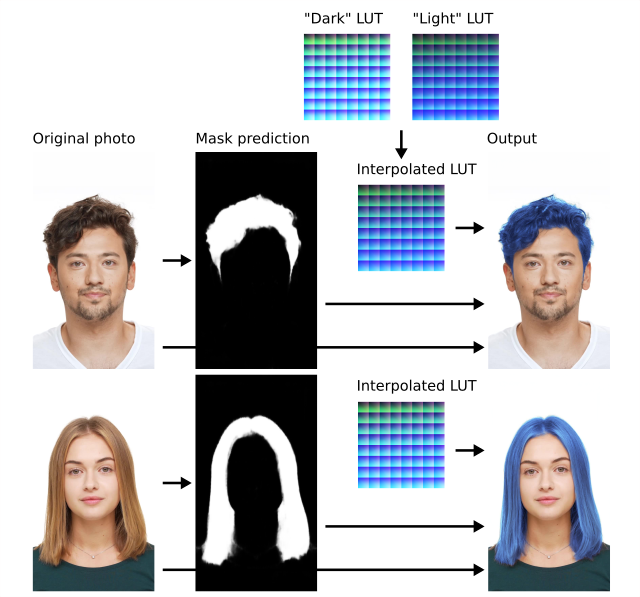

- The study introduces a hair recoloring technique using LUT interpolation based on average hair intensity, ensuring consistent results across various subjects and lighting conditions.

Real-Time Hair Segmentation and Recoloring on Mobile GPUs

This paper introduces a neural network-based approach for real-time hair segmentation from a single camera input, designed for mobile applications. The model achieves real-time inference speeds on mobile GPUs and is deployed in a major AR application.

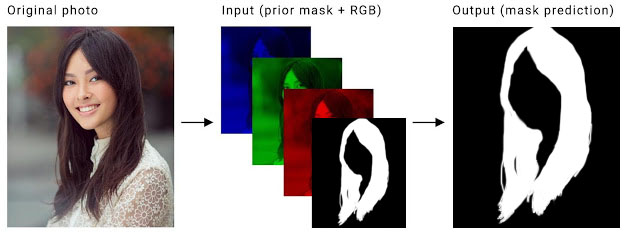

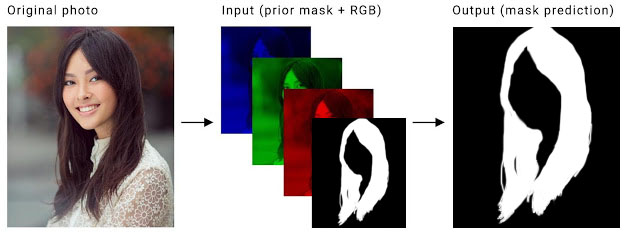

The core of the system is a convolutional neural network designed for semantic segmentation. To ensure temporal consistency without the computational overhead of LSTMs, the network uses the segmentation mask from the previous frame as an additional input channel (Figure 1). The network processes a four-channel input tensor, consisting of the current RGB frame concatenated with the previous frame's segmentation mask, to predict the current frame's hair segmentation mask.

Figure 1: Network input for our segmentation model showing how the previous mask is concatenated to the current frame.
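The four-channel input described above can be illustrated with a minimal sketch. It uses plain nested lists in place of a real tensor library, and the helper names `empty_mask` and `build_network_input` are hypothetical, not taken from the paper. The all-zero mask for the first frame is an assumption about how a pipeline might start when no previous prediction exists.

```python
from typing import List

Pixel = List[float]        # one pixel, channels last
Image = List[List[Pixel]]  # H x W x C
Mask = List[List[float]]   # H x W, values in [0, 1]


def empty_mask(height: int, width: int) -> Mask:
    """All-zero mask, assumed here for frames with no previous prediction."""
    return [[0.0] * width for _ in range(height)]


def build_network_input(rgb_frame: Image, prev_mask: Mask) -> Image:
    """Append the previous mask to every RGB pixel as a fourth channel."""
    same_height = len(rgb_frame) == len(prev_mask)
    same_width = all(len(r) == len(m) for r, m in zip(rgb_frame, prev_mask))
    if not (same_height and same_width):
        raise ValueError("frame and mask must share the same height and width")
    return [
        [pixel + [mask_value] for pixel, mask_value in zip(frame_row, mask_row)]
        for frame_row, mask_row in zip(rgb_frame, prev_mask)
    ]


# Tiny 2x2 frame with RGB values in [0, 1].
frame = [
    [[0.1, 0.2, 0.3], [0.4, 0.5, 0.6]],
    [[0.7, 0.8, 0.9], [1.0, 0.0, 0.5]],
]
first_input = build_network_input(frame, empty_mask(2, 2))
```

On later frames, the mask predicted for frame t-1 would be passed as `prev_mask` when building the input for frame t, which is what lets a feed-forward network carry temporal information without recurrent state.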

Dataset and Training Procedure

The training dataset consists of tens of thousands of annotated images with pixel-accurate labels for hair, glasses, neck, skin, and lips (Figure 2). Agreement between human annotators on hair markup was measured at 88% Intersection-Over-Union (IOU), which is treated as an upper bound for model evaluation. To improve temporal continuity and handle discontinuities, the training procedure applies several data augmentation techniques: using an empty previous mask, applying affine transformations to the ground truth mask, and applying thin plate spline smoothing to the original image.

Figure 2: Example of the detailed dataset annotation process, capturing various foreground elements.
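The first two augmentations listed above (empty previous mask, affine-transformed ground truth mask) could be sketched as follows. This is an illustration only: the sampling probability, the rotation, scale, and shift ranges, and the function names are all hypothetical, and the thin plate spline transformation of the image is not shown.

```python
import math
import random
from typing import List

Mask = List[List[int]]  # H x W, 1 = hair, 0 = background


def affine_warp_mask(mask: Mask, angle_deg: float, scale: float,
                     dx: float, dy: float) -> Mask:
    """Rotate and scale about the mask centre, then translate.

    Uses inverse mapping with nearest-neighbour sampling; pixels that map
    outside the source mask become background.
    """
    h, w = len(mask), len(mask[0])
    cx, cy = (w - 1) / 2.0, (h - 1) / 2.0
    cos_a = math.cos(math.radians(angle_deg))
    sin_a = math.sin(math.radians(angle_deg))
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Undo translation, then rotation and scale, to find the source pixel.
            tx, ty = x - dx - cx, y - dy - cy
            sx = (cos_a * tx + sin_a * ty) / scale + cx
            sy = (-sin_a * tx + cos_a * ty) / scale + cy
            ix, iy = round(sx), round(sy)
            if 0 <= ix < w and 0 <= iy < h:
                out[y][x] = mask[iy][ix]
    return out


def sample_previous_mask(gt_mask: Mask, rng: random.Random) -> Mask:
    """Pick a simulated 'previous frame' mask for one training example."""
    if rng.random() < 0.25:  # hypothetical probability of the empty-mask case
        return [[0] * len(gt_mask[0]) for _ in gt_mask]
    return affine_warp_mask(
        gt_mask,
        angle_deg=rng.uniform(-10.0, 10.0),  # hypothetical ranges
        scale=rng.uniform(0.9, 1.1),
        dx=rng.uniform(-2.0, 2.0),
        dy=rng.uniform(-2.0, 2.0),
    )
```

The warped ground truth stands in for an imperfect prediction from the preceding frame, so the network sees previous masks that are roughly, but not exactly, aligned with the current image.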

Implementation Details

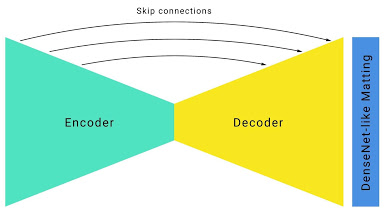

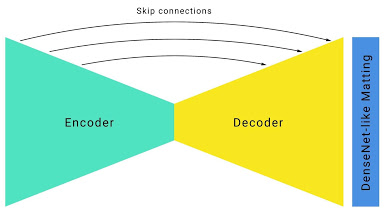

The network architecture builds upon a standard hourglass segmentation network with skip connections (Figure 3). Key architectural improvements for real-time mobile inference include: employing large convolution kernels with large strides in early layers, aggressive downsampling with skip connections, optimized E-Net bottlenecks, and the addition of DenseNet layers for edge refinement.

Figure 3: The customized hourglass network architecture with skip connections, optimized for real-time performance on mobile devices.
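To see why large strides in early layers help, a rough cost estimate is useful. The sketch below uses the standard convolution output-size formula and counts multiply-accumulate operations for two hypothetical first layers. The kernel sizes, strides, and channel counts are illustrative assumptions, not values reported in the paper.

```python
def conv_output_size(size: int, kernel: int, stride: int, padding: int) -> int:
    """Spatial output size of a convolution along one dimension."""
    return (size + 2 * padding - kernel) // stride + 1


def conv_macs(size: int, kernel: int, stride: int, padding: int,
              c_in: int, c_out: int) -> int:
    """Multiply-accumulate count for a square input and a square kernel."""
    out = conv_output_size(size, kernel, stride, padding)
    return out * out * kernel * kernel * c_in * c_out


# Hypothetical first layers on a 512x512, four-channel input.
strided = conv_macs(512, kernel=7, stride=4, padding=3, c_in=4, c_out=8)
dense = conv_macs(512, kernel=3, stride=1, padding=1, c_in=4, c_out=8)

print(conv_output_size(512, 7, 4, 3))  # 128
print(strided, dense)                  # 25690112 75497472
```

Under these assumptions the strided 7x7 layer costs about a third of the dense 3x3 layer, and every subsequent layer operates on a feature map with 16 times fewer spatial positions.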

Hair Recoloring Methodology

The paper introduces a two-step hair recoloring technique (Figure 4). In the preparation step, light and dark hair reference images are selected, their average hair intensity is calculated, and a set of effects (LUTs) is designed for each. In the application step, the hair segmentation mask is obtained for an input image, the average hair intensity is calculated, the reference LUTs are interpolated based on that intensity, and the interpolated LUT is applied to the segmented hair area.

Figure 4: The hair recoloring procedure using artist-designed LUTs for light and dark hair intensities.
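The application step can be sketched in a few lines. Several simplifications here are assumptions rather than details from the paper: each LUT is reduced to a 256-entry table indexed by pixel intensity, intensity is taken as the plain channel mean, interpolation between the two reference LUTs is linear, and the result is blended with the original pixel using the soft mask value.

```python
from typing import List, Sequence, Tuple

RGB = Tuple[float, ...]  # three channel values in [0, 255]
LUT = List[RGB]          # 256 entries: input intensity -> output colour


def intensity(pixel: Sequence[float]) -> float:
    """Channel mean as a stand-in intensity measure (an assumption)."""
    return sum(pixel) / 3.0


def average_hair_intensity(image, mask) -> float:
    """Mean intensity over pixels whose mask value exceeds 0.5."""
    values = [intensity(p)
              for row, mask_row in zip(image, mask)
              for p, m in zip(row, mask_row) if m > 0.5]
    if not values:
        raise ValueError("mask contains no hair pixels")
    return sum(values) / len(values)


def interpolate_luts(dark_lut: LUT, light_lut: LUT,
                     dark_ref: float, light_ref: float, avg: float) -> LUT:
    """Linearly blend the two reference LUTs by where avg falls between them."""
    t = (avg - dark_ref) / (light_ref - dark_ref)
    t = min(1.0, max(0.0, t))  # clamp outside the reference range
    return [tuple((1 - t) * d + t * l for d, l in zip(dark_c, light_c))
            for dark_c, light_c in zip(dark_lut, light_lut)]


def recolor(image, mask, lut: LUT):
    """Apply the LUT inside the hair mask, blending by the soft mask value."""
    result = []
    for row, mask_row in zip(image, mask):
        new_row = []
        for pixel, m in zip(row, mask_row):
            index = min(255, max(0, round(intensity(pixel))))
            target = lut[index]
            new_row.append(tuple((1 - m) * p + m * c
                                 for p, c in zip(pixel, target)))
        result.append(new_row)
    return result
```

Because the blend weight depends on the measured hair intensity, a subject with dark hair draws mostly on the effect designed for the dark reference and a subject with light hair on the light one, which is how one effect can look consistent across people.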

Results

The model achieves real-time performance on mobile devices by running the entire pipeline on the mobile GPU, including camera input, ML inference (using TFLite GPU), and rendering. For a 512x512 input, the inference time is 5.7ms on iPhone XS and 19ms on Pixel 3; for a 256x256 input, it is 1.9ms and 6ms respectively. The corresponding IOU scores are 81.0% for the 512x512 input and 80.2% for the 256x256 input. The hair recoloring results are consistent across subjects and independent of the original hair color (Figure 5).

Figure 5: Hair recoloring samples demonstrate consistent results across different subjects and original hair colors.
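The IOU scores reported above compare a predicted mask against a ground truth mask. A minimal sketch of the metric on binary masks follows; the function name and the convention of returning 1.0 when both masks are empty are choices made for this example.

```python
from typing import List

BinaryMask = List[List[int]]  # H x W, 1 = hair, 0 = background


def iou(pred: BinaryMask, truth: BinaryMask) -> float:
    """Intersection-Over-Union of two binary masks of equal shape."""
    intersection = 0
    union = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p and t:
                intersection += 1
            if p or t:
                union += 1
    # Two empty masks agree perfectly (a convention chosen for this sketch).
    return intersection / union if union else 1.0


pred = [[1, 1, 0],
        [0, 1, 0]]
truth = [[1, 0, 0],
         [0, 1, 1]]
print(iou(pred, truth))  # 0.5
```

Here two pixels are marked as hair in both masks and four pixels are marked in at least one, giving 2 / 4 = 0.5.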

Conclusion

The paper presents a practical solution for real-time hair segmentation and recoloring on mobile GPUs, suitable for AR applications. The proposed network architecture and training procedure balance accuracy and speed, enabling deployment on mobile devices. The hair recoloring technique, based on LUT interpolation, provides realistic results across various hair types and lighting conditions. This work demonstrates the feasibility of deploying computationally intensive machine learning tasks on mobile platforms for real-time AR experiences.