- The paper introduces Green AI, which advocates treating efficiency as a key evaluation metric alongside accuracy in AI research.

- It argues that escalating compute use, evidenced by a 300,000x increase in training compute over the six years from 2012, yields diminishing returns and raises environmental concerns.

- The research calls for transparent reporting of computational costs and promotes sustainable, accessible methodologies to broaden AI participation.

Green AI: Evaluating Efficiency in AI Research

The paper "Green AI" addresses the rapidly increasing computational demands of AI research, which have a significant environmental impact and often inhibit participation from under-resourced researchers. The authors propose integrating efficiency as a critical evaluation metric for AI research, complementing traditional metrics like accuracy.

Introduction and Motivation

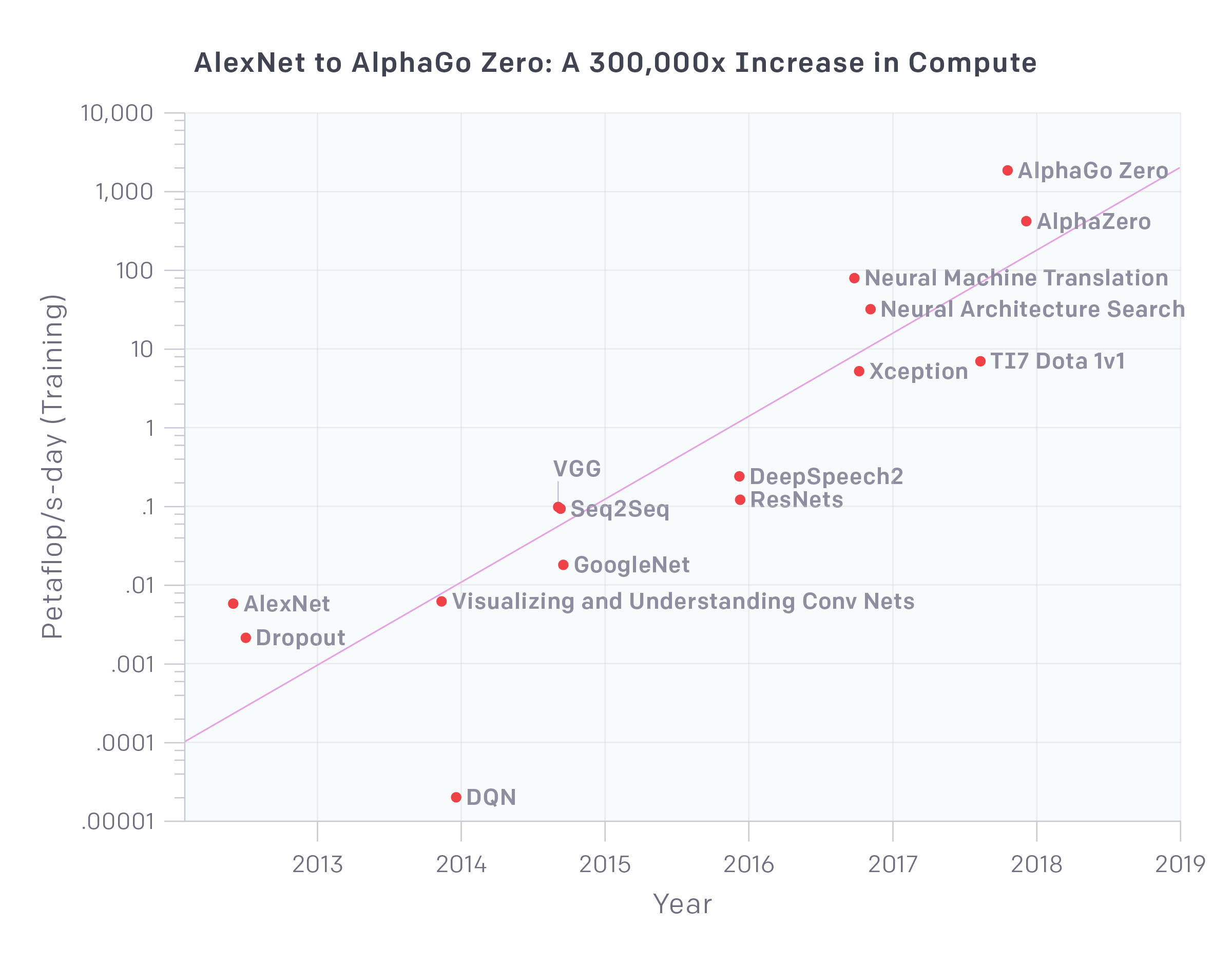

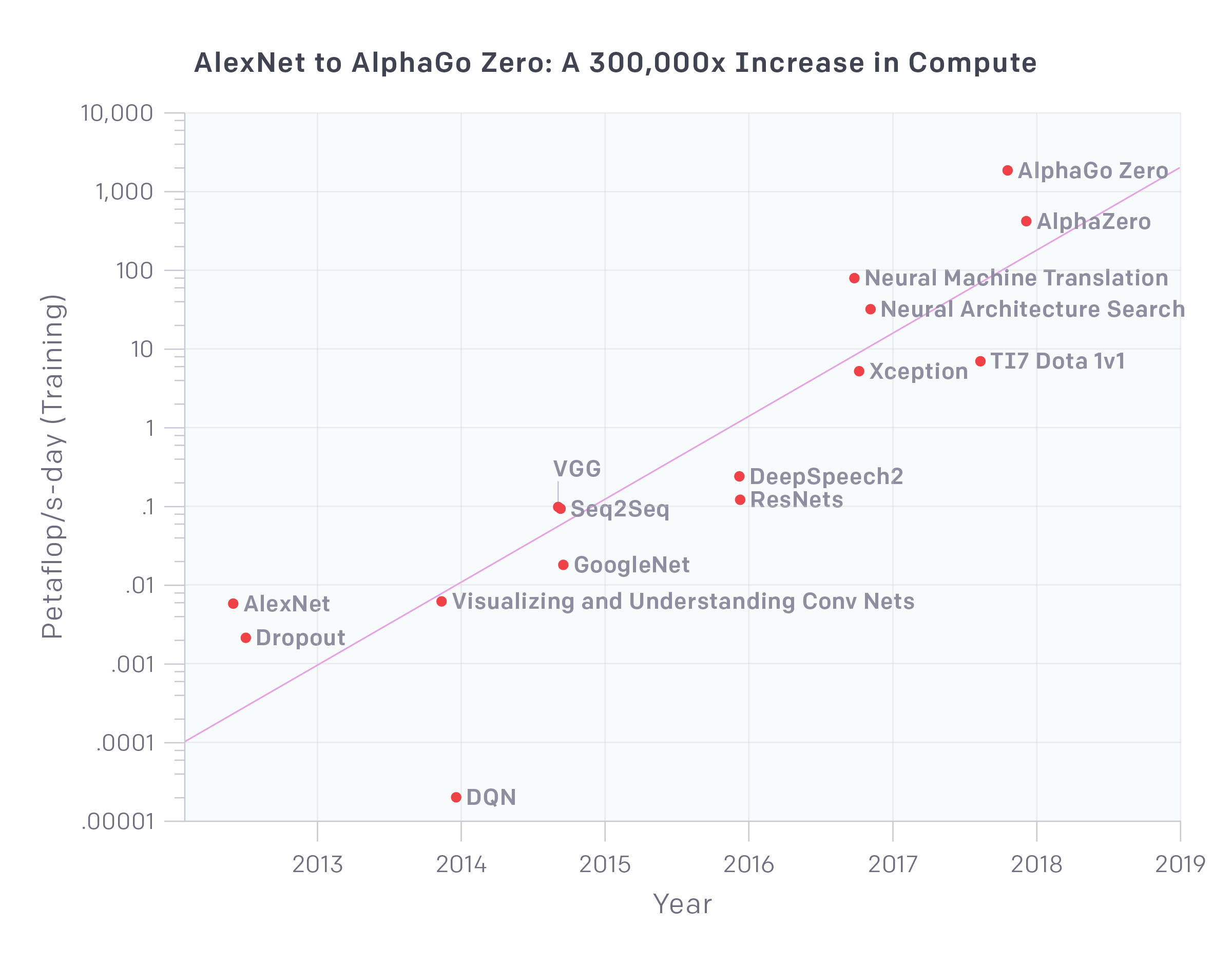

Over the past decade, deep learning has driven significant advances in AI, but at an enormous computational and environmental cost. From AlexNet in 2012 to later high-performance models like AlphaZero, the compute required for training has grown exponentially, doubling approximately every few months.

Figure 1: The amount of compute used to train deep learning models has increased 300,000x in 6 years.
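
As a rough sanity check on the "every few months" figure, the doubling time implied by the caption can be worked out directly. The sketch below assumes smooth exponential growth over the whole six-year period, which is a simplification; the function name `doubling_time_months` is illustrative and not from the paper.

```python
import math


def doubling_time_months(growth_factor: float, period_years: float) -> float:
    """Doubling time (in months) implied by constant exponential growth.

    Assumes growth is smooth over the whole period, which is a simplification.
    """
    # Number of times the quantity doubled to reach growth_factor.
    doublings = math.log2(growth_factor)
    return period_years * 12 / doublings


if __name__ == "__main__":
    months = doubling_time_months(300_000, 6)
    print(f"Implied doubling time: {months:.1f} months")
```

Under this assumption, a 300,000x increase over 6 years corresponds to about 18 doublings, or one doubling roughly every four months.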

This paper advocates a shift in AI research towards "Green AI," which evaluates models on efficiency as well as accuracy, with the aim of lowering energy consumption and making research more accessible. The primary goal is to enable broader participation in AI research by reducing the financial and environmental costs of developing, training, and deploying models.

Analysis of Red AI Practices

Red AI refers to research practices that emphasize achieving state-of-the-art results predominantly through substantial computational resources. This approach often results in diminishing returns, where increased resource allocation yields smaller increments in model performance.

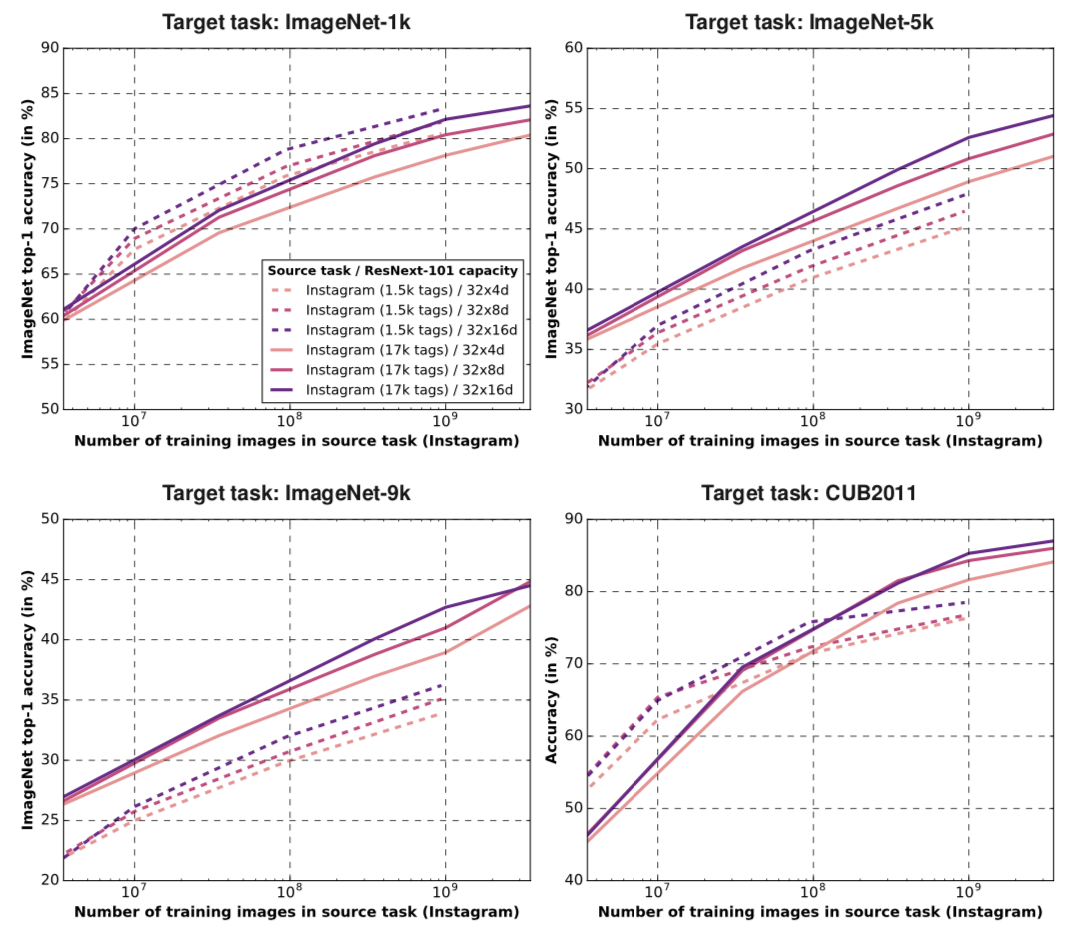

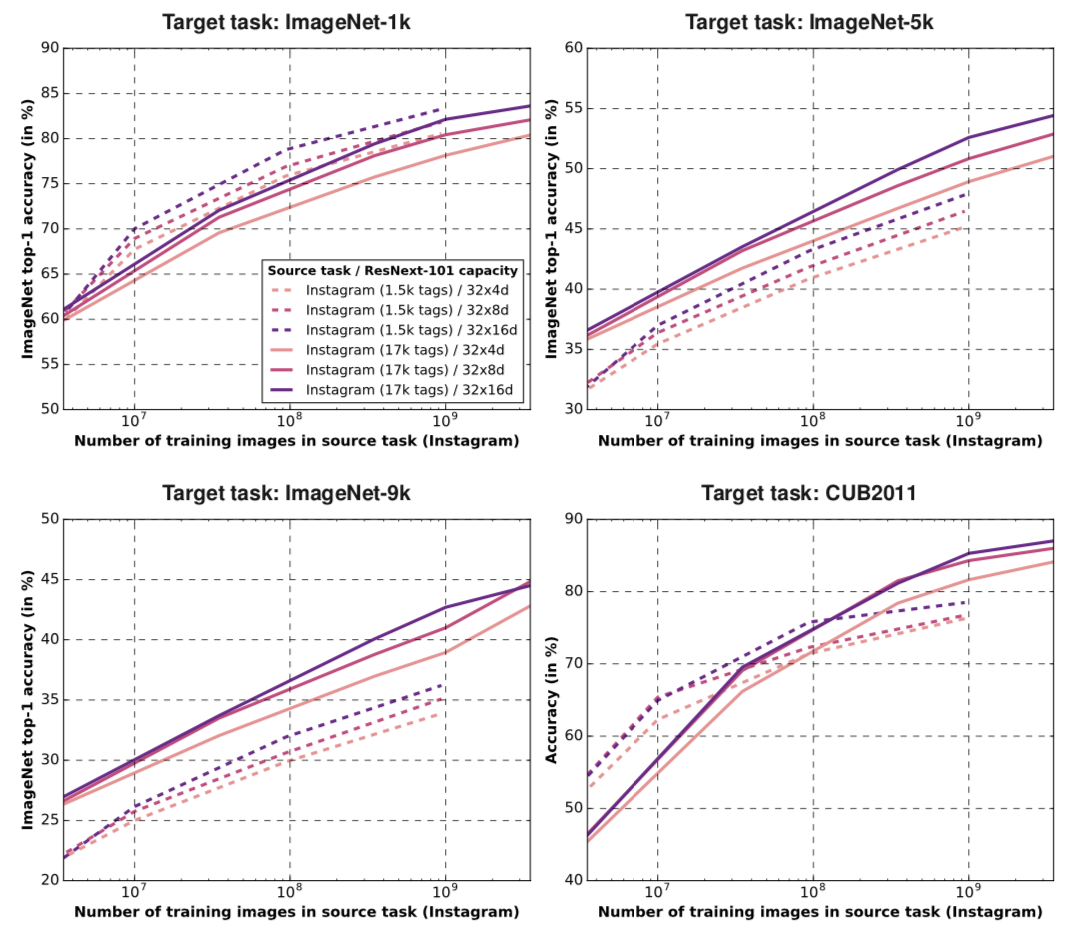

Figure 2: Diminishing returns of training on more data: object detection accuracy increases linearly as the number of training examples increases exponentially.
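
A linear gain in accuracy for an exponential increase in data is equivalent to accuracy growing with the logarithm of the dataset size. The sketch below illustrates what that implies for data requirements. The intercept and slope are made-up illustrative constants, not values from the paper or its figure, and both function names are hypothetical.

```python
import math


def accuracy_from_examples(n_examples: float, intercept: float,
                           gain_per_decade: float) -> float:
    """Log-linear model: each 10x more data adds a fixed number of points."""
    return intercept + gain_per_decade * math.log10(n_examples)


def examples_needed(target_accuracy: float, intercept: float,
                    gain_per_decade: float) -> float:
    """Invert the log-linear model to get the dataset size for a target."""
    return 10 ** ((target_accuracy - intercept) / gain_per_decade)


if __name__ == "__main__":
    # Hypothetical constants chosen only to illustrate the shape of the curve.
    INTERCEPT, GAIN = 10.0, 5.0
    for n in (1e6, 1e7, 1e8):
        acc = accuracy_from_examples(n, INTERCEPT, GAIN)
        print(f"{n:>12,.0f} examples -> {acc:.1f}")
```

With these assumed constants, every additional 5 points of accuracy requires ten times as much data, which is the diminishing-returns pattern the figure describes.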

The authors analyze several factors contributing to the increasing computational expense:

- Model Complexity: Larger models, such as OpenAI's GPT-2 and Google's BERT, require substantial resources for training and inference.

- Data Scale: Models are often trained on massive datasets, leading to increased computational demands that many organizations cannot afford.

- Experimentation Volume: Extensive hyperparameter tuning and architectural searches further inflate computational costs.
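
These three factors compound. The original paper expresses this as a proportionality, Cost(R) ∝ E · D · H, where E is the cost of processing a single example, D is the dataset size, and H is the number of hyperparameter experiments. The sketch below is a minimal illustration of that relationship; the class name, field names, and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentBudget:
    """Inputs to a relative cost estimate (all values are illustrative)."""
    cost_per_example: float  # E: e.g. FPO to process one example
    dataset_size: int        # D: number of training examples
    num_experiments: int     # H: hyperparameter / architecture trials

    def relative_cost(self) -> float:
        # Proportional to E * D * H; only ratios between budgets are meaningful.
        return self.cost_per_example * self.dataset_size * self.num_experiments


if __name__ == "__main__":
    baseline = ExperimentBudget(1e9, 1_000_000, 10)
    scaled_up = ExperimentBudget(4e9, 10_000_000, 50)
    ratio = scaled_up.relative_cost() / baseline.relative_cost()
    print(f"Scaled-up project costs about {ratio:.0f}x the baseline")
```

Because the factors multiply, a 4x larger model, 10x more data, and 5x more tuning runs together yield a 200x cost increase rather than the 19x that adding them would suggest.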

Towards Green AI

The transition to Green AI involves developing methods and criteria that prioritize efficiency. This includes:

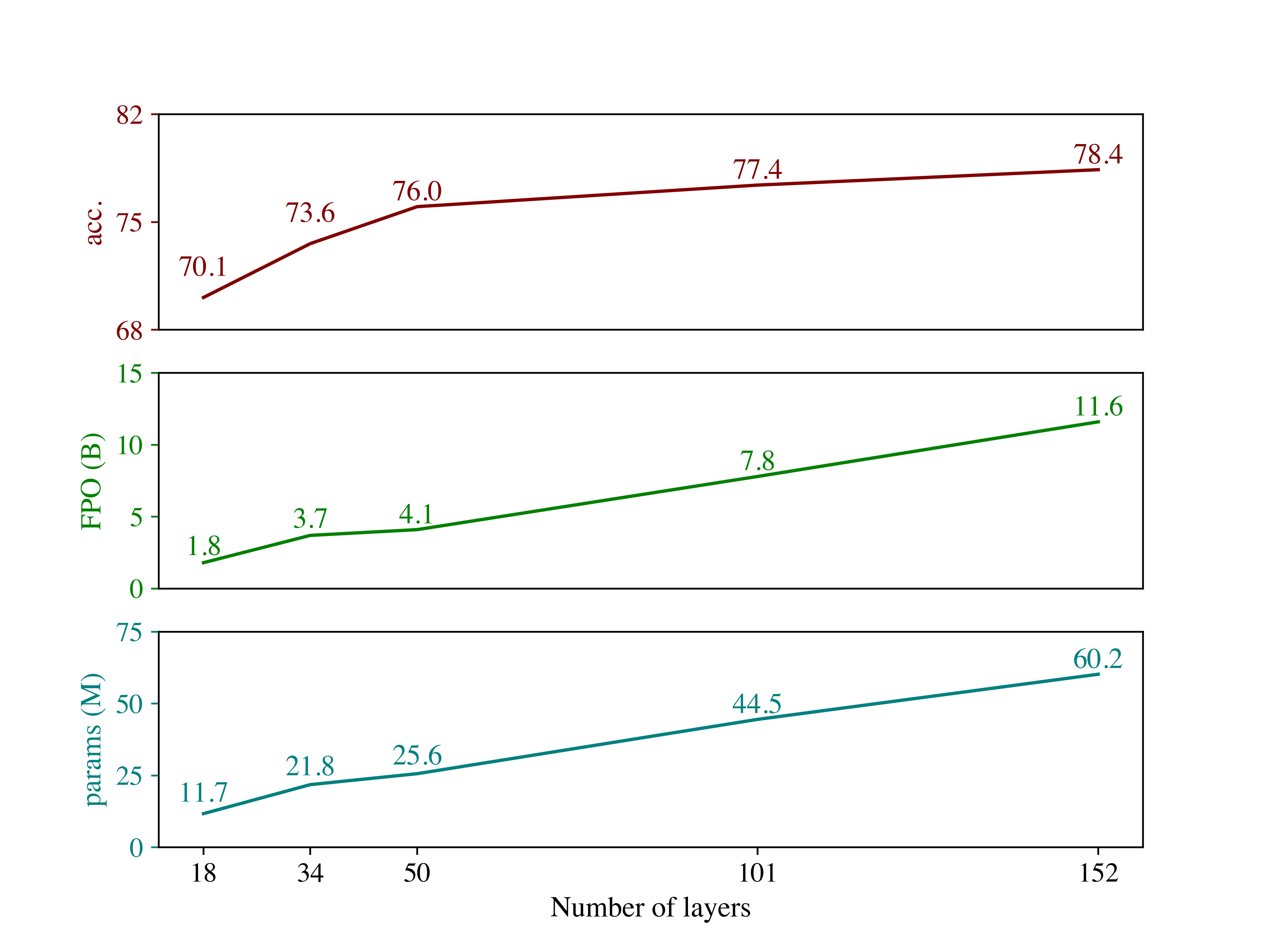

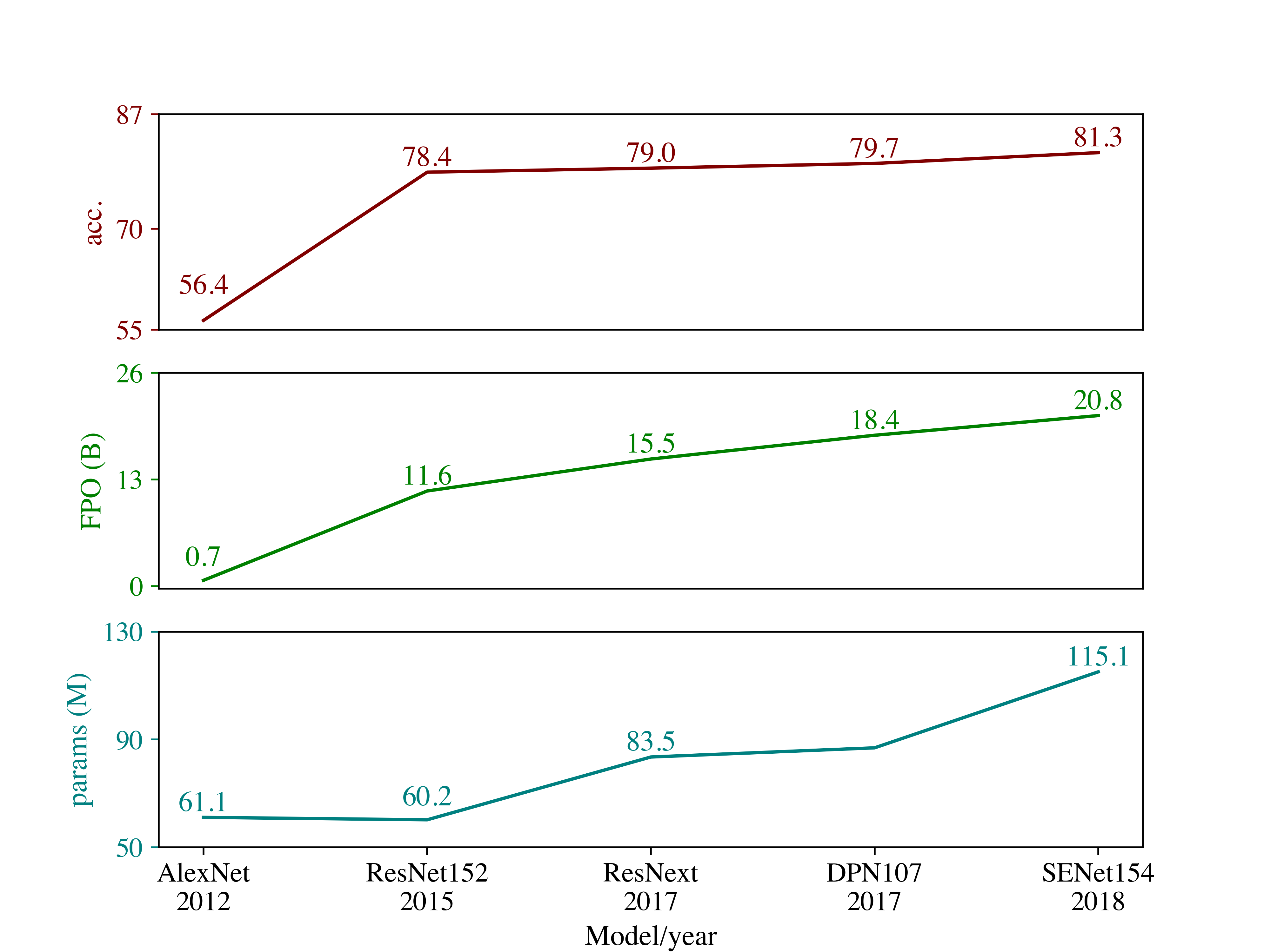

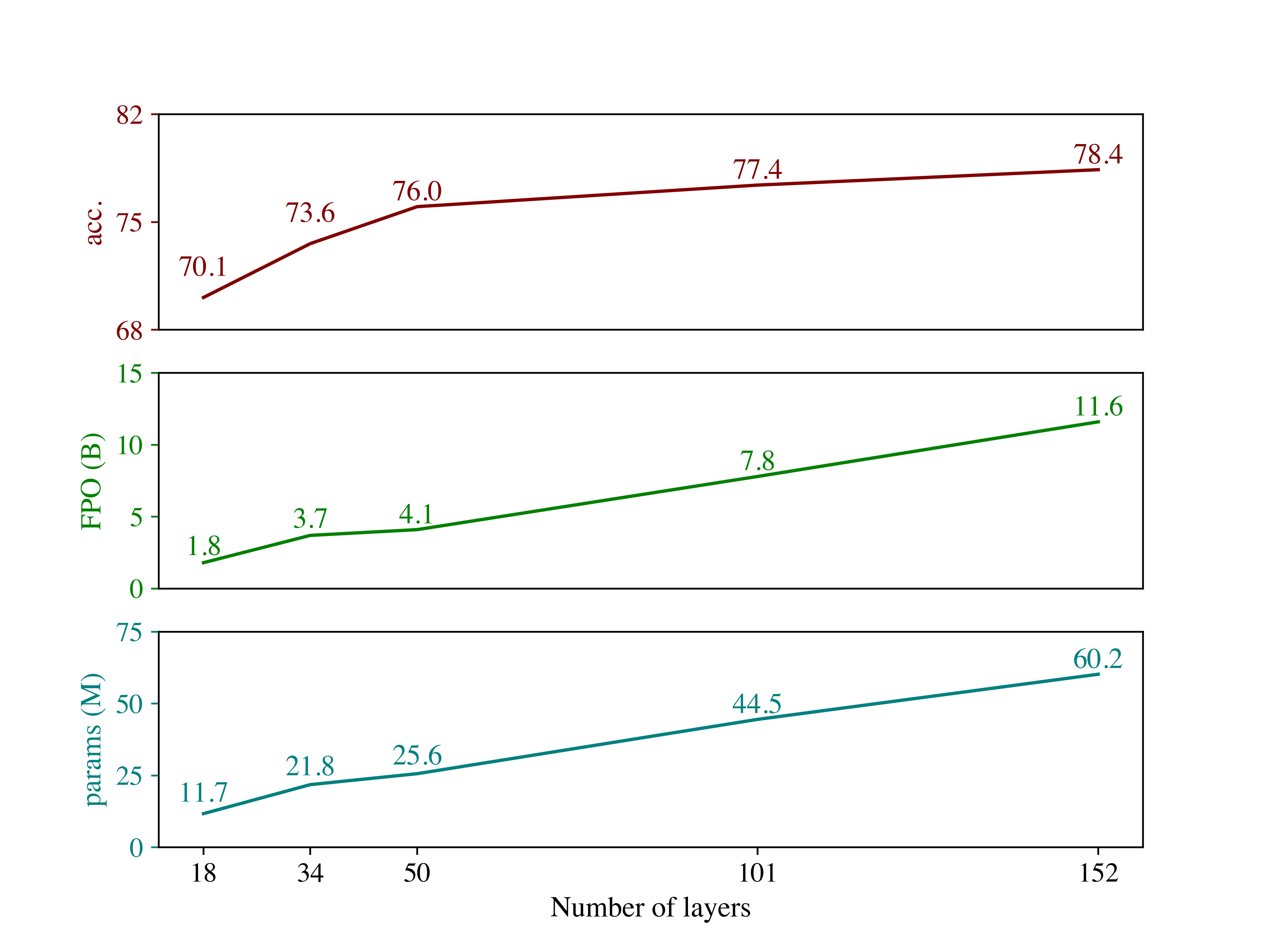

- Efficiency Metrics: Introducing measures such as the number of Floating Point Operations (FPO) required to produce an AI result. FPO counts are independent of the hardware used, so they can serve as a standardized proxy for comparing the computational cost of different methods.

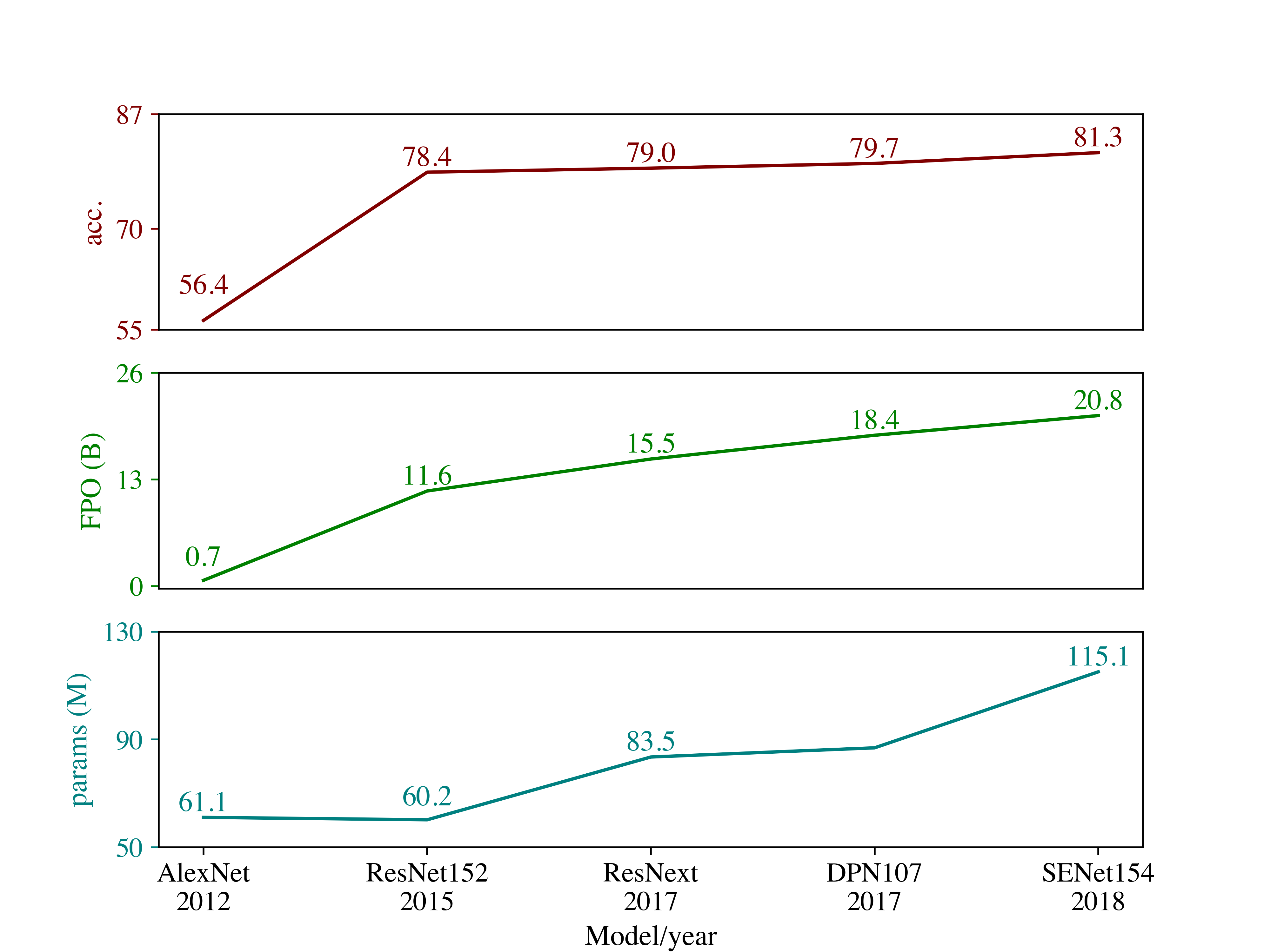

Figure 3: Increase in FPO results in diminishing return for object detection top-1 accuracy.

- Encouraging Reporting Practices: AI researchers should report computational costs transparently, including the FPO costs of experiments.

- Promoting Efficient Methodologies: Encouraging research that focuses on reducing the computational expense without compromising performance, such as data-efficient training methods and model compression techniques.
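
To make the FPO metric more concrete, the following sketch counts floating point operations for one forward pass through a small fully connected network. It assumes multiplications and additions each count as one operation and ignores activation functions; other counting conventions exist, and the function names are illustrative rather than taken from the paper or any library.

```python
from typing import Sequence


def dense_layer_fpo(in_features: int, out_features: int, bias: bool = True) -> int:
    """FPO for one forward pass of a dense layer on a single example.

    Assumed convention: one multiply = 1 FPO, one add = 1 FPO.
    """
    multiplies = in_features * out_features
    # Summing in_features products takes (in_features - 1) adds per output,
    # plus one extra add per output when a bias term is present.
    adds = (in_features - 1) * out_features
    if bias:
        adds += out_features
    return multiplies + adds


def mlp_fpo(layer_sizes: Sequence[int]) -> int:
    """Total forward-pass FPO for an MLP, ignoring activation functions."""
    return sum(
        dense_layer_fpo(n_in, n_out)
        for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    )


if __name__ == "__main__":
    sizes = [784, 256, 10]  # hypothetical network shape
    print(f"Forward-pass FPO per example: {mlp_fpo(sizes):,}")
```

A count like this could be reported next to accuracy so that readers can compare methods by cost as well as by performance.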

The concept of Green AI extends beyond reducing computing costs. It is part of a growing interest in sustainable computing, where advancements in efficient algorithmic solutions can have wide-ranging societal and environmental benefits. The paper highlights related efforts in energy-efficient models and applications of AI in domains like environmental monitoring.

Key future directions include fostering collaboration across AI and environmental science disciplines, promoting awareness of the trade-offs between computational efficiency and model performance, and developing frameworks to evaluate and compare efficiency metrics systematically.

Conclusion

The "Green AI" paper underscores the need to reevaluate research practices in AI by balancing performance with efficiency. By adopting efficiency as a primary evaluation metric, the AI community can encourage less resource-intensive research and become more inclusive. This shift not only conserves environmental resources but also allows more diverse global participation, which may in turn accelerate the field's progress.