- The paper surveys bias in machine learning by categorizing fairness definitions and exploring various mitigation techniques.

- It analyzes real-world examples, such as COMPAS and job ad discrimination, to illustrate algorithmic unfairness.

- It highlights research gaps and calls for unified fairness definitions and robust strategies for ethical AI.

Survey on Bias and Fairness in Machine Learning

The paper "A Survey on Bias and Fairness in Machine Learning" examines how biases in AI systems can lead to unfair outcomes. It surveys the sources of bias and the strategies for mitigating their impact across various domains. The need for fairness is pressing because AI systems are now widely deployed in high-stakes areas such as judicial decisions and hiring processes.

Real-World Examples of Algorithmic Unfairness

Machine learning algorithms have shown discriminatory behavior in various applications. One example is the COMPAS algorithm, which was biased against African-Americans: when predicting future criminal behavior, it produced a higher false positive rate for African-American defendants than for other defendants. Other instances include gender discrimination in job advertisement delivery and bias in facial recognition systems. These examples show the practical consequences of biases in data and algorithms, and why fairness constraints matter during the design and deployment of machine learning models.
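The kind of disparity described for COMPAS can be checked by comparing false positive rates across groups. The following is a minimal sketch, not the analysis used in the paper: the function names `false_positive_rate` and `fpr_by_group` and the toy data are hypothetical, and the data are not COMPAS records.

```python
def false_positive_rate(y_true, y_pred):
    """Share of true negatives (label 0) that were predicted positive (1).

    Assumes at least one true negative is present.
    """
    negatives = [p for t, p in zip(y_true, y_pred) if t == 0]
    return sum(negatives) / len(negatives)


def fpr_by_group(y_true, y_pred, groups):
    """Compute the false positive rate separately for each group value."""
    rates = {}
    for g in sorted(set(groups)):
        idx = [i for i, a in enumerate(groups) if a == g]
        rates[g] = false_positive_rate(
            [y_true[i] for i in idx],
            [y_pred[i] for i in idx],
        )
    return rates


# Hypothetical toy data: six people in group "a", six in group "b".
y_true = [0, 0, 0, 0, 1, 1, 0, 0, 0, 0, 1, 1]
y_pred = [1, 1, 0, 0, 1, 1, 1, 0, 0, 0, 1, 0]
groups = ["a"] * 6 + ["b"] * 6

rates = fpr_by_group(y_true, y_pred, groups)
print(rates)
```

In this toy data, two of the four true negatives in group "a" are flagged, against one of four in group "b", so group "a" bears twice the false positive rate even though both groups have the same share of true positives.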

Bias in Data, Algorithms, and User Experiences

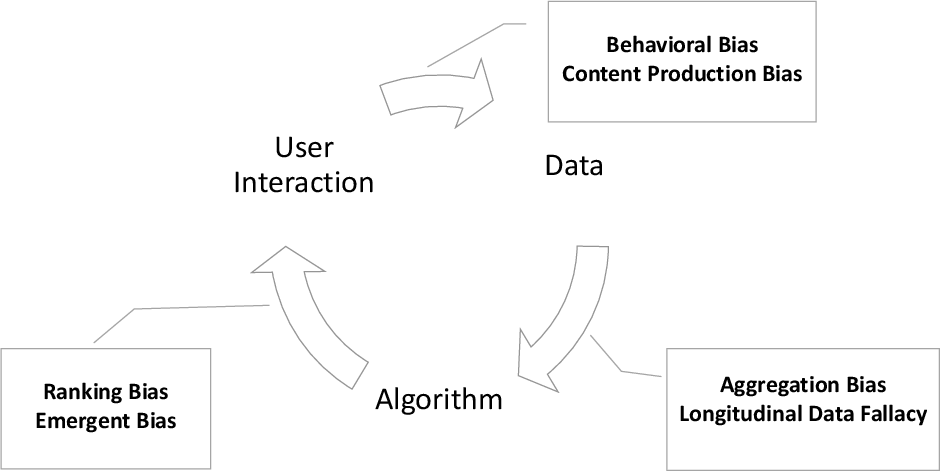

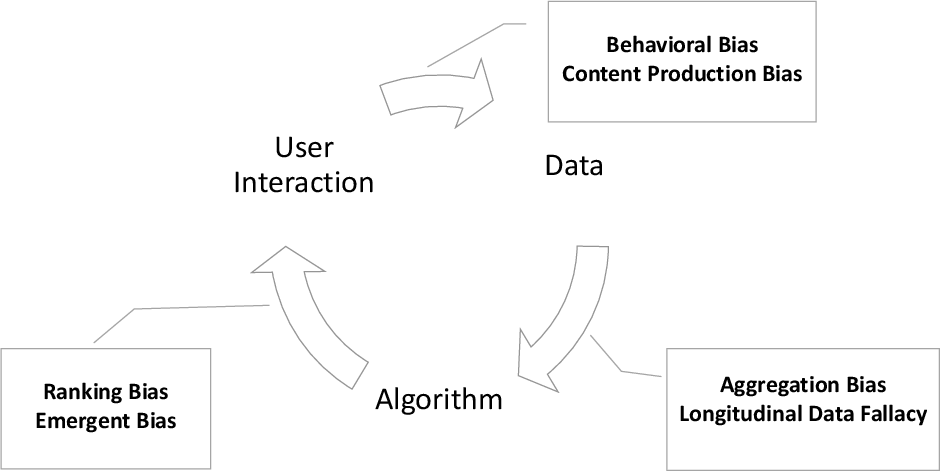

Bias can originate from data, algorithmic processes, and user interactions, and the three feed into one another in a loop that perpetuates skewed outcomes. Data biases include representation bias, where the training sample does not represent the target population, and omitted variable bias, where important variables are left out of the model. Algorithmic bias arises from design choices, such as the use of biased estimators, which lead to unfair predictions. User interaction biases in turn influence recommendation systems and search engines, further complicating bias mitigation efforts.

Figure 1: Examples of bias definitions placed in the data, algorithm, and user interaction feedback loop.

Taxonomies and Definitions of Fairness

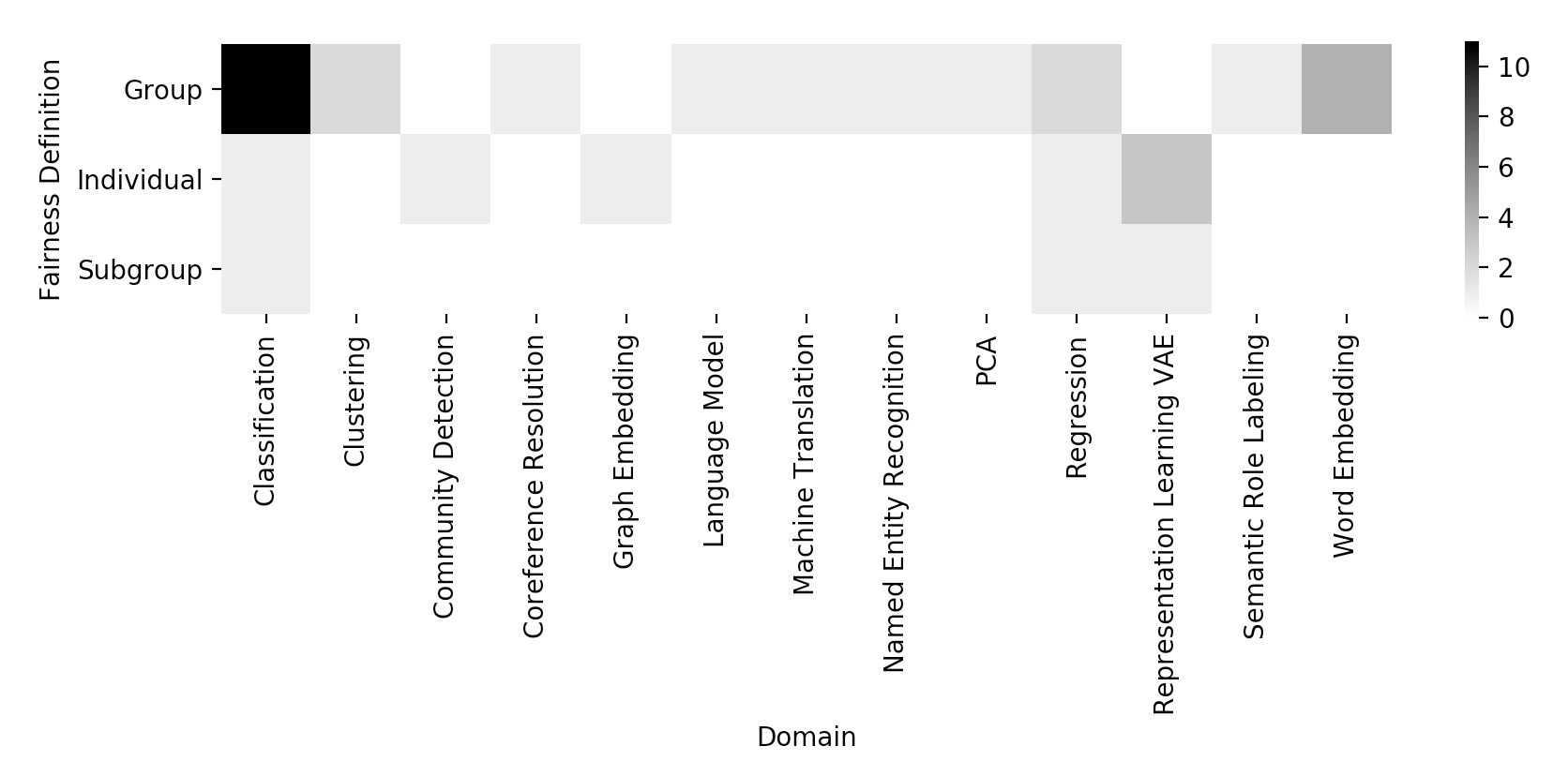

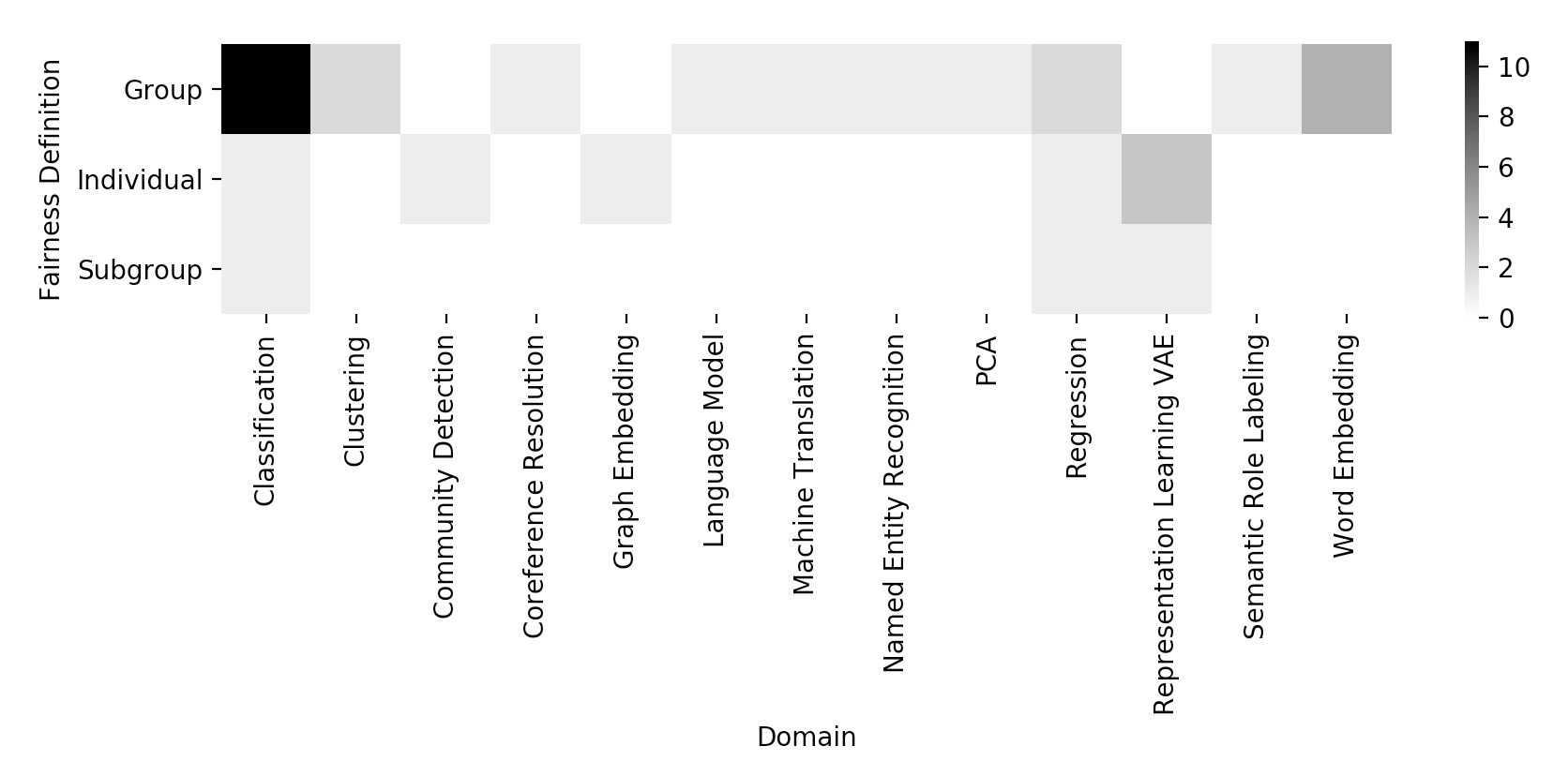

The paper categorizes fairness definitions into individual, group, and subgroup fairness, providing a rich taxonomy for understanding different fairness concepts. Key definitions include Equalized Odds, Demographic Parity, and Counterfactual Fairness, each with its operational criteria aimed at minimizing bias across various AI applications. The inherent trade-offs between these definitions pose challenges in achieving simultaneous fairness objectives, necessitating careful application-specific selection.
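For reference, the three definitions named above are commonly written as follows. This is a sketch in notation that is assumed here rather than taken from the summary: $\hat{Y}$ is the model's binary prediction, $Y$ the true label, $A$ a binary protected attribute, $X$ the remaining features, and $U$ the latent background variables of a causal model.

```latex
\begin{align*}
\text{Demographic parity:}\quad
  & P(\hat{Y}=1 \mid A=0) = P(\hat{Y}=1 \mid A=1) \\
\text{Equalized odds:}\quad
  & P(\hat{Y}=1 \mid A=0,\, Y=y) = P(\hat{Y}=1 \mid A=1,\, Y=y),
    \quad y \in \{0, 1\} \\
\text{Counterfactual fairness:}\quad
  & P(\hat{Y}_{A \leftarrow a}(U)=y \mid X=x,\, A=a)
    = P(\hat{Y}_{A \leftarrow a'}(U)=y \mid X=x,\, A=a)
    \quad \text{for all } y,\ a'
\end{align*}
```

The trade-off between definitions can be seen directly from the first two. Under equalized odds, $P(\hat{Y}=1 \mid A=a) = \mathrm{TPR} \cdot p_a + \mathrm{FPR} \cdot (1 - p_a)$, where $p_a = P(Y=1 \mid A=a)$ is the base rate of group $a$. If the base rates differ between groups, demographic parity can hold at the same time only when $\mathrm{TPR} = \mathrm{FPR}$, that is, when the prediction carries no information about $Y$.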

Techniques for Bias Mitigation

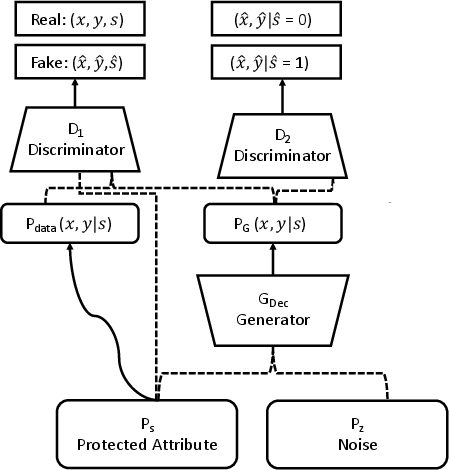

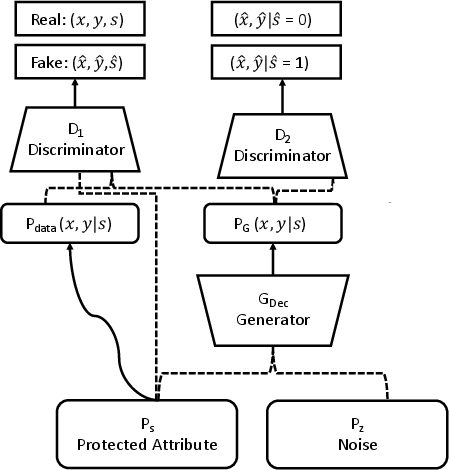

The paper categorizes approaches to addressing bias into pre-processing, in-processing, and post-processing techniques. Pre-processing involves altering the training data to remove discriminatory elements, while in-processing modifies learning algorithms to ensure fairness. Post-processing adjusts the model's output to achieve fairer outcomes. These methodologies span numerous domains, such as classification, causal inference, and adversarial learning, demonstrating their broad applicability in creating fair AI models.

Figure 2: Structure of FairGAN as proposed in "FairGAN: Fairness-aware Generative Adversarial Networks" [arXiv 2018].
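As an illustration of the pre-processing category, one well-known approach is reweighing (Kamiran and Calders), which assigns each training example a weight so that the protected attribute and the label become statistically independent in the weighted data. The sketch below is a minimal version under that assumption, not a method attributed to the survey itself; the function names and toy data are hypothetical.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Return one weight per example: w(a, y) = P(a) * P(y) / P(a, y).

    Probabilities are estimated as empirical frequencies in the data.
    """
    n = len(labels)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[a] * label_counts[y]) / (n * joint_counts[(a, y)])
        for a, y in zip(groups, labels)
    ]


def weighted_positive_rate(groups, labels, weights, group):
    """Weighted share of positive labels (1) within one group."""
    total = sum(w for a, w in zip(groups, weights) if a == group)
    positive = sum(
        w for a, y, w in zip(groups, labels, weights)
        if a == group and y == 1
    )
    return positive / total


# Hypothetical toy data: 75% positive labels in group "a", 25% in group "b".
groups = ["a"] * 4 + ["b"] * 4
labels = [1, 1, 1, 0, 1, 0, 0, 0]

weights = reweighing_weights(groups, labels)
rate_a = weighted_positive_rate(groups, labels, weights, "a")
rate_b = weighted_positive_rate(groups, labels, weights, "b")
print(rate_a, rate_b)
```

After reweighing, both groups have the same weighted positive rate, equal to the overall rate in the data. The weights would then be passed to a learner that supports per-example weights. In-processing and post-processing methods instead intervene in the training objective or in the model's outputs, respectively.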

Implications and Future Directions

The survey underlines the need for ongoing work in fairness and bias mitigation, identifying opportunities for developing unified fairness definitions and for moving from equality toward equity. By showing where current methods fall short, it opens avenues for fair AI mechanisms that are robust to variations in data and application contexts. Future research should work toward synthesizing fairness definitions and evaluating fairness over time, to better capture how AI systems evolve.

Conclusion

The survey provides a broad examination of the biases present in AI systems and lays out a multifaceted approach to achieving fairness. By documenting existing biases and reviewing algorithms that mitigate them, it encourages researchers to adopt fairness-aware practices. It serves as a guide for tackling the ethical challenges posed by biased algorithms and promotes the responsible development and deployment of AI technologies.

Figure 3: Heatmap depicting the distribution of previous work in fairness, grouped by domain and fairness definition.