- The paper introduces a two-stage deep optimization pipeline that integrates a differentiable Poisson gradient loss with deep content and style losses for robust image blending.

- It employs a composite objective that pairs the Poisson term with a Gram-matrix style loss and a content-fidelity loss on VGG-16 features, ensuring smooth boundaries and consistent textures.

- Ablation analysis confirms the contribution of each component, and a user study shows that the method outperforms prior techniques in both artistic and real-world scenarios.

Deep Image Blending: Two-Stage Optimization Integrating Poisson and Deep Feature Losses

Introduction and Motivation

Image blending is a core technique in computer vision and graphics, enabling objects from disparate sources to be seamlessly composed onto new backgrounds. Traditional Poisson image editing achieves spatial coherence at object boundaries through gradient-domain optimization, but it does not account for global content or stylistic harmony. As a result, it often produces color and texture mismatches, especially when transferring objects across highly variable domains or artistic styles. The presented work, "Deep Image Blending" (arXiv:1910.11495), introduces a two-stage deep optimization pipeline that combines a differentiable Poisson-inspired spatial loss with deep feature-based style and content losses. This approach aims to bridge the gap between local boundary integration and holistic visual alignment.

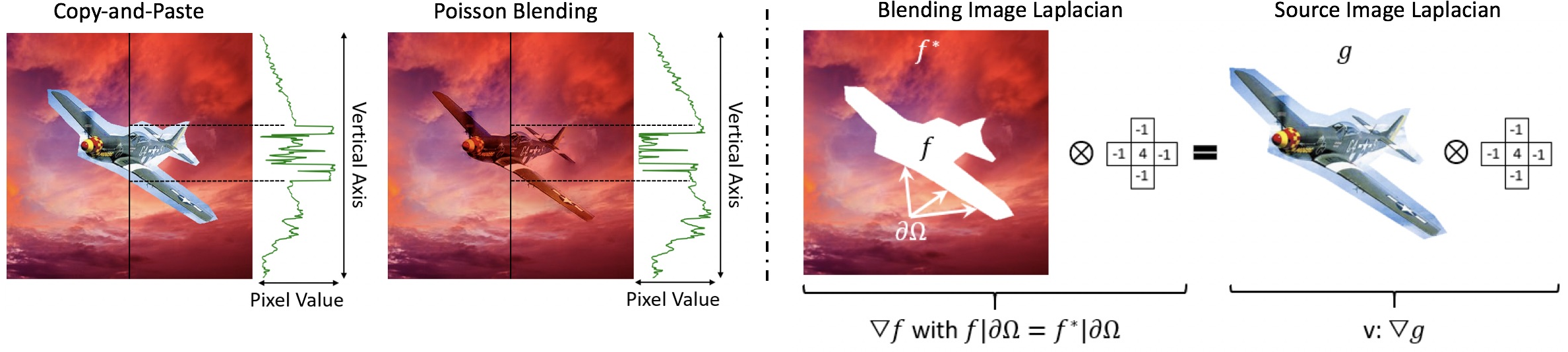

Foundations: Poisson Image Blending and Its Limitations

The methodology is rooted in Poisson image blending, where pixel values in the blending region are reconstructed by enforcing Laplacian consistency between the source and composite images, subject to boundary constraints from the target image. While Poisson blending achieves smooth boundaries, it does not explicitly preserve content and ignores higher-order texture or style alignment. Furthermore, it is conventionally computed by solving a large sparse linear system rather than by minimizing a loss, which hampers direct integration with the differentiable deep loss functions needed for joint optimization.
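To make the classical baseline concrete, here is a minimal NumPy sketch of Poisson blending for a single grayscale channel. It solves the discrete Poisson equation inside the mask with plain Jacobi iterations (practical implementations usually use a sparse direct or multigrid solver instead), using the source Laplacian as the guidance field and the target as the fixed boundary. The function name `poisson_blend` and its parameters are illustrative assumptions, not code from the paper.

```python
import numpy as np

def poisson_blend(source, target, mask, iters=2000):
    """Classical Poisson blending of one grayscale channel via Jacobi iterations.

    For every pixel p inside the mask, solves
        4 * f_p - sum_q f_q = 4 * g_p - sum_q g_q   (q = 4-neighbours of p)
    where g is the source image; pixels outside the mask stay equal to the target.
    Assumes the mask does not touch the image border (np.roll wraps around).
    """
    f = target.astype(float).copy()
    g = source.astype(float)
    inside = mask.astype(bool)
    # Discrete Laplacian of the source: the guidance term on the right-hand side.
    lap_g = (4 * g
             - np.roll(g, 1, 0) - np.roll(g, -1, 0)
             - np.roll(g, 1, 1) - np.roll(g, -1, 1))
    for _ in range(iters):
        neighbours = (np.roll(f, 1, 0) + np.roll(f, -1, 0)
                      + np.roll(f, 1, 1) + np.roll(f, -1, 1))
        # Jacobi update f_p = (sum_q f_q + lap_g_p) / 4, applied only inside the mask.
        f[inside] = ((neighbours + lap_g) / 4.0)[inside]
    return f
```

Each iteration touches only masked pixels, so the target values on and outside the boundary act as the Dirichlet constraint described above.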

Figure 1: Intensity transition comparison for copy-and-paste, Poisson blended images, and a conceptual visualization of Laplacian-based gradient domain consistency at object boundaries.

Methodology

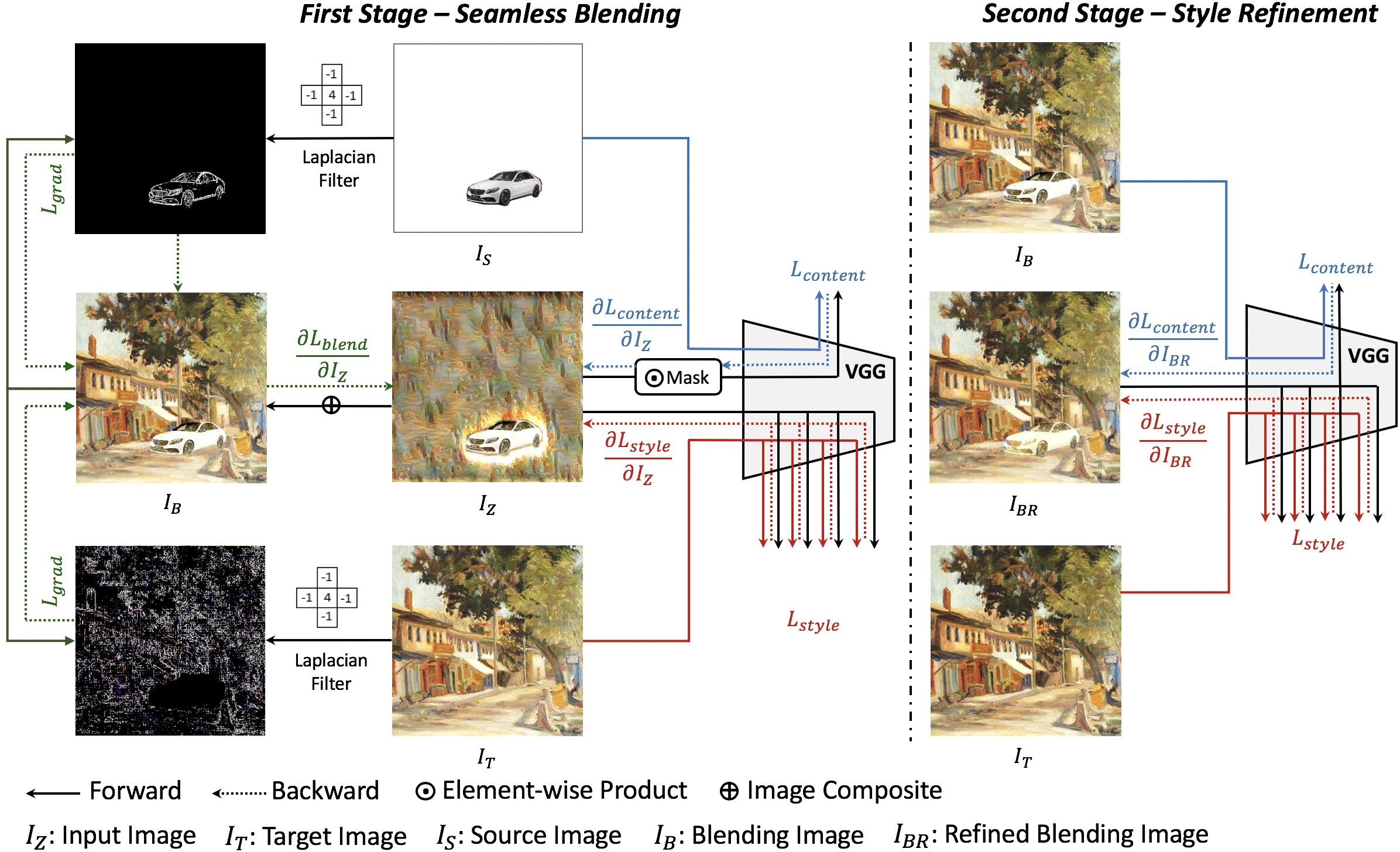

Two-Stage Deep Blending Pipeline

The core innovation is a two-stage pixel optimization procedure. In stage one, the blending region is randomly initialized and its pixels are iteratively updated with an L-BFGS solver under a composite objective:

- A differentiable Poisson gradient loss that enforces boundary Laplacian coherence.

- A style loss (deep Gram matrix feature matching) anchoring the blended patch to the background's textural statistics.

- A content loss constraining the semantic consistency with the pasted object.

- Ancillary histogram and total variation losses for feature distribution alignment and spatial smoothness, respectively.
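The two ancillary regularizers can be sketched in NumPy as follows. The total variation term here sums squared differences between neighbouring pixels, and the histogram term follows the common recipe of remapping each feature channel to the reference distribution (by rank and quantile) and penalizing the distance to that remapped copy. Both variants, the `(channels, positions)` feature layout, and the function names are assumptions for illustration rather than the paper's exact definitions.

```python
import numpy as np

def tv_loss(img):
    """Squared-difference total variation of a 2-D image (one common variant)."""
    dy = img[1:, :] - img[:-1, :]   # vertical neighbour differences
    dx = img[:, 1:] - img[:, :-1]   # horizontal neighbour differences
    return float((dy ** 2).sum() + (dx ** 2).sum())

def match_histogram(feat, ref):
    """Remap each channel of feat (C, N) onto the value distribution of ref (C, M).

    Each value is replaced by the reference quantile at its own rank, so the
    rank order of feat is preserved while its histogram follows ref.
    """
    out = np.empty(feat.shape, dtype=float)
    for c in range(feat.shape[0]):
        ranks = np.argsort(np.argsort(feat[c]))     # rank of every value
        q = ranks / max(feat.shape[1] - 1, 1)       # ranks -> quantiles in [0, 1]
        out[c] = np.quantile(ref[c], q)             # reference values at those quantiles
    return out

def histogram_loss(feat, ref):
    """Squared distance between features and their histogram-matched copy.

    In an autodiff framework the matched copy would be treated as a constant.
    """
    matched = match_histogram(feat, ref)
    return float(((feat - matched) ** 2).sum())
```

Using quantiles rather than a direct sort lets the blended region and the background contribute different numbers of feature positions.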

Stage two takes the stage-one result as its initialization and refines it, optimizing content fidelity with respect to that initial blend while strengthening style alignment with the target background.
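The control flow of the two stages can be sketched with SciPy's L-BFGS-B solver. The objectives are placeholders: in the actual method they would be weighted sums of the Poisson gradient, style, content, histogram, and TV losses, with gradients supplied by automatic differentiation. The helper names `optimize_pixels` and `two_stage_blend` are hypothetical.

```python
import numpy as np
from scipy.optimize import minimize

def optimize_pixels(loss_and_grad, init, max_iter=200):
    """Minimize an objective over pixel values with L-BFGS-B.

    loss_and_grad(x) takes an array shaped like init and returns (loss, gradient).
    """
    shape = init.shape

    def fun(flat):
        loss, grad = loss_and_grad(flat.reshape(shape))
        return loss, np.asarray(grad, dtype=float).ravel()

    res = minimize(fun, init.ravel(), jac=True, method="L-BFGS-B",
                   options={"maxiter": max_iter})
    return res.x.reshape(shape)

def two_stage_blend(stage1_objective, make_stage2_objective, shape, seed=0):
    """Stage 1 starts from random pixels; stage 2 starts from the stage-1 result.

    make_stage2_objective(stage1) builds the stage-2 objective, since its
    content term is measured against the stage-1 blend.
    """
    rng = np.random.default_rng(seed)
    x0 = rng.random(shape)                                   # random initialization
    stage1 = optimize_pixels(stage1_objective, x0)
    stage2 = optimize_pixels(make_stage2_objective(stage1), stage1)
    return stage1, stage2
```

Stage two receives a factory rather than a fixed objective because its content reference, the stage-one output, only exists once stage one has finished.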

Figure 2: Schematic of the two-stage blending optimization; Stage 1 integrates gradient, content, and style constraints, whereas Stage 2 amplifies stylistic and textural harmonization.

Differentiable Poisson Gradient Loss

A key contribution is recasting the original Poisson objective into a fully differentiable loss:

$$\mathcal{L}_{grad} = \frac{1}{2HW}\sum_{m,n}\left[\nabla f(I_B) - \left(\nabla f(I_S) + \nabla f(I_T)\right)\right]_{mn}^{2}$$

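Here $I_B$, $I_S$, and $I_T$ denote the blended, source, and target images, $H$ and $W$ are the image height and width, and $\nabla f(\cdot)$ is the Laplacian gradient of an image. The formulation enforces gradient-domain matching, most importantly across the object boundary, and because it is a plain squared error it can be summed with the deep-feature losses and optimized end to end.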
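A minimal single-channel NumPy sketch of this loss follows. The 4-neighbour Laplacian with edge padding, composing $I_B$ as `mask * blend_region + (1 - mask) * target`, and restricting the source and target Laplacians to the inside and outside of the mask are all assumptions made for illustration; the function names are hypothetical.

```python
import numpy as np

def laplacian(img):
    """4-neighbour discrete Laplacian with edge replication at the border."""
    p = np.pad(img, 1, mode="edge")
    return (4 * p[1:-1, 1:-1] - p[:-2, 1:-1] - p[2:, 1:-1]
            - p[1:-1, :-2] - p[1:-1, 2:])

def poisson_gradient_loss(blend_region, source, target, mask):
    """L_grad = 1/(2HW) * sum_mn [lap(I_B) - (lap(I_S) + lap(I_T))]_mn^2.

    Assumption: the source Laplacian is used inside the mask and the target
    Laplacian outside it, mirroring a copy-and-paste composite.
    """
    H, W = target.shape
    blend = mask * blend_region + (1 - mask) * target                 # I_B
    guide = mask * laplacian(source) + (1 - mask) * laplacian(target)
    diff = laplacian(blend) - guide
    return float((diff ** 2).sum() / (2 * H * W))
```

Every step is a linear filter followed by a square, so the same code written with PyTorch or JAX tensors is differentiable with respect to `blend_region`, which is what allows it to share an optimizer with the deep-feature terms.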

Multi-Term Objective and Training

Both content and style losses are computed using VGG-16 feature activations. Content loss relies on masked L2 distance between blend and source activations, ensuring semantic region fidelity. Style loss, via Gram matrices, matches global textures with the background. The histogram and TV losses act as regularizers, anchoring feature distributions and smoothing artifacts.
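The style and content terms can be sketched on precomputed feature maps. In the method these would be VGG-16 activations of the blended, source, and background images; here any `(C, H, W)` arrays stand in for them. The normalizations, the single-layer form, and the function names are assumptions, and the mask is assumed to be already resized to the feature resolution.

```python
import numpy as np

def gram_matrix(feat):
    """Gram matrix of a (C, H, W) feature map, normalized by the number of positions."""
    F = feat.reshape(feat.shape[0], -1)       # (C, H*W)
    return F @ F.T / F.shape[1]

def style_loss(feat_blend, feat_style):
    """Squared Frobenius distance between Gram matrices for one layer."""
    diff = gram_matrix(feat_blend) - gram_matrix(feat_style)
    return float((diff ** 2).sum())

def content_loss(feat_blend, feat_source, mask):
    """Masked squared L2 distance; mask is (H, W) and broadcast over channels."""
    diff = (feat_blend - feat_source) * mask[None, :, :]
    return float((diff ** 2).sum() / max(mask.sum(), 1.0))
```

Because the Gram matrix sums over spatial positions, it discards layout and keeps only channel co-activation statistics, which is why it captures texture, while the masked content term anchors the object's structure inside the blending region.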

Figure 3: Pixel reconstruction dynamics across iterations in both optimization stages. Initial iterations prioritize boundary fitting, while later passes amplify style coherence.

Empirical Evaluation

Ablation Analysis

Systematic ablations reveal that omitting the Poisson gradient loss leads to pronounced artifacts at the composite boundary; removing content loss erases salient object features; disabling style loss causes mismatched illumination and texture. Critically, the two-stage setup outperforms single-stage variants by achieving superior style transfer without sacrificing content integrity or boundary naturalness.

Figure 4: Effects of omitting various loss functions and single- versus two-stage architectures; full method consistently yields smooth, coherent, and semantically faithful blends.

Comparative Benchmarking

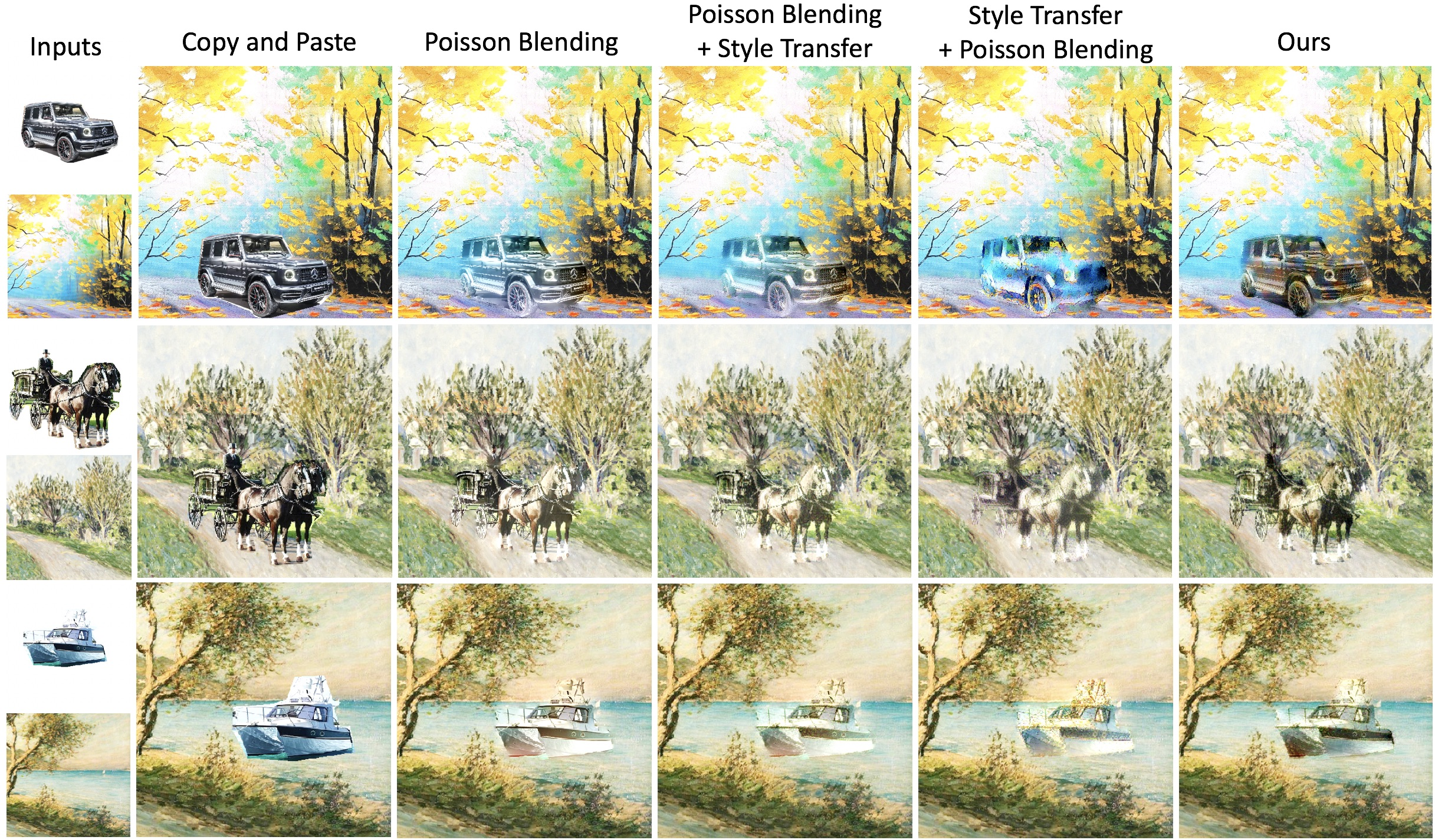

Blending on Paintings

Deep Image Blending is tested against several baselines:

- Copy-and-paste (intense boundary artifacts)

- Poisson blending only (smooth transitions but poor texture harmony)

- Sequential Poisson blending and style transfer (unnatural illumination, loss of object content)

The proposed approach achieves boundary smoothness, consistent style, and superior content preservation, as confirmed by visual comparisons and the user study.

Figure 5: Comparisons on blending into paintings; the proposed approach ensures both seamless integration and harmonious semantics/textures.

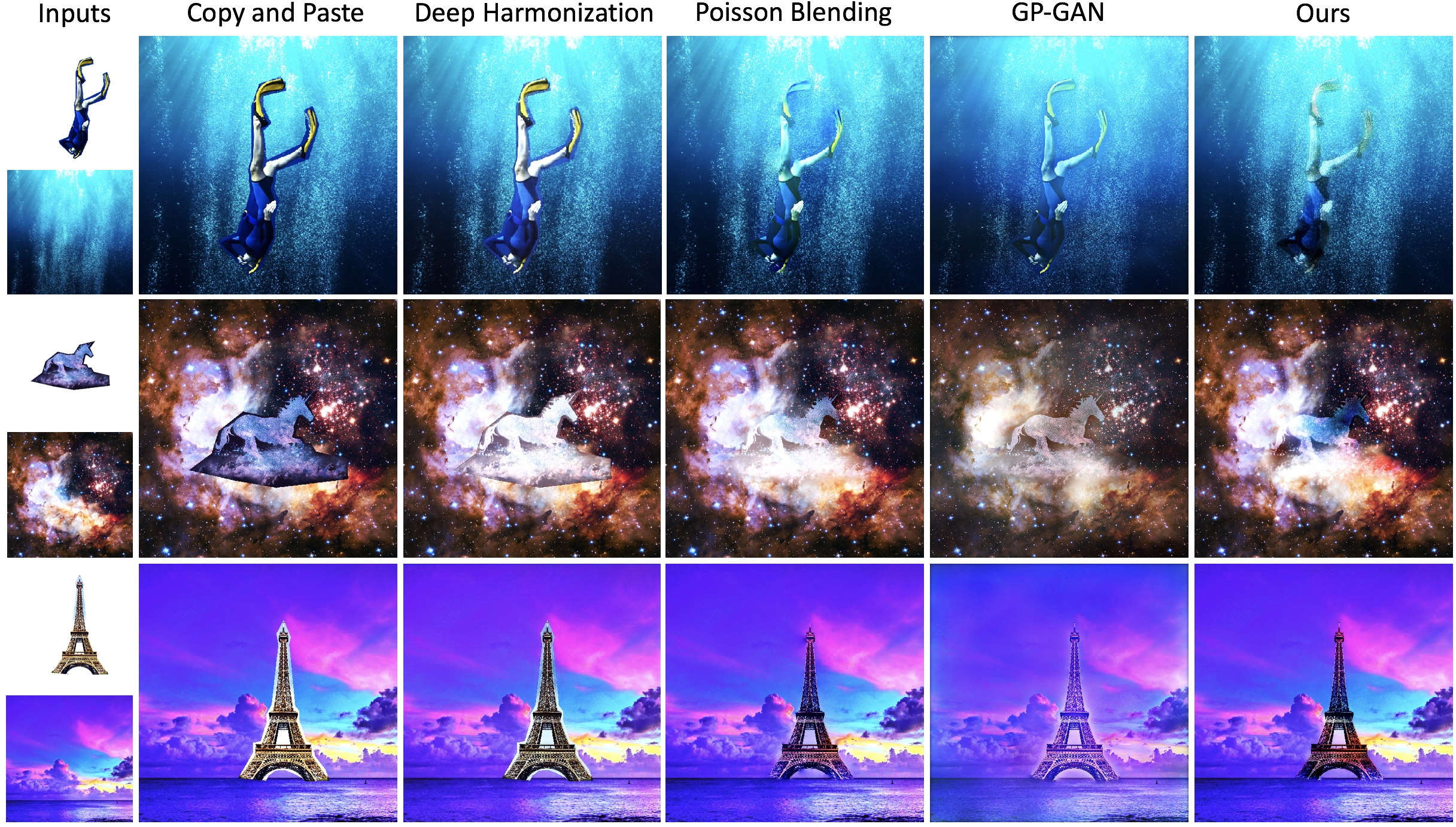

Real-World Images

Compared with state-of-the-art methods such as Deep Image Harmonization and GP-GAN, Deep Image Blending delivers visually realistic composites that handle object boundaries, illumination differences, and cross-domain textures robustly, and it outperforms these supervised models despite not requiring any labeled training data.

Figure 6: Comparisons on real-world images; the proposed method demonstrates robust content integration and boundary smoothing that other methods fail to match.

User Study

A 30-participant user study across 20 images (10 real, 10 artistic) shows that 80–90% of users prefer results generated by this method over all baselines, with particularly strong performance on paintings and challenging real images. The quantitative gap is especially pronounced in scenarios requiring strong stylistic adaptation.

Implications and Future Directions

The proposed optimization-based technique does not require dataset-level supervised training, enabling generalization to arbitrary source/target image pairs and extending naturally to both photographic and non-photographic content such as paintings. By embedding a differentiable approximation of classical Poisson blending in a deep-feature loss landscape, the approach demonstrates that training-free, optimization-based image editing with pretrained deep features remains competitive and flexible without bespoke training pipelines.

Potential avenues for future research include:

- Extending the optimization loop to support spatio-temporal consistency for video blending.

- Integrating attention or transformer-based deep features to further enhance semantic understanding.

- Applying these ideas to broader image synthesis contexts (e.g., AR/VR object insertion or creative style compositing).

Conclusion

Deep Image Blending presents a two-stage, data-agnostic, and differentiable optimization pipeline for seamless, style-aware image composition. By fusing classical Poisson gradient constraints with modern deep feature objectives, the method consistently outperforms existing techniques across both natural and artistic datasets, without the need for supervised training or domain-specific data. This work substantiates the merit of combining classical image processing insights with deep representation loss terms and points toward continued hybridization in controllable image editing workflows.