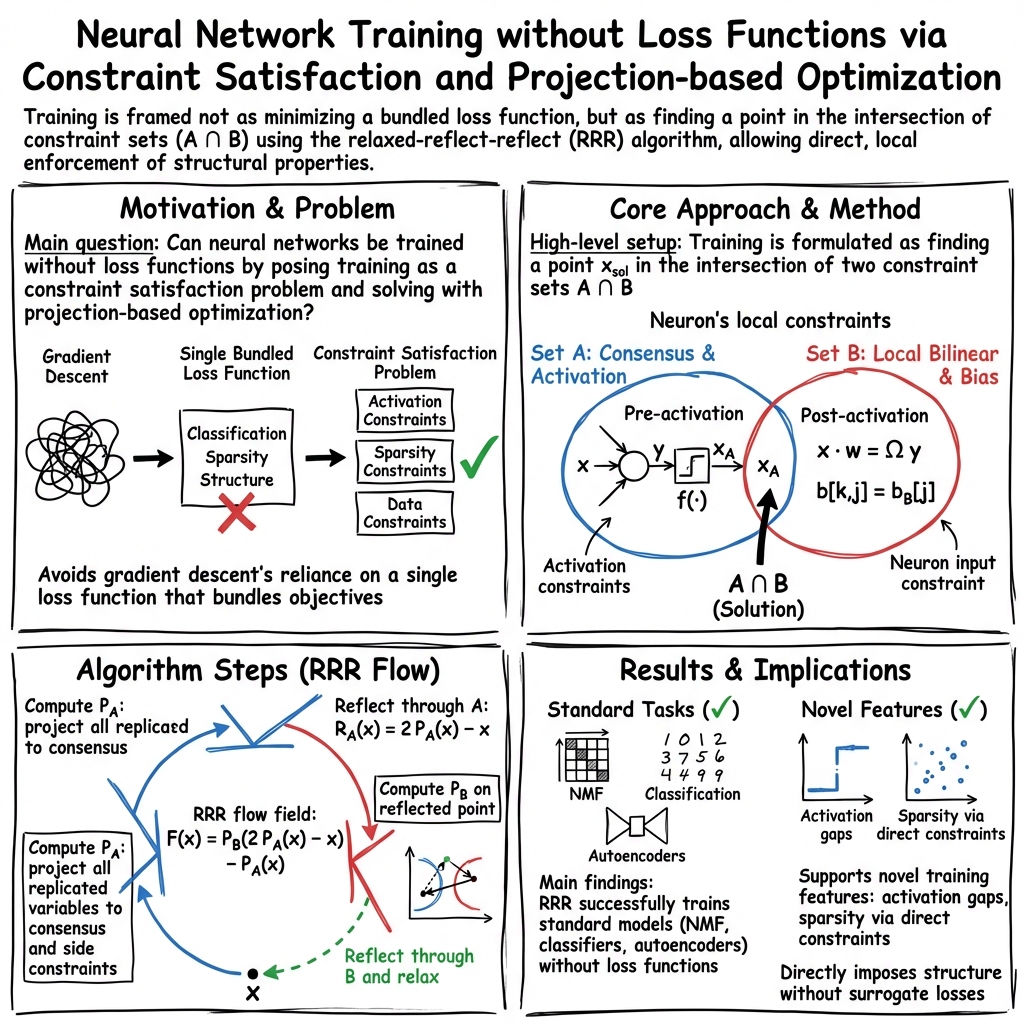

- The paper proposes a loss-free training method that replaces the loss functions of gradient descent with hard constraints enforced by projections.

- It uses the relaxed-reflect-reflect (RRR) algorithm, which applies projections concurrently across neurons and data points while searching for a point that satisfies every constraint.

- The approach is demonstrated on non-negative matrix factorization (NMF), classification networks, and a generative model built from an autoencoder and a classifier.

Learning Without Loss: A Comprehensive Examination

The paper "Learning Without Loss" (1911.00493) introduces a training paradigm for neural networks that replaces traditional loss functions with hard constraints. The method, inspired by successful techniques in phase retrieval, uses projections onto constraint sets to drive the optimization. The paper doubles as a tutorial, working through progressively harder examples and ending with a generative model that combines an autoencoder and a classifier.

Introduction and Background

As neural networks have risen to prominence in machine learning, the search for better training algorithms has intensified. Despite the expressive power and complexity of these models, the dominant training strategy remains gradient descent. It relies on loss functions that fold many aspects of the training objective into a single quantity, and because neural networks are hard to analyze theoretically, the resulting choices have to be evaluated empirically.

In stark contrast, phase retrieval—an area focused on reconstructing signals from magnitude constraints—thrives on non-gradient algorithms centered around constraint satisfaction. This paper proposes transplanting these methodologies to neural network training, aiming to expand neural networks' scope by defining activations via constraint sets rather than functions.

Algorithmic Framework

The proposed strategy uses an optimizer called relaxed-reflect-reflect (RRR), which derives its steps from projections onto local constraints rather than from gradients. The constraints are partitioned so that projections can be applied concurrently across neurons and across data points, mirroring the partitioning that has proved successful in phase retrieval. These concurrent projections form the backbone of training, which pursues direct constraint satisfaction instead of loss minimization.

The RRR algorithm can be summarized by the following iterative update rule:

$$x' = x + \beta\,\bigl(P_B(2P_A(x) - x) - P_A(x)\bigr)$$

where $\beta$ is a time-step parameter, $P_A(x)$ is the projection of $x$ onto constraint set $A$, and $P_B(x)$ is the projection onto constraint set $B$. The update uses no gradients. It stops changing only when $P_B(2P_A(x) - x) = P_A(x)$, and at such a fixed point $P_A(x)$ lies in both $A$ and $B$, so it satisfies every constraint.
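To make the update rule concrete, here is a minimal sketch of RRR on a toy feasibility problem. The two sets are assumptions chosen for illustration and are not taken from the paper: A is the set of vectors with non-negative coordinates, and B is the hyperplane of vectors whose coordinates sum to one. The function names (`rrr_step`, `rrr_solve`) and the stopping rule are likewise hypothetical.

```python
from typing import Callable, List

Vector = List[float]
Projection = Callable[[Vector], Vector]


def project_nonnegative(x: Vector) -> Vector:
    """Nearest point with all coordinates >= 0 (toy set A)."""
    return [max(v, 0.0) for v in x]


def project_sum_to_one(x: Vector) -> Vector:
    """Nearest point on the hyperplane sum(x) == 1 (toy set B)."""
    shift = (sum(x) - 1.0) / len(x)
    return [v - shift for v in x]


def rrr_step(x: Vector, proj_a: Projection, proj_b: Projection,
             beta: float) -> Vector:
    """One update x' = x + beta * (P_B(2 P_A(x) - x) - P_A(x))."""
    pa = proj_a(x)
    reflected = [2.0 * p - v for p, v in zip(pa, x)]  # 2 P_A(x) - x
    pb = proj_b(reflected)
    return [v + beta * (b - a) for v, a, b in zip(x, pa, pb)]


def rrr_solve(x0: Vector, proj_a: Projection, proj_b: Projection,
              beta: float = 0.5, tol: float = 1e-10,
              max_iter: int = 10_000) -> Vector:
    """Iterate until the step is tiny, then report P_A(x) as the solution."""
    x = list(x0)
    for _ in range(max_iter):
        x_next = rrr_step(x, proj_a, proj_b, beta)
        step = max(abs(n - v) for n, v in zip(x_next, x))
        x = x_next
        if step < tol:
            break
    return proj_a(x)


solution = rrr_solve([-2.0, 3.0], project_nonnegative, project_sum_to_one)
print(solution)  # a non-negative vector whose entries sum to (nearly) one
```

Both toy sets are convex, which makes the iteration well behaved here. The constraint sets that arise in neural network training are generally not convex, so this sketch illustrates the mechanics of the update only and says nothing about convergence in that setting.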

Application and Implementation

The paper is written as a step-by-step tutorial. It begins with a single-layer network for non-negative matrix factorization (NMF), moves on to deeper architectures for classification, and ends with the design of a generative model.

Non-Negative Matrix Factorization

In NMF, constraints are imposed directly on neuron inputs and outputs, with additional consensus constraints that force replicated variables, such as the copies of the weights held for each data item, to agree. The projections onto these constraints are computationally cheap, which allows the method to scale on par with traditional gradient descent.
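As an illustration of how simple such projections can be, the sketch below implements a consensus projection for replicated weights. The nearest point at which all replicas agree is their mean, and if the shared value must also be non-negative, clipping the mean at zero gives the nearest feasible point. The data layout (`replicas[k][j]` is copy `k` of weight `j`) and the function names are assumptions for this example. Whether the paper combines non-negativity with consensus in a single projection is not stated in this summary.

```python
from typing import List

Replicas = List[List[float]]


def project_consensus(replicas: Replicas) -> Replicas:
    """Replace every copy of the weight vector by the mean over copies."""
    n = len(replicas)
    mean = [sum(column) / n for column in zip(*replicas)]
    return [list(mean) for _ in replicas]


def project_nonnegative_consensus(replicas: Replicas) -> Replicas:
    """Nearest point where all copies agree and every weight is >= 0.

    Minimizing the summed squared distance to the copies subject to
    w >= 0 reduces to clipping the mean at zero.
    """
    n = len(replicas)
    mean = [sum(column) / n for column in zip(*replicas)]
    clipped = [max(m, 0.0) for m in mean]
    return [list(clipped) for _ in replicas]


copies = [[1.0, -2.0], [3.0, 0.0], [2.0, -1.0]]
print(project_consensus(copies))              # every copy becomes [2.0, -1.0]
print(project_nonnegative_consensus(copies))  # every copy becomes [2.0, 0.0]
```

Each output coordinate depends only on the matching coordinate of the copies, so this kind of projection parallelizes naturally across weights.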

Classification Networks

For classification, the method is adapted to label-based learning. The paper explains how to formulate constraints on neuron activations and on the class encoding, and it offers alternative formulations for data with incorrect labels. These adaptive formulations can help classifiers avoid pitfalls common in gradient-based learning, particularly overfitting and stagnation in poor minima.
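One way to picture a constraint on a neuron's activation is to treat the activation as a set of allowed (pre-activation, post-activation) pairs and project onto that set. The sketch below does this for a ReLU, chosen purely for illustration: the activation constraints used in the paper may differ, and `project_relu_graph` is a hypothetical name. The graph of the ReLU is the union of two rays, so the projection is the nearer of the two projections onto the rays.

```python
from typing import Tuple


def project_relu_graph(y0: float, x0: float) -> Tuple[float, float]:
    """Nearest pair (y, x) with x == max(0, y) to the given pair (y0, x0)."""
    # Ray 1: inactive neuron, points (y, 0) with y <= 0.
    y1, x1 = min(y0, 0.0), 0.0
    d1 = (y0 - y1) ** 2 + (x0 - x1) ** 2

    # Ray 2: active neuron, points (t, t) with t >= 0.
    t = max((y0 + x0) / 2.0, 0.0)
    d2 = (y0 - t) ** 2 + (x0 - t) ** 2

    return (y1, x1) if d1 <= d2 else (t, t)


print(project_relu_graph(-1.0, 0.5))  # -> (-1.0, 0.0)
print(project_relu_graph(2.0, 1.0))   # -> (1.5, 1.5)
```

Unlike a forward pass, the projection may move the pre-activation as well as the post-activation, making the smallest joint change that restores consistency between them.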

Representation Learning: Generative Models and Autoencoders

Generative models are explored through autoencoders equipped with iDE (invertible-data-enveloping) codes. The constraints ensure that codes remain disentangled and envelop data comprehensively. The resulting representation of data through these constraints supports the training of classifiers that distinguish between genuine and fake samples, facilitating the generation of new data that closely mimics true samples.

Practical and Theoretical Implications

The implications of this study are both theoretical and practical. Theoretically, the approach reopens the question of how model expressivity trades off against training complexity, and it challenges the primacy of loss-based training. Practically, constraint-driven training lends itself to parallelization because its projections are applied concurrently, which could enable energy-efficient implementations on distributed hardware.

Future developments may see these methodologies integrated with convolutional layers and explored in conjunction with state-of-the-art algorithms. The elimination of loss functions could redefine how complexity is managed in large-scale systems.

Conclusion

"Learning Without Loss" (1911.00493) paves the way for an alternative neural network training framework that jettisons traditional loss functions for constraint satisfaction. Through well-structured, incremental examples, the paper elucidates the versatility and power of using constraints in a discipline traditionally dominated by gradient descent methodologies. Going forward, adopting these techniques could lead to more efficient and theoretically sound neural network training practices.