- The paper introduces an unsupervised framework that identifies semantic directions in GAN latent spaces by jointly optimizing a matrix and a reconstructor.

- The method outperforms random and coordinate-direction baselines on RCA and MOS for generators trained on MNIST and CelebA-HQ and for BigGAN, indicating that the discovered directions are distinguishable and interpretable.

- The discovered latent directions enable practical applications in weakly-supervised saliency detection and refined image manipulation.

Unsupervised Discovery of Interpretable Directions in the GAN Latent Space

Introduction

The paper "Unsupervised Discovery of Interpretable Directions in the GAN Latent Space" (arXiv:2002.03754) presents a method for identifying semantically meaningful directions in the latent space of pre-trained GAN models. Earlier approaches to finding such directions require supervision, either through manually labeled data or through pre-trained models. This work instead introduces a fully unsupervised approach, which makes it possible to explore the structure of a GAN latent space without any labels.

Methodology

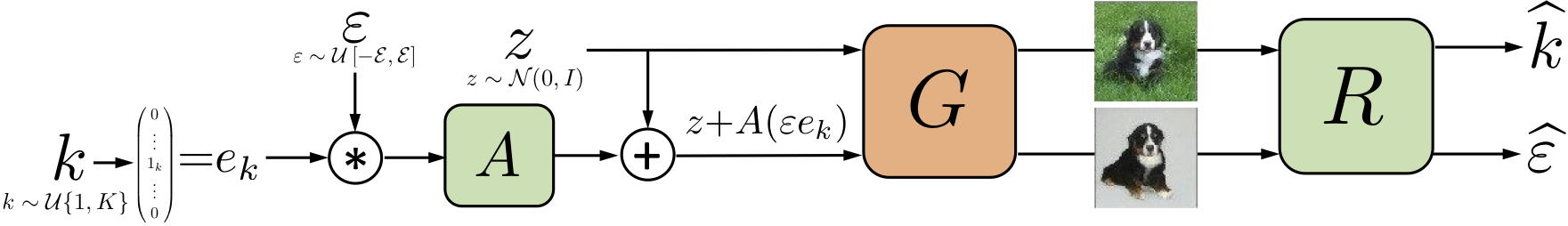

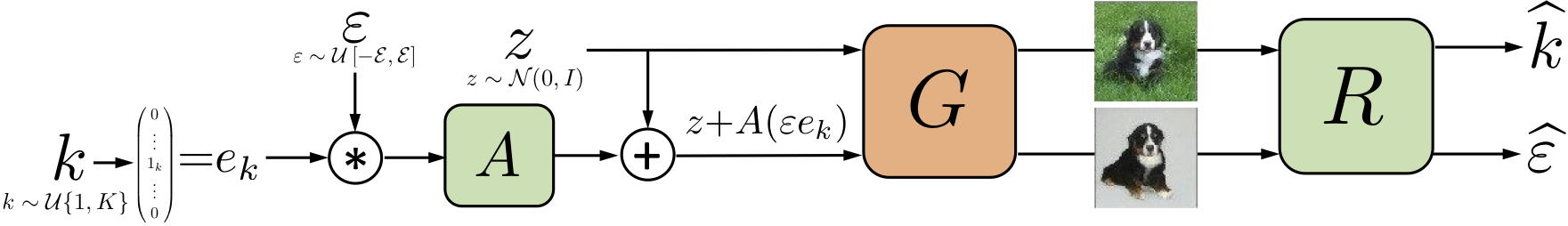

The paper aims to establish a model-agnostic, unsupervised framework for uncovering directions that correspond to recognizable semantic transformations in generated images. The authors propose a learning protocol in which a pretrained GAN generator G is coupled with a matrix A and a reconstructor R. The columns of A are the candidate directions in the latent space. A latent code and a copy shifted along one of these directions are both passed through G, and R receives the resulting image pair and predicts both the direction index and the shift magnitude. A and R are optimized jointly: because R can only succeed when different directions produce visibly different image changes, training pushes the discovered directions to be diverse and disentangled, so that each tends to capture a single factor of variation.
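The protocol above can be sketched as a single forward pass in NumPy. This is a minimal illustration, not the authors' implementation: the linear `generator` and `reconstructor`, the unit-norm columns of `A`, the uniform shift range, the choice of cross-entropy plus mean absolute error, and the weight `LAMBDA` are all assumptions made for the example, and no gradient step is shown.

```python
import numpy as np

rng = np.random.default_rng(0)

LATENT_DIM = 8      # dimensionality of the latent space (toy value)
NUM_DIRECTIONS = 4  # number of candidate directions, i.e. columns of A
IMAGE_DIM = 16      # size of a flattened toy "image"
LAMBDA = 0.25       # weight on the shift-regression term (illustrative assumption)

# Toy stand-ins for the frozen generator G and the trainable parts A and R.
W_gen = rng.normal(size=(IMAGE_DIM, LATENT_DIM))
A = rng.normal(size=(LATENT_DIM, NUM_DIRECTIONS))
A /= np.linalg.norm(A, axis=0, keepdims=True)  # unit-length columns (an assumption)
W_cls = rng.normal(size=(NUM_DIRECTIONS, 2 * IMAGE_DIM)) * 0.1
w_reg = rng.normal(size=2 * IMAGE_DIM) * 0.1


def generator(z):
    """Toy frozen generator: latent codes (N, d) -> flattened images (N, IMAGE_DIM)."""
    return np.tanh(z @ W_gen.T)


def reconstructor(img_a, img_b):
    """Toy reconstructor: image pair -> (direction logits, predicted signed shift)."""
    pair = np.concatenate([img_a, img_b], axis=1)
    return pair @ W_cls.T, pair @ w_reg


def make_batch(batch_size, max_shift=6.0):
    """Sample latent codes, direction indices k, and signed shift magnitudes eps."""
    z = rng.normal(size=(batch_size, LATENT_DIM))
    k = rng.integers(0, NUM_DIRECTIONS, size=batch_size)
    eps = rng.uniform(-max_shift, max_shift, size=batch_size)
    z_shifted = z + eps[:, None] * A[:, k].T  # z + eps * (k-th column of A)
    return z, z_shifted, k, eps


def protocol_loss(logits, eps_pred, k, eps):
    """Cross-entropy on the direction index plus weighted absolute error on the shift."""
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    cls_loss = -log_probs[np.arange(len(k)), k].mean()
    reg_loss = np.abs(eps_pred - eps).mean()
    return cls_loss + LAMBDA * reg_loss


z, z_shifted, k, eps = make_batch(32)
logits, eps_pred = reconstructor(generator(z), generator(z_shifted))
loss = protocol_loss(logits, eps_pred, k, eps)
```

In a real setup, G would be a pretrained network with frozen weights, and the loss would be minimized with respect to both A and the parameters of R using an automatic-differentiation framework.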

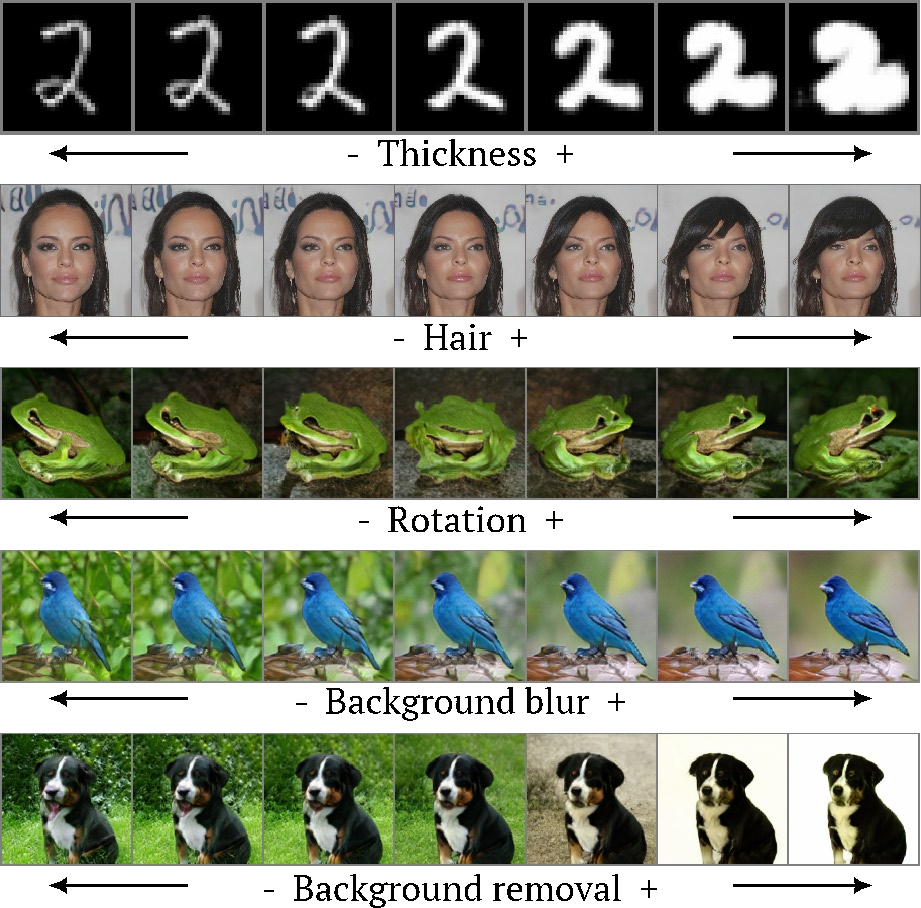

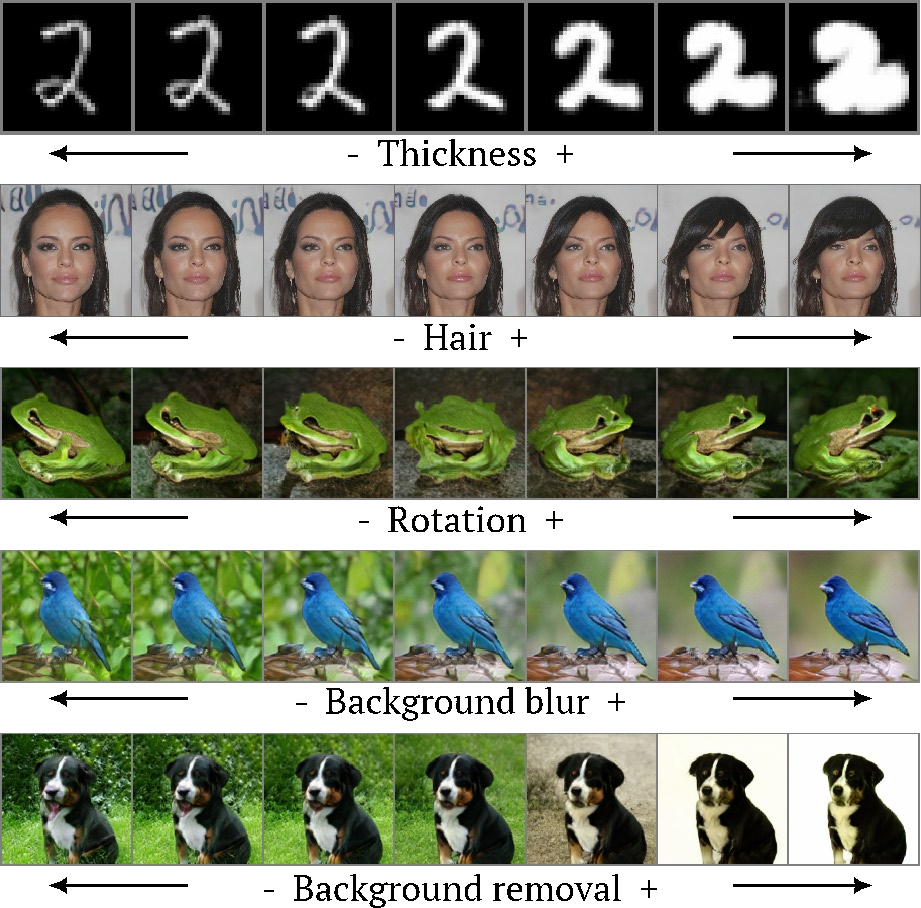

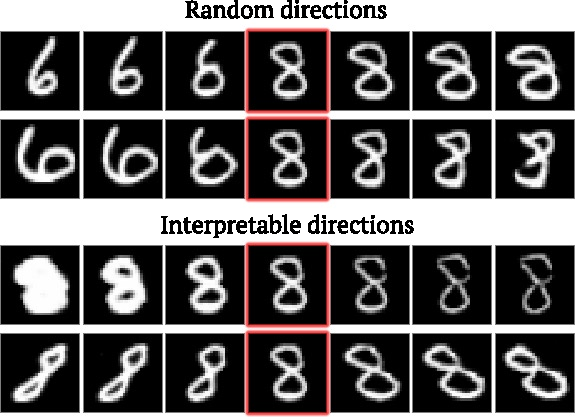

Figure 1: Examples of interpretable directions discovered by our unsupervised method for several datasets and generators.

Results and Evaluation

The authors evaluated the proposed method on generators trained on several datasets, including MNIST, Anime Faces, and CelebA-HQ, as well as on BigGAN. Qualitative results show that the discovered directions correspond to transformations such as background removal, zooming, and texture alterations, which indicates that the method can identify complex, interpretable transformations without supervision.

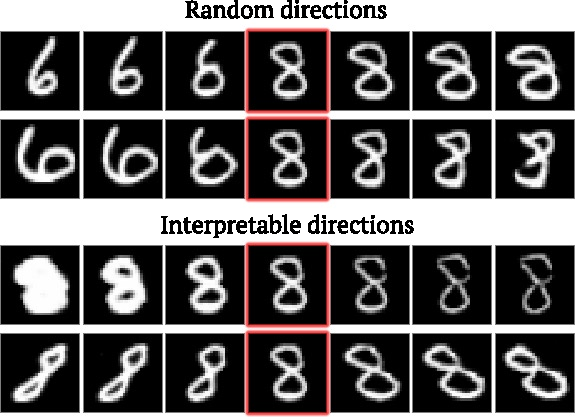

Quantitative assessment uses two measures: Reconstructor Classification Accuracy (RCA) and individual interpretability, reported as a mean opinion score (MOS). The method outperforms baselines that use random or coordinate directions on both measures, which suggests that the learned directions are easier to tell apart and more often correspond to distinct factors of variation.
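As a rough illustration of the first measure, the sketch below assumes that RCA is the plain accuracy of the reconstructor's direction-index predictions on a set of image pairs, as its name suggests. The function name and the toy logits are hypothetical.

```python
import numpy as np


def reconstructor_classification_accuracy(logits, true_k):
    """Fraction of image pairs whose predicted direction index matches the true one."""
    predicted_k = np.argmax(logits, axis=1)
    return float(np.mean(predicted_k == true_k))


# Toy reconstructor outputs for four image pairs and three candidate directions.
logits = np.array([[2.0, 0.1, -1.0],
                   [0.3, 1.5, 0.2],
                   [0.9, 0.8, 0.1],
                   [-0.5, 0.0, 0.4]])
true_k = np.array([0, 1, 1, 2])
rca = reconstructor_classification_accuracy(logits, true_k)  # 3 of 4 correct -> 0.75
```

Under this reading, a higher RCA means the directions produce image changes that are easier to distinguish from one another.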

Figure 2: Image transformations obtained by moving in random (top) and interpretable (bottom) directions in the latent space.

Practical Implications and Future Directions

One practical application of this work is weakly-supervised saliency detection. The paper shows that a background removal direction can be used to generate synthetic training data for saliency detection models. This use case illustrates how unsupervised direction discovery can contribute to other computer vision tasks.
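One way such synthetic data could be produced is sketched below. The example assumes that shifting a latent code along the background removal direction leaves the background near-white, so that a foreground mask can be obtained by thresholding pixel intensity. The function name, the threshold value, and the toy image are hypothetical and not taken from the paper.

```python
import numpy as np


def mask_from_background_shift(shifted_image, threshold=0.95):
    """Mark pixels as salient when the shifted image is darker than `threshold`."""
    intensity = shifted_image.mean(axis=-1)  # average over colour channels
    return intensity < threshold


# Toy 4x4 RGB image in [0, 1]: white (removed) background, 2x2 object in the centre.
shifted = np.ones((4, 4, 3))
shifted[1:3, 1:3] = [0.2, 0.5, 0.3]
mask = mask_from_background_shift(shifted)
```

Pairing each unshifted generated image with a mask obtained this way would give (image, mask) examples for training a saliency model without manual annotation.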

Looking forward, this approach could serve as a foundation for further advancements in GAN interpretability. Potential future research may focus on refining the methodology to handle even larger latent spaces or applying it to uncover transformations in more diverse datasets.

Conclusion

This paper advances the understanding and use of GAN latent spaces by presenting an unsupervised, model-agnostic approach to semantic image manipulation that does not rely on labeled data. Its main contributions are removing the need for supervision and applying to a range of generators.

Figure 3: Scheme of our learning protocol, which discovers interpretable directions in the latent space of a pretrained generator G. A training sample in our protocol consists of two latent codes, where one is a shifted version of another. Possible shift directions form a matrix A. Two codes are passed through G and an obtained pair of images go to a reconstructor R that aims to reconstruct a direction index k and a signed shift magnitude ε.