- The paper introduces a novel CNN framework with an unsupervised penalty, trained on virtual IMU data simulated from the AMASS dataset.

- It leverages the SMPL model for preprocessing motion capture data to accurately generate virtual acceleration measurements for activity recognition.

- Fine-tuning with real IMU data from the DIP dataset narrows the gap between virtual and real sensor outputs and reduces data collection costs.

Deep Learning for Human Activity Recognition with Virtual Wearable Sensors

This paper addresses the challenges in Human Activity Recognition (HAR) by leveraging the AMASS dataset, a large collection of motion capture data, and proposes a deep learning framework to improve recognition accuracy and robustness. The core idea involves training a deep convolutional neural network with an unsupervised penalty on virtual IMU data generated from the AMASS dataset, followed by fine-tuning with real IMU data from the DIP dataset.

Dataset Preprocessing with SMPL Model

The paper utilizes the SMPL model to process the AMASS dataset for HAR. The SMPL model is a parameterized 3D human body model defined as:

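$M(\beta, \theta) = W\left( T_P(\beta, \theta),\, J(\beta),\, \theta,\, \mathcal{W} \right)$

$T_P(\beta, \theta) = \bar{T} + B_S(\beta) + B_P(\theta)$

Here $\beta$ and $\theta$ are the shape and pose parameters, $\bar{T}$ is the template mesh, $B_S$ and $B_P$ are the shape and pose blend shapes, $J(\beta)$ gives the joint locations, and $W$ is a blend skinning function with blend weights $\mathcal{W}$.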

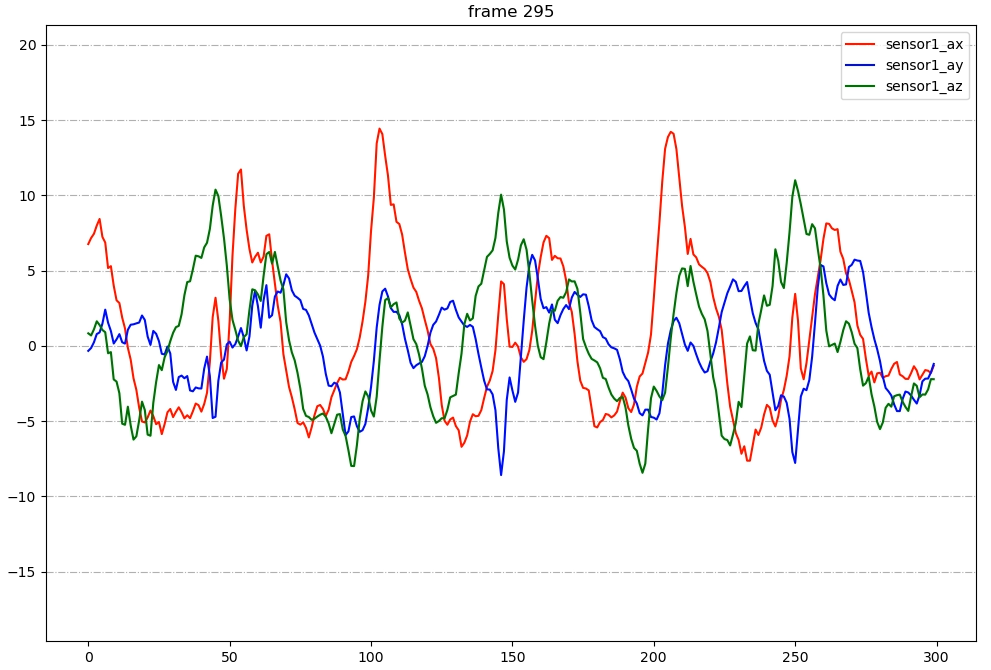

The process begins by generating virtual IMU data from the AMASS dataset, which originally lacks sensor data. This is achieved by placing virtual sensors on the SMPL mesh surface and calculating virtual acceleration data using finite differences:

$a_t = \dfrac{p_{t-1} + p_{t+1} - 2 \cdot p_t}{dt^2}$

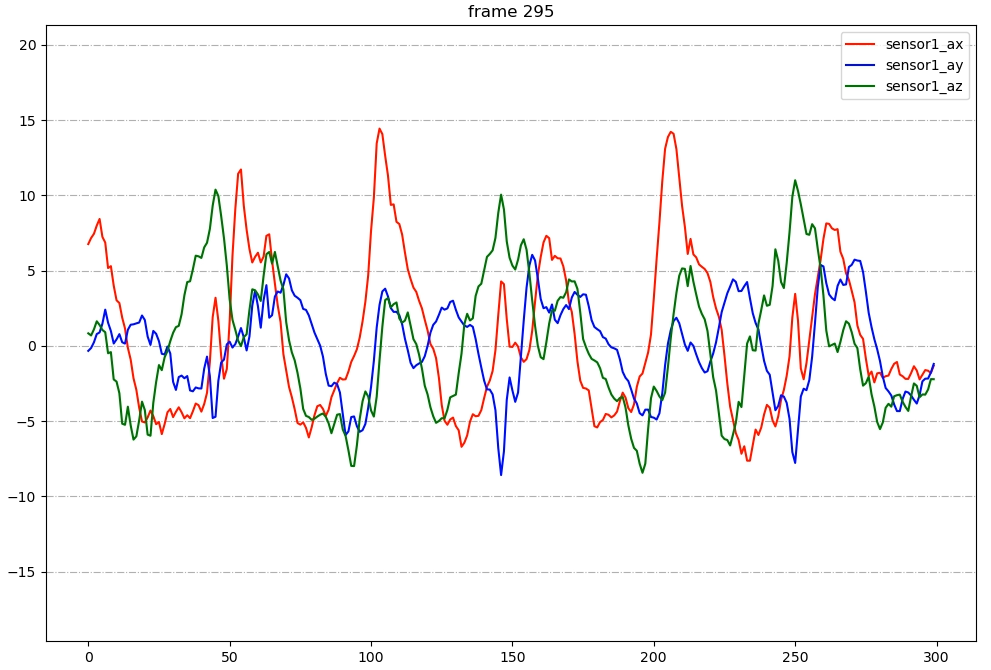

Figure 1: Wrist accelerations for typical walking movement.
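The finite-difference formula for virtual acceleration can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' code: the function name `virtual_acceleration`, the 60 Hz frame rate, and the free-fall test track are assumptions made for the example. It works on global-frame positions only and does not model sensor orientation.

```python
from typing import List, Sequence

Vec3 = Sequence[float]


def virtual_acceleration(positions: List[Vec3], dt: float) -> List[List[float]]:
    """Central second-order finite difference of a position track.

    positions[t] is the 3D position of a virtual sensor at frame t and
    dt is the frame interval in seconds. The first and last frames have
    no two-sided neighbours, so the result has len(positions) - 2 rows.
    """
    if dt <= 0:
        raise ValueError("dt must be positive")
    if len(positions) < 3:
        raise ValueError("need at least three frames")
    accel = []
    for t in range(1, len(positions) - 1):
        prev, cur, nxt = positions[t - 1], positions[t], positions[t + 1]
        # a_t = (p_{t-1} + p_{t+1} - 2 * p_t) / dt^2, applied per axis
        accel.append([(p + n - 2.0 * c) / (dt * dt)
                      for p, c, n in zip(prev, cur, nxt)])
    return accel


# Sanity check on a hypothetical track: p(t) = 0.5 * g * t^2 along z.
dt = 1.0 / 60.0   # assumed 60 Hz frame rate
g = -9.81
track = [(0.0, 0.0, 0.5 * g * (i * dt) ** 2) for i in range(5)]
acc = virtual_acceleration(track, dt)
```

For a quadratic track the central difference is exact up to floating-point error, so every row of `acc` should recover the constant acceleration `g` on the z axis.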

The generated data is then labeled and filtered through a three-step process: posture-based labeling, acceleration-based filtering, and data cleaning with the SMPL model. Acceleration-based filtering leverages the differences in acceleration characteristics between activities.
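One way to picture acceleration-based filtering is a variability check on each labeled clip. The summary does not give the authors' actual criterion, so everything below is an assumption for illustration: the statistic (standard deviation of acceleration magnitude), the helper names `magnitude_std` and `filter_clips`, the threshold value, and the toy clips.

```python
import math
from statistics import pstdev
from typing import Dict, List, Sequence, Tuple

Clip = Tuple[str, Sequence[Sequence[float]]]  # (label, per-frame 3D acceleration)


def magnitude_std(accel: Sequence[Sequence[float]]) -> float:
    """Population standard deviation of the per-frame acceleration magnitude."""
    mags = [math.sqrt(sum(c * c for c in frame)) for frame in accel]
    return pstdev(mags)


def filter_clips(clips: List[Clip], min_std: Dict[str, float]) -> List[Clip]:
    """Keep clips whose acceleration variability meets the label's minimum.

    min_std holds hypothetical per-label thresholds. Labels without a
    threshold pass through unchanged.
    """
    kept = []
    for label, accel in clips:
        if magnitude_std(accel) >= min_std.get(label, 0.0):
            kept.append((label, accel))
    return kept


# Toy data: a flat "clapping" clip is suspicious, an oscillating one is plausible.
clips = [
    ("clapping", [[0.0, 0.0, 1.0]] * 4),
    ("clapping", [[0.0, 0.0, 1.0], [0.0, 0.0, 9.0], [0.0, 0.0, 1.0], [0.0, 0.0, 9.0]]),
    ("stretching", [[0.0, 0.0, 1.0]] * 4),
]
kept = filter_clips(clips, min_std={"clapping": 2.0})
```

In this sketch the flat clapping clip is dropped, while the stretching clip is kept because no threshold is defined for it, mirroring the point that activities with less obvious acceleration characteristics need a different check.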

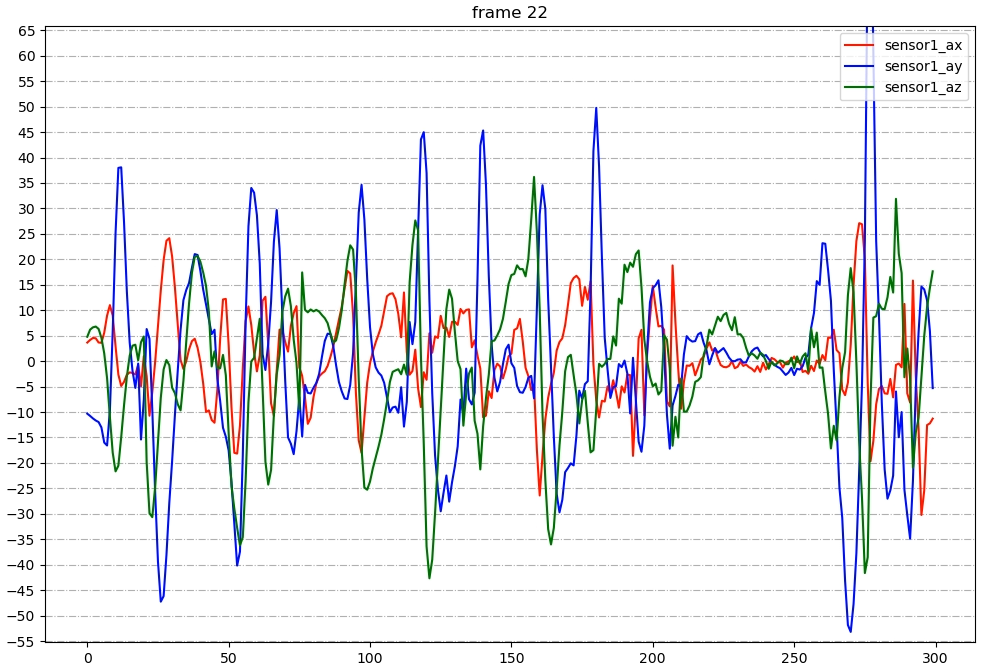

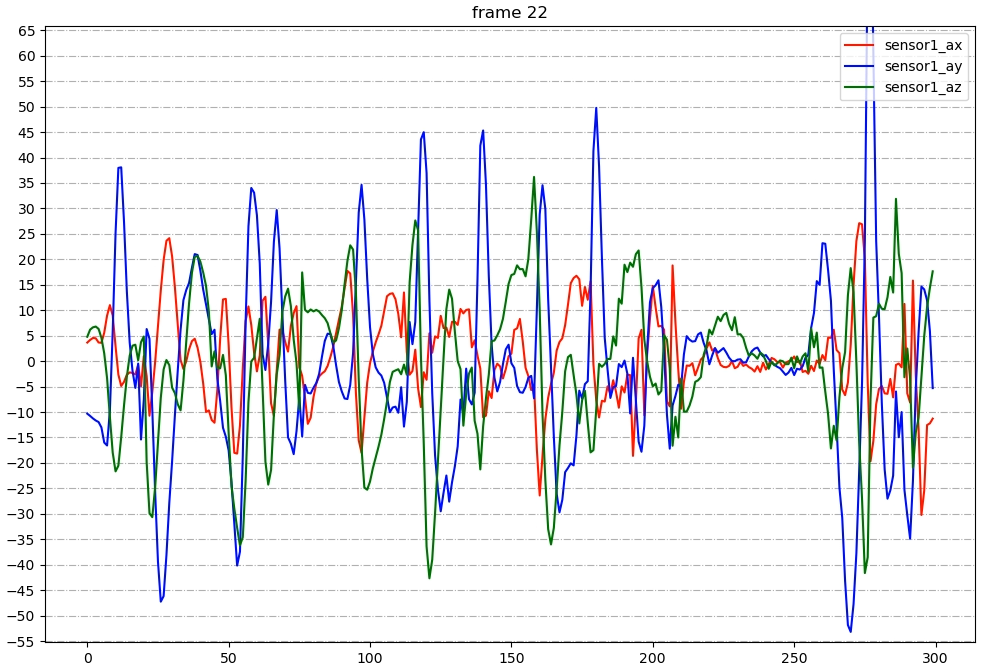

Figure 2: Visualization for clapping movement.

For activities with less obvious acceleration characteristics, such as stretching, visualization with the SMPL model is used to identify and correct mislabeled motions. This visualization is achieved by building the SMPL model in Unity and inputting different SMPL pose parameters.

Deep Learning Algorithm and Fine-Tuning

The proposed method consists of two stages: off-line training and on-line testing. In the off-line stage, a deep convolutional neural network with an unsupervised penalty is trained on the AMASS dataset. The network parameters Θ are updated by minimizing the following objective:

$\underset{\Theta}{\arg\min} \; \underbrace{\mathcal{L}_0\left( \Theta_0 \right)}_{\text{supervised}} + \lambda \underbrace{\mathcal{L}_1\left( \Theta_1 \right)}_{\text{unsupervised penalty}}$

where

$\mathcal{L}_0\left( \Theta_0 \right) = \left\| \mathbf{Z}^{(p)} - \varphi_S\left( \mathbf{W}_S \tilde{\mathbf{X}}^{(p)} \right) \right\|_2^2$

$\tilde{\mathbf{X}}^{(p)} = \varphi_U\left( \mathbf{W}_U \cdots \varphi_1\left( \mathbf{W}_1 \mathbf{X}^{(p)} \right) \right)$

$\mathcal{L}_1\left( \Theta_1 \right) = \left\| \mathbf{X}^{(p)} - \varphi_{2U}\left( \mathbf{W}_{2U} \cdots \varphi_{U+1}\left( \mathbf{W}_1 \tilde{\mathbf{X}}^{(p)} \right) \right) \right\|_2^2$

The unsupervised penalty acts as a denoising autoencoder, enhancing the robustness of the method. In the on-line stage, transfer learning is used to fine-tune the fully-connected layers with real IMU data from the DIP dataset.
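The training objective can be made concrete with a small numeric sketch. It only combines the two terms: the classifier output and the reconstruction are passed in as plain vectors instead of being computed by a network, and the names `squared_l2` and `combined_loss`, the sample vectors, and λ = 0.1 are assumptions chosen for the example.

```python
from typing import Sequence


def squared_l2(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance ||a - b||_2^2 between equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return sum((x - y) ** 2 for x, y in zip(a, b))


def combined_loss(z: Sequence[float], z_hat: Sequence[float],
                  x: Sequence[float], x_hat: Sequence[float],
                  lam: float) -> float:
    """Supervised term plus lambda times the reconstruction penalty.

    z     : target label vector (Z in the text)
    z_hat : classifier output computed from the encoded input
    x     : original input (X in the text)
    x_hat : reconstruction of x produced by the decoder layers
    """
    supervised = squared_l2(z, z_hat)   # L0
    penalty = squared_l2(x, x_hat)      # L1
    return supervised + lam * penalty


# Hypothetical values: supervised = 0.04 + 0.01, penalty = 0.25.
loss = combined_loss(z=[1.0, 0.0], z_hat=[0.8, 0.1],
                     x=[1.0, 2.0, 3.0], x_hat=[1.0, 2.0, 2.5],
                     lam=0.1)
```

With these inputs the loss is about 0.05 + 0.1 × 0.25 = 0.075. Setting `lam` to 0 recovers the purely supervised objective, which shows how λ controls the weight of the reconstruction penalty.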

Experimental Results and Analysis

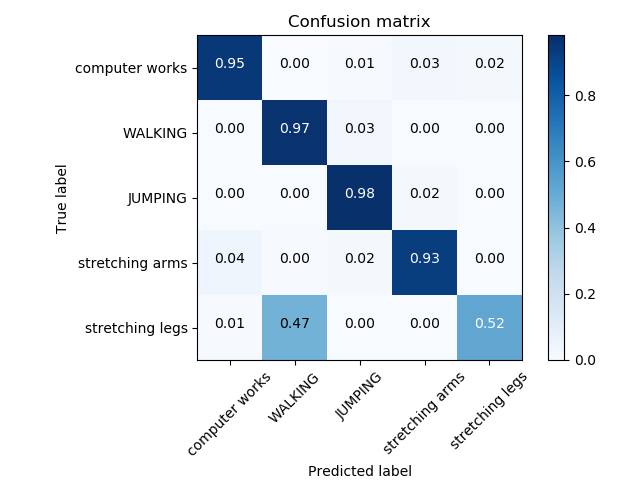

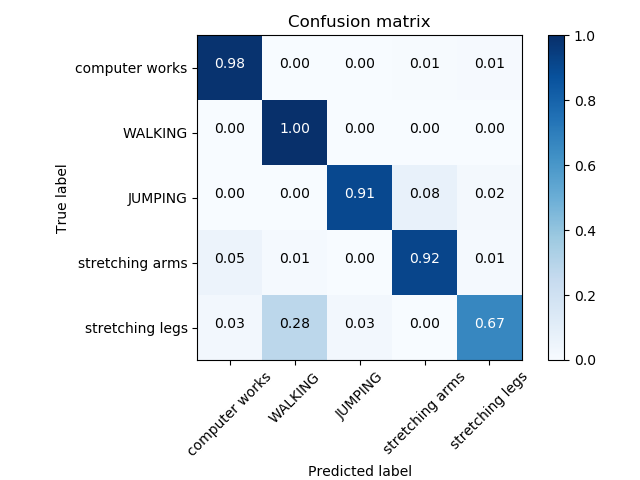

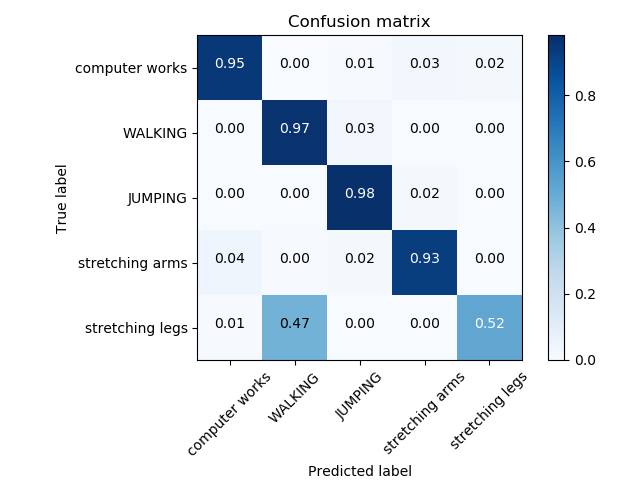

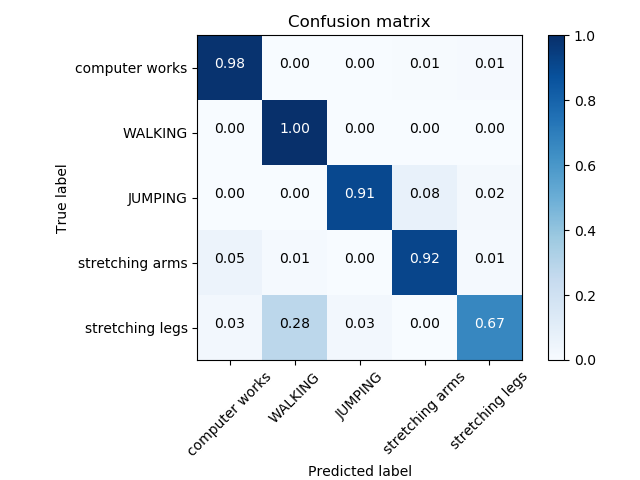

The paper presents three experiments to validate the proposed method. Experiment 1 trains and tests on AMASS, experiment 2 trains and tests on DIP, and experiment 3 trains on AMASS, fine-tunes on DIP, and tests on DIP. The proposed method outperforms DeepConvLSTM and random forest (RF) on both AMASS and DIP. Fine-tuning improves the classification results, narrowing the gap between virtual and real IMU data. The confusion matrices for DIP in experiments 2 and 3 are shown in the accompanying figures.

Figure 3: Confusion matrix of DIP in experiment 2.

Conclusion

The paper introduces a novel approach to HAR by using the AMASS dataset with virtual IMU data and a CNN framework with an unsupervised penalty. The experimental results demonstrate the feasibility of using pose reconstruction datasets for HAR and the effectiveness of fine-tuning with real IMU data to bridge the virtual-real gap. The authors note that fine-tuning can achieve excellent results within a small number of epochs (20), suggesting that training on large-scale virtual IMU datasets, followed by fine-tuning with small-scale real IMU datasets, can reduce the cost of data collection. Future work may explore optimal IMU configurations through more detailed experiments.