MedDialog: Two Large-scale Medical Dialogue Datasets

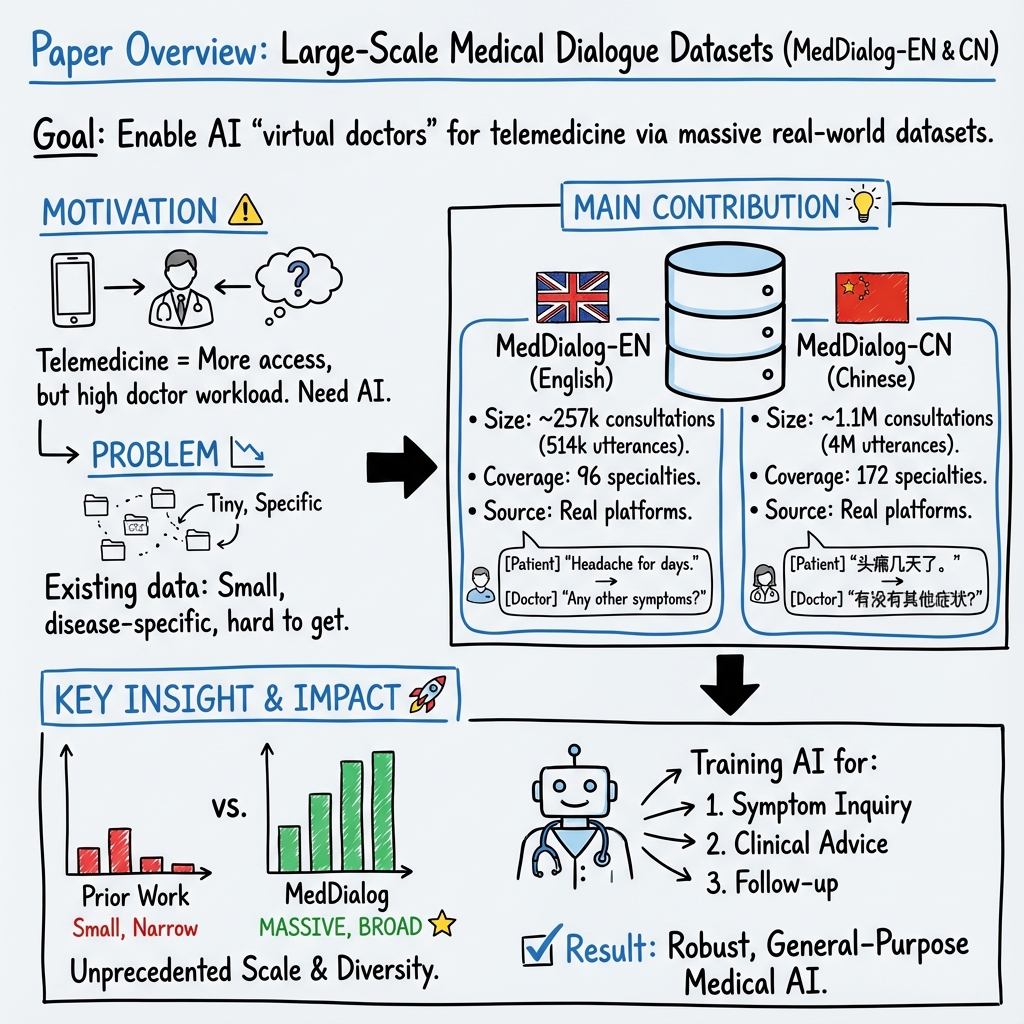

Abstract: Medical dialogue systems are promising in assisting in telemedicine to increase access to healthcare services, improve the quality of patient care, and reduce medical costs. To facilitate the research and development of medical dialogue systems, we build two large-scale medical dialogue datasets: MedDialog-EN and MedDialog-CN. MedDialog-EN is an English dataset containing 0.3 million conversations between patients and doctors and 0.5 million utterances. MedDialog-CN is an Chinese dataset containing 1.1 million conversations and 4 million utterances. To our best knowledge, MedDialog-(EN,CN) are the largest medical dialogue datasets to date. The dataset is available at https://github.com/UCSD-AI4H/Medical-Dialogue-System

Paper Prompts

Sign up for free to create and run prompts on this paper using GPT-5.

Top Community Prompts

Explain it Like I'm 14

Easy Explanation: MedDialog — Two Large-Scale Medical Dialogue Datasets (for a 14-year-old)

1) What is this paper about?

This paper introduces two big collections of real conversations between patients and doctors. One collection is in English (MedDialog-EN) and the other is in Chinese (MedDialog-CN). These collections are meant to help build and improve computer programs (AI) that can chat with patients about health—like a “virtual doctor” that can ask questions and give general advice.

2) What questions are the researchers trying to answer?

The researchers want to solve a simple problem: AI needs lots of examples to learn how to talk like a doctor. But getting real medical conversations is hard because of privacy and limited access. So they asked:

- Can we create very large, diverse, real-world datasets of patient–doctor chats in different languages?

- Can these datasets cover many types of diseases and medical specialties (like heart, lungs, kids’ health, etc.) so the AI can learn broadly?

- Can we make them available to the research community to speed up progress in telemedicine?

3) How did they build these datasets?

They gathered patient–doctor conversations from trusted health websites where people can consult doctors online.

To make this easy to picture:

- “Web crawling” is like sending a smart robot to read lots of web pages and copy the relevant conversations.

- An “utterance” is one turn in a chat—like when the patient types a message or the doctor replies.

- “Specialties” are specific areas of medicine, like cardiology (heart) or pediatrics (children’s health).

Here’s what they did:

- For MedDialog-EN (English), they collected 257,454 consultations (about 0.3 million) with 514,908 utterances (about 0.5 million) from two health platforms. Each consultation has a short description of the patient’s problem plus the back-and-forth chat.

- For MedDialog-CN (Chinese), they collected 1,145,231 consultations (about 1.1 million) with 3,959,333 utterances (about 4 million) from a major Chinese health platform. Each consultation includes the patient’s condition and history, the chat, and sometimes the doctor’s diagnosis and treatment suggestions.

- They organized the data by medical specialty and included conversations from many years (roughly 2008–2020 for English, 2010–2020 for Chinese).

4) What did they find, and why is it important?

Main results:

- These are the largest medical dialogue datasets ever released (at the time of writing).

- MedDialog-EN covers 96 specialties (like cardiology, nephrology, pharmacology).

- MedDialog-CN covers 29 broad categories and 172 fine-grained specialties.

- The data includes patients from many different places and backgrounds (worldwide for English, 31 provinces for Chinese), which helps reduce bias and makes AI training more fair and realistic.

Why it matters:

- AI systems learn from examples. More and better data helps them understand how doctors ask the right questions, explain clearly, and offer reasonable suggestions.

- These datasets can speed up the creation of safe, helpful medical chatbots that support telemedicine—especially useful for people who live far from hospitals or have trouble seeing a doctor quickly.

- Broad coverage means the AI won’t just learn about one or two diseases; it can practice across many medical areas.

5) What’s the bigger impact?

If researchers use these datasets well, we could get better “virtual doctor” assistants that:

- Help answer common health questions,

- Guide patients on when to seek in-person care,

- Support doctors by handling basic follow-ups, which may reduce burnout.

Important note: These AI tools are meant to assist, not replace, real doctors. Safety, privacy, and careful testing are essential. But with large, diverse, multilingual data like MedDialog, the path toward helpful, trustworthy telemedicine tools becomes much clearer.

The datasets are publicly available, so more researchers can build and improve medical dialogue systems faster.

Practical Applications

Practical Applications Derived from the MedDialog Datasets

The paper introduces MedDialog-EN and MedDialog-CN—large-scale, publicly available datasets of patient–doctor dialogues in English and Chinese, spanning many specialties and years. Below are concrete, real-world applications that leverage these datasets for industry, academia, policy, and daily life.

Immediate Applications

- Triage and specialty routing models

- Sectors: Healthcare, Software

- What to build: Text classifiers that route incoming patient messages to the right specialty and urgency level (e.g., urgent vs. routine).

- Tools/workflows: PyTorch/Hugging Face Transformers; fine-tune BERT/ClinicalBERT/Chinese-BERT; deploy behind telemedicine “digital front door.”

- Dependencies/assumptions: Human-in-the-loop for final routing; ongoing calibration to local care pathways; dataset license permits commercial use; de-identification and HIPAA/GDPR compliance.

- Draft-response assistants for clinicians

- Sectors: Healthcare, Software

- What to build: LLM-based assistants that generate draft replies to patient queries, with clinicians reviewing/editing before sending.

- Tools/workflows: RAG over institutional guidelines + fine-tuned dialogue models; EHR messaging integration.

- Dependencies/assumptions: Explicit human oversight; safety guardrails; institution-specific guideline retrieval; legal review for SaMD implications.

- Automated intake and EMR pre-population

- Sectors: Healthcare, Software

- What to build: NER and slot-filling to extract conditions, duration, meds, allergies, and past history from free text and pre-fill structured fields.

- Tools/workflows: spaCy/Stanza, clinical NER models; HL7 FHIR integration.

- Dependencies/assumptions: Local terminology adaptation; physician verification step; privacy and logging controls.

- Conversation summarization for “after-visit” notes

- Sectors: Healthcare, Software

- What to build: Summarizers that produce patient-friendly summaries (e.g., SOAP notes + instructions) from chat transcripts.

- Tools/workflows: Fine-tuned summarization LLMs; templated discharge instructions.

- Dependencies/assumptions: Clinician review; alignment with literacy standards; multilingual support as needed.

- Retrieval-augmented clinical Q&A support for providers

- Sectors: Healthcare, Software, Academia

- What to build: Internal Q&A copilots that surface similar past cases and guideline snippets while drafting chat responses.

- Tools/workflows: FAISS/Elasticsearch retrieval over MedDialog + local KB; RAG with citation display.

- Dependencies/assumptions: Data governance; deduplication and quality filtering; explicit “for reference only” labeling.

- Contact center and telemedicine operations analytics

- Sectors: Healthcare Operations, Finance

- What to build: Topic modeling and intent clustering to forecast demand, staff scheduling, and identify operational pain points.

- Tools/workflows: Unsupervised topic models, LDA/BERTopic; BI dashboards.

- Dependencies/assumptions: Anonymized transcripts; drift monitoring as services change.

- Conversation quality and compliance auditing

- Sectors: Healthcare, Policy/Quality

- What to build: Automated scoring for clarity, empathy, and adherence to communication standards; QA alerts for coaching.

- Tools/workflows: Fine-tuned classifiers; rubric-based scoring; dashboard for supervisors.

- Dependencies/assumptions: Institution-defined rubrics; avoidance of demographic bias; legal review for workforce monitoring.

- Safety and moderation filters for medical forums and chat

- Sectors: Software, Community Platforms

- What to build: Classifiers to detect unsafe advice, misinformation, and need for escalation/emergency referral.

- Tools/workflows: Safety-tuned models that trigger standardized disclaimers and escalation workflows.

- Dependencies/assumptions: Alignment with WHO/CDC/AMA guidance; regular red-team testing; clear user messaging.

- Cross-lingual model baselines and transfer learning

- Sectors: Academia, Software

- What to build: Comparative studies and baselines for English–Chinese medical dialogue modeling; domain-adapted MT.

- Tools/workflows: mBERT/XLM-R; bilingual alignment; WWM Chinese BERT; PaddleNLP/jieba for Chinese preprocessing.

- Dependencies/assumptions: Domain-specific MT post-editing; differences in clinical practice across locales.

- Education and skills training (OSCE-style simulators)

- Sectors: Education, Healthcare

- What to build: Standardized patient simulators for communication training, questioning strategy, and empathy practice.

- Tools/workflows: Fine-tuned conversational agents; scenario libraries with varied specialties.

- Dependencies/assumptions: Clear labeling as educational only; no diagnosis; supervised debriefing.

Long-Term Applications

- Autonomous virtual triage and primary care assistants

- Sectors: Healthcare, Consumer Health

- What to build: End-to-end triage assistants conducting structured history-taking, offering next-step guidance, and integrating with care pathways.

- Tools/workflows: Dialogue policies + medical knowledge graphs; dynamic RAG; escalation to clinicians.

- Dependencies/assumptions: Regulatory approval (FDA/CE), clinical trials for safety/effectiveness, robust crisis detection.

- EHR-integrated ambient scribe for telemedicine

- Sectors: Healthcare IT

- What to build: Real-time generation of structured notes, orders, and coding from live chat sessions.

- Tools/workflows: ASR+LLM pipeline for multimodal (text/voice), FHIR integration, ICD/CPT code suggestion.

- Dependencies/assumptions: Very high accuracy; auditability; provider liability considerations.

- Automated clinical coding and billing from chat transcripts

- Sectors: Healthcare, Finance/Revenue Cycle

- What to build: Systems that infer ICD/CPT codes from conversation context and generate compliant documentation.

- Tools/workflows: Sequence labeling + classifier ensembles; coder-in-the-loop verification.

- Dependencies/assumptions: Additional labeled data for coding; payer-specific rules; extensive validation.

- Red-flag detection and escalation systems

- Sectors: Healthcare, Emergency Services

- What to build: Real-time detection of alarming symptoms (e.g., stroke, sepsis indicators) in chats with immediate escalation.

- Tools/workflows: Thresholded risk scores; integrated paging/escalation orchestration.

- Dependencies/assumptions: Clinical guardrails; low false-negative rates; continuous post-market surveillance.

- Public health surveillance from conversational signals

- Sectors: Public Health, Policy

- What to build: Early-warning systems that mine aggregated chat patterns to detect outbreaks and trends (e.g., flu, RSV).

- Tools/workflows: Privacy-preserving aggregation; anomaly detection; secure data-sharing frameworks.

- Dependencies/assumptions: Strong de-identification; IRB/ethics approval; bias correction for platform demographics.

- Global multilingual telemedicine copilots

- Sectors: Global Health, NGOs, Telehealth

- What to build: Multilingual assistants adapted to local guidelines and cultural communication styles.

- Tools/workflows: Cross-lingual transfer; locale-specific RAG; human translator fallback.

- Dependencies/assumptions: Local clinical standard alignment; cultural competence; jurisdictional regulatory differences.

- Synthetic data generation and privacy-preserving training

- Sectors: Academia, Software, Policy

- What to build: Generative models producing high-fidelity synthetic dialogues for low-resource specialties and privacy research.

- Tools/workflows: Diffusion/LLM-based data synthesis; differential privacy; utility–privacy evaluation suites.

- Dependencies/assumptions: Proven reduction of re-identification risk; representativeness without leakage.

- Benchmarking and certification frameworks for clinical dialogue AI

- Sectors: Policy/Regulation, Standards Bodies

- What to build: Standardized test suites and leaderboards for safety, factuality, empathy, and bias in clinical chat.

- Tools/workflows: Curated challenge sets; external knowledge grounding checks; scenario-based evaluation.

- Dependencies/assumptions: Consensus metrics; participation from regulators, clinicians, and vendors.

- Personalized adherence and chronic disease management chatbots

- Sectors: Healthcare, Consumer Health

- What to build: Longitudinal conversation agents that monitor symptoms, nudge adherence, and coordinate care teams.

- Tools/workflows: Long-term user state tracking; integration with wearables and pharmacy systems.

- Dependencies/assumptions: Consent and data linkage; safety nets for deterioration; clinical oversight.

- Clinical trials prescreening via conversational intake

- Sectors: Pharma, Research

- What to build: Agents that screen patients against protocol criteria during chat and flag potential matches.

- Tools/workflows: Criteria extraction; eligibility inference; site referral workflows.

- Dependencies/assumptions: Accurate criteria parsing; handling of protected populations; sponsor SOP alignment.

- Cross-cultural communication and bias audits

- Sectors: Policy, Academia, Healthcare

- What to build: Studies and tools to evaluate differential performance across language/culture; bias mitigation training.

- Tools/workflows: Counterfactual evaluation; fairness dashboards; targeted data augmentation.

- Dependencies/assumptions: Need for demographic/cultural annotations (not included by default); partnership with ethics boards.

Notes on feasibility and assumptions across applications:

- Licensing and terms of use: Although the dataset is publicly released on GitHub, commercial deployment requires confirming the legality of using web-crawled dialogues from source platforms.

- Privacy and de-identification: Ensure removal of PHI and compliance with HIPAA/GDPR/CCPA; adopt differential privacy where applicable.

- Safety and regulation: Many clinician-facing tools may be considered medical devices; plan for regulatory pathways (FDA, EU MDR) and clinical validation.

- Data shift and guideline updates: Dialogues span 2008–2020; models must be updated to current clinical guidelines and medication practices.

- Human-in-the-loop: For near-term safety, keep clinicians in the loop for diagnosis, triage decisions, and documentation approval.

- Localization: Clinical norms and standards differ by country; adapt models to local practice, language, and culture.

Collections

Sign up for free to add this paper to one or more collections.