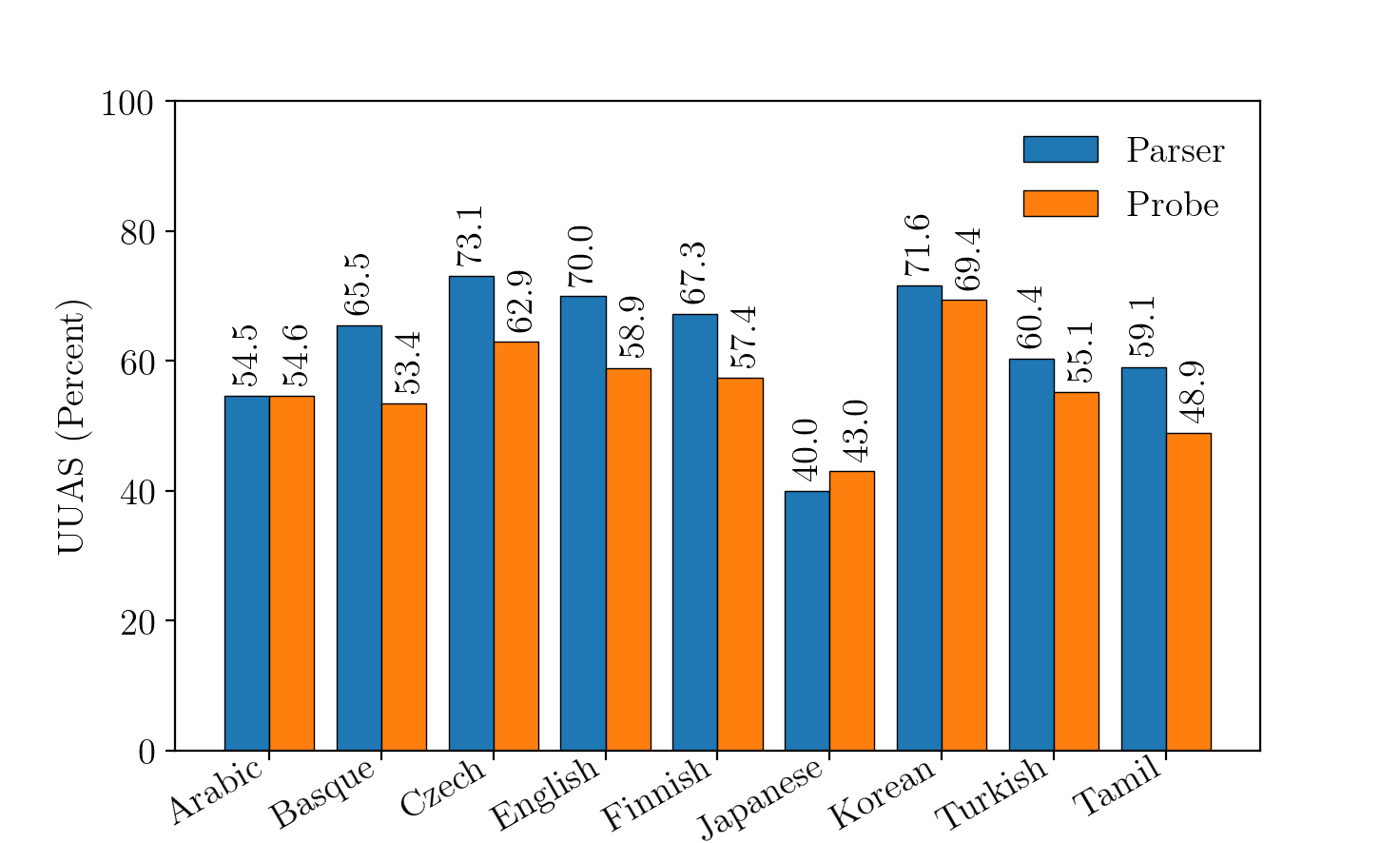

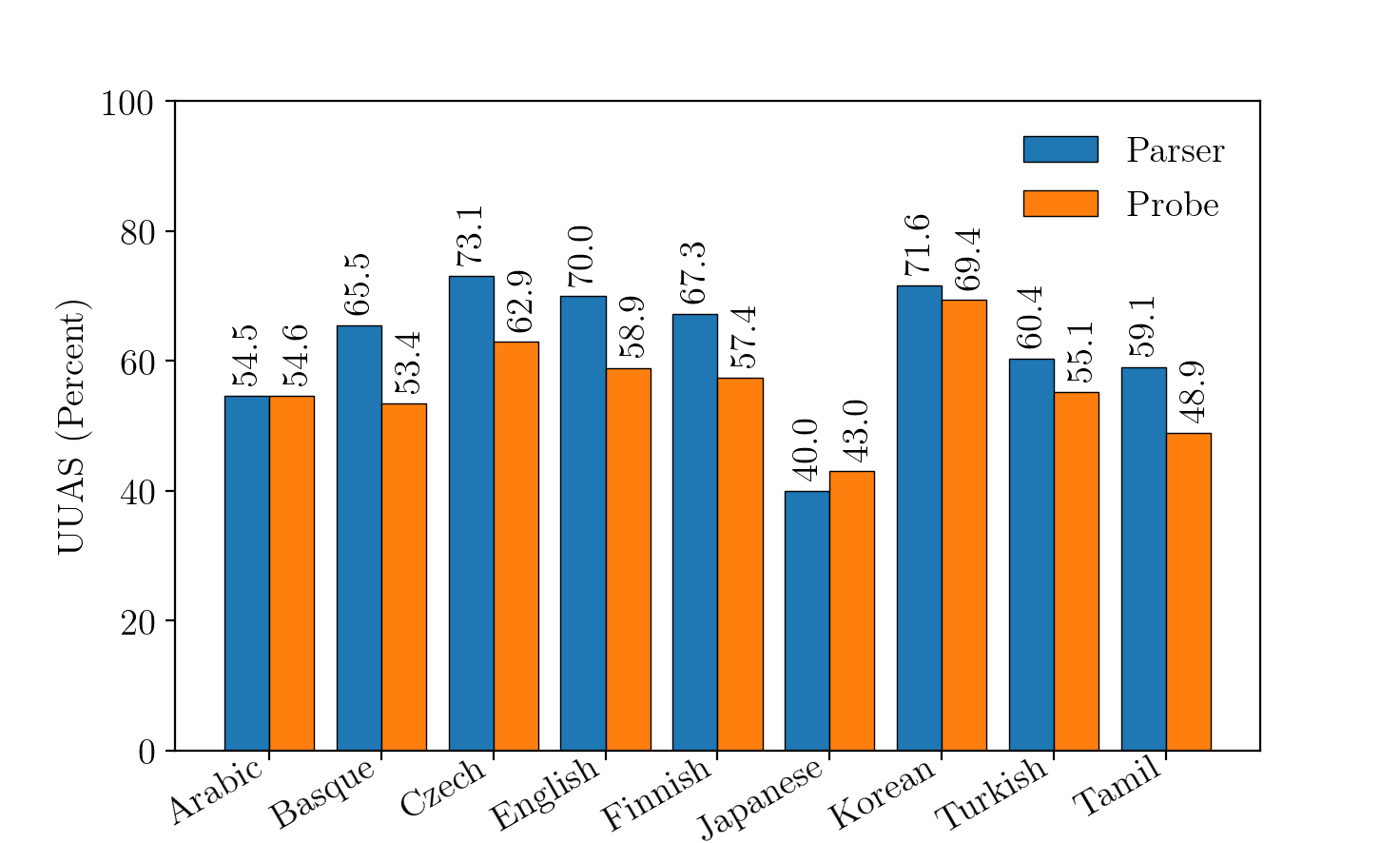

- The paper shows that a traditional parser, given the same lightweight parameterization as the structural probe, achieves a higher UUAS than the probe in seven of nine analyzed languages.

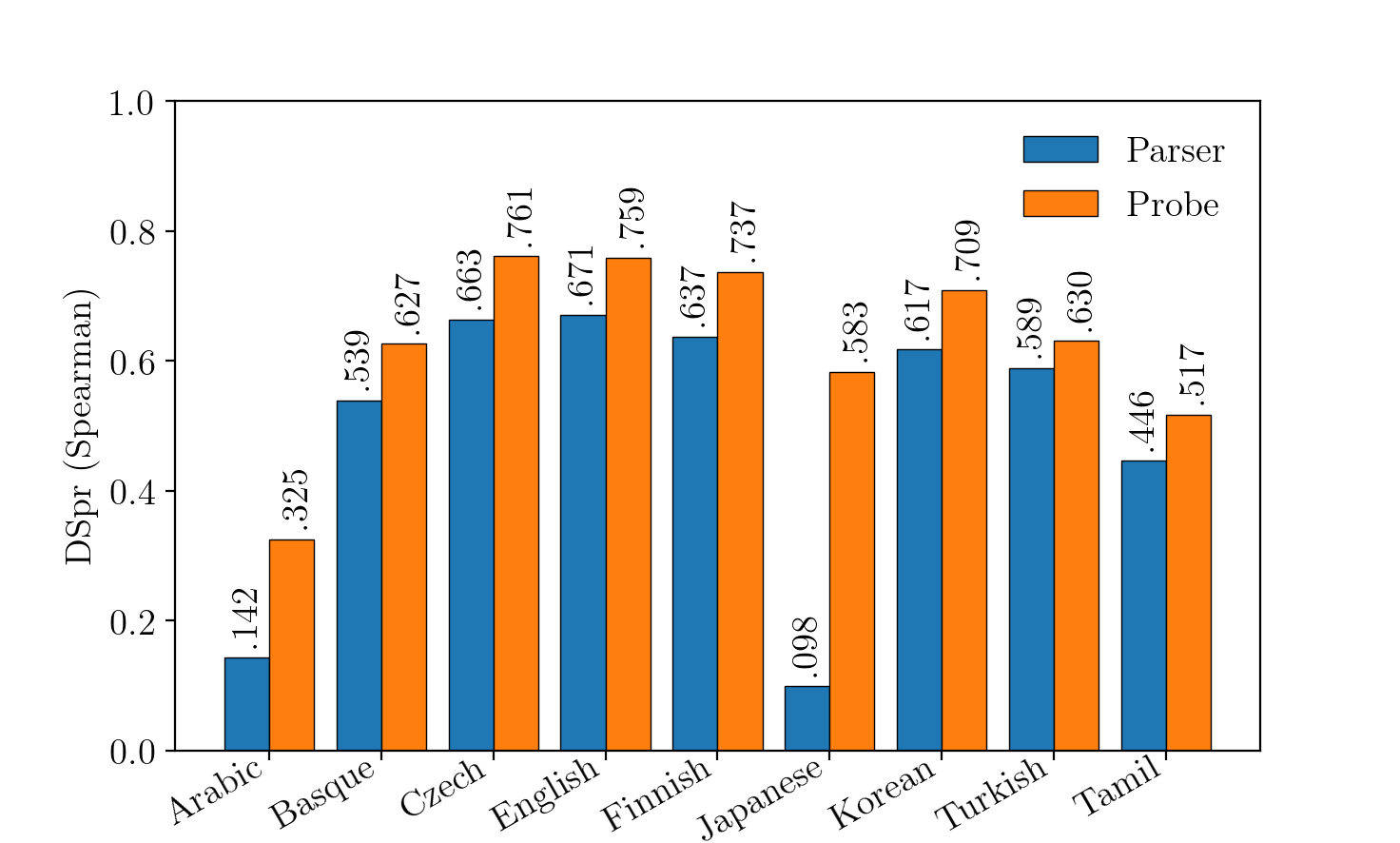

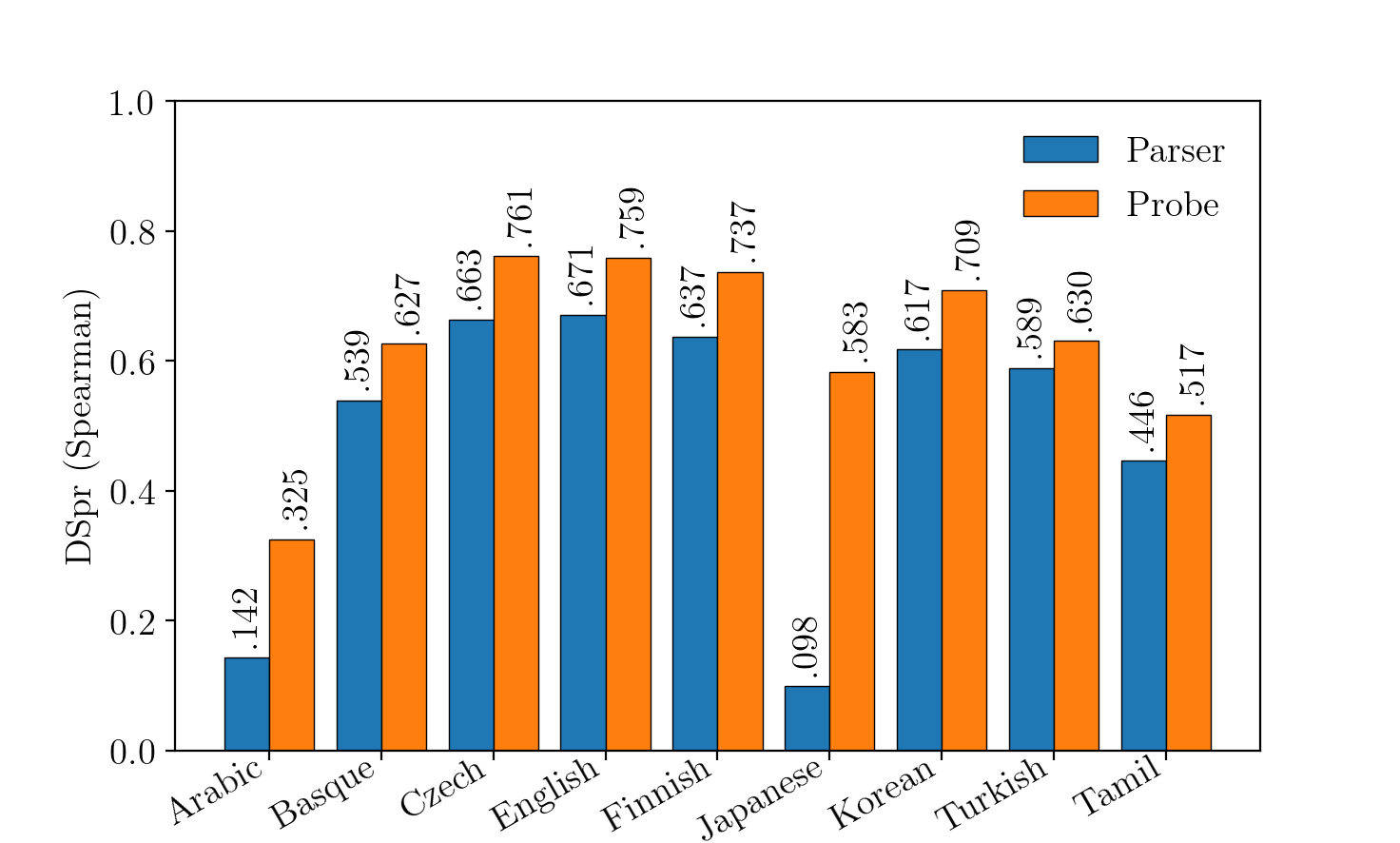

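- Under DSpr, a second metric that rank-correlates predicted and gold syntactic distances, the trend reverses and the structural probe outperforms the parser. The two metrics therefore lead to opposite conclusions about probing efficacy.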

- The study underscores the critical role of metric selection and recommends established parsing metrics unless a new metric is clearly justified.

Exploring Probing and Parsing in Neural Language Models

Introduction

The paper "A Tale of a Probe and a Parser" examines the relationship between syntactic probes and parsers, asking whether the syntactic information encoded in neural models of language is best extracted with specially designed probes. Such probes, exemplified by the structural probe of Hewitt and Manning (2019), aim to expose latent linguistic information in contextualized word representations. To test whether the probe's novel design actually helps, the authors compare it with a conventional parser and ask which of the two gives a more accurate picture of the syntactic structure encoded in the representations.

Probing Techniques and Methodology

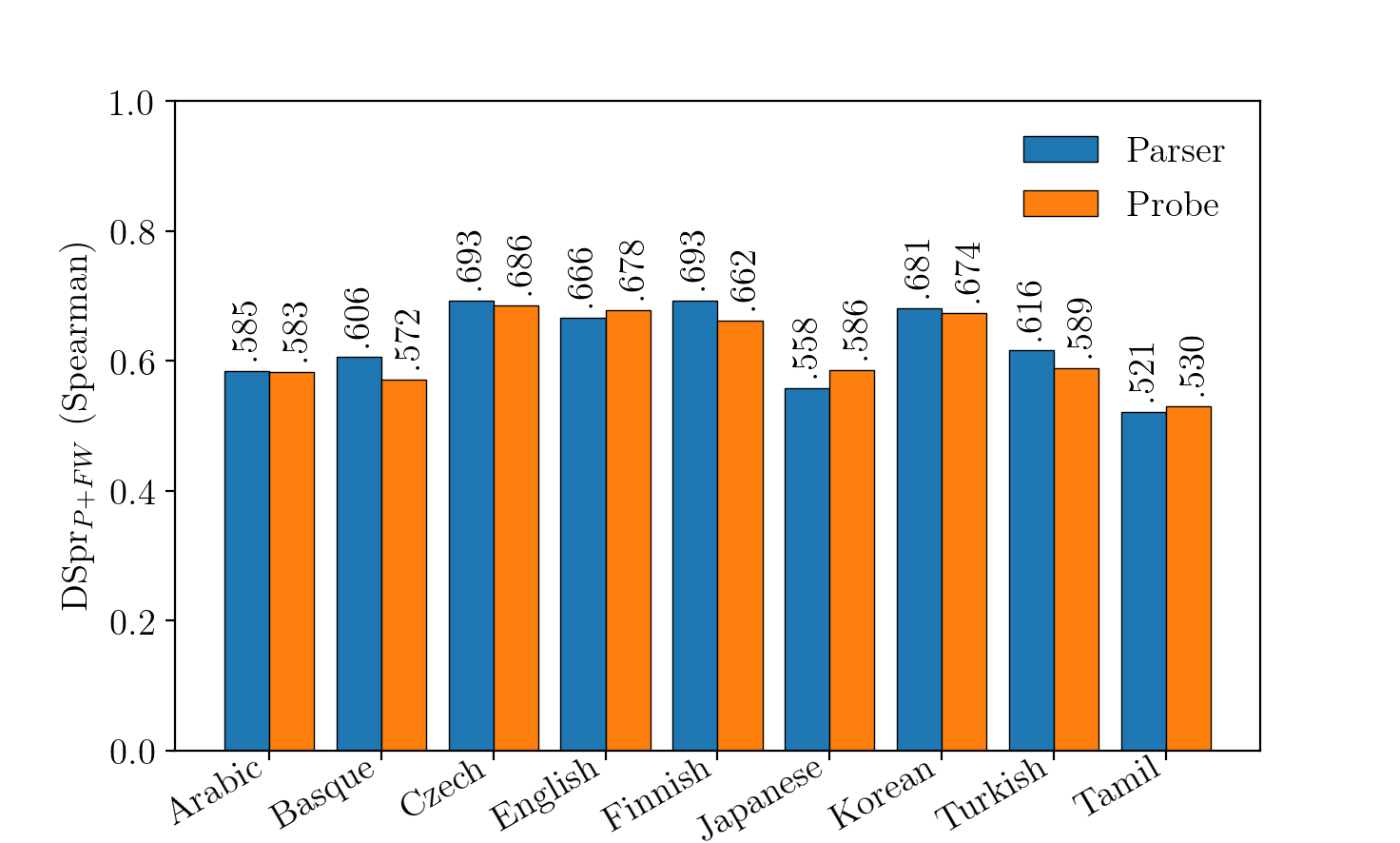

Syntactic probing trains diagnostic models on the outputs of a neural language model to detect linguistic structure such as dependency trees. The structural probe of Hewitt and Manning (2019) is a prominent example: it learns a linear transformation under which squared distances between word vectors approximate the distances between words in the dependency tree, and it is trained with a loss defined on these pairwise distances, a departure from standard parsing practice (Figure 1). The paper contrasts it with a structured perceptron parser that has an identical parameterization but is trained with a conventional parsing loss.

Figure 1: DSpr$_{P+FW}$ results for the structural probe.
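The two training objectives described above can be contrasted in a minimal pure-Python sketch. It assumes toy inputs: `H` is a list of word vectors, `B` a hypothetical k×d probe matrix, and `heads` a dependency tree given as head indices (`-1` for the root); all function names are illustrative. `probe_loss` follows the structural probe's L1 objective on squared distances (Hewitt and Manning, 2019). `perceptron_loss` is an assumed structured-perceptron form that scores a tree by the total predicted distance of its edges and compares the gold tree with the minimum spanning tree, so it may not match the paper's parser exactly.

```python
from collections import deque

Matrix = list[list[float]]


def project(B: Matrix, h: list[float]) -> list[float]:
    """Apply the (hypothetical) k x d probe matrix B to a d-dimensional vector h."""
    return [sum(b * x for b, x in zip(row, h)) for row in B]


def probe_sq_distances(H: Matrix, B: Matrix) -> Matrix:
    """Squared L2 distance between every pair of projected word vectors,
    i.e. d_B(h_i, h_j)^2 = (B(h_i - h_j))^T (B(h_i - h_j))."""
    P = [project(B, h) for h in H]
    n = len(P)
    return [[sum((a - b) ** 2 for a, b in zip(P[i], P[j])) for j in range(n)]
            for i in range(n)]


def tree_distances(heads: list[int]) -> Matrix:
    """Path length between every pair of words in the dependency tree.
    heads[i] is the index of word i's head, or -1 for the root."""
    n = len(heads)
    adj = [[] for _ in range(n)]
    for child, head in enumerate(heads):
        if head >= 0:
            adj[child].append(head)
            adj[head].append(child)
    dist = []
    for src in range(n):
        row = [-1] * n
        row[src] = 0
        queue = deque([src])
        while queue:  # breadth-first search from src
            u = queue.popleft()
            for v in adj[u]:
                if row[v] < 0:
                    row[v] = row[u] + 1
                    queue.append(v)
        dist.append(row)
    return dist


def probe_loss(pred: Matrix, gold: Matrix) -> float:
    """Structural-probe objective: L1 gap between predicted squared distances
    and gold tree distances, normalised by the squared sentence length."""
    n = len(gold)
    return sum(abs(gold[i][j] - pred[i][j])
               for i in range(n) for j in range(n)) / n ** 2


def mst_edges(dist: Matrix) -> set[frozenset[int]]:
    """Undirected minimum spanning tree of the distance matrix (Prim's algorithm)."""
    n = len(dist)
    in_tree = {0}
    edges = set()
    while len(in_tree) < n:
        i, j = min(((a, b) for a in in_tree for b in range(n) if b not in in_tree),
                   key=lambda e: dist[e[0]][e[1]])
        in_tree.add(j)
        edges.add(frozenset((i, j)))
    return edges


def perceptron_loss(pred: Matrix, heads: list[int]) -> float:
    """Assumed structured-perceptron-style loss: total predicted distance of the
    gold tree minus that of the minimum spanning tree. It is never negative and
    is zero exactly when the gold tree is a minimum spanning tree."""
    gold_cost = sum(pred[c][h] for c, h in enumerate(heads) if h >= 0)
    best_cost = sum(pred[i][j] for i, j in map(tuple, mst_edges(pred)))
    return gold_cost - best_cost


# Toy usage: three 2-d "embeddings" and a chain tree 0 - 1 - 2.
H = [[0.0, 0.0], [1.0, 0.0], [2.0, 0.0]]
B = [[1.0, 0.0]]  # hypothetical 1 x 2 probe matrix
heads = [-1, 0, 1]
pred = probe_sq_distances(H, B)
gold = tree_distances(heads)
print(probe_loss(pred, gold))        # 4/9, about 0.444
print(perceptron_loss(pred, heads))  # 0.0
```

In this toy case the projected distances already yield the gold tree, so the perceptron-style loss is zero, while the probe loss stays positive because the squared distance between words 0 and 2 (4) overshoots their tree distance (2). The probe objective is thus sensitive to exact distance values that a parsing objective ignores.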

Experiments

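The experiments train both models on Multilingual BERT embeddings for nine languages and evaluate them with two metrics. UUAS (Undirected Unlabeled Attachment Score) is the fraction of gold dependency edges, ignoring direction and labels, that appear in the tree recovered from a model's predictions. DSpr (Distance Spearman) is the Spearman correlation between the predicted and gold syntactic distances from each word to the other words in its sentence. The two metrics favor different models, which has direct consequences for how probing results are interpreted.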
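A minimal sketch of both metrics, under simplifying assumptions: distances are square matrices, UUAS is computed as the fraction of gold edges (ignoring direction) found in the minimum spanning tree of the predicted distances, and DSpr as the mean over words of the Spearman correlation between each word's predicted and gold distances to the other words (the word's own zero distance is excluded here). The published evaluation protocol adds details such as punctuation handling and averaging by sentence length, which this sketch omits; the function names and toy data are hypothetical.

```python
import math
from typing import Optional

Matrix = list[list[float]]


def mst_edges(dist: Matrix) -> set[frozenset[int]]:
    """Undirected minimum spanning tree of the distance matrix (Prim's algorithm)."""
    n = len(dist)
    in_tree = {0}
    edges = set()
    while len(in_tree) < n:
        i, j = min(((a, b) for a in in_tree for b in range(n) if b not in in_tree),
                   key=lambda e: dist[e[0]][e[1]])
        in_tree.add(j)
        edges.add(frozenset((i, j)))
    return edges


def uuas(pred_dist: Matrix, heads: list[int]) -> float:
    """Fraction of undirected gold edges recovered by the predicted MST."""
    gold = {frozenset((c, h)) for c, h in enumerate(heads) if h >= 0}
    return len(gold & mst_edges(pred_dist)) / len(gold)


def ranks(values: list[float]) -> list[float]:
    """1-based ranks; tied values share the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    r = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            r[order[k]] = avg
        i = j + 1
    return r


def spearman(x: list[float], y: list[float]) -> Optional[float]:
    """Spearman's rho (Pearson correlation of tie-averaged ranks);
    None when either input is constant and rho is undefined."""
    rx, ry = ranks(x), ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        return None
    return cov / math.sqrt(var_x * var_y)


def dspr(pred_dist: Matrix, gold_dist: Matrix) -> float:
    """Mean per-word Spearman correlation between predicted and gold distances."""
    n = len(gold_dist)
    scores = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        rho = spearman([pred_dist[i][j] for j in others],
                       [gold_dist[i][j] for j in others])
        if rho is not None:  # skip words whose distances are all tied
            scores.append(rho)
    return sum(scores) / len(scores) if scores else float("nan")


# Toy usage: gold chain 0 - 1 - 2 - 3, whose tree distances are |i - j|.
heads = [-1, 0, 1, 2]
gold = [[abs(i - j) for j in range(4)] for i in range(4)]
# Hypothetical prediction: neighbours at distance 1, all other pairs flattened to 5.
pred = [[0 if i == j else 1 if abs(i - j) == 1 else 5 for j in range(4)]
        for i in range(4)]
print(uuas(pred, heads))  # 1.0
print(dspr(pred, gold))   # (2 + sqrt(3)) / 4, about 0.933
```

The toy prediction recovers the gold chain exactly, so its UUAS is perfect, but it flattens every non-adjacent distance to the same value and loses rank information that DSpr rewards. This is one concrete way the two metrics can disagree about the same representation.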

Implications and Discussion

The study highlights a pivotal question in probing design: the choice of metric. Because DSpr and UUAS rank the probe and the parser in opposite orders, the conclusions drawn about what a language model encodes depend on which metric is chosen. UUAS is well established in dependency parsing, whereas the case for preferring DSpr, the metric on which the structural probe performs best, has not been clearly made. In practice, this suggests that measuring how well a tree can be reconstructed, the traditional yardstick in parsing, may say more about the syntax encoded in the representations than measuring how well distances correlate.

Conclusion

Comparing the probe with the parser suggests that effective syntactic probing need not depart radically from established parsing methods. The findings argue for a common-sense approach to probing: rely on established parsing methodology unless there is clear justification for a novel metric or objective. New probing metrics therefore call for more rigorous validation, to ensure that they genuinely reflect the linguistic structure encoded in language models. More broadly, this line of inquiry deepens our understanding of how language models internally encode syntax.