- The paper introduces a novel framework that anticipates accidents by modeling spatio-temporal relationships and using Bayesian neural networks for uncertainty quantification.

- It employs graph convolutional networks and recurrent layers to capture both spatial proximity and temporal evolution of traffic agents.

- Experimental results show the model anticipates accidents 3.53 seconds before they occur on average, with an average precision (AP) of 53.7% on a public benchmark.

Uncertainty-based Traffic Accident Anticipation Model

The paper "Uncertainty-based Traffic Accident Anticipation with Spatio-Temporal Relational Learning" introduces a framework for predicting traffic accidents from dashcam video before they occur. The model learns spatio-temporal relationships among traffic agents and uses Bayesian neural networks (BNNs) to quantify predictive uncertainty, so each accident score comes with an indication of how confident the model is.

Framework Overview

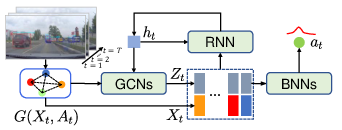

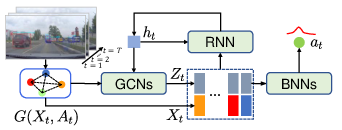

The framework begins by processing dashcam video inputs: candidate objects detected in the scene are used to construct graphs that represent their spatial relationships. Fully-connected layers then transform the graph features into low-dimensional representations.

Graph Representation and Learning:

The spatial relations among candidate objects are captured by a graph convolutional network (GCN) whose adjacency matrices are weighted by inter-object distance. Closer objects, which are more likely to be involved in an accident, therefore receive more weight in the learning process.
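The distance-weighted graph convolution described here can be illustrated with a minimal sketch. It assumes an `exp(-distance)` weighting between normalized box centers and row normalization; the paper's exact weighting function and normalization may differ, and the function names `distance_weighted_adjacency` and `graph_conv` are hypothetical.

```python
import math

def distance_weighted_adjacency(centers):
    """Row-normalized adjacency in which closer objects get larger weights.

    Assumption: weight = exp(-Euclidean distance between normalized box centers).
    """
    n = len(centers)
    adj = []
    for i in range(n):
        # The self-loop weight is exp(0) = 1, so every row has a positive sum.
        row = [math.exp(-math.dist(centers[i], centers[j])) for j in range(n)]
        total = sum(row)
        adj.append([w / total for w in row])
    return adj

def graph_conv(adj, feats, weight):
    """One graph convolution, ReLU(A @ X @ W), written with plain lists."""
    n, d_in, d_out = len(feats), len(weight), len(weight[0])
    # Aggregate neighbor features: A @ X
    agg = [[sum(adj[i][k] * feats[k][c] for k in range(n)) for c in range(d_in)]
           for i in range(n)]
    # Linear transform followed by ReLU: (A @ X) @ W
    return [[max(0.0, sum(agg[i][c] * weight[c][o] for c in range(d_in)))
             for o in range(d_out)] for i in range(n)]

# Three objects: the first two are close together, the third is far away.
centers = [(0.0, 0.0), (1.0, 0.0), (10.0, 0.0)]
feats = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weight = [[1.0, 0.0], [0.0, 1.0]]  # identity, for readability only
adj = distance_weighted_adjacency(centers)
out = graph_conv(adj, feats, weight)
```

In this toy scene the first object's aggregated feature is dominated by itself and its near neighbor, while the distant third object contributes almost nothing.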

Figure 1: Framework of the proposed model. With graph embedded representations $G(X_t, A_t)$ at time step $t$, our model learns the latent relational representations $Z_t$ by the cyclic process of graph convolutional networks (GCNs) and recurrent neural network (RNN) cell, and predicts the accident score $a_t$ by Bayesian neural networks (BNNs).

Spatio-Temporal Relational Learning:

The relational features evolve temporally through a recurrent neural network (RNN) layer that updates hidden states by combining agent-specific features with relational embeddings. Spatial relations are learned via iterative GCNs, building representations enriched with temporal context provided by latent RNN states.
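The cyclic interaction between the GCN and the RNN can be sketched as follows. This is a sketch under stated assumptions, not the authors' implementation: it uses a plain tanh RNN cell as a stand-in for whatever recurrent cell the paper uses, a uniform adjacency matrix, and random weights. All names (`relational_step`, `w_gcn`, `w_in`, `w_rec`) and dimensions are hypothetical.

```python
import math
import random

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def concat(a, b):
    """Concatenate two matrices along the feature axis."""
    return [ra + rb for ra, rb in zip(a, b)]

def rand_matrix(rows, cols, rng):
    """Random matrix with entries in [-0.5, 0.5], used as placeholder weights."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def relational_step(x_t, a_t, h_prev, w_gcn, w_in, w_rec):
    """One time step: graph convolution with temporal context, then an RNN update."""
    # Spatial step: the GCN sees object features joined with the previous hidden state.
    z_lin = matmul(a_t, matmul(concat(x_t, h_prev), w_gcn))
    z_t = [[max(0.0, v) for v in row] for row in z_lin]
    # Temporal step: a tanh RNN cell combines agent features with relational embeddings.
    pre = matmul(concat(x_t, z_t), w_in)
    rec = matmul(h_prev, w_rec)
    h_t = [[math.tanh(p + r) for p, r in zip(pr, rr)] for pr, rr in zip(pre, rec)]
    return z_t, h_t

rng = random.Random(0)
N, D, Z, H, T = 3, 4, 2, 5, 6  # objects, feature dim, relation dim, hidden dim, frames
w_gcn = rand_matrix(D + H, Z, rng)
w_in = rand_matrix(D + Z, H, rng)
w_rec = rand_matrix(H, H, rng)
a_t = [[1.0 / N] * N for _ in range(N)]  # placeholder adjacency (uniform)
h = [[0.0] * H for _ in range(N)]
for _ in range(T):
    x_t = rand_matrix(N, D, rng)  # stand-in for per-frame object features
    z, h = relational_step(x_t, a_t, h, w_gcn, w_in, w_rec)
```

Feeding the previous hidden state into the graph convolution is what gives the relational representation its temporal context; the RNN update then carries the new relational embedding forward to the next frame.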

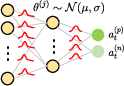

BNN Integration for Prediction:

Bayesian neural networks predict the accident scores, which makes it possible to assess both epistemic (model) and aleatoric (data) uncertainty. The BNN parameters are Gaussian-distributed, so several forward passes with independently sampled weights yield a set of outputs from which the uncertainty is estimated.
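The sampling procedure can be illustrated with a single Bayesian output unit. The sketch assumes one common decomposition for binary predictions, in which epistemic uncertainty is the variance of the sampled probabilities and aleatoric uncertainty is the mean of p(1 - p); the paper's exact estimator may differ. The function `bnn_predict` and its parameters are hypothetical.

```python
import math
import random

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def bnn_predict(features, mu, sigma, bias, n_samples=50, seed=0):
    """Monte Carlo prediction with Gaussian-distributed weights.

    Returns (mean score, epistemic uncertainty, aleatoric uncertainty).
    """
    rng = random.Random(seed)
    probs = []
    for _ in range(n_samples):
        # Draw one set of weights from the per-weight Gaussian N(mu, sigma^2).
        w = [rng.gauss(m, s) for m, s in zip(mu, sigma)]
        logit = sum(wi * xi for wi, xi in zip(w, features)) + bias
        probs.append(sigmoid(logit))
    mean = sum(probs) / n_samples
    # Epistemic: spread of the predictions across weight samples.
    epistemic = sum((p - mean) ** 2 for p in probs) / n_samples
    # Aleatoric: average Bernoulli variance of each sampled prediction.
    aleatoric = sum(p * (1.0 - p) for p in probs) / n_samples
    return mean, epistemic, aleatoric

features = [0.8, -0.3, 1.2]
mu = [0.5, -0.2, 0.7]
score, epi, ale = bnn_predict(features, mu, sigma=[0.3, 0.3, 0.3], bias=0.1)
_, epi_fixed, _ = bnn_predict(features, mu, sigma=[0.0, 0.0, 0.0], bias=0.1)
```

With all standard deviations set to zero the network is deterministic and the epistemic term vanishes, which is the behavior of an ordinary NN. Under this decomposition the two terms sum to `score * (1 - score)`.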

Figure 2: Compared with standard NNs, the network parameters of BNNs are sampled from Gaussian distributions so that both $a_t$ and its uncertainty can be obtained.

Optimization and Learning Objectives

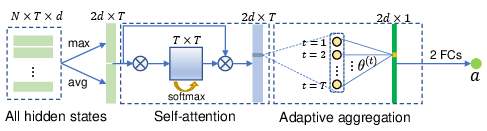

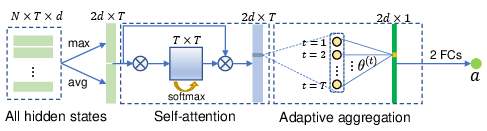

The learning objective combines several loss components, including a novel uncertainty-based ranking loss that penalizes non-monotonic changes in epistemic uncertainty between sequential predictions. The model also employs a self-attention aggregation (SAA) layer that produces a video-level prediction, which provides global guidance during training. This video-level supervision is intended to improve the hidden states learned at each time step.
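One way to read the ranking loss is as a hinge penalty on every step where epistemic uncertainty rises instead of falling. The sketch below assumes that uncertainty is expected to decrease as the accident approaches; the exact form and weighting used in the paper may differ, and `uncertainty_ranking_loss` is a hypothetical name.

```python
def uncertainty_ranking_loss(epistemic):
    """Sum of hinge penalties over consecutive frames where uncertainty increases.

    `epistemic` is a list of per-frame epistemic uncertainties in temporal order.
    """
    return sum(max(0.0, cur - prev) for prev, cur in zip(epistemic, epistemic[1:]))

monotonic = uncertainty_ranking_loss([0.5, 0.4, 0.3])  # no violation, loss is zero
violating = uncertainty_ranking_loss([0.5, 0.6, 0.3])  # one rise of about 0.1
```

A sequence whose uncertainty shrinks at every step incurs no penalty, while each upward jump contributes its size to the loss.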

Experimental Results

The model is evaluated on publicly available datasets as well as a newly compiled Car Crash Dataset (CCD). CCD includes annotations of environmental attributes and accident reasons, which makes it a benchmark for context-aware accident anticipation. Across datasets, the model achieves higher average precision (AP) and longer Time-to-Accident (TTA) than current state-of-the-art methods.
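To make the TTA metric concrete, the following sketch computes it for one video at a single score threshold. It assumes TTA is the time between the first frame whose accident score reaches the threshold and the frame where the accident begins; reported figures are typically averaged over videos and thresholds, which this sketch does not do. The function name and default threshold are hypothetical.

```python
def time_to_accident(scores, accident_frame, fps, threshold=0.5):
    """Seconds between the first confident prediction and the accident onset.

    Returns 0.0 if no frame before the accident reaches the threshold.
    """
    for t, score in enumerate(scores[:accident_frame]):
        if score >= threshold:
            return (accident_frame - t) / fps
    return 0.0

# Per-frame accident scores for a short clip; the accident starts at frame 4.
scores = [0.1, 0.2, 0.6, 0.7, 0.9]
tta = time_to_accident(scores, accident_frame=4, fps=2)
missed = time_to_accident([0.1, 0.1, 0.1, 0.1, 0.9], accident_frame=4, fps=2)
```

Here the score first crosses 0.5 at frame 2, two frames before the accident, so at 2 frames per second the TTA is 1.0 second.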

Performance:

Experimental results show that the proposed model anticipates traffic accidents 3.53 seconds before they occur on average, with an AP of 53.7% on a public benchmark.

Figure 3: SAA Layer. First, all N×T hidden states are gathered and pooled by max-avg concatenation. Then, the simplified self-attention and adaptive aggregation are proposed to predict video-level accident score a.

Implications and Future Directions

The integration of BNNs provides transparency in AI-driven decision-making processes for autonomous driving systems. The resulting predictive uncertainty assessment offers critical insights into model reliability, guiding developers in understanding and improving system robustness. This research sets the stage for enhancing accident anticipation capabilities under real-world driving conditions by advancing relational learning techniques.

In future work, expansion to multimodal inputs, such as traffic signals and environmental sensors, could be explored to further refine prediction accuracy and incorporate broader context cues in driving scenarios. The model could also be adapted to integrate real-time traffic anomaly detection systems, thus extending its utility beyond static anticipation tasks.

Conclusion

Overall, the framework advances traffic accident anticipation by combining relational learning with uncertainty quantification. It improves on existing models in both AP and time-to-accident while making the confidence of its predictions interpretable.