- The paper presents a novel continuous-state Hopfield network that increases pattern storage capacity and retrieval speed over traditional binary models.

- Its energy function yields an update rule that is equivalent to the attention mechanism of transformers, and retrieval typically converges after a single update.

- Experiments on multiple instance learning, immune repertoire classification, small UCI classification tasks, and drug design datasets show that layers built on this network are competitive with or better than existing methods.

Revisiting "Hopfield Networks is All You Need"

Introduction and Overview

The paper "Hopfield Networks is All You Need" introduces a modern Hopfield network with continuous states together with a matching update rule. Unlike classical binary Hopfield networks, the new network can store exponentially many patterns (in the dimension of the associative space) and retrieve them with very small error, typically in a single update. Because it is differentiable, it can be integrated as a layer into deep learning architectures, and its update rule is equivalent to the attention mechanism used in transformers and BERT models. This adds pooling, memory, association, and attention functions beyond what fully-connected, convolutional, or recurrent networks provide.

Theoretical Foundation: Energy Function and Update Rule

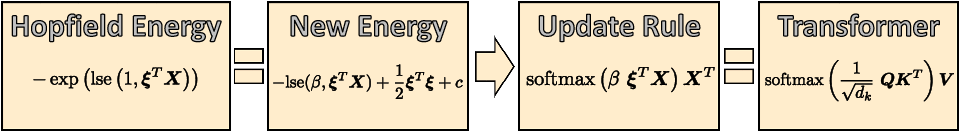

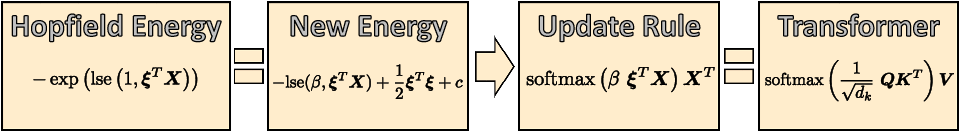

The authors generalize the energy function of binary modern Hopfield networks to continuous states while keeping their fast convergence and large storage capacity. The new energy combines a log-sum-exp term over the stored patterns with a quadratic term that keeps the state bounded. The resulting network stores exponentially many patterns in the dimension of the associative space, retrieves them with exponentially small error, and typically converges after one update. One-step convergence matters in deep learning, where a layer is usually activated only once per forward pass.

Figure 1: We generalize the energy of binary modern Hopfield networks to continuous states while keeping fast convergence and storage capacity properties.
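To make the energy and update rule concrete, the following is a minimal NumPy sketch rather than the authors' implementation. It assumes the paper's formulation with stored patterns as the columns of X, a state pattern ξ, the update ξ_new = X softmax(β Xᵀ ξ), and the energy E = -lse(β, Xᵀ ξ) + ½ ξᵀ ξ + β⁻¹ log N + ½ M², where M is the largest stored-pattern norm. The function names, dimensions, noise level, and β = 8 are illustrative assumptions.

```python
import numpy as np

def softmax(z):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(z - np.max(z))
    return e / e.sum()

def energy(X, xi, beta):
    """E = -lse(beta, X^T xi) + 0.5 xi^T xi + log(N)/beta + 0.5 M^2."""
    N = X.shape[1]
    M = np.linalg.norm(X, axis=0).max()        # largest stored-pattern norm
    a = beta * (X.T @ xi)
    lse = (a.max() + np.log(np.exp(a - a.max()).sum())) / beta
    return -lse + 0.5 * (xi @ xi) + np.log(N) / beta + 0.5 * M**2

def update(X, xi, beta):
    """One retrieval step: xi_new = X softmax(beta X^T xi)."""
    return X @ softmax(beta * (X.T @ xi))

# Illustrative setup (all values are assumptions, not taken from the paper).
rng = np.random.default_rng(0)
d, N, beta, target = 64, 20, 8.0, 3
X = rng.standard_normal((d, N))
X /= np.linalg.norm(X, axis=0)                 # unit-norm stored patterns (columns)

# Query: a noisy copy of one stored pattern.
xi = X[:, target] + 0.3 * rng.standard_normal(d) / np.sqrt(d)

xi_new = update(X, xi, beta)                   # a single update step
retrieved = int(np.argmax(X.T @ xi_new))       # index of the closest stored pattern

print("retrieved pattern index:", retrieved)
print("energy before:", energy(X, xi, beta))
print("energy after: ", energy(X, xi_new, beta))
```

In this setup a single update moves the noisy query close to the stored pattern it was derived from, and the energy does not increase, which is the behavior the convergence results describe.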

Additionally, the update rule is equivalent to the attention mechanism used in transformers, which links associative memory to attention. This equivalence makes it possible to characterize the operating modes of transformer heads: heads in the first layers tend to perform global averaging over all patterns, while heads in higher layers tend to perform partial averaging via metastable states.
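The correspondence with attention can be checked numerically. The sketch below is an illustration under assumptions, not code from the paper: it treats the rows of K as stored patterns and each row of Q as a state pattern, sets β = 1/√d_k, and compares one Hopfield retrieval step per query against standard scaled dot-product attention. The projection matrices `W_Q`, `W_K`, `W_V` and all sizes are hypothetical. It also shows how β changes the regime: a very small β gives near-uniform weights (global averaging), while a very large β concentrates the weight on a single stored pattern.

```python
import numpy as np

def softmax_rows(A):
    """Row-wise numerically stable softmax."""
    E = np.exp(A - A.max(axis=1, keepdims=True))
    return E / E.sum(axis=1, keepdims=True)

def attention(Q, K, V):
    """Standard scaled dot-product attention."""
    d_k = K.shape[1]
    return softmax_rows(Q @ K.T / np.sqrt(d_k)) @ V

def hopfield_weights(q, K, beta):
    """Softmax weights of one Hopfield update for a single state pattern q."""
    a = beta * (K @ q)
    e = np.exp(a - a.max())
    return e / e.sum()

# Hypothetical sizes and random projections, for illustration only.
rng = np.random.default_rng(1)
S, N, d_y, d_k, d_v = 4, 6, 10, 8, 5
R = rng.standard_normal((S, d_y))              # raw state (query) patterns
Y = rng.standard_normal((N, d_y))              # raw stored (key) patterns
W_Q = rng.standard_normal((d_y, d_k))
W_K = rng.standard_normal((d_y, d_k))
W_V = rng.standard_normal((d_y, d_v))
Q, K, V = R @ W_Q, Y @ W_K, Y @ W_V

beta = 1.0 / np.sqrt(d_k)
Z_hopfield = np.stack([hopfield_weights(q, K, beta) @ V for q in Q])
Z_attention = attention(Q, K, V)
print("same result:", np.allclose(Z_hopfield, Z_attention))

# Regimes controlled by beta: near-uniform versus near one-hot weights.
p_low = hopfield_weights(Q[0], K, 1e-4)
p_high = hopfield_weights(Q[0], K, 1e3)
print("small beta weights:", np.round(p_low, 3))
print("large beta weights:", np.round(p_high, 3))
```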

Architectural Integration

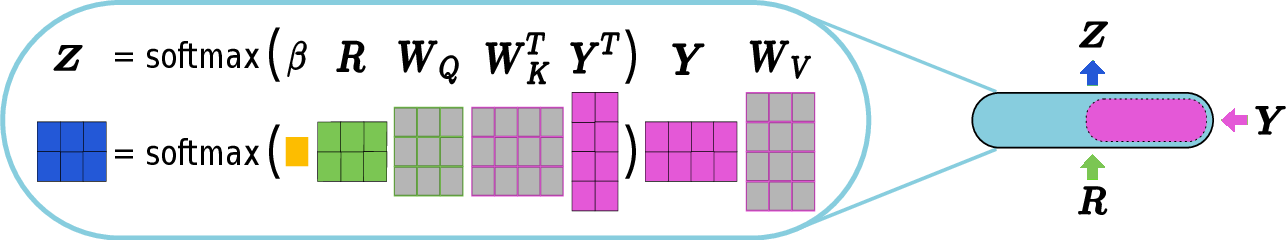

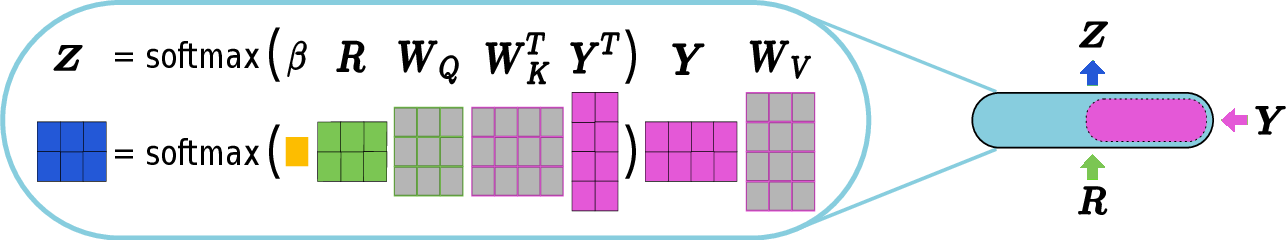

Embedding Hopfield networks into deep neural architectures gives those architectures memory-access and prototype-learning capabilities. The paper introduces three layers, "Hopfield", "HopfieldPooling", and "HopfieldLayer", which cover functions ranging from pooling to association. These layers can be used for tasks such as multiple instance learning, sequence analysis, and permutation-invariant learning.

Figure 2: The layer "Hopfield" allows the association of two sets R and Y, facilitating complex associative memory operations.
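As a rough illustration of the pooling use case, the sketch below mimics the idea behind "HopfieldPooling": a single state (query) pattern, which would be a learned parameter in a real model, attends over a set of inputs and returns one fixed-size vector. This is a hand-written NumPy approximation under assumptions, not the authors' layer or its API. The function `hopfield_pooling`, the random stand-ins for learned weights, and all sizes are hypothetical.

```python
import numpy as np

def hopfield_pooling(Y, q, W_K, W_V, beta):
    """Pool a set Y (one instance per row) into a single vector.

    q is a static query pattern; W_K and W_V project the instances to
    stored (key) patterns and to the values that get averaged.
    """
    K = Y @ W_K
    V = Y @ W_V
    a = beta * (K @ q)
    p = np.exp(a - a.max())
    p /= p.sum()                                # softmax weights over instances
    return p @ V

# Hypothetical sizes; random values stand in for learned parameters.
rng = np.random.default_rng(2)
N, d_y, d_k, d_v = 7, 12, 8, 4
Y = rng.standard_normal((N, d_y))               # a bag of N instances
q = rng.standard_normal(d_k)
W_K = rng.standard_normal((d_y, d_k))
W_V = rng.standard_normal((d_y, d_v))
beta = 1.0 / np.sqrt(d_k)

pooled = hopfield_pooling(Y, q, W_K, W_V, beta)

# Reordering the instances leaves the pooled vector unchanged.
perm = rng.permutation(N)
pooled_perm = hopfield_pooling(Y[perm], q, W_K, W_V, beta)
print("permutation invariant:", np.allclose(pooled, pooled_perm))
```

Because the softmax weights depend only on each instance and the query, the output does not depend on the order of the instances, which is why this kind of pooling suits multiple instance learning and other set-valued inputs.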

Experimental Evidence

The empirical validation spans several domains. Hopfield layers improve on the state of the art for three of the four multiple instance learning problems considered, as well as for immune repertoire classification, which involves several hundred thousand instances. They also perform strongly on the UCI collections of small classification tasks, where deep learning methods typically struggle, and on two drug design datasets.

Future Implications and Conclusion

The approach has implications for both theory and practice. On the theoretical side, it gives an associative-memory interpretation of attention that can be used to analyze what transformer heads are doing. On the practical side, Hopfield layers can be added to existing architectures wherever pooling, memory, or association is needed, and further work may clarify how well they scale across domains such as vision and language processing.

In summary, "Hopfield Networks is All You Need" connects associative memory with flexible, scalable deep learning models. It points toward further work on memory-centric networks that combine exponential storage capacity, fast retrieval, and broad applicability across AI tasks.