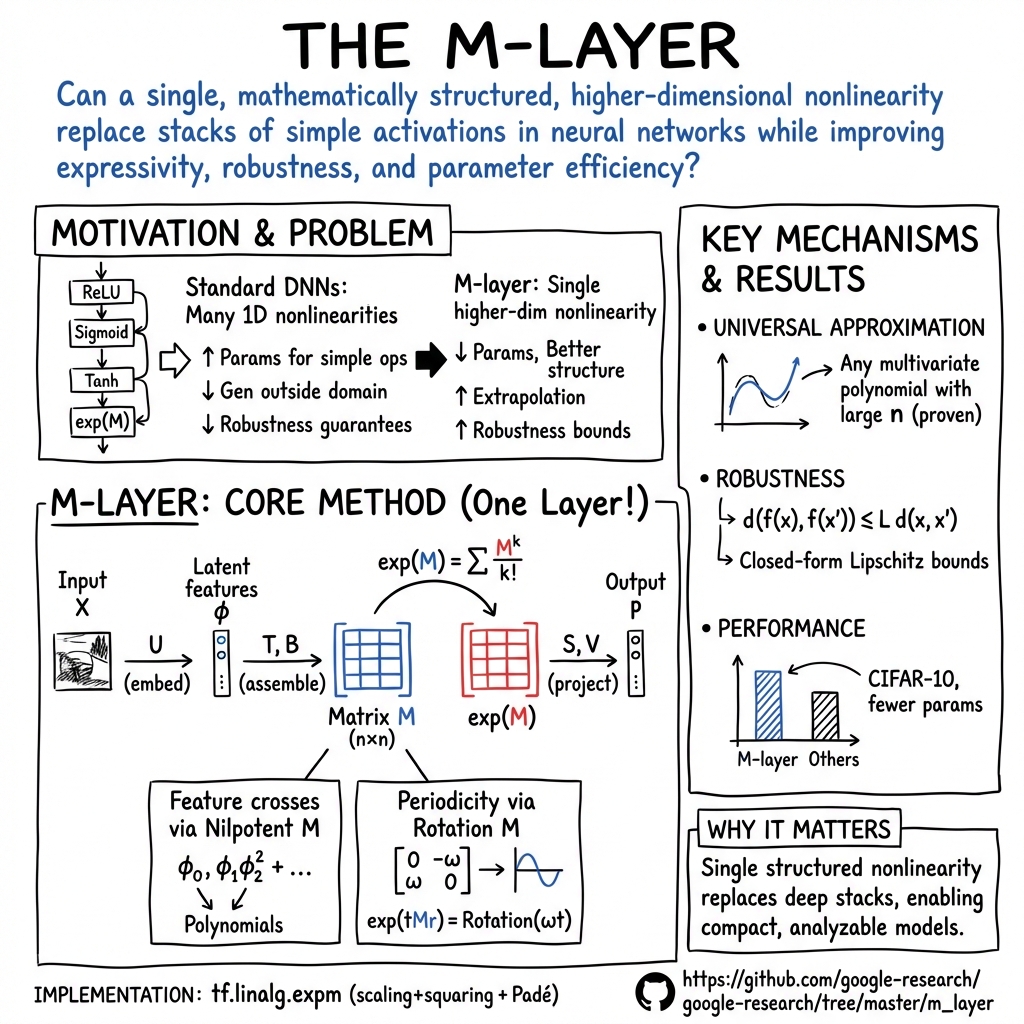

Intelligent Matrix Exponentiation: A New Framework for High-Dimensional Nonlinearity in Neural Networks

Overview and Motivation

The paper "Intelligent Matrix Exponentiation" [2008.03936] introduces a supervised learning architecture centered on a single matrix exponential, in which the exponentiated matrix is an affine function of the input features. In contrast to conventional deep neural networks, which repeatedly compose one-dimensional nonlinearities (e.g., ReLU, tanh), the proposed M-layer uses a single high-dimensional, input-dependent matrix nonlinearity. This approach yields universal approximation capabilities, represents feature crosses efficiently, and provides tractable robustness guarantees via explicit Lipschitz analysis.

Traditional DNNs require considerable depth and many parameters to represent complex feature interactions or periodicities, and they extrapolate poorly due to compositional constraints. By placing a multidimensional nonlinearity directly at the level of matrix exponentiation, the M-layer addresses these limitations and can encode multivariate polynomials and periodic functions in parameter-efficient, analytically tractable ways.

Mathematical Construction and Architecture

The core of the M-layer architecture is a parametric square matrix $M$ constructed as an affine function of the inputs:

$$
M_{jk} = \tilde{B}_{jk} + \tilde{T}_{ajk} \tilde{U}_{ayxc} X_{yxc}
$$

where $\tilde{U}$, $\tilde{T}$, and $\tilde{B}$ are trainable tensors, repeated indices are summed over, and $X$ is the input (e.g., an image tensor indexed by $(y, x, c)$ for row, column, and channel). $\tilde{U}$ projects the input into a $d$-dimensional latent space (index $a$); $\tilde{T}$ maps these latent features into an $n \times n$ matrix; $\tilde{B}$ adds a bias. The nonlinearity is introduced via the matrix exponential:

$$
\exp(M) = \sum_{k=0}^{\infty} \frac{1}{k!} M^k
$$

The final outputs are linear contractions of the matrix exponential via another trainable tensor $\tilde{S}$ and bias $\tilde{V}$:

$$
p_m = \tilde{V}_m + \tilde{S}_{mjk} \exp(M)_{jk}
$$

Training proceeds via standard backpropagation through the matrix exponential, which can be computed efficiently using scaling and squaring combined with a Padé approximation; inference requires only the forward evaluation.
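The forward pass defined by the three equations above can be sketched in a few lines. This is a minimal, dependency-free illustration rather than the paper's implementation: the function names (`matmul`, `expm`, `m_layer_forward`) are hypothetical, the input is assumed to be flattened into a single vector, and the exponential uses scaling and squaring with a truncated Taylor series as a simpler stand-in for the Padé approximant.

```python
import math

def matmul(a, b):
    """Product of two square matrices stored as nested lists."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def expm(m, terms=18):
    """Matrix exponential via scaling and squaring.

    Assumption: a truncated Taylor series replaces the Pade approximant.
    """
    n = len(m)
    norm = max(sum(abs(v) for v in row) for row in m)  # infinity norm
    # Scale so the norm is at most 0.5, where the series converges fast.
    squarings = max(0, math.ceil(math.log2(norm)) + 1) if norm > 0 else 0
    scaled = [[v / 2.0 ** squarings for v in row] for row in m]
    result = [[float(i == j) for j in range(n)] for i in range(n)]
    term = [row[:] for row in result]
    for k in range(1, terms + 1):
        term = [[v / k for v in row] for row in matmul(term, scaled)]
        result = [[result[i][j] + term[i][j] for j in range(n)]
                  for i in range(n)]
    for _ in range(squarings):  # undo the scaling: exp(A) = exp(A / 2)^2
        result = matmul(result, result)
    return result

def m_layer_forward(x, U, T, B, S, V):
    """p_m = V_m + S_mjk exp(M)_jk with M_jk = B_jk + T_ajk (U x)_a.

    Shapes: x (inputs,), U (d, inputs), T (d, n, n), B (n, n),
    S (h, n, n), V (h,).
    """
    n = len(B)
    latent = [sum(u * xi for u, xi in zip(row, x)) for row in U]
    M = [[B[j][k] + sum(T[a][j][k] * latent[a] for a in range(len(latent)))
          for k in range(n)] for j in range(n)]
    E = expm(M)
    return [V[o] + sum(S[o][j][k] * E[j][k]
                       for j in range(n) for k in range(n))
            for o in range(len(V))]

# Hypothetical toy weights: two inputs placed on the superdiagonal of a
# 3x3 matrix, with a readout that selects the top-right entry of exp(M).
U = [[1.0, 0.0], [0.0, 1.0]]
T = [[[0.0, 1.0, 0.0], [0.0, 0.0, 0.0], [0.0, 0.0, 0.0]],
     [[0.0, 0.0, 0.0], [0.0, 0.0, 1.0], [0.0, 0.0, 0.0]]]
B = [[0.0] * 3 for _ in range(3)]
S = [[[0.0, 0.0, 1.0], [0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]]
V = [0.0]
print(m_layer_forward([2.0, 3.0], U, T, B, S, V))
```

With these toy weights the single output equals half the product of the two inputs, which shows how one exponential can produce a feature cross without any hidden layers.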

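Key parameter scaling: The number of learnable parameters is $dn^2 + n^2 + n^2 h + h$, where $h$ is the output dimensionality. The four terms count the entries of $\tilde{T}$, $\tilde{B}$, $\tilde{S}$, and $\tilde{V}$, respectively. In many cases this is significantly fewer parameters than an equivalently expressive DNN.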
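To get a feel for how this count grows, the formula can be evaluated directly. The helper below is a hypothetical convenience function, and the sizes in the example are arbitrary illustrative values, not settings reported in the paper.

```python
def m_layer_param_count(d, n, h):
    """Evaluate d*n^2 + n^2 + n^2*h + h for latent size d, matrix size n,
    and output size h, exactly as the formula is stated in the text."""
    return d * n * n + n * n + n * n * h + h

# Arbitrary example sizes: 4000 + 400 + 2000 + 5
print(m_layer_param_count(d=10, n=20, h=5))  # 6405
```

The count is quadratic in the matrix size $n$ and only linear in $d$ and $h$, so $n$ is the main lever on model size.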

Universal Approximation and Feature Crosses

A fundamental property of the M-layer is the capacity to represent arbitrary multivariate polynomials in the input features, given sufficient matrix size $n$. The construction harnesses the combinatorics of nilpotent matrices to encode products of features along specific upper-diagonal entries, ensuring that any monomial can be extracted as a component of the matrix exponential.

Polynomial Computation: For any set of polynomials, an appropriate choice of $\tilde{T}$ and $\tilde{U}$ places all desired monomials in entries of $\exp(M)$, from which the output contraction can read them off. The architecture thus subsumes polynomial kernel classifiers, without the restriction to a uniform kernel degree.
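As a small worked instance of the nilpotent construction, consider two features $x$ and $y$ and a $3 \times 3$ matrix with the features on the first superdiagonal. This particular matrix is chosen here for illustration and is not taken from the paper. Because $M^3 = 0$, the exponential series terminates after three terms:

$$
M = \begin{pmatrix} 0 & x & 0 \\ 0 & 0 & y \\ 0 & 0 & 0 \end{pmatrix}, \qquad
\exp(M) = I + M + \tfrac{1}{2} M^2 = \begin{pmatrix} 1 & x & \tfrac{1}{2} xy \\ 0 & 1 & y \\ 0 & 0 & 1 \end{pmatrix}
$$

The top-right entry carries the feature cross $xy$ up to a constant factor that the readout $\tilde{S}$ can absorb. A longer chain of $k$ features on the superdiagonal yields their degree-$k$ product, scaled by $1/k!$, in the same way.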

Periodicity: For periodic or oscillatory structure, the use of anti-symmetric matrix blocks (e.g., $M = \left[\begin{smallmatrix} 0 & -\omega \\ \omega & 0 \end{smallmatrix}\right]$) guarantees the presence of $\sin$ and $\cos$ terms in $\exp(M)$, enabling precise modeling (and extrapolation) of periodic input dependencies.
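The appearance of $\sin$ and $\cos$ follows directly from the power series. Writing the block as $\omega J$, where $J = \left[\begin{smallmatrix} 0 & -1 \\ 1 & 0 \end{smallmatrix}\right]$ is notation introduced here for convenience, and using $J^2 = -I$, the even and odd powers separate:

$$
\exp(\omega J) = \sum_{k=0}^{\infty} \frac{\omega^k}{k!} J^k
= \Big(1 - \frac{\omega^2}{2!} + \cdots\Big) I + \Big(\omega - \frac{\omega^3}{3!} + \cdots\Big) J
= \begin{pmatrix} \cos\omega & -\sin\omega \\ \sin\omega & \cos\omega \end{pmatrix}
$$

If $\omega$ is an affine function of an input feature, as the definition of $M$ allows, the entries of $\exp(M)$ are exactly periodic in that feature, which is what permits extrapolation beyond the training range.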

Interpretational Links: The M-layer admits a Lie algebra/group interpretation, as $\exp(M)$ maps a learned Lie algebra element (defined by $\tilde{T}$ and $\tilde{U}$ as a function of the input) onto the corresponding group element, with implications for the study of symmetries and invariant representations.

Analytical Robustness Guarantees

The single-layer, explicit analytical structure allows for closed