- The paper presents methods for detecting spatial patterns with SNNs, based on ANN-to-SNN conversion and neural sampling.

- It uses a spike response model with stochastic threshold noise to perform energy-efficient Bayesian inference.

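- Experiments show that the image classification accuracy of converted SNNs improves with the number of inference time-steps, and that neural sampling converges toward the target distribution, as measured by a decreasing KL divergence.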

Spiking Neural Networks: Detecting Spatial Patterns

Spiking Neural Networks (SNNs) are a biologically inspired approach to machine learning, built on dynamic neuron models that process information as sparse, event-driven spike signals. This paper, part one of a three-part series, covers SNN models, algorithms, and applications, focusing on the detection and generation of spatial patterns embedded in rate-encoded spiking signals. It emphasizes two methods: converting conventional Artificial Neural Networks (ANNs) into SNNs, and neural sampling for Bayesian inference.

Models for Detecting Spatial Patterns

Artificial Neural Networks

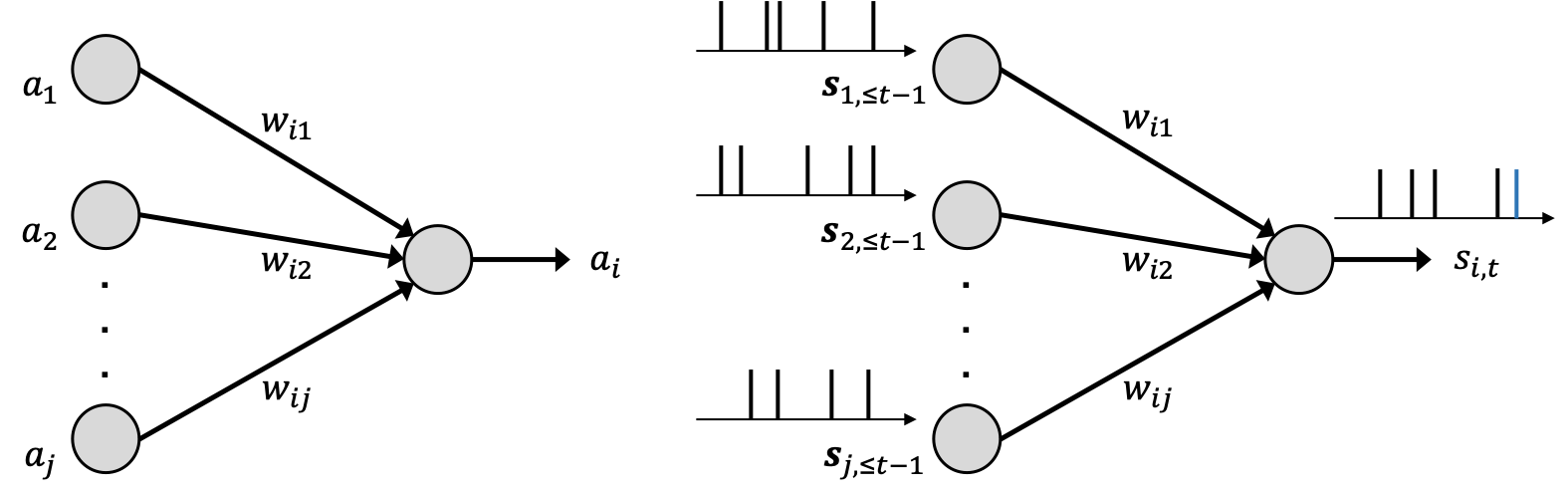

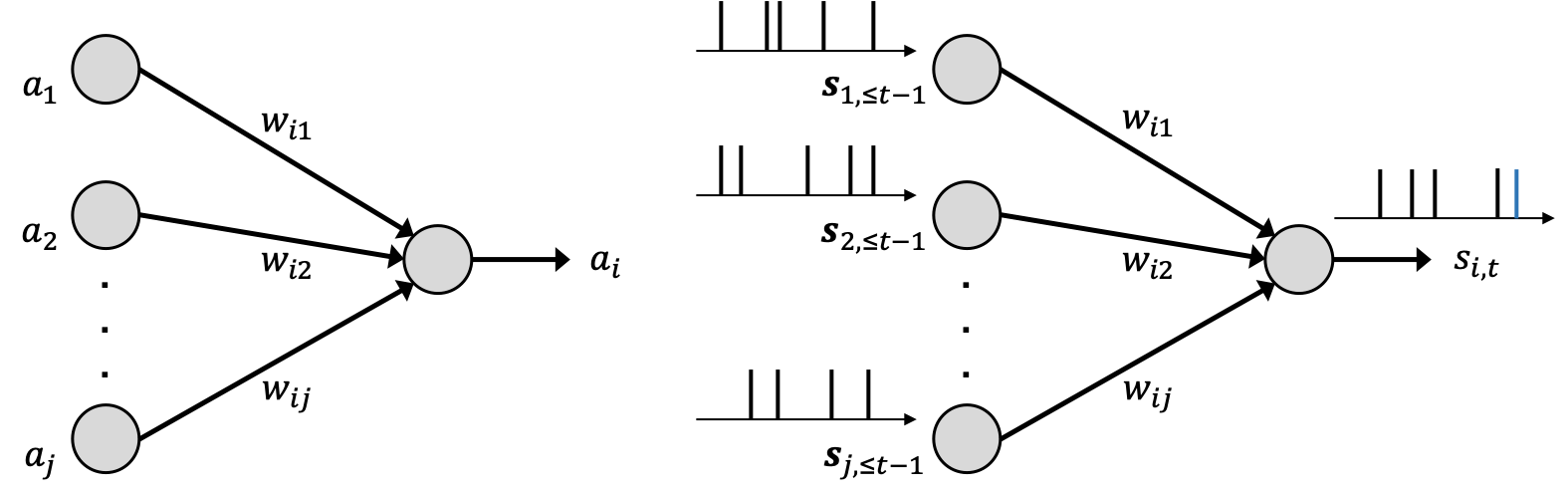

In conventional ANNs, information is encoded as real-valued activations that pass through layers of interconnected neurons, each applying a static non-linearity (Figure 1). Each neuron computes a pre-activation as the sum of its inputs weighted by the synaptic connections, then applies an activation function. Non-negative activation functions such as the rectifier used in ReLUs are common, and they are well suited to emulation by spiking neurons because a non-negative activation can be matched to a spike rate.

Figure 1: Illustration of neural networks: (left) an ANN, where each neuron processes real numbers to output a real number as a static non-linearity; and (right) an SNN, where each neuron transmits binary spiking signals.
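
The weighted sum and rectifier described above can be sketched in a few lines of Python. This is only an illustration: the function name `relu_layer`, the bias term, and the example values are assumptions introduced here, not details taken from the paper.

```python
def relu_layer(inputs, weights, biases):
    """Evaluate one fully connected ReLU layer.

    inputs:  list of real-valued activations from the previous layer
    weights: one row of synaptic weights per output neuron
    biases:  one bias per output neuron (an assumption of this sketch)
    """
    outputs = []
    for w_row, b in zip(weights, biases):
        # Pre-activation: inputs weighted by the synaptic connections.
        pre_activation = sum(w * x for w, x in zip(w_row, inputs)) + b
        # Rectifier: a static, non-negative non-linearity.
        outputs.append(max(0.0, pre_activation))
    return outputs


print(relu_layer([1.0, 2.0], [[0.5, -1.0], [1.0, 1.0]], [0.0, -1.0]))  # -> [0.0, 2.0]
```

The output depends only on the current input, which is the sense in which an ANN neuron is "static".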

Spike Response Model for SNNs

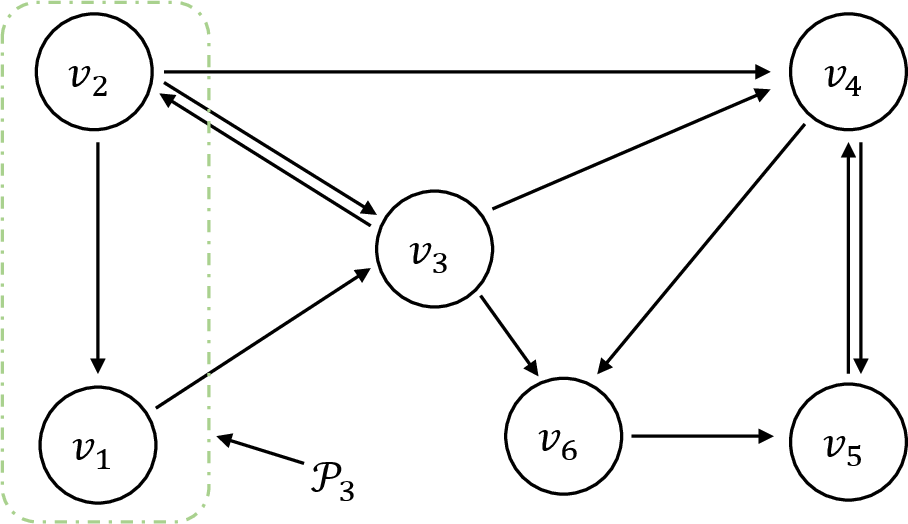

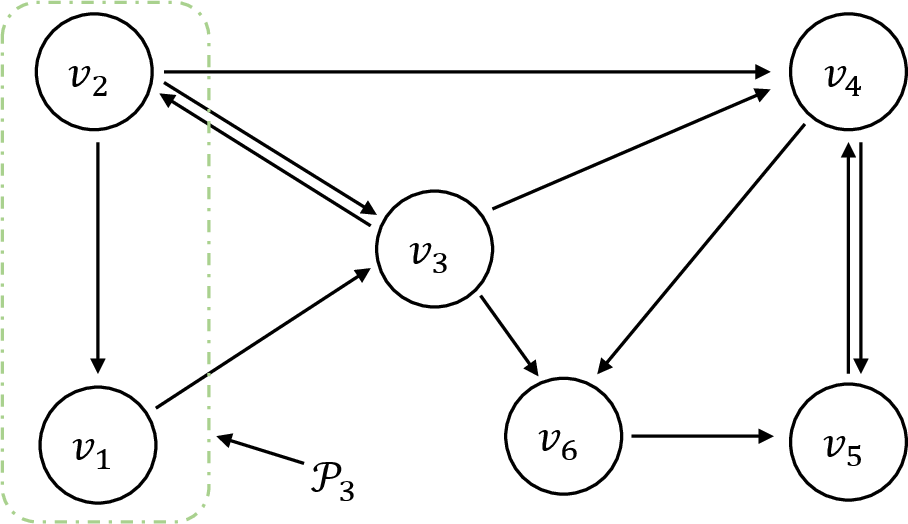

SNNs are dynamic systems in which neurons exchange binary spiking signals over time. Each neuron maintains a membrane potential and emits a spike when that potential exceeds a threshold. The Spike Response Model (SRM) formalizes this behavior: the membrane potential is a causal function of the incoming synaptic signals and of the neuron's own past output (feedback), and together these determine the neuron's firing pattern (Figure 2).

Figure 2: Architecture of an SNN with six neurons, demonstrating synaptic link dynamics and recurrent behavior.
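
To make the SRM description more concrete, here is a minimal discrete-time sketch of a single neuron. The exponential filters, the time constants `tau_syn` and `tau_fb`, and the negative feedback weight are assumptions chosen for illustration; the paper's exact kernels may differ.

```python
import math


def simulate_srm_neuron(input_spikes, weights, threshold=1.0,
                        tau_syn=4.0, tau_fb=2.0, fb_weight=-1.0):
    """Simulate one deterministic SRM-style neuron in discrete time.

    input_spikes: list of time-steps, each a list of 0/1 values (one per synapse)
    weights:      one synaptic weight per input
    Returns a list of 0/1 output spikes, one per time-step.
    """
    decay_syn = math.exp(-1.0 / tau_syn)
    decay_fb = math.exp(-1.0 / tau_fb)
    syn_trace = [0.0] * len(weights)  # filtered presynaptic spikes
    fb_trace = 0.0                    # filtered past output spikes
    output = []
    for spikes_t in input_spikes:
        # Causal filtering: traces depend only on current and past inputs.
        syn_trace = [decay_syn * tr + s for tr, s in zip(syn_trace, spikes_t)]
        # Membrane potential: synaptic contribution plus feedback from past outputs.
        potential = sum(w * tr for w, tr in zip(weights, syn_trace))
        potential += fb_weight * fb_trace
        spike = 1 if potential >= threshold else 0
        output.append(spike)
        # The neuron's own spike feeds back into its future potential.
        fb_trace = decay_fb * fb_trace + spike
    return output


inputs = [[1, 1]] * 10  # both synapses receive a spike at every time-step
print(simulate_srm_neuron(inputs, [0.6, 0.6], fb_weight=-5.0))
```

With a strongly negative `fb_weight`, each output spike suppresses the potential for a few steps, so the neuron fires less often than it would without feedback.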

Stochastic Threshold Noise in SNNs

The paper introduces a probabilistic variant of the SRM in which noise on the firing threshold makes spiking stochastic, enabling Bayesian inference through neural sampling. A simplified model of the membrane potential dynamics imposes a fixed-duration refractory period after each spike, which limits spike emissions and thereby supports energy-efficient computation.
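
The following sketch illustrates one way such a stochastic neuron could behave. It assumes that the threshold noise yields a sigmoid firing probability in the membrane potential, which is a common modeling choice but not one confirmed by this summary; `noise_scale`, `refractory_steps`, and the function name are hypothetical.

```python
import math
import random


def stochastic_spikes(potentials, threshold=0.0, noise_scale=1.0,
                      refractory_steps=3, seed=0):
    """Generate spikes from a sequence of membrane potentials.

    A spike is emitted with probability sigmoid((u - threshold) / noise_scale).
    After each spike the neuron stays silent for `refractory_steps` time-steps.
    """
    rng = random.Random(seed)
    remaining = 0  # time-steps left in the current refractory period
    spikes = []
    for u in potentials:
        if remaining > 0:
            # Refractory: no spike can be emitted, whatever the potential.
            remaining -= 1
            spikes.append(0)
            continue
        p_fire = 1.0 / (1.0 + math.exp(-(u - threshold) / noise_scale))
        if rng.random() < p_fire:
            spikes.append(1)
            remaining = refractory_steps
        else:
            spikes.append(0)
    return spikes


print(stochastic_spikes([0.5] * 20))
```

The refractory period caps the firing rate at one spike every `refractory_steps + 1` time-steps, which is how the mechanism bounds the number of spikes the neuron can emit.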

Algorithms for Detecting Spatial Patterns

ANN-to-SNN Conversion

ANN-to-SNN conversion lets an SNN mimic a pre-trained ANN by matching each neuron's activation to a spike rate. Rate encoding is what allows the pre-trained ANN weights to be transferred to the SNN, and the approximation becomes more accurate the longer the SNN runs. As an alternative, information can be encoded in the timing of the first spike, which is more efficient and relies on adaptive threshold mechanisms to preserve performance.
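
A minimal sketch of the rate-matching idea: an integrate-and-fire neuron driven by a constant input equal to the ANN pre-activation fires at a rate that approaches the ReLU output as the number of time-steps grows. The constant input, the reset-by-subtraction rule, and the unit threshold are simplifying assumptions of this sketch, not details confirmed by the paper.

```python
def relu(z):
    """ANN activation that the spiking neuron should approximate."""
    return max(0.0, z)


def if_neuron_rate(pre_activation, num_steps, threshold=1.0):
    """Spike rate of an integrate-and-fire neuron with constant input.

    The neuron emits at most one spike per time-step, so the rate
    saturates at 1 for pre-activations above the threshold.
    """
    potential = 0.0
    spike_count = 0
    for _ in range(num_steps):
        potential += pre_activation      # integrate the input
        if potential >= threshold:
            potential -= threshold       # reset by subtraction
            spike_count += 1
    return spike_count / num_steps


z = 0.37
for num_steps in (10, 100, 1000):
    rate = if_neuron_rate(z, num_steps)
    print(num_steps, rate, abs(rate - relu(z)))
```

For pre-activations between 0 and the threshold, the gap between the spike rate and the ReLU output shrinks roughly as one over the number of time-steps, which is why longer inference windows give a closer approximation. Negative pre-activations never reach the threshold and produce a rate of zero, matching the rectifier.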

Neural Sampling

Neural sampling uses SNNs to draw samples from Boltzmann distributions, a key operation in Bayesian inference. The stochastic spiking dynamics are modeled as the steps of a Markov chain, which shows how an SNN can approximate a target distribution and potentially serve as an alternative to classical Markov chain Monte Carlo (MCMC) methods.
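
The Markov chain view can be illustrated with a plain Gibbs sampler over binary units, used here as a stand-in for the spiking dynamics rather than as the paper's algorithm. Each unit switches on with a sigmoid probability of a "membrane potential" computed from the other units' states. The parameterization of the Boltzmann distribution, the symmetric weight matrix, and all names below are assumptions of this sketch.

```python
import math
import random
from itertools import product


def boltzmann_probs(weights, biases):
    """Exact Boltzmann probabilities over all binary states (small n only)."""
    n = len(biases)
    states = list(product([0, 1], repeat=n))
    scores = []
    for z in states:
        log_weight = sum(biases[i] * z[i] for i in range(n))
        log_weight += sum(weights[i][j] * z[i] * z[j]
                          for i in range(n) for j in range(i + 1, n))
        scores.append(math.exp(log_weight))
    total = sum(scores)
    return {z: s / total for z, s in zip(states, scores)}


def gibbs_sample(weights, biases, num_steps, seed=0):
    """Empirical state frequencies from a Gibbs sampler (one sweep per step)."""
    rng = random.Random(seed)
    n = len(biases)
    z = [0] * n
    counts = {}
    for _ in range(num_steps):
        for k in range(n):
            # "Membrane potential" of unit k given the other units' states.
            u = biases[k] + sum(weights[k][j] * z[j] for j in range(n) if j != k)
            # Stochastic firing: unit k is active with probability sigmoid(u).
            z[k] = 1 if rng.random() < 1.0 / (1.0 + math.exp(-u)) else 0
        key = tuple(z)
        counts[key] = counts.get(key, 0) + 1
    return {s: c / num_steps for s, c in counts.items()}


W = [[0.0, 0.5, -0.5], [0.5, 0.0, 0.3], [-0.5, 0.3, 0.0]]  # symmetric, zero diagonal
b = [0.2, -0.1, 0.0]
exact = boltzmann_probs(W, b)
empirical = gibbs_sample(W, b, num_steps=20000)
for state, p in exact.items():
    print(state, round(p, 3), round(empirical.get(state, 0.0), 3))
```

The exact enumeration is only feasible for a handful of units; it is included so the sampled frequencies can be compared against the target distribution.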

Applications

Image Classification

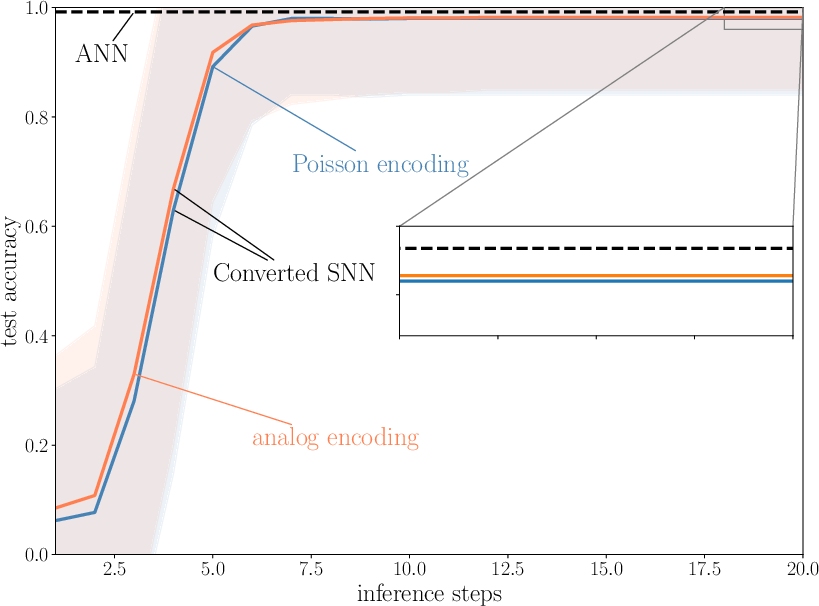

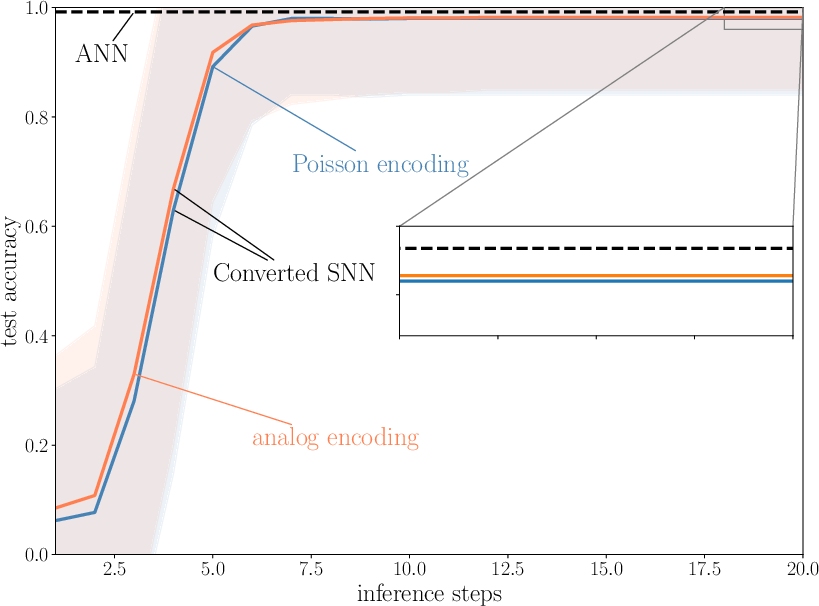

Image classification illustrates spatial pattern detection with SNNs obtained from ANNs through rate-encoded conversion (Figure 3). On datasets such as MNIST, test accuracy improves as the number of inference time-steps increases, so accuracy can be traded against the time and energy spent on inference.

Figure 3: Test accuracy as a function of the number of inference time-steps. Shaded areas represent the standard deviation over the test dataset.

Neural Sampling Validation

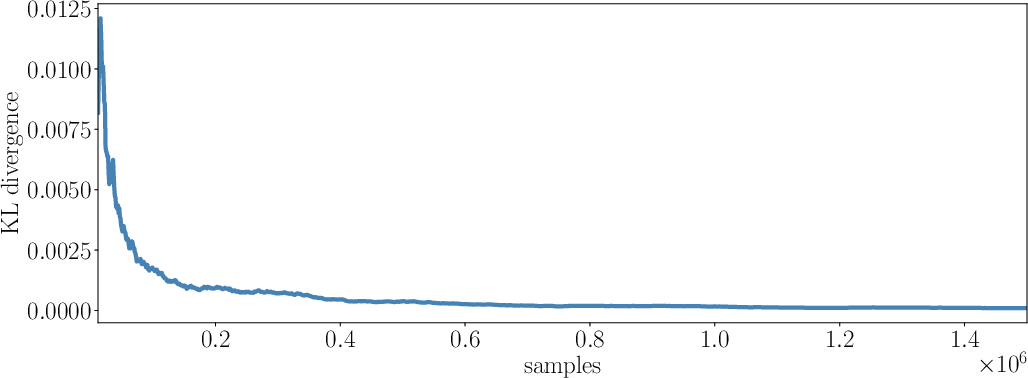

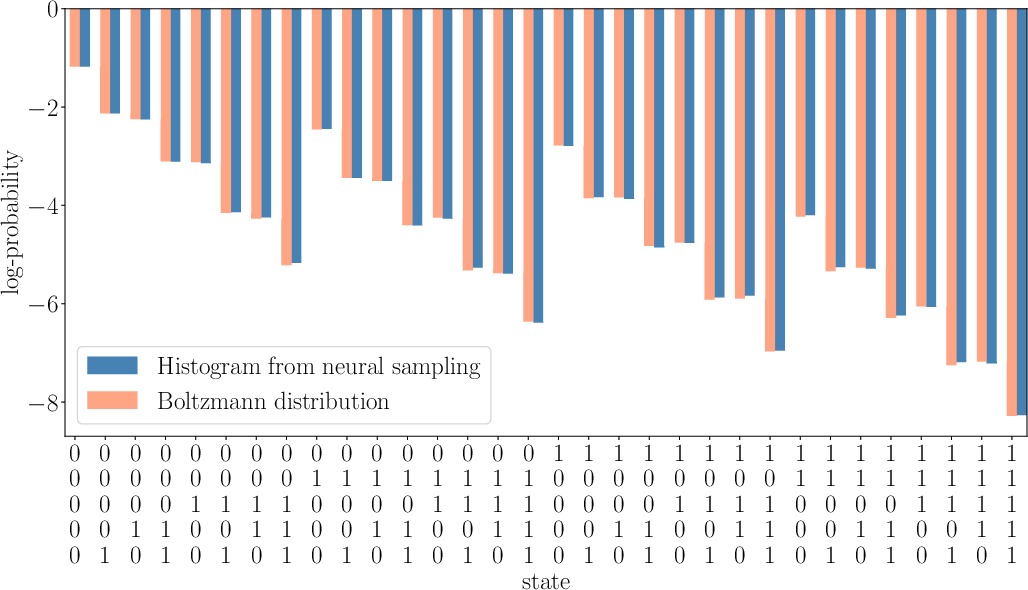

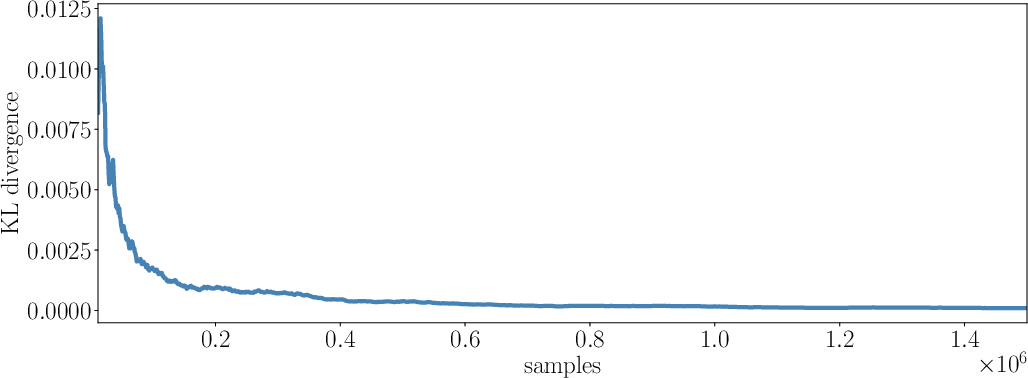

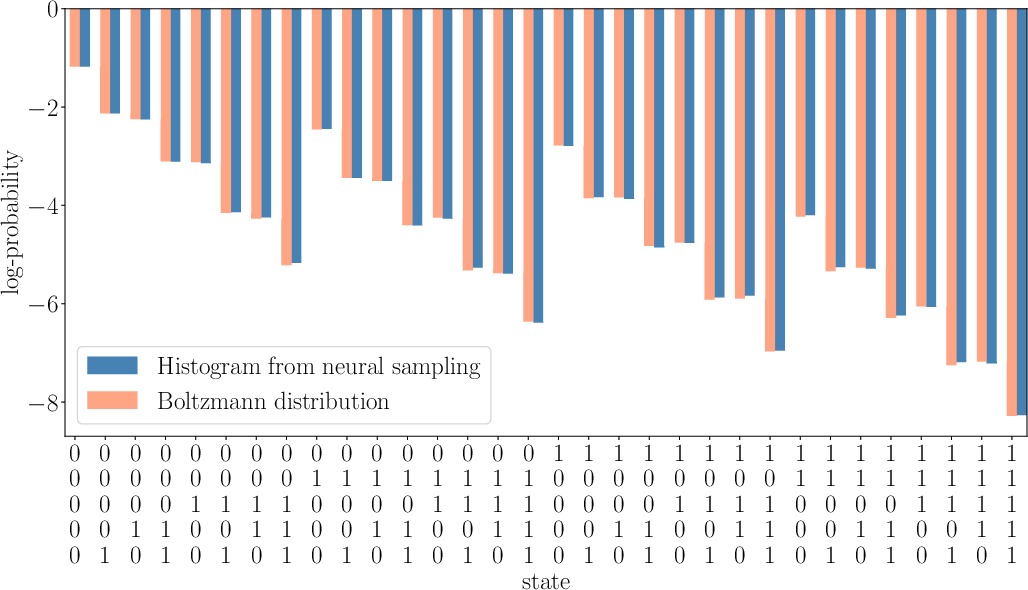

The paper validates neural sampling by simulating SNNs with fixed-duration refractory periods. The KL divergence between the empirical distribution of the sampled states and the target Boltzmann distribution decreases over time, and histogram comparisons confirm the match (Figure 4). This supports the use of SNNs in applications that require sampling from probabilistic models.

Figure 4: Illustration of neural sampling capabilities across time steps, visualizing divergence reduction between empirical and theoretical distributions.
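
The kind of check summarized in Figure 4 can be sketched as follows. The direction of the divergence (empirical relative to target), the four-state target distribution, and the use of independent random draws in place of samples produced by an SNN are all assumptions made for illustration.

```python
import math
import random


def kl_divergence(empirical, target):
    """KL(empirical || target) over discrete states; target must be positive."""
    return sum(p * math.log(p / target[s])
               for s, p in empirical.items() if p > 0.0)


def empirical_distribution(samples):
    """Relative frequency of each observed state."""
    counts = {}
    for s in samples:
        counts[s] = counts.get(s, 0) + 1
    return {s: c / len(samples) for s, c in counts.items()}


# Hypothetical target distribution over four joint states of two neurons.
target = {"00": 0.4, "01": 0.3, "10": 0.2, "11": 0.1}
rng = random.Random(0)
states = list(target)
# Stand-in for samples produced by the network over time.
samples = rng.choices(states, weights=[target[s] for s in states], k=50000)

for n in (50, 500, 5000, 50000):
    print(n, kl_divergence(empirical_distribution(samples[:n]), target))
```

As more samples are accumulated, the empirical histogram approaches the target and the divergence tends toward zero, which is the trend the validation experiment looks for.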

Conclusion

This first paper in the series describes how SNNs detect spatial patterns, highlighting two algorithmic strategies: ANN-to-SNN conversion and neural sampling. It sets the stage for the subsequent parts, which focus on the timing of spiking signals and on further neuromorphic applications. Further research is expected to explore more advanced SNN capabilities and their implications for AI systems.