- The paper introduces a novel framework combining neural SDEs with infinite-dimensional GANs and demonstrates its effectiveness in modeling temporal dynamics.

- It pairs a neural SDE generator with a neural CDE discriminator, both solved numerically, to learn distributions over the paths of stochastic systems.

- Empirical validation on four diverse datasets shows improved classification, prediction, and MMD metrics, along with greater modeling flexibility than traditional approaches.

Neural SDEs as Infinite-Dimensional GANs

Introduction

Combining Stochastic Differential Equations (SDEs) with neural networks gives a way to model temporal dynamics under uncertainty, a task central to fields such as financial modeling and population dynamics. This paper presents a framework in which Neural SDEs are viewed as infinite-dimensional Generative Adversarial Networks (GANs). From this perspective, classical SDE fitting lines up with the more flexible GAN framework, and model capacity increases without relying on prespecified statistics or density functions.

Neural SDEs and GANs Framework

In the proposed framework, sampling paths from a neural SDE plays the role of the generator in a GAN: Brownian motion is the input noise, and the resulting sample paths are the generator output. The discriminator is parameterized as a Neural Controlled Differential Equation (CDE), so both halves operate in continuous time and together define a continuous-time generative model for time series. The key contribution is that no fixed payoff functions need to be chosen in advance: training uses a Wasserstein GAN loss with a unique global optimum, so any SDE can be learned given sufficient data.
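The generator, discriminator, and objective described above can be written compactly as follows. This is a sketch rather than a quotation of the paper's equations: the symbols ζθ, ξϕ, μθ, and σθ match the components named in this summary, while V (initial noise), the readout parameters α, β, and m, the discriminator vector fields f and g, and the sign convention of the objective are assumptions made for illustration.

```latex
% Generator: a neural SDE driven by Brownian motion W, started from noise V
X_0 = \zeta_\theta(V), \qquad
\mathrm{d}X_t = \mu_\theta(t, X_t)\,\mathrm{d}t + \sigma_\theta(t, X_t) \circ \mathrm{d}W_t, \qquad
Y_t = \alpha_\theta X_t + \beta_\theta

% Discriminator: a neural CDE driven by a path Y (generated or real)
H_0 = \xi_\phi(Y_0), \qquad
\mathrm{d}H_t = f_\phi(t, H_t)\,\mathrm{d}t + g_\phi(t, H_t) \circ \mathrm{d}Y_t, \qquad
D_\phi(Y) = m_\phi \cdot H_T

% Wasserstein GAN objective (one common sign convention)
\min_\theta \max_\phi \;
\mathbb{E}_{V, W}\big[ D_\phi(Y_\theta(V, W)) \big]
- \mathbb{E}_{z \sim \text{data}}\big[ D_\phi(z) \big]
```

For the Wasserstein interpretation to hold, the discriminator has to be constrained to be Lipschitz in its input path, as in any Wasserstein GAN.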

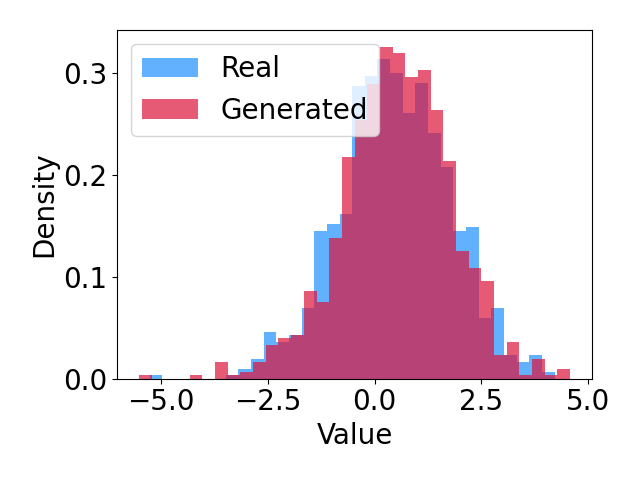

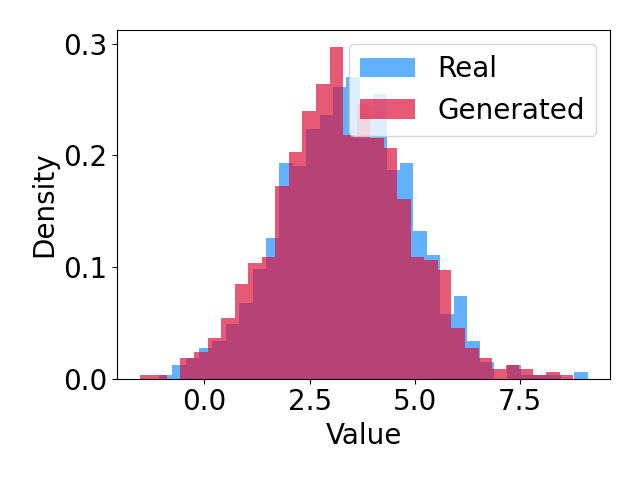

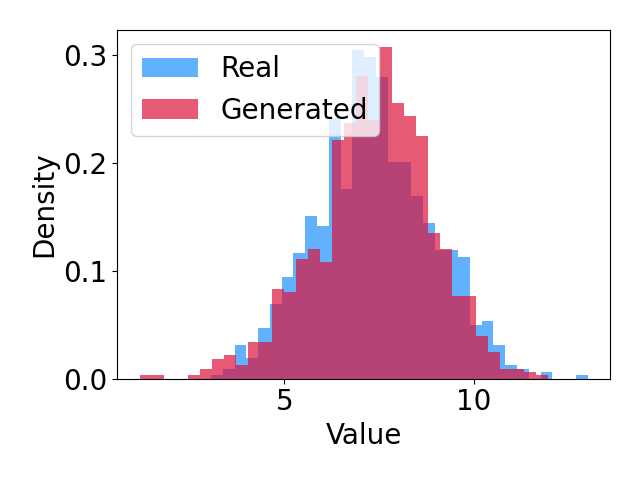

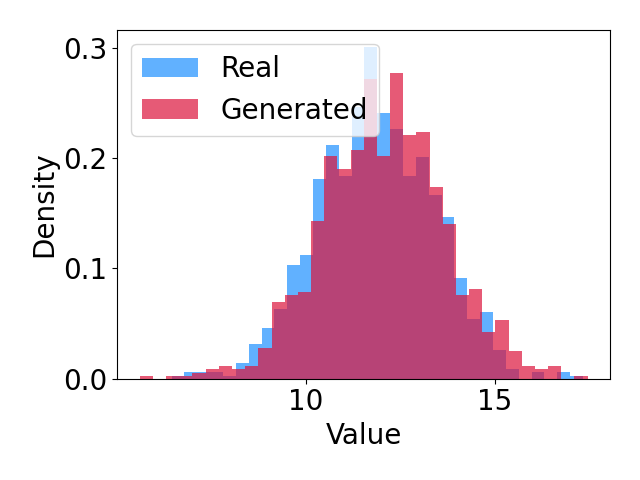

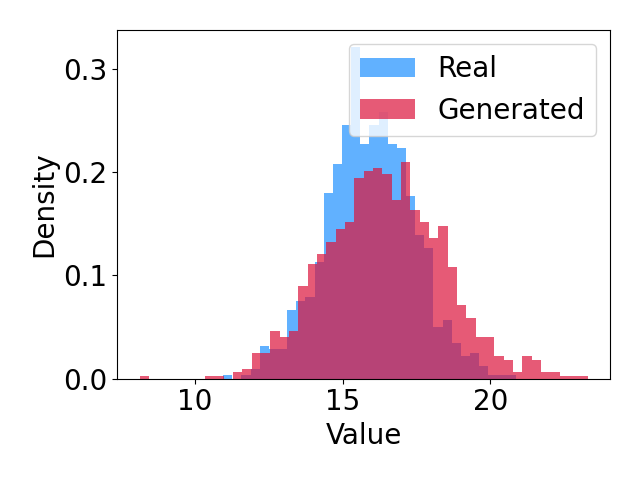

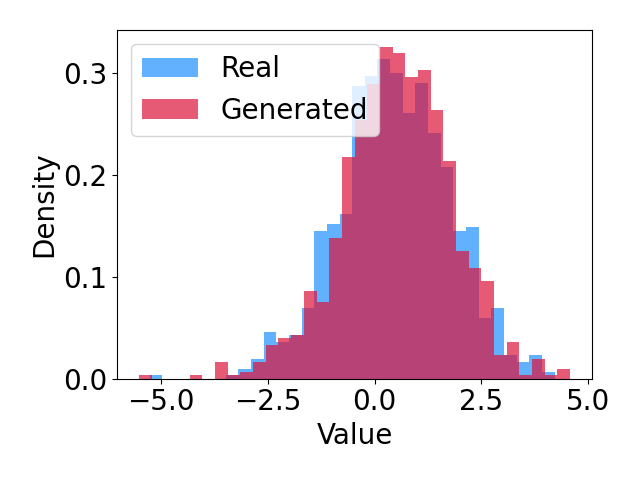

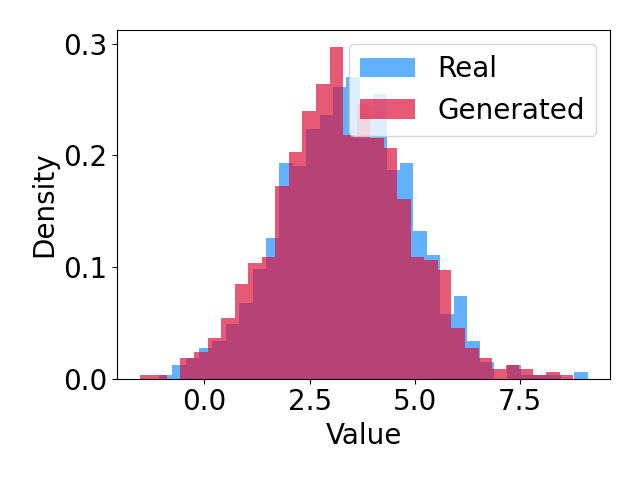

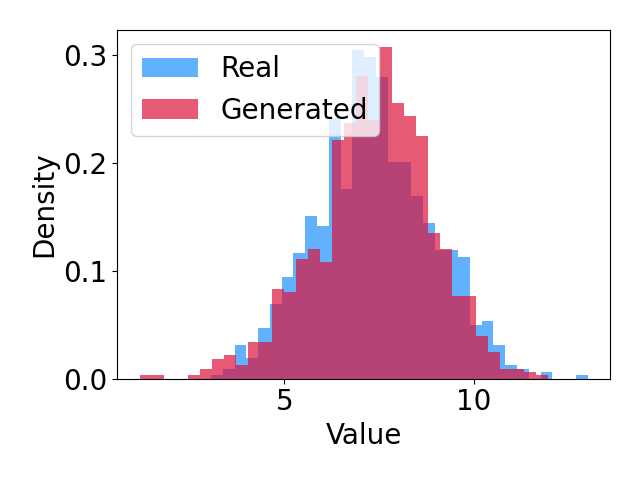

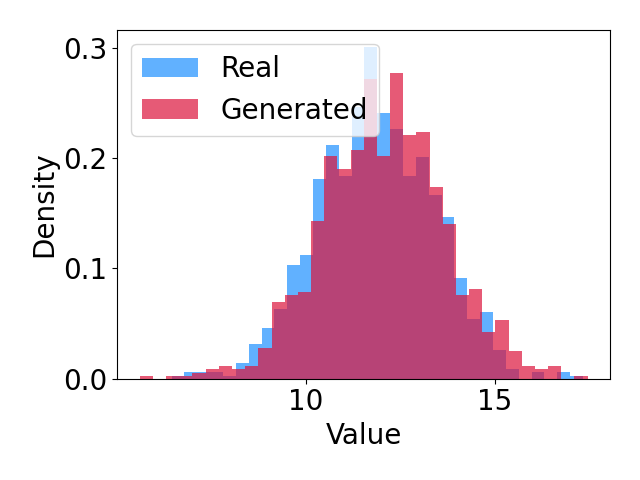

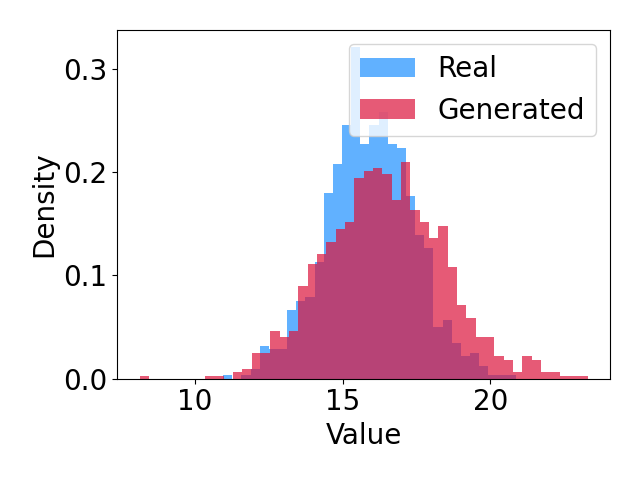

Figure 1: Left to right: marginal distributions at t=6,19,32,44,57.

Implementation Details

The implementation uses PyTorch, with the torchsde library providing numerical SDE solvers. The neural SDE generator and the neural CDE discriminator are connected in a single framework. Key components are the initial conditions, parameterized by neural networks (ζθ for the generator and ξϕ for the discriminator), and the vector fields (μθ for drift and σθ for diffusion). Sampling and training rely on numerical SDE solvers such as the midpoint method, which converges to the Stratonovich solution.
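To make the solver remark concrete, here is a minimal sketch of the midpoint method for a scalar SDE, written in plain Python rather than with torchsde so that every step is visible. The function name `midpoint_sde_solve` and the test equation are hypothetical choices for illustration, not part of the paper's code. The check uses dX = σX ∘ dW, whose Stratonovich solution is X_t = X_0 exp(σW_t), while the Itô reading of the same equation gives X_0 exp(σW_t − σ²t/2).

```python
import math
import random


def midpoint_sde_solve(drift, diffusion, x0, t0, t1, n_steps, rng):
    """Integrate dX = drift(t, X) dt + diffusion(t, X) ∘ dW with the midpoint method.

    Returns the terminal state and the accumulated Brownian value W_{t1} - W_{t0}.
    """
    dt = (t1 - t0) / n_steps
    x, t, w = x0, t0, 0.0
    for _ in range(n_steps):
        dw = rng.gauss(0.0, math.sqrt(dt))  # Brownian increment ~ N(0, dt)
        # Half step: estimate the state at the midpoint of the interval.
        x_mid = x + 0.5 * (drift(t, x) * dt + diffusion(t, x) * dw)
        t_mid = t + 0.5 * dt
        # Full step: evaluate drift and diffusion at the midpoint estimate.
        x = x + drift(t_mid, x_mid) * dt + diffusion(t_mid, x_mid) * dw
        t += dt
        w += dw
    return x, w


# Illustrative test equation (an assumption): dX = sigma * X ∘ dW, X_0 = 1.
sigma = 0.5
rng = random.Random(0)
x_T, w_T = midpoint_sde_solve(
    lambda t, x: 0.0, lambda t, x: sigma * x, 1.0, 0.0, 1.0, 2000, rng
)
stratonovich_exact = math.exp(sigma * w_T)
ito_exact = math.exp(sigma * w_T - 0.5 * sigma**2)
print(f"midpoint: {x_T:.4f}")
print(f"Stratonovich closed form: {stratonovich_exact:.4f}")
print(f"Ito closed form: {ito_exact:.4f}")
```

The numerical result should track the Stratonovich closed form rather than the Itô one. This is why the choice of solver matters: an Euler-Maruyama step applied to the same vector fields would instead approximate the Itô solution.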

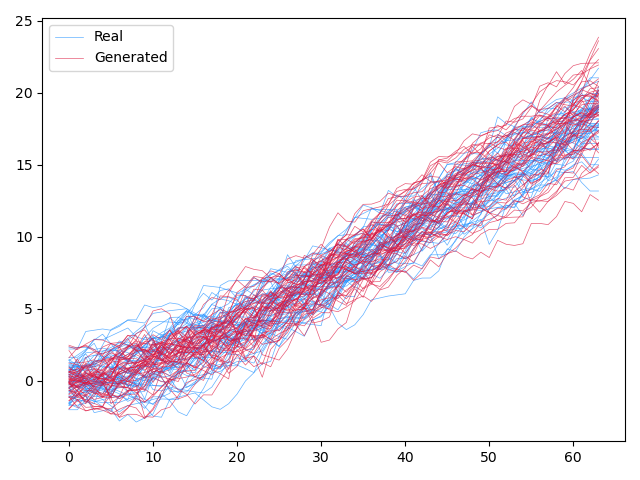

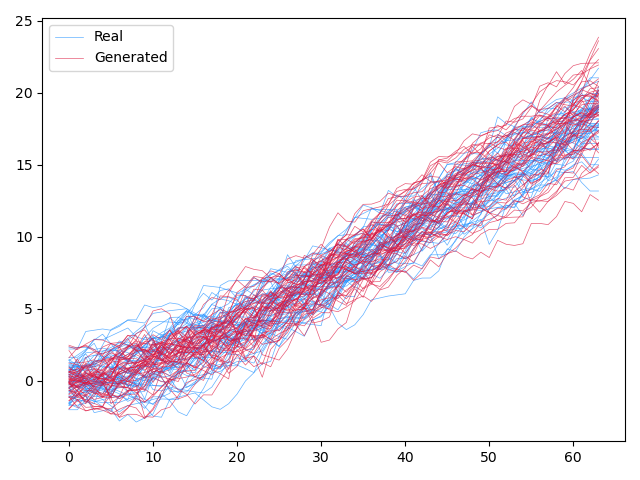

Figure 2: Sample paths from the time-dependent Ornstein-Uhlenbeck SDE, and from the neural SDE trained to match it.
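For readers who want to generate reference paths like those in Figure 2, the following is a minimal sketch of simulating a time-dependent Ornstein-Uhlenbeck process. It assumes the form dz = (ρt − κz) dt + χ dW. That form, the parameter values, and the time horizon are illustrative assumptions and are not taken from the text above. Because the noise is additive, the Itô and Stratonovich readings coincide, so a plain Euler-Maruyama step is used.

```python
import math
import random


def simulate_time_dependent_ou(rho, kappa, chi, z0, t_end, n_steps, rng):
    """Euler-Maruyama sample path of dz = (rho * t - kappa * z) dt + chi dW."""
    dt = t_end / n_steps
    z, t = z0, 0.0
    path = [z]
    for _ in range(n_steps):
        dw = rng.gauss(0.0, math.sqrt(dt))  # Brownian increment ~ N(0, dt)
        z += (rho * t - kappa * z) * dt + chi * dw
        t += dt
        path.append(z)
    return path


def ou_mean(rho, kappa, z0, t):
    """Closed-form mean E[z_t], obtained by solving m'(t) = rho * t - kappa * m(t)."""
    c = rho / kappa**2
    return (rho / kappa) * t - c + (z0 + c) * math.exp(-kappa * t)


# Illustrative parameter values (assumptions, not taken from the text).
RHO, KAPPA, CHI, Z0, T_END = 0.02, 0.1, 0.4, 0.0, 63.0

rng = random.Random(0)
paths = [
    simulate_time_dependent_ou(RHO, KAPPA, CHI, Z0, T_END, 630, rng)
    for _ in range(500)
]
empirical_mean = sum(p[-1] for p in paths) / len(paths)
print(f"empirical mean at t={T_END}: {empirical_mean:.3f}")
print(f"analytic mean at t={T_END}: {ou_mean(RHO, KAPPA, Z0, T_END):.3f}")
```

Comparing the empirical terminal mean with the closed-form mean is a quick sanity check that the simulator produces the intended drift before such paths are used as training data.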

Experimental Validation and Results

Four datasets were used to validate the framework: a synthetic time-dependent Ornstein-Uhlenbeck process, Google/Alphabet stock prices, air quality in Beijing, and neural network weight dynamics under SGD. Together they cover different application regimes of Neural SDEs. Quantitative comparisons with other continuous-time generative models, namely Latent ODEs and Continuous Time Flow Processes (CTFPs), showed the Neural SDE capturing the dynamics and distributions of these time series better.

The empirical results indicate that the Neural SDE recovers the underlying dynamics, and its added modeling flexibility shows up as improved classification, prediction, and maximum mean discrepancy (MMD) metrics on the financial and environmental datasets.
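As an illustration of how an MMD score between real and generated paths can be computed, here is a minimal sketch using a Gaussian RBF kernel on paths treated as flat vectors. The kernel choice, the bandwidth, and the toy random-walk data are all assumptions made for the example. The paper's evaluation may use a different kernel or feature map, so this is a stand-in for the idea, not a reproduction of the reported metric.

```python
import math
import random


def rbf_kernel(path_a, path_b, bandwidth):
    """Gaussian RBF kernel between two equal-length paths treated as vectors."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(path_a, path_b))
    return math.exp(-sq_dist / (2.0 * bandwidth**2))


def mmd_squared(xs, ys, bandwidth=3.0):
    """Biased (V-statistic) estimate of squared MMD between two sets of paths."""

    def mean_kernel(us, vs):
        total = sum(rbf_kernel(u, v, bandwidth) for u in us for v in vs)
        return total / (len(us) * len(vs))

    return mean_kernel(xs, xs) + mean_kernel(ys, ys) - 2.0 * mean_kernel(xs, ys)


def random_walk(rng, n_steps=10, step_std=0.3, offset=0.0):
    """Toy path: Gaussian random walk, optionally shifted by a constant offset."""
    x, path = 0.0, []
    for _ in range(n_steps):
        x += rng.gauss(0.0, step_std)
        path.append(x + offset)
    return path


rng = random.Random(0)
real = [random_walk(rng) for _ in range(50)]
fake_good = [random_walk(rng) for _ in range(50)]  # same law as the real paths
fake_bad = [random_walk(rng, offset=2.0) for _ in range(50)]  # shifted law

mmd_good = mmd_squared(real, fake_good)
mmd_bad = mmd_squared(real, fake_bad)
print(f"MMD^2 (same law): {mmd_good:.4f}")
print(f"MMD^2 (shifted law): {mmd_bad:.4f}")
```

A lower value means the two sets of paths are harder to tell apart under the chosen kernel, so a generator that matches the data distribution should score close to zero.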

Discussion

Coupling Neural SDEs with Neural CDEs improves on traditional SDE modeling methods: it captures the inherent stochasticity of real-world phenomena without relying on prespecified statistics. This GAN-inspired approach expands what SDE models can do in machine learning and opens the way to further applications in domains that involve stochastic processes.

Conclusion

Viewing Neural SDEs as infinite-dimensional GANs makes them a practical tool for modeling path distributions in complex temporal dynamics. By drawing on the generative capabilities of GANs, the paper broadens the applicability of neural differential equations to stochastic modeling problems in deep learning and beyond.