- The paper presents a novel latent action space representation that transforms high-dimensional actions into task-focused latent variables, significantly enhancing sample efficiency in manipulation tasks.

- It uses both offline expert trajectories and online data to jointly optimize the latent representation and the policy, which speeds up learning on the task.

- Experimental results using SAC demonstrate faster learning curves and robust policy transfer in complex, contact-rich tasks, confirming LASER’s practical benefits.

Learning a Latent Action Space for Efficient Reinforcement Learning

The paper presents LASER (Learning a Latent Action Space for Efficient Reinforcement Learning), an approach for improving the efficiency of reinforcement learning (RL) on manipulation tasks. It uses representation learning to discover a compact latent action space, which changes the space the policy has to explore and can thereby improve sample efficiency.

Methodology

Latent Action Space Representation

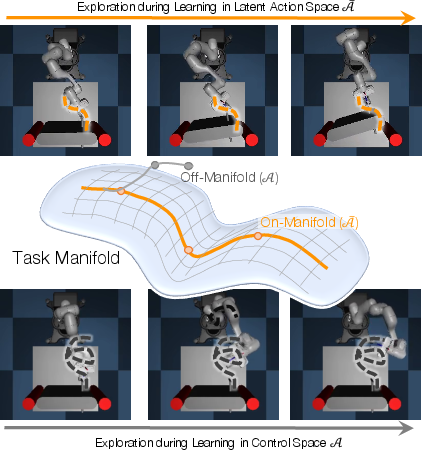

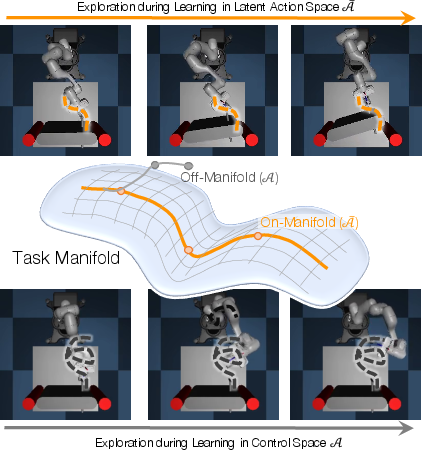

LASER learns a variational latent action space by factorizing the learning problem into two components: learning the action space, and learning a policy within that action space. The space is built with a variational encoder-decoder architecture that maps actions from the high-dimensional raw action space to a compact, task-focused latent representation. The learned action space is optimized to use only as many dimensions as the task requires (Figure 1).

Figure 1: Learning latent action spaces for efficient reinforcement learning. Manipulation tasks, such as opening a door, are often structured and do not require exploration in the entire action space, only on a certain manifold. LASER learns this action space manifold from data, either offline (expert) or online (training with LASER), enabling faster learning in subsequent novel instances of the task by transferring the knowledge via an efficient latent action space.
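To make the encoder-decoder idea concrete, here is a minimal NumPy sketch. It is not the paper's implementation: it assumes linear maps, an encoder and decoder that are both conditioned on the state, a standard normal prior, and hypothetical sizes (`STATE_DIM`, `ACTION_DIM`, `LATENT_DIM`). All names are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only for illustration.
STATE_DIM, ACTION_DIM, LATENT_DIM = 6, 7, 3

# Assumed linear encoder: (s, a) -> mean and log-variance of the latent action.
W_enc = rng.normal(scale=0.1, size=(STATE_DIM + ACTION_DIM, 2 * LATENT_DIM))
# Assumed linear decoder: (s, latent action) -> reconstructed raw action.
W_dec = rng.normal(scale=0.1, size=(STATE_DIM + LATENT_DIM, ACTION_DIM))


def encode(s, a):
    """Return the mean and log-variance of the latent action distribution."""
    h = np.concatenate([s, a], axis=-1) @ W_enc
    return h[..., :LATENT_DIM], h[..., LATENT_DIM:]


def sample_latent(mu, log_var, rng):
    """Reparameterized sample: z = mu + sigma * eps with eps ~ N(0, I)."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * log_var) * eps


def decode(s, z):
    """Map a state and a latent action back to a raw action."""
    return np.concatenate([s, z], axis=-1) @ W_dec


def vae_loss(s, a, rng, beta=1.0):
    """Reconstruction error plus beta-weighted KL divergence to N(0, I)."""
    mu, log_var = encode(s, a)
    z = sample_latent(mu, log_var, rng)
    a_hat = decode(s, z)
    recon = np.mean(np.sum((a - a_hat) ** 2, axis=-1))
    kl = np.mean(-0.5 * np.sum(1.0 + log_var - mu ** 2 - np.exp(log_var), axis=-1))
    return recon + beta * kl, recon, kl


# Toy batch of states and raw actions.
s = rng.normal(size=(32, STATE_DIM))
a = rng.normal(size=(32, ACTION_DIM))
loss, recon, kl = vae_loss(s, a, rng)
```

The KL term pulls unused latent dimensions toward the prior, which is one way a variational model can end up with a lower effective dimensionality than `LATENT_DIM`.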

Offline and Online Training Variants

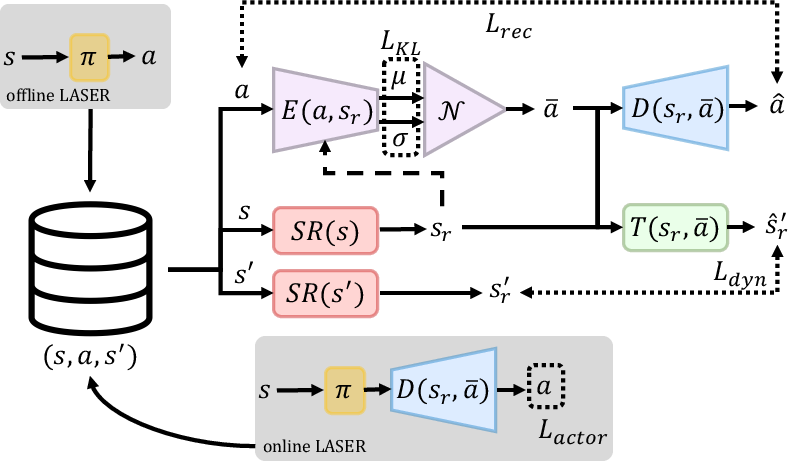

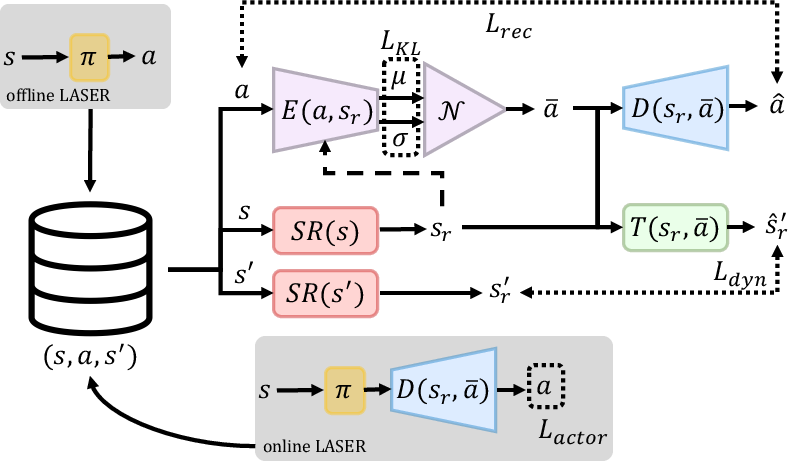

The paper explores both offline and online approaches for training the LASER framework. Offline training uses expert-generated trajectories to shape a latent action space that later speeds up policy learning on new task instances. Online training, by contrast, learns the policy and the representation at the same time, using online interaction data to continuously refine the latent space (Figure 2).

Figure 2: LASER Overview: We train a latent action space $\bar{\mathcal{A}}$ using batches of tuples $(s, a, s')$.
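The difference between the two variants is mainly where the training batches come from. The sketch below illustrates this with a deliberately simplified, non-variational linear autoencoder over actions only. The state and next-state entries of the tuples are omitted for brevity, and `make_actions` is a hypothetical stand-in for both expert data and policy rollouts. None of the names or hyperparameters come from the paper.

```python
import numpy as np

ACTION_DIM, LATENT_DIM = 7, 2  # hypothetical sizes


def make_actions(n, mixing, rng):
    """Toy stand-in for logged actions that lie on a low-dimensional manifold."""
    return rng.standard_normal((n, mixing.shape[0])) @ mixing


def reconstruction_loss(E, D, a):
    """Mean squared reconstruction error of the linear autoencoder."""
    return float(np.mean(np.sum((a @ E @ D - a) ** 2, axis=-1)))


def init_params(rng):
    E = 0.1 * rng.standard_normal((ACTION_DIM, LATENT_DIM))  # encoder
    D = 0.1 * rng.standard_normal((LATENT_DIM, ACTION_DIM))  # decoder
    return E, D


def update_action_space(E, D, a, lr=0.02):
    """One gradient-descent step on the reconstruction loss."""
    z = a @ E
    residual = z @ D - a
    n = a.shape[0]
    grad_D = (2.0 / n) * z.T @ residual
    grad_E = (2.0 / n) * a.T @ (residual @ D.T)
    return E - lr * grad_E, D - lr * grad_D


def train_offline(expert_actions, rng, steps=500, batch_size=64):
    """Offline variant: minibatches come from a fixed expert dataset."""
    E, D = init_params(rng)
    for _ in range(steps):
        idx = rng.integers(0, len(expert_actions), size=batch_size)
        E, D = update_action_space(E, D, expert_actions[idx])
    return E, D


def train_online(mixing, rng, iterations=500, rollout_len=16, batch_size=64):
    """Online variant: the buffer grows while the representation is updated."""
    E, D = init_params(rng)
    buffer = np.empty((0, ACTION_DIM))
    for _ in range(iterations):
        # Placeholder for data collected by the current policy.
        buffer = np.concatenate([buffer, make_actions(rollout_len, mixing, rng)])
        idx = rng.integers(0, len(buffer), size=batch_size)
        E, D = update_action_space(E, D, buffer[idx])
        # A policy update (for example an SAC step) would go here.
    return E, D


data_rng = np.random.default_rng(0)
mixing = data_rng.standard_normal((LATENT_DIM, ACTION_DIM)) / np.sqrt(ACTION_DIM)
expert = make_actions(512, mixing, data_rng)

E0, D0 = init_params(np.random.default_rng(1))
E_off, D_off = train_offline(expert, np.random.default_rng(1))
E_on, D_on = train_online(mixing, np.random.default_rng(2))
```

In the offline case the learned decoder would be frozen before policy learning starts. In the online case the policy has to cope with an action space that keeps changing during training.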

Experimental Results

Efficiency and Task Generalization

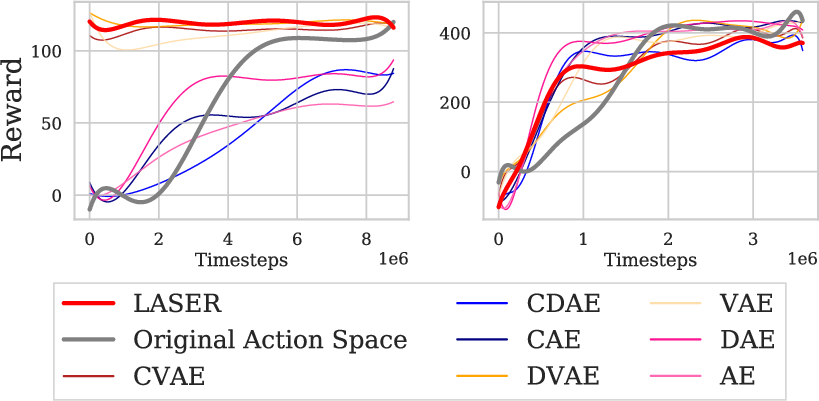

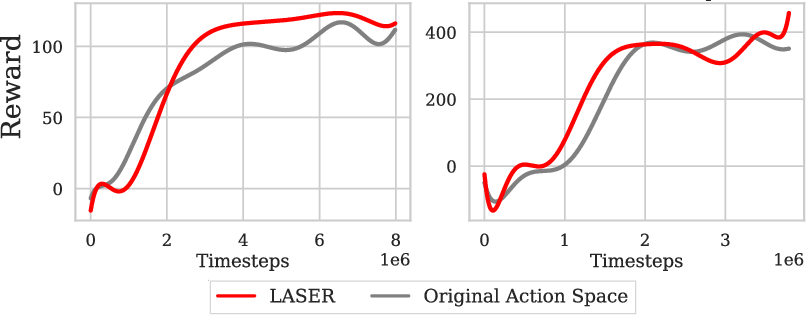

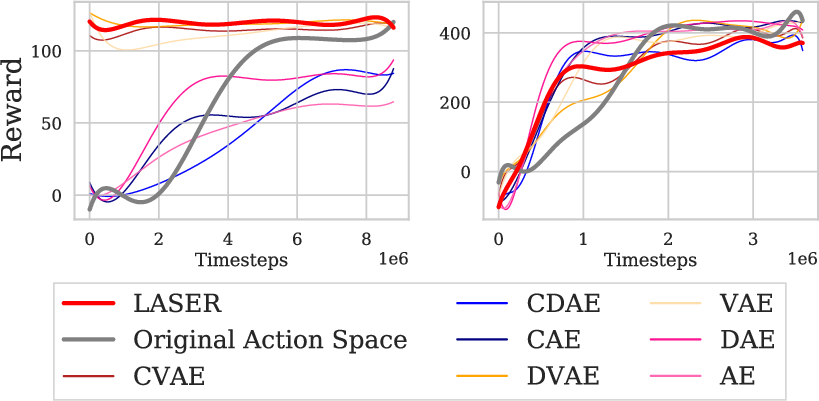

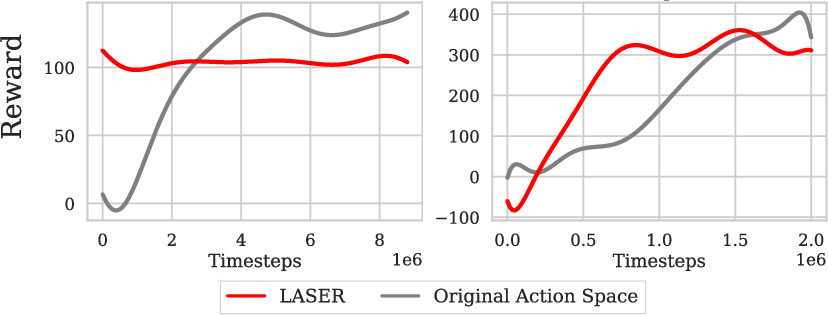

The experiments use SAC to show the gains in sample efficiency and policy performance obtained with the LASER latent action space. Compared to the original action space, LASER yields faster learning in complex, contact-rich tasks such as Door and Wipe. Notably, policies that act in the LASER action space learn faster early in training and keep their advantage when transferred to new variants of the task (Figure 3 and Figure 4).

Figure 3: Exp1. LASER trained on offline batch data: SAC on the original action space, a LASER action space, and on ablations of LASER.

Figure 4: Exp2. LASER task transfer: SAC on the original and LASER action spaces in new instances of Door (left), and Wipe (right).
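One way to picture "SAC on a LASER action space" is as an environment wrapper: the policy outputs a latent action, and a learned decoder turns it into a raw action before the environment steps. The sketch below assumes this arrangement. `ToyEnv`, `LatentActionEnv`, and `linear_decoder` are hypothetical names, and the toy environment is not one of the paper's tasks.

```python
import numpy as np


class ToyEnv:
    """Hypothetical stand-in environment with a 7-D raw action space."""

    action_dim = 7

    def reset(self):
        self.state = np.zeros(3)
        return self.state

    def step(self, action):
        assert action.shape == (self.action_dim,)
        # Toy dynamics: only the first three action components move the state.
        self.state = self.state + 0.1 * action[:3]
        reward = -float(np.linalg.norm(self.state - 1.0))
        return self.state, reward, False


class LatentActionEnv:
    """Expose a latent action space on top of an environment with raw actions."""

    def __init__(self, env, decoder, latent_dim):
        self.env = env
        self.decoder = decoder  # callable: (state, latent_action) -> raw action
        self.action_dim = latent_dim
        self._state = None

    def reset(self):
        self._state = self.env.reset()
        return self._state

    def step(self, latent_action):
        # Decode the latent action, then step the underlying environment.
        raw_action = self.decoder(self._state, latent_action)
        self._state, reward, done = self.env.step(raw_action)
        return self._state, reward, done


# Assumed decoder: a fixed linear map that ignores the state.
D = np.ones((2, 7))


def linear_decoder(state, z):
    return z @ D


env = LatentActionEnv(ToyEnv(), linear_decoder, latent_dim=2)
state = env.reset()
state, reward, done = env.step(np.array([1.0, 0.5]))
```

With a wrapper like this, an off-the-shelf RL algorithm needs no changes. It simply sees a lower-dimensional action space.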

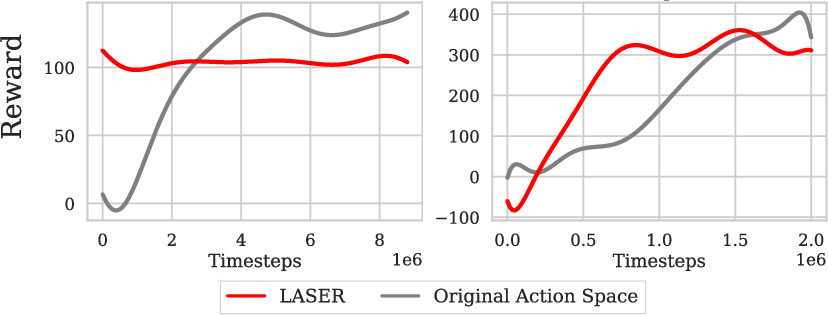

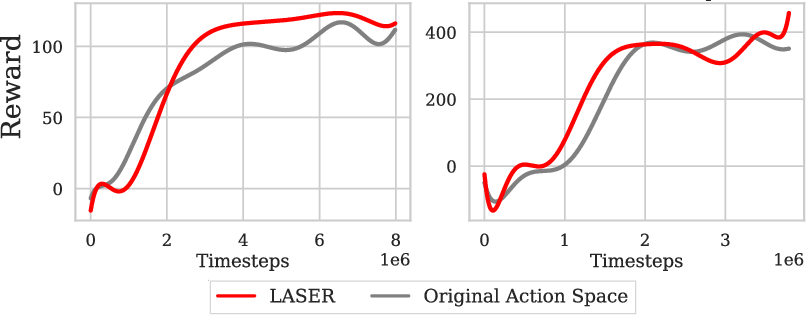

Online LASER learns the latent action space together with the policy. The results suggest that the action space can be learned during policy optimization without hurting learning performance (Figure 5).

Figure 5: Exp3. LASER trained online with policy learning: SAC on the original and online LASER action spaces on Door (left), and Wipe (right).

Action Space Consistency and Stability

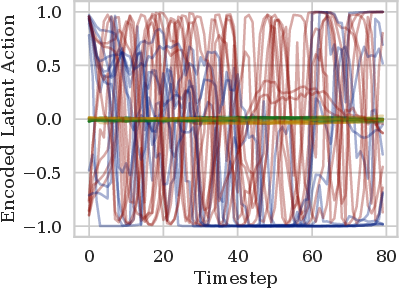

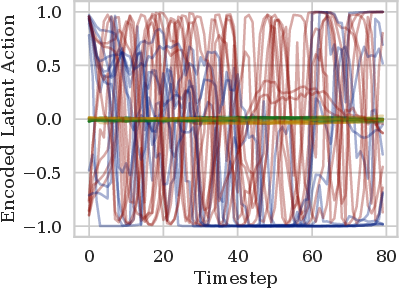

Analysis of the learned latent spaces suggests that they align with the natural task manifold: the tasks are represented with a small number of latent dimensions (Figure 6). This consistency points to LASER's capacity to isolate the action dimensions that matter for the task, which may in turn stabilize policy learning.

Figure 6: Exp4a. Dimensionality analysis (best viewed in color): Mean of each dimension of the variational encoder output for 10 different rollouts; each latent action dimension is shown in a different color.
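A dimensionality analysis of this kind can be approximated numerically. The sketch below computes the per-rollout mean of each latent dimension and flags a dimension as active when the encoder mean varies across inputs by more than a threshold. The variance criterion, the threshold of 0.01, and the synthetic data are assumptions made for illustration; they are not taken from the paper.

```python
import numpy as np


def per_rollout_dimension_means(encoder_means):
    """(n_rollouts, n_steps, latent_dim) -> (n_rollouts, latent_dim)."""
    return encoder_means.mean(axis=1)


def active_dimensions(encoder_means, threshold=0.01):
    """Indices of latent dimensions whose encoder mean varies across inputs."""
    flat = encoder_means.reshape(-1, encoder_means.shape[-1])
    return np.flatnonzero(flat.var(axis=0) > threshold)


# Synthetic encoder outputs: 10 rollouts, 50 steps, 4 latent dimensions.
# Dimensions 0 and 2 carry a signal; dimensions 1 and 3 stay near zero.
rng = np.random.default_rng(0)
t = np.linspace(0.0, 2.0 * np.pi, 50)
signal = np.zeros((10, 50, 4))
signal[:, :, 0] = np.sin(t)
signal[:, :, 2] = np.cos(t)
encoder_means = signal + 0.01 * rng.standard_normal(signal.shape)

rollout_means = per_rollout_dimension_means(encoder_means)
active = active_dimensions(encoder_means)
```

On this synthetic input, only the two dimensions that carry a signal are flagged as active.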

Conclusion

LASER advances RL by learning a latent action space that supports skill transfer across structured tasks. Its data-driven design matches the action manifold to the inherent structure of the task environment, which makes learning more efficient and eases generalization to new task instances. The approach is a step toward addressing the dimensionality and exploration challenges of RL, with promising extensions to unseen task domains and more complex robotic interaction scenarios.