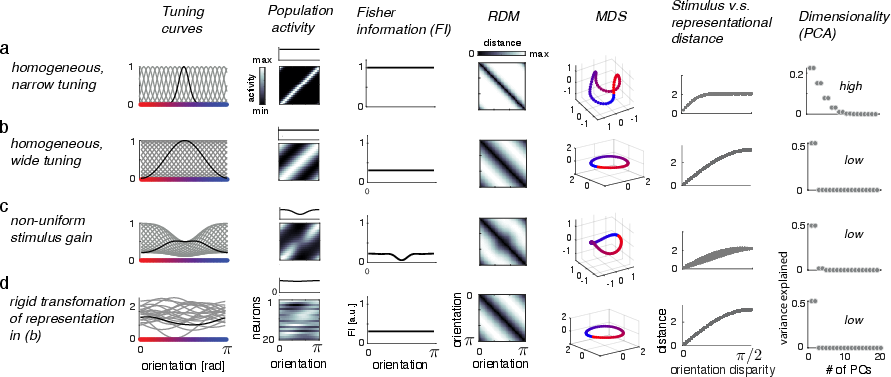

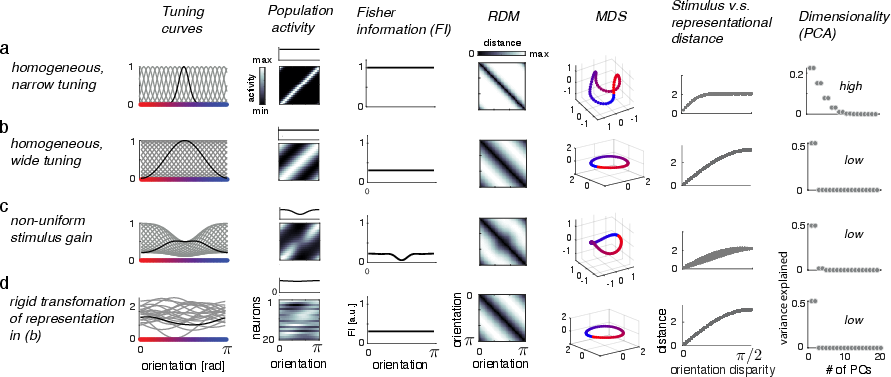

- The paper demonstrates that neural tuning curves and representational geometry jointly influence metrics like Fisher Information and Mutual Information.

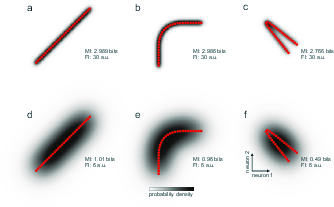

- The paper analyzes population response patterns as geometric manifolds in response space to assess how precisely stimuli are encoded.

- The framework has implications for how neural codes can be optimized for efficient sensory processing and for decoding in cognitive tasks.

Neural Tuning and Representational Geometry

Introduction

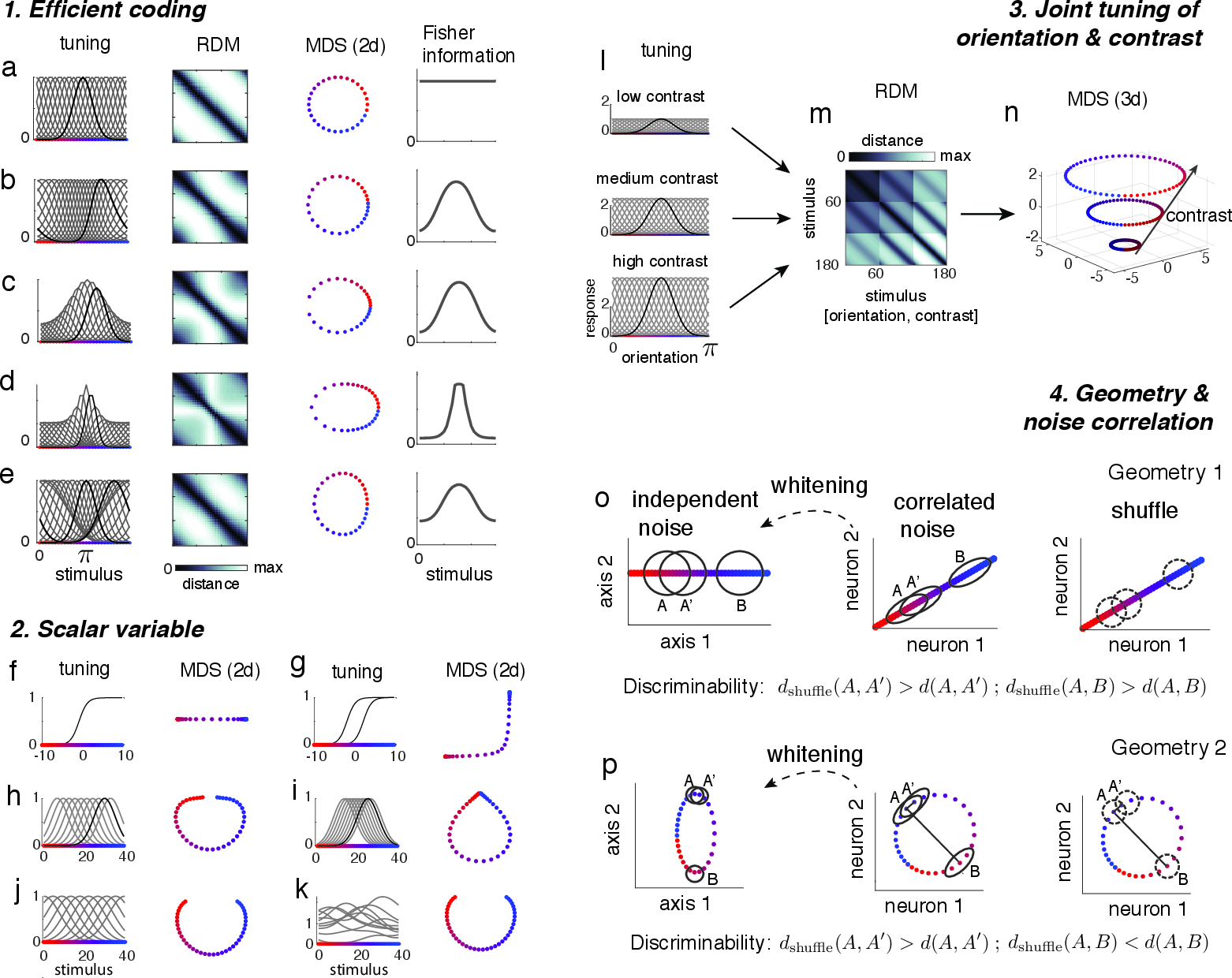

The paper "Neural tuning and representational geometry" examines the relationship between two descriptions of the neural code: the tuning curves of individual neurons and the representational geometry of the population, that is, the structure formed by the activity patterns that different stimuli evoke. It offers a framework for relating these two levels of description to each other and to behavior, arguing that both the tuning curves and the geometric arrangement of representations in the multivariate response space are needed to understand the neural code.

Neural Tuning and Representational Geometry

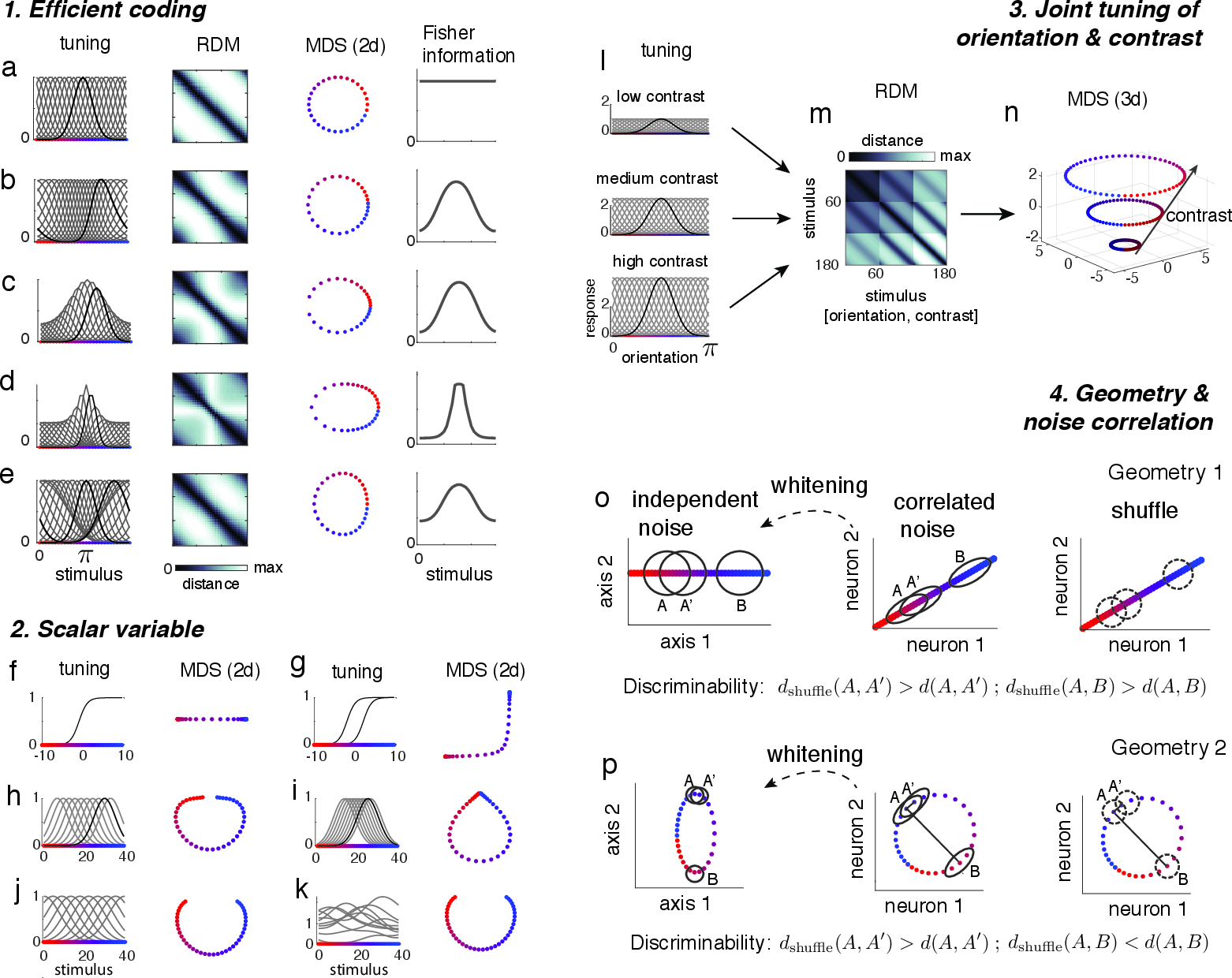

The paper first considers neural tuning curves, which describe how a neuron's firing rate varies as a function of a stimulus variable such as orientation or position. In classical examples, such as the orientation-tuned V1 neurons described by Hubel and Wiesel, these curves are bell-shaped. Representational geometry, by contrast, is defined by the distances between the population response patterns evoked by a set of stimuli. It summarizes which information can be read out from the population, by linear as well as nonlinear decoders.
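To make the step from tuning to geometry concrete, the sketch below builds a hypothetical population of 16 neurons with bell-shaped (von Mises) tuning to a circular variable and computes the representational dissimilarity matrix (RDM), the matrix of Euclidean distances between population response patterns. The tuning function, its parameters (`kappa`, `r_max`, `baseline`) and the helper names are illustrative assumptions, not taken from the paper.

```python
import numpy as np

def von_mises_tuning(theta, preferred, kappa=2.0, r_max=20.0, baseline=1.0):
    """Mean firing rates, shape (S, N), for S stimulus angles and N preferred angles (radians)."""
    # Bell-shaped tuning on a circle: peak rate baseline + r_max at the preferred angle
    return baseline + r_max * np.exp(kappa * (np.cos(theta[:, None] - preferred[None, :]) - 1.0))

def rdm(responses):
    """Euclidean distances between the response patterns (rows) of every pair of stimuli."""
    diff = responses[:, None, :] - responses[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=-1))

preferred = np.linspace(0, 2 * np.pi, 16, endpoint=False)   # 16 hypothetical neurons
stimuli = np.linspace(0, 2 * np.pi, 8, endpoint=False)      # 8 stimulus values

F = von_mises_tuning(stimuli, preferred)   # (8, 16): one response pattern per stimulus
D = rdm(F)                                 # (8, 8): the representational geometry
print(np.round(D, 1))
```

Because this toy population is homogeneous, each row of `D` is a circular shift of the first: distances depend only on angular separation, so the response patterns lie on a ring in the 16-dimensional response space.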

Figure 1: Neuronal tuning determines representational geometry. Each row shows a specific configuration of the neural code for encoding a circular variable, such as orientation.

A significant portion of the paper analyzes how tuning curves and representational geometry determine two information-theoretic measures: Fisher Information (FI) and Mutual Information (MI). FI quantifies how precisely the stimulus can be estimated in the neighborhood of a particular value. It depends on the slopes of the tuning curves, or equivalently on how fast the response pattern moves along the manifold in response space as the stimulus changes, relative to the noise. The paper shows that because FI is a local measure of sensitivity, it does not capture the global geometry of the representation. MI instead quantifies how much observing the response reduces uncertainty about the stimulus. It depends on how strongly the response distributions evoked by different stimuli overlap, and therefore on the geometry of the entire manifold together with the noise.
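As a rough illustration, assuming independent Poisson spike counts, the FI of a population is the sum over neurons of f_i'(θ)² / f_i(θ); under isotropic Gaussian noise with fixed variance σ² it is ‖f'(θ)‖² / σ², the squared speed along the manifold divided by the noise variance. The sketch below reuses a hypothetical von Mises population (all parameters are assumptions) to show that a single neuron carries no FI at its preferred stimulus, where its slope is zero, while a homogeneous population has nearly constant FI. By the Cramér-Rao bound, 1/√J(θ) lower-bounds the standard deviation of any unbiased estimator.

```python
import numpy as np

def tuning(theta, preferred, kappa=2.0, r_max=20.0, baseline=1.0):
    """Von Mises tuning: mean rate (Hz) of each neuron at stimulus angle theta."""
    return baseline + r_max * np.exp(kappa * (np.cos(theta - preferred) - 1.0))

def tuning_slope(theta, preferred, kappa=2.0, r_max=20.0):
    """Analytic derivative of `tuning` with respect to theta (the baseline drops out)."""
    g = r_max * np.exp(kappa * (np.cos(theta - preferred) - 1.0))
    return -kappa * np.sin(theta - preferred) * g

def fisher_poisson(theta, preferred, T=1.0):
    """FI for independent Poisson counts in a window of T seconds: sum_i (T f_i')^2 / (T f_i)."""
    f = T * tuning(theta, preferred)
    df = T * tuning_slope(theta, preferred)
    return float(np.sum(df ** 2 / f))

def fisher_gaussian(theta, preferred, sigma=1.0):
    """FI for isotropic Gaussian noise with fixed s.d. sigma: |f'(theta)|^2 / sigma^2."""
    df = tuning_slope(theta, preferred)
    return float(np.sum(df ** 2) / sigma ** 2)

one = np.array([0.0])                                 # a single neuron preferring 0 rad
pop = np.linspace(0, 2 * np.pi, 16, endpoint=False)   # homogeneous population

print(fisher_poisson(0.0, one))   # zero slope at the preferred stimulus gives zero FI
print(fisher_poisson(0.7, one))   # positive FI on the flank of the tuning curve

thetas = np.linspace(0, 2 * np.pi, 50)
J = np.array([fisher_poisson(t, pop) for t in thetas])
print(J.min(), J.max())           # nearly constant across stimuli
print(1 / np.sqrt(J.mean()))      # Cramer-Rao bound on estimator s.d. (rad)
```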

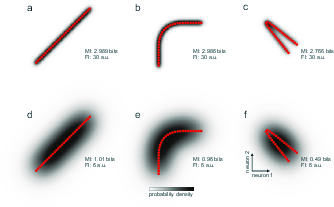

Figure 2: The relationship between manifold geometry, Fisher information, and mutual information.
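The local/global distinction can be seen in a toy comparison (our own construction, not necessarily what the figure depicts). Two hypothetical two-neuron codes map 32 equally spaced stimuli on a circle onto a circle in response space: code A winds once with radius 4, code B winds twice with radius 2. The response pattern moves at the same speed in both, so with the same isotropic Gaussian noise their FI is identical at every stimulus. Code B, however, folds the manifold onto itself, so stimuli half a cycle apart produce the same mean response. MI is estimated here by Monte Carlo from the posterior over stimuli, assuming a uniform prior.

```python
import numpy as np

def circle_code(theta, radius, winding):
    """Two-neuron toy code whose mean response traces a circle in response space."""
    return radius * np.stack([np.cos(winding * theta), np.sin(winding * theta)], axis=-1)

def fisher_gaussian(radius, winding, sigma):
    """|df/dtheta| = radius * winding everywhere, so J = (radius * winding)^2 / sigma^2."""
    return (radius * winding) ** 2 / sigma ** 2

def mutual_information_bits(means, sigma, n_samples=20000, seed=0):
    """Monte Carlo estimate of I(S;R) in bits: uniform prior over K stimuli, isotropic Gaussian noise."""
    rng = np.random.default_rng(seed)
    K, N = means.shape
    s = rng.integers(K, size=n_samples)                          # true stimulus indices
    r = means[s] + sigma * rng.standard_normal((n_samples, N))   # noisy responses
    sq_dist = ((r[:, None, :] - means[None, :, :]) ** 2).sum(axis=-1)
    loglik = -sq_dist / (2 * sigma ** 2)                         # up to a shared constant
    m = loglik.max(axis=1, keepdims=True)                        # log-sum-exp for stability
    log_evidence = m[:, 0] + np.log(np.exp(loglik - m).sum(axis=1))
    log_post_true = loglik[np.arange(n_samples), s] - log_evidence
    # I(S;R) = H(S) - H(S|R) = log K + E[log p(s | r)]
    return (np.log(K) + log_post_true.mean()) / np.log(2)

theta = np.linspace(0, 2 * np.pi, 32, endpoint=False)
sigma = 0.5
code_a = circle_code(theta, radius=4.0, winding=1)
code_b = circle_code(theta, radius=2.0, winding=2)

fi_a, fi_b = fisher_gaussian(4.0, 1, sigma), fisher_gaussian(2.0, 2, sigma)
mi_a, mi_b = mutual_information_bits(code_a, sigma), mutual_information_bits(code_b, sigma)
print(f"FI: A = {fi_a:.1f}, B = {fi_b:.1f}")
print(f"MI: A = {mi_a:.2f} bits, B = {mi_b:.2f} bits (max {np.log2(32):.0f})")
```

With these settings the MI estimate for code B should come out roughly one bit below code A, the bit lost to the fold, even though the two codes have the same FI everywhere.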

Encoding and Decoding Perspectives

Encoding and decoding offer complementary views of how neural populations represent stimuli. Encoding models describe how a stimulus is transformed into neural activity, that is, the tuning curves, which jointly trace out a manifold in response space. Decoding concerns reading stimulus information back out of that activity; here the representational geometry constrains how accurately a stimulus can be recovered and how simple (for example, linear) the readout can be.
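A minimal decoding sketch, again for a hypothetical von Mises population with independent Poisson counts in a 1 s window (all assumptions), compares two readouts: a maximum-likelihood (ML) decoder that searches a grid of candidate stimuli, and the classic population vector, which sums each neuron's preferred direction weighted by its spike count. The population vector is a fixed linear map of the counts followed by taking an angle, so it shows how a simple readout can exploit the ring-shaped geometry.

```python
import numpy as np

rng = np.random.default_rng(1)
preferred = np.linspace(0, 2 * np.pi, 16, endpoint=False)

def rates(theta, kappa=2.0, r_max=20.0, baseline=1.0):
    """Mean rates (Hz), shape (M, 16); theta may be a scalar or an array of shape (M,)."""
    theta = np.atleast_1d(theta)[:, None]
    return baseline + r_max * np.exp(kappa * (np.cos(theta - preferred) - 1.0))

def circ_diff(a, b):
    """Signed circular difference wrapped to (-pi, pi]."""
    return np.angle(np.exp(1j * (a - b)))

grid = np.linspace(0, 2 * np.pi, 360, endpoint=False)
F_grid = rates(grid)            # (360, 16) expected counts for T = 1 s
log_F = np.log(F_grid)

def ml_decode(counts):
    # Poisson log-likelihood up to a constant: sum_i r_i log f_i(theta) - f_i(theta)
    ll = counts @ log_F.T - F_grid.sum(axis=1)
    return grid[np.argmax(ll, axis=1)]

def population_vector(counts):
    # Vector sum of preferred directions weighted by spike counts, then its angle
    return np.mod(np.angle(counts @ np.exp(1j * preferred)), 2 * np.pi)

true_theta = rng.uniform(0, 2 * np.pi, size=2000)
counts = rng.poisson(rates(true_theta))   # (2000, 16) simulated spike counts

err_ml = np.abs(circ_diff(ml_decode(counts), true_theta))
err_pv = np.abs(circ_diff(population_vector(counts), true_theta))
print(f"ML decoder:        mean |error| = {err_ml.mean():.3f} rad")
print(f"Population vector: mean |error| = {err_pv.mean():.3f} rad")
```

The ML decoder is a natural benchmark because, with enough spikes, its error approaches the Cramér-Rao bound set by the FI; the population vector trades some accuracy for a much simpler readout.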

Implications and Applications

The implications of this research extend to both practical and theoretical neuroscience and underline the need to consider tuning and geometry together. Combining the two perspectives supports more accurate predictions about neural responses and clarifies how neural systems may optimize their codes for efficiency, for example by allocating more precision to stimuli that occur frequently or are behaviorally relevant. Applications range from theories of efficient sensory encoding to spatial coding by grid cells.
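One standard way to formalize "allocating precision relative to frequency" comes from the efficient-coding literature; we assume it here for illustration, since the summary does not say which formulation the paper adopts. Using the high signal-to-noise approximation of mutual information in terms of the Fisher information J(θ), and a resource constraint on the total ∫√J(θ) dθ, the optimal code makes √J proportional to the stimulus prior p(θ). Terms that do not depend on J, such as H(θ), drop out of the Lagrangian, and the last step uses ∫p(θ) dθ = 1:

```latex
\begin{aligned}
I(\theta;\mathbf r) &\approx H(\theta) - \int p(\theta)\,\tfrac12\log\frac{2\pi e}{J(\theta)}\,d\theta,
\qquad \text{subject to } \int \sqrt{J(\theta)}\,d\theta = C,\\[4pt]
\mathcal L[J] &= \tfrac12\int p(\theta)\log J(\theta)\,d\theta
  - \lambda\Big(\int \sqrt{J(\theta)}\,d\theta - C\Big),\\[4pt]
\frac{\delta\mathcal L}{\delta J(\theta)} &= \frac{p(\theta)}{2J(\theta)} - \frac{\lambda}{2\sqrt{J(\theta)}} = 0
  \;\Longrightarrow\; \sqrt{J(\theta)} = \frac{p(\theta)}{\lambda} = C\,p(\theta).
\end{aligned}
```

Since the Cramér-Rao bound makes the discrimination threshold scale as 1/√J(θ), this formulation predicts thresholds inversely proportional to p(θ): frequently encountered stimuli are discriminated more finely.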

Figure 3: Example applications. Efficient coding schemes demonstrate spatial encoding as predicted by manifold geometry.
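The grid-cell application also illustrates why local precision is not enough. In the toy 1-D sketch below (our simplification; real grid cells tile 2-D space, and all names and parameters are hypothetical), a single periodic module responds identically at positions one period apart, so its manifold folds back on itself despite high local FI. Adding a second module with a different period removes the ambiguity within the tested range, which stays below 15, the least common multiple of the two periods.

```python
import numpy as np

def grid_module(x, period, n_phases=8, kappa=3.0, r_max=10.0):
    """Toy 1-D 'grid module': n_phases cells with von Mises bumps repeating every `period`."""
    phases = np.linspace(0, period, n_phases, endpoint=False)
    angle = 2 * np.pi * (x[:, None] - phases[None, :]) / period
    return r_max * np.exp(kappa * (np.cos(angle) - 1.0))

def nearest_far_distance(R, x, i, exclude=1.0):
    """Smallest response-space distance from position x[i] to any position more than `exclude` away."""
    far = np.abs(x - x[i]) > exclude
    return float(np.min(np.linalg.norm(R[far] - R[i], axis=1)))

x = np.linspace(0, 14.9, 150)                                      # positions, step 0.1
single = grid_module(x, period=3.0)                                # one module
combined = np.hstack([grid_module(x, 3.0), grid_module(x, 5.0)])   # two modules

i = int(np.argmin(np.abs(x - 6.0)))          # probe position x = 6
print(nearest_far_distance(single, x, i))    # ~0: x = 3, 9 and 12 look identical
print(nearest_far_distance(combined, x, i))  # clearly > 0: no distant look-alike
```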

Conclusion

This paper provides a framework linking neural tuning and representational geometry, giving a more complete picture of the neural codes underlying cognitive processes. By examining FI and MI within this framework, it offers insight into how neural encoding can be optimized for efficient and precise information processing. Future directions could include more complex noise models and manifold geometries, to further refine our understanding of neural representations across diverse cognitive operations.