- The paper introduces BDANet, a novel two-stage CNN that uses cross-directional attention between pre- and post-disaster images for improved building segmentation and damage classification.

- It leverages a multi-branch U-Net design and CutMix data augmentation to mitigate class imbalance and boost performance on the extensive xBD dataset.

- Results on xBD show state-of-the-art accuracy, and initializing Stage 2 from the Stage 1 weights shortens training, which makes BDANet a promising tool for time-critical disaster management and response.

"BDANet: Multiscale Convolutional Neural Network with Cross-directional Attention for Building Damage Assessment from Satellite Images" (2105.07364)

Introduction

The paper introduces BDANet, a multiscale convolutional neural network for assessing building damage from satellite images. It addresses the need for accurate, efficient damage assessment after natural disasters by using high-resolution pre- and post-disaster imagery. Its distinguishing component is a cross-directional attention module that models the correlations between the two images of each pair, which improves damage prediction accuracy.

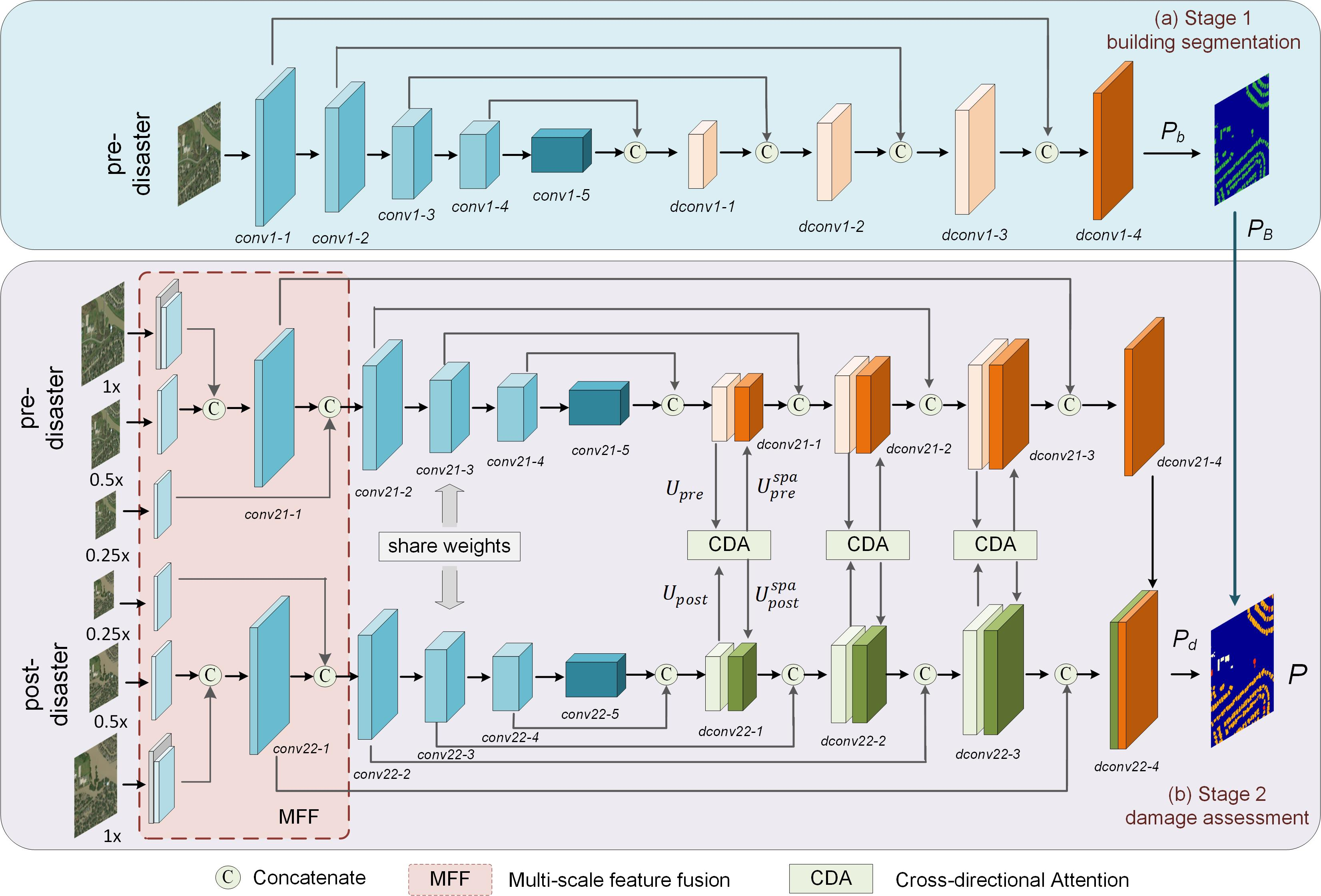

BDANet Architecture

BDANet follows a two-stage approach:

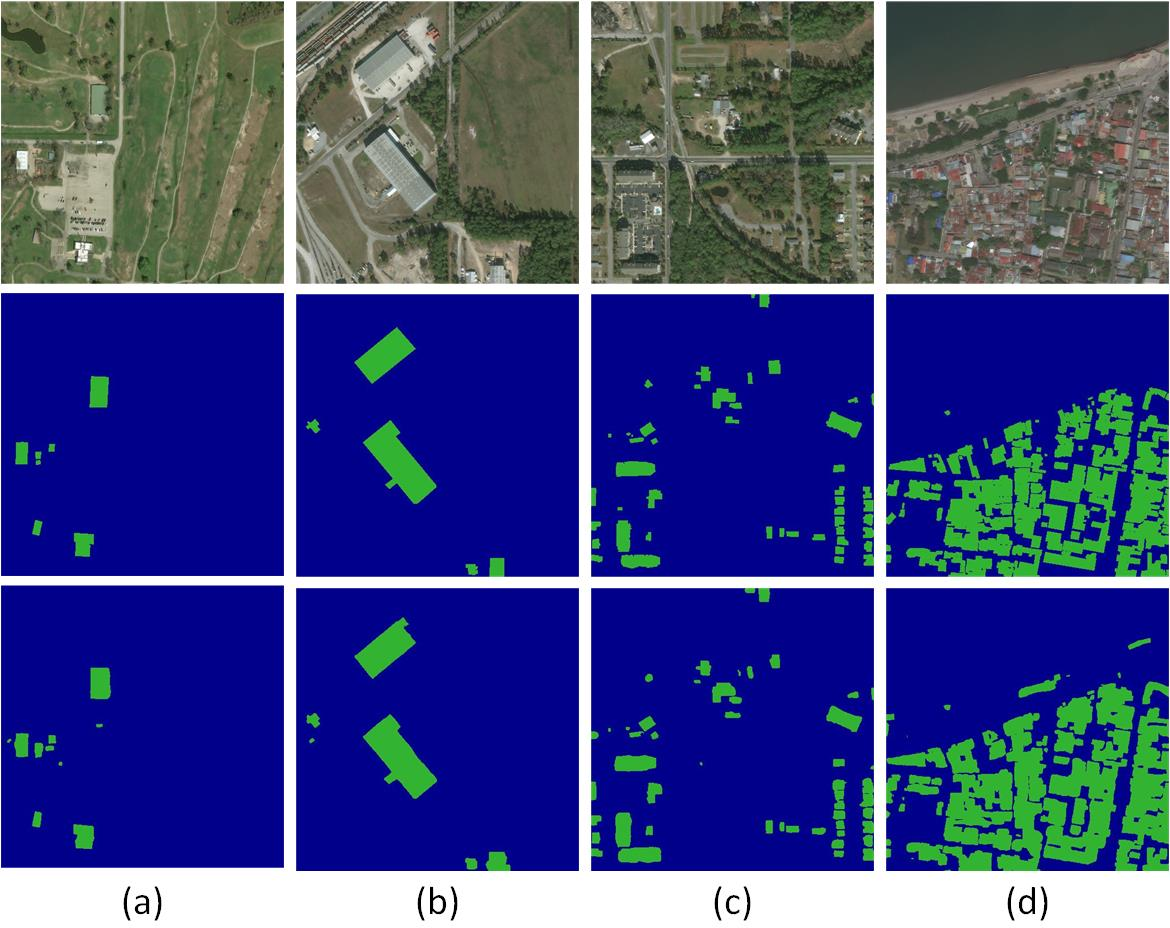

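- Stage 1: Building Segmentation. A U-Net locates the buildings in the imagery.

- Stage 2: Damage Assessment. A two-branch multiscale U-Net, initialized with the Stage 1 weights, processes the pre- and post-disaster images separately and classifies the damage level of each building.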

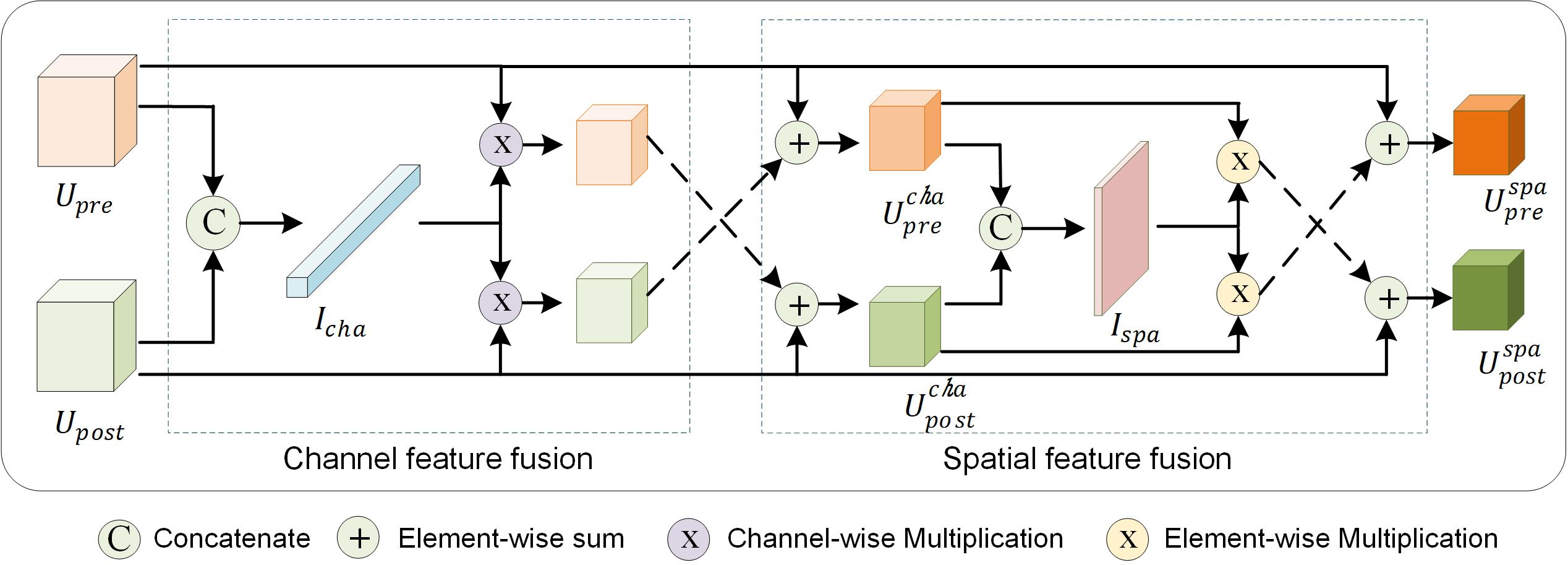

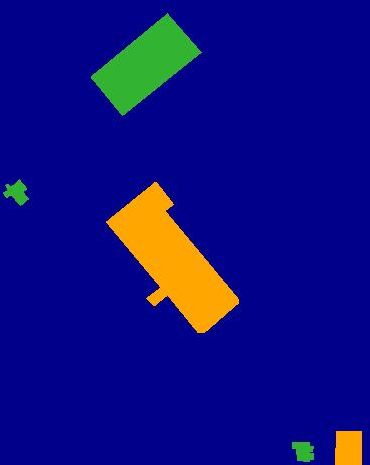

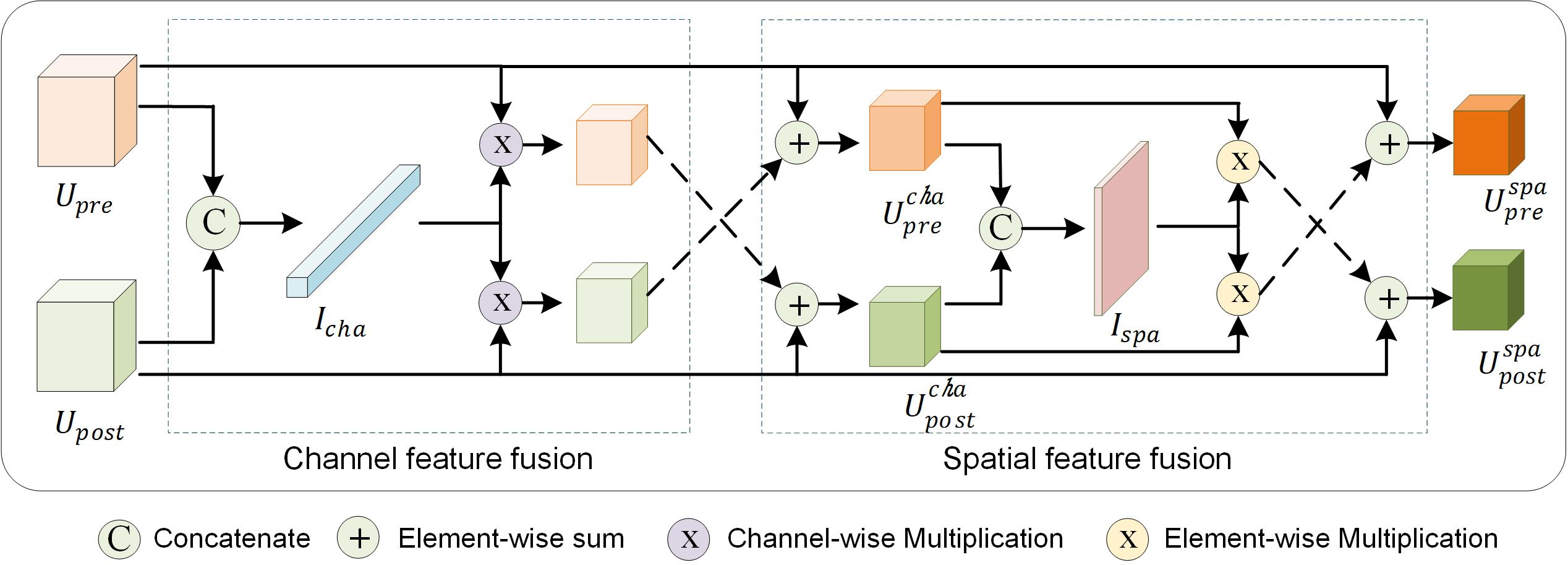

Cross-Directional Attention Module

The CDA module highlights the informative parts of the feature maps by recalibrating feature weights. It applies squeeze-and-excitation along both the spatial and the channel dimensions in a cross-directional fashion: attention derived from the pre-disaster features recalibrates the post-disaster features, and vice versa. This exchange supports more robust damage-level classification.
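The cross-directional recalibration described above can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the function names, the parameter layout, the ReLU bottleneck, and the choice to sum the channel and spatial branches are all assumptions made for illustration.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def channel_gate(feat, w1, w2):
    """Squeeze the spatial dims (global average pool), then excite per channel.

    feat: (C, H, W); w1: (C // r, C); w2: (C, C // r). Returns (C,) gates in (0, 1).
    """
    squeezed = feat.mean(axis=(1, 2))
    hidden = np.maximum(w1 @ squeezed, 0.0)  # ReLU bottleneck (assumed)
    return sigmoid(w2 @ hidden)

def spatial_gate(feat, w):
    """Squeeze the channel dim with a 1x1 projection, then excite per pixel.

    feat: (C, H, W); w: (C,). Returns (H, W) gates in (0, 1).
    """
    return sigmoid(np.tensordot(w, feat, axes=([0], [0])))

def cross_directional_attention(pre, post, params):
    """Recalibrate each branch with gates computed from the OTHER branch."""
    ch_pre = channel_gate(pre, *params["ch_pre"])
    ch_post = channel_gate(post, *params["ch_post"])
    sp_pre = spatial_gate(pre, params["sp_pre"])
    sp_post = spatial_gate(post, params["sp_post"])
    # Assumption: channel- and spatially-recalibrated features are summed.
    pre_out = pre * ch_post[:, None, None] + pre * sp_post[None, :, :]
    post_out = post * ch_pre[:, None, None] + post * sp_pre[None, :, :]
    return pre_out, post_out
```

The point of the sketch is the direction of the gates: the pre-disaster output depends on the post-disaster features through `ch_post` and `sp_post`, and the reverse holds for the post-disaster output.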

Figure 3: Framework of the proposed cross-directional attention (CDA) module.

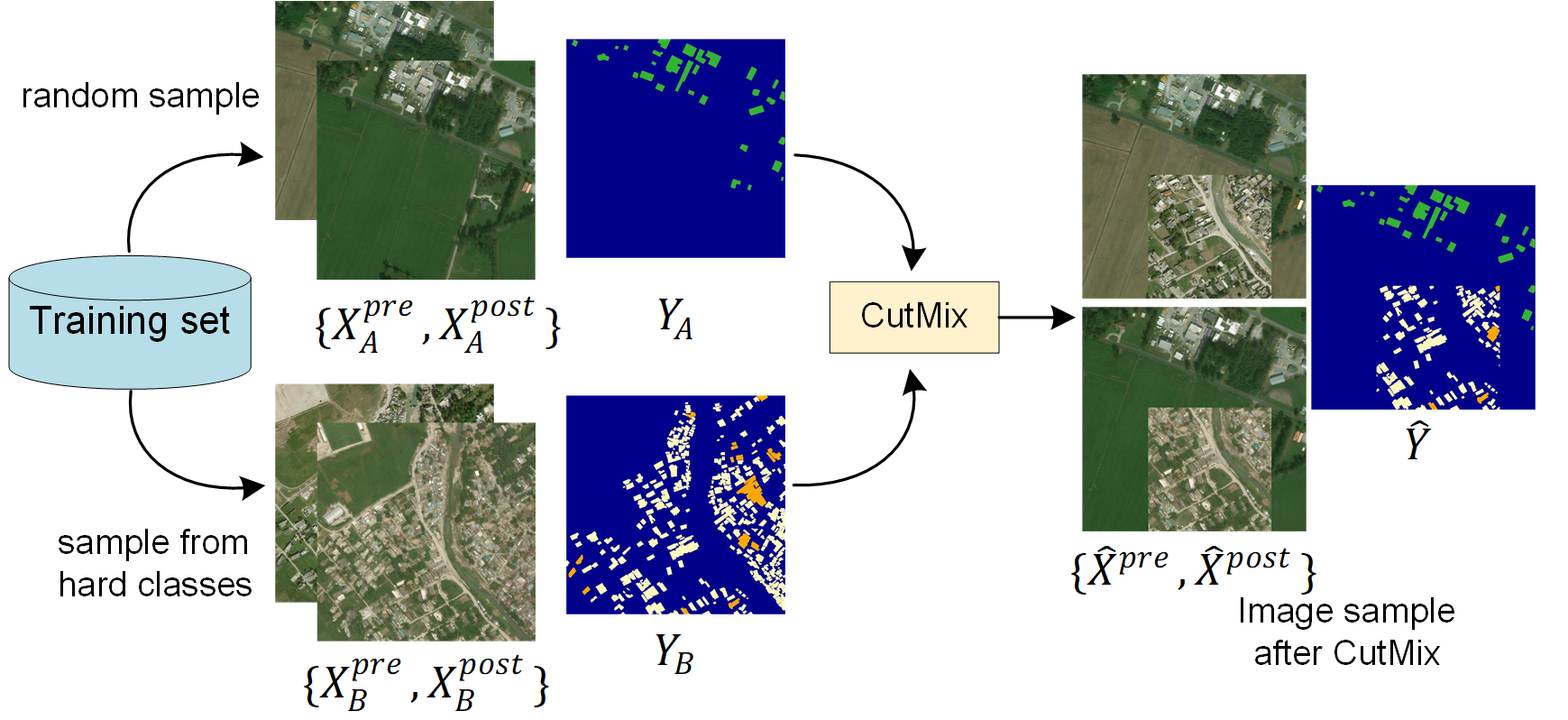

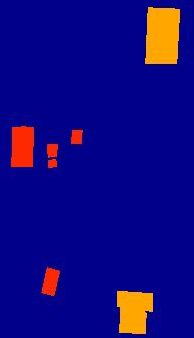

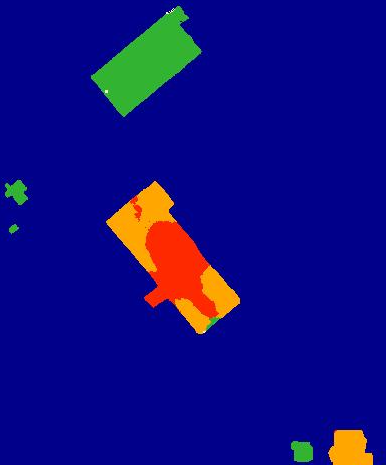

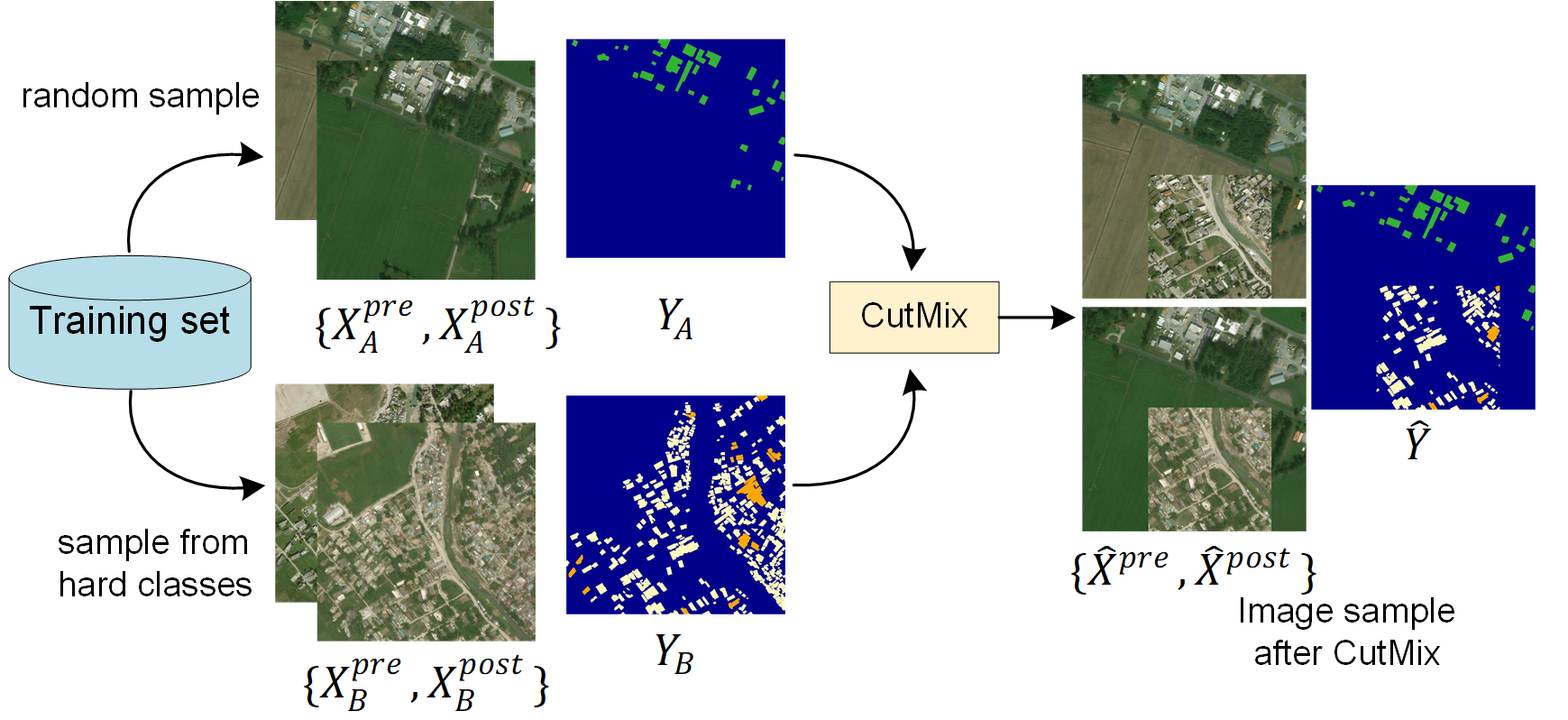

Data Augmentation and CutMix

To counter class imbalance and improve generalization, BDANet applies CutMix augmentation to the damage levels that are hardest to classify. CutMix pastes patches from one training sample into another, which increases training-set diversity and exposes the model to more examples of the difficult classes.
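A minimal sketch of CutMix for paired imagery follows. The dictionary layout (`pre`, `post`, `mask`) and both function names are hypothetical, and the way donor samples are selected for the difficult classes is not shown. The box sampling follows the general CutMix recipe (a Beta-distributed mixing ratio), which may differ from the paper's exact settings.

```python
import numpy as np

def random_box(height, width, rng, alpha=1.0):
    """Sample a CutMix box as (top, left, box_height, box_width)."""
    lam = rng.beta(alpha, alpha)
    cut_h = int(height * np.sqrt(1.0 - lam))
    cut_w = int(width * np.sqrt(1.0 - lam))
    cy, cx = rng.integers(height), rng.integers(width)
    top, bottom = np.clip([cy - cut_h // 2, cy + cut_h // 2], 0, height)
    left, right = np.clip([cx - cut_w // 2, cx + cut_w // 2], 0, width)
    return int(top), int(left), int(bottom - top), int(right - left)

def cutmix_pair(target, donor, box):
    """Paste the same region of the donor into the pre image, post image, and mask.

    target, donor: dicts with 'pre' and 'post' of shape (H, W, 3) and 'mask' of
    shape (H, W). Using one box for all three keeps the pair and labels aligned.
    """
    top, left, h, w = box
    out = {key: value.copy() for key, value in target.items()}
    for key in ("pre", "post", "mask"):
        out[key][top:top + h, left:left + w] = donor[key][top:top + h, left:left + w]
    return out
```

Applying one box to both images and to the label mask is the key design choice here: a patch pasted into only one of the two images would create a spurious change between them.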

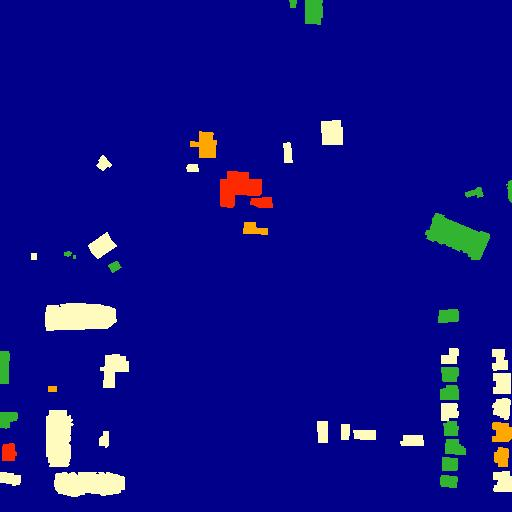

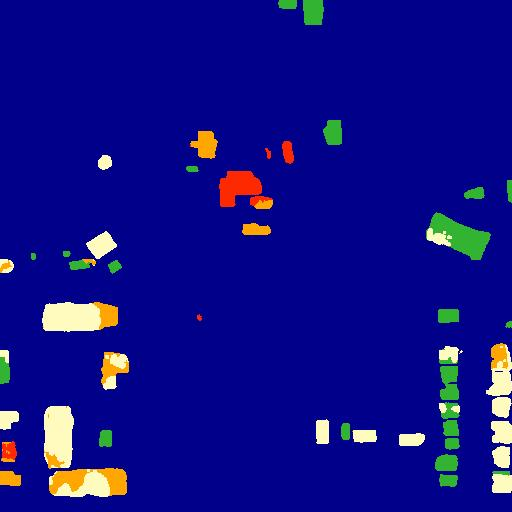

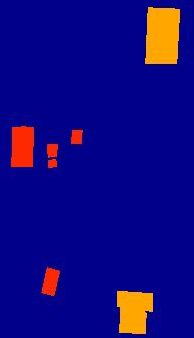

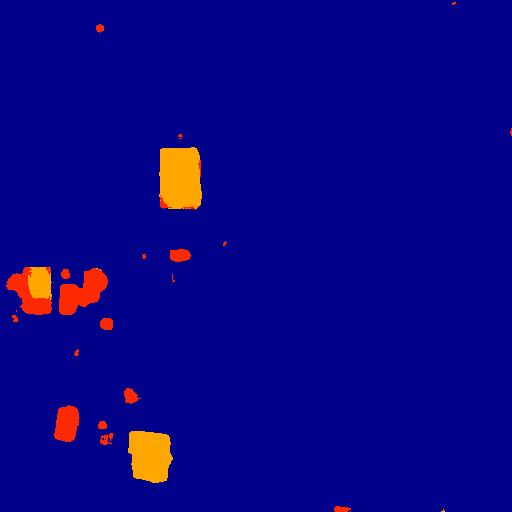

Figure 4: Data augmentation with CutMix for difficult classes.

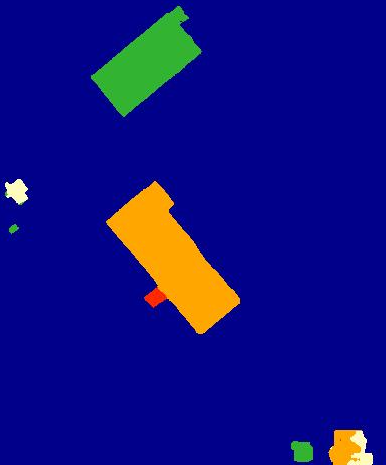

Results and Evaluation

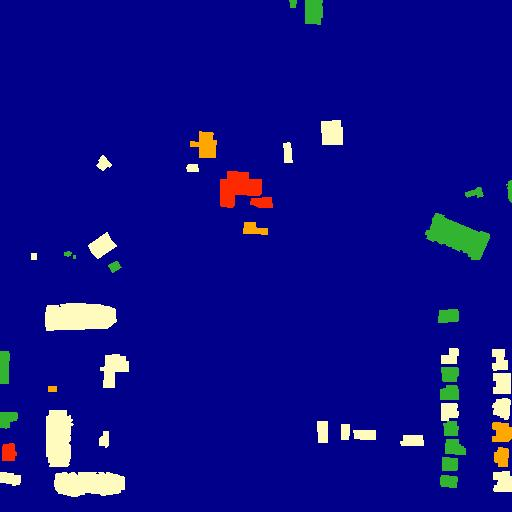

BDANet was evaluated on xBD, the largest available dataset for building damage assessment, where it achieved state-of-the-art overall performance. F1 scores improved most for the minor-damage level, an improvement attributed to the targeted augmentation strategy.
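To make the F1-based evaluation concrete, the sketch below computes per-class F1 and combines the classes with a harmonic mean. The harmonic-mean aggregation is the convention used in xView2-style scoring on xBD; treating it as the paper's exact protocol is an assumption, and the function names are illustrative.

```python
import numpy as np

def f1_per_class(y_true, y_pred, classes):
    """Return one F1 score per damage class from flat label arrays."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    scores = []
    for c in classes:
        tp = np.sum((y_pred == c) & (y_true == c))
        fp = np.sum((y_pred == c) & (y_true != c))
        fn = np.sum((y_pred != c) & (y_true == c))
        denom = 2 * tp + fp + fn
        scores.append(float(2 * tp / denom) if denom else 0.0)
    return scores

def harmonic_mean(values, eps=1e-6):
    """Harmonic mean; a single weak class pulls the result down sharply."""
    return len(values) / sum(1.0 / (v + eps) for v in values)
```

Because the harmonic mean is dominated by its smallest term, gains on a weak class such as minor damage move the aggregate far more than equal gains on an already strong class.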

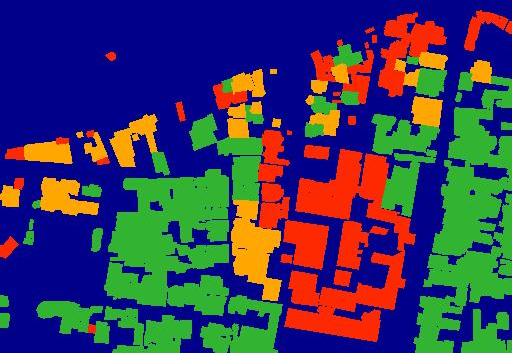

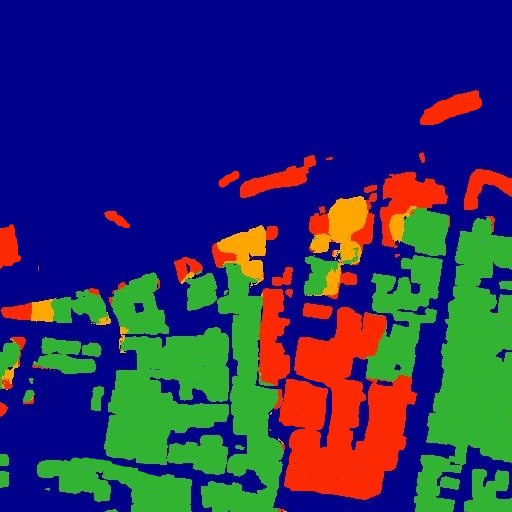

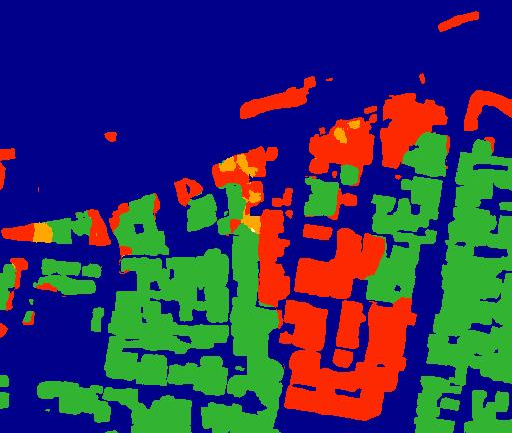

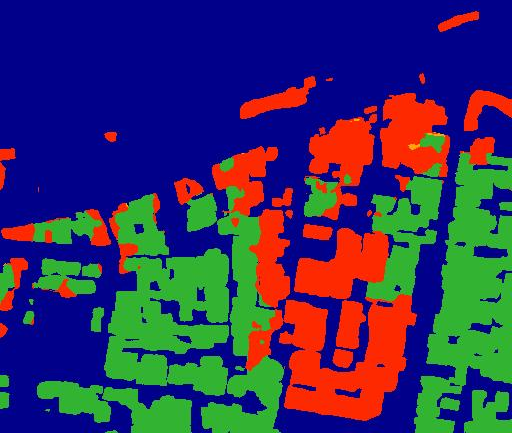

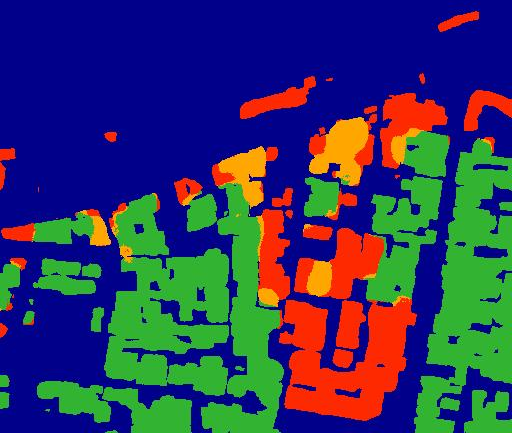

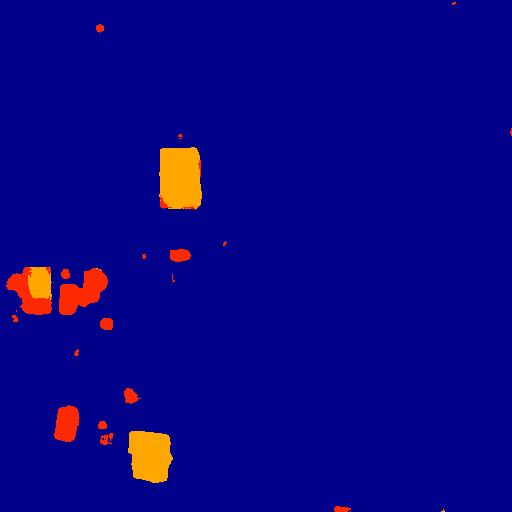

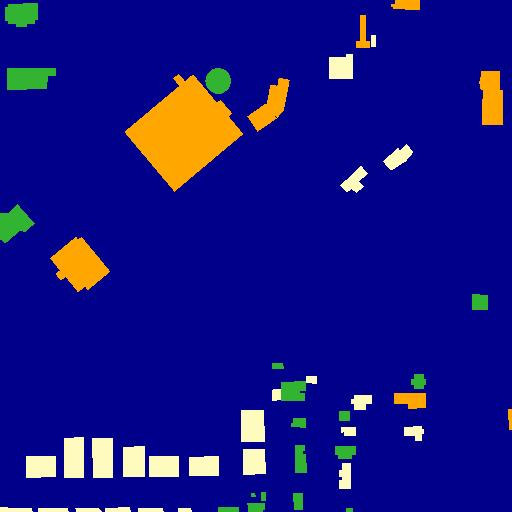

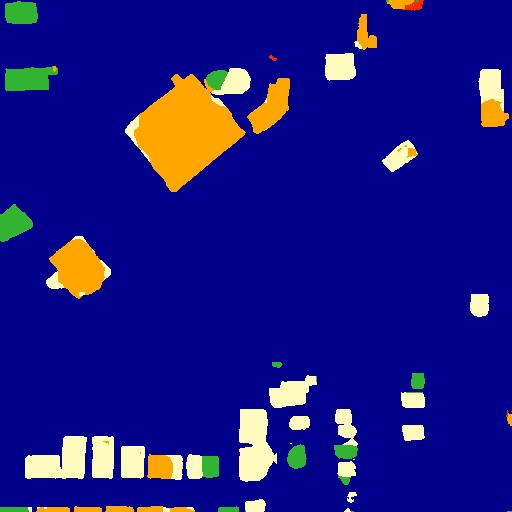

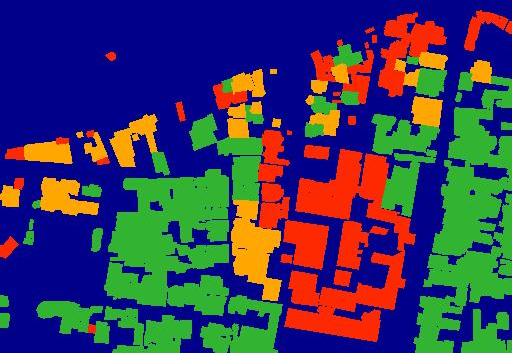

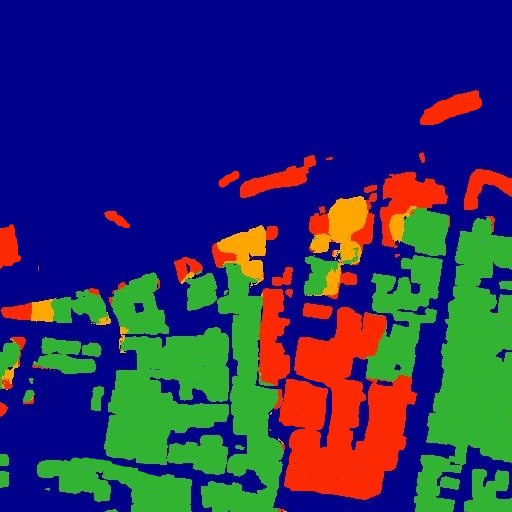

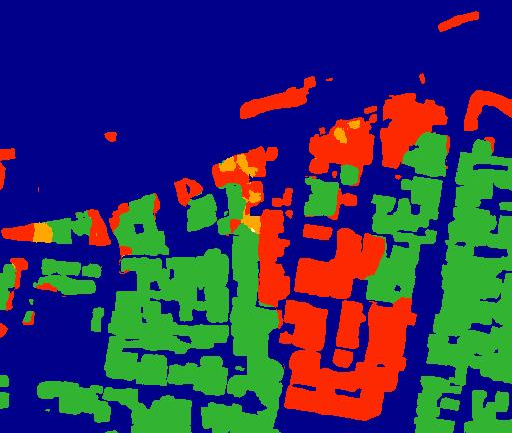

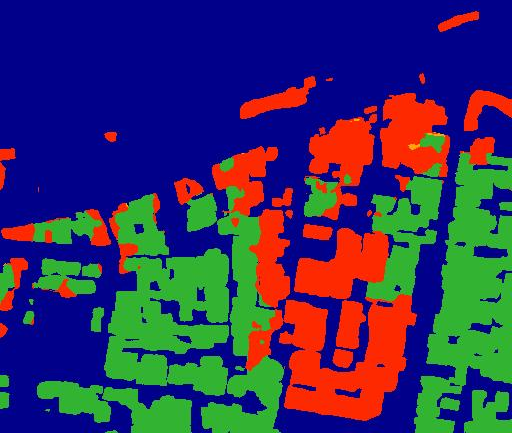

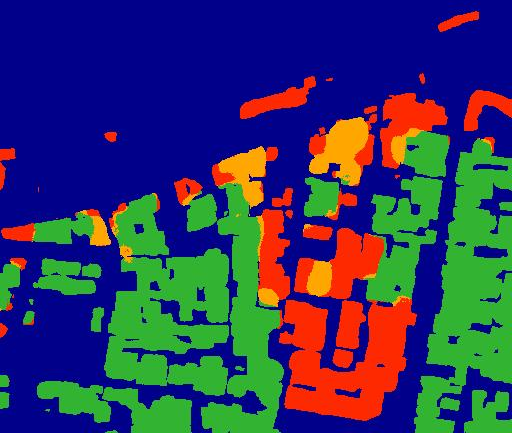

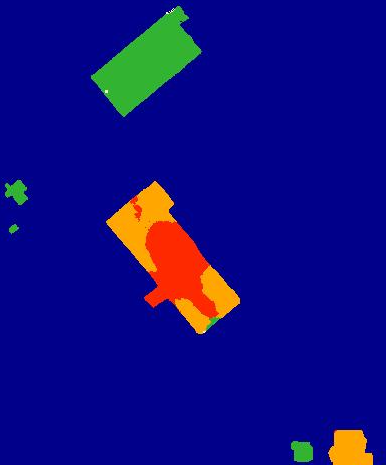

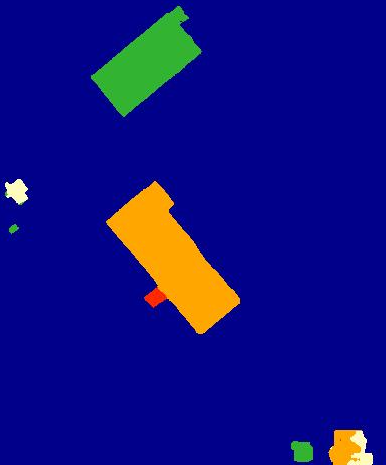

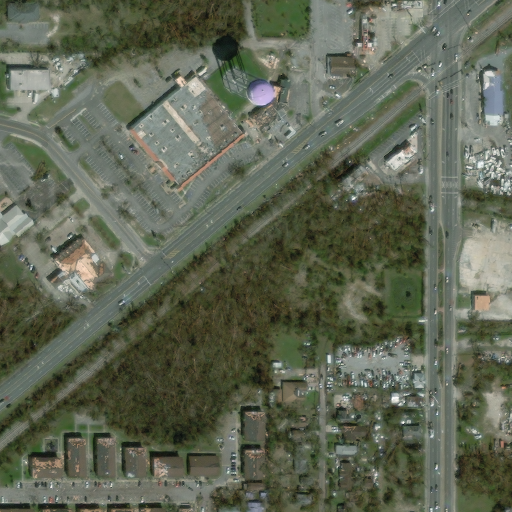

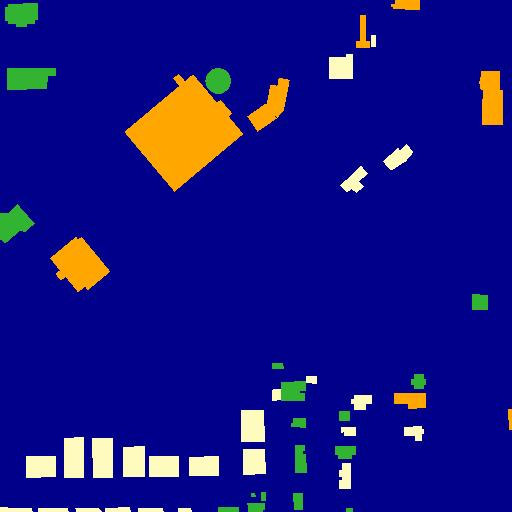

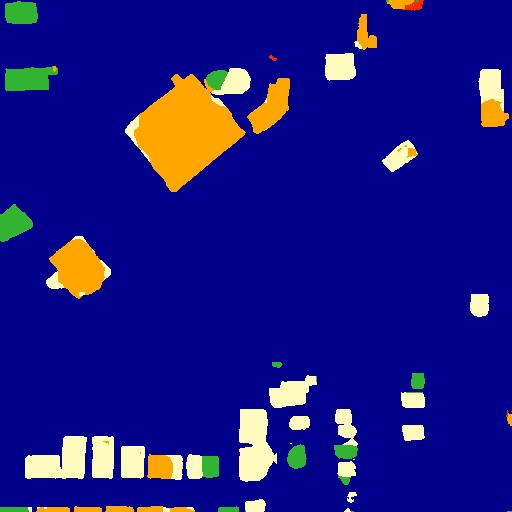

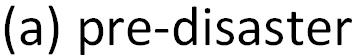

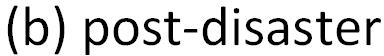

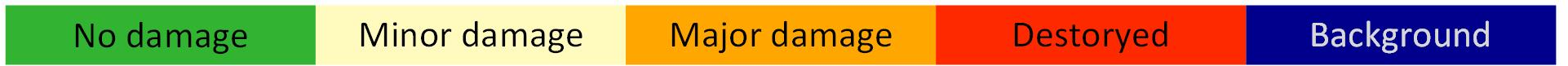

Figure 5: Damage assessment results. From left to right: (a) pre-disaster image, (b) post-disaster image, (c) the ground-truth, (d) FCN, (e) SegNet, (f) DeepLab and (g) our proposed network.

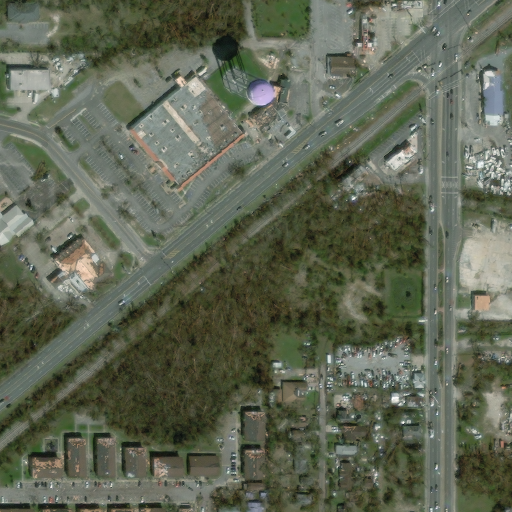

Computational Efficiency

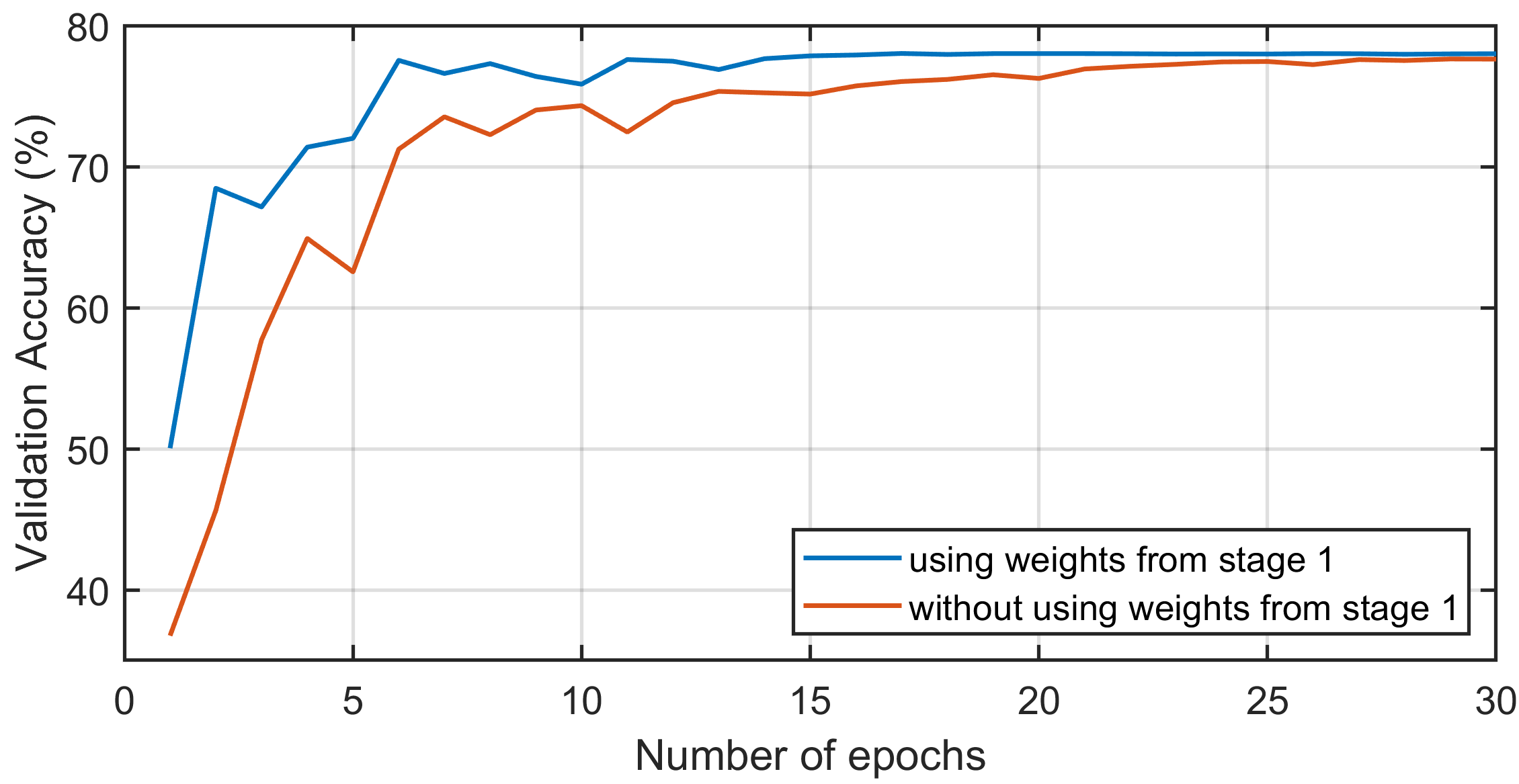

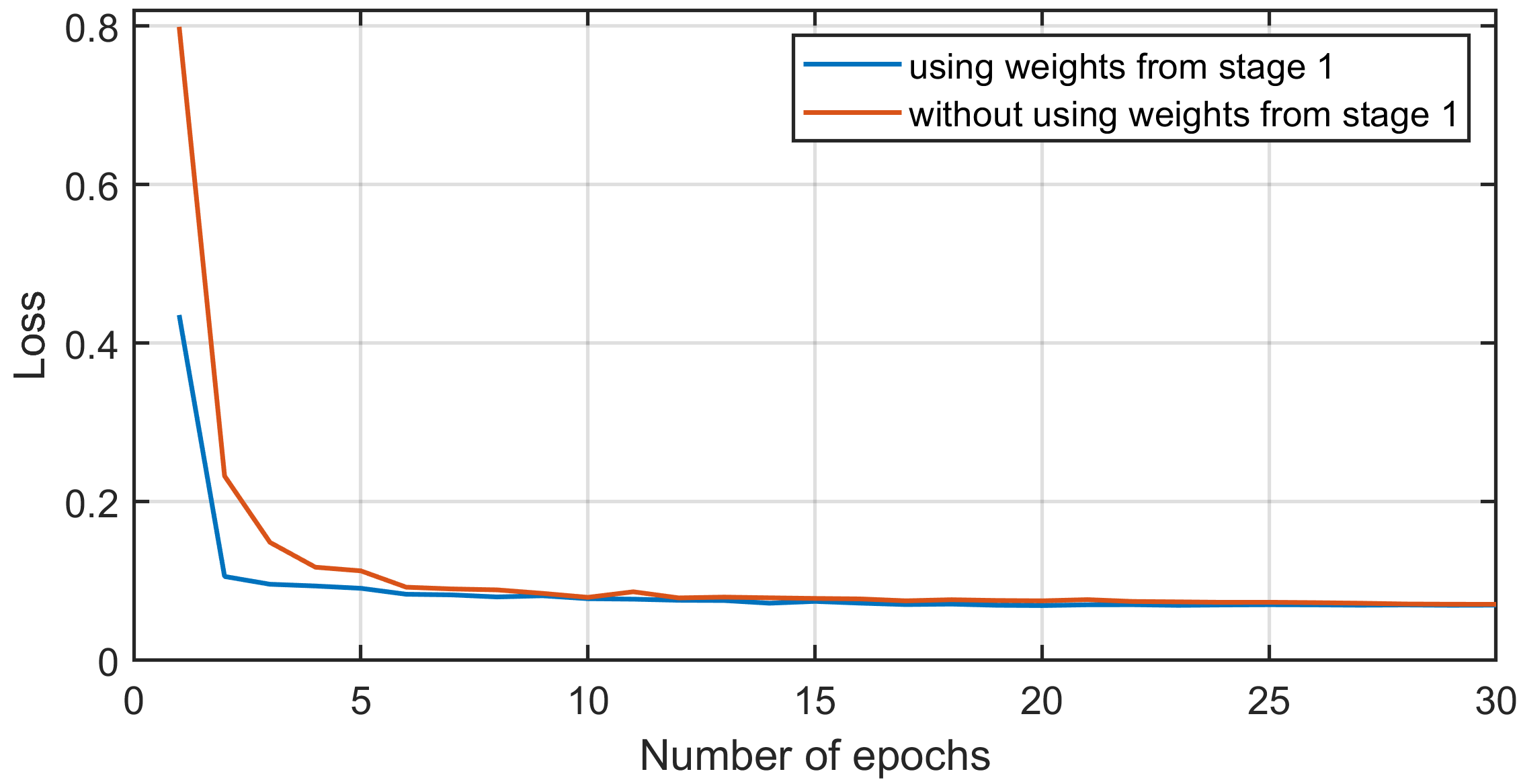

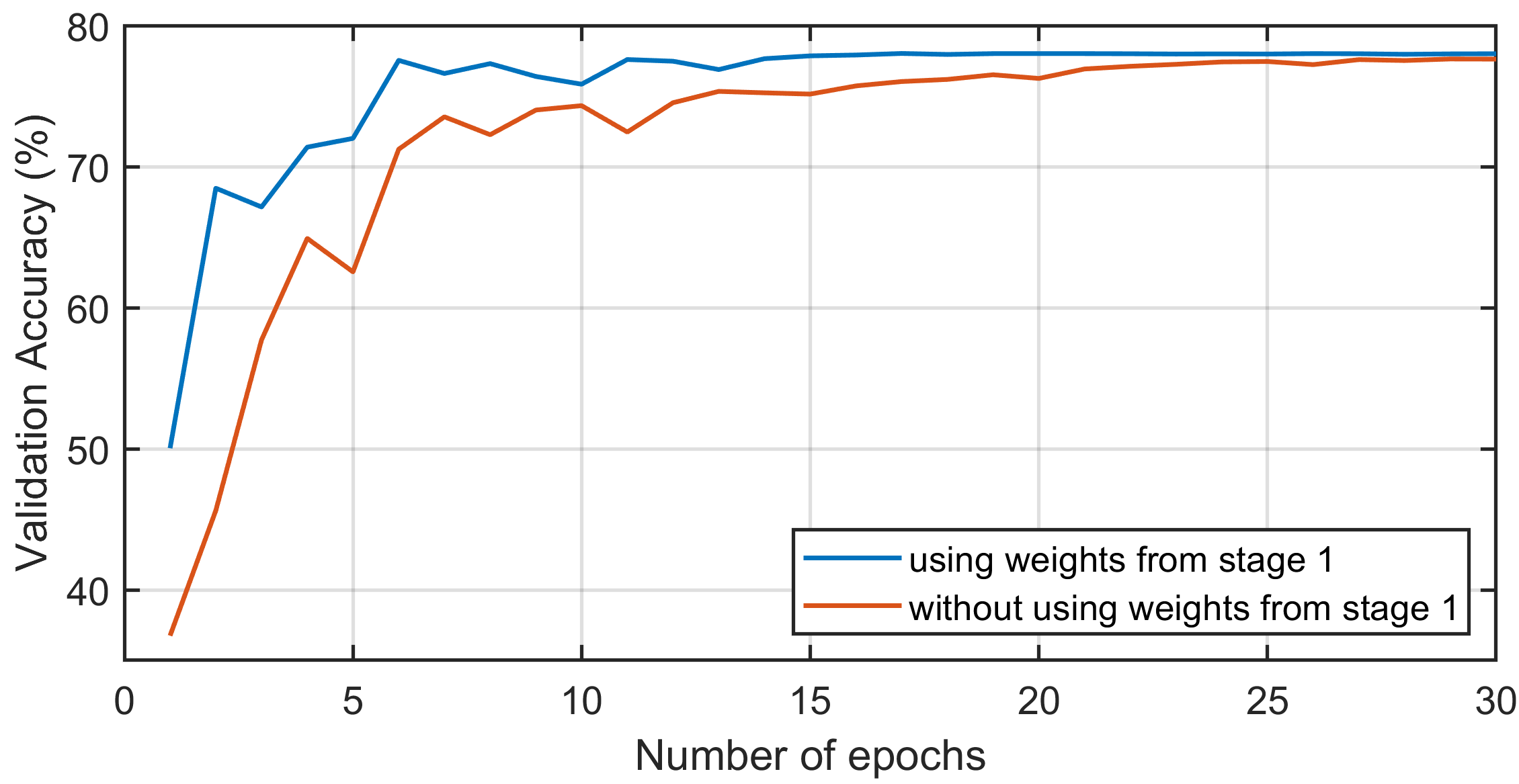

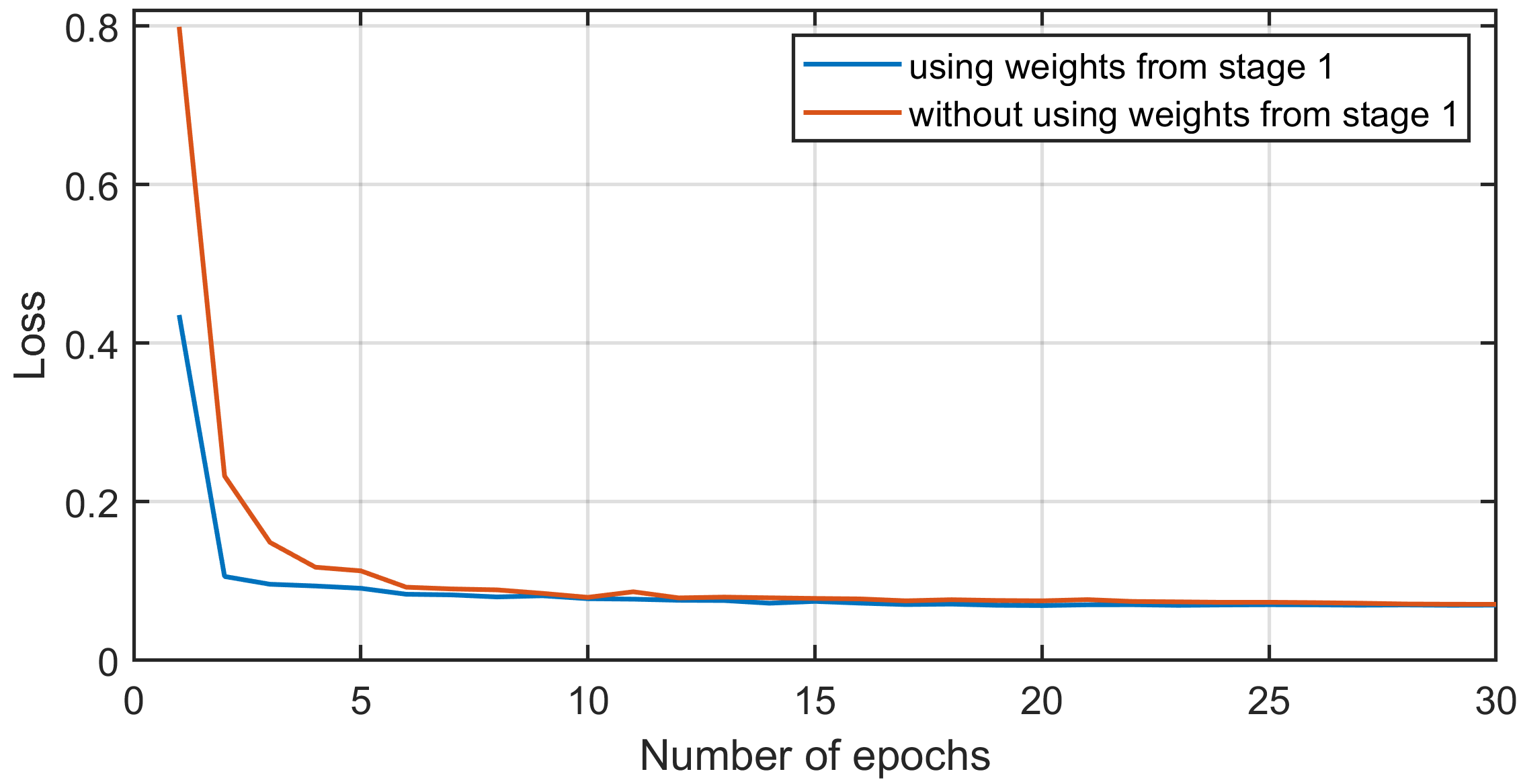

Initializing Stage 2 with the weights from Stage 1 significantly reduced training time and gave high validation accuracy from the early epochs. An analysis of computational cost shows that BDANet is efficient relative to the compared networks, with the CDA and multiscale feature fusion (MFF) modules adding only a marginal increase in resource use.

Figure 6: Evaluation of the training efficiency in Stage 2 by applying network weights from Stage 1 as initialization. (a) Validation accuracy (%). (b) Cross-entropy loss.
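The Stage 1 to Stage 2 initialization can be sketched as a name- and shape-matched copy of weights into each branch. The weight names, the `pre`/`post` branch prefixes, and the function name are hypothetical; the sketch only illustrates the idea of reusing Stage 1 weights, not the authors' code.

```python
import numpy as np

def init_from_stage1(stage1_weights, stage2_weights, branches=("pre", "post")):
    """Copy Stage 1 weights into each Stage 2 branch where name and shape match.

    Both arguments map parameter names to arrays. Stage 2 parameters with no
    Stage 1 counterpart (for example attention layers) keep their own values.
    """
    initialized = dict(stage2_weights)
    copied = []
    for branch in branches:
        for name, value in stage1_weights.items():
            key = f"{branch}.{name}"
            if key in initialized and initialized[key].shape == value.shape:
                initialized[key] = value.copy()
                copied.append(key)
    return initialized, copied
```

Under this scheme both branches start from the same segmentation features, so Stage 2 training mainly has to learn the new modules and the damage classifier, which is consistent with the early high validation accuracy reported.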

Conclusion

BDANet provides a two-stage framework for building damage assessment that improves damage detection accuracy through the CDA module and targeted CutMix augmentation. Future work may explore adaptive learning strategies for real-time assessment and more efficient deployment in disaster management operations. The methods and results point to promising directions for further research in automated remote sensing and disaster response.