- The paper introduces a deep clustering method that simultaneously learns multiple data facets using a hierarchical VAE with a Mixture-of-Gaussians prior.

- It demonstrates competitive performance on datasets like MNIST, 3DShapes, and SVHN by effectively disentangling distinct data characteristics.

- The model redefines the discrete posterior via Monte Carlo sampling to minimize bias and enhance training stability in the clustering process.

Multi-Facet Clustering Variational Autoencoders

Introduction to Multi-Facet Clustering

The paper presents Multi-Facet Clustering Variational Autoencoders (MFCVAE), a novel approach to deep clustering that addresses the limitations of traditional methods by simultaneously learning multiple data clusterings. High-dimensional datasets such as images or speech data have various abstract characteristics that cannot be adequately captured by a single partitioning strategy. MFCVAE is designed to learn these multiple facets through a hierarchical latent variable model with a Mixtures-of-Gaussians (MoG) prior.

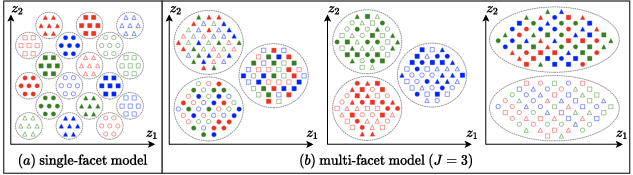

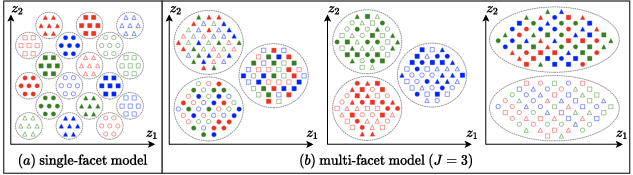

This approach permits disentanglement, capturing distinct abstract data characteristics across separate latent facets efficiently. Each facet represents different levels or aspects intrinsic to the data, while the model's structure incorporates prior independence assumptions across these facets, allowing for sophisticated and scalable learning of the underlying data characteristics (Figure 1).

Figure 1: Latent space of a (a) single-facet model and a (b) multi-facet model (J=3) with two dimensions (z1, z2) per facet. The multi-facet model disentangles data characteristics into three sensible partitions.

MFCVAE Model Architecture

The MFCVAE architecture builds upon Variational Autoencoders (VAEs) by integrating multiple facets into the clustering process. For each facet, the model applies a Mixture-of-Gaussians prior: $c_j \sim \Cat({\pi}_j), \quad {z}_j \mid c_j \sim \mathcal{N}(\bm{\mu}_{c_j}, \bm{\Sigma}_{c_j})$

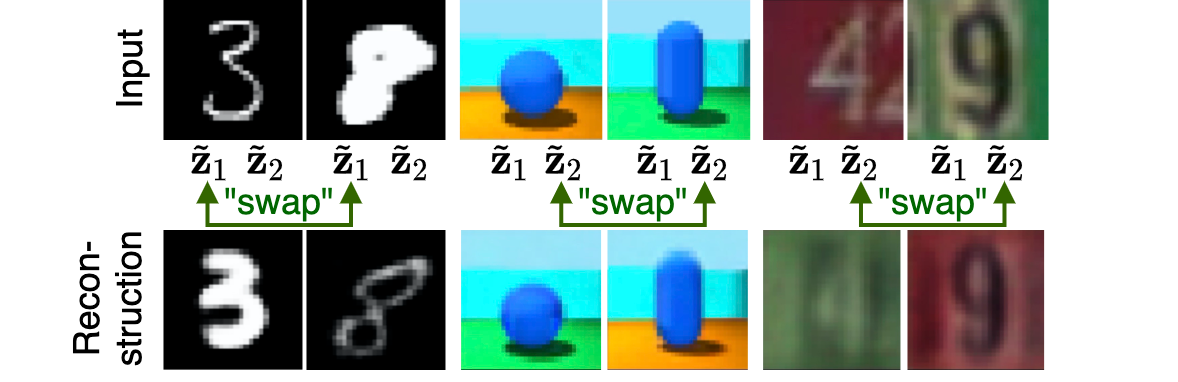

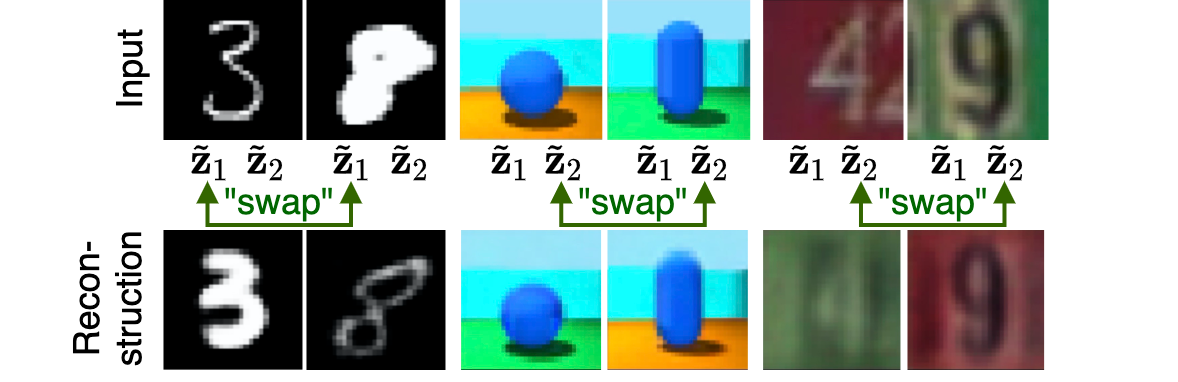

where each latent facet cj represents statistics over observed data samples significant to the analysis. The generative process (depicted in Figure 2) relies on a ladder network architecture that is progressively trained. This architecture fosters stable convergence and disentangled representations by conditioning the decoder on progressively deeper layers, each capturing different abstraction levels.

Figure 2: Graphical model of MFCVAE showcasing the variational posterior $q_{\phi}(\vv{z},{c} | {x})$ and the generative model $p_{\theta}({x},\vv{z},{c})$.

Theoretical Insights and VaDE Tricks

The paper presents novel theoretical advancements, particularly in optimizing the Evidence Lower Bound (ELBO) for models capturing multiple clustering facets. By extending the VaDE trick—originally meant for single-facet models—the authors provide an improved Bayesian posterior for the latent discrete variables. This optimization minimizes bias during model training:

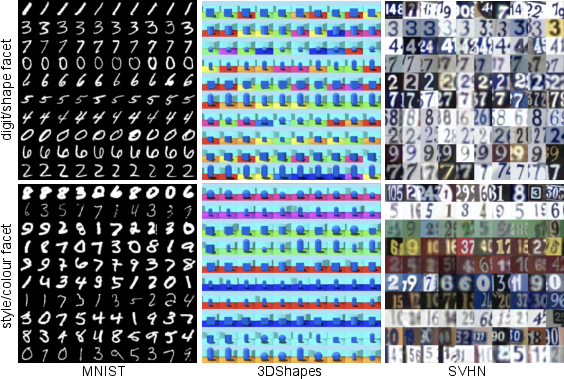

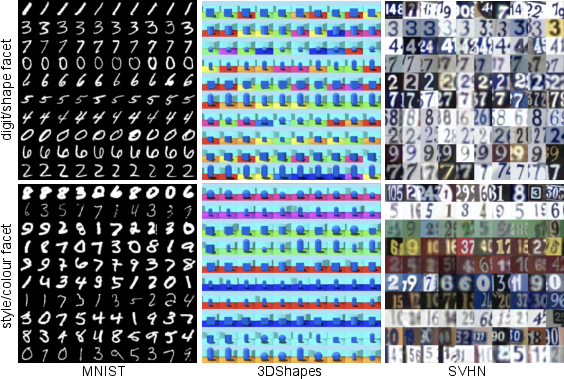

Figure 3: Synthetic samples generated from MFCVAE with various latent facets for different datasets showcasing compositional generative capabilities.

The primary innovation is redefining the posterior for discrete variables using Monte Carlo-estimated samples from the continuous latent space, obviating instability issues related to Gumbel-Softmax tricks typical in hierarchical VAE architectures.

Experimental Evaluation

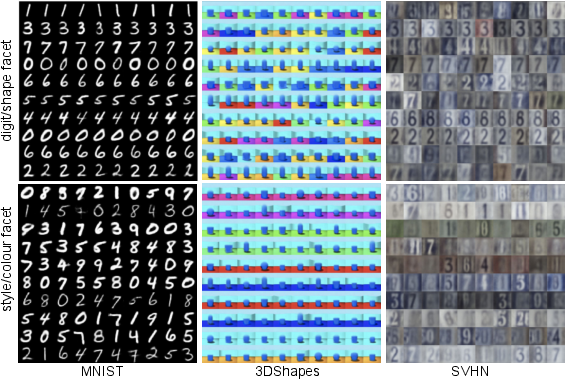

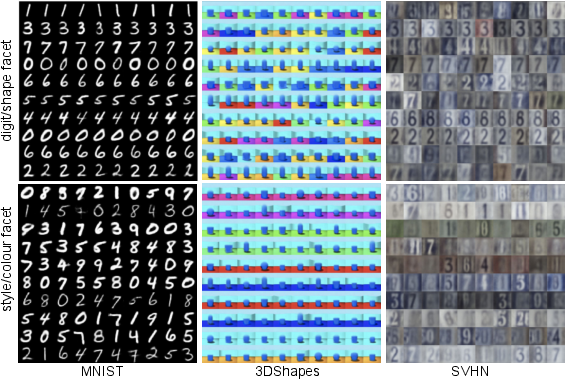

MFCVAE has been tested across several datasets (MNIST, 3DShapes, and SVHN), demonstrating competitive performance relative to generative and non-generative clustering models. It achieves unsupervised classification comparable to models like VaDE, while ensuring superior facet disentanglement that permits independent mathematical manipulation. For instance, on MNIST, the model separate distinct data features such as digit class and stroke style, allowing for targeted data generation and classification enhancements:

Figure 4: Test accuracy over training epochs for models trained on MNIST illustrating robust performance with different architectural configurations.

Quantitative results reveal the model’s strong clustering performance, measured as unsupervised clustering accuracy, aligning closely with supervised class labels. The architecture’s robustness is supported by considerable stability across multiple experimental configurations, evidenced by narrow performance spread over multiple seed trials.

Conclusion

The MFCVAE approach formally advances deep clustering research by respecting high-dimensional data's inherent complexity through structured generative processes. Its model architecture and training paradigm facilitate scalable, end-to-end differentiable learning of independent data facets, addressing previously noted challenges with stability and facet disentanglement. Future research directions suggest exploring automatic tuning of latent dimensions, broader application scenarios beyond imaging, and advanced regularisation techniques to further optimise facet-specific representation learning.