- The paper introduces MusicBERT, a pre-trained Transformer model that uses a novel OctupleMIDI encoding and a bar-level masking strategy to improve symbolic music representation.

- It is pre-trained on a large-scale corpus, the Million MIDI Dataset, and achieves state-of-the-art results on tasks such as melody completion, accompaniment suggestion, and genre classification.

- The study demonstrates that tailored pre-training methods can overcome labeled-data scarcity in music tasks, setting new benchmarks for symbolic music understanding.

MusicBERT: Symbolic Music Understanding with Large-Scale Pre-Training

MusicBERT presents a novel approach to symbolic music understanding through large-scale pre-training, inspired by the successful applications of pre-trained models in NLP. It specifically addresses the unique complexities inherent in symbolic music data that traditional NLP pre-training strategies cannot accommodate comprehensively.

Introduction

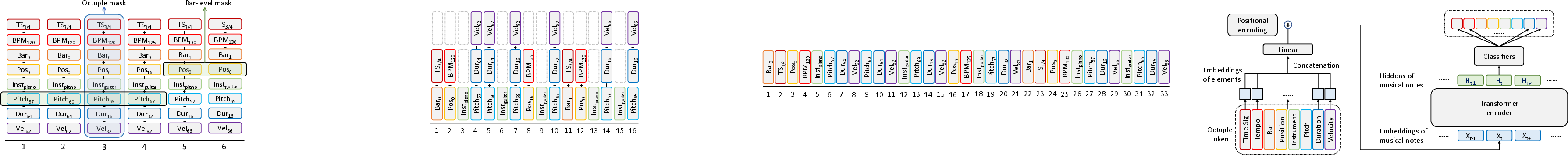

Symbolic music understanding, which involves classifying and matching music pieces from symbolic data such as MIDI, depends on robust musical representations. The main obstacle is the limited availability of labeled data for specific music understanding tasks. MusicBERT treats symbolic music as analogous to language and pre-trains on large-scale unlabeled data to improve these representations. Because music is more structured and more varied than text, the approach needs bespoke mechanisms, namely OctupleMIDI encoding and bar-level masking, to capture what NLP-derived methods miss.

Model Components

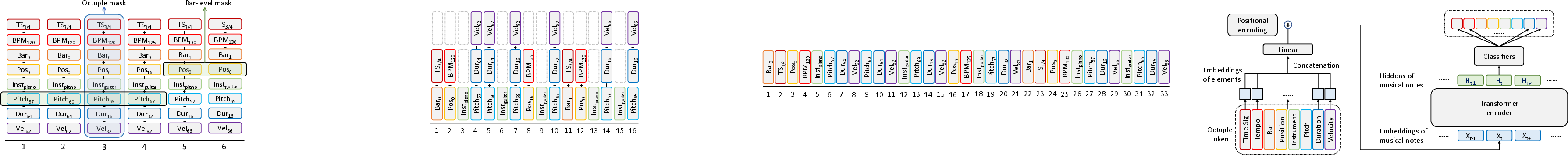

MusicBERT introduces a pre-trained Transformer encoder tailored for music data. This is achieved through the integration of OctupleMIDI encoding, bar-level masking strategies, and the use of a substantial symbolic music corpus, the Million MIDI Dataset (MMD).

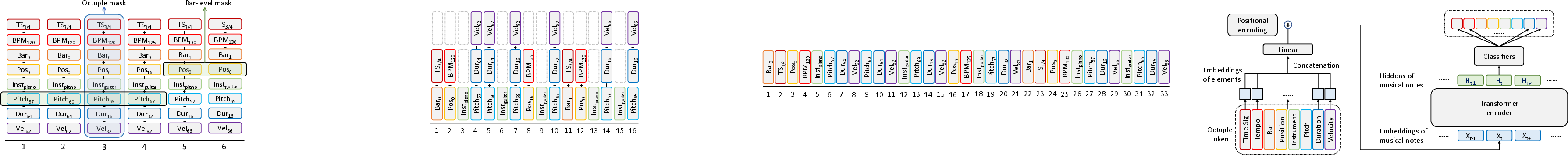

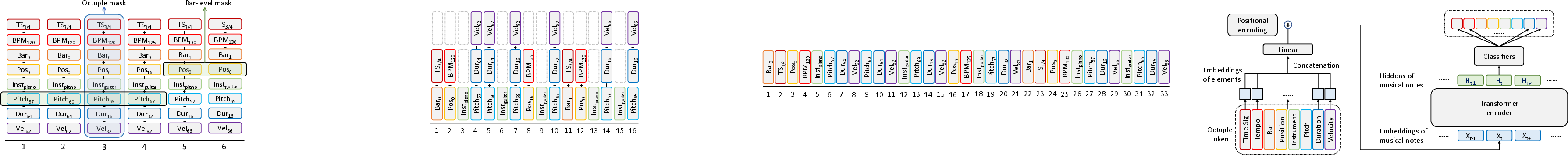

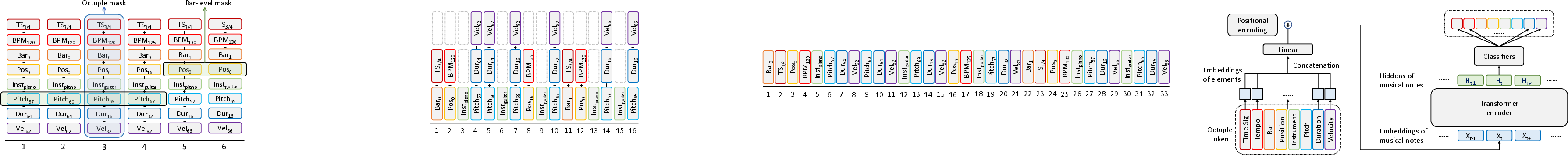

OctupleMIDI Encoding

OctupleMIDI is an encoding scheme that represents each note as a single 8-tuple: time signature, tempo, bar, position, instrument, pitch, duration, and velocity. Because one note occupies one token rather than several, sequences are considerably shorter than with other MIDI-like encodings, which makes processing by Transformer models more efficient while retaining the essential musical information.

Figure 1: Model structure of MusicBERT.

This encoding ensures universality across music genres, supporting variable time signatures and long note durations, establishing a compact and expressive representation of musical data.
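The one-token-per-note idea can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the `Note` and `Octuple` types, the `encode` function, the `ticks_per_bar` resolution, and the assumption of a constant time signature and tempo are all hypothetical simplifications.

```python
from typing import List, NamedTuple


class Note(NamedTuple):
    """Hypothetical input note, with times measured in ticks."""
    start: int       # onset, in ticks from the start of the piece
    length: int      # duration, in ticks
    pitch: int       # MIDI pitch number
    velocity: int    # MIDI velocity
    instrument: int  # MIDI program number


class Octuple(NamedTuple):
    """One token per note, with eight fields."""
    time_sig: str
    tempo: int
    bar: int
    position: int
    instrument: int
    pitch: int
    duration: int
    velocity: int


def encode(notes: List[Note], ticks_per_bar: int = 16,
           time_sig: str = "4/4", tempo: int = 120) -> List[Octuple]:
    """Turn each note into one octuple (constant meter and tempo assumed)."""
    tokens = []
    for n in sorted(notes, key=lambda n: (n.start, n.instrument, n.pitch)):
        # Split the absolute onset into a bar index and a position in that bar.
        bar, position = divmod(n.start, ticks_per_bar)
        tokens.append(Octuple(time_sig, tempo, bar, position,
                              n.instrument, n.pitch, n.length, n.velocity))
    return tokens


notes = [Note(0, 4, 60, 80, 0), Note(4, 4, 64, 80, 0), Note(18, 2, 67, 90, 0)]
tokens = encode(notes)
```

Here three notes yield exactly three tokens, whereas an event-style encoding would spend several tokens per note on pitch, duration, velocity, and timing events.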

Bar-Level Masking

To address the information leakage inherent in token-level masking, MusicBERT applies a bar-level masking strategy. Notes within the same bar often share values such as tempo, time signature, and bar index, so a masked element can frequently be copied from its neighbors. By masking all elements of the same type within a bar at once, MusicBERT removes these shortcuts, which would otherwise compromise pre-training. The method is inspired by the masked language model of BERT but adapts it to the requirements of music data, so that the model must learn from context rather than from redundancy.
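The masking idea can be illustrated with a short Python sketch. It assumes each note is a list of eight integer fields with the bar index at position 2; the names `bar_level_mask`, `MASK`, and `mask_prob` are hypothetical, and refinements such as replacing some selected elements with random values are deliberately left out.

```python
import random

MASK = -1      # hypothetical mask id; real field values are non-negative
BAR_FIELD = 2  # assumed index of the bar field within each 8-field token


def bar_level_mask(tokens, mask_prob=0.15, n_fields=8, seed=None):
    """Mask whole (bar, field) groups instead of individual elements.

    Returns the masked tokens and the prediction targets, where a target
    is the original value for masked elements and None elsewhere.
    """
    rng = random.Random(seed)
    bars = sorted({t[BAR_FIELD] for t in tokens})
    # Decide once per (bar, field) pair, so every note in a bar shares the decision.
    chosen = {(b, f) for b in bars for f in range(n_fields)
              if rng.random() < mask_prob}
    masked, targets = [], []
    for t in tokens:
        bar = t[BAR_FIELD]
        masked.append([MASK if (bar, f) in chosen else v
                       for f, v in enumerate(t)])
        targets.append([v if (bar, f) in chosen else None
                        for f, v in enumerate(t)])
    return masked, targets


tokens = [
    [0, 120, 0, 0, 0, 60, 4, 80],  # bar 0
    [0, 120, 0, 4, 0, 64, 4, 80],  # bar 0
    [0, 120, 1, 2, 0, 67, 2, 90],  # bar 1
]
masked, targets = bar_level_mask(tokens, mask_prob=0.5, seed=0)
```

Because the decision is made per bar and field, a masked tempo in one note can never be read off an unmasked tempo in a neighboring note of the same bar.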

Figure 2: OctupleMIDI encoding.

Pre-Training Corpus

MusicBERT is pre-trained on the Million MIDI Dataset (MMD), which comprises over 1.5 million songs. The dataset is the product of extensive data cleaning and deduplication, which provide the diversity and scale that effective pre-training requires.
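As an illustration of content-based deduplication, the sketch below fingerprints each piece by hashing only its instrument and pitch fields, so that files differing in tempo or velocity but not in notes collapse to one entry. The field indices, the choice of SHA-256, and the function names are assumptions made for this example, not a confirmed description of the MMD pipeline.

```python
import hashlib


def fingerprint(tokens, instrument_idx=4, pitch_idx=5):
    """Hash only the (instrument, pitch) sequence of a piece."""
    key = ",".join(f"{t[instrument_idx]}:{t[pitch_idx]}" for t in tokens)
    return hashlib.sha256(key.encode("utf-8")).hexdigest()


def deduplicate(pieces):
    """Keep the first piece seen for each fingerprint, preserving order."""
    seen = set()
    unique = []
    for name, tokens in pieces:
        fp = fingerprint(tokens)
        if fp not in seen:
            seen.add(fp)
            unique.append(name)
    return unique


a = [[0, 120, 0, 0, 0, 60, 4, 80], [0, 120, 0, 4, 0, 64, 4, 80]]
b = [[0, 100, 0, 0, 0, 60, 2, 70], [0, 100, 0, 4, 0, 64, 2, 70]]  # same notes as a
c = [[0, 120, 0, 0, 0, 62, 4, 80]]
kept = deduplicate([("a.mid", a), ("b.mid", b), ("c.mid", c)])
```

A byte-level file hash would treat `a.mid` and `b.mid` as distinct; hashing the musical content instead catches near-identical copies.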

Implementation and Results

MusicBERT outperforms prior approaches across a range of symbolic music understanding tasks. On melody completion, accompaniment suggestion, genre classification, and style classification, it achieves state-of-the-art results.

Downstream Tasks

In melody completion and accompaniment suggestion, MusicBERT shows improved learning of melodic and harmonic context. For song-level tasks, such as genre and style classification, MusicBERT benefits from its ability to encode long contexts, a consequence of the compact OctupleMIDI encoding, which fits more of a song into a fixed sequence length.

Methodological Insights

MusicBERT's effectiveness is attributed to its encoding and masking strategies, each of which is shown to contribute in ablation studies. Pre-training itself also matters: the pre-trained model improves markedly over non-pre-trained counterparts on all evaluated tasks.

Conclusion

MusicBERT extends the methodology of pre-training to symbolic music understanding, addressing the distinct challenges of the music domain through its encoding and masking approaches. By leveraging a large-scale training corpus, MusicBERT sets new benchmarks on music understanding tasks and opens opportunities for further exploration in music generation and retrieval, as well as in other, unexplored areas of music understanding.