- The paper introduces a general speech restoration framework, VoiceFixer, which employs a two-stage system integrating a ResUNet for analysis and a GAN-based vocoder for synthesis.

- It addresses multiple distortions at once (noise, clipping, reverberation, and low resolution) with a single model, and in the paper's evaluations it outperforms traditional single-task restoration methods.

- Experiments show clear improvements in MOS, SiSPNR, and LSD, supporting the framework's ability to enhance speech quality under real-world conditions.

VoiceFixer: Toward General Speech Restoration with Neural Vocoder

The paper "VoiceFixer: Toward General Speech Restoration With Neural Vocoder" introduces a framework for speech restoration that tackles multiple distortion types simultaneously. VoiceFixer is a generative framework with two main stages: analysis and synthesis. It targets general speech restoration (GSR), a task that extends traditional single-task speech restoration (SSR) methods such as speech denoising, declipping, or super-resolution. VoiceFixer uses a ResUNet for the analysis stage and a neural vocoder for the synthesis stage, and the paper reports strong results on heavily degraded real speech recordings.

Introduction to General Speech Restoration

General speech restoration (GSR) aims to remove multiple distortions with a single model, in contrast to SSR systems, which typically handle one type of distortion at a time. VoiceFixer adopts the GSR task and removes additive noise, room reverberation, low-resolution sampling, and clipping jointly. The design is inspired by the human auditory system, which analyzes an auditory scene before understanding it; VoiceFixer mirrors this with separate analysis and synthesis stages (Figure 1).

Figure 1: Overview of the proposed VoiceFixer system.

Traditional speech restoration methods tend to oversimplify the distortions they model, usually assuming a single distortion type. Real recordings often contain several distortions at once, and this mismatch between training data and real-world conditions degrades performance. By splitting restoration into two stages, VoiceFixer combines a spectral intermediate representation with deep neural networks in each stage.

Methodology

System Architecture

VoiceFixer consists of two core components: an analysis stage modeled by a ResUNet and a synthesis stage driven by a neural vocoder. The analysis stage uses mel spectrograms as intermediate representations because they have lower dimensionality than STFT spectrograms, which makes feature extraction and mapping more efficient (Figure 2).

Figure 2: The architecture of ResUNet.
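To make the dimensionality argument concrete, the sketch below builds a triangular mel filterbank from scratch in NumPy and projects an STFT magnitude spectrogram onto it. The sample rate (44.1 kHz), FFT size (2048), hop (441), and number of mel bands (128) are illustrative assumptions rather than settings confirmed by this summary, and the HTK-style mel formula is one common convention among several.

```python
import numpy as np


def hz_to_mel(f):
    # HTK-style mel scale (one common convention; others exist).
    return 2595.0 * np.log10(1.0 + f / 700.0)


def mel_to_hz(m):
    return 700.0 * (10.0 ** (m / 2595.0) - 1.0)


def mel_filterbank(sr, n_fft, n_mels, fmin=0.0, fmax=None):
    """Triangular mel filterbank of shape (n_mels, n_fft // 2 + 1)."""
    fmax = sr / 2 if fmax is None else fmax
    n_bins = n_fft // 2 + 1
    fft_freqs = np.linspace(0.0, sr / 2, n_bins)
    # n_mels + 2 points equally spaced on the mel scale give the triangle edges.
    hz_points = mel_to_hz(np.linspace(hz_to_mel(fmin), hz_to_mel(fmax), n_mels + 2))
    fb = np.zeros((n_mels, n_bins))
    for i in range(n_mels):
        left, center, right = hz_points[i], hz_points[i + 1], hz_points[i + 2]
        rising = (fft_freqs - left) / (center - left)
        falling = (right - fft_freqs) / (right - center)
        fb[i] = np.maximum(0.0, np.minimum(rising, falling))
    return fb


def stft_magnitude(x, n_fft, hop):
    # Hann-windowed frames, no padding; returns (n_frames, n_fft // 2 + 1).
    window = np.hanning(n_fft)
    n_frames = 1 + (len(x) - n_fft) // hop
    frames = np.stack([x[t * hop : t * hop + n_fft] * window for t in range(n_frames)])
    return np.abs(np.fft.rfft(frames, axis=1))


sr, n_fft, hop, n_mels = 44100, 2048, 441, 128  # assumed, illustrative settings
x = np.random.default_rng(0).standard_normal(sr)  # 1 s of noise as a stand-in signal
spec = stft_magnitude(x, n_fft, hop)
mel = spec @ mel_filterbank(sr, n_fft, n_mels).T
print(spec.shape, mel.shape)  # → (96, 1025) (96, 128)
```

Under these assumptions each frame shrinks from 1025 linear-frequency bins to 128 mel bands, roughly an 8x reduction in the size of the representation the analysis network has to predict.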

The synthesis stage uses a GAN-based vocoder, TFGAN, which generates waveforms from mel spectrograms (Figure 3). TFGAN is trained with both frequency-domain and time-domain discriminators to encourage high-quality output. Because the two stages communicate only through the mel spectrogram, they can be trained separately, which makes each stage easier to adapt and improve.

Figure 3: The architecture and training scheme of TFGAN, whose generator is later used as vocoder.
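The decoupling of the two stages can be sketched as two independent sets of training pairs that share only the mel representation. Everything here is hypothetical: `TwoStageRestorer`, `vocoder_pairs`, `analysis_pairs`, and the `toy_*` stand-ins are illustrative names, not VoiceFixer's code, and the assumption that the vocoder is trained on clean speech alone is ours. A real system would plug a trained ResUNet and TFGAN generator into the two slots.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

import numpy as np

Array = np.ndarray


@dataclass
class TwoStageRestorer:
    """Hypothetical wrapper around the analysis and synthesis stages."""
    analysis: Callable[[Array], Array]  # degraded waveform -> estimated clean mel
    vocoder: Callable[[Array], Array]   # mel spectrogram -> waveform

    def __call__(self, degraded: Array) -> Array:
        # At inference time the mel spectrogram is the only interface between stages.
        return self.vocoder(self.analysis(degraded))


def vocoder_pairs(clean: List[Array], mel_fn: Callable[[Array], Array]) -> List[Tuple[Array, Array]]:
    # Assumed: the vocoder never sees distortions; it learns mel(clean) -> clean.
    return [(mel_fn(x), x) for x in clean]


def analysis_pairs(clean: List[Array], distort_fn: Callable[[Array], Array],
                   mel_fn: Callable[[Array], Array]) -> List[Tuple[Array, Array]]:
    # The analysis model learns degraded waveform -> mel(clean).
    return [(distort_fn(x), mel_fn(x)) for x in clean]


def toy_mel(x: Array) -> Array:
    # Stand-in features (mean |x| per 16-sample frame), NOT a real mel spectrogram.
    return np.abs(x).reshape(-1, 16).mean(axis=1)


def toy_distort(x: Array) -> Array:
    # Stand-in distortion: hard clipping only.
    return np.clip(x, -0.5, 0.5)


def toy_vocoder(m: Array) -> Array:
    # Stand-in "vocoder": repeat each frame value back to the sample rate.
    return np.repeat(m, 16)


rng = np.random.default_rng(0)
clean = [rng.standard_normal(1600) for _ in range(3)]
voc_data = vocoder_pairs(clean, toy_mel)
ana_data = analysis_pairs(clean, toy_distort, toy_mel)
# Placeholder analysis stage: a trained ResUNet would replace toy_mel here.
restorer = TwoStageRestorer(analysis=toy_mel, vocoder=toy_vocoder)
print(len(voc_data), ana_data[0][1].shape, restorer(clean[0]).shape)  # → 3 (100,) (1600,)
```

The point of the structure is that `vocoder_pairs` needs no distortion model at all, so a vocoder can be trained once and reused while the analysis stage is retrained for new distortion mixes.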

Distortion Simulation

The distortion simulation reproduces common real-world degradations: clipping, reverberation, low resolution, and additive noise. Following a composite-function approach, detailed in the paper's experiment section, these distortions are chained during simulation so that the training data reflects realistic settings and the models learn to handle varied combinations.
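A composite distortion can be sketched as a chain of simple functions applied to a clean waveform. This is a minimal NumPy sketch under our own assumptions: the chain order, the synthetic exponentially decaying room impulse response, the FFT-based band-limiting used to approximate low-resolution sampling, and all parameter values are illustrative, not the paper's simulation settings.

```python
import numpy as np


def add_noise(x, noise, snr_db):
    # Scale noise so that 10 * log10(P_x / P_noise) == snr_db.
    p_x = np.mean(x ** 2)
    p_n = np.mean(noise ** 2)
    scale = np.sqrt(p_x / (p_n * 10 ** (snr_db / 10)))
    return x + scale * noise


def add_reverb(x, rir):
    # Convolve with a room impulse response and keep the original length.
    return np.convolve(x, rir)[: len(x)]


def low_resolution(x, sr, target_sr):
    # Approximate sampling at target_sr by zeroing content above target_sr / 2.
    spec = np.fft.rfft(x)
    freqs = np.fft.rfftfreq(len(x), d=1.0 / sr)
    spec[freqs > target_sr / 2] = 0.0
    return np.fft.irfft(spec, n=len(x))


def clip(x, ratio):
    # Hard-clip at a fraction of the peak amplitude.
    threshold = ratio * np.max(np.abs(x))
    return np.clip(x, -threshold, threshold)


def compose(*fns):
    # Apply the given functions left to right.
    def apply(x):
        for f in fns:
            x = f(x)
        return x
    return apply


sr = 16000
rng = np.random.default_rng(0)
t = np.arange(sr) / sr
clean = 0.5 * np.sin(2 * np.pi * 220 * t) + 0.1 * np.sin(2 * np.pi * 5000 * t)
rir = np.exp(-np.arange(2000) / 300.0) * rng.standard_normal(2000)  # synthetic RIR
rir[0] = 1.0  # direct path
noise = rng.standard_normal(sr)

distort = compose(
    lambda x: add_reverb(x, rir),
    lambda x: low_resolution(x, sr, 8000),
    lambda x: clip(x, 0.6),
    lambda x: add_noise(x, noise, snr_db=10.0),
)
degraded = distort(clean)
```

Keeping each distortion as a separate function makes it easy to randomize which ones are applied and with what parameters, which is how a single model can be exposed to many combinations.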

Experimental Results

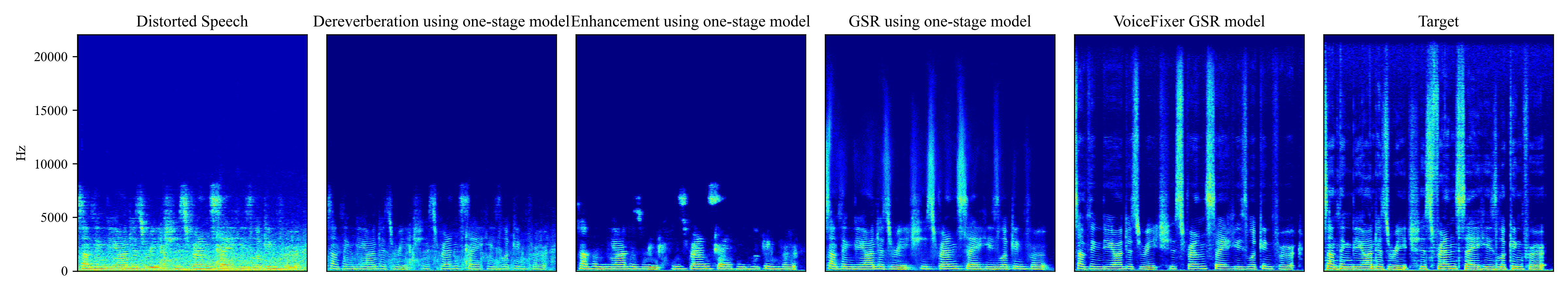

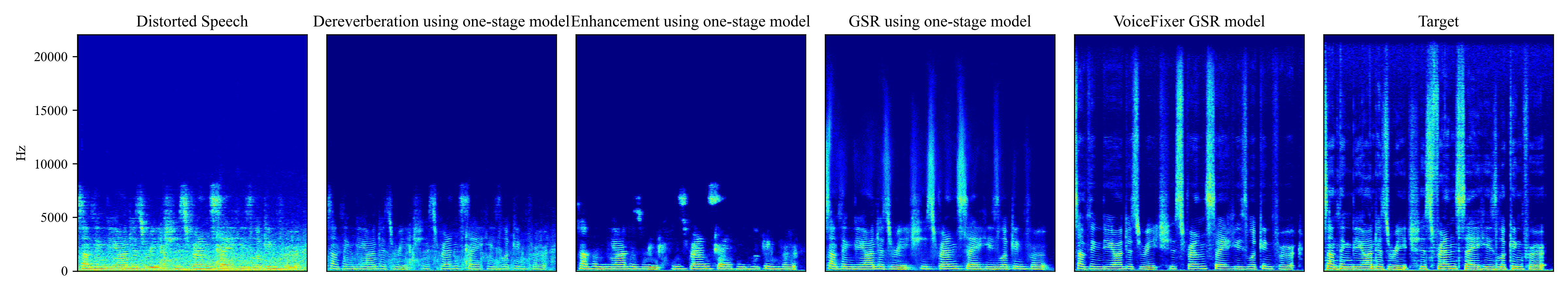

VoiceFixer performs robustly across metrics including mean opinion score (MOS), scale-invariant spectrogram-to-noise ratio (SiSPNR), and log-spectral distance (LSD). In the reported results it outperforms both traditional SSR models and baseline GSR models by a wide margin, particularly when several distortions are present at once (Figure 4).

Figure 4: Comparison between different restoration methods. The unprocessed speech is noisy, reverberant, and low-resolution. The leftmost spectrogram is the unprocessed low-quality speech and the rightmost is the target high-quality spectrogram. In the middle, from left to right, the figures show results processed by a one-stage SSR dereverberation model, an SSR denoising model, a GSR model, and the VoiceFixer-based GSR model.
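The two objective metrics mentioned above can be sketched on magnitude spectrograms of shape (frames, frequency bins). LSD follows its common definition: the per-frame root-mean-square difference of log power spectra, averaged over frames. For SiSPNR we assume it applies the scale-invariant SNR formula to flattened spectrograms; the paper's exact variant (for example, which spectrogram type it uses) may differ, and the small `EPS` constant is our own choice.

```python
import numpy as np

EPS = 1e-8  # assumed numerical floor to avoid log(0) and division by zero


def lsd(ref_mag, est_mag):
    """Log-spectral distance between magnitude spectrograms (frames, freq)."""
    ref_log = np.log10(ref_mag ** 2 + EPS)
    est_log = np.log10(est_mag ** 2 + EPS)
    per_frame = np.sqrt(np.mean((ref_log - est_log) ** 2, axis=1))
    return float(np.mean(per_frame))


def si_spnr(ref_mag, est_mag):
    """SI-SNR formula applied to flattened spectrograms (assumed definition)."""
    s = ref_mag.ravel()
    s_hat = est_mag.ravel()
    # Project the estimate onto the reference; the residual counts as noise.
    target = (np.dot(s_hat, s) / (np.dot(s, s) + EPS)) * s
    noise = s_hat - target
    return float(10 * np.log10((np.dot(target, target) + EPS) / (np.dot(noise, noise) + EPS)))


rng = np.random.default_rng(1)
ref = rng.uniform(0.5, 1.5, size=(100, 64))
scaled = 2.0 * ref                                   # same shape, different gain
noisy = ref + rng.uniform(0.0, 0.5, size=ref.shape)  # additive spectral error

print(lsd(ref, scaled))      # ≈ log10(4) ≈ 0.602: LSD penalizes the gain change
print(si_spnr(ref, scaled))  # very large: SiSPNR ignores the gain change
print(si_spnr(ref, noisy))   # much lower: the additive error is penalized
```

The contrast between the two calls on `scaled` shows why the metrics complement each other: LSD is sensitive to overall level, while the scale-invariant ratio measures only how much of the estimate is not explained by the reference.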

VoiceFixer's ResUNet-based analysis stage consistently outperformed BiGRU and DNN alternatives at reconstruction, owing to its higher capacity for modeling complex spectrogram transformations.

Implications and Future Directions

The paper presents VoiceFixer as a versatile tool for restoring historical speeches and degraded recordings from old movies, where it substantially improves speech quality. Future work could extend the framework to general audio beyond speech and improve neural vocoder designs to handle more diverse audio characteristics.

Conclusion

The VoiceFixer framework addresses general speech restoration by combining deep learning with a two-stage analysis-synthesis design inspired by the human auditory system. It improves speech intelligibility and quality across diverse distortion types and points toward broader applicability in audio restoration.