- The paper introduces DS-TOD, a framework that injects domain-specific knowledge into pretrained language models (PLMs) via targeted term extraction, domain-specific pretraining, and efficient adapter-based specialization.

- It leverages domain-specific corpora such as DomainCC and DomainReddit to improve performance on task-oriented dialog tasks like Dialog State Tracking and Response Retrieval.

- Experimental evaluations demonstrate significant performance gains with reduced computational costs, highlighting DS-TOD’s effectiveness in cross-domain and multi-domain settings.

DS-TOD: Efficient Domain Specialization for Task-Oriented Dialog

The paper "DS-TOD: Efficient Domain Specialization for Task-Oriented Dialog" (arXiv:2110.08395) presents a framework for domain specialization of pretrained language models (PLMs) for Task-Oriented Dialog (TOD) systems. The framework, DS-TOD, injects domain-specific knowledge into PLMs to improve their performance on downstream TOD tasks.

Introduction to DS-TOD

Task-Oriented Dialog systems are conversational agents that help users accomplish specific tasks, such as booking a taxi or ordering food. Most recent TOD systems fine-tune PLMs such as BERT and GPT-2 to achieve state-of-the-art results. However, PLMs pretrained on general-purpose text, or even on general dialog corpora like Reddit, may not capture the domain-specific knowledge that individual TOD domains require.

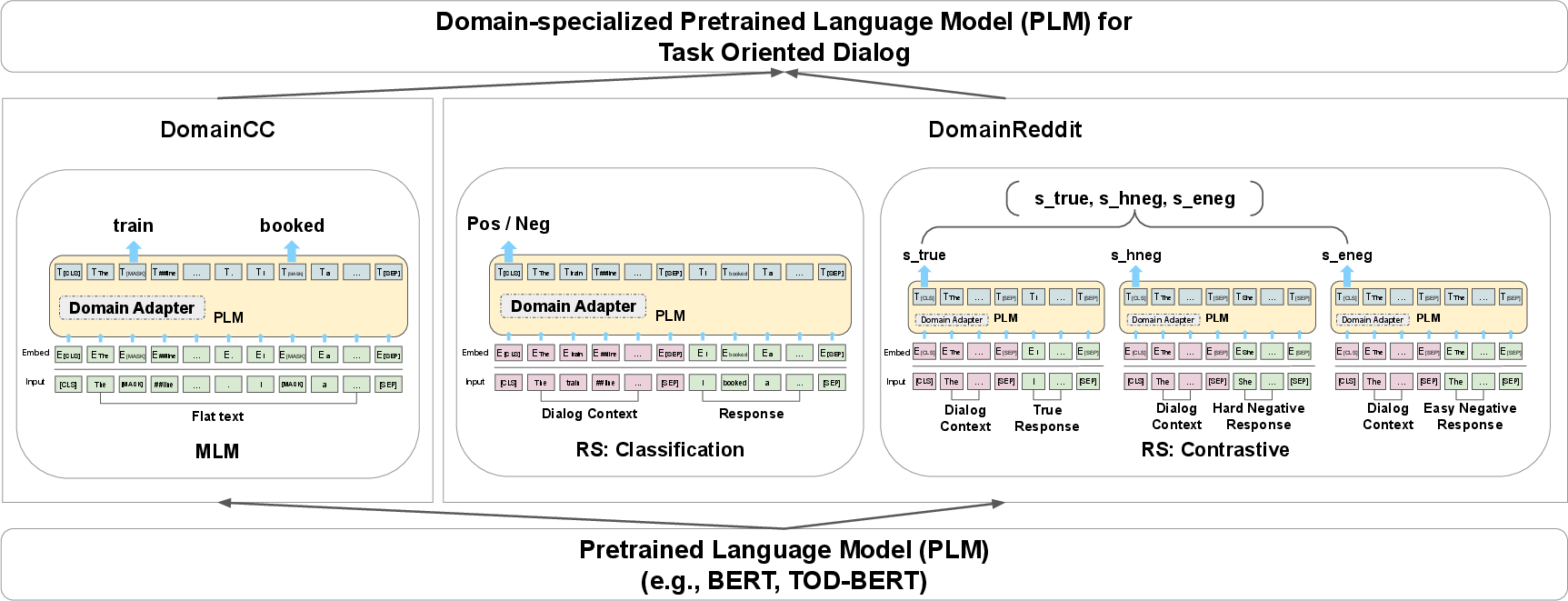

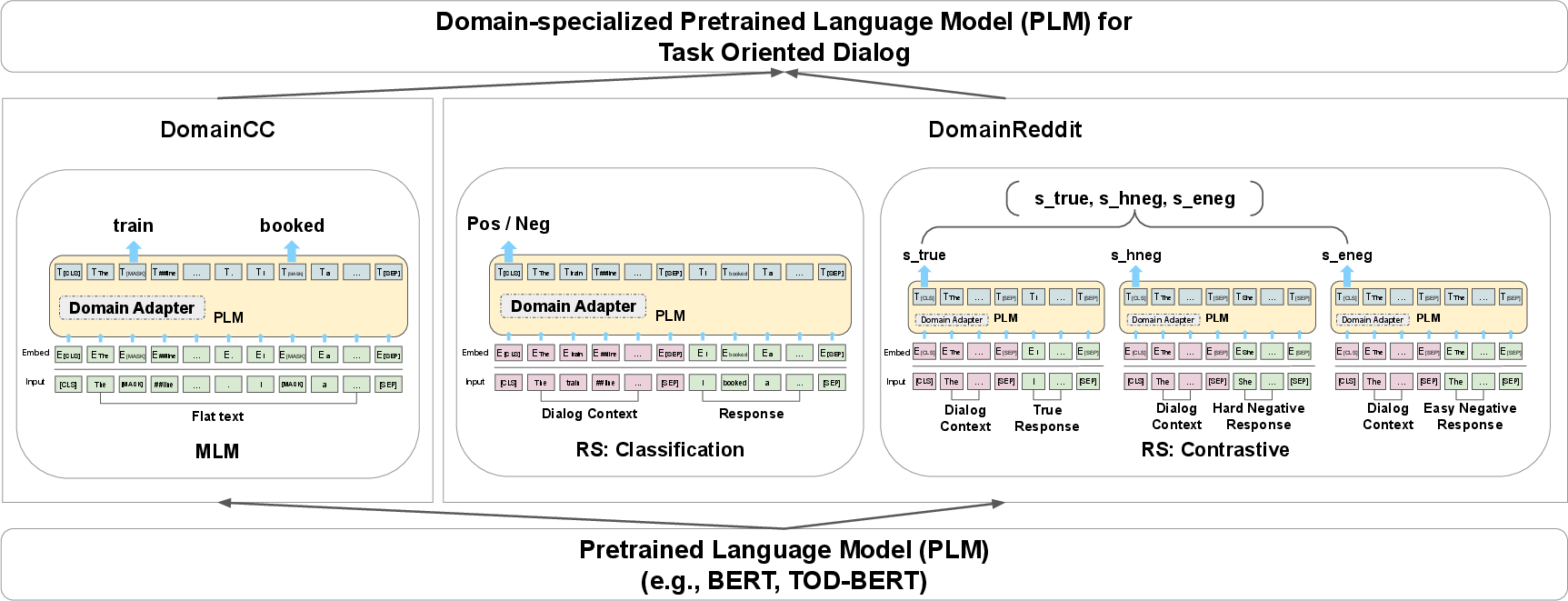

DS-TOD addresses this by creating domain-specialized PLMs through three primary steps:

- Domain-Specific Term Extraction: Extract salient domain-specific terms from a TOD corpus and use them to filter large corpora, yielding the DomainCC and DomainReddit resources.

- Pretraining on Domain-Specific Data: Use Masked Language Modeling and Response Selection objectives on these resources to perform domain-specific pretraining.

- Resource-Efficient Specialization via Domain Adapters: Introduce additional parameter-light layers (domain adapters) to encode domain-specific knowledge, providing an efficient specialization mechanism.

Figure 1: Overview of DS-TOD. Three different specialization objectives for injecting domain-specific knowledge into PLMs.

Domain-Specific Data Collection

The approach leverages the multi-domain MultiWOZ dataset to focus on five domains: Taxi, Restaurant, Hotel, Train, and Attraction. Domain-specific terms are identified using TF-IDF across domain-specific dialogs. These terms are then employed to filter relevant content from large corpora like CCNet and Reddit, resulting in the DomainCC and DomainReddit resources.

DomainCC provides flat (non-dialogic) text, while DomainReddit provides dialogic data, so each domain has a corpus suited to each pretraining objective. Because the filtering is driven by the salient n-grams of each domain, the models are exposed to domain-relevant linguistic patterns.
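The term extraction and filtering steps described above can be sketched in a few lines. This is a minimal illustration, not the authors' pipeline: it uses unigrams instead of n-grams, treats all dialogs of a domain as a single document for TF-IDF, and the function names `salient_terms` and `filter_corpus` as well as the toy data are hypothetical.

```python
import math
import re
from collections import Counter

def tokenize(text):
    """Lowercase and split into alphabetic tokens (a simplification)."""
    return re.findall(r"[a-z]+", text.lower())

def salient_terms(domain_dialogs, top_k=3):
    """Rank the terms of each domain by TF-IDF, one 'document' per domain."""
    term_counts = {
        domain: Counter(tok for utt in utterances for tok in tokenize(utt))
        for domain, utterances in domain_dialogs.items()
    }
    n_domains = len(term_counts)
    # Document frequency: in how many domains does a term occur?
    doc_freq = Counter(t for counts in term_counts.values() for t in counts)
    result = {}
    for domain, counts in term_counts.items():
        total = sum(counts.values())
        scores = {
            t: (c / total) * math.log(n_domains / doc_freq[t])
            for t, c in counts.items()
        }
        # Sort by descending score, breaking ties alphabetically.
        ranked = sorted(scores, key=lambda t: (-scores[t], t))
        result[domain] = [t for t in ranked[:top_k] if scores[t] > 0]
    return result

def filter_corpus(sentences, terms):
    """Keep sentences that contain at least one salient term."""
    term_set = set(terms)
    return [s for s in sentences if term_set & set(tokenize(s))]

# Toy stand-ins for single-domain dialogs and a large web corpus.
dialogs = {
    "taxi": ["i need a taxi to the station", "the taxi driver will arrive soon"],
    "hotel": ["i need a hotel with free parking", "the hotel has free wifi"],
}
corpus = [
    "The taxi driver was friendly.",
    "Stocks fell on Monday.",
    "Our hotel offered free parking.",
]
terms = salient_terms(dialogs)
taxi_text = filter_corpus(corpus, terms["taxi"])
hotel_text = filter_corpus(corpus, terms["hotel"])
```

Terms shared by every domain (such as "need" or "the") receive a zero IDF and are dropped, which is why the filter keeps only text that mentions domain-typical vocabulary.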

Training Objectives

DS-TOD explores multiple training objectives:

- Masked Language Modeling (MLM): Performed on DomainCC, MLM adapts PLMs to domain-specific content by dynamically masking tokens of the input text, so that a new mask is sampled each time a sequence is seen.

- Response Selection (RS): Performed on DomainReddit in two variants. RS-Class casts the task as binary classification of whether a candidate response is the correct continuation of a dialog context, while RS-Contrast uses a contrastive learning objective to help the model encode conversational structure.
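To make the three objectives concrete, the sketch below shows one generic way to prepare their training signals. It is written under stated assumptions rather than taken from the paper: the 15% masking rate, the random choice of one negative per positive in `rs_class_examples`, and the in-batch softmax form of `rs_contrast_loss` are illustrative choices, and all function names are hypothetical.

```python
import math
import random

MASK = "[MASK]"

def dynamic_mask(tokens, rng, mask_prob=0.15):
    """Sample a fresh mask on every call; return (masked_tokens, labels)."""
    masked, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            masked.append(MASK)
            labels.append(tok)   # the model must recover this token
        else:
            masked.append(tok)
            labels.append(None)  # position is ignored by the MLM loss
    return masked, labels

def rs_class_examples(pairs, rng):
    """Binary RS examples: each true pair plus one randomly drawn negative."""
    examples = []
    for i, (context, response) in enumerate(pairs):
        examples.append((context, response, 1))
        j = rng.choice([k for k in range(len(pairs)) if k != i])
        examples.append((context, pairs[j][1], 0))
    return examples

def rs_contrast_loss(sim):
    """In-batch contrastive loss; sim[i][j] scores context i against
    response j, and the diagonal holds the true pairs."""
    losses = []
    for i, row in enumerate(sim):
        log_z = math.log(sum(math.exp(s) for s in row))
        losses.append(log_z - row[i])  # negative log-softmax of the true pair
    return sum(losses) / len(losses)

rng = random.Random(0)
tokens = "i need a cheap hotel in the north".split()
first_view = dynamic_mask(tokens, rng)
second_view = dynamic_mask(tokens, rng)  # a new mask is drawn on each call

pairs = [
    ("any hotels nearby?", "try the one by the station"),
    ("is parking free?", "yes, for guests"),
    ("how many stars?", "it has four stars"),
]
examples = rs_class_examples(pairs, rng)
```

A similarity matrix with a dominant diagonal gives a loss close to zero, while a uniform matrix over a batch of size n gives log(n).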

Adapter-Based Specialization

To mitigate computational costs and catastrophic forgetting, DS-TOD employs adapters: parameter-light modules inserted into the PLM. Domain knowledge is encoded in the adapters, so a model can be specialized for a domain without full model fine-tuning. This is especially useful in multi-domain settings, where combining domain-specific adapters improves performance without extensive retraining.
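A common form of adapter is a bottleneck layer with a residual connection. The sketch below illustrates that general idea in plain Python; it is not the paper's implementation. The class name `Adapter`, the ReLU nonlinearity, the zero initialization of the up-projection, and the bottleneck size of 48 are assumptions chosen for illustration (768 is the hidden size of BERT-base).

```python
import random

def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

class Adapter:
    """Bottleneck adapter: down-project, nonlinearity, up-project, residual."""

    def __init__(self, hidden, bottleneck, rng):
        self.w_down = [[rng.gauss(0.0, 0.02) for _ in range(hidden)]
                       for _ in range(bottleneck)]
        self.b_down = [0.0] * bottleneck
        # Assumption: zero-initialized up-projection, so an untrained
        # adapter leaves the hidden state unchanged.
        self.w_up = [[0.0] * bottleneck for _ in range(hidden)]
        self.b_up = [0.0] * hidden

    def __call__(self, h):
        z = [max(0.0, v + b) for v, b in zip(matvec(self.w_down, h), self.b_down)]
        delta = [v + b for v, b in zip(matvec(self.w_up, z), self.b_up)]
        return [hi + di for hi, di in zip(h, delta)]  # residual connection

    def num_params(self):
        hidden, bottleneck = len(self.w_up), len(self.w_down)
        return 2 * hidden * bottleneck + hidden + bottleneck

def adapter_param_count(hidden, bottleneck):
    """Parameter count of one adapter without instantiating it."""
    return 2 * hidden * bottleneck + hidden + bottleneck

adapter = Adapter(hidden=4, bottleneck=2, rng=random.Random(0))
output = adapter([1.0, 2.0, 3.0, 4.0])

# Illustrative sizes only: hidden size 768, hypothetical bottleneck of 48.
params_per_adapter = adapter_param_count(768, 48)
```

With these illustrative sizes one adapter has 74,544 trainable parameters, which is small next to the roughly 110 million parameters of BERT-base. Only the adapter weights would be updated during specialization, which is what limits both the cost and the risk of overwriting what the PLM already knows.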

Experimental Evaluation

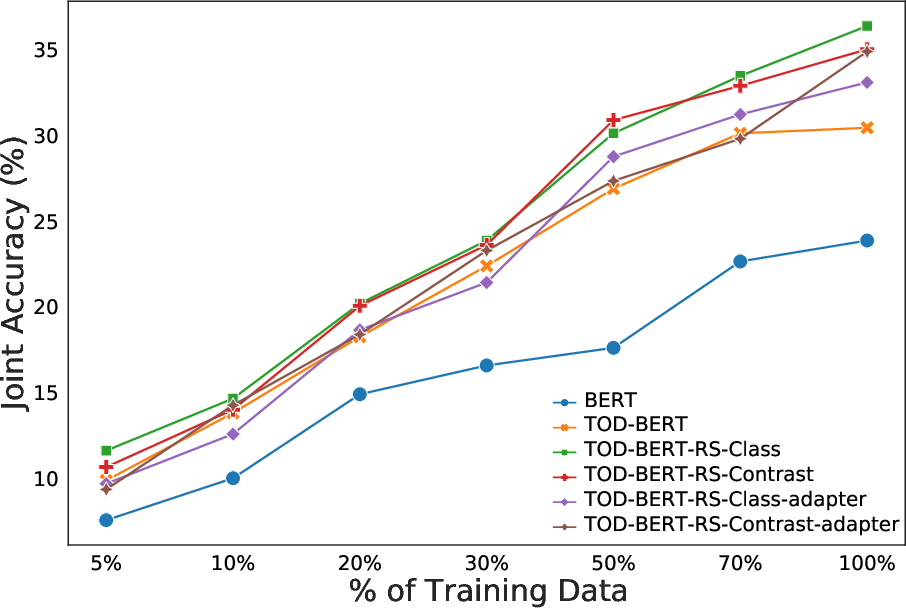

Experiments on the MultiWOZ domains validate the effectiveness of DS-TOD. Domain-specialized models significantly outperform baseline PLMs on Dialog State Tracking (DST) and Response Retrieval (RR) tasks.

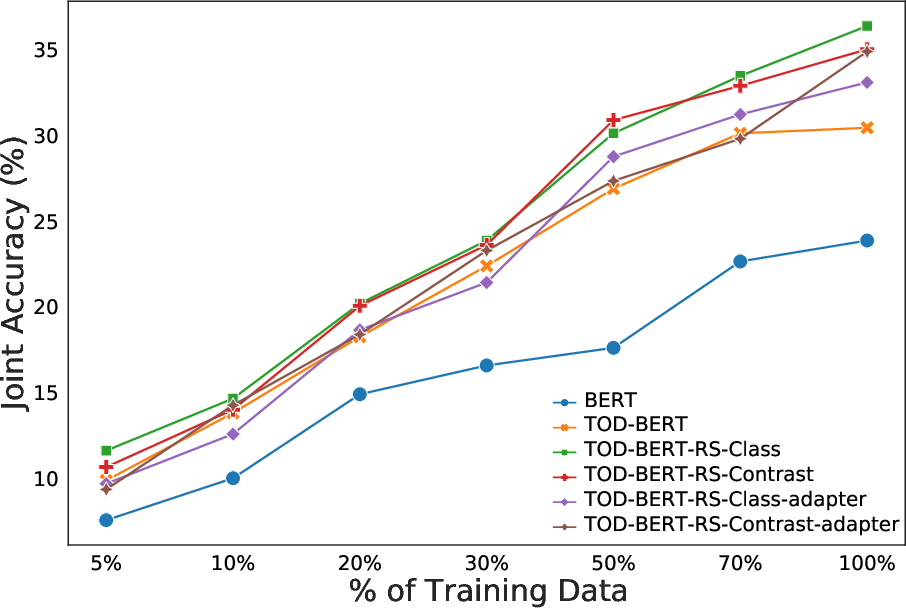

Figure 2: Sample efficiency of DS-TOD for DST: joint goal accuracy for different portions of downstream training data.

Results demonstrate that domain specialization, particularly via the RS objectives, provides consistent performance improvements. Furthermore, adapter-based specialization achieves results comparable to full fine-tuning, underscoring its efficiency.
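Joint goal accuracy, the DST metric reported in Figure 2, counts a dialog turn as correct only if every slot-value pair of the predicted state matches the gold state. A minimal sketch follows; the function name and the toy slot names are hypothetical.

```python
def joint_goal_accuracy(predicted_states, gold_states):
    """Fraction of turns whose full predicted dialog state equals the gold state."""
    if len(predicted_states) != len(gold_states):
        raise ValueError("need one predicted state per gold state")
    if not gold_states:
        return 0.0
    correct = sum(pred == gold for pred, gold in zip(predicted_states, gold_states))
    return correct / len(gold_states)

# Three turns of one toy hotel dialog; the state accumulates over turns.
gold = [
    {"hotel-area": "north"},
    {"hotel-area": "north", "hotel-stars": "4"},
    {"hotel-area": "north", "hotel-stars": "4", "hotel-parking": "yes"},
]
predicted = [
    {"hotel-area": "north"},
    {"hotel-area": "north", "hotel-stars": "3"},  # one wrong slot value
    {"hotel-area": "north", "hotel-stars": "4", "hotel-parking": "yes"},
]
jga = joint_goal_accuracy(predicted, gold)  # 2 of 3 turns fully correct
```

Because a single wrong slot makes the whole turn count as an error, the metric is strict, and gains on it reflect improvements in tracking the complete state rather than individual slots.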

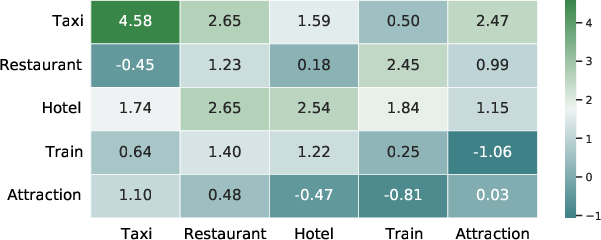

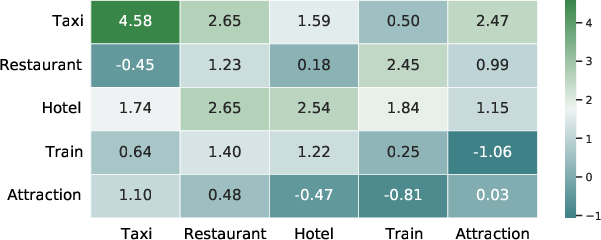

Cross-Domain Transfer and Multi-Domain Specialization

DS-TOD also examines cross-domain transfer: models specialized for one domain also yield gains in related domains, which suggests that dependencies between domains can be exploited in TOD systems. For multi-domain dialogs, the individual domain adapters can be combined through stacking or fusion, which supports efficient multi-domain specialization.

Figure 3: Relative improvements in cross-domain DST transfer using DS-TOD.
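The two ways of combining domain adapters mentioned above can be contrasted with a small sketch. It is a conceptual illustration only: the adapters are stand-in functions, and in `fuse` the mixing scores are passed in directly, whereas a learned fusion layer would compute them with trained attention parameters. All names are hypothetical.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def stack(adapters, h):
    """Stacking: apply the adapters one after another."""
    for adapter in adapters:
        h = adapter(h)
    return h

def fuse(adapters, h, scores):
    """Fusion: run the adapters in parallel and mix their outputs."""
    weights = softmax(scores)
    outputs = [adapter(h) for adapter in adapters]
    return [
        sum(w * out[i] for w, out in zip(weights, outputs))
        for i in range(len(h))
    ]

# Stand-ins for two trained domain adapters.
def taxi_adapter(h):
    return [x + 1.0 for x in h]

def hotel_adapter(h):
    return [2.0 * x for x in h]

hidden = [1.0, 2.0]
stacked = stack([taxi_adapter, hotel_adapter], hidden)
fused = fuse([taxi_adapter, hotel_adapter], hidden, scores=[0.0, 0.0])
```

Stacking makes the result depend on the order of the adapters, while fusion treats them symmetrically and lets the mixing weights decide how much each domain contributes at a given position.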

Conclusion

The DS-TOD framework advances TOD systems by effectively integrating domain-specific knowledge into PLMs. This approach not only enhances TOD performance across various domains but also reduces computational demands through efficient domain specialization techniques. DS-TOD paves the way for more adaptive, domain-aware TOD models capable of handling diverse, real-world dialog scenarios. Future research will focus on expanding this specialization to encompass additional languages and tasks, ensuring broader applicability in multilingual and multi-functional dialog systems.