Illiterate DALL-E Learns to Compose

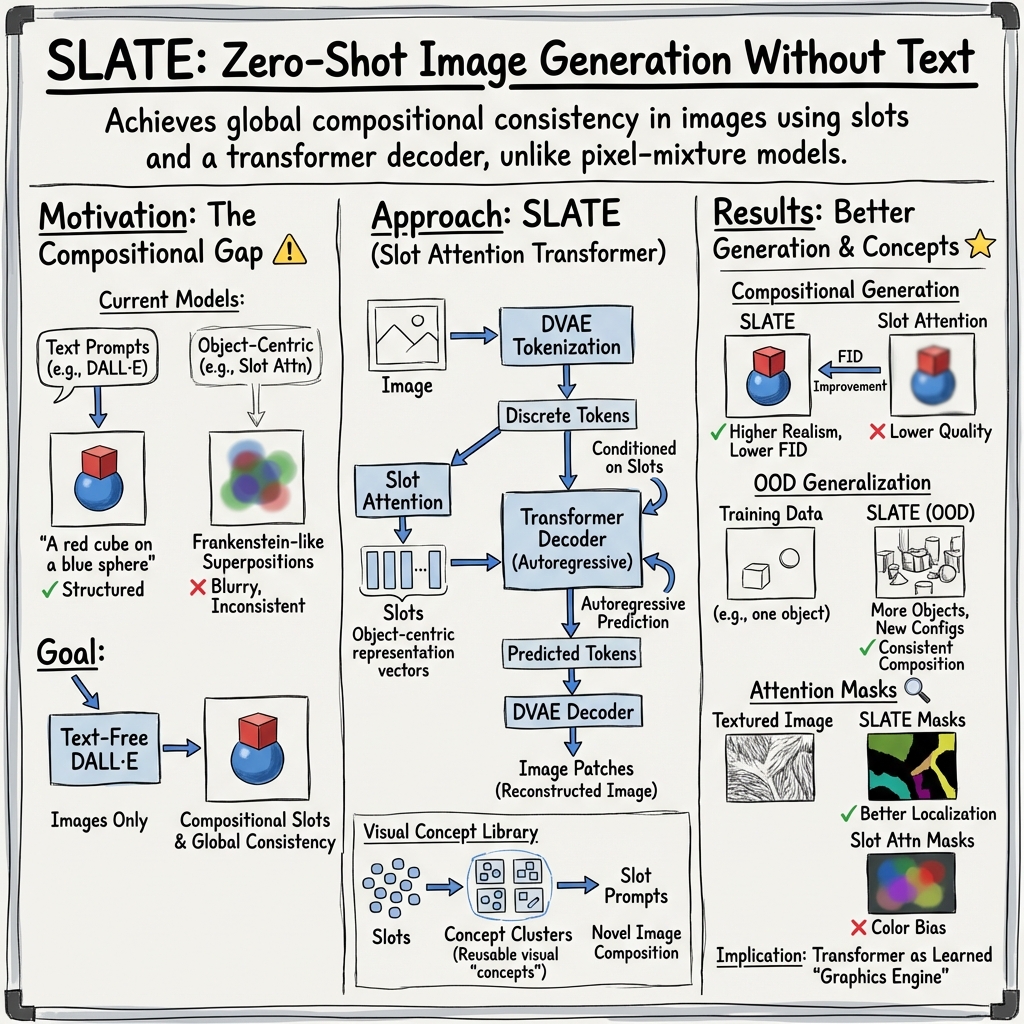

Abstract: Although DALL-E has shown an impressive ability of composition-based systematic generalization in image generation, it requires a dataset of text-image pairs and the compositionality is provided by the text. In contrast, object-centric representation models like the Slot Attention model learn composable representations without the text prompt. However, unlike DALL-E, its ability to systematically generalize for zero-shot generation is significantly limited. In this paper, we propose a simple but novel slot-based autoencoding architecture, called SLATE, for combining the best of both worlds: learning object-centric representations that allow systematic generalization in zero-shot image generation without text. As such, this model can also be seen as an illiterate DALL-E model. Unlike the pixel-mixture decoders of existing object-centric representation models, we propose to use the Image GPT decoder conditioned on the slots for capturing complex interactions among the slots and pixels. In experiments, we show that this simple and easy-to-implement architecture not requiring a text prompt achieves significant improvement in in-distribution and out-of-distribution (zero-shot) image generation and qualitatively comparable or better slot-attention structure than the models based on mixture decoders.

Explain it Like I'm 14

What is this paper about?

This paper introduces a new AI model called SLATE that can learn to “mix and match” parts of images to create new pictures—without reading any text prompts. Think of it as a version of DALL·E that doesn’t understand words but still learns how to compose scenes by understanding objects in images. The goal is to get strong, flexible picture generation (like DALL·E) while learning only from images (no text).

What questions did the researchers ask?

- Can an AI learn to break a picture into meaningful parts (like “face,” “hair,” “wall,” “floor,” “block,” “shadow”) just by looking at lots of images—no labels, no captions?

- After learning these parts, can it recombine them in new ways to make realistic images it has never seen before (zero-shot generation)?

- Can it fix two big problems in older methods that either blur details or glue parts together poorly, making “Frankenstein” pictures?

How does their method work?

Here’s the big idea: SLATE learns “object slots” (think of them as little notes that describe each object in a scene) and uses a powerful picture-drawing engine to assemble everything so it looks natural and consistent.

To understand the steps, imagine rebuilding a picture from simple pieces:

1) Turning an image into tokens (like LEGO pieces)

- The model first chops an image into small patches and turns each patch into a “token,” which is just a compact code. This is done with a tool called a discrete variational autoencoder (DVAE).

- Analogy: If the image is a big LEGO set, the DVAE turns it into a sequence of labeled LEGO bricks.
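The patch-to-token step can be sketched in a few lines. This is a minimal illustration, not the paper's DVAE: a real DVAE learns its encoder and codebook, while here a nearest-codebook lookup stands in for it. The function name `image_to_tokens`, the random codebook, and the patch size are all assumptions made for the example.

```python
import numpy as np

def image_to_tokens(image, codebook, patch=4):
    """Split an (H, W, C) image into non-overlapping patches and map each
    patch to the index of its nearest codebook vector.
    Assumption: nearest-neighbor lookup replaces the learned DVAE encoder."""
    h, w, c = image.shape
    assert h % patch == 0 and w % patch == 0
    # (H, W, C) -> (H/p, p, W/p, p, C) -> (H/p, W/p, p, p, C) -> (T, p*p*C)
    patches = image.reshape(h // patch, patch, w // patch, patch, c)
    patches = patches.transpose(0, 2, 1, 3, 4).reshape(-1, patch * patch * c)
    # Squared distance from every patch to every codebook entry: (T, V)
    dists = ((patches[:, None, :] - codebook[None, :, :]) ** 2).sum(-1)
    return dists.argmin(axis=1)  # one integer token per patch, raster order

rng = np.random.default_rng(0)
image = rng.random((16, 16, 3))           # toy image
codebook = rng.random((32, 4 * 4 * 3))    # 32 hypothetical "LEGO brick" types
tokens = image_to_tokens(image, codebook)
print(tokens.shape)  # 16 patches -> 16 tokens
```

Each token is just an integer index into the codebook, which is what makes the image look like a sequence that a transformer can later generate.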

2) Finding the parts (object slots)

- A module called Slot Attention looks at all the tokens and groups them into N “slots.”

- Each slot tries to represent one thing in the scene—like a block, a face region, the background, or hair.

- Analogy: It’s like having several magnets (the slots) that pull in the tokens that belong to their object.
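The "magnets" idea can be sketched as a simplified attention loop. This is a stripped-down illustration under stated assumptions: the published Slot Attention module also uses learned query/key/value projections, a GRU update, and an MLP, all of which are omitted here. The key point it shows is that the softmax runs over the slot axis, so slots compete for each token.

```python
import numpy as np

def softmax(x, axis):
    x = x - x.max(axis=axis, keepdims=True)  # numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def slot_attention(inputs, num_slots=3, iters=3, seed=0):
    """inputs: (T, D) token features. Returns slots (N, D) and attention (N, T).
    Assumption: no learned projections, GRU, or MLP (simplified sketch)."""
    rng = np.random.default_rng(seed)
    t, d = inputs.shape
    slots = rng.normal(size=(num_slots, d))  # random initial slots
    for _ in range(iters):
        logits = slots @ inputs.T / np.sqrt(d)   # (N, T) slot-token similarity
        attn = softmax(logits, axis=0)           # normalize over slots: competition
        # Each slot becomes the weighted mean of the tokens it attracted.
        weights = attn / (attn.sum(axis=1, keepdims=True) + 1e-8)
        slots = weights @ inputs
    return slots, attn

features = np.random.default_rng(1).normal(size=(10, 4))  # 10 toy tokens
slots, attn = slot_attention(features, num_slots=3)
print(slots.shape, attn.shape)
```

Because each column of `attn` sums to 1, every token splits its "membership" across the slots, which is what pushes different slots toward different objects.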

3) Drawing the picture back with a transformer

- To rebuild the picture, SLATE uses a transformer (similar to the ones used in LLMs) that draws the image token-by-token, looking at two things: previously drawn tokens and the object slots.

- This is important because every newly drawn patch can “know” about the patches drawn before it and about the objects, so shadows, reflections, and edges line up properly.

- Analogy: The transformer is a careful artist who paints the scene one patch at a time, constantly checking the sketch and the list of objects to keep everything consistent.
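The token-by-token drawing loop can be sketched as follows. Each new token is chosen from a score that depends on the tokens drawn so far and on the slots. The function `toy_logits` is a hypothetical stand-in for the transformer (it only averages embeddings), and the random weights are assumptions. Only the shape of the loop is the point here.

```python
import numpy as np

def toy_logits(prev_tokens, slots, token_emb, w_out):
    """Hypothetical stand-in for the transformer: pools the slots and the
    embeddings of previously drawn tokens into one context vector."""
    ctx = slots.mean(axis=0)
    if prev_tokens:
        ctx = ctx + token_emb[prev_tokens].mean(axis=0)
    return ctx @ w_out  # (V,) one score per possible next token

def decode(slots, num_tokens, token_emb, w_out):
    """Autoregressive decoding: every step sees earlier tokens and the slots."""
    tokens = []
    for _ in range(num_tokens):
        logits = toy_logits(tokens, slots, token_emb, w_out)
        tokens.append(int(np.argmax(logits)))  # greedy choice of next token
    return tokens

rng = np.random.default_rng(0)
slots = rng.normal(size=(3, 8))        # 3 object slots
token_emb = rng.normal(size=(16, 8))   # embeddings for a 16-token vocabulary
w_out = rng.normal(size=(8, 16))       # maps context to token scores
tokens = decode(slots, 6, token_emb, w_out)
print(tokens)
```

In the real model the generated tokens would then be passed through the DVAE decoder to turn them back into pixels.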

4) Building a visual concept library

- After training, the model has lots of slots from many images. The researchers cluster similar slots to create a “visual vocabulary” (a library of reusable concepts).

- Now, just like giving DALL·E a text prompt, you can give SLATE a “slot prompt”: pick “hair” from one image, “face” from another, and “background” from a third—and ask it to compose a new picture.

- Analogy: It’s like having a sticker book of parts you can mix and match to make new scenes.
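Building the concept library and a "slot prompt" can be sketched with a small K-means routine. This is an illustrative sketch only: the toy slot vectors, the value of K, and the helper `build_slot_prompt` are assumptions, not the paper's code.

```python
import numpy as np

def kmeans(x, k, iters=20, seed=0):
    """Plain K-means on slot vectors x of shape (M, D)."""
    rng = np.random.default_rng(seed)
    centers = x[rng.choice(len(x), size=k, replace=False)]  # copy of k points
    for _ in range(iters):
        dists = ((x[:, None, :] - centers[None, :, :]) ** 2).sum(-1)
        labels = dists.argmin(axis=1)
        for j in range(k):
            members = x[labels == j]
            if len(members) > 0:  # keep the old center if a cluster is empty
                centers[j] = members.mean(axis=0)
    # Final assignment against the updated centers.
    dists = ((x[:, None, :] - centers[None, :, :]) ** 2).sum(-1)
    return centers, dists.argmin(axis=1)

def build_slot_prompt(slots, labels, concept_ids, rng):
    """Hypothetical helper: pick one stored slot from each requested concept."""
    picks = [rng.choice(np.flatnonzero(labels == c)) for c in concept_ids]
    return slots[picks]

rng = np.random.default_rng(0)
# Toy "slots collected from many images": three well-separated groups.
slots = np.concatenate(
    [rng.normal(loc=c, scale=0.1, size=(20, 4)) for c in (0.0, 5.0, 10.0)]
)
centers, labels = kmeans(slots, k=3)
concept_ids = np.unique(labels)[:2]   # choose two non-empty concepts
prompt = build_slot_prompt(slots, labels, concept_ids, rng)
print(prompt.shape)
```

The resulting `prompt` is a small set of slots drawn from different concepts, which plays the role that a text prompt plays for DALL·E.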

What did they find?

Here are the main results, explained simply:

- Better compositions without text: SLATE can mix parts from different images to create new, realistic pictures—no captions needed. For example, it can place blocks in new stacks, combine different hair and faces from cartoon avatars, or put shapes into scenes with correct shadows and reflections.

- More consistent images: Unlike older methods that just “average” pixels from each object (which often leads to blurry or mismatched results), SLATE’s transformer makes sure parts work together. Shadows match objects, reflections line up, and textures look detailed.

- Stronger zero-shot generalization: SLATE handles new combinations it never saw during training, like:

  - More or fewer objects than usual

  - Two towers instead of one

  - Mixing hair and faces from different “styles” or genders

- Higher image quality: On many datasets (like Bitmoji, CelebA, Shapestacks, and more), SLATE’s images look more realistic, judged by standard scores (FID) and by human preferences.

- Clearer object attention in tricky images: SLATE often separates objects better in textured or complex scenes (like faces and backgrounds), where older methods get confused.

Why this matters: It shows that a model can learn compositional “building blocks” from images alone and recombine them in new, meaningful ways—bringing us closer to flexible, human-like visual imagination without needing text.

Why does this matter?

- Toward “text-free DALL·E”: SLATE demonstrates that strong, controllable image composition doesn’t require captions. That’s useful when text labels are unavailable or expensive to collect.

- Better editing and creativity tools: A model that understands “parts” of images can be used to edit scenes, swap attributes (like backgrounds or hairstyles), or design new scenes by combining concepts—no manual labeling.

- A general framework for object-centric AI: By pairing object slots (what and where) with a powerful transformer (how to draw), SLATE hints at a path for AI that can understand and render complex scenes more like humans do.

Key ideas in plain words

- Slot (object slot): A compact description of one part of a scene, like a sticky note that says “this is the hair” or “this is the floor,” including where it goes.

- Transformer: A smart “storyteller” that builds the picture one piece at a time, always checking context so the whole image makes sense.

- Compositionality: The ability to mix learned parts in new ways, like making an “avocado chair” or stacking blocks in a new order.

- Zero-shot: Doing something new without having seen that exact combination during training.

Overall, SLATE shows that with the right kind of scene understanding (slots) and a strong image builder (transformer), an “illiterate” model—one that never reads text—can still learn to compose images creatively and consistently.

Knowledge Gaps

Knowledge gaps, limitations, and open questions

Below is a single, concrete list of gaps the paper leaves unresolved that future work could address.

- Quantitative object segmentation: No evaluation of slot-object correspondence (e.g., ARI, mIoU) on datasets with ground-truth masks; need benchmarks to verify that each slot consistently maps to a single object.

- Concept library validity: The K-means-based concept library lacks analysis of concept purity, stability across runs, and sensitivity to K; methods to automatically select K and to update the library online (e.g., nonparametric clustering) are missing.

- Conditioning mechanism details: It is unclear how slots are integrated into the transformer (concatenation vs. cross-attention vs. gating) and how different mechanisms impact compositionality, image quality, and training stability; ablations are needed.

- Scalability to natural, high-resolution scenes: Performance on higher-res, cluttered, real-world images (diverse backgrounds, lighting, occlusions) is untested; scaling behaviors and limits remain unknown.

- Compute and efficiency: Training/inference time, memory footprint, parameter counts, and throughput are not reported; rigorous profiling and comparisons to mixture decoders, autoregressive, and diffusion decoders are needed to assess practicality.

- DVAE tokenization choices: Effects of codebook size V, patch size/downsampling factor K, temperature schedule, dead-code prevalence, and codebook utilization on attention granularity and generation quality are not analyzed; targeted ablations required.

- Fidelity vs. realism trade-off: Although FID improves, reconstruction MSE often worsens; mechanisms to control the trade-off (e.g., auxiliary losses, decoding strategies) and evaluation of identity preservation when recombining slots are missing.

- Sampling strategy: Generation appears to use argmax decoding; the impact of stochastic sampling (temperature, top-k, nucleus) on diversity, coherence, and compositional generalization is unexplored.

- Slot/object count mismatch: No systematic study of robustness when the number of slots differs from the number of objects (N < or > objects), nor strategies for dynamic slot allocation or pruning/merging.

- Spatial layout and relations: Composition relies on heuristic constraints (minimum distance, tower configuration); there is no explicit interface to specify positions/relations (e.g., left-of, behind, mirror symmetry) or scene-graph conditioning.

- Physical consistency metrics: Claims about shadows, reflections, and occlusions are qualitative; controlled tests and quantitative metrics for physical plausibility are needed to substantiate the “graphics engine” hypothesis.

- OOD generalization scope: Tests focus on recombining known slots (opposite-gender hair/face, object count changes, two towers); generalization to unseen categories, backgrounds, camera poses, and cross-dataset transfer remains an open question.

- Baseline breadth: No comparisons to transformer-based discovery (e.g., DINO) or modern decoders (diffusion models, state-of-the-art autoregressive); broader baselines are necessary to contextualize gains.

- Attention granularity: Slot attention operates on DVAE patches, leading to coarse masks; investigate multi-scale tokenization, overlapping patches, or hybrid CNN-token encoders to improve boundary precision.

- Slot disentanglement stability: Lacks analysis of slot identity consistency across images, permutation invariance handling, and slot collapse; include metrics and training strategies (e.g., binding losses) to stabilize object-slot assignments.

- Geometric/appearance alignment in composition: No mechanisms to align geometry, scale, pose, or color statistics when composing slots from different images; explore normalization/canonicalization of slot attributes prior to decoding.

- Data and compute scaling laws: No curves showing performance vs. dataset size/diversity and training compute; assess sample efficiency and establish scaling trends.

- Failure modes and robustness: Absent systematic cataloging of failures (heavy occlusion, thin structures, foreground-background confusions) and robustness to corruptions/noise/adversarial perturbations.

- Human evaluation rigor: Human preference studies lack methodological details (participant counts, protocols, inter-rater reliability); provide thorough documentation to ensure reproducibility and validity.

- Ethical/bias assessment: Minimal analysis of whether clustering and composition propagate dataset biases (e.g., in Bitmoji/CelebA); propose measurements and mitigation strategies.

- End-to-end discrete concept learning: Explore learning discrete concept tokens for slots (e.g., VQ on slot embeddings) and slot-level priors directly, instead of offline K-means clustering.

- Targeted scene editing: Formalize and evaluate editing operations on a given image (replace/move/resize/recolor specific slots), with metrics for edit fidelity and minimal unintended changes.

- Theoretical explanations: Provide formal or controlled experimental analysis isolating factors (decoder capacity, attention patterns) that drive object-centric emergence under autoregressive slot conditioning.

- Video and temporal consistency: Extend SLATE to video to evaluate temporal slot consistency, object tracking, and dynamic compositionality; assess performance against video object-centric baselines.

Glossary

Autoencoder: An unsupervised learning model that maps input data to a latent space and reconstructs it from the latent representation. "In this framework, an encoder takes an input image to return a set of object representation vectors or slot vectors..."

Image GPT decoder: A generative model that uses transformers to render images by autoregressively generating image tokens. "Unlike the pixel-mixture decoders of existing object-centric representation models, we propose to use the Image GPT decoder conditioned on the slots..."

Inductive bias: Assumptions about a learning problem used to guide the learning algorithm. "To encourage the emergence of object concepts in the slots, the decoder usually uses an architecture implementing an inductive bias about the scene composition..."

Object-centric representation: A method of representing data by focusing on individual objects within a scene. "...object-centric representation models like the Slot Attention model learn composable representations without the text prompt..."

Out-of-distribution: Refers to samples that are not part of the input distribution the model was trained on. "...achieves significant improvement in in-distribution and out-of-distribution (zero-shot) image generation..."

Pixel independence problem: The issue where a decoder generates each pixel independently of the others, which harms the semantic consistency of the whole image. "...the rendered image would look like a mere superposition of individual object patches without global semantic consistency..."

Pixel-mixture decoders: Decoders that construct images by a pixel-wise weighted mean of slot images. "In pixel-mixture decoders, each slot's contribution to a generated pixel... is independent of the other slots and pixels..."
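The pixel-wise weighted mean in this definition can be sketched as below. This is a minimal illustration, assuming each slot has already been decoded into its own image and mask logits. The function name `mixture_decode` and the toy inputs are hypothetical.

```python
import numpy as np

def mixture_decode(slot_images, mask_logits):
    """slot_images: (N, H, W, C) one image per slot.
    mask_logits: (N, H, W) unnormalized per-pixel mask scores.
    Returns the blended image (H, W, C) and the masks (N, H, W)."""
    m = mask_logits - mask_logits.max(axis=0, keepdims=True)  # stability
    masks = np.exp(m)
    masks = masks / masks.sum(axis=0, keepdims=True)  # softmax over slots
    # Each output pixel is a weighted mean of the slots' pixels at that
    # location only; no other pixel location is consulted.
    image = (masks[..., None] * slot_images).sum(axis=0)
    return image, masks

slot_images = np.stack([np.zeros((2, 2, 3)), np.ones((2, 2, 3))])
mask_logits = np.stack([np.full((2, 2), -50.0), np.full((2, 2), 50.0)])
image, masks = mixture_decode(slot_images, mask_logits)
print(image.shape)
```

Since every pixel is blended using only values at its own location, the decoder has no way to coordinate neighboring pixels, which is the limitation the autoregressive decoder is meant to address.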

Slot-Attention model: A framework for unsupervised object-centric learning that uses attention mechanisms to infer object slots from images. "...object-centric representation models like the Slot Attention model learn composable representations..."

Systematic generalization: The ability to apply learned knowledge to new, varying situations; generating plausible outputs beyond the training distribution. "DALL-E has shown an impressive ability of composition-based systematic generalization..."
