- The paper introduces SCAPT, a supervised contrastive pre-training method that aligns explicit and implicit sentiment representations for improved ABSA.

- The methodology leverages large-scale, sentiment-annotated datasets and integrates review reconstruction and masked aspect prediction to capture subtle sentiment cues.

- Experimental results on SemEval-2014 and MAMS benchmarks show significant improvements in accuracy and F1 scores, especially for implicit sentiment detection.

Learning Implicit Sentiment in Aspect-based Sentiment Analysis with Supervised Contrastive Pre-Training

The paper "Learning Implicit Sentiment in Aspect-based Sentiment Analysis with Supervised Contrastive Pre-Training" addresses the challenge of identifying implicit sentiment expressions in aspect-based sentiment analysis (ABSA). The authors propose a method using supervised contrastive pre-training to enhance sentiment detection, particularly when explicit sentiment markers are absent from the text. This is achieved through a novel pre-training scheme designed to capture implicit sentiment by aligning representations of sentiment expressions sharing the same labels.

Problem Definition

Aspect-based sentiment analysis is a subtask of sentiment analysis that identifies the sentiment polarity expressed toward specific aspects of a product or service, such as the service or food mentioned in a restaurant review. Approximately 30% of reviews convey sentiment implicitly, without any explicit opinion words. Because existing neural approaches generally rely on explicit sentiment markers, they tend to miss these cases, which motivates methods designed to capture implicit sentiment.

Methodology

The proposed method, Supervised Contrastive Pre-Training (SCAPT), is applied to large-scale sentiment-annotated corpora retrieved from domain-specific language resources:

- Data Pre-Processing: Large sentiment-labeled datasets from sources like Yelp and Amazon Reviews are leveraged. Reviews relevant to domains such as restaurants or electronics are extracted and annotated, focusing particularly on sentences containing relevant aspect terms from ABSA training sets.

- Pre-Training Objectives:

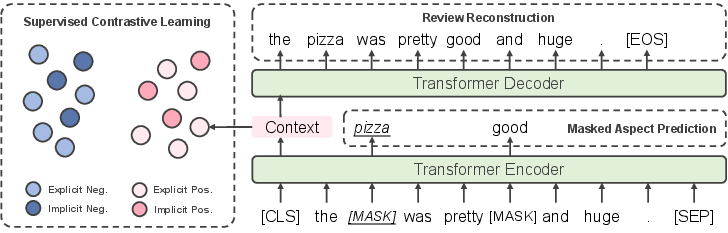

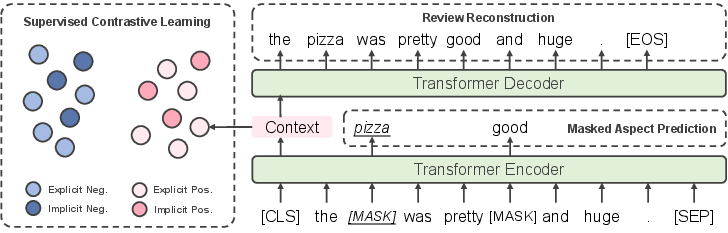

SCAPT includes three primary objectives:

- Supervised Contrastive Learning: Aligns representations by pulling together explicit and implicit expressions with the same sentiment orientation and pushing apart those with different labels.

- Review Reconstruction: Inspired by denoising autoencoders, this objective trains the model to reconstruct the review, so that the learned representation retains semantic context beyond mere sentiment polarity.

- Masked Aspect Prediction: Ensures models learn context information pertinent to aspect-based sentiment recognition by masking aspect-related tokens during training.
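The supervised contrastive objective above can be illustrated with a minimal sketch. It uses a common formulation of the supervised contrastive loss (cosine similarity, a temperature, and averaging over same-label positives); the paper's exact loss, the `temperature` value, and the function name `supervised_contrastive_loss` are assumptions made for illustration, not details taken from the source.

```python
import math


def supervised_contrastive_loss(embeddings, labels, temperature=0.1):
    """Generic supervised contrastive loss over one batch (illustrative only).

    embeddings: list of equal-length float vectors, one per sentence
    labels:     list of sentiment labels, same length as embeddings
    temperature: assumed hyperparameter; 0.1 is an arbitrary choice
    """
    # L2-normalise so that a dot product equals cosine similarity.
    normed = []
    for vec in embeddings:
        norm = math.sqrt(sum(x * x for x in vec)) or 1.0
        normed.append([x / norm for x in vec])

    n = len(normed)
    total, anchors = 0.0, 0
    for i in range(n):
        # Positives: other samples in the batch with the same sentiment label.
        positives = [j for j in range(n) if j != i and labels[j] == labels[i]]
        if not positives:
            continue  # an anchor without a same-label partner contributes nothing

        # Scaled similarity between the anchor and every other sample.
        sims = {
            j: sum(a * b for a, b in zip(normed[i], normed[j])) / temperature
            for j in range(n)
            if j != i
        }
        # Numerically stable log-sum-exp for the softmax denominator.
        top = max(sims.values())
        log_denom = top + math.log(sum(math.exp(s - top) for s in sims.values()))

        # Average negative log-probability of picking a positive.
        total += -sum(sims[j] - log_denom for j in positives) / len(positives)
        anchors += 1

    return total / anchors if anchors else 0.0
```

The loss is small when sentences with the same label (whether their sentiment is explicit or implicit) sit close together, and large when same-label sentences are far apart while differently labeled ones are close.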

Figure 1: Schematic representation of SCAPT highlighting its three integral components.

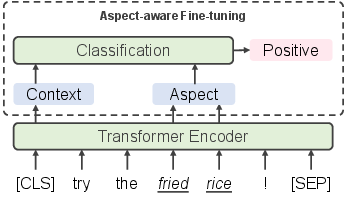

- Model Architecture: A Transformer-based model is first pre-trained with the SCAPT objectives and then fine-tuned in an aspect-aware manner to improve performance on ABSA tasks.
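As a rough illustration of the data pre-processing and masked aspect prediction steps in this section, the sketch below keeps only labeled sentences that mention a known aspect term and replaces that term with mask tokens. The `[MASK]` token, the dictionary fields, and the function name `build_examples` are hypothetical; the source does not specify how retrieval or masking is implemented.

```python
import re

MASK = "[MASK]"  # assumed mask token; the real one depends on the tokenizer


def build_examples(reviews, aspect_terms):
    """Filter labeled sentences by aspect term and mask the matched term.

    reviews:      iterable of (sentence, sentiment_label) pairs
    aspect_terms: aspect terms collected from an ABSA training set
    """
    if not aspect_terms:
        return []

    # Longest terms first so "battery life" is preferred over "battery".
    ordered = sorted(aspect_terms, key=len, reverse=True)
    pattern = re.compile(
        r"\b(" + "|".join(re.escape(t) for t in ordered) + r")\b",
        re.IGNORECASE,
    )

    examples = []
    for sentence, label in reviews:
        match = pattern.search(sentence)
        if match is None:
            continue  # sentence mentions no known aspect term, so drop it

        # One mask token per whitespace-separated word of the aspect term.
        n_words = len(match.group(0).split())
        masked = (
            sentence[: match.start()]
            + " ".join([MASK] * n_words)
            + sentence[match.end():]
        )
        examples.append(
            {
                "text": sentence,
                "masked": masked,
                "aspect": match.group(0),
                "label": label,
            }
        )
    return examples
```

A sentence such as "Waiter never came back." carries negative sentiment without any opinion word; after filtering and masking, the model would have to recover the aspect from context alone.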

Experimental Results

Experimental evaluations are conducted on the standard ABSA benchmarks SemEval-2014 and MAMS. Models incorporating SCAPT achieve higher accuracy and F1 scores than the baselines they are compared against, which include traditional attention-based models and more advanced graph neural networks.

- Implicit Sentiment Recognition: The gains are most pronounced on implicit sentiment expressions, which supports the robustness of the proposed pre-training scheme.
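To make the reported metrics concrete, the following sketch computes accuracy and F1 separately for explicit and implicit examples. It assumes macro-averaged F1 and assumes that each test example carries a boolean implicit/explicit flag; the function names and the toy labels in the usage note are illustrative and are not results from the paper.

```python
def accuracy(gold, pred):
    """Fraction of predictions that match the gold labels."""
    return sum(g == p for g, p in zip(gold, pred)) / len(gold)


def macro_f1(gold, pred):
    """Unweighted mean of per-class F1 over all labels seen in gold or pred."""
    scores = []
    for label in sorted(set(gold) | set(pred)):
        tp = sum(g == label and p == label for g, p in zip(gold, pred))
        fp = sum(g != label and p == label for g, p in zip(gold, pred))
        fn = sum(g == label and p != label for g, p in zip(gold, pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


def report_by_subset(gold, pred, is_implicit):
    """Score the full test set plus its explicit and implicit slices."""
    subsets = {
        "all": [True] * len(gold),
        "explicit": [not flag for flag in is_implicit],
        "implicit": list(is_implicit),
    }
    report = {}
    for name, keep in subsets.items():
        g = [x for x, k in zip(gold, keep) if k]
        p = [x for x, k in zip(pred, keep) if k]
        if g:  # skip empty slices to avoid dividing by zero
            report[name] = {"accuracy": accuracy(g, p), "macro_f1": macro_f1(g, p)}
    return report
```

For example, `report_by_subset(["pos", "neg", "neg", "pos"], ["pos", "neg", "pos", "pos"], [False, False, True, True])` returns one entry per slice, which makes it easy to see whether a model's errors concentrate on the implicit examples.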

Conclusion

SCAPT marks a significant step forward in handling implicit sentiment within ABSA. The paper demonstrates how supervised contrastive learning aligns the representations of explicit and implicit sentiment expressions that share the same polarity, which improves a model's ability to detect sentiment when no opinion words are present. This work lays the groundwork for further exploration of large labeled corpora in sentiment analysis, with potential applications in other natural language processing tasks.