- The paper shows that stochastic neural representations improve adversarial robustness and that this improvement is reflected in the geometry of the network's internal representations.

- It employs manifold analysis to quantify the trade-off between representation overlap and network performance under adversarial and clean conditions.

- Findings suggest an optimal noise level that balances robust perception and clean performance, offering a computationally efficient alternative to adversarial training.

Neural Population Geometry: The Role of Stochasticity in Robust Perception

Introduction

The study "Neural Population Geometry Reveals the Role of Stochasticity in Robust Perception" (arXiv:2111.06979) draws on computational neuroscience and machine learning to examine discrepancies between artificial neural networks (ANNs) and biological sensory systems, particularly with respect to adversarial robustness. Adversarial examples are inputs altered by subtle perturbations that are imperceptible to humans but sufficient to change an ANN's output, and they pose a significant challenge to deploying machine learning models in real-world applications. The paper introduces and evaluates biologically inspired stochastic representations as a way to reduce adversarial vulnerability in both visual and auditory models.

Geometric Signatures in Neural Representations

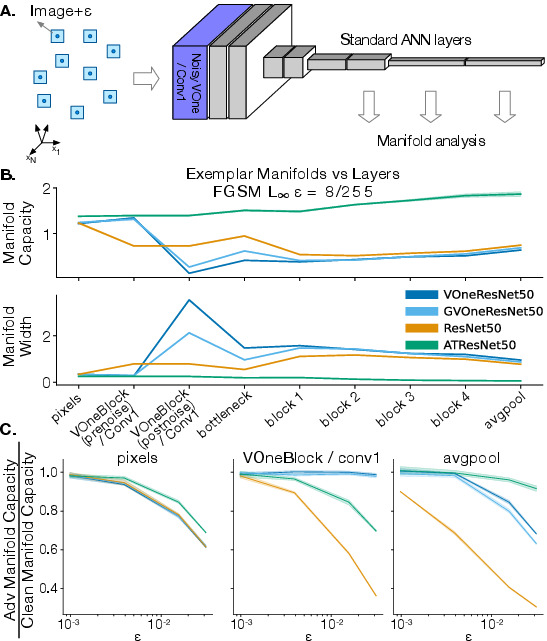

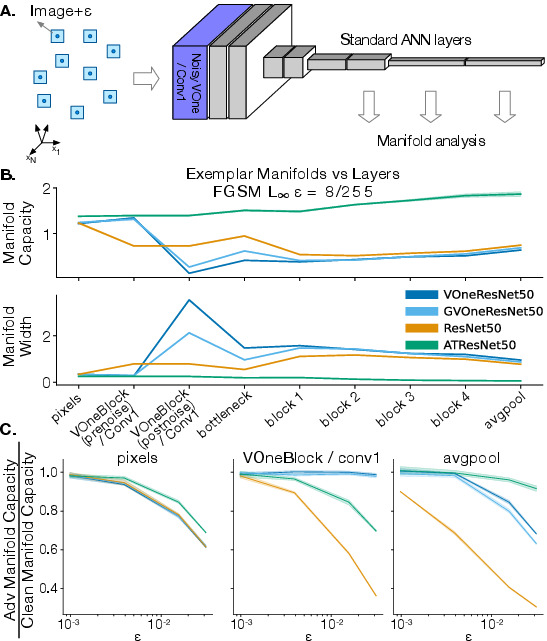

The authors apply manifold analysis techniques to characterize the internal neural population geometry of various network architectures, including standard, adversarially trained, and stochastic networks. This approach reveals distinct geometric signatures in response to clean and adversarial stimuli. In visual networks, adversarial training stabilizes the width of perturbation manifolds, while stochastic networks expand them, suggesting differing robustness strategies (Figure 1).

Figure 1: Geometry and capacity of adversarially perturbed exemplar manifolds in neural networks.
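
To make the notion of manifold "width" more concrete, the following is a minimal sketch of two simple geometric summaries of a cloud of responses: a radius (root-mean-square distance from the centroid) and a participation-ratio dimension. These are simplified stand-ins chosen for illustration and are not the manifold analysis used in the paper. The function names and the Gaussian toy data are hypothetical.

```python
import math
import random

def centroid(points):
    """Mean of a list of equal-length vectors."""
    n = len(points)
    return [sum(p[i] for p in points) / n for i in range(len(points[0]))]

def manifold_radius(points):
    """Root-mean-square distance of the points from their centroid."""
    c = centroid(points)
    sq = [sum((x - m) ** 2 for x, m in zip(p, c)) for p in points]
    return math.sqrt(sum(sq) / len(points))

def participation_ratio(points):
    """Effective dimension (tr C)^2 / tr(C^2) of the covariance matrix C."""
    c = centroid(points)
    d, n = len(c), len(points)
    centered = [[x - m for x, m in zip(p, c)] for p in points]
    cov = [[sum(row[i] * row[j] for row in centered) / n for j in range(d)]
           for i in range(d)]
    trace = sum(cov[i][i] for i in range(d))
    # C is symmetric, so tr(C^2) equals the sum of its squared entries.
    trace_sq = sum(cov[i][j] ** 2 for i in range(d) for j in range(d))
    return trace ** 2 / trace_sq

def sample_manifold(center, scale, n, rng):
    """Toy 'manifold': isotropic Gaussian cloud around a center (assumption)."""
    return [[m + rng.gauss(0.0, scale) for m in center] for _ in range(n)]

rng = random.Random(0)
center = [1.0] * 8
narrow = sample_manifold(center, 0.1, 500, rng)  # e.g. a tightly clustered set of responses
wide = sample_manifold(center, 0.5, 500, rng)    # e.g. a more spread-out set of responses

print("radius (narrow):", manifold_radius(narrow))
print("radius (wide):  ", manifold_radius(wide))
print("dimension (wide):", participation_ratio(wide))
```

In this toy setting the wider cloud has the larger radius, and the participation ratio always lies between 1 and the number of feature dimensions.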

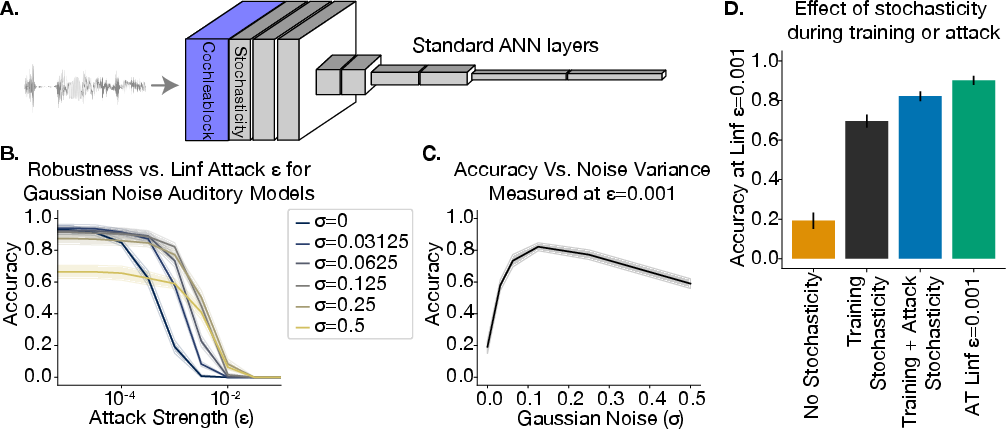

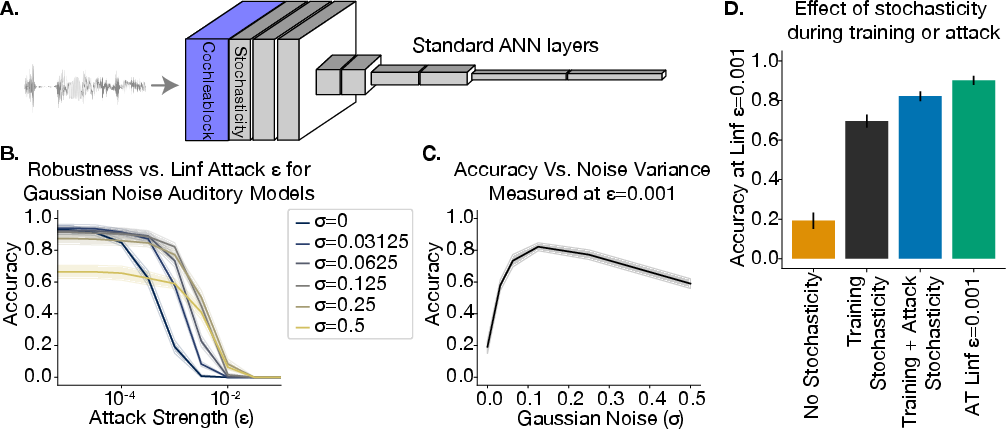

Additionally, a novel auditory model, StochCochResNet50, tests the generality of these observations. Networks trained on stochastic cochleagram representations show improved adversarial robustness and geometric profiles similar to those of the visual networks, suggesting that the benefit of stochastic responses is not specific to one modality (Figure 2).

Figure 2: Robustness of auditory networks trained with stochastic cochlear representations.

Protective Overlap and Trade-offs

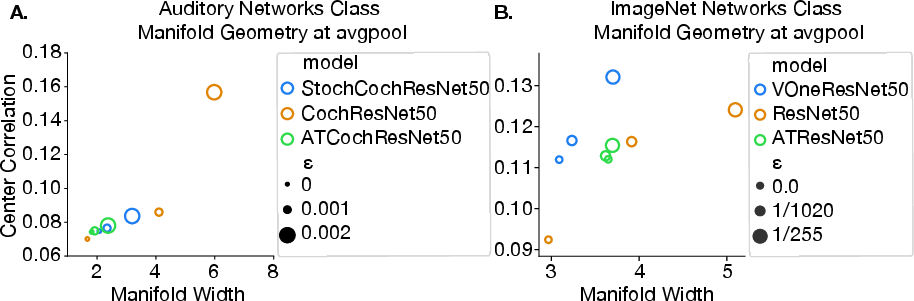

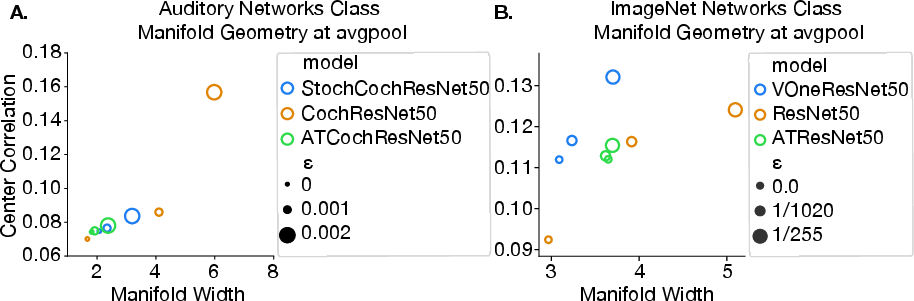

The analysis identifies an overlap between the representations of clean and adversarial stimuli within stochastic networks and quantifies a trade-off between this overlap and network performance. The overlap is hypothesized to mediate robustness against small perturbations: the response to an adversarial example falls within the range of stochastic responses that the clean stimulus already produces (Figure 3).

Figure 3: Similarities between class manifold geometry in auditory and ImageNet networks.
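
The overlap idea can be illustrated with a small simulation. The sketch below assumes additive Gaussian noise on a fixed mean response and measures how often noisy responses to an adversarially shifted stimulus fall inside the region that contains 95% of the noisy responses to the clean stimulus. The noise model, the size of the shift, the 95% threshold, and all names are assumptions for illustration and are not taken from the paper.

```python
import math
import random

def noisy_responses(mean, sigma, n, rng):
    """Draw n stochastic responses around a mean response (Gaussian noise assumed)."""
    return [[m + rng.gauss(0.0, sigma) for m in mean] for _ in range(n)]

def distance(a, b):
    """Euclidean distance between two vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def overlap_fraction(clean_mean, adv_mean, sigma, rng, n=2000, quantile=0.95):
    """Fraction of adversarial responses that lie within the clean response cloud."""
    clean = noisy_responses(clean_mean, sigma, n, rng)
    adv = noisy_responses(adv_mean, sigma, n, rng)
    # Radius around the clean mean that contains `quantile` of clean responses.
    clean_d = sorted(distance(r, clean_mean) for r in clean)
    threshold = clean_d[int(quantile * (n - 1))]
    inside = sum(distance(r, clean_mean) <= threshold for r in adv)
    return inside / n

rng = random.Random(0)
clean_mean = [0.0] * 10
adv_mean = [0.3] + [0.0] * 9  # hypothetical shift caused by a small perturbation

low_noise = overlap_fraction(clean_mean, adv_mean, 0.05, rng)
high_noise = overlap_fraction(clean_mean, adv_mean, 0.5, rng)
print("overlap at sigma=0.05:", low_noise)
print("overlap at sigma=0.5: ", high_noise)
```

With little noise the shifted responses sit almost entirely outside the clean cloud, while with more noise most of them fall inside it, which is the sense in which a small perturbation becomes hard to distinguish from ordinary response variability.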

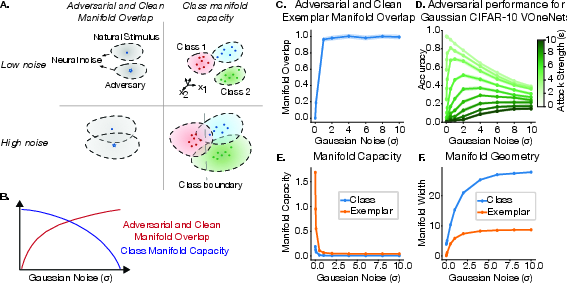

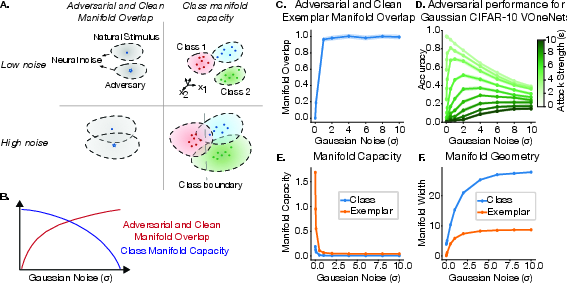

The study further examines the balance between noise-induced adversarial robustness and clean performance. As stochasticity increases, the representations of clean and adversarial stimuli overlap more, which improves adversarial robustness but can also reduce the capacity and linear separability of class manifolds, which in turn hurts clean performance. This trade-off suggests an intermediate noise level that best balances clean performance and adversarial robustness (Figure 4).

Figure 4: Stochastic representations induce opposing geometric effects that determine a model's performance.
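
The two opposing effects can be seen in a one-dimensional toy model with closed-form expressions. For two Gaussians with equal standard deviation sigma whose means differ by a shift, the overlap coefficient is 2Φ(-shift / (2·sigma)), and a midpoint threshold between two classes separated by a gap has accuracy Φ(gap / (2·sigma)), where Φ is the standard normal CDF. The sketch below sweeps sigma for a hypothetical adversarial shift and class gap. It shows only the two trends and does not reproduce the paper's results or the location of any optimum.

```python
import math

def normal_cdf(z):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def overlap_coefficient(shift, sigma):
    """Overlap of two equal-variance 1-D Gaussians whose means differ by `shift`."""
    return 2.0 * normal_cdf(-abs(shift) / (2.0 * sigma))

def clean_accuracy(class_gap, sigma):
    """Accuracy of a midpoint threshold between two equal-variance 1-D Gaussian classes."""
    return normal_cdf(class_gap / (2.0 * sigma))

ADV_SHIFT = 0.3  # hypothetical shift of the response caused by an adversarial perturbation
CLASS_GAP = 2.0  # hypothetical distance between the mean responses of two classes

for sigma in (0.05, 0.2, 0.5, 1.0, 2.0):
    overlap = overlap_coefficient(ADV_SHIFT, sigma)
    accuracy = clean_accuracy(CLASS_GAP, sigma)
    print(f"sigma={sigma:<4} clean/adv overlap={overlap:.3f} clean accuracy={accuracy:.3f}")
```

As sigma grows, the clean and adversarial response distributions overlap more while the two classes become harder to separate, so any choice of noise level trades one effect against the other.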

Implications and Future Directions

The implications of this research extend to both practical applications and theoretical developments in AI. Practically, incorporating stochasticity within network architectures offers a promising avenue for enhancing adversarial robustness without the computational overhead of adversarial training. Theoretically, the work challenges the conventional understanding of robustness mechanisms in ANNs, proposing neural geometry as a lens through which robust perception in both biological and artificial systems can be further understood.

Future explorations could benefit from more nuanced manifold analysis techniques and extend evaluations to naturally occurring disturbances beyond adversarial attacks. Moreover, understanding the integration of stochasticity within neural models opens a new frontier for biological plausibility in ANN designs, potentially realizing even more robust architectures inspired by biological computation principles.

Conclusion

This paper presents evidence that stochastic neural representations can reduce adversarial vulnerability in both visual and auditory models. The findings show that neural population geometry offers a useful framework for explaining how stochasticity in a population code contributes to robustness. By continuing to connect insights from computational neuroscience with machine learning, the work supports a more unified approach to understanding and improving artificial perception systems.