- The paper introduces a novel hypothesis-free approach that combines neural networks for prediction with symbolic regression for explanation.

- It employs a three-layer MLP and symbolic regression to rediscover Kepler’s laws and Newton’s gravitational insights with low prediction errors.

- The study underscores effective human-AI collaboration where AI-generated models are interpreted by experts to validate fundamental scientific laws.

Explainable AI for Science Discovery

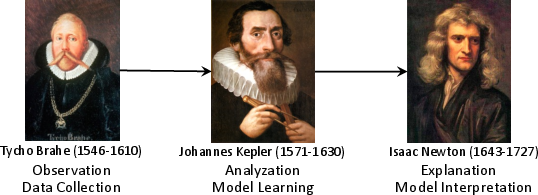

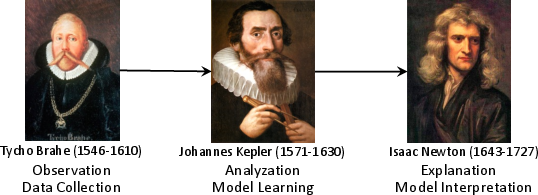

The paper "From Kepler to Newton: Explainable AI for Science" (2111.12210) explores the integration of Explainable AI with the scientific discovery process. It presents an innovative, hypothesis-free science discovery paradigm that leverages Explainable AI to interpret data, formulate hypotheses, and generate understandable scientific insights. The paper exemplifies this approach by re-examining the discoveries of Kepler and Newton using Tycho Brahe's astronomical observations.

Traditional vs. Explainable AI Paradigm

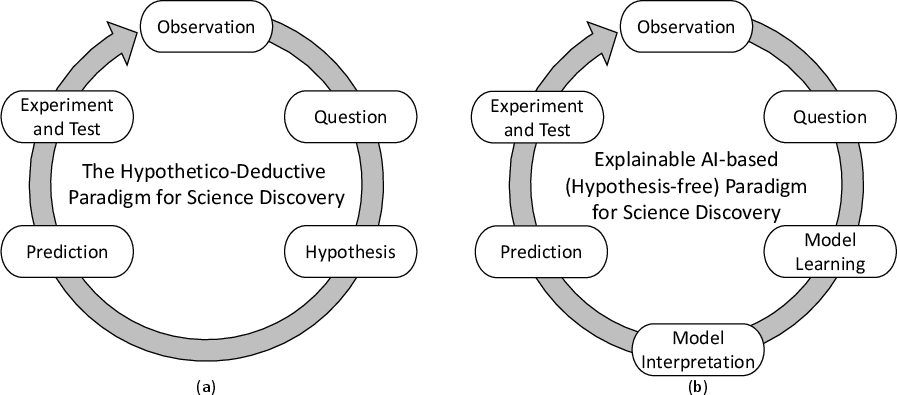

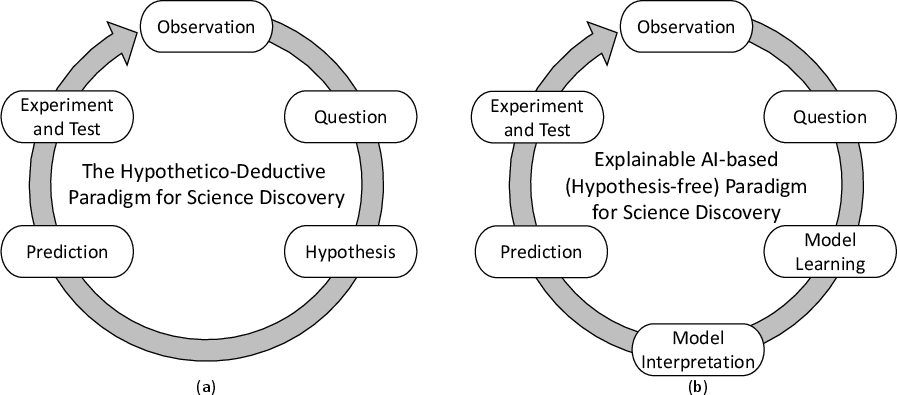

The traditional scientific discovery paradigm, often referred to as the Hypothetico-Deductive method, involves observation, hypothesis formulation, prediction, and experimental verification. In contrast, the Explainable AI paradigm replaces manual hypothesis generation with automatic AI-driven model learning and interpretation (Figure 1). The Explainable AI paradigm employs black-box models, such as neural networks, for accurate prediction and data augmentation, and white-box models, notably symbolic regression, to transform the black-box models into human-understandable forms.

Figure 1: The Hypothetico-Deductive paradigm for science discovery and the Explainable AI-based (hypothesis-free) paradigm for science discovery. The new paradigm uses Explainable AI to generate verifiable hypotheses.

Rediscovering Kepler's Laws

Black-box Model for Prediction

The paper uses a three-layer multilayer perceptron (MLP) as the black-box model that predicts Mars's distance from the Sun and augments the initial dataset. Trained on limited historical data, the model achieves a robust fit, as evidenced by low mean squared errors on both the training and validation sets.
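As a rough illustration of this kind of black-box fit, the sketch below trains a small MLP with plain NumPy on synthetic orbit samples and reports training and validation mean squared error. It is not the paper's model or data: the layer sizes (2 → 8 → 8 → 1), the cos/sin encoding of the angle, the learning rate, and the ideal-ellipse samples are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in for the observations (NOT Tycho Brahe's data):
# angle theta in radians -> Sun-Mars distance r in AU on an ideal ellipse.
a_au, ecc = 1.5237, 0.0934            # approximate modern values for Mars
theta = rng.uniform(0.0, 2.0 * np.pi, size=200)
r = a_au * (1.0 - ecc**2) / (1.0 + ecc * np.cos(theta))

# Periodic encoding of the angle and standardized targets (both assumptions).
X = np.column_stack([np.cos(theta), np.sin(theta)])
y = ((r - r.mean()) / r.std()).reshape(-1, 1)
X_train, y_train, X_val, y_val = X[:160], y[:160], X[160:], y[160:]

# Three weight layers with tanh activations: 2 -> 8 -> 8 -> 1.
sizes = [2, 8, 8, 1]
weights = [rng.normal(0.0, 1.0 / np.sqrt(m), size=(m, n))
           for m, n in zip(sizes[:-1], sizes[1:])]
biases = [np.zeros(n) for n in sizes[1:]]

def forward(inputs):
    """Return the activations of every layer; the last entry is the prediction."""
    acts = [inputs]
    for i, (W, b) in enumerate(zip(weights, biases)):
        z = acts[-1] @ W + b
        acts.append(z if i == len(weights) - 1 else np.tanh(z))
    return acts

def mse(inputs, targets):
    return float(np.mean((forward(inputs)[-1] - targets) ** 2))

train_mse_before = mse(X_train, y_train)

learning_rate = 0.02
for _ in range(3000):                  # full-batch gradient descent
    acts = forward(X_train)
    delta = 2.0 * (acts[-1] - y_train) / len(X_train)   # dLoss/d(output)
    for i in reversed(range(len(weights))):
        grad_W = acts[i].T @ delta
        grad_b = delta.sum(axis=0)
        if i > 0:
            # Back through W_i, then through the tanh of the layer below.
            delta = (delta @ weights[i].T) * (1.0 - acts[i] ** 2)
        weights[i] -= learning_rate * grad_W
        biases[i] -= learning_rate * grad_b

train_mse_after = mse(X_train, y_train)
val_mse_after = mse(X_val, y_val)
print(train_mse_before, train_mse_after, val_mse_after)
```

Once trained, such a model can be queried at arbitrary angles, which is one simple way to picture the data augmentation role described above.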

White-box Model for Explanation

Symbolic regression translates the black-box model into a more intuitive physical model of Mars's orbit. The regression yields an equation showing that Mars moves on an ellipse with the Sun at one focus, in agreement with Kepler's first law of planetary motion. The extracted eccentricity closely matches the value calculated by Kepler and is consistent with modern observations.
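To make the link between an orbit equation and its eccentricity concrete, the sketch below uses ordinary linear least squares as a simple stand-in for the symbolic regression step (it is not the paper's procedure). It assumes the polar form of an ellipse with the Sun at one focus, r = p / (1 + e cos(θ − θ₀)), which makes 1/r linear in cos θ and sin θ. The samples are synthetic and noise-free; `theta0` is an arbitrary illustrative perihelion angle.

```python
import numpy as np

# Synthetic, noise-free orbit samples (illustrative values, not the paper's data).
a_au, e_true, theta0 = 1.5237, 0.0934, 0.6
p_true = a_au * (1.0 - e_true**2)                 # semi-latus rectum in AU
theta = np.linspace(0.0, 2.0 * np.pi, 60, endpoint=False)
r = p_true / (1.0 + e_true * np.cos(theta - theta0))

# 1/r = A + B cos(theta) + C sin(theta), with
# A = 1/p, B = (e/p) cos(theta0), C = (e/p) sin(theta0).
design = np.column_stack([np.ones_like(theta), np.cos(theta), np.sin(theta)])
A, B, C = np.linalg.lstsq(design, 1.0 / r, rcond=None)[0]

e_fit = np.hypot(B, C) / A       # eccentricity
p_fit = 1.0 / A                  # semi-latus rectum
theta0_fit = np.arctan2(C, B)    # direction of perihelion
print(e_fit, p_fit, theta0_fit)
```

A fitted eccentricity strictly between 0 and 1 corresponds to an ellipse, which is the reading associated with Kepler's first law.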

Rediscovering Newton's Laws

Prediction and Data Augmentation

A neural network model θ=NN(t) is utilized to predict Mars's angular position over time and to facilitate data augmentation. Despite the complexity of solving planetary motion equations, the model accurately approximates this relationship.
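For context on why this relationship is awkward to write down, the angular position on an ideal elliptical orbit has no closed form in time and is classically obtained by solving Kepler's equation numerically. The sketch below is that classical reference calculation, not the paper's neural network; it could serve to generate synthetic θ(t) samples of the kind such a network approximates. The function name `true_anomaly` and the orbital values are choices made for the example.

```python
import numpy as np

def true_anomaly(t, period, ecc, n_iter=20):
    """Angular position theta(t) on a Kepler orbit, measured from perihelion."""
    mean_anomaly = 2.0 * np.pi * np.asarray(t, dtype=float) / period
    ecc_anomaly = mean_anomaly.copy()            # starting guess E = M
    for _ in range(n_iter):                      # Newton steps on E - e sin E - M = 0
        residual = ecc_anomaly - ecc * np.sin(ecc_anomaly) - mean_anomaly
        ecc_anomaly = ecc_anomaly - residual / (1.0 - ecc * np.cos(ecc_anomaly))
    # tan(theta/2) = sqrt((1+e)/(1-e)) * tan(E/2), written with arctan2 for stability.
    return 2.0 * np.arctan2(
        np.sqrt(1.0 + ecc) * np.sin(ecc_anomaly / 2.0),
        np.sqrt(1.0 - ecc) * np.cos(ecc_anomaly / 2.0),
    )

# One Martian year sampled at 200 instants (period in days, approximate values).
period_days, ecc_mars = 686.98, 0.0934
t = np.linspace(0.0, period_days, 200, endpoint=False)
theta = true_anomaly(t, period_days, ecc_mars)
```

Pairs `(t, theta)` produced this way are noise-free, so any fit to them only indicates how well a model class can represent the mapping, not how it behaves on real observations.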

Explanation through Symbolic Regression

The paper demonstrates that symbolic regression can recover $r^3\omega^2 = c$ from the motion data of Mars, where $r$ is the Sun-Mars distance, $\omega$ is the angular velocity, and $c$ is a constant. Within reasonable error margins, this rule is consistent with Newton's law of universal gravitation. The exercise highlights how AI models can uncover fundamental physical relationships that, although well known today, were not explicitly formulated before Newton.

Conceptual Insights and Human-AI Collaboration

The paper emphasizes the importance of human interpretation in assigning meaning to AI-discovered equations and deriving insights from them. For instance, while AI can suggest $r^3\omega^2 = c$, it is human experts who identify this as an expression of gravitational forces. Explainable AI can point towards significant laws or constants, guiding human intuition and validating discoveries.
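One way to see the kind of reasoning a human expert might supply is the standard textbook argument below. It assumes a circular orbit (Mars's orbit is only approximately circular) and introduces the symbols $G$ (gravitational constant) and $M$ (mass of the Sun), which do not appear in the summary above; it is offered as a sketch of the interpretation step, not as the paper's derivation.

```latex
% Circular-orbit approximation: r is constant and the motion is uniform.
\begin{align}
  a_c &= \omega^2 r
      && \text{centripetal acceleration needed to stay on the circle} \\
  a_g &= \frac{GM}{r^2}
      && \text{acceleration from an inverse-square attraction toward the Sun} \\
  \omega^2 r &= \frac{GM}{r^2}
      \;\Longrightarrow\; r^3 \omega^2 = GM = c
      && \text{equating the two}
\end{align}
```

Read in this direction, a constant value of $r^3\omega^2$ is what an inverse-square central force predicts, which is why the regression result can be interpreted as gravitational.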

Conclusion

The practical application of Explainable AI in rediscovering historical scientific insights underscores its potential in managing and understanding complex data in various fields. This paradigm facilitates the extraction of interpretable scientific rules from data, crucial to advancing human knowledge amidst growing technological complexities. Future work aims to expand the methodology to contemporary challenges in fields such as astronomy and particle physics, ensuring human comprehension of AI findings to continually advance our theoretical understanding and technological prowess.

Figure 2: Tycho Brahe, Johannes Kepler, Isaac Newton and their roles in the science discovery process.