- The paper introduces MultiVerS, a model that integrates full-document context and weak supervision to improve scientific claim verification.

- The system employs a Longformer encoder and multitask strategy to predict sentence-level rationales and document-level claims effectively.

- Experimental results on SciFact, HealthVer, and COVIDFact datasets show significant performance gains over traditional baselines.

MultiVerS: Improving Scientific Claim Verification

This essay explores "MultiVerS: Improving scientific claim verification with weak supervision and full-document context," which presents a novel approach to the scientific claim verification task using a system named MultiVerS. MultiVerS is designed to enhance the capability of NLP systems in verifying claims by integrating complete document context into predictions and employing weak supervision techniques. This paper provides a thorough investigation of the model's architecture, training regimes, datasets, and experimental outcomes.

Introduction to MultiVerS

MultiVerS is a multitask system aiming to improve scientific claim verification through innovative model design and training strategies. The system tackles two significant challenges: ensuring predictions are based on comprehensive document context and facilitating weakly-supervised domain adaptation. The paper introduces two primary modeling advancements. Firstly, MultiVerS utilizes a shared encoding of claims and document context to make predictions, thus guaranteeing all necessary information is incorporated into decision-making. Secondly, the model learns from document-level labels without sentence-level rationales, enabling training on large datasets annotated via high-precision heuristics.

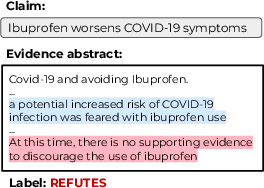

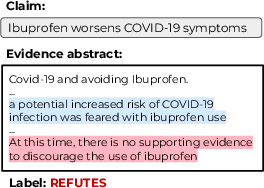

Figure 1: A claim from the HealthVer dataset, refuted by a research abstract, indicative of the importance of context in interpretation.

Model Architecture

The MultiVerS architecture employs the Longformer encoder to manage long sequences, crucial for encoding claims alongside full abstract contexts. This approach contrasts with traditional extract-then-label models by predicting fact-checking labels directly and ensuring consistency between labels and selected rationales during decoding. The Longformer facilitates attention over extensive document contexts, improving the ability to capture relevant information dispersed throughout scientific texts.

The model executes a multitask prediction strategy, where it simultaneously determines sentence-level rationales and document-level claims. During training, rationales are predicted using binary classification, which allows for adaptability in various supervision settings, including full, few-shot, and zero-shot regimes.

Training and Weak Supervision

MultiVerS leverages weak supervision by training on datasets annotated using heuristics, thus preparing the model for domain adaptation. The pretraining step combines general domain data with in-domain weakly labeled data, which significantly enhances model performance in zero-shot and few-shot settings. This strategy is particularly beneficial in specialized domains, where labeled data are sparse and expensive to procure.

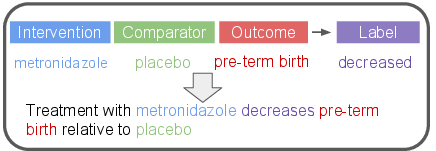

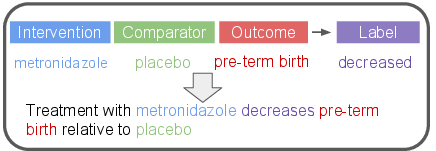

Figure 2: An illustration of converting an evidence inference prompt into a claim using templates for automatic generation of refuted claims.

Dataset Utilization

The paper evaluates MultiVerS using three scientific claim verification datasets: SciFact, HealthVer, and COVIDFact. Each dataset presents distinct characteristics in terms of claim complexity, retrieval settings, and negation methods, providing a comprehensive assessment of the model's adaptability and robustness. SciFact focuses on biomedical claims, HealthVer on COVID-related claims, and COVIDFact on claims extracted from social media, covering a diverse range of scientific topics and claim formats.

Experimental Results

MultiVerS demonstrates superior performance over existing baselines, particularly in zero-shot and few-shot training scenarios. The model achieves significant improvements in abstract-level labeling, showcasing its effectiveness in integrating document-wide context. Ablations reveal that in-domain weakly supervised pretraining contributes substantially to improved task performance. Additionally, the Longformer encoder proves advantageous for handling datasets with lengthy documents.

Analysis and Future Directions

The study highlights areas for improvement, such as addressing the limitations of traditional pipeline models, which may over-fit to rationale selection inaccuracies. Future research directions include exploring claim verification across broader academic and specialized domains, scaling verification tasks to full papers, and incorporating more sophisticated domain adaptation techniques.

Conclusion

MultiVerS advances scientific claim verification by integrating full-document context and employing weak supervision for domain adaptation. Through comprehensive experimentation and analysis, the paper demonstrates meaningful progress in addressing misinformation in scientific domains. MultiVerS's approach paves the way for the development of more robust and adaptive fact-checking systems in scientific and other discourse-heavy fields.