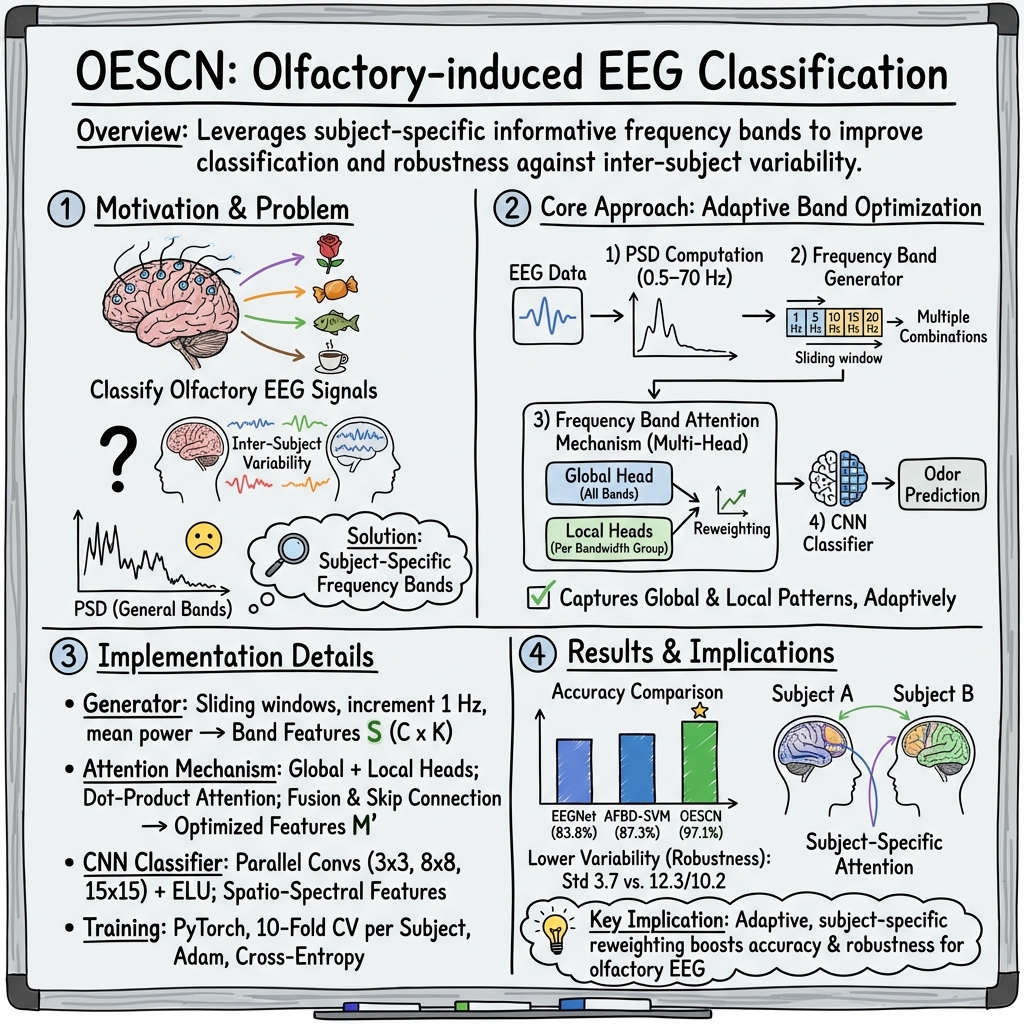

- The paper introduces a network that combines a frequency band generator with a frequency band attention mechanism to improve olfactory EEG classification.

- The method applies a sliding window to PSD features and classifies with a CNN, reaching an average accuracy of 97.1%.

- Results show improved robustness to inter-subject variability, and the approach may extend to broader EEG applications.

An Olfactory EEG Signal Classification Network Based on Frequency Band Feature Extraction

Introduction

Electroencephalogram (EEG) signals are widely used to examine brain activity across paradigms such as visual stimulation, auditory stimulation, and motor imagery. Recently, the olfactory sense has gained attention because odors can evoke potent memories and trigger emotional responses. The study of olfactory-induced EEG signals matters in fields such as neuroscience and brain-computer interfaces. Existing methods typically use power spectral density (PSD) to extract features, focusing on specific frequency bands. However, inter-subject variability means that the extracted frequency bands should capture subject-specific information rather than only generalizable features.

Methodology

The paper proposes a network that exploits specific frequency bands within EEG signals to classify olfactory-related data. The network has three components: a frequency band generator, a frequency band attention mechanism, and a convolutional neural network (CNN) for classification. The frequency band generator applies a sliding window to PSD features to derive the frequency bands that matter for classifying EEG signals. The frequency band attention mechanism then adjusts those bands to account for differences between individual subjects. Finally, the CNN extracts spatio-spectral information and outputs the classification.
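The first two stages can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions, not the paper's implementation: the window width and step, the toy 1/f spectrum, the helper names (`sliding_bands`, `apply_band_attention`), and the use of a softmax over per-band scores are all hypothetical choices. In the actual network the attention scores would presumably be learned, whereas here they are fixed placeholders.

```python
import math

def sliding_bands(freqs, psd, width_hz, step_hz):
    """Slide a fixed-width window over a PSD and return one
    (low_hz, high_hz, mean_power) tuple per window position."""
    bands = []
    low = freqs[0]
    while low + width_hz <= freqs[-1] + 1e-9:
        high = low + width_hz
        values = [p for f, p in zip(freqs, psd) if low <= f < high]
        if values:
            bands.append((low, high, sum(values) / len(values)))
        low += step_hz
    return bands

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def apply_band_attention(band_powers, scores):
    """Reweight each band's power by its softmax attention weight."""
    weights = softmax(scores)
    return [w * p for w, p in zip(weights, band_powers)]

# Toy single-channel PSD (assumption): 1 Hz resolution, 1-40 Hz, 1/f shape.
freqs = [float(f) for f in range(1, 41)]
psd = [1.0 / f for f in freqs]

# Hypothetical window settings: 4 Hz wide, moved in 2 Hz steps.
bands = sliding_bands(freqs, psd, width_hz=4.0, step_hz=2.0)

# Placeholder scores (all equal); a trained model would supply these.
scores = [0.0] * len(bands)
weighted = apply_band_attention([b[2] for b in bands], scores)
```

With equal scores every band receives the same weight, so the attention step only rescales the features. Subject-specific scores would shift weight toward the bands that are most informative for that subject.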

Experimental Setup

Experiments used EEG data recorded during olfactory stimulation with 13 different odors across 11 subjects. The signals were recorded with a 32-channel system, with electrodes placed according to the international 10-20 system. Classification accuracy was assessed with 10-fold cross-validation and compared against baseline approaches such as EEGNet and the Average Frequency Band Division with Support Vector Machine (AFBD-SVM).
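For readers unfamiliar with the evaluation protocol, the following is a minimal sketch of how 10-fold cross-validation partitions a set of trials. The trial count (130), the random seed, and the function name `k_fold_indices` are assumptions for illustration. The summary does not say how many trials each subject contributed or whether the folds were formed per subject.

```python
import random

def k_fold_indices(n_samples, k=10, seed=0):
    """Yield (train_indices, test_indices) for each of k folds.
    Every sample lands in exactly one test fold."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Deal the shuffled indices into k roughly equal folds.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test = folds[i]
        train = [j for fold in folds[:i] + folds[i + 1:] for j in fold]
        yield train, test

# Hypothetical number of trials, not taken from the paper.
n_trials = 130
splits = list(k_fold_indices(n_trials, k=10))
```

Each model is trained on nine folds and tested on the held-out fold, and the reported accuracy is the average over the ten test folds.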

Results

The results indicate that the proposed olfactory EEG signal classification network (OESCN) outperforms the baselines, with an average accuracy of 97.1%, compared to 83.8% for EEGNet and 87.3% for AFBD-SVM. The method was also more robust to inter-subject variability, showing a lower inter-subject standard deviation. These outcomes point to a noticeable gain from the frequency band generator and attention mechanism, which improve both the accuracy and the robustness of classification.
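The two summary statistics mentioned here can be written out as follows, where $a_s$ denotes the classification accuracy for subject $s$ and $S = 11$ is the number of subjects. The symbols are introduced here for illustration, and the population form of the standard deviation is an assumption, since the summary does not say whether the paper divides by $S$ or $S - 1$.

$$
\bar{a} = \frac{1}{S}\sum_{s=1}^{S} a_s,
\qquad
\sigma = \sqrt{\frac{1}{S}\sum_{s=1}^{S}\left(a_s - \bar{a}\right)^2}
$$

A smaller $\sigma$ means the per-subject accuracies cluster more tightly around the average, which is the sense in which the method is described as robust to inter-subject variability.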

Discussion and Implications

The frequency band attention mechanism appears critical for adapting features to the individual subject, which makes it useful when inter-subject variability is high, as is typical of EEG data. The proposed approach may also extend beyond olfactory stimuli. Because it can incorporate various spectral-domain features, the method is potentially generalizable to other EEG analysis tasks where subject-specific variation is pronounced.

Conclusion

Overall, the paper contributes a tool for classifying olfactory EEG signals that exploits spectral-domain information through a dedicated frequency band extraction process. Its robustness to inter-subject variability lays the groundwork for future work on more adaptable methods for other EEG studies, with possible impact on technologies that depend on accurate interpretation of brain signals, notably brain-computer interfaces and neurological assessment.