- The paper presents a novel framework using container orchestration tools to enhance resource management and collaboration in analysis facilities.

- It details deployment strategies and security measures implemented at Coffea-casa and Fermilab’s Elastic Analysis Facility.

- The study demonstrates how GitOps and Helm integration reduce recovery time and enable dynamic, scalable deployments.

Collaborative Computing Support for Analysis Facilities Exploiting Software as Infrastructure Techniques

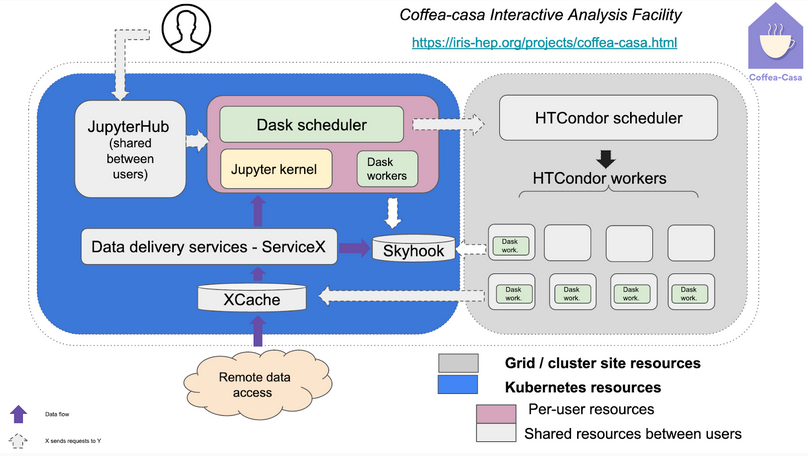

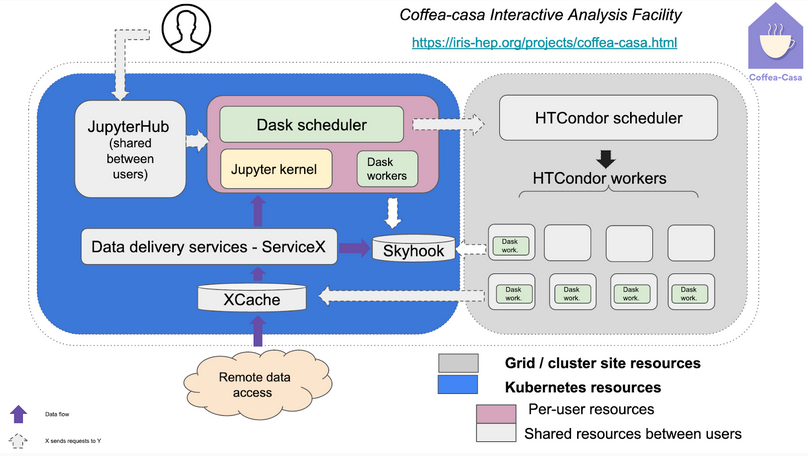

The paper presents methodologies and frameworks that support collaborative computing across analysis facilities, focusing on software-as-infrastructure techniques built on the Kubernetes and OKD platforms. These techniques abstract complex system configurations, which improves resource management and collaboration. The paper examines two analysis facilities, "Coffea-casa" at the University of Nebraska and the "Elastic Analysis Facility" at Fermilab, covering their deployment strategies, security implementations, and future expectations.

Container-based Infrastructure and Orchestration

Containers give these facilities a practical balance between flexibility, portability, and isolation. Kubernetes is highlighted for its role in orchestrating those containers, which allows infrastructure-as-code to be deployed efficiently at large scale. Coffea-casa uses Kubernetes to host services such as a JupyterHub instance that authenticates users and allocates a dedicated analysis pod for each user's computations. Each pod bundles several components, which improves responsiveness and resource management.
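To make the idea of a dedicated per-user analysis pod concrete, the following minimal sketch builds a Kubernetes Pod manifest as a plain Python dictionary. The manifest fields (`apiVersion`, `kind`, `metadata`, `spec.containers`, `resources`) follow the standard Pod schema, but the function names, container names, image URLs, and resource values are hypothetical and are not taken from the Coffea-casa deployment.

```python
import re


def k8s_name(username: str) -> str:
    """Turn a username into a string usable in Kubernetes object names."""
    # Kubernetes names allow lowercase letters, digits, and '-'.
    cleaned = re.sub(r"[^a-z0-9-]+", "-", username.lower()).strip("-")
    if not cleaned:
        raise ValueError("username has no usable characters")
    return cleaned[:50].rstrip("-")


def build_analysis_pod(username: str, cpu: str = "2", memory: str = "4Gi") -> dict:
    """Return a Pod manifest for one user's analysis session (illustrative only)."""
    safe = k8s_name(username)
    resources = {
        "requests": {"cpu": cpu, "memory": memory},
        "limits": {"cpu": cpu, "memory": memory},
    }
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {
            "name": f"analysis-{safe}",
            "labels": {"app": "analysis-pod", "user": safe},
        },
        "spec": {
            "containers": [
                # Hypothetical images: one notebook server plus one helper component.
                {
                    "name": "notebook",
                    "image": "example.org/analysis-notebook:latest",
                    "resources": resources,
                },
                {
                    "name": "scheduler",
                    "image": "example.org/task-scheduler:latest",
                },
            ]
        },
    }


pod = build_analysis_pod("Alice.Smith")
print(pod["metadata"]["name"])
```

Generating the manifest from a function keeps the per-user differences (name, labels, resource requests) in one place, so every user receives the same pod layout.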

Figure 1: CMS analysis facility at the University of Nebraska.

Fermilab's Elastic Analysis Facility, by contrast, runs on OKD, which adds the security features needed for compliance while accommodating multi-tenant requirements. Both facilities rely on orchestration frameworks to dynamically provision computational clusters using HTCondor schedulers.
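One way to picture dynamic provisioning is as a sizing rule that maps queued work to a worker count. The sketch below is an assumption made for illustration, not the policy either facility uses: `desired_workers`, `jobs_per_worker`, and the bounds are hypothetical names and values, and no HTCondor API is called.

```python
import math


def desired_workers(
    queued_jobs: int,
    jobs_per_worker: int = 4,
    min_workers: int = 0,
    max_workers: int = 50,
) -> int:
    """Return how many worker pods to run for the given number of queued jobs."""
    if queued_jobs < 0 or jobs_per_worker <= 0:
        raise ValueError("queued_jobs must be >= 0 and jobs_per_worker > 0")
    if min_workers > max_workers:
        raise ValueError("min_workers must not exceed max_workers")
    # Enough workers to hold every job, rounded up to whole workers.
    wanted = math.ceil(queued_jobs / jobs_per_worker)
    # Clamp to the configured pool limits.
    return max(min_workers, min(max_workers, wanted))


print(desired_workers(9))
```

An orchestrator would evaluate a rule like this periodically and scale the worker pool toward the returned value.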

Ensuring Reliability through Helm & BinderHub Integration

Helm charts are central to the modularity and portability of the infrastructure definitions, enabling fast deployments and rollbacks. By packaging their services as charts, both facilities obtain modular architectures that can be recovered and redeployed quickly.
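The rollback behavior can be illustrated with a small model of a release's revision history. This is a conceptual sketch in plain Python, not Helm's implementation or API: the `ReleaseHistory` class and its methods are hypothetical. It mirrors one property of Helm, namely that a rollback is recorded as a new revision rather than erasing history.

```python
from copy import deepcopy
from dataclasses import dataclass, field


@dataclass
class ReleaseHistory:
    """Append-only history of configuration values for one release."""

    name: str
    revisions: list = field(default_factory=list)

    def upgrade(self, values: dict) -> int:
        """Record a new revision and return its 1-based revision number."""
        self.revisions.append(deepcopy(values))
        return len(self.revisions)

    def rollback(self, revision: int) -> int:
        """Re-apply an earlier revision as a new revision."""
        if not 1 <= revision <= len(self.revisions):
            raise ValueError(f"unknown revision {revision}")
        return self.upgrade(self.revisions[revision - 1])

    @property
    def current(self) -> dict:
        """Values of the most recent revision."""
        if not self.revisions:
            raise LookupError("release has no revisions")
        return self.revisions[-1]


history = ReleaseHistory("jupyterhub")
history.upgrade({"replicas": 1})
history.upgrade({"replicas": 3})
history.rollback(1)
print(history.current)
```

Because earlier revisions are never overwritten, any previous configuration remains available as a recovery target.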

BinderHub, which turns code repositories into executable Jupyter notebook environments, contributes to reproducibility and user interaction. Because it is cloud-based, Fermilab subjects BinderHub to stringent security measures to prevent misuse, and it integrates with the JupyterHub APIs for consistent user authentication and data access control. This integration shows how flexibly resources can be used: developers can run arbitrary user code securely across diverse computational demands.

GitOps in Scientific Computing

GitOps, implemented via tools like Flux and ArgoCD, has changed how infrastructure is managed in scientific analysis facilities. By treating a version control system as the single source of truth, both sites maintain deterministic and collaborative administration workflows. GitOps builds on continuous integration and delivery practices, which improve software quality and shorten deployment times. The result is a substantial operational improvement: infrastructure failures can be handled and recovered from efficiently.
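The core of the GitOps model is a reconciliation step that compares the desired state stored in Git with the live state and corrects any drift. The following sketch shows that idea with plain dictionaries. It does not use the Flux or ArgoCD APIs, and the function and resource names are hypothetical.

```python
from copy import deepcopy


def plan(desired: dict, live: dict) -> list:
    """List the (action, name) pairs needed to make live match desired."""
    actions = []
    for name, spec in desired.items():
        if name not in live:
            actions.append(("create", name))
        elif live[name] != spec:
            actions.append(("update", name))
    for name in live:
        if name not in desired:
            actions.append(("delete", name))
    return sorted(actions)


def reconcile(desired: dict, live: dict) -> list:
    """Apply the plan to live in place and return the actions taken."""
    actions = plan(desired, live)
    for action, name in actions:
        if action == "delete":
            del live[name]
        else:
            live[name] = deepcopy(desired[name])
    return actions


# Desired state as it might be read from a Git repository (illustrative values).
desired = {"jupyterhub": {"replicas": 2}, "htcondor": {"replicas": 1}}
live = {"jupyterhub": {"replicas": 1}, "old-service": {"replicas": 1}}
print(reconcile(desired, live))
```

Running the reconciliation a second time produces no actions, which is the property that makes the workflow deterministic: the live state converges to whatever the repository declares.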

Repeatability, Reliability, and Scalability

GitOps simplifies error recovery by keeping all configuration in version-controlled repositories, which provide a complete history of every change. This history plays the same role as audit and transaction logs, which are vital for rollback and failure mitigation. The resulting reduction in mean time to recovery (MTTR) is a significant operational advantage: it minimizes downtime and preserves service integrity even under adverse conditions.
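MTTR is the average time between detecting a failure and restoring service. A minimal sketch of that calculation follows. The function name and the incident timestamps are made up for illustration and are not measurements from either facility.

```python
from datetime import datetime, timedelta


def mean_time_to_recovery(incidents: list) -> timedelta:
    """Average (restored - detected) over a list of (detected, restored) pairs."""
    if not incidents:
        raise ValueError("at least one incident is required")
    for detected, restored in incidents:
        if restored < detected:
            raise ValueError("an incident was restored before it was detected")
    total = sum(
        (restored - detected for detected, restored in incidents),
        timedelta(),
    )
    return total / len(incidents)


# Illustrative incidents lasting 30 and 10 minutes.
incidents = [
    (datetime(2024, 1, 5, 9, 0), datetime(2024, 1, 5, 9, 30)),
    (datetime(2024, 2, 1, 14, 0), datetime(2024, 2, 1, 14, 10)),
]
print(mean_time_to_recovery(incidents))
```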

Conclusion

The paper outlines the progress made in optimizing analysis facilities through containerization and orchestration platforms that strengthen collaborative computing. By integrating Kubernetes, OKD, Helm, and GitOps, these facilities have achieved sustainable, scalable deployments, and the approach promises broader applicability across scientific computing domains. As development continues, these methodologies could serve as a blueprint for future facilities that need efficiency and adaptability in high-capacity environments.