- The paper introduces deep learning methods augmented with attention mechanisms that outperform classical feature-based techniques.

- It evaluates spatiotemporal interest point features and temporal convolutional networks on 99 cataract surgery video samples.

- Results demonstrate improved AUCs and predictive performance, indicating potential for unbiased skill evaluation and enhanced surgical training.

Video-based Assessment of Intraoperative Surgical Skill

Introduction

The paper "Video-based assessment of intraoperative surgical skill" (2205.06416) investigates the use of video analysis to assess surgical skill, a vital factor impacting patient outcomes. The study aims to compare feature-based methods, traditionally used in benchtop settings, with novel deep learning approaches for evaluating surgical skill directly from intraoperative video footage. It focuses on the specific surgical task of capsulorhexis in cataract surgery, evaluating 99 video samples through state-of-the-art machine learning techniques, including spatiotemporal interest points and neural networks augmented with attention mechanisms.

Feature-based Methods

Feature-based methods identify spatiotemporal interest points (STIPs) and extract descriptors such as HoG, HoF, and MBH around them. These descriptors capture motion dynamics and spatial appearance, and are typically analyzed with linear classifiers. Techniques like Augmented Bag-of-Words (Aug. BoW) extend the traditional BoW representation by incorporating temporal dependencies between visual words, information that a plain BoW histogram discards.
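To make the idea of a temporally aware bag-of-words concrete, here is a minimal sketch. It is not the Aug. BoW method itself: it is a simplified stand-in that splits the video into fixed temporal bins and builds one codeword histogram per bin. The function names, the toy codebook, and the 2-D descriptors are all hypothetical and chosen for illustration only.

```python
from math import dist

def nearest_word(descriptor, codebook):
    """Index of the codeword closest (Euclidean distance) to the descriptor."""
    return min(range(len(codebook)), key=lambda k: dist(descriptor, codebook[k]))

def temporal_bow(descriptors, times, codebook, n_bins, duration):
    """Concatenate one codeword histogram per temporal bin, then L1-normalize.

    Assumption: each interest point has a descriptor and a timestamp in
    [0, duration]. Real HoG/HoF/MBH descriptors are much higher-dimensional.
    """
    n_words = len(codebook)
    hist = [0.0] * (n_bins * n_words)
    for desc, t in zip(descriptors, times):
        # Clamp so that t == duration falls in the last bin.
        b = min(int(t / duration * n_bins), n_bins - 1)
        hist[b * n_words + nearest_word(desc, codebook)] += 1.0
    total = sum(hist)
    return [h / total for h in hist] if total else hist

# Toy data (hypothetical): two codewords, four interest points, a 10 s clip.
codebook = [(0.0, 0.0), (1.0, 1.0)]
descriptors = [(0.1, 0.0), (0.9, 1.1), (1.0, 0.8), (0.0, 0.2)]
times = [0.5, 1.0, 2.0, 9.5]
features = temporal_bow(descriptors, times, codebook, n_bins=2, duration=10.0)
print(features)  # [0.25, 0.5, 0.25, 0.0]
```

With a single bin this reduces to an ordinary BoW histogram; using more bins keeps coarse information about when each visual word occurred, which is the kind of temporal cue a linear classifier can then exploit.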

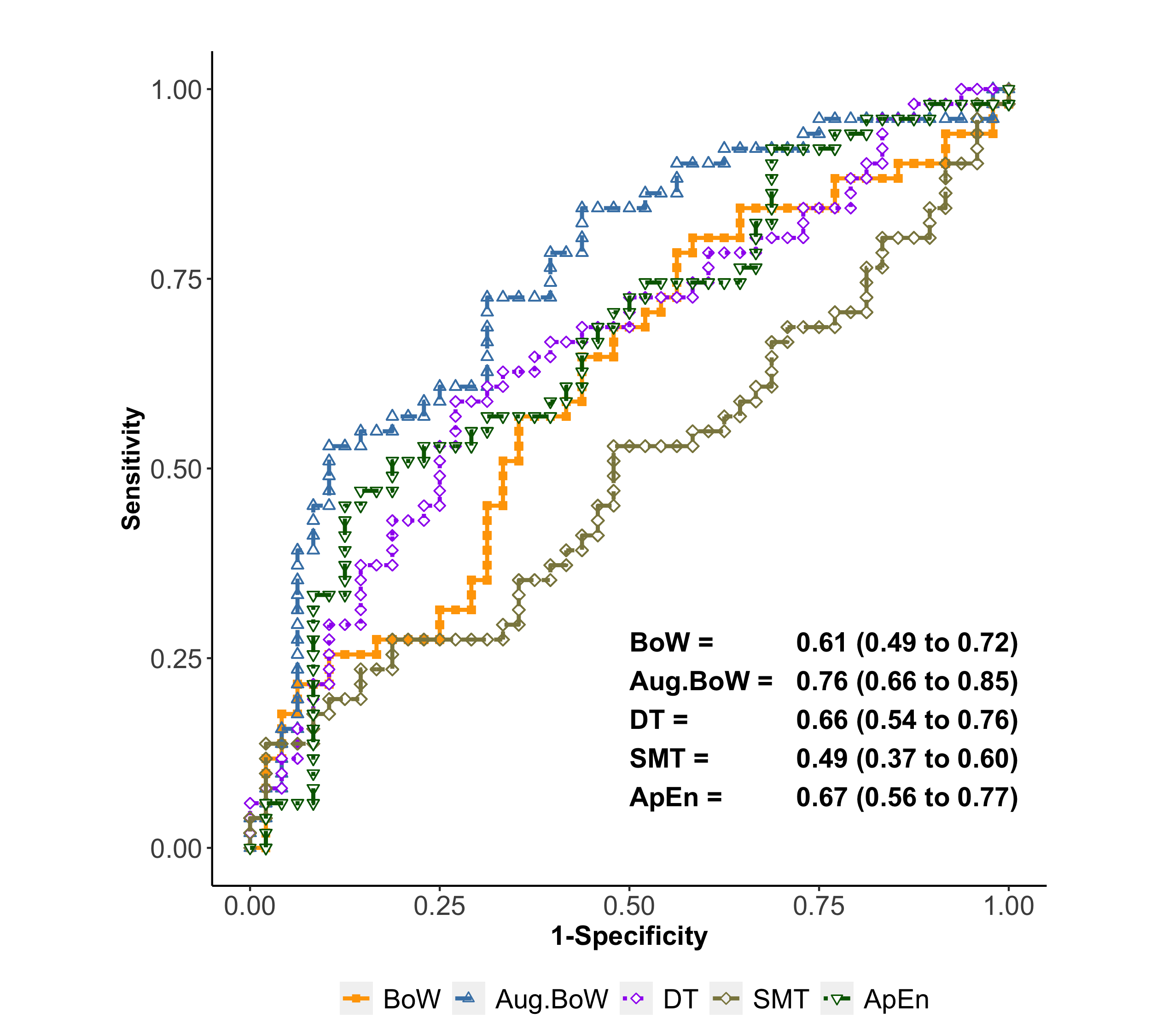

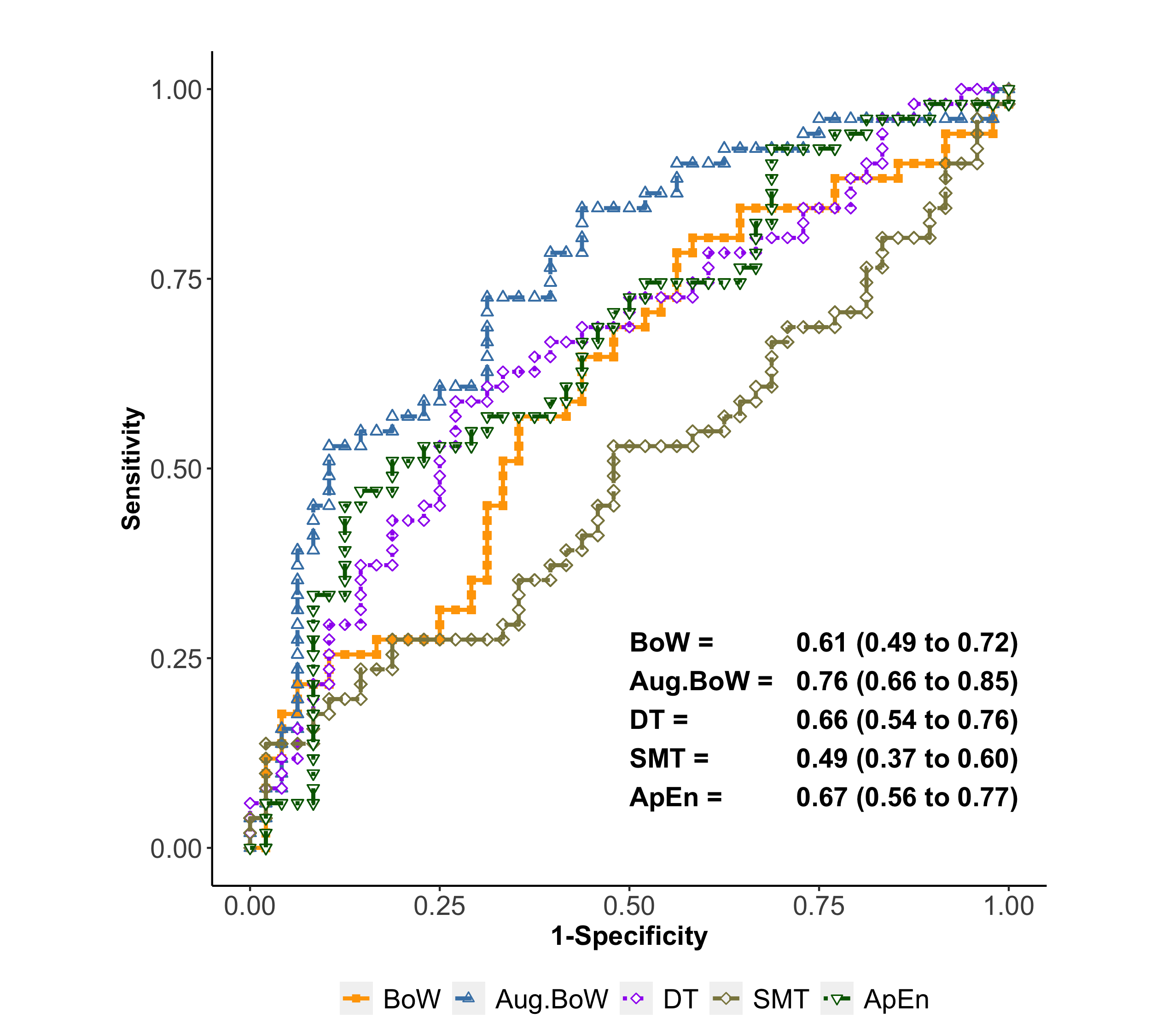

Figure 1: ROC plots for interest-point based methods. Numbers on plots are AUC and 95% confidence intervals.

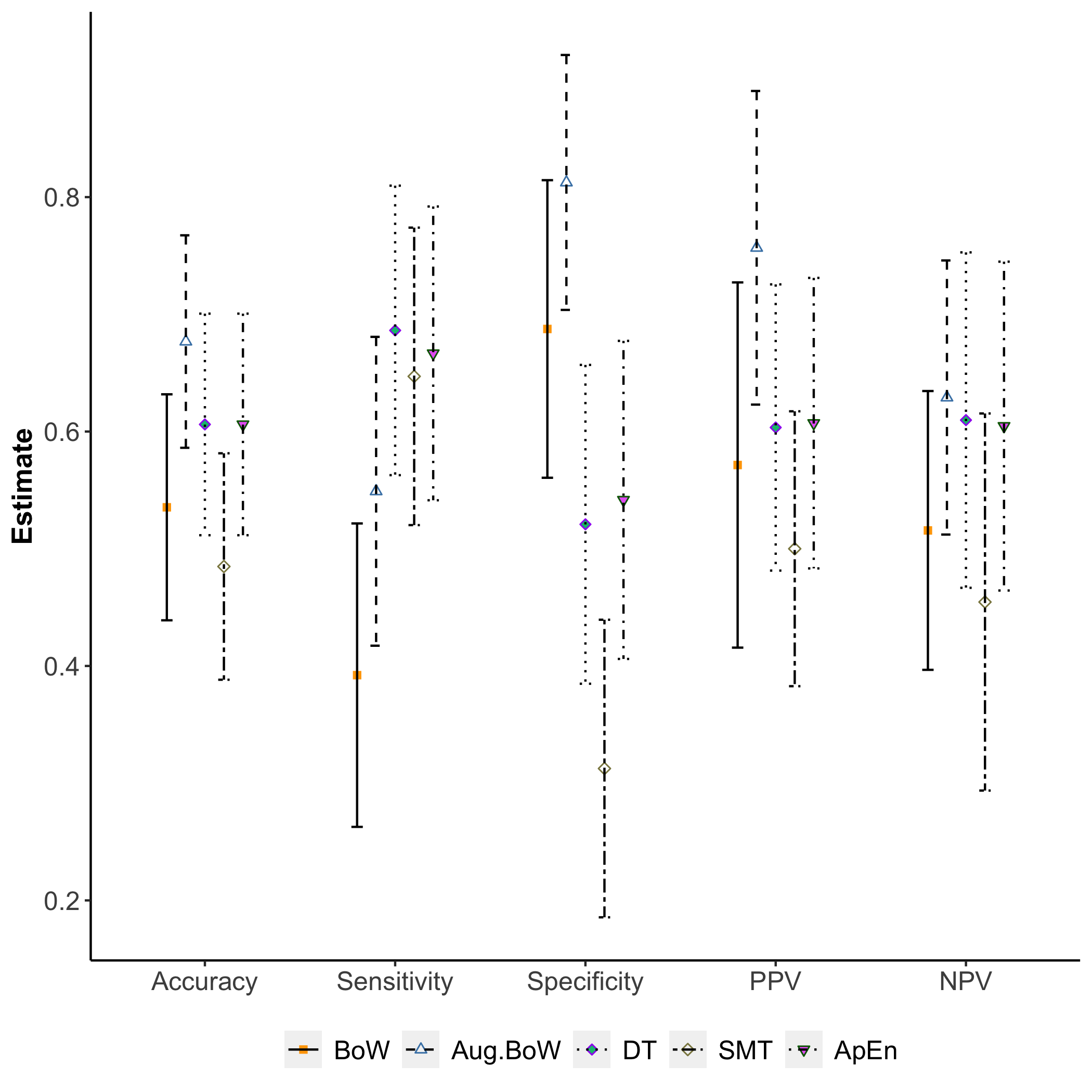

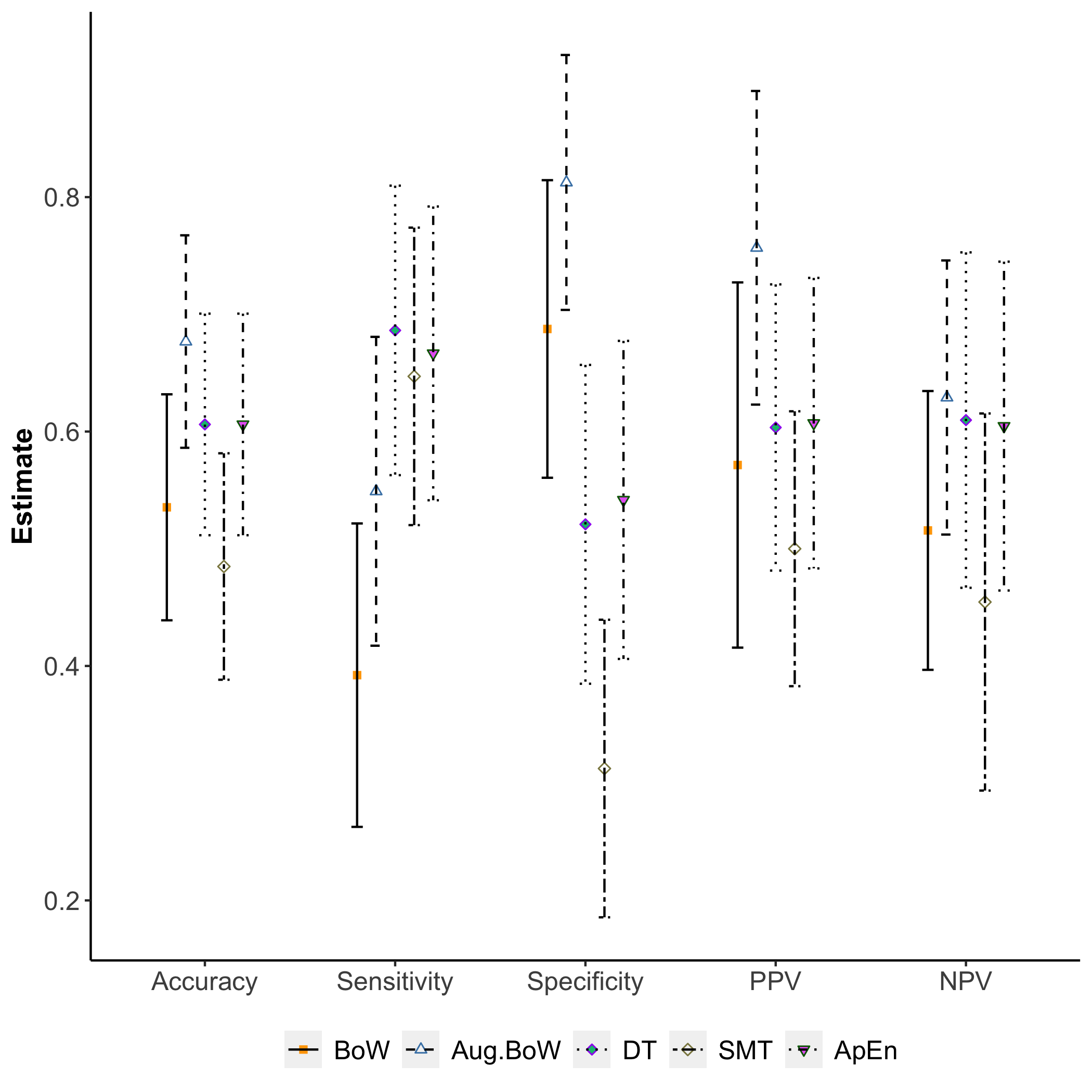

Despite their structured approach, these methods provide limited sensitivity and specificity, as shown in the ROC plots (Figure 1) and predictive performance assessments (Figure 2). Their reliance on predefined feature extraction may hinder adaptive capabilities in complex surgical environments.

Figure 2: Predictive performance of interest-point based methods.
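The ROC plots report AUCs with 95% confidence intervals. The summary here does not say how those intervals were computed, so the following is only a sketch under the assumption of a percentile bootstrap over videos. The labels and scores are made-up toy values, not results from the paper.

```python
import random

def auc(labels, scores):
    """AUC as the probability that a positive outscores a negative (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def bootstrap_ci(labels, scores, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap interval for the AUC (assumed method, see lead-in)."""
    rng = random.Random(seed)
    n = len(labels)
    stats = []
    while len(stats) < n_boot:
        idx = [rng.randrange(n) for _ in range(n)]
        ys = [labels[i] for i in idx]
        if 0 < sum(ys) < n:  # a resample needs both classes for the AUC to be defined
            stats.append(auc(ys, [scores[i] for i in idx]))
    stats.sort()
    lo = stats[int(alpha / 2 * n_boot)]
    hi = stats[int((1 - alpha / 2) * n_boot) - 1]
    return lo, hi

# Toy data (hypothetical): 1 = expert, 0 = novice, scores from some classifier.
labels = [0, 0, 0, 1, 0, 1, 1, 1]
scores = [0.1, 0.3, 0.35, 0.4, 0.6, 0.7, 0.8, 0.9]
point = auc(labels, scores)
lo, hi = bootstrap_ci(labels, scores)
print(point)  # 0.9375
```

With only 99 videos, intervals of this kind tend to be wide, which is worth keeping in mind when comparing AUCs between methods.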

Deep Learning Methods

Deep learning methods surpass conventional approaches by learning representations from the video instead of relying on hand-crafted descriptors, using architectures such as Temporal Convolutional Networks (TCN) and dual-attention networks. The TCN-based approach operates on predicted surgical tool tip trajectories, which encode the movement dynamics relevant to skill discrimination. Adding attention mechanisms improves performance further by letting the model focus on relevant spatiotemporal regions in the video, which addresses the limitations of purely motion-based analysis.
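The role of attention can be illustrated with a generic temporal attention pooling step. This is a sketch of the general mechanism only, not the paper's dual-attention architecture, whose details are not given here. The per-frame features and the query vector are hypothetical; in a trained network both would be learned.

```python
from math import exp

def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    es = [exp(x - m) for x in xs]
    s = sum(es)
    return [e / s for e in es]

def attention_pool(frame_features, query):
    """Weight each frame by softmax(feature . query) and return the weighted sum."""
    scores = [sum(f * q for f, q in zip(feat, query)) for feat in frame_features]
    weights = softmax(scores)
    dim = len(frame_features[0])
    pooled = [sum(w * feat[d] for w, feat in zip(weights, frame_features))
              for d in range(dim)]
    return pooled, weights

# Toy data (hypothetical): three frames with 2-D features.
frames = [[1.0, 0.0], [0.0, 1.0], [3.0, 0.0]]
query = [1.0, 0.0]
pooled, weights = attention_pool(frames, query)
print(weights)  # the third frame receives the largest weight
```

The weights show which frames dominate the pooled representation. The same principle, applied over spatial locations as well as time, is what lets an attention-based model emphasize informative regions of the video.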

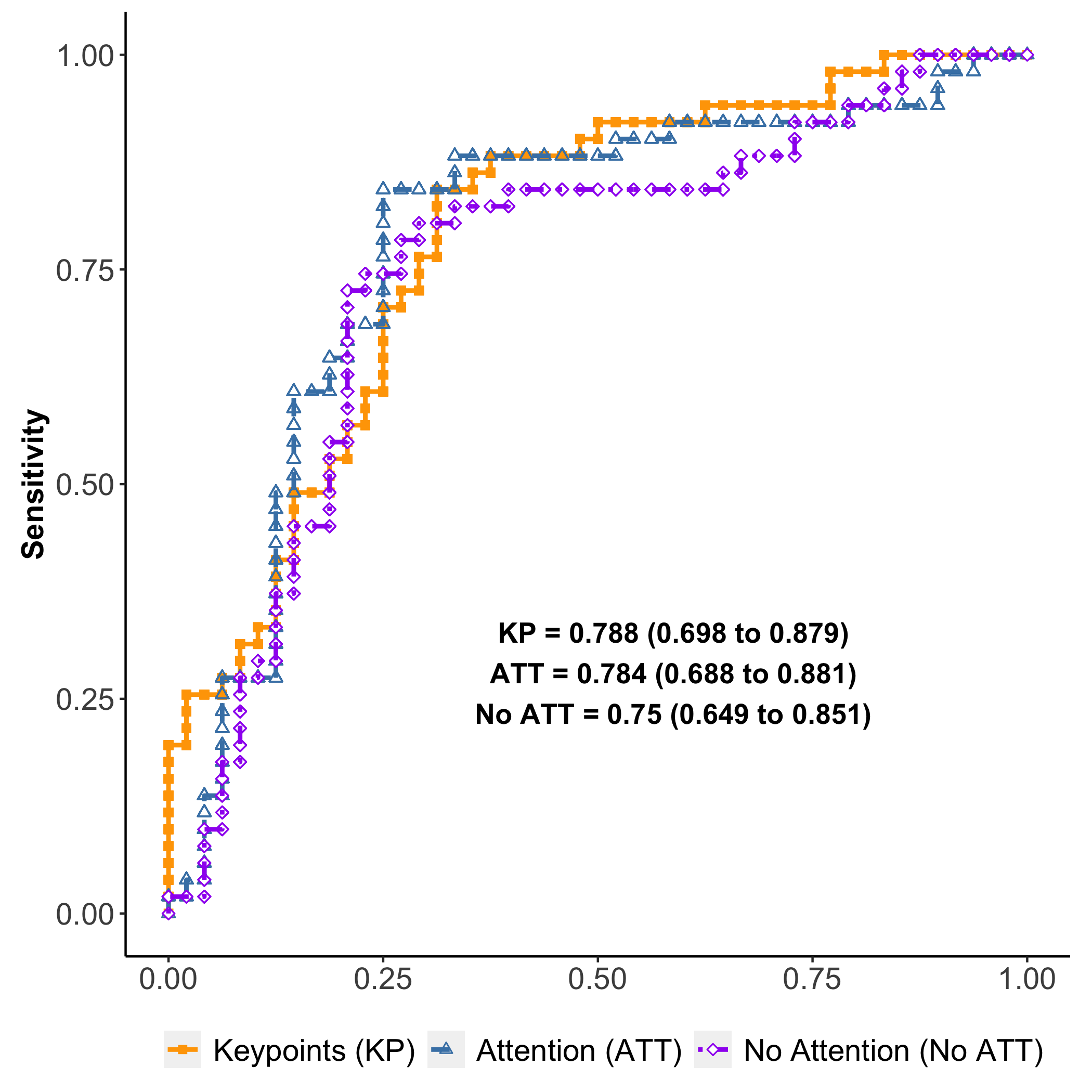

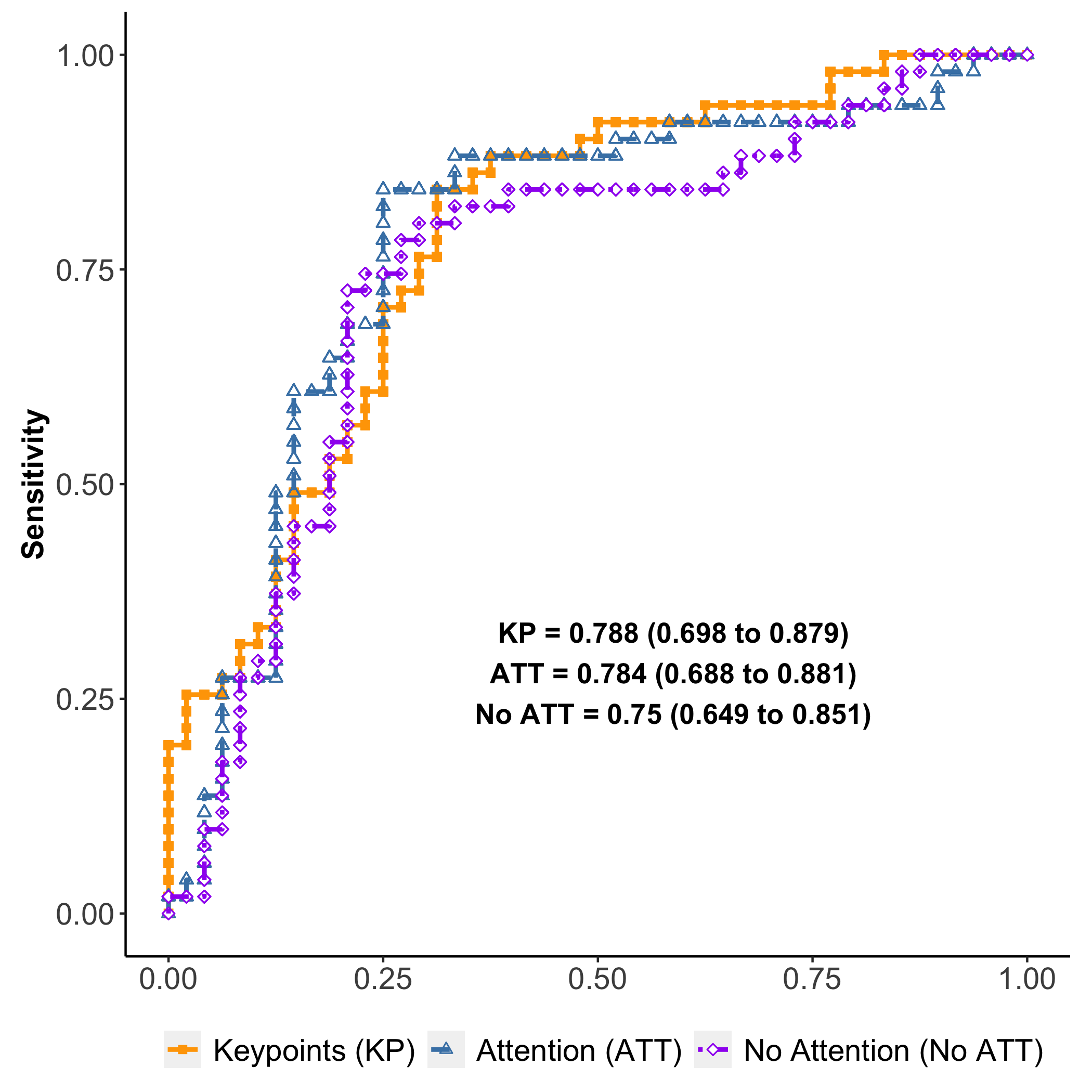

Figure 3: ROC plots for deep learning methods. Numbers on plots are AUC and 95% confidence intervals.

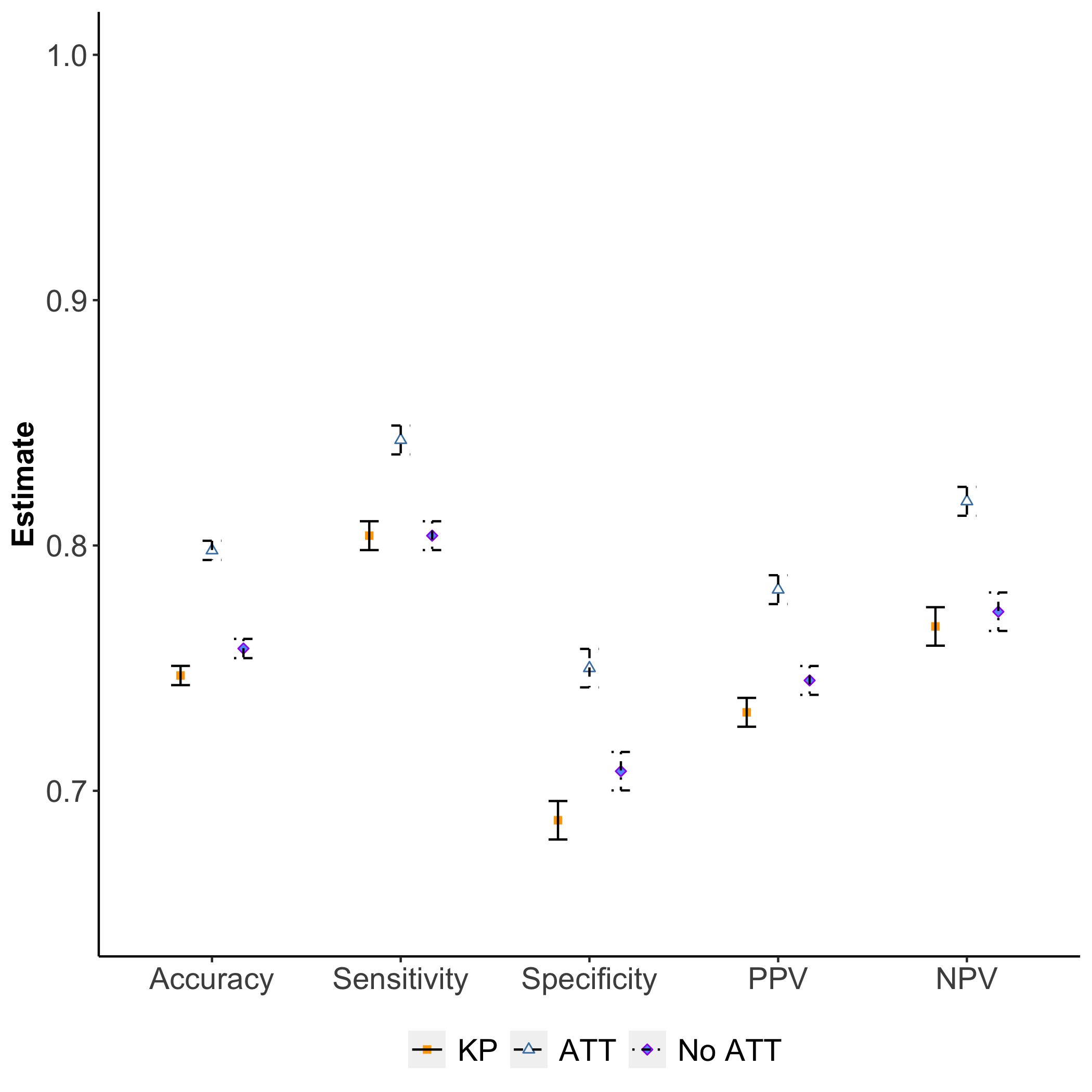

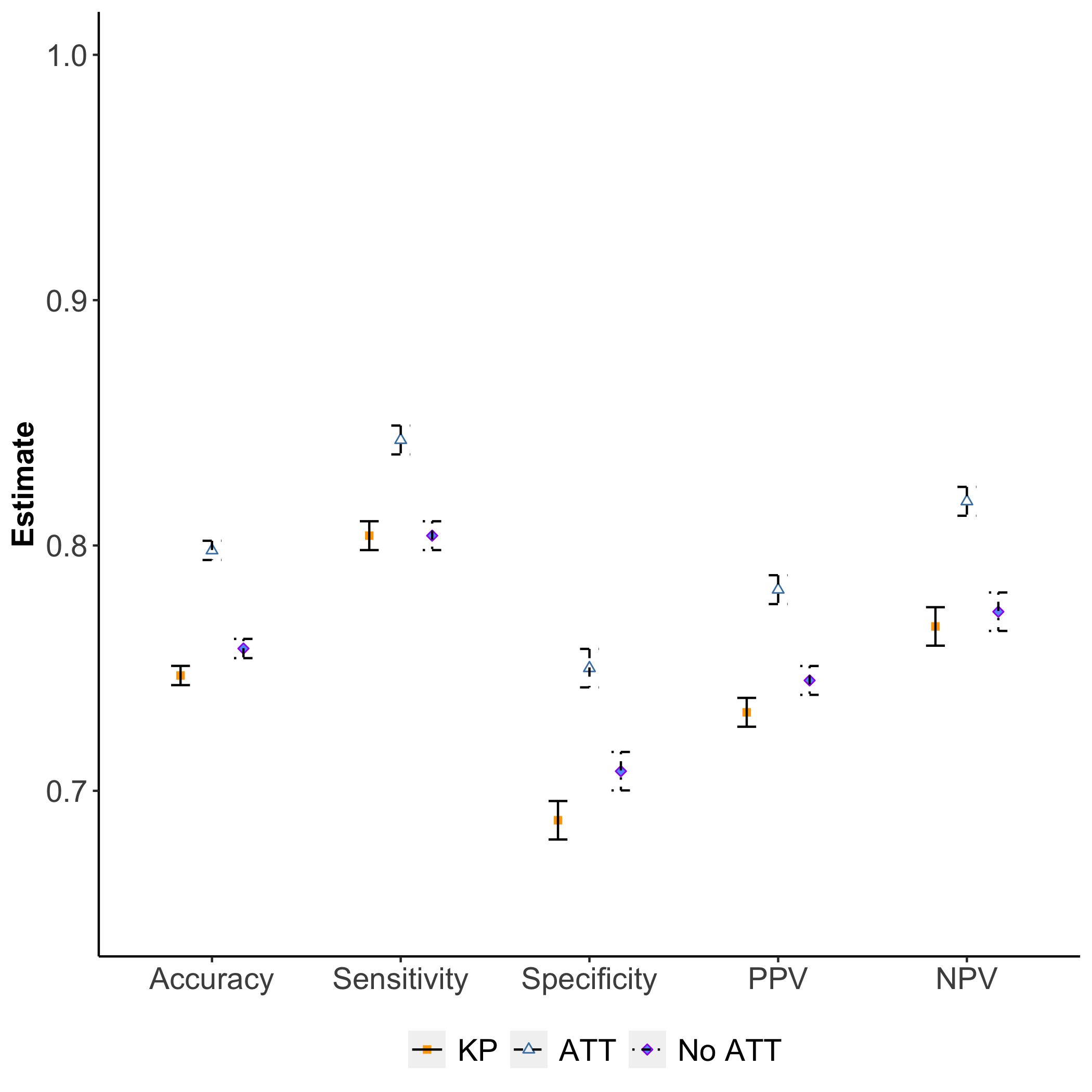

The ROC plots for deep learning methods (Figure 3) demonstrate superior AUCs compared to feature-based counterparts, with attention mechanisms yielding higher sensitivity and specificity (Figure 4). These results indicate the effectiveness of attention in capturing contextual data beyond tool motions, leading to more accurate skill assessments.

Figure 4: Predictive performance of deep learning methods.

Dataset and Experiments

The study utilizes a dataset of 99 video samples of capsulorhexis, where each video is annotated with skill ratings based on standardized rubrics. It employs a rigorous 5-fold cross-validation technique to ensure robustness in evaluation across various experimental settings, including binary classification and multi-class labeling of skill level.
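As an illustration of the evaluation protocol, the sketch below builds stratified 5-fold splits so that each fold keeps roughly the same class balance. The class split of the 99 videos used here is hypothetical, and the summary does not state whether the study stratified its folds, so treat both as assumptions.

```python
import random
from collections import defaultdict

def stratified_folds(labels, k=5, seed=0):
    """Assign sample indices to k folds, spreading each class evenly across folds."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    folds = [[] for _ in range(k)]
    offset = 0
    for idxs in by_class.values():
        rng.shuffle(idxs)
        for j, i in enumerate(idxs):
            # Continue dealing from where the previous class stopped,
            # so fold sizes stay balanced overall.
            folds[(offset + j) % k].append(i)
        offset += len(idxs)
    return folds

# Hypothetical binary labels for 99 videos (the real class split is not given here).
labels = [1] * 40 + [0] * 59
folds = stratified_folds(labels)
for fold_id, test_idx in enumerate(folds):
    held_out = set(test_idx)
    train_idx = [i for i in range(len(labels)) if i not in held_out]
    # A model would be fit on train_idx and scored on test_idx here.
    print(fold_id, len(train_idx), len(test_idx))
```

Each video appears in exactly one test fold, so pooling the held-out predictions yields one score per video for computing ROC curves.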

Implications and Future Work

The findings suggest that deep learning models, particularly those utilizing attention mechanisms, provide a reliable framework for assessing surgical skill from video data. This approach has potential applications in training, certification, and real-time surgical skill enhancement, offering a quantifiable and unbiased metric for skill evaluation.

Future work could focus on broadening the applicability of these methods across varying surgical procedures and environments, improving generalizability. Additionally, further analysis of attention maps could enable interpretable assessments, offering actionable insights into skill development.

Conclusion

Deep learning approaches, particularly those augmented with attention, advance video-based assessment of surgical skill. Their ability to process rich contextual information from intraoperative videos makes them a strong candidate for objective, routine skill evaluation in surgical practice. Further validation across diverse datasets is necessary to establish external validity and to tune algorithmic performance for practical application.