- The paper proposes a novel physically-based method that combines scene reconstruction and neural rendering to enable accurate indoor lighting editing.

- It introduces editable representations for both visible and invisible light sources by using specialized networks to predict parameters such as position, intensity, and orientation.

- Quantitative evaluations demonstrate high accuracy and flexibility in relighting tasks, supporting interactive scene editing and augmented reality applications.

Physically-Based Editing of Indoor Scene Lighting from a Single Image

The paper proposes a method for realistically editing indoor scene lighting from a single input image. It combines physically-based scene reconstruction with neural rendering to support accurate relighting, including edits to light sources, materials, and geometry.

Methodology

The method is built on two primary components: a comprehensive scene reconstruction and a neural rendering framework.

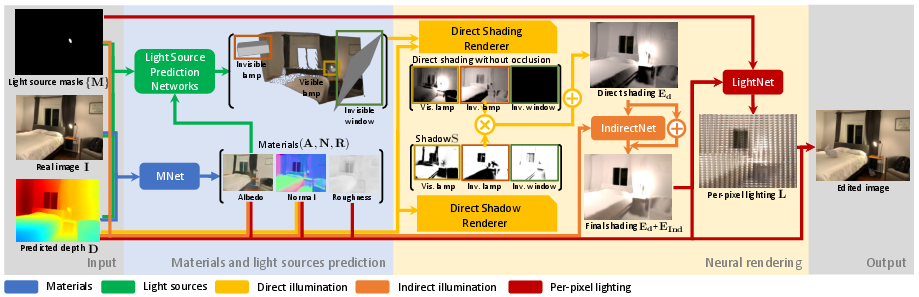

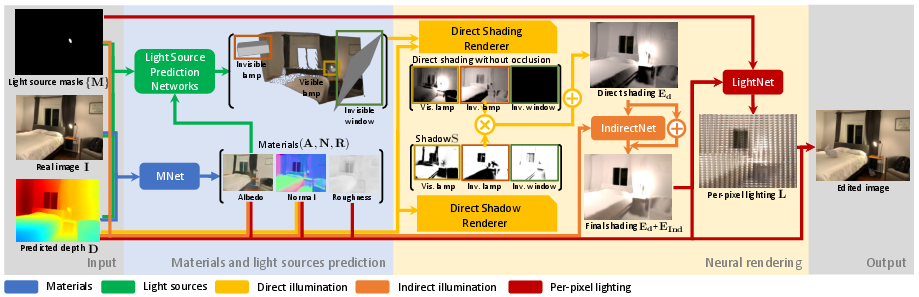

- Scene Reconstruction: The reconstruction process estimates scene reflectance and 3D parametric lighting. It predicts material parameters like albedo, normal, and roughness and separates visible from invisible light sources for intuitive editing. The light source modeling includes both ambient and directional components to capture complex light transport phenomena.

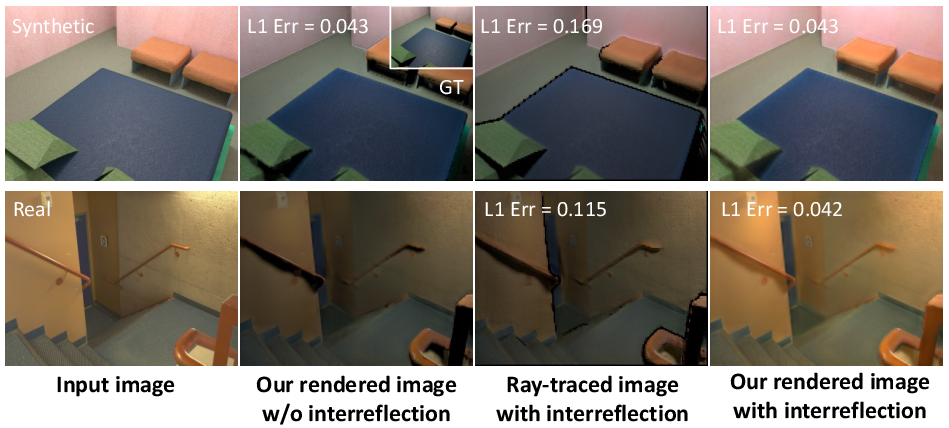

- Neural Rendering Framework: This framework combines classical rendering techniques with neural networks to handle direct and indirect illumination, including soft shadows and interreflections. The approach integrates Monte Carlo ray tracing with learned components to approximate global illumination efficiently.

Figure 1: Overview of the method from RGB input to edited scene rendering, utilizing neural rendering modules for direct and indirect shading.
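To make the direct-illumination part of the rendering step concrete, the following is a minimal sketch of a Monte Carlo estimate of diffuse shading from a rectangular area light (for example a ceiling lamp). It is not the paper's renderer: the function name, the rectangular light parameterization, and uniform area sampling are assumptions made for illustration. The sketch also ignores occlusion and interreflections, whereas the paper's framework accounts for soft shadows and indirect illumination.

```python
import numpy as np

def direct_diffuse_from_rect_light(point, normal, albedo, light_corner,
                                   edge_u, edge_v, radiance,
                                   n_samples=256, rng=None):
    """Monte Carlo estimate of outgoing radiance at a diffuse surface point
    lit by a one-sided rectangular emitter (hypothetical helper, no shadows).

    point, normal        : (3,) shading position and unit surface normal
    albedo, radiance     : (3,) RGB diffuse albedo and emitted radiance
    light_corner, edge_* : (3,) rectangle corner and its two edge vectors;
                           the emitter faces along cross(edge_u, edge_v)
    """
    rng = np.random.default_rng(0) if rng is None else rng

    # Rectangle area and emitting direction from the edge vectors.
    light_normal = np.cross(edge_u, edge_v)
    area = np.linalg.norm(light_normal)
    light_normal = light_normal / area

    # Uniformly sample positions on the rectangle (pdf = 1 / area).
    uv = rng.random((n_samples, 2))
    samples = light_corner + uv[:, :1] * edge_u + uv[:, 1:] * edge_v

    # Unit directions from the shading point to each light sample.
    to_light = samples - point
    dist2 = np.sum(to_light ** 2, axis=1)
    w = to_light / np.sqrt(dist2)[:, None]

    # Geometry term: cosines at the surface and at the emitter over r^2.
    cos_surface = np.clip(w @ normal, 0.0, None)
    cos_light = np.clip(-(w @ light_normal), 0.0, None)
    geom = cos_surface * cos_light / dist2

    # Lambertian BRDF (albedo / pi) times the area-sampled estimator.
    return (albedo / np.pi) * radiance * area * geom.mean()


# Example: a 1 m x 1 m lamp facing down, 2 m above a floor point.
shade = direct_diffuse_from_rect_light(
    point=np.zeros(3),
    normal=np.array([0.0, 0.0, 1.0]),
    albedo=np.array([0.8, 0.7, 0.6]),
    light_corner=np.array([-0.5, -0.5, 2.0]),
    edge_u=np.array([0.0, 1.0, 0.0]),
    edge_v=np.array([1.0, 0.0, 0.0]),
    radiance=np.array([10.0, 10.0, 10.0]),
)
```

Because every operation here is a smooth function of the light's position, size, and radiance, an estimator of this kind can in principle be differentiated with respect to those parameters, which is what makes editing and optimizing light sources through a renderer feasible.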

Light Source Representation and Prediction

The study introduces editable representations for indoor light sources, which are essential for effective relighting:

- Visible and Invisible Light Sources: Neural networks distinguish between light sources and model each one's geometry and radiance. For windows, a multi-lobe spherical Gaussian model represents the directional radiance produced by external sun lighting (Figure 2).

- Prediction Networks: There are specific networks for lamps and windows to estimate parameters like position, intensity, and orientation. These predictions are refined through rendering loss, ensuring that the predicted lighting aligns with the observed shading patterns in the image.
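The multi-lobe spherical Gaussian model mentioned above for windows can be sketched as follows. Each lobe uses the standard spherical Gaussian form, amplitude times exp(sharpness * (dot(v, axis) - 1)), and the lobes are summed. The function name, the number of lobes, and all numeric values below are illustrative assumptions, not parameters taken from the paper.

```python
import numpy as np

def eval_spherical_gaussians(directions, axes, sharpness, amplitudes):
    """Evaluate a sum of spherical Gaussian lobes (hypothetical helper).

    directions : (N, 3) query directions (normalized internally)
    axes       : (K, 3) lobe axes (normalized internally)
    sharpness  : (K,)   lobe sharpness; larger means a narrower lobe
    amplitudes : (K, 3) RGB radiance at each lobe's peak
    returns    : (N, 3) RGB radiance along each query direction
    """
    directions = directions / np.linalg.norm(directions, axis=-1, keepdims=True)
    axes = axes / np.linalg.norm(axes, axis=-1, keepdims=True)

    # Cosine between every query direction and every lobe axis: (N, K).
    cos = directions @ axes.T

    # Each lobe peaks at 1 along its axis and decays away from it.
    weights = np.exp(sharpness[None, :] * (cos - 1.0))

    # Weighted sum of lobe amplitudes: (N, K) @ (K, 3) -> (N, 3).
    return weights @ amplitudes


# Illustrative window lighting: one sharp bright lobe plus two broad lobes.
axes = np.array([[0.3, -0.5, 0.8], [0.0, 0.0, 1.0], [0.0, 0.0, -1.0]])
sharpness = np.array([200.0, 2.0, 2.0])
amplitudes = np.array([[50.0, 45.0, 40.0], [1.0, 1.2, 1.5], [0.3, 0.3, 0.25]])

query = np.array([[0.3, -0.5, 0.8], [1.0, 0.0, 0.0]])
window_radiance = eval_spherical_gaussians(query, axes, sharpness, amplitudes)
```

A compact parameterization like this keeps the window's lighting editable: changing a lobe's axis moves the dominant incoming direction, and changing its amplitude or sharpness adjusts intensity and softness.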

Neural Rendering and Differentiable Modules

The neural renderer is designed for both efficiency and quality.

The method's capabilities are demonstrated through quantitative evaluations against state-of-the-art methods on scene relighting tasks:

- Accuracy: It achieves high fidelity in predicting scene light parameters, allowing realistic object insertion and scene editing.

- Flexibility: The framework supports a variety of editing applications such as turning on fictional light sources and changing object materials with consistent global interaction effects (Figure 5).

The effectiveness is underscored by low errors in light prediction metrics and superior qualitative results compared with existing solutions.

Conclusion

The paper presents a significant advance in relighting scenes from limited input data. By combining physically-based reconstruction with neural rendering, the approach opens up new possibilities for interactive scene editing and augmented reality applications. Future work may extend the method to multi-view scenarios, improving robustness and versatility.

The public release of the code makes these techniques broadly applicable and extensible in practical applications.