- The paper demonstrates a novel integration of SCNet-50-V1D with ELM, reducing the pose estimation error from an MSE of 4.12 (SCNet-50-V1D alone) to 3.74.

- The framework employs LSTM and GRU-based RNNs to predict robotic arm movements, with GRU showing slightly superior performance on short-term trajectories.

- The study provides actionable insights for enhancing safety in human-robot collaboration through accurate movement forecasting and risk mitigation.

A Framework for Robotic Arm Pose Estimation and Movement Prediction

Introduction

The paper "A framework for robotic arm pose estimation and movement prediction based on deep and extreme learning models" develops a system aimed at enhancing safety and efficiency in human-robot collaboration (HRC). It combines a Deep Learning (DL) model built on Self-Calibrated Convolutions with an Extreme Learning Machine (ELM) to detect robotic arm keypoints. It then uses recurrent networks to predict the arm's future movements.

Framework Overview

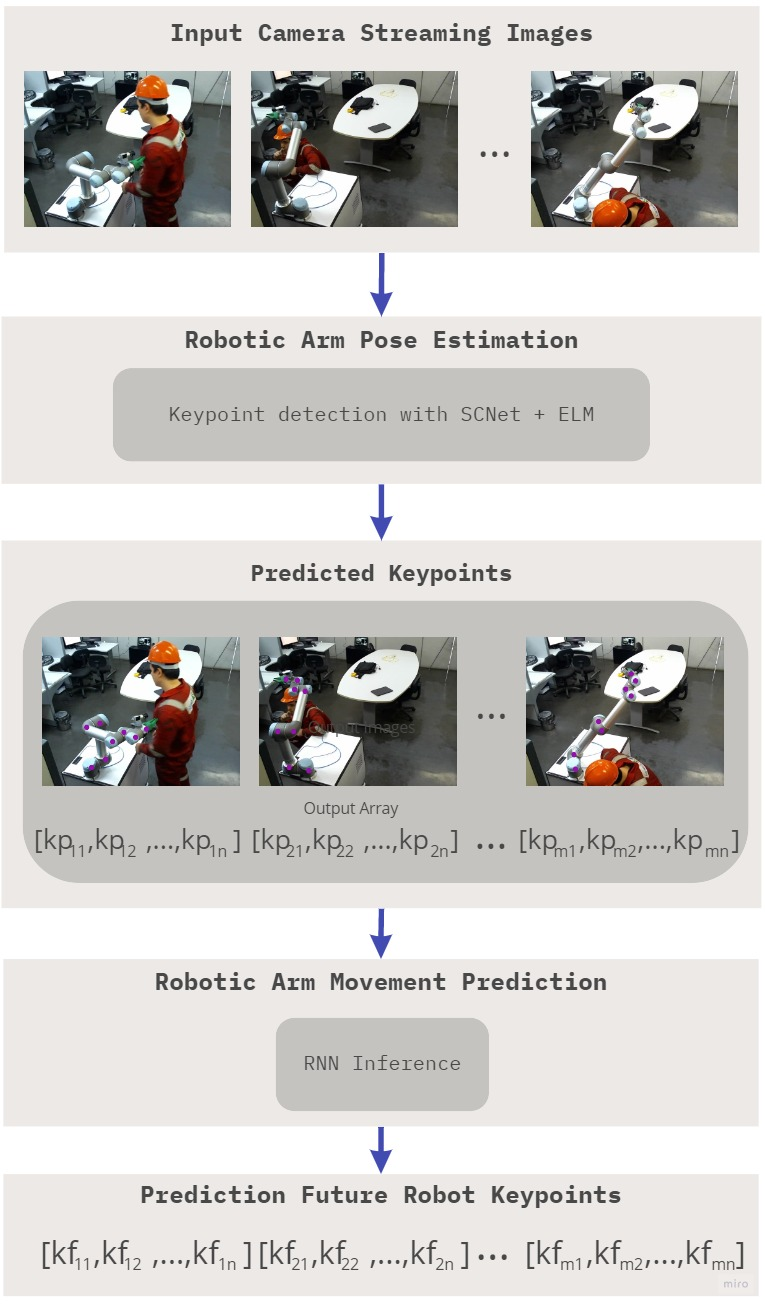

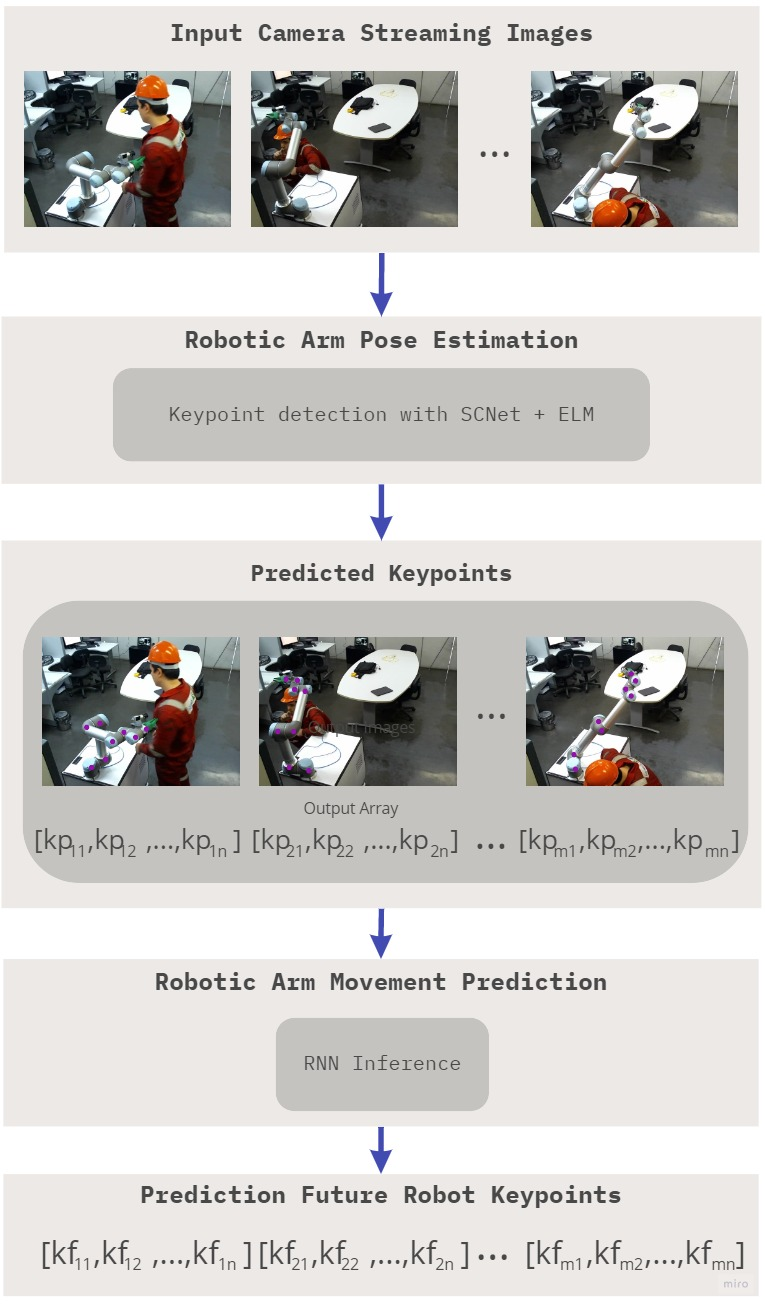

The proposed framework consists of two main components: robotic arm pose estimation and movement prediction.

Robotic arm pose estimation uses the SCNet-50-V1D model, whose self-calibrated convolutions (SCConvs) enrich the feature representations used for keypoint regression. An ELM network is then added to improve detection accuracy. According to the paper, this reduces the pose estimation error and outperforms the state-of-the-art models it was compared against.

Figure 1: Framework for robotic arm pose estimation and prediction of future movement.

Robotic arm movement prediction is achieved using Recurrent Neural Networks (RNNs), specifically LSTM and GRU architectures. This module encodes past movements to forecast future trajectories, helping to anticipate and mitigate potential risks in HRC environments.

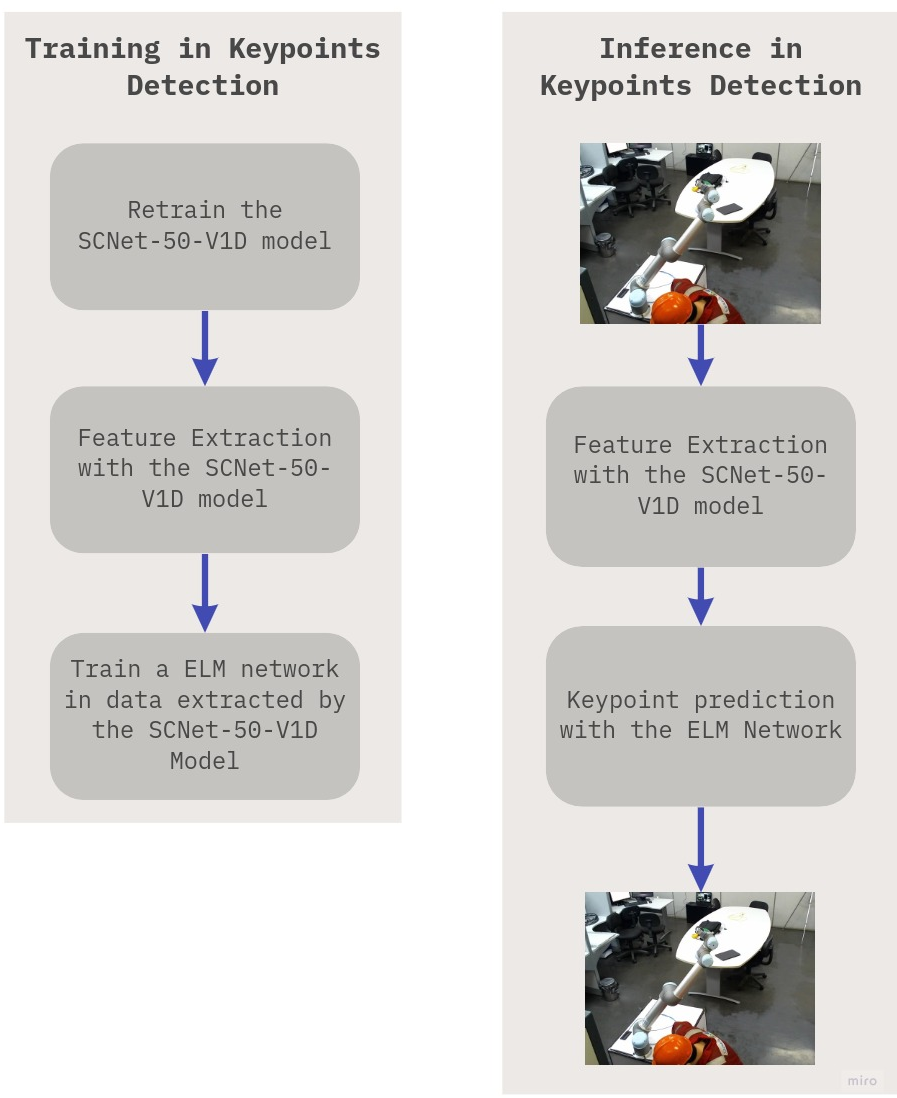

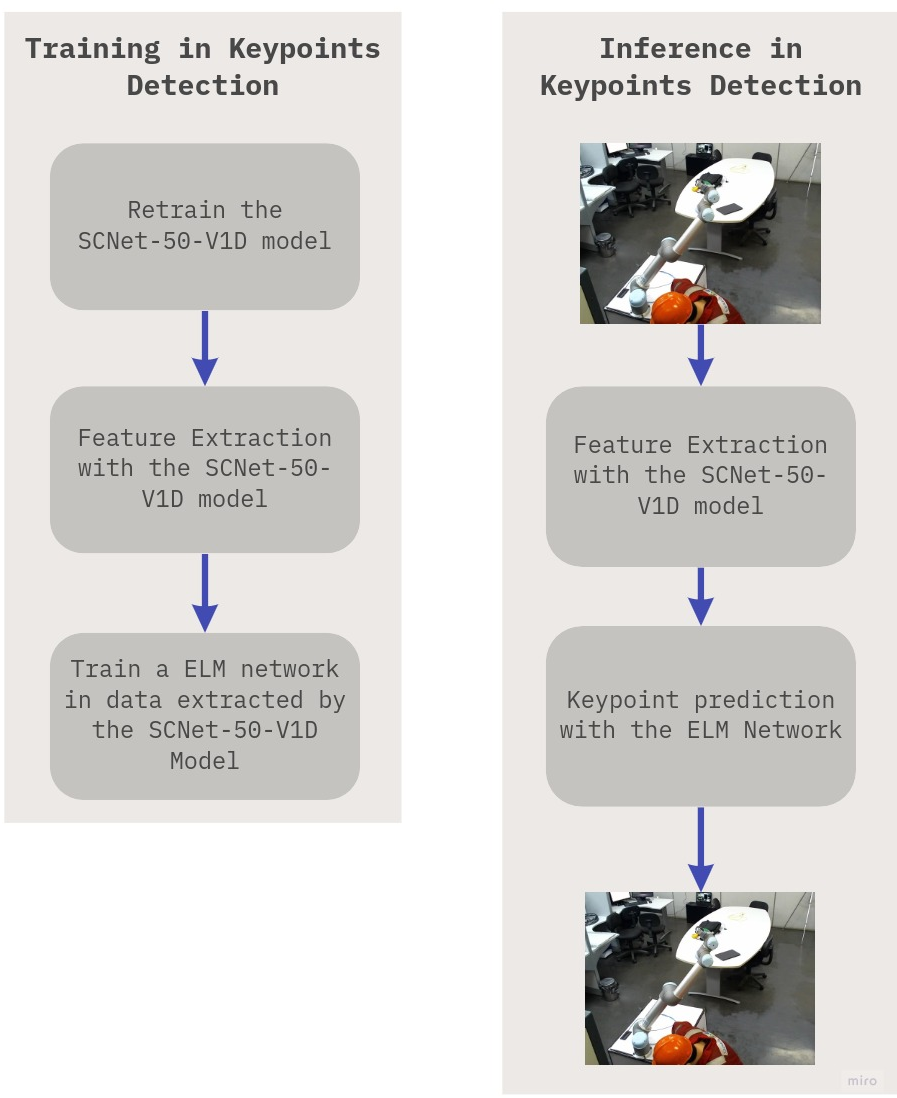

Figure 2: Module for robotic arm pose estimation.

Pose Estimation Implementation

Pose estimation is performed by fine-tuning SCNet-50-V1D on a custom dataset labelled with robot keypoints. The network is pretrained on ImageNet. It is adapted to this regression task by replacing its final fully connected (classification) layer with a layer that has a linear activation and regresses $16$ joint coordinates $(x, y)$.
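The following minimal NumPy sketch makes the output layout concrete by implementing a linear regression head. It rests on several assumptions:

- The backbone yields a pooled feature vector of size 2048. This is typical of ResNet-50-style networks but is not confirmed for this paper.
- "$16$ joint coordinates" means 16 keypoints, each with an (x, y) pair, for 32 outputs in total.
- The outputs use an interleaved (x1, y1, x2, y2, ...) ordering.

`regression_head` and `keypoint_mse` are hypothetical names. If the 16 counts individual coordinates rather than keypoints, set `N_JOINTS = 8`.

```python
import numpy as np

N_JOINTS = 16    # assumption: 16 keypoints, each with an (x, y) pair
FEAT_DIM = 2048  # assumption: ResNet-50-style pooled feature size


def regression_head(features, W, b):
    """Linear (identity-activation) layer: (N, FEAT_DIM) -> (N, N_JOINTS, 2)."""
    out = features @ W + b  # (N, 2 * N_JOINTS); no squashing non-linearity
    # Assumed interleaved layout: x1, y1, x2, y2, ...
    return out.reshape(-1, N_JOINTS, 2)


def keypoint_mse(pred, target):
    """Mean squared error averaged over every coordinate of every keypoint."""
    return float(np.mean((pred - target) ** 2))


# Example with random weights standing in for trained ones.
rng = np.random.default_rng(0)
W = rng.normal(0.0, 0.01, (FEAT_DIM, 2 * N_JOINTS))
b = np.zeros(2 * N_JOINTS)
coords = regression_head(rng.standard_normal((4, FEAT_DIM)), W, b)
print(coords.shape)  # (4, 16, 2)
```

The identity activation matters here. A sigmoid or softmax head would squash the outputs into a fixed range, whereas a linear head can emit any pixel coordinate.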

SCNet-50-V1D is then combined with an ELM, a configuration denoted SCNet-50-V1D+ELM. ELM networks with several activation functions are compared. RBF-L2 and Linear activations yield the lowest mean squared error (MSE), i.e. the most precise pose estimates.
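The sketch below illustrates how an ELM regressor works. It assumes the ELM is fitted on fixed feature vectors taken from the fine-tuned backbone.

- **Random hidden layer:** the hidden weights and RBF centres are drawn at random and never trained.
- **Closed-form output weights:** only the output weights are fitted, by ridge-regularised least squares, $\beta = (H^\top H + \lambda I)^{-1} H^\top T$.
- **Mixed neuron types:** the hidden layer combines linear and RBF-L2 neurons (a Gaussian on squared Euclidean distance). This mirrors how ELM toolboxes such as hpelm let neuron types be combined.

Several details are assumptions rather than the paper's configuration: whether the paper mixes the two neuron types or tests them separately, the layer sizes, the RBF width and $\lambda$. `TinyELM` is a hypothetical class.

```python
import numpy as np


class TinyELM:
    """Minimal ELM regressor: random hidden layer, closed-form output weights.

    Hidden layer = `n_lin` linear neurons + `n_rbf` Gaussian RBF neurons using
    squared L2 distance to randomly chosen training points as centres.
    All sizes and defaults are illustrative assumptions.
    """

    def __init__(self, n_lin=32, n_rbf=64, ridge=1e-3, seed=0):
        self.n_lin, self.n_rbf, self.ridge = n_lin, n_rbf, ridge
        self.rng = np.random.default_rng(seed)

    def _hidden(self, X):
        lin = X @ self.W_lin  # linear neurons: fixed random projections
        # RBF-L2 neurons: Gaussian of the squared Euclidean distance to each centre
        d2 = ((X[:, None, :] - self.centres[None, :, :]) ** 2).sum(axis=-1)
        rbf = np.exp(-d2 / self.width)
        return np.hstack([lin, rbf])

    def fit(self, X, T):
        d = X.shape[1]
        # Hidden parameters are random and stay fixed; they are never trained.
        self.W_lin = self.rng.standard_normal((d, self.n_lin)) / np.sqrt(d)
        idx = self.rng.choice(len(X), size=self.n_rbf, replace=False)
        self.centres = X[idx]
        self.width = float(d)  # heuristic RBF width (assumption)
        H = self._hidden(X)
        # Ridge least squares: beta = (H^T H + lambda I)^-1 H^T T
        A = H.T @ H + self.ridge * np.eye(H.shape[1])
        self.beta = np.linalg.solve(A, H.T @ T)
        return self

    def predict(self, X):
        return self._hidden(X) @ self.beta
```

Training reduces to a single linear solve. Fitting the ELM head on top of a DL model is therefore much cheaper than another round of backpropagation through the backbone.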

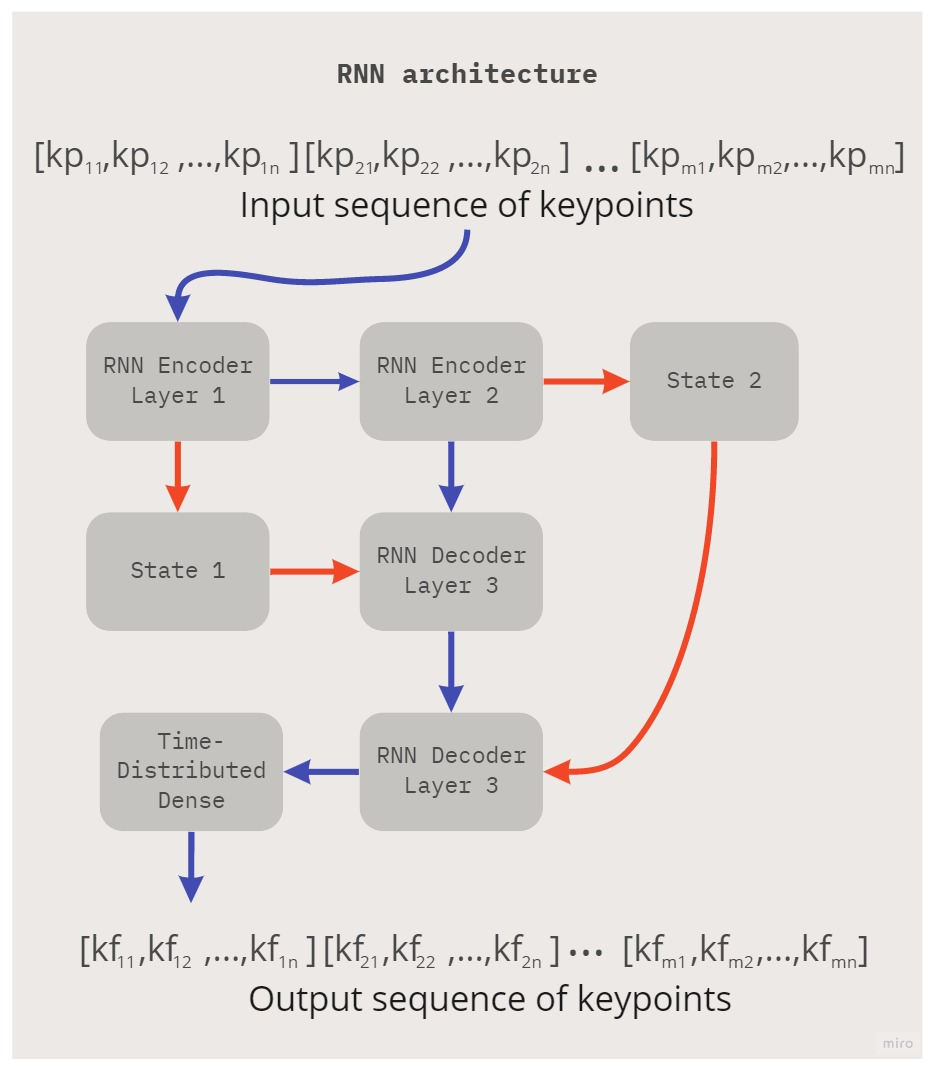

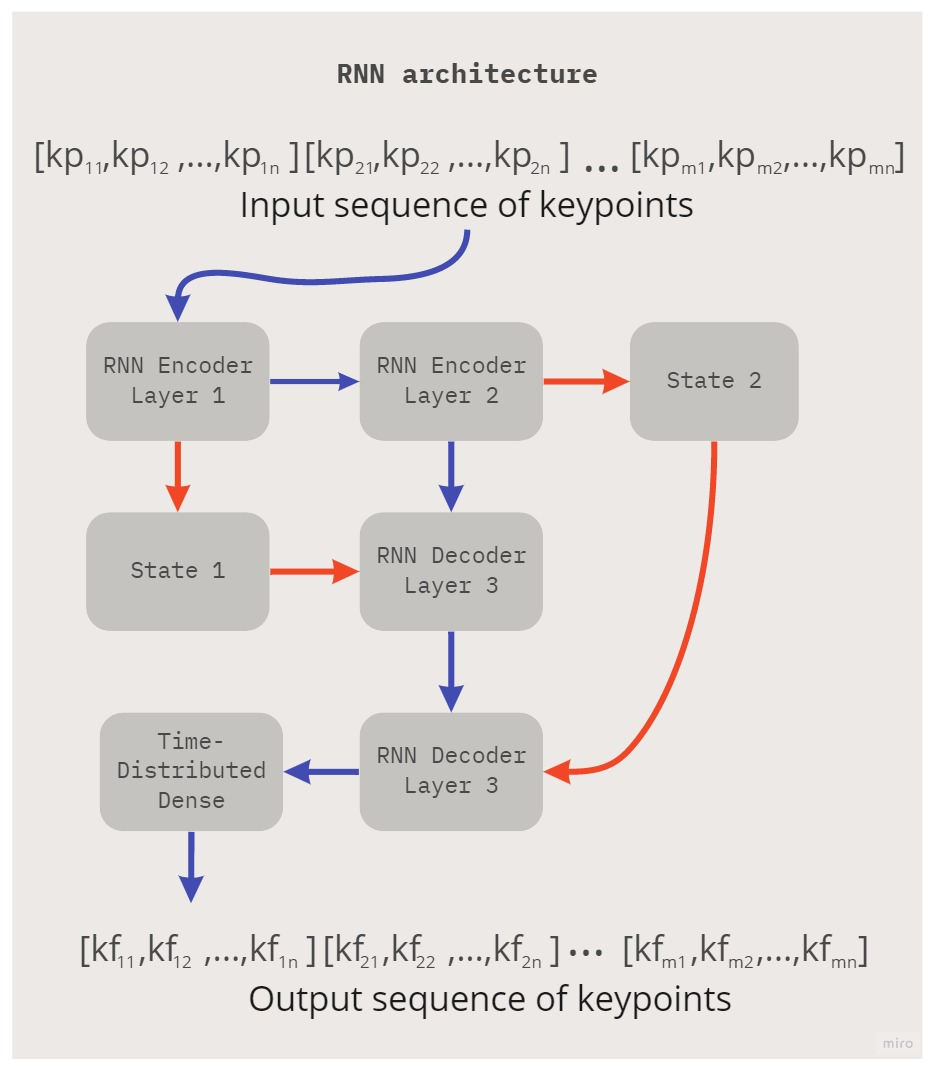

Movement Prediction Implementation

The prediction module uses double-stacked (two-layer) RNNs, either LSTM or GRU, arranged in an encoder-decoder architecture. The encoder processes the window of past poses, and the decoder generates the future ones. The gating mechanisms of LSTM and GRU cells help them retain long-term dependencies, which matters for accurately predicting the arm's future states.
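The following forward-pass sketch implements a double-stacked GRU encoder-decoder in plain NumPy so the data flow is visible. It makes three assumptions:

- The encoder's final hidden states (one per layer) are copied into the decoder as its initial state.
- The decoder feeds each predicted pose back in as its next input.
- A linear read-out maps the top hidden state to a pose vector.

These choices, the hidden size, and the class names `GRUCell` and `Seq2SeqGRU` are hypothetical. The weights are random, and training (backpropagation through time) is omitted. An LSTM variant would replace the cell and carry a (hidden, cell) state pair per layer.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


class GRUCell:
    """One GRU layer with random weights (forward pass only, no training)."""

    def __init__(self, n_in, n_hid, rng):
        s = 1.0 / np.sqrt(n_hid)
        # Index 0: update gate z, 1: reset gate r, 2: candidate state n.
        self.W = rng.uniform(-s, s, (3, n_in, n_hid))   # input weights
        self.U = rng.uniform(-s, s, (3, n_hid, n_hid))  # recurrent weights
        self.b = np.zeros((3, n_hid))

    def step(self, x, h):
        z = sigmoid(x @ self.W[0] + h @ self.U[0] + self.b[0])
        r = sigmoid(x @ self.W[1] + h @ self.U[1] + self.b[1])
        n = np.tanh(x @ self.W[2] + (r * h) @ self.U[2] + self.b[2])
        return (1.0 - z) * n + z * h  # blend candidate with previous state


class Seq2SeqGRU:
    """Two-layer GRU encoder + two-layer GRU decoder (hypothetical sketch)."""

    def __init__(self, n_feat, n_hid=64, seed=0):
        rng = np.random.default_rng(seed)
        self.enc = [GRUCell(n_feat, n_hid, rng), GRUCell(n_hid, n_hid, rng)]
        self.dec = [GRUCell(n_feat, n_hid, rng), GRUCell(n_hid, n_hid, rng)]
        self.W_out = rng.uniform(-0.1, 0.1, (n_hid, n_feat))  # linear read-out
        self.n_hid = n_hid

    @staticmethod
    def _stack_step(layers, x, hs):
        new_hs = []
        for layer, h in zip(layers, hs):
            x = layer.step(x, h)  # output of layer k is the input of layer k+1
            new_hs.append(x)
        return new_hs

    def predict(self, past, future):
        """past: (P, n_feat) window of poses -> (future, n_feat) forecast."""
        hs = [np.zeros(self.n_hid), np.zeros(self.n_hid)]
        for x in past:  # encoder: consume the past window
            hs = self._stack_step(self.enc, x, hs)
        # Decoder starts from the encoder's final states (the "copy" step).
        x, outputs = past[-1], []
        for _ in range(future):
            hs = self._stack_step(self.dec, x, hs)
            x = hs[-1] @ self.W_out  # predicted next pose, fed back as input
            outputs.append(x)
        return np.stack(outputs)
```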

The input sequences are the poses that SCNet-50-V1D+ELM estimates on a high-frame-rate dataset. A grid search over the sizes of the past (input) and future (output) windows shows that GRU slightly outperforms LSTM. This is likely because GRU cells have fewer gates and parameters, which suits smaller datasets.
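The grid search over window sizes can be sketched with three hypothetical helpers:

- `make_windows` slices a sequence of estimated poses into (past, future) pairs.
- `grid_search_windows` scores each window-size combination.
- `persistence_mse` predicts that the arm stays at its last observed pose. It is only a stand-in for training and validating an LSTM or GRU at each grid point.

```python
import numpy as np


def make_windows(poses, past, future):
    """Slice a (T, D) pose sequence into (past, future) training pairs.

    Returns X of shape (N, past, D) and Y of shape (N, future, D),
    where N = T - past - future + 1.
    """
    n = len(poses) - past - future + 1
    if n <= 0:
        raise ValueError("sequence too short for the requested windows")
    X = np.stack([poses[i : i + past] for i in range(n)])
    Y = np.stack([poses[i + past : i + past + future] for i in range(n)])
    return X, Y


def grid_search_windows(poses, pasts, futures, score_fn):
    """Evaluate every (past, future) pair; return the lowest-scoring one."""
    results = {}
    for p in pasts:
        for f in futures:
            X, Y = make_windows(poses, p, f)
            results[(p, f)] = score_fn(X, Y)  # e.g. validation MSE
    best = min(results, key=results.get)
    return best, results


def persistence_mse(X, Y):
    """Stand-in scorer: repeat the last past pose for every future step."""
    pred = np.repeat(X[:, -1:, :], Y.shape[1], axis=1)
    return float(np.mean((pred - Y) ** 2))
```

In a real run the score should come from a held-out split. Scoring on the training windows would favour whichever configuration overfits most.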

Figure 3: Detailed view of the module for robotic arm movement prediction. Orange arrows refer to copy operations, and blue arrows refer to the normal feedforward process of the neural network.

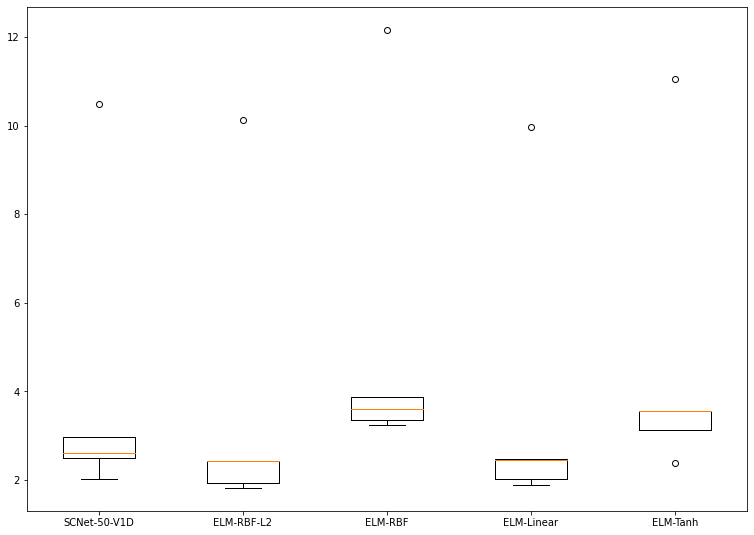

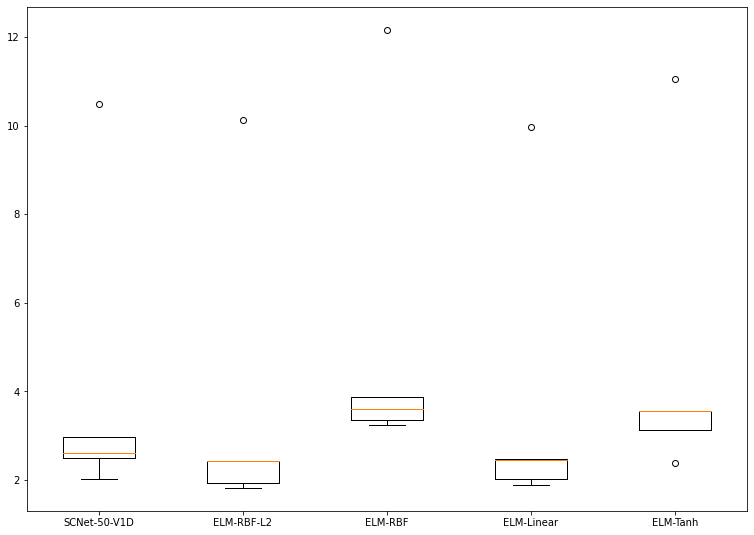

Quantitative Results

The paper reports a lower pose estimation error for SCNet-50-V1D+ELM, with an MSE of 3.74, than for SCNet-50-V1D alone, which reaches an MSE of 4.12. This supports incorporating an ELM into DL models for pose estimation tasks.
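To gauge the size of the gain, the relative reduction can be computed from the two reported MSE values. This is simple arithmetic, not a figure stated in the paper:

$$
\frac{\mathrm{MSE}_{\text{SCNet-50-V1D}} - \mathrm{MSE}_{\text{SCNet-50-V1D+ELM}}}{\mathrm{MSE}_{\text{SCNet-50-V1D}}} = \frac{4.12 - 3.74}{4.12} = \frac{0.38}{4.12} \approx 0.092
$$

That is, adding the ELM lowers the MSE by roughly 9%.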

For movement prediction, GRU models performed best when the future window was relatively short, and accuracy declined as the future window grew. The authors report low latency and high accuracy, suggesting the framework could be deployed in practice to reduce risks in HRC setups.

Figure 4: Boxplot representation for MSE results reached by SCNet-50-V1D with and without ELM network.

Conclusion

The paper presents a framework that combines self-calibrated convolutions, extreme learning machines, and recurrent networks to address safety challenges in human-robot collaboration. With these models, the system detects robot keypoints and predicts robot movements with low error.

Future work could integrate human pose estimation to enable holistic risk assessment in collaborative robot environments. The models could also be refined in more complex settings with diverse interactions, moving towards a robust collision detection and avoidance system for multifaceted HRC scenarios.