A Theoretical Framework for Inference Learning

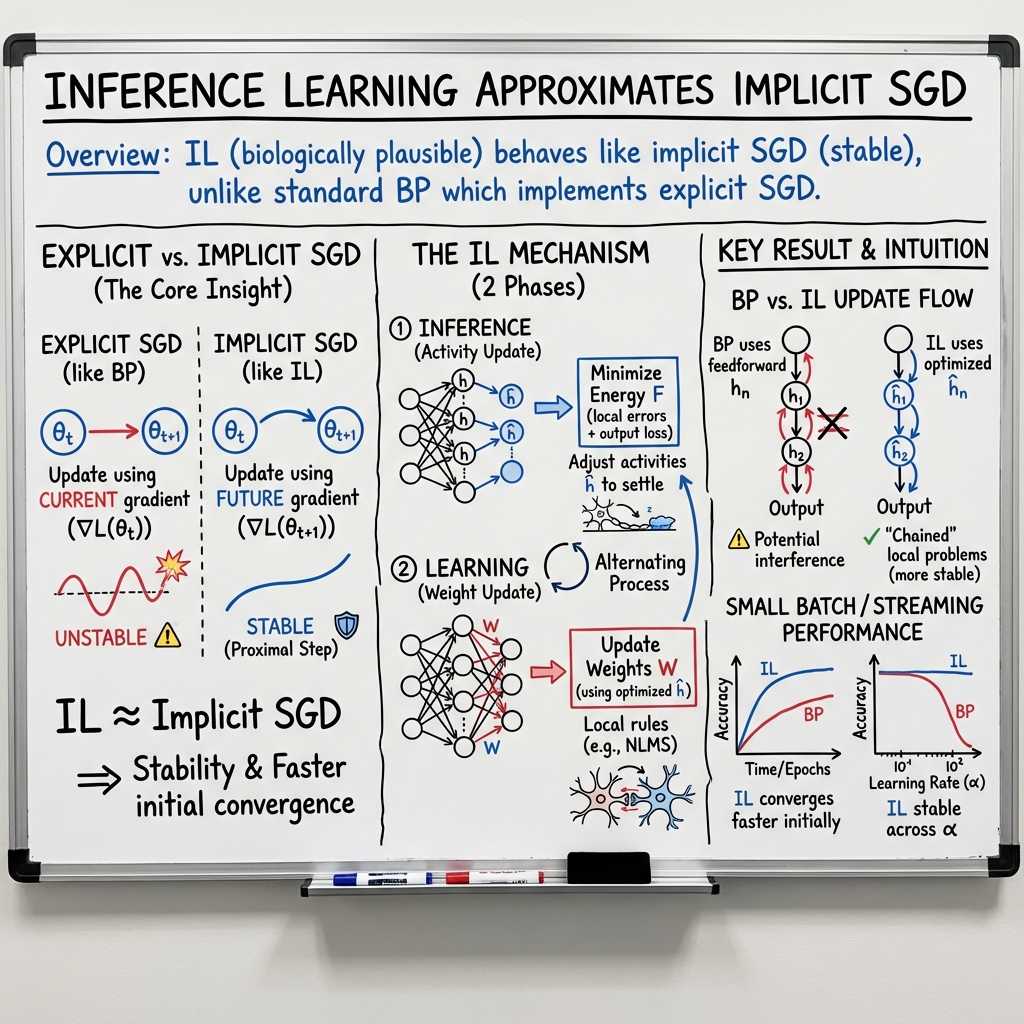

Abstract: Backpropagation (BP) is the most successful and widely used algorithm in deep learning. However, the computations required by BP are challenging to reconcile with known neurobiology. This difficulty has stimulated interest in more biologically plausible alternatives to BP. One such algorithm is the inference learning algorithm (IL). IL has close connections to neurobiological models of cortical function and has achieved equal performance to BP on supervised learning and auto-associative tasks. In contrast to BP, however, the mathematical foundations of IL are not well-understood. Here, we develop a novel theoretical framework for IL. Our main result is that IL closely approximates an optimization method known as implicit stochastic gradient descent (implicit SGD), which is distinct from the explicit SGD implemented by BP. Our results further show how the standard implementation of IL can be altered to better approximate implicit SGD. Our novel implementation considerably improves the stability of IL across learning rates, which is consistent with our theory, as a key property of implicit SGD is its stability. We provide extensive simulation results that further support our theoretical interpretations and also demonstrate IL achieves quicker convergence when trained with small mini-batches while matching the performance of BP for large mini-batches.

Explain it Like I'm 14

What is this paper about?

This paper studies a learning method for neural networks called inference learning (IL) and gives a mathematical account of how it works. The goal is to show that IL is not just “brain-like,” but also a proper optimization method. The big idea is that IL closely approximates a technique called implicit stochastic gradient descent (implicit SGD), which tends to be more stable than the explicit SGD carried out by backpropagation (BP), the usual method in deep learning.

What questions did the researchers ask?

The paper asks simple but important questions:

- How does inference learning (IL) actually optimize a neural network?

- How is IL different from backpropagation (BP), the usual method in deep learning?

- Can we describe IL with math that shows it’s a reliable and effective way to learn?

- Does IL have advantages, like being more stable or faster in certain situations?

How did they study it?

The authors developed a clear, general version of IL (they call it Generalized IL, or G-IL) and compared it to backpropagation in both theory and experiments.

Backpropagation vs. Inference Learning

- Backpropagation (BP) computes error gradients (how much each weight should change) and then updates the network’s weights by taking a step in the direction that reduces the error. It’s very effective but hard to match with how real brains might work, because it uses information that isn’t “local” to each connection.

- Inference Learning (IL) first adjusts the “activities” (the values of neurons) to reduce a measure called free energy. Think of free energy as a score that says how well the network’s current activities and predictions match the target. After these activities settle to good values, IL updates the weights using only local information (what’s happening between the connected neurons), which is more brain-like.
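The two phases of IL described above (settle the activities, then apply a local weight update) can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: it assumes a predictive-coding-style free energy equal to half the sum of squared layer-wise prediction errors, a `tanh` activation, and plain gradient descent on the hidden activities. All function names and hyperparameters (`il_step`, `n_infer`, `gamma`, `lr`) are hypothetical.

```python
import numpy as np

def f(h):
    return np.tanh(h)

def df(h):
    return 1.0 - np.tanh(h) ** 2

def prediction_errors(hs, Ws):
    # errs[l] is the local error at layer l+1: its activity minus the
    # prediction computed from layer l.
    return [hs[l + 1] - Ws[l] @ f(hs[l]) for l in range(len(Ws))]

def free_energy(hs, Ws):
    # Assumed form: half the sum of squared prediction errors over all layers.
    return 0.5 * sum(float(e @ e) for e in prediction_errors(hs, Ws))

def il_step(x, y, Ws, n_infer=20, gamma=0.1, lr=0.01):
    # Initialise activities with a feedforward pass, then clamp the output to the target.
    hs = [x]
    for W in Ws:
        hs.append(W @ f(hs[-1]))
    hs[-1] = y.copy()
    f_before = free_energy(hs, Ws)

    # Phase 1 (inference): gradient descent on the hidden activities only.
    for _ in range(n_infer):
        errs = prediction_errors(hs, Ws)
        for l in range(1, len(hs) - 1):
            grad = errs[l - 1] - df(hs[l]) * (Ws[l].T @ errs[l])
            hs[l] = hs[l] - gamma * grad
    f_after = free_energy(hs, Ws)

    # Phase 2 (learning): each weight matrix uses only its own layer's error
    # and the presynaptic activity.
    errs = prediction_errors(hs, Ws)
    new_Ws = [W + lr * np.outer(errs[l], f(hs[l])) for l, W in enumerate(Ws)]
    return new_Ws, f_before, f_after

rng = np.random.default_rng(0)
Ws = [0.1 * rng.standard_normal((8, 4)), 0.1 * rng.standard_normal((3, 8))]
x = rng.standard_normal(4)
y = np.array([1.0, 0.0, 0.0])
new_Ws, f_before, f_after = il_step(x, y, Ws)
print(f_before, f_after)  # free energy before and after the inference phase
```

Note that the weight update for each layer reads only the error and activity on either side of that connection, which is the sense in which the rule is "local".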

Explicit vs. Implicit SGD (simple analogy)

- Explicit SGD (used by BP) is like saying, “Given where I am now, I’ll step downhill using the current slope.”

- Implicit SGD is like saying, “I’ll choose my next step so that, after I move, the new slope looks good and I haven’t jumped too far.” It balances improving the loss and not changing too much at once. This usually makes learning more stable.

The “proximal update” is the math way to do implicit SGD: it tries to reduce the loss while keeping the weight changes small.
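For readers who want the formulas, the three updates can be written side by side. The notation is assumed here for illustration and does not come from the summary: \theta_t denotes the weights at step t, \alpha the learning rate, and \mathcal{L} the loss on the current mini-batch.

```latex
\begin{aligned}
\text{explicit SGD:}\quad & \theta_{t+1} = \theta_t - \alpha \nabla_\theta \mathcal{L}(\theta_t) \\
\text{implicit SGD:}\quad & \theta_{t+1} = \theta_t - \alpha \nabla_\theta \mathcal{L}(\theta_{t+1}) \\
\text{proximal form:}\quad & \theta_{t+1} = \arg\min_{\theta} \Big[ \mathcal{L}(\theta) + \tfrac{1}{2\alpha} \lVert \theta - \theta_t \rVert^2 \Big]
\end{aligned}
```

Setting the gradient of the proximal objective to zero gives \nabla_\theta \mathcal{L}(\theta) + (\theta - \theta_t)/\alpha = 0, which rearranges to the implicit SGD line. The only difference from explicit SGD is where the gradient is evaluated: at the new weights rather than the current ones.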

Their new version: Generalized IL and IL-prox

- The authors describe a general IL method (G-IL): first tune neuron activities to reduce free energy, then update weights using local prediction errors.

- They introduce IL-prox, a variant that makes IL match implicit SGD even more closely by using a normalized weight update, the normalized least mean squares (NLMS) rule. In plain terms, this update automatically scales the learning step based on the size of the input signal, which helps stability.
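To see why an NLMS-style normalization relates to the proximal update, consider the simplest possible case: one linear unit trained on one example with squared error. The sketch below is an assumption-laden toy, not the paper's IL-prox (which applies to layers of a network); the function names and the parameters `mu` and `eps` are hypothetical.

```python
import numpy as np

def lms_update(w, x, y, lr):
    # Plain (explicit) gradient step on 0.5 * (y - w @ x)**2.
    e = y - w @ x
    return w + lr * e * x

def nlms_update(w, x, y, mu=1.0, eps=1e-8):
    # Same direction, but the step is divided by the squared input norm.
    e = y - w @ x
    return w + mu * e * x / (eps + x @ x)

def proximal_update(w, x, y, lr):
    # Closed-form minimiser of 0.5 * (y - v @ x)**2 + ||v - w||**2 / (2 * lr).
    e = y - w @ x
    return w + (lr / (1.0 + lr * (x @ x))) * e * x

w = np.zeros(3)
x = np.array([1.0, 2.0, 2.0])
y = 3.0
print(lms_update(w, x, y, lr=0.5))       # step grows with the size of x
print(nlms_update(w, x, y))              # step is normalised by ||x||^2
print(proximal_update(w, x, y, lr=1e6))  # approaches the NLMS step as lr grows
```

In this toy setting the proximal step has the same form as NLMS with an effective step size of `lr * ||x||^2 / (1 + lr * ||x||^2)`, which stays below 1 for any learning rate. That is one way to see where the stability comes from.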

What did they find?

Here are the main findings, explained simply:

- IL closely matches implicit SGD: They prove that IL (especially IL-prox) computes updates that are essentially the same as the proximal/implicit update, particularly when training on one data point at a time (mini-batch size 1).

- Better stability: IL is more stable across different learning rates (how big a step you take each time). This fits with what implicit SGD is known for: stable learning even when steps are large.

- Faster start with small batches: When training with tiny mini-batches (like 1 example at a time), IL improves faster in early training than BP, and can match BP’s performance later. This is useful because brains don’t train on big batches; they learn from streaming, single experiences.

- Comparable performance on standard tasks: With normal (large) mini-batches, IL reaches similar accuracy to BP on tasks like CIFAR-10 image classification and autoencoders, especially when using modern optimizers like Adam.

- A math bridge to other methods: They show IL’s activity updates connect to the Gauss-Newton method (an optimization technique that uses approximate curvature information to choose better downhill steps), further grounding IL in standard optimization theory.
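The stability point can be illustrated with a toy problem that is not taken from the paper's experiments: minimizing the one-dimensional quadratic loss 0.5 * a * w**2. Here the implicit update has a closed form, so both rules can be compared directly. The function names and the values of `a`, `lr`, and `steps` are assumptions chosen for illustration.

```python
def explicit_sgd(w0, a, lr, steps):
    # w <- w - lr * gradient at the current w, for loss 0.5 * a * w**2.
    w = w0
    for _ in range(steps):
        w = w - lr * a * w
    return w

def implicit_sgd(w0, a, lr, steps):
    # w_new = w - lr * a * w_new, solved exactly: w_new = w / (1 + lr * a).
    w = w0
    for _ in range(steps):
        w = w / (1.0 + lr * a)
    return w

for lr in (0.1, 1.0, 3.0):
    print(lr, explicit_sgd(1.0, 1.0, lr, 20), implicit_sgd(1.0, 1.0, lr, 20))
```

With `a = 1`, the explicit rule multiplies `w` by `(1 - lr)` each step, so it blows up once `lr` exceeds 2. The implicit rule multiplies `w` by `1 / (1 + lr)`, which shrinks `w` toward the minimum for every positive learning rate.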

Why does it matter?

This work suggests a way to build learning algorithms that are both brain-like and mathematically strong:

- Biological plausibility: IL uses local learning rules (each connection updates using nearby signals), which fits better with how synapses in the brain work.

- Stability: Because IL behaves like implicit SGD, it’s more robust when learning rates vary. In real brains, learning speed can change due to chemicals (neuromodulators), so stability is important.

- Real-world training: IL learns quickly with small mini-batches and streaming data, which is closer to how the brain learns from experience.

- Practical impact: IL could inspire new training methods for energy-efficient, brain-inspired hardware (neuromorphic chips) and lead to algorithms that are less fragile and easier to tune than standard BP.

In short, the paper provides a solid theoretical foundation for IL, shows it can be more stable and sometimes faster than BP in realistic setups, and hints that implicit SGD might be the “hidden” optimization style behind how biological brains learn.
