- The paper introduces an ARS-based reinforcement learning framework for optimizing mixed electric and human-driven platoon control at signalized intersections.

- It formulates the control problem as a delayed reward MDP that balances energy consumption and travel delay over complete intersection crossings.

- Simulation results in SUMO show energy savings of up to 82.51% while keeping travel delay in check.

Learning the Policy for Mixed Electric Platoon Control at Signalized Intersections

This paper presents a reinforcement learning framework tailored for controlling mixed electric platoons, which consist of both connected automated vehicles (CAVs) and human-driven vehicles (HDVs), at signalized intersections.

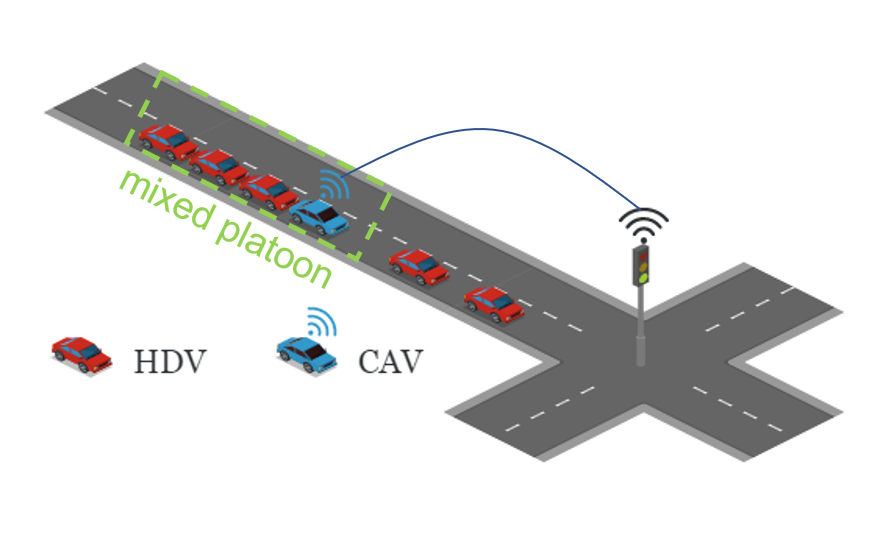

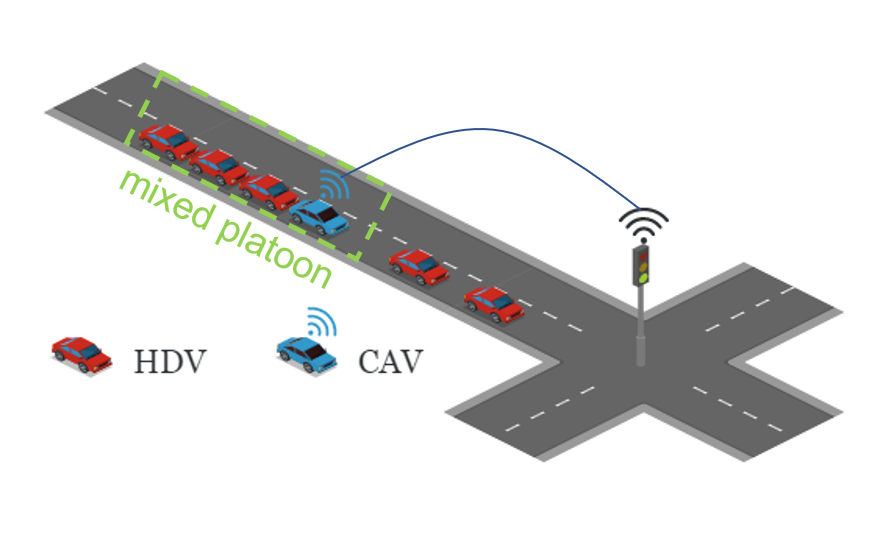

The study addresses the need to optimize the control of mixed traffic scenarios where CAVs can interact with HDVs at traffic bottlenecks such as intersections. The paper proposes a Markov Decision Process (MDP) to model the control strategy for a platoon led by a CAV.

Figure 1: The illustration of the studied scenario.

The research emphasizes the development of a control strategy that can enhance traffic flow and energy efficiency without impairing mobility, focusing specifically on intersections where traditional traffic signal control challenges exist.

Reinforcement Learning Framework

A distinctive aspect of this work is the formulation of the control problem as a delayed-reward MDP: the reward depends on the entire journey through an intersection rather than on stepwise progress. This lets the agent optimize over longer chains of action and effect, which is critical for vehicle platoons in mixed environments.

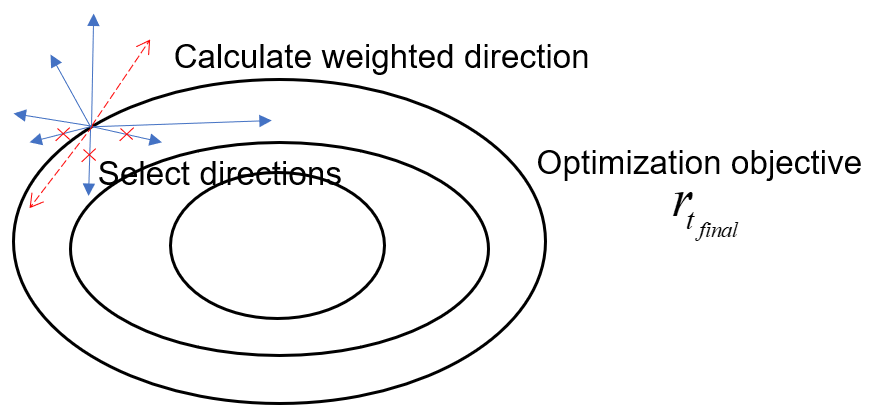

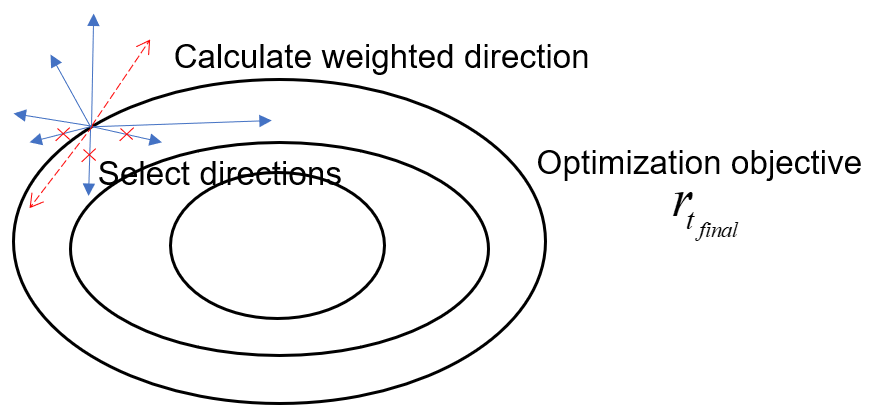

The framework employs Augmented Random Search (ARS), a reinforcement learning algorithm chosen for its robustness and sample efficiency, especially in handling the delayed rewards involved in real-world traffic conditions.

Figure 2: The sketch for the idea of ARS.
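To make the idea concrete, the following is a minimal sketch of a basic ARS update: sample random perturbation directions, evaluate the policy weights perturbed in both directions, keep the best-performing directions, and take a step scaled by the spread of the returns. It is an illustration under stated assumptions, not the paper's implementation. The function `toy_return`, the `TARGET` vector, and all hyperparameter values are hypothetical; `toy_return` stands in for a full SUMO episode that yields one scalar return at the end.

```python
import random
import statistics


def ars_step(theta, episode_return, rng, n_dirs=8, top_b=4,
             step_size=0.02, noise=0.03):
    """One basic ARS update on a flat list of policy weights (illustrative)."""
    trials = []
    for _ in range(n_dirs):
        # Random search direction with the same size as the weight vector.
        delta = [rng.gauss(0.0, 1.0) for _ in theta]
        r_plus = episode_return([t + noise * d for t, d in zip(theta, delta)])
        r_minus = episode_return([t - noise * d for t, d in zip(theta, delta)])
        trials.append((r_plus, r_minus, delta))

    # Keep the top_b directions, ranked by the better of their two returns.
    trials.sort(key=lambda t: max(t[0], t[1]), reverse=True)
    best = trials[:top_b]

    # Scale the step by the standard deviation of the returns actually used.
    sigma = statistics.pstdev([r for rp, rm, _ in best for r in (rp, rm)]) + 1e-8

    new_theta = list(theta)
    for r_plus, r_minus, delta in best:
        for i, d in enumerate(delta):
            new_theta[i] += step_size / (top_b * sigma) * (r_plus - r_minus) * d
    return new_theta


# Hypothetical stand-in for an episode: one scalar return, given only at the end.
TARGET = [1.0, -2.0, 0.5, 0.0, 1.5, -1.0]


def toy_return(theta):
    return -sum((t - g) ** 2 for t, g in zip(theta, TARGET))


rng = random.Random(0)
theta = [0.0] * len(TARGET)
initial = toy_return(theta)
for _ in range(300):
    theta = ars_step(theta, toy_return, rng)
final = toy_return(theta)
```

Because the update only needs one scalar return per perturbed policy, it does not matter whether that return is accumulated step by step or delivered once at the end of the episode, which is why this family of methods fits a delayed-reward formulation.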

Design Considerations

- State Representation: The state representation accounts for all vehicles in the platoon along with relevant intersection traffic signal data.

- Action Space: The agent sets the controlled vehicle's longitudinal acceleration, subject to dynamic constraints that ensure safety and compliance with traffic rules.

- Reward Function: The reward considers total energy consumption and travel delay, computed after the platoon crosses the intersection.
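The action and reward items above can be sketched in a few lines. This is a hedged illustration, not the paper's exact formulation: the acceleration bounds, the linear weighted-sum form of the reward, and the assignment of `w1` to energy and `w2` to delay are all assumptions (the summary names weights ω1 and ω2 but does not state which term each multiplies).

```python
def clip_acceleration(a, a_min=-3.0, a_max=2.0):
    """Clamp a commanded acceleration (m/s^2) to assumed vehicle limits."""
    return max(a_min, min(a_max, a))


def delayed_reward(step_energies, step_delays, crossed, w1=0.5, w2=0.5):
    """Return 0 until the platoon has crossed, then the negative weighted cost.

    step_energies: per-step energy use accumulated over the episode
    step_delays:   per-step delay accumulated over the episode
    crossed:       True once the whole platoon has cleared the intersection
    w1, w2:        assumed weights on energy and delay respectively
    """
    if not crossed:
        return 0.0
    return -(w1 * sum(step_energies) + w2 * sum(step_delays))
```

For example, `delayed_reward([0.5, 0.25], [1.0, 2.0], crossed=True, w1=2.0, w2=1.0)` evaluates to `-4.5`, while the same call with `crossed=False` gives `0.0`.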

Simulation and Evaluation

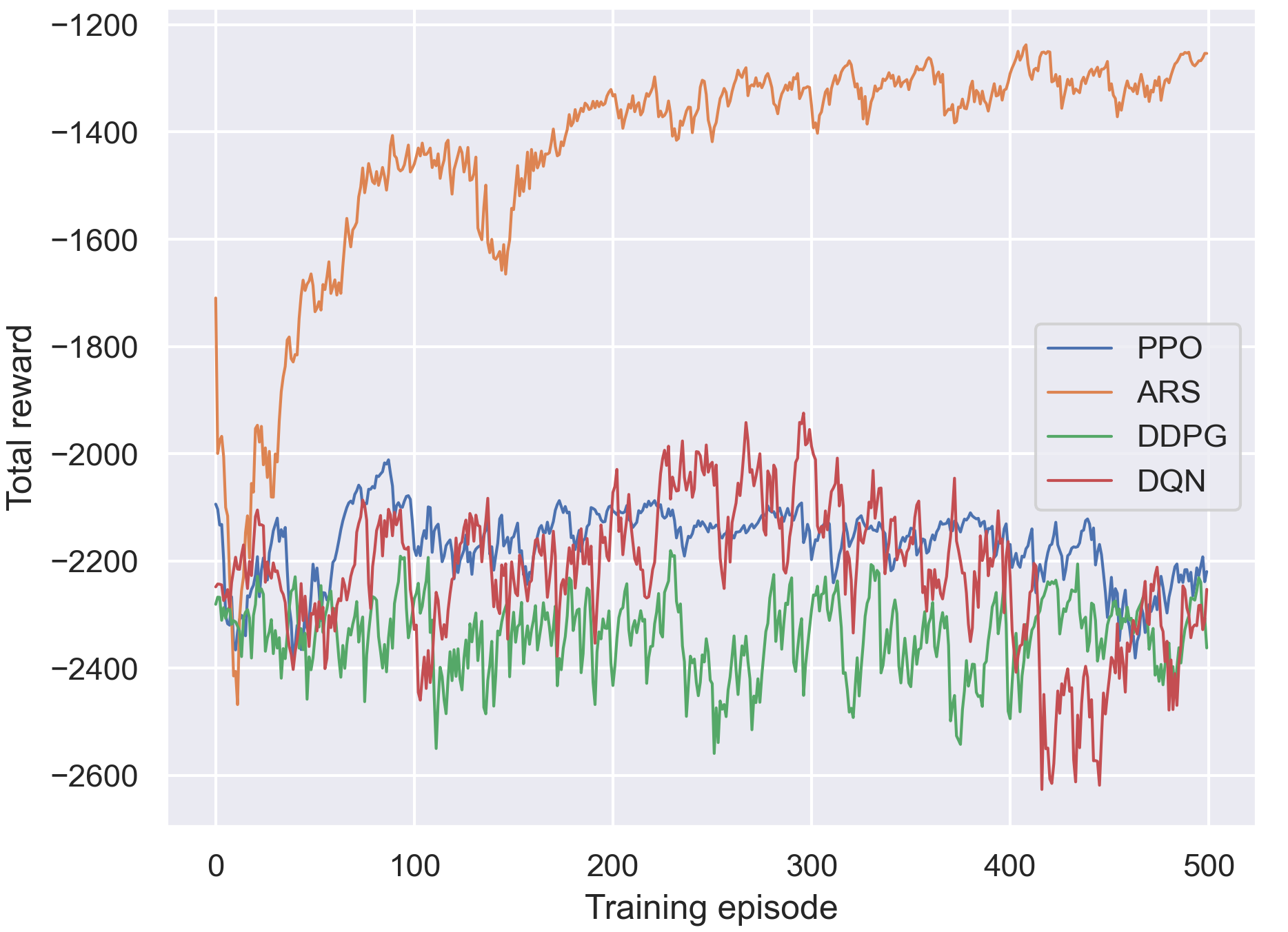

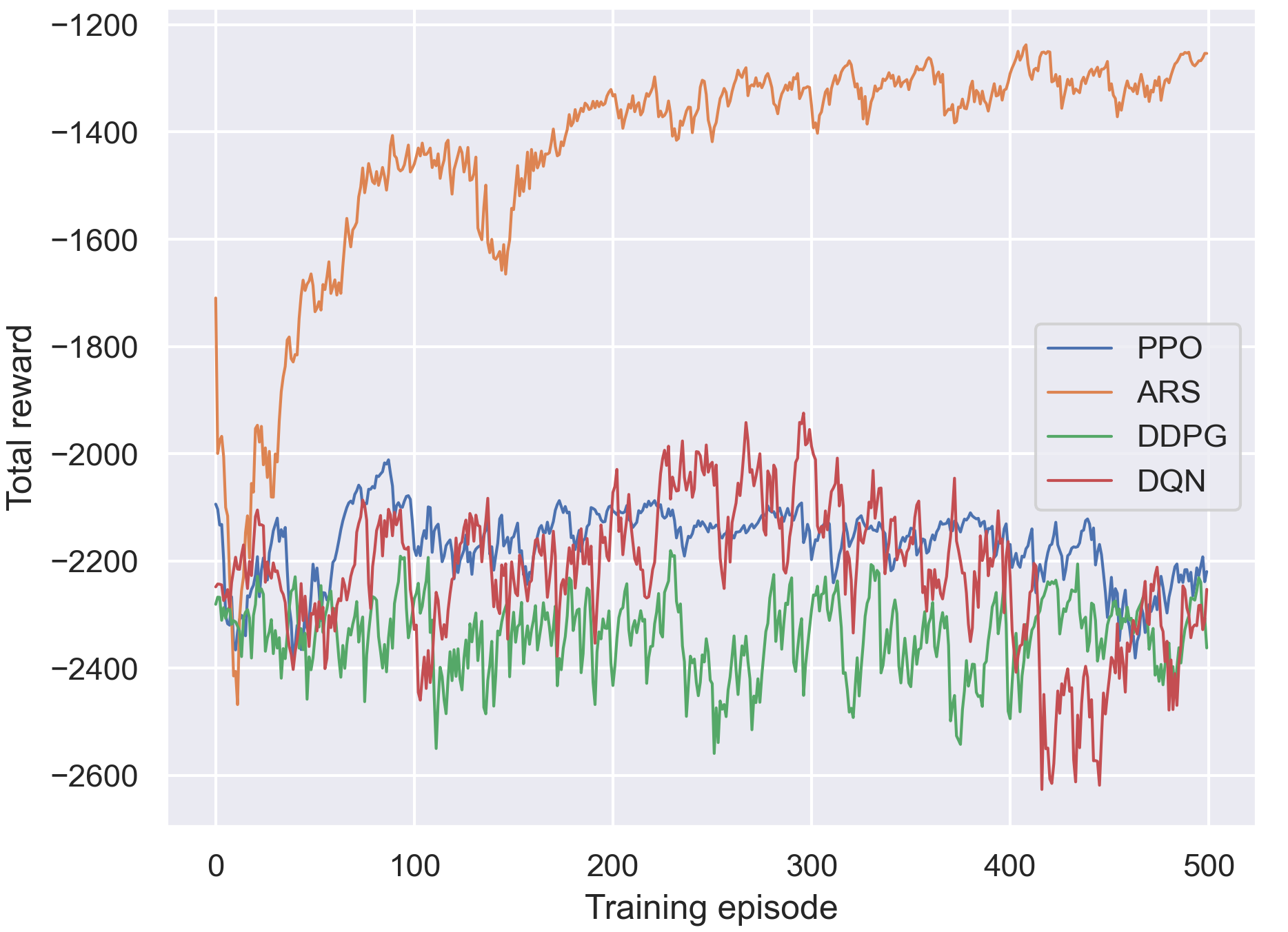

Simulations were performed using the SUMO traffic microsimulation tool. The framework's efficacy was benchmarked against state-of-the-art reinforcement learning algorithms such as Proximal Policy Optimization (PPO) and Deep Deterministic Policy Gradient (DDPG), as well as traditional methods such as the Intelligent Driver Model (IDM).

Figure 3: The comparison for different algorithms of the training process.
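For readers unfamiliar with the IDM baseline mentioned above, here is a minimal sketch of the standard IDM car-following acceleration. The parameter values are illustrative defaults chosen for this example, not the settings used in the paper or in SUMO.

```python
import math


def idm_acceleration(v, gap, dv, v0=13.9, T=1.5, s0=2.0,
                     a_max=1.0, b=1.5, delta=4.0):
    """Standard IDM acceleration in m/s^2 (illustrative parameter values).

    v:   follower speed (m/s)
    gap: bumper-to-bumper distance to the leader (m), must be > 0
    dv:  approach rate, follower speed minus leader speed (m/s)
    v0:  desired speed, T: desired time headway, s0: minimum gap,
    a_max: maximum acceleration, b: comfortable deceleration
    """
    # Desired dynamic gap: minimum gap plus headway and braking terms.
    s_star = s0 + max(0.0, v * T + v * dv / (2.0 * math.sqrt(a_max * b)))
    # Free-road term minus interaction term.
    return a_max * (1.0 - (v / v0) ** delta - (s_star / gap) ** 2)
```

With a very large gap the interaction term vanishes, so a stopped vehicle accelerates at roughly `a_max` and a vehicle already at `v0` holds its speed; closing quickly on a nearby leader yields a strongly negative value.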

The ARS-based approach demonstrated superior performance, most notably in energy savings: by enabling smoother and more efficient vehicle control, it reduced energy consumption by up to 82.51% while managing delays.

Sensitivity and Generalization

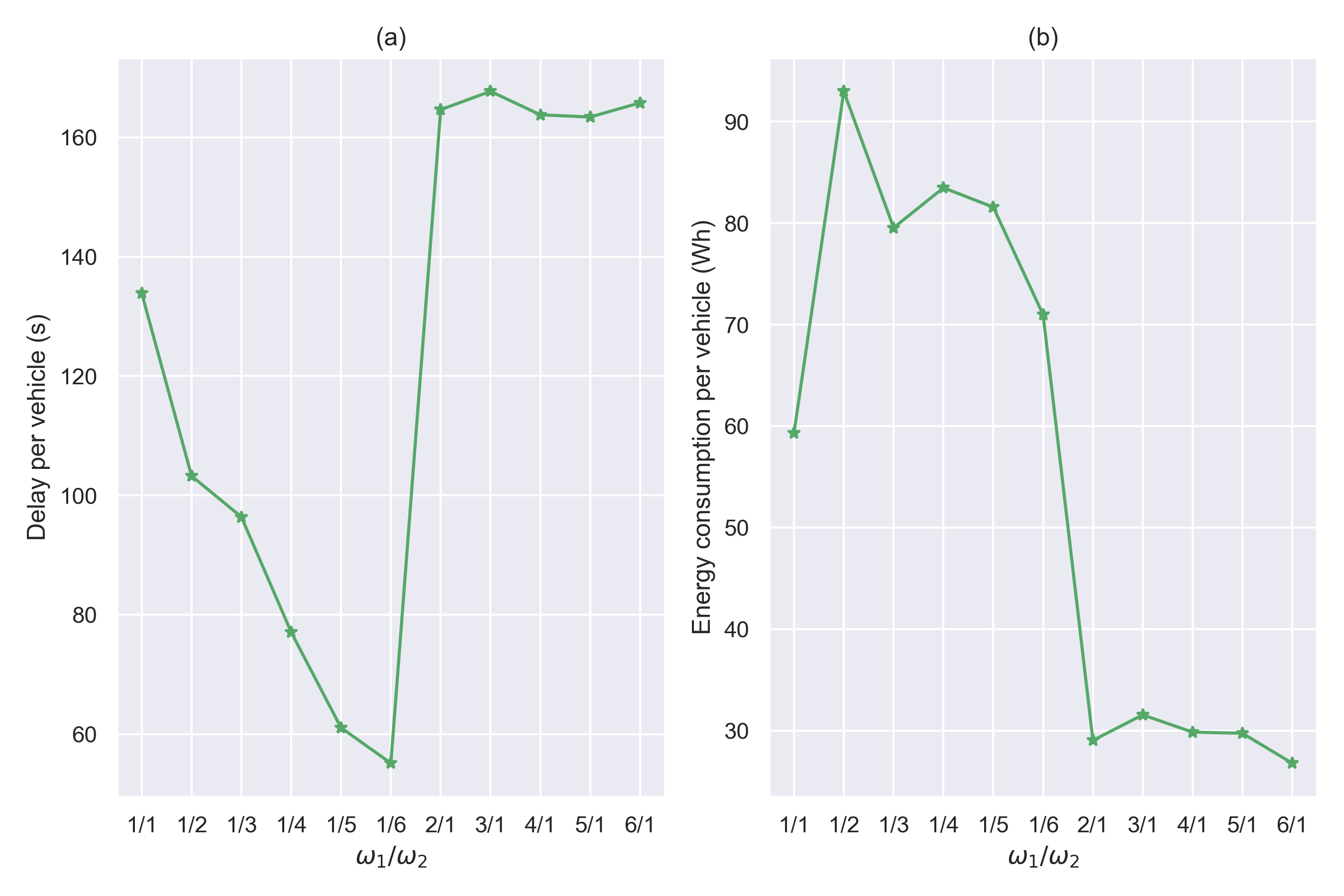

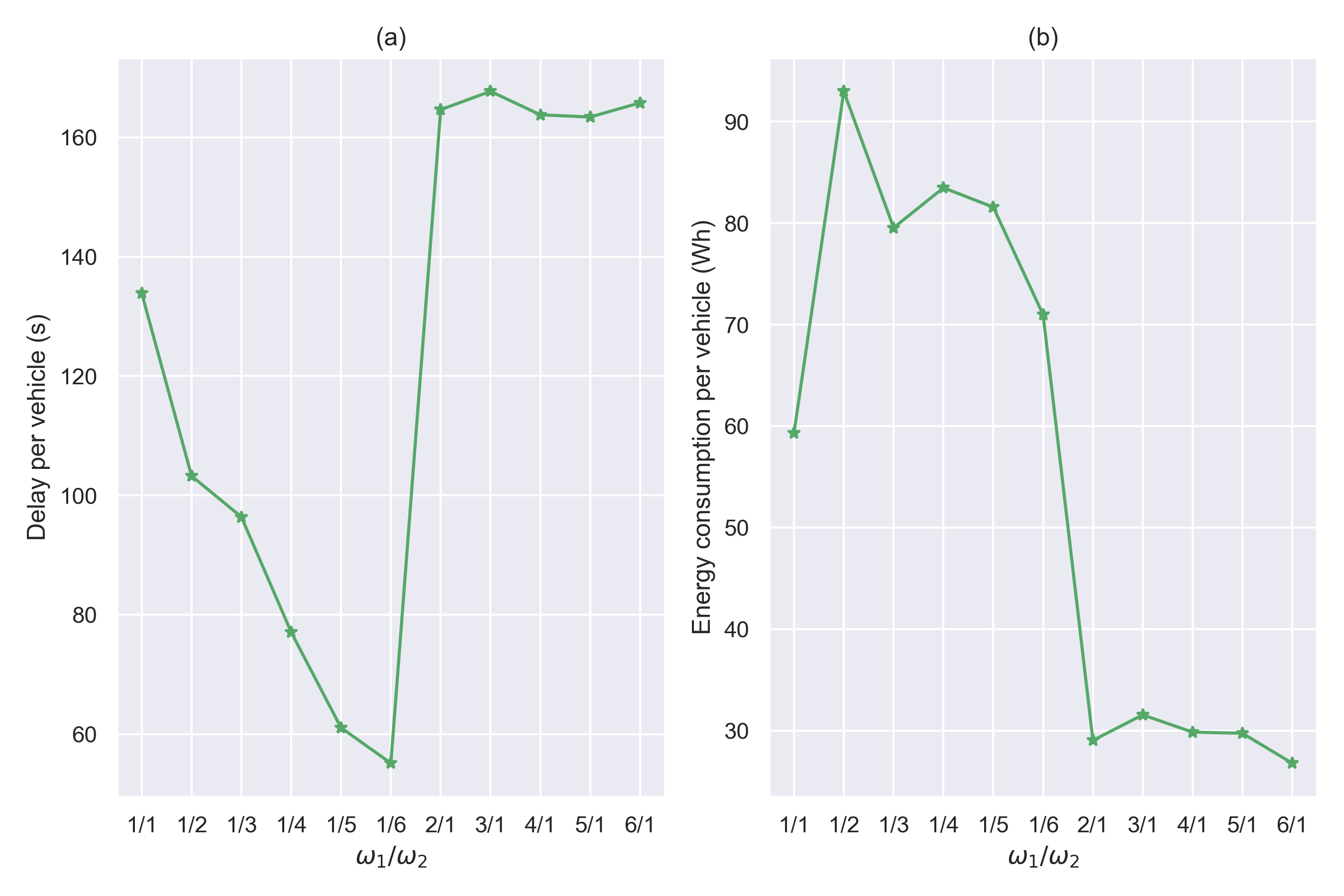

The study examined how the weighting parameters of the reward function affect performance, showing how the policy's priorities can be tuned between energy efficiency and travel time.

Figure 4: The variation curve with different settings of ω1 and ω2. (a) Delay per vehicle. (b) Energy consumption per vehicle.

Conclusion

The proposed ARS framework successfully enhances energy efficiency and traffic flow in mixed vehicle platoons at intersections. The study validates ARS's capability to deal with delayed rewards, offering a promising methodology for real-world deployments where computational efficiency and adaptive learning are essential.

Future work may explore extending the framework to multi-intersection scenarios, integrating lateral vehicle control, and refining inter-vehicle communication strategies to further improve overall traffic system performance.