- The paper introduces a policy that integrates closed-loop next-best-view planning with real-time grasp detection to optimize sensor positioning and mitigate occlusions.

- It employs a TSDF-based volumetric map and a convolutional neural network, the Volumetric Grasping Network (VGN), to evaluate grasp quality and dynamically update grasp predictions.

- Compared to fixed camera setups, the approach reduces grasp search times while maintaining high success rates, demonstrating robustness in cluttered environments.

Closed-Loop Next-Best-View Planning for Target-Driven Grasping

Introduction

The paper introduces an approach to robotic grasping in cluttered environments that integrates closed-loop next-best-view planning with real-time grasp detection. The goal is to place the sensor so that occluded parts of the target object become visible, which makes grasp execution more robust and efficient. To this end, a policy continuously updates grasp predictions from an evolving scene reconstruction and real-time sensor measurements. Compared to baselines with a fixed camera view, the approach reduces execution times without compromising grasp success rates.

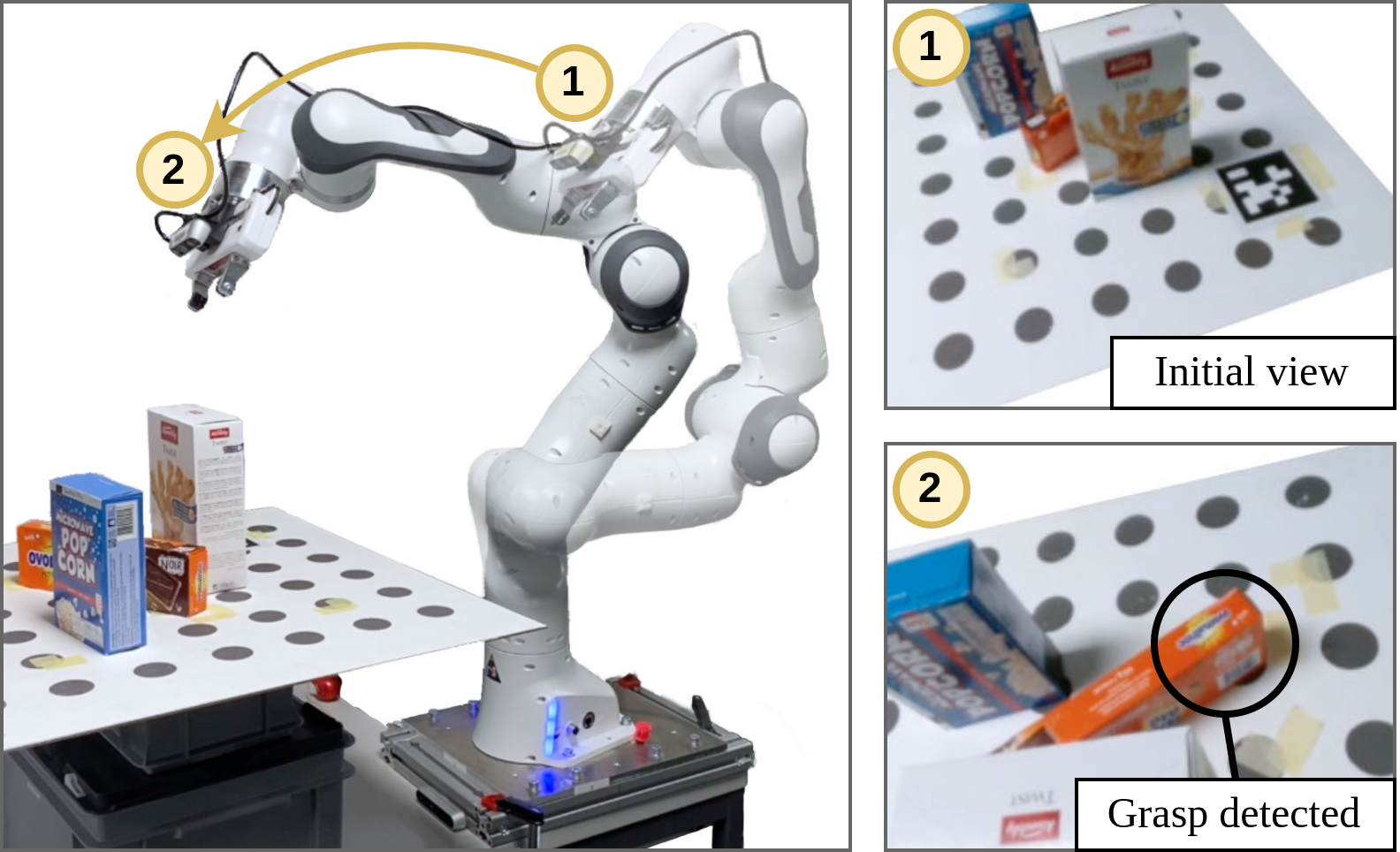

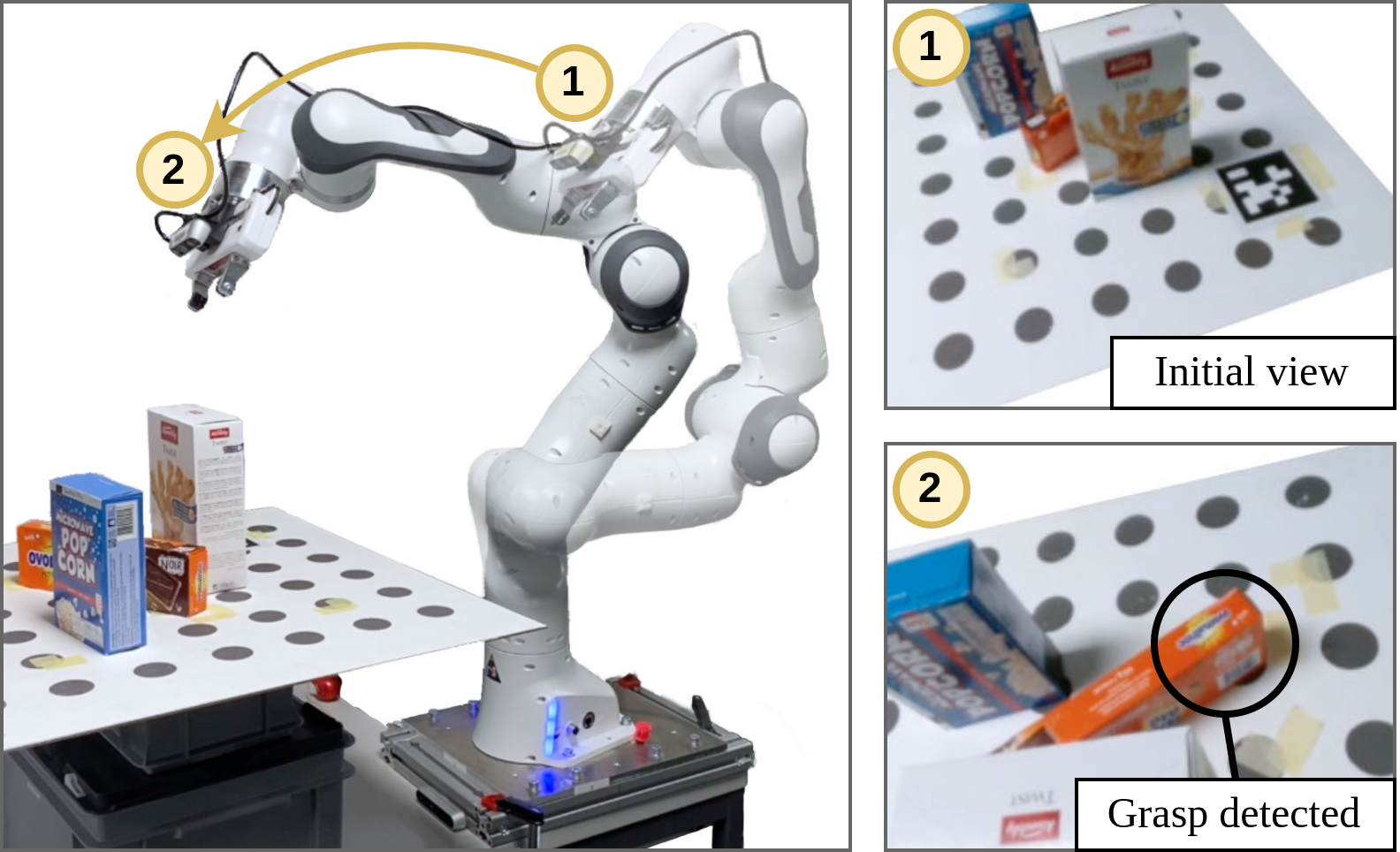

Figure 1: Detecting grasps on the orange packet using the wrist-mounted camera is hindered by occlusions from surrounding objects. By moving the sensor, our next-best-view grasp planner successfully discovers a grasp on the target item.

Methodology

The proposed framework comprises several critical components:

- Volumetric Map Integration: The method employs a Truncated Signed Distance Function (TSDF) to create a volumetric representation of the scene. This allows for efficient integration of new sensor data and aids in accurate grasp detection.

- Grasp Detection: A fully convolutional network, the Volumetric Grasping Network (VGN), performs grasp synthesis. The network computes a grasp quality score and the associated grasp parameters at every voxel of the TSDF representation. The predictions are then filtered for grasps that are feasible both geometrically and kinematically.

- Next-Best-View Planning: The policy generates candidate sensor placements and selects the one that maximizes predicted information gain. The gain is computed by ray casting, which estimates how many occluded voxels would become visible from a new viewpoint.
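The view-selection step can be illustrated with a minimal sketch. It assumes a dense voxel grid with three states (unknown, free, occupied) and a fixed-step ray march. The function names, the state encoding, and the step size are hypothetical choices for illustration, not the paper's implementation, and the paper's gain metric may weight or restrict voxels differently.

```python
import numpy as np

# Assumed voxel states for this sketch (not taken from the paper).
UNKNOWN, FREE, OCCUPIED = 0, 1, 2


def information_gain(grid, origin, targets, step=0.5):
    """Count distinct unknown voxels reachable from `origin` along rays to `targets`.

    grid    : 3-D integer array of voxel states; indices double as coordinates
    origin  : (3,) candidate sensor position in voxel coordinates
    targets : (N, 3) points the rays are aimed at, e.g. the target region
    """
    seen = set()
    origin = np.asarray(origin, dtype=float)
    for target in np.asarray(targets, dtype=float):
        direction = target - origin
        length = np.linalg.norm(direction)
        if length == 0:
            continue
        direction /= length
        # March along the ray in fixed steps until the target is reached.
        for t in np.arange(0.0, length + step, step):
            idx = tuple(np.floor(origin + t * direction).astype(int))
            if any(i < 0 or i >= n for i, n in zip(idx, grid.shape)):
                continue  # outside the map, keep marching
            if grid[idx] == OCCUPIED:
                break  # the ray is blocked by a known surface
            if grid[idx] == UNKNOWN:
                seen.add(idx)
    return len(seen)


def next_best_view(grid, candidates, targets):
    """Return the candidate position with the highest gain, and that gain."""
    gains = [information_gain(grid, c, targets) for c in candidates]
    best = int(np.argmax(gains))
    return candidates[best], gains[best]
```

In this sketch, a candidate whose rays are blocked by known occupied voxels scores zero, so the selection naturally favours viewpoints that look around occluders.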

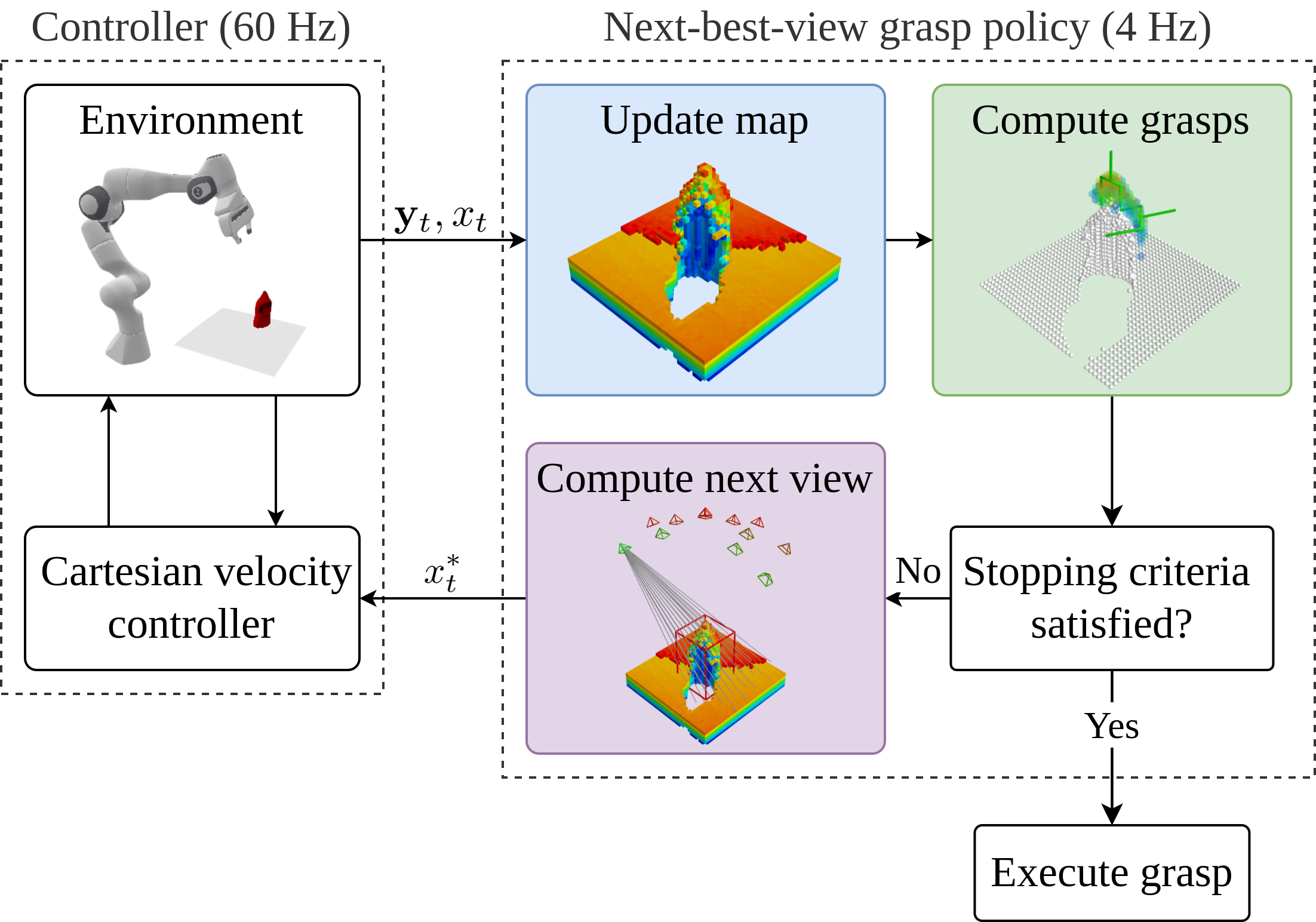

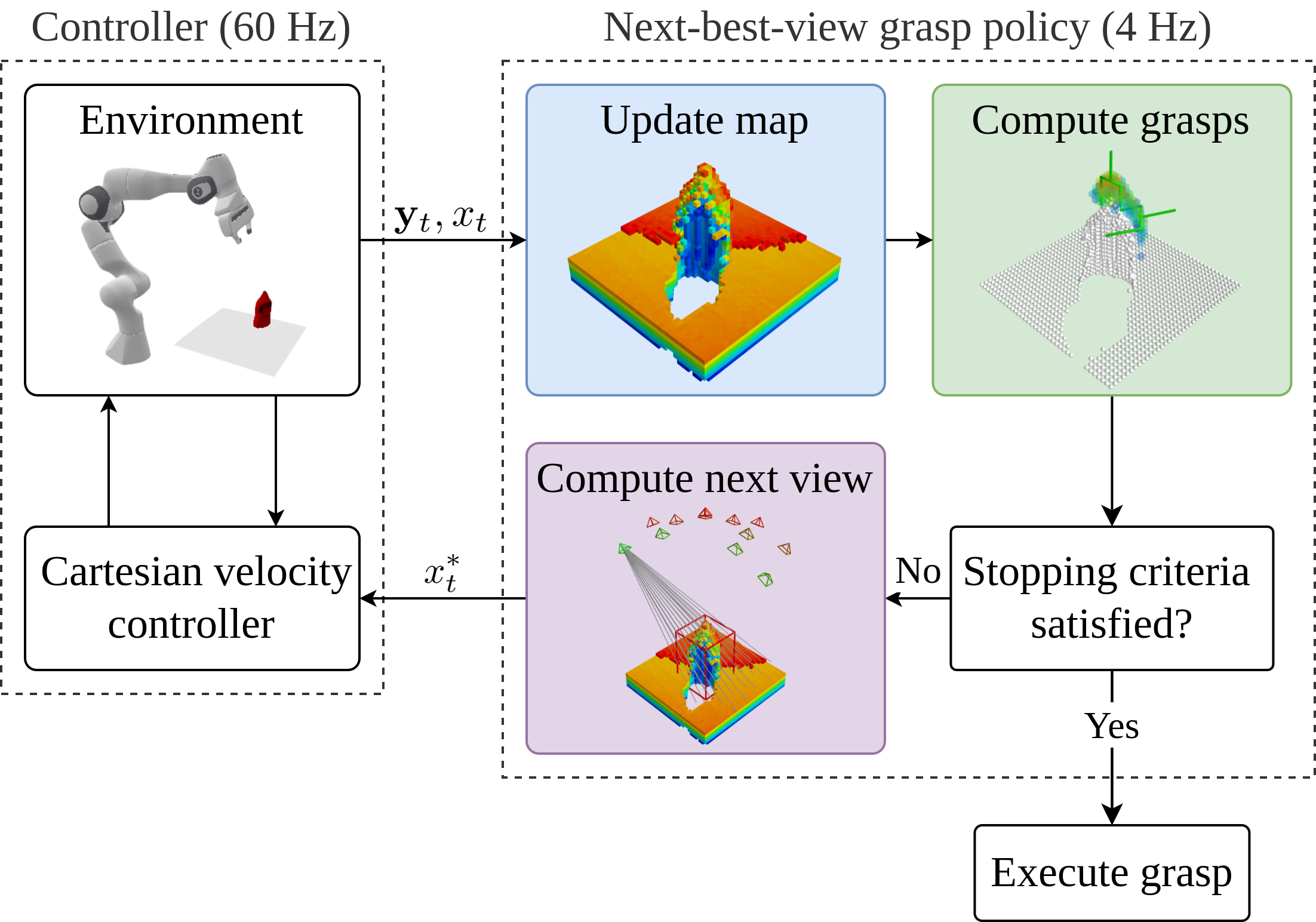

Figure 2: Overview of the framework. Our policy continuously integrates sensor measurements into a volumetric map of the scene, computes grasps, and re-plans informative views until a stable grasp is detected.
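The map-integration step of this loop can be sketched with the standard weighted running-average TSDF update. This is a minimal illustration under stated assumptions: the truncation distance, the per-observation weight, and the argument names are hypothetical, and the summary does not specify which fusion rule the paper's implementation uses.

```python
import numpy as np


def integrate(tsdf, weight, sdf_obs, valid, trunc=0.04, w_obs=1.0):
    """Fuse one observation into a TSDF grid (arrays are updated in place).

    tsdf    : current truncated signed distances, normalised to [-1, 1]
    weight  : accumulated integration weight per voxel
    sdf_obs : signed distance to the observed surface per voxel, in metres
    valid   : boolean mask of voxels that project into the current depth image
    trunc   : truncation distance in metres (assumed value)
    """
    # Normalise and truncate the observed distances.
    d = np.clip(sdf_obs / trunc, -1.0, 1.0)
    # Voxels far behind the observed surface carry no information; skip them.
    update = valid & (sdf_obs > -trunc)
    w_new = weight[update] + w_obs
    # Weighted running average of old and new distance values.
    tsdf[update] = (weight[update] * tsdf[update] + w_obs * d[update]) / w_new
    weight[update] = w_new
    return tsdf, weight
```

Because each update only touches voxels seen in the current frame, the cost of adding a measurement stays small, which is what makes this representation suitable for a loop that re-plans several times per second.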

Implementation and Evaluation

Significant emphasis is placed on the responsiveness and adaptability of the exploration and grasping policy. Key implementation details include:

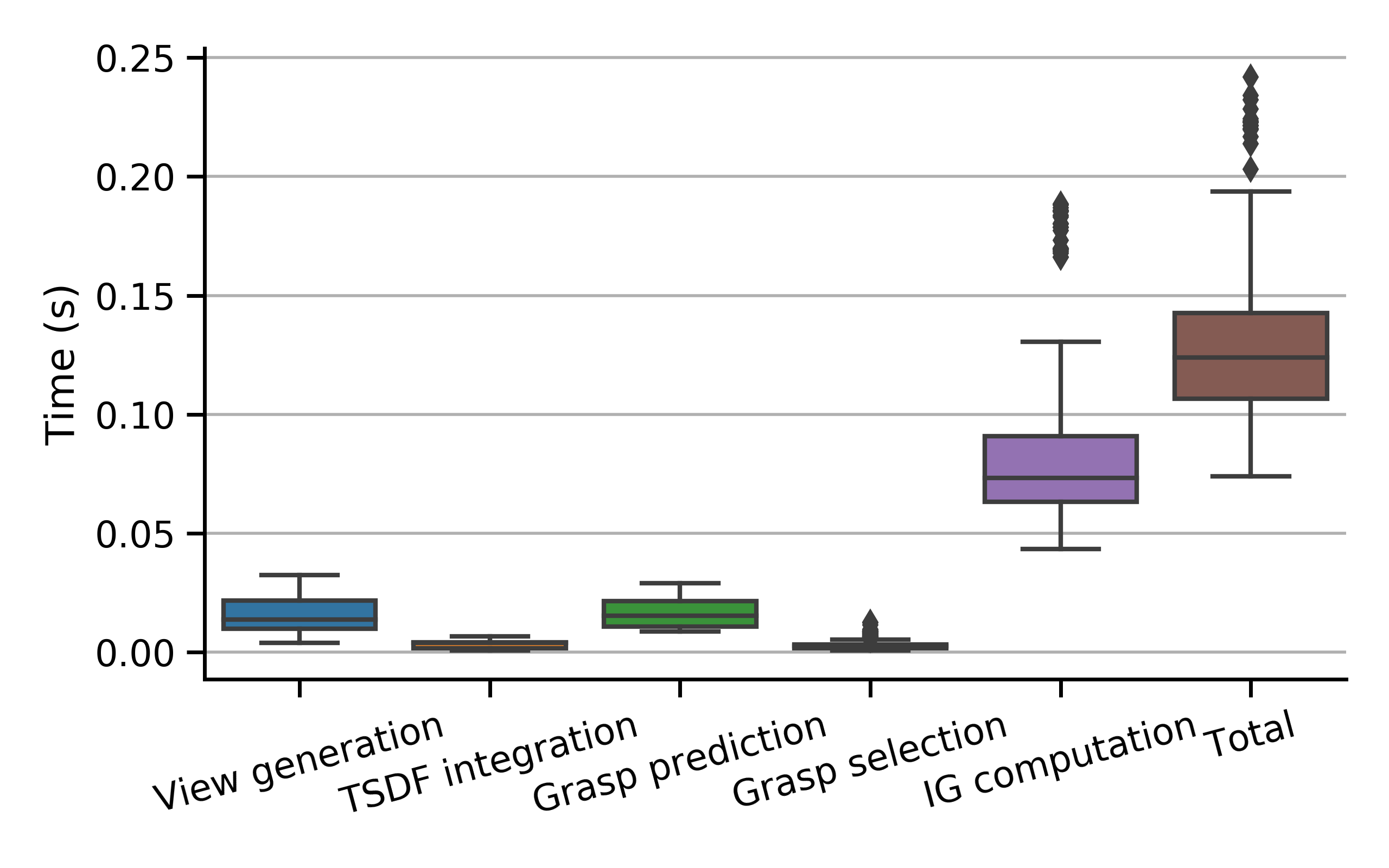

- Policy Execution Rate: The policy re-evaluates sensor data at 4 Hz, ensuring timely adaptations to grasp predictions based on evolving scene information.

- Stopping Criteria: Several criteria are defined to balance exploration with exploitation, including a time budget, minimum information gain threshold, and a grasp stability check based on historical predictions.
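The three stopping criteria can be combined as in the following sketch. The class name, the thresholds, and the exact stability rule (the same best grasp over the last `window` policy updates) are assumptions made for illustration. The summary only states that stability is judged from historical predictions, so the paper's rule may differ.

```python
from collections import deque


class StoppingCriteria:
    """Decide when to stop exploring (all default values are hypothetical)."""

    def __init__(self, time_budget_s=12.0, min_gain=10, window=4):
        self.time_budget_s = time_budget_s
        self.min_gain = min_gain
        # Best grasp identifiers from the most recent policy updates.
        self.history = deque(maxlen=window)
        self.start = None

    def update(self, grasp_id, best_gain, now):
        """Return a reason string if the policy should stop, else None.

        grasp_id  : identifier of the current best grasp, or None if none found
        best_gain : information gain of the best candidate view
        now       : current time in seconds
        """
        if self.start is None:
            self.start = now
        self.history.append(grasp_id)

        # Stable grasp: the same grasp was predicted over the whole window.
        window_full = len(self.history) == self.history.maxlen
        if window_full and self.history[0] is not None and len(set(self.history)) == 1:
            return "stable_grasp"
        # Time budget exhausted.
        if now - self.start >= self.time_budget_s:
            return "time_budget"
        # No remaining view promises enough new information.
        if best_gain < self.min_gain:
            return "low_gain"
        return None
```

If the window is counted in policy updates, as assumed here, a window of T updates at the 4 Hz rate corresponds to T/4 seconds of consistent predictions before the grasp is executed.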

The experimental evaluation in both simulation and real-world scenarios showcases the efficacy of the approach. The proposed method significantly reduces search time while maintaining comparable or improved grasp success rates relative to baseline methods employing fixed camera placements.

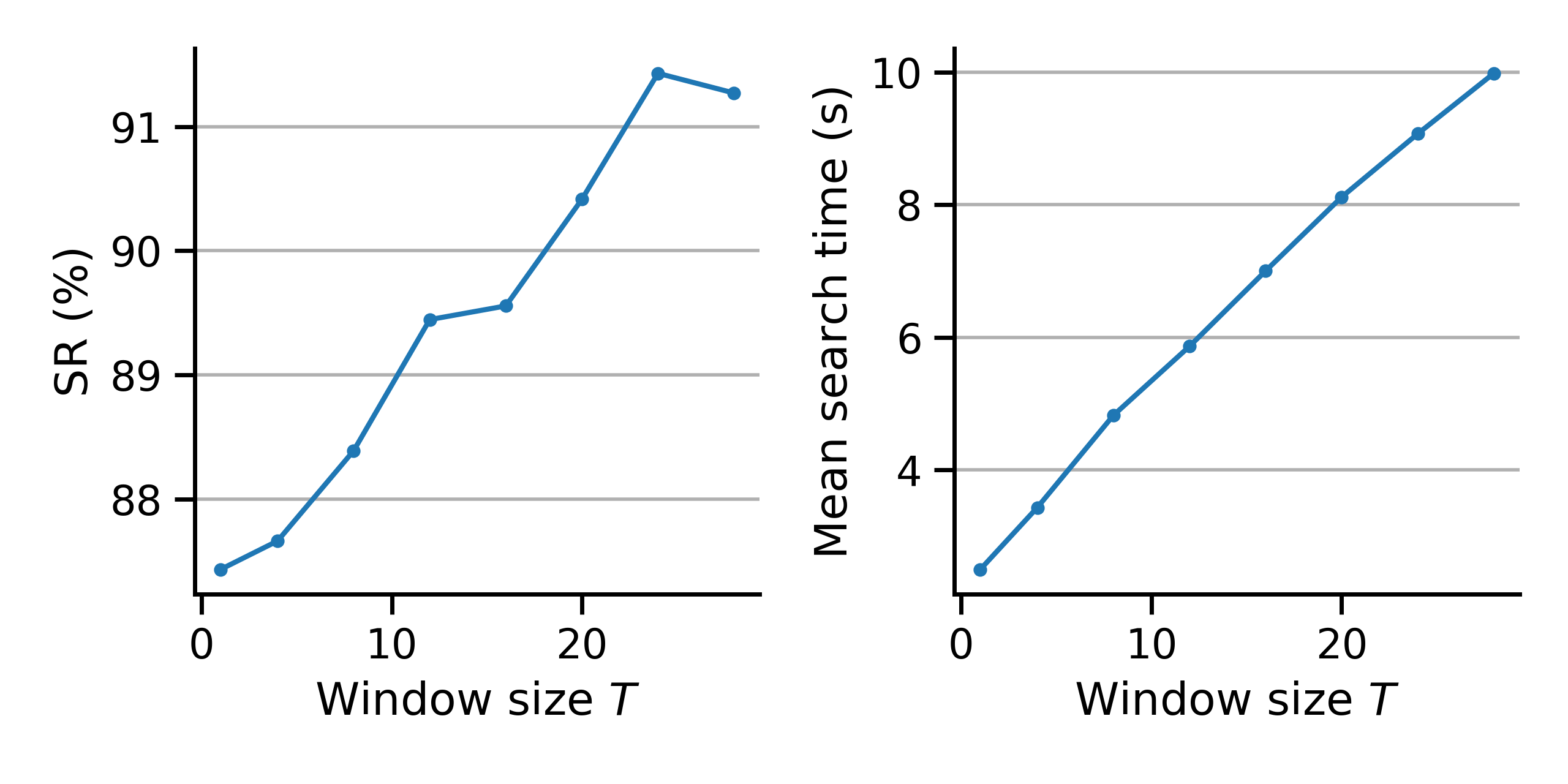

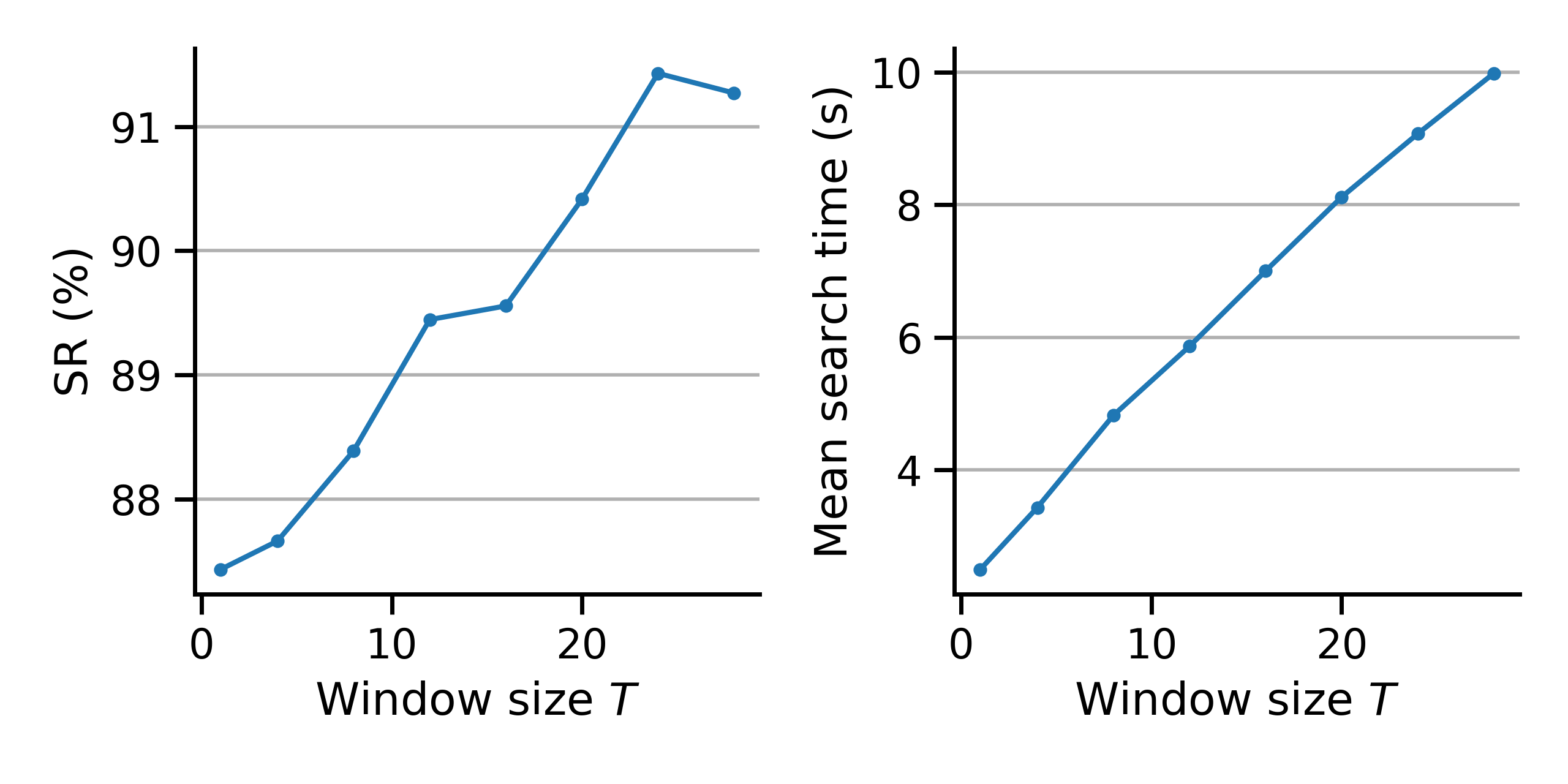

Figure 3: Success rate and search time vs window size T. Larger values of T force the policy to explore longer, leading to higher success rates at the cost of longer search times.

Discussion

While the approach demonstrates robustness and efficiency, the paper identifies several areas for future research.

Conclusion

The paper presents a strategy for target-driven grasping in cluttered environments that moves the sensor dynamically to mitigate occlusions. By continuously incorporating new sensor data, the framework efficiently identifies stable grasps and remains robust across diverse scenes. The approach is a promising basis for automating manipulation in unstructured environments.

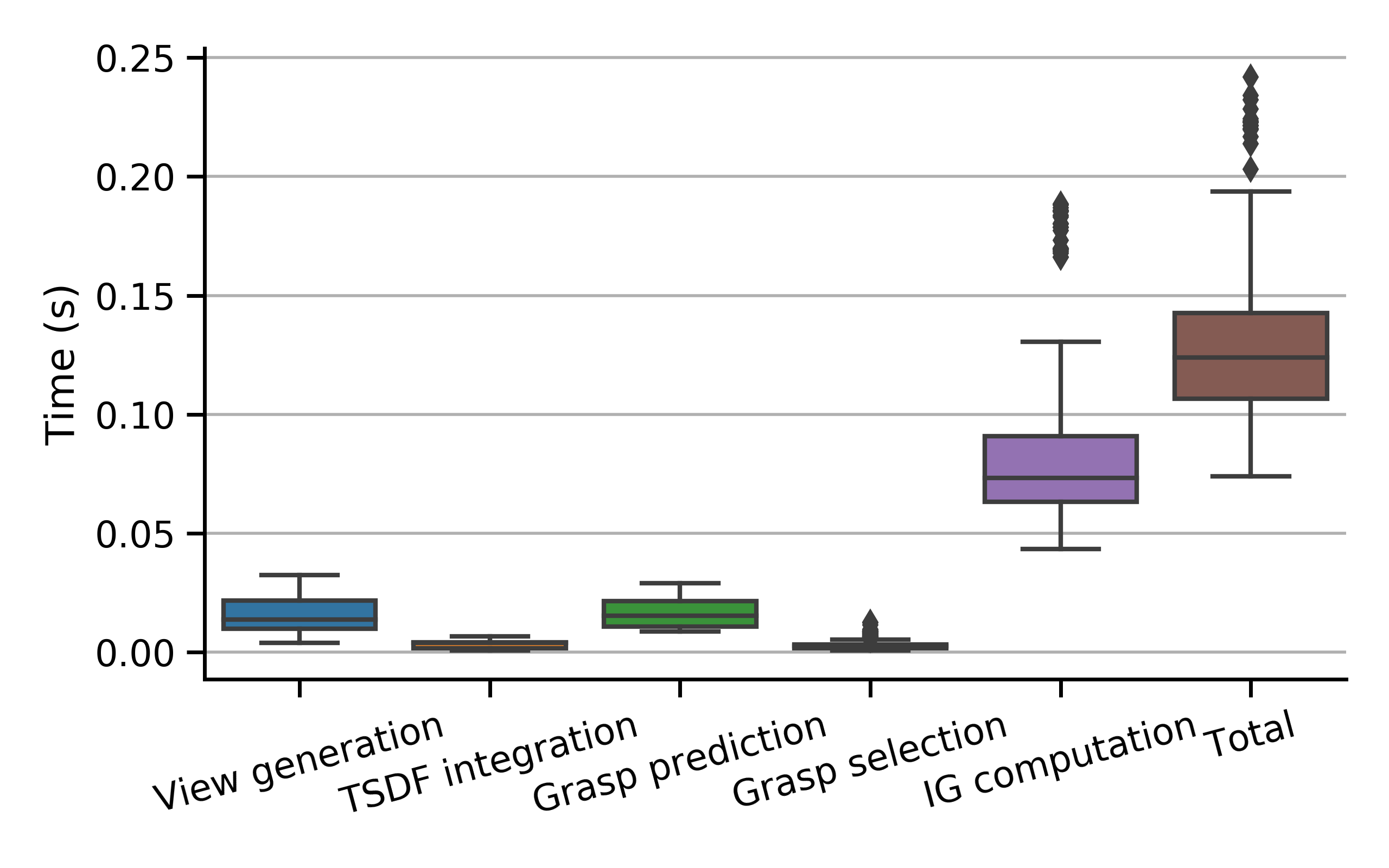

Figure 5: Computation times for one update of our nbv-grasp policy measured over 40 simulation runs.