- The paper demonstrates that LLMs can repair code in multiple programming languages by combining compiler error messages with few-shot learning.

- It details ring’s three-phase methodology: fault localization, code transformation using relevant bug-fix examples, and candidate ranking based on log probabilities.

- Results show ring outperforms language-specific engines in several languages, underscoring the promise of language-agnostic automated program repair.

Repair Is Nearly Generation: Multilingual Program Repair with LLMs

This essay provides a comprehensive summary of the paper titled "Repair Is Nearly Generation: Multilingual Program Repair with LLMs" (2208.11640). The paper introduces ring, a repair engine for automated program repair (APR) that is powered by an LLM trained on code. The focus is on repairing programs across many programming languages with minimal per-language engineering effort.

Introduction to Multilingual Program Repair

The study addresses the problem that programmers frequently make minor errors termed "last-mile mistakes," which disrupt workflow but require minimal edits for correction. Existing APR methods are often language-specific, necessitating substantial engineering for new languages. The authors propose ring, which leverages Codex, a large-scale LLM trained on code, to efficiently handle these errors in multiple languages.

ring follows a flipped model of AI-assisted programming: the programmer writes the code and the LLM suggests corrections, which eases the developer's debugging process.

ring's Architecture and Methodology

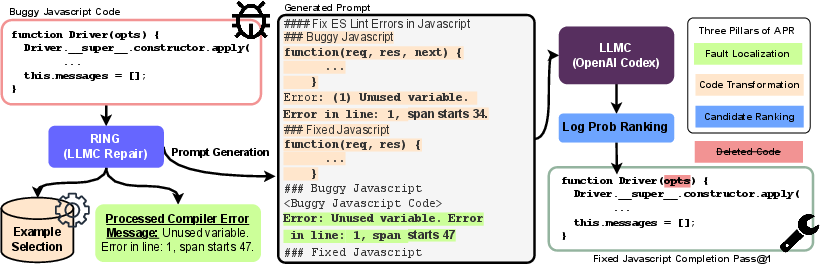

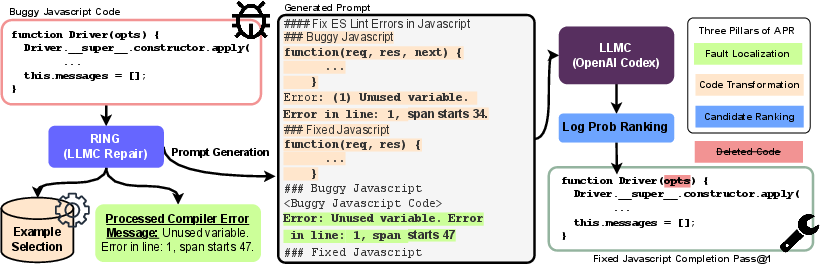

ring’s architecture consists of three phases — fault localization, code transformation, and candidate ranking — closely mimicking human debugging strategies.

Fault Localization

Fault localization involves identifying the precise location of errors in the code. This step is critical, as accurately identifying bugs is often the most challenging aspect of debugging. ring utilizes compiler error messages to guide the LLM in this phase. The error messages are preprocessed to create a standardized prompt that ring can interpret effectively.
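The preprocessing step can be illustrated with a minimal sketch. The prompt layout, the section markers, and the helper names `normalize_error` and `build_prompt` are assumptions made for illustration; the summary does not specify the exact format ring uses.

```python
import re


def normalize_error(message: str) -> str:
    """Drop a leading 'file:line[:col]:' location prefix and collapse whitespace.

    Hypothetical normalization; the real preprocessing may differ.
    """
    message = re.sub(r"^\S+?:\d+(?::\d+)?:\s*", "", message.strip())
    return re.sub(r"\s+", " ", message)


def build_prompt(buggy_code: str, error_message: str) -> str:
    """Assemble a standardized prompt: buggy program, error, then a slot for the fix."""
    return (
        "### Buggy program\n"
        f"{buggy_code.rstrip()}\n"
        "### Error\n"
        f"{normalize_error(error_message)}\n"
        "### Fixed program\n"
    )


if __name__ == "__main__":
    print(build_prompt("int x = 1\n", "main.c:1:10: error: expected ';'"))
```

Keeping the error text in a fixed slot means the same template can be reused for any language whose compiler or interpreter emits a diagnostic message.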

Figure 1: ring, powered by an LLM trained on code (LLMC), performs multilingual program repair. ring obtains fault localization information from error messages and leverages the LLMC's few-shot capabilities for code transformation through example selection, forming the prompt. A simple yet effective technique ranks repair candidates.

Code Transformation

For code transformation, ring exploits Codex’s few-shot learning capabilities. It selects examples from a repository of bug-fix pairs so that the examples placed in the prompt are relevant to the specific error type being repaired.
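One way to picture example selection is as a nearest-neighbour lookup over the bug-fix repository. The sketch below uses token-overlap (Jaccard) similarity between error messages; that similarity measure, the `BugFixExample` type, and `select_examples` are illustrative assumptions, not the selection strategy described in the paper.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class BugFixExample:
    error: str   # diagnostic message observed for the buggy program
    buggy: str   # program before the fix
    fixed: str   # program after the fix


def jaccard(a: str, b: str) -> float:
    """Token-level Jaccard similarity between two messages (0.0 if either is empty)."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def select_examples(bank: List[BugFixExample], error: str, k: int = 2) -> List[BugFixExample]:
    """Return the k bank entries whose error message is most similar to `error`."""
    return sorted(bank, key=lambda ex: jaccard(ex.error, error), reverse=True)[:k]


if __name__ == "__main__":
    bank = [
        BugFixExample("SyntaxError: invalid syntax", "print 'hi'", "print('hi')"),
        BugFixExample("NameError: name 'x' is not defined", "print(x)", "x = 0\nprint(x)"),
    ]
    for ex in select_examples(bank, "NameError: name 'y' is not defined", k=1):
        print(ex.fixed)
```

The selected pairs would then be placed in the prompt ahead of the program to be repaired, so the model sees fixes for similar errors before it generates its own.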

Candidate Ranking

Finally, ring ranks candidates using the log probabilities produced during decoding. Candidates are sampled at a higher temperature to increase the diversity of proposed fixes, and they are then sorted by the cumulative log probability of their tokens so that the most likely fixes come first.
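The ranking step can be sketched as follows, assuming each sampled candidate comes with the log probability of every generated token. The `Candidate` type and `rank_candidates` function are hypothetical names, and the optional length normalization is a common variant added here for comparison rather than something stated in the text above.

```python
from typing import Dict, List, NamedTuple, Sequence, Tuple


class Candidate(NamedTuple):
    code: str                        # proposed repaired program
    token_logprobs: Sequence[float]  # log probability of each generated token


def score(candidate: Candidate, normalize: bool = False) -> float:
    """Cumulative log probability, or the per-token mean when normalize=True."""
    total = sum(candidate.token_logprobs)
    if normalize and candidate.token_logprobs:
        return total / len(candidate.token_logprobs)
    return total


def rank_candidates(candidates: List[Candidate], normalize: bool = False) -> List[Tuple[str, float]]:
    """Deduplicate identical programs (keeping the best score) and sort best first."""
    best: Dict[str, float] = {}
    for cand in candidates:
        s = score(cand, normalize)
        if cand.code not in best or s > best[cand.code]:
            best[cand.code] = s
    return sorted(best.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    samples = [
        Candidate("x = 1;", [-0.1, -0.2]),
        Candidate("x = 1", [-1.0]),
        Candidate("x = 1;", [-0.5, -0.5]),
    ]
    for code, s in rank_candidates(samples):
        print(f"{s:.2f}  {code}")
```

Sampling at a higher temperature tends to produce duplicates as well as diverse fixes, which is why the sketch collapses identical programs before sorting.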

Evaluation and Results

The paper evaluates ring across six languages: Excel, Power Fx, Python, JavaScript, C, and PowerShell. The evaluation demonstrates that ring can outperform language-specific repair engines in Excel, Python, and C, and shows comparable results for JavaScript and Power Fx. In PowerShell, which lacked a specific baseline, ring provided a novel capability.

The study measures effectiveness with metrics such as the pass@k rate and reports that its few-shot example selection strategies improve repair performance. The results support the viability of multilingual repair and the substantial capability of Codex in APR tasks.
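As a rough illustration of the metric, the sketch below treats pass@k as the fraction of repair tasks for which at least one of the top k ranked candidates is correct. This reading of pass@k, and the `pass_at_k` helper with its input format, are assumptions for illustration; the paper's exact evaluation procedure is not described in this summary.

```python
from typing import List, Sequence


def pass_at_k(results: Sequence[Sequence[bool]], k: int) -> float:
    """Fraction of tasks solved by any of the first k ranked candidates.

    `results[i][j]` is True when the j-th ranked candidate for task i is a
    correct fix (hypothetical input format).
    """
    if k <= 0:
        raise ValueError("k must be positive")
    if not results:
        return 0.0
    solved = sum(1 for flags in results if any(flags[:k]))
    return solved / len(results)


if __name__ == "__main__":
    outcomes: List[List[bool]] = [
        [False, True, False],   # solved by the second-ranked candidate
        [True],                 # solved by the top candidate
        [False, False, False],  # never solved
    ]
    for k in (1, 3):
        print(f"pass@{k} = {pass_at_k(outcomes, k):.2f}")
```

Under this definition, a better ranking raises pass@1 without changing pass@k for large k, which is why ranking quality and candidate diversity are evaluated together.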

Implications and Future Directions

ring has significant implications for the development of language-agnostic automated repair systems. It demonstrates that LLMs trained on code can simplify multilingual repair through prompt-based strategies that require little language-specific engineering. The paper opens avenues for further research into integrating LLMs with symbolic methods and developing more sophisticated ranking mechanisms.

Future work may explore iterative querying techniques and tighter integration of lightweight symbolic constraints to enhance repair capabilities and address complex bug types more effectively. The broader impact includes a shift towards AI-driven code maintenance, significantly reducing the burden of bug fixes across diverse programming environments.

Conclusion

ring showcases an innovative use of LLMs for program repair, offering compelling evidence that multilingual repair is feasible and effective. By integrating LLMs like Codex into the debugging process, ring exemplifies a new paradigm in APR, highlighting the potential of AI to facilitate efficient programming assistance and program correctness across different languages.